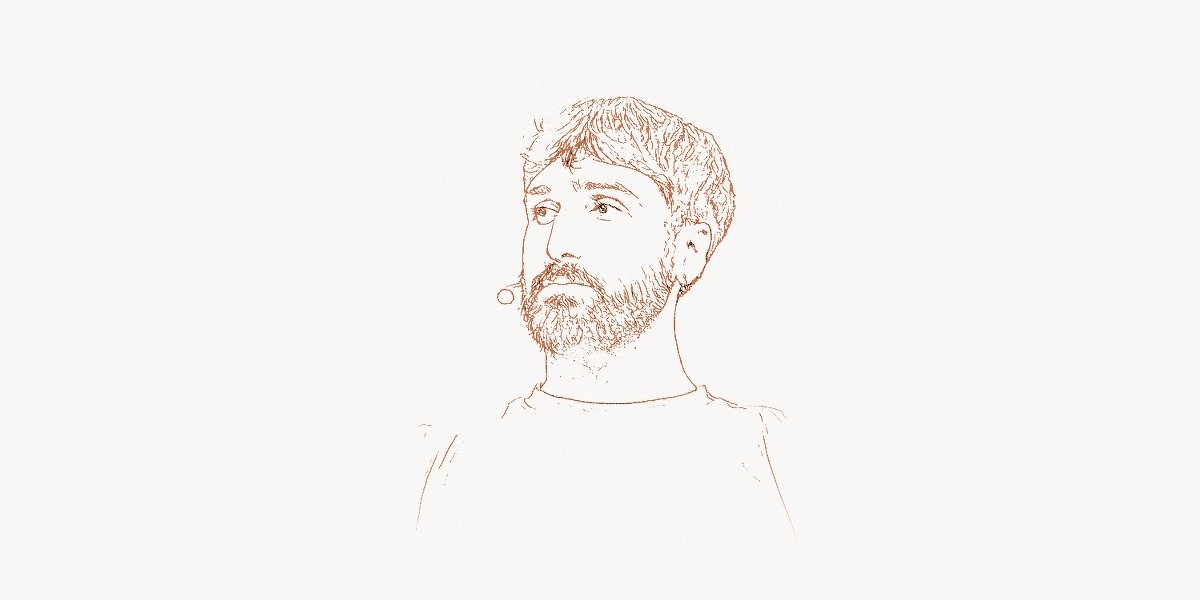

In June 2017, when the paper “Attention Is All You Need” appeared on arXiv, Aidan Gomez was twenty-two years old and an undergraduate intern at Google Brain. He was the youngest member of an eight-person team that included seasoned researchers like Ashish Vaswani, Noam Shazeer, and Lukasz Kaiser, people who had spent years working on sequence modeling, attention mechanisms, and neural machine translation. Gomez’s contribution to the Transformer architecture was not incidental or ceremonial: he implemented the decoder side of the model, the component that generates output sequences token by token and that would become the architectural basis for every generative language model from GPT-2 to Claude to Gemini. He was, at the time, a third-year computer science student at the University of Toronto, studying under Geoffrey Hinton, one of the godfathers of deep learning.

Two years after that paper’s publication, Gomez would co-found Cohere, a company that builds enterprise-grade large language models and that has raised over $900 million in venture funding. By 2025, Cohere was valued at over $5.5 billion and had become one of the most prominent AI companies in the world. Aidan Gomez’s trajectory, from undergraduate intern to co-author of one of the most cited papers in the history of computer science to CEO of a multibillion-dollar AI company, all before turning thirty, makes his one of the most remarkable careers in modern technology. It is also a case study in what happens when a young researcher combines deep technical understanding with entrepreneurial ambition at precisely the right moment in a field’s evolution.

Early Life and Education

Aidan Gomez grew up in Canada, where he developed an early fascination with mathematics and computer science. His intellectual path led him to the University of Toronto, one of the most important institutions in the history of deep learning. Toronto was where Geoffrey Hinton had spent decades developing the theoretical and practical foundations of neural networks — from backpropagation in the 1980s to deep belief networks in the 2000s to the AlexNet breakthrough in 2012 that ignited the modern deep learning revolution. By the time Gomez arrived as an undergraduate, the University of Toronto’s computer science department was arguably the single most influential academic hub in machine learning research, having trained a generation of researchers who went on to lead AI teams at Google, Facebook, Apple, and OpenAI.

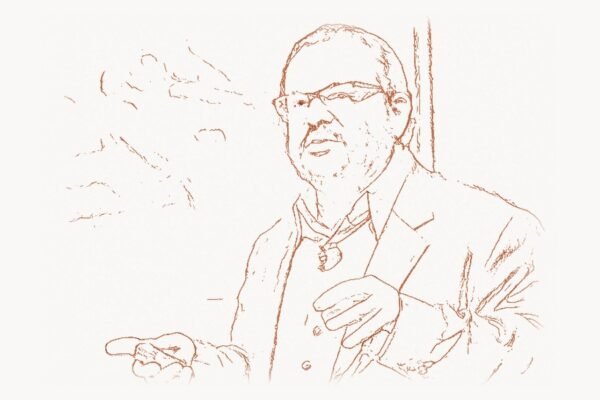

Gomez studied under Hinton directly, gaining exposure to the cutting-edge ideas that were reshaping artificial intelligence. This was not an ordinary undergraduate experience. The University of Toronto’s machine learning group operated more like a European-style research lab than a conventional North American university department: undergraduates with sufficient talent and motivation could participate in genuine research projects alongside doctoral students and faculty. Gomez demonstrated both, quickly moving beyond coursework into active research on neural network architectures and natural language processing.

It was during his time at Toronto that Gomez secured an internship at Google Brain in 2016 — the same lab where Hinton held a part-time position and where some of the most ambitious AI research in the world was being conducted. Google Brain, co-founded by Jeff Dean and Andrew Ng in 2011, had become a magnet for talent in deep learning, attracting researchers who wanted access to computational resources and datasets that no university could match. For Gomez, the internship offered something even more valuable than computing power: the opportunity to work alongside researchers who were actively pushing the boundaries of what neural networks could do with sequential data — text, speech, music, code, and any other information that unfolds over time.

The Transformer Breakthrough

Technical Innovation

When Gomez arrived at Google Brain, the dominant paradigm for sequence-to-sequence tasks — particularly machine translation — was the encoder-decoder architecture built on recurrent neural networks (RNNs), specifically Long Short-Term Memory (LSTM) networks. These models processed input sequences one token at a time, building up a hidden state that attempted to compress the entire input into a fixed-length vector, which was then decoded into an output sequence. The approach worked, but it was fundamentally limited by the sequential nature of recurrence: each token’s computation depended on the previous token’s output, which meant training could not be parallelized across the sequence dimension. For long sequences, this was both computationally expensive and architecturally brittle — information degraded as it passed through hundreds of sequential steps.
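The sequential bottleneck described above can be seen in a few lines of NumPy. The sketch below is illustrative only: it uses a plain tanh RNN rather than an LSTM, and the function name `rnn_encode`, the weight names, and the dimensions are assumptions made for this example, not anything taken from Google's systems.

```python
import numpy as np


def rnn_encode(inputs, W_xh, W_hh, b_h):
    """Run a plain tanh RNN over a sequence and return the final hidden state.

    inputs: (seq_len, d_in), W_xh: (d_in, d_hidden), W_hh: (d_hidden, d_hidden)
    """
    h = np.zeros(W_hh.shape[0])
    for x_t in inputs:
        # Step t needs the hidden state from step t-1, so the loop
        # cannot be parallelized across the sequence dimension.
        h = np.tanh(x_t @ W_xh + h @ W_hh + b_h)
    # The whole input is summarized in one fixed-length vector.
    return h


rng = np.random.default_rng(0)
d_in, d_hidden = 8, 16
W_xh = rng.normal(scale=0.1, size=(d_in, d_hidden))
W_hh = rng.normal(scale=0.1, size=(d_hidden, d_hidden))
b_h = np.zeros(d_hidden)

short = rnn_encode(rng.normal(size=(5, d_in)), W_xh, W_hh, b_h)
long = rnn_encode(rng.normal(size=(500, d_in)), W_xh, W_hh, b_h)
print(short.shape, long.shape)
```

A 5-token input and a 500-token input both end up in a vector of the same size, and the longer one takes 500 dependent steps to get there.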

The Transformer architecture, proposed in “Attention Is All You Need,” solved this problem by replacing recurrence entirely with a mechanism called self-attention. Instead of processing tokens sequentially, the Transformer allowed every token in a sequence to attend to every other token simultaneously, computing relevance-weighted representations in a single parallel operation. The architecture was divided into two main components: an encoder, which processed the input sequence into a rich set of contextual representations, and a decoder, which generated the output sequence one token at a time while attending to both the encoder’s output and the previously generated tokens.
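As a rough sketch of what "every token attends to every other token" means in practice, the following computes unmasked, single-head scaled dot-product self-attention with NumPy. The projection matrices are random placeholders (in a trained model they are learned), and the names `self_attention`, `W_q`, `W_k`, and `W_v` are chosen for this example.

```python
import numpy as np


def self_attention(x, W_q, W_k, W_v):
    """Unmasked scaled dot-product self-attention over a whole sequence."""
    Q, K, V = x @ W_q, x @ W_k, x @ W_v
    # One matrix product scores every (query, key) pair at once.
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    # Row-wise softmax, shifted by the row maximum for numerical stability.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights


rng = np.random.default_rng(0)
seq_len, d_model = 6, 8
x = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3))

context, weights = self_attention(x, W_q, W_k, W_v)
print(context.shape, weights.shape)
```

There is no loop over positions: the `(seq_len, seq_len)` weight matrix is produced in one step, which is what makes the computation easy to parallelize on accelerators.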

Gomez’s specific contribution was on the decoder side of the architecture. The Transformer decoder introduced a critical innovation called masked self-attention: during training and generation, each position in the output sequence can attend only to itself and to earlier positions, never to later ones. Because the decoder’s inputs are shifted one position to the right, this causal masking means the prediction for each token depends only on the tokens that came before it. The model can therefore be trained efficiently on entire sequences in parallel while still respecting the autoregressive property. This decoder architecture, refined and scaled, would become the foundation of the GPT family of models and every other autoregressive language model built in the following years.
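One way to check the causal property is to change a late token and confirm that the outputs at earlier positions do not move. The sketch below does this with a stripped-down attention function in which queries, keys, and values are all the raw input (no learned projections). That simplification, and the name `causal_self_attention`, are assumptions made to keep the example short.

```python
import numpy as np


def causal_self_attention(x):
    """Single-head masked self-attention with Q = K = V = x."""
    seq_len, d = x.shape
    scores = x @ x.T / np.sqrt(d)
    # True above the diagonal marks "future" positions for each row.
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)  # exp(-inf) is exactly 0
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ x, weights


rng = np.random.default_rng(0)
x = rng.normal(size=(5, 4))
out, weights = causal_self_attention(x)

x_changed = x.copy()
x_changed[4] = rng.normal(size=4)  # alter only the last token
out_changed, _ = causal_self_attention(x_changed)

# Outputs at positions 0..3 are unaffected by the change at position 4.
print(np.allclose(out[:4], out_changed[:4]))
# No attention weight falls on future positions.
print(np.allclose(np.triu(weights, k=1), 0.0))
```

Because earlier outputs never depend on later inputs, a single forward pass over a full training sequence yields a valid prediction target at every position.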

```python
import numpy as np


def create_causal_mask(seq_len):
    """
    The causal (look-ahead) mask that Gomez helped implement
    in the Transformer decoder.

    This seemingly simple binary matrix is the architectural
    foundation of every autoregressive language model: GPT-2,
    GPT-3, GPT-4, Claude, LLaMA, Gemini, and others.

    It ensures that when computing the output at position t,
    the model can only attend to positions 0, 1, ..., t.
    Future positions are set to a large negative value (standing in
    for -infinity) before softmax, making their attention weights
    effectively zero.
    """
    # Lower triangular matrix: position i can attend to positions <= i
    mask = np.tril(np.ones((seq_len, seq_len)))
    return mask


def masked_self_attention(Q, K, V, mask):
    """
    Masked self-attention as used in the Transformer decoder.

    This is the mechanism that enables autoregressive generation:
    the model predicts one token at a time, each conditioned
    only on the tokens generated before it.

    Q, K, V: query, key, value matrices (seq_len x d_k)
    mask: causal mask, a lower triangular binary matrix
    """
    d_k = K.shape[-1]

    # Compute raw attention scores
    scores = np.matmul(Q, K.T) / np.sqrt(d_k)

    # Apply causal mask: push future positions to a large negative value.
    # After softmax, these positions will have ~zero attention weight.
    scores = np.where(mask == 0, -1e9, scores)

    # Normalize to get attention probabilities (stable softmax)
    attention_weights = np.exp(scores - np.max(scores, axis=-1, keepdims=True))
    attention_weights /= np.sum(attention_weights, axis=-1, keepdims=True)

    # Weighted combination of values
    output = np.matmul(attention_weights, V)
    return output, attention_weights


def transformer_decoder_layer(x, encoder_output,
                              W_self_q, W_self_k, W_self_v,
                              W_cross_q, W_cross_k, W_cross_v,
                              W_ff1, W_ff2, b_ff1, b_ff2):
    """
    A single Transformer decoder layer, illustrating the three
    sub-layers that Gomez helped design and implement:

    1. Masked self-attention (causal, cannot look ahead)
    2. Cross-attention (attend to encoder output)
    3. Feed-forward network (position-wise transformation)

    In the full model each sub-layer is wrapped with a residual
    connection followed by layer normalization. The residuals are
    shown here; layer normalization is omitted for clarity.
    The attention projections must map d_model to d_model so that
    the residual additions are shape-compatible.
    """
    seq_len = x.shape[0]
    causal_mask = create_causal_mask(seq_len)

    # Sub-layer 1: Masked self-attention
    Q_self = np.matmul(x, W_self_q)
    K_self = np.matmul(x, W_self_k)
    V_self = np.matmul(x, W_self_v)
    self_attn_output, _ = masked_self_attention(
        Q_self, K_self, V_self, causal_mask
    )
    x = x + self_attn_output  # Residual connection

    # Sub-layer 2: Cross-attention to encoder output (no mask needed)
    Q_cross = np.matmul(x, W_cross_q)
    K_cross = np.matmul(encoder_output, W_cross_k)
    V_cross = np.matmul(encoder_output, W_cross_v)
    cross_scores = np.matmul(Q_cross, K_cross.T) / np.sqrt(K_cross.shape[-1])
    # Subtract the row maximum before exponentiating to avoid overflow
    cross_scores = cross_scores - np.max(cross_scores, axis=-1, keepdims=True)
    cross_weights = np.exp(cross_scores) / np.sum(
        np.exp(cross_scores), axis=-1, keepdims=True
    )
    cross_attn_output = np.matmul(cross_weights, V_cross)
    x = x + cross_attn_output  # Residual connection

    # Sub-layer 3: Position-wise feed-forward network
    ff_hidden = np.maximum(0, np.matmul(x, W_ff1) + b_ff1)  # ReLU
    ff_output = np.matmul(ff_hidden, W_ff2) + b_ff2
    x = x + ff_output  # Residual connection

    return x
```

Why It Mattered

The Transformer's impact on the field is hard to overstate. Before 2017, machine translation systems at Google processed sequences using LSTM-based encoder-decoder models that were inherently slow to train because of their sequential computation. Within two years of the Transformer's introduction, translation systems built on it were delivering improved quality at higher throughput, because parallel processing across the sequence let them train on far more data in the same amount of time. But machine translation was only the beginning.

The Transformer's true significance became apparent when researchers began scaling it. In 2018, OpenAI released GPT (Generative Pre-trained Transformer), which used only the decoder side of the Transformer — the side Gomez had helped implement — to build a language model trained on a large corpus of text. A few months later, Google released BERT (Bidirectional Encoder Representations from Transformers), which used only the encoder side. Both models demonstrated that pre-training a Transformer on massive amounts of unlabeled text, then fine-tuning it on specific tasks, could achieve state-of-the-art results across a vast range of natural language processing benchmarks. The era of large language models had begun, and it was built entirely on the architecture that Gomez and his seven co-authors had described in that 2017 paper.

By 2025, the Transformer was not just the dominant architecture in NLP; it had become the dominant architecture across nearly all of AI. Vision Transformers (ViTs) displaced convolutional neural networks on many image recognition benchmarks. Audio Transformers replaced specialized signal processing pipelines for speech recognition. Protein-folding models like AlphaFold 2 used Transformer-derived architectures. Code generation tools like GitHub Copilot ran on Transformer-based models. The self-attention mechanism had proven to be one of those rare innovations that improves not just one task but the learning of representations across virtually any domain. The "Attention Is All You Need" paper has been cited over 130,000 times, making it one of the most cited papers in the history of science, not just computer science.

Other Contributions and Founding Cohere

Google Brain and Academic Research

Gomez's time at Google Brain extended beyond the Transformer paper. He continued contributing to research on neural network architectures and training methods, publishing work on topics ranging from efficient model training to novel attention variants. His experience at Google Brain gave him a firsthand understanding of both the potential and the practical challenges of deploying large-scale AI systems — knowledge that would prove essential when he later built his own company. He also returned to the University of Toronto to complete his studies, eventually pursuing graduate research that deepened his expertise in natural language processing and generative modeling.

During this period, Gomez witnessed a growing divergence in the AI landscape. On one side, companies like OpenAI and Google were building ever-larger language models — GPT-2 in 2019, GPT-3 in 2020, PaLM in 2022 — demonstrating capabilities that astonished the research community and the public alike. On the other side, the vast majority of businesses had no practical way to use these models. The models were too large to run on-premises, too expensive to fine-tune, and too difficult to integrate into existing enterprise systems. The gap between AI's demonstrated capabilities and its real-world deployment in business was enormous and growing wider with each new model release.

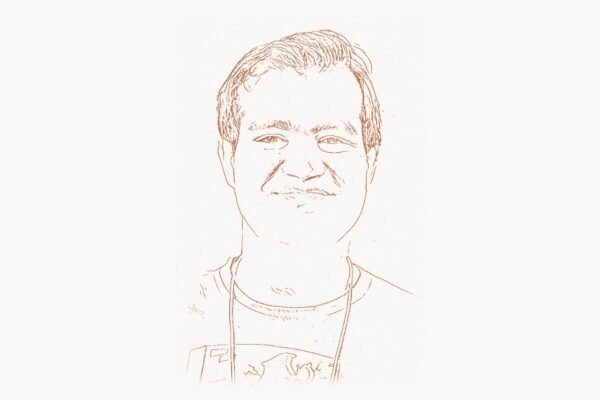

Founding Cohere

In 2019, Gomez co-founded Cohere alongside Ivan Zhang, a fellow University of Toronto graduate, and Nick Frosst, who had also worked with Geoffrey Hinton at Google Brain. The company's thesis was clear: the Transformer architecture that Gomez had helped create was going to reshape every industry that involved language — customer service, legal analysis, content creation, search, healthcare documentation, financial analysis — but most organizations lacked the expertise and infrastructure to build and deploy these models themselves. Cohere would provide the platform.

Unlike OpenAI, which initially focused on building the largest and most capable general-purpose models, Cohere targeted enterprise customers from the start. The company's value proposition was practical: it offered large language models that could be fine-tuned on a company's proprietary data, deployed in the company's own cloud environment (including on-premises), and integrated into existing workflows through clean APIs. This enterprise-first approach was not merely a business strategy — it reflected Gomez's technical understanding that real-world AI deployment requires solving a different set of problems than research benchmarks: data privacy, regulatory compliance, latency constraints, cost optimization, and the ability to update models without disrupting production systems.

Cohere's technical work has been substantial. The company developed its own family of large language models: the Command series for text generation and instruction following, and the Embed series for converting text into vector representations suitable for search and classification. In 2024, Cohere released Command R, a model specifically designed for retrieval-augmented generation (RAG), the technique of combining a language model with a search system to ground its responses in specific documents. This was a particularly important development for enterprise customers, who need their AI systems to answer questions based on their specific data rather than generating responses from the model's general training data. Cohere has also been a strong proponent of multilingual AI, training models that work across over 100 languages, a critical capability for global enterprises that competitors were slower to prioritize.

By 2025, Cohere had raised more than $900 million from investors including Nvidia, Oracle, Salesforce, and the Canada Pension Plan Investment Board. The company's enterprise client list included major financial institutions, technology companies, and government agencies. Gomez, as CEO, had successfully positioned Cohere as a primary alternative for organizations that needed enterprise-grade AI with flexible deployment options and strong data privacy guarantees.

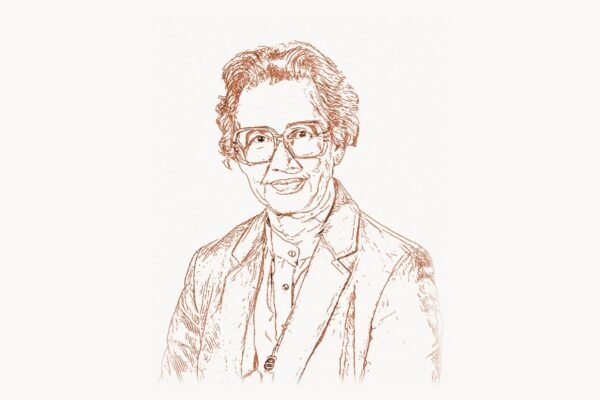

Cohere For AI and Open Research

One of Gomez's more distinctive decisions was the creation of Cohere For AI, a nonprofit research lab affiliated with the company. Cohere For AI publishes open research, releases open-weight models like Aya (a massively multilingual model trained on data from over 100 languages contributed by thousands of researchers worldwide), and supports academic collaborations. This commitment to open research set Cohere apart from competitors who were increasingly restricting access to their models and research findings. Gomez has argued publicly that the long-term health of the AI field depends on maintaining a vibrant open research ecosystem, even as commercial competition intensifies.

Philosophy and Vision

Key Principles

Gomez's approach to AI development is shaped by several core convictions that distinguish him from many of his contemporaries in the field. First, he believes that AI should be practically useful, not merely impressive. While the AI industry has often been driven by benchmark performance and demo spectaculars — models that can write poetry, play games, or generate photorealistic images — Gomez has consistently focused on the less glamorous but more economically important question of how AI can solve real business problems at scale. This pragmatism is reflected in Cohere's product decisions: the company has prioritized reliability, cost efficiency, and ease of integration over raw capability, understanding that an enterprise customer cares more about whether a model can accurately summarize their legal contracts than whether it can produce creative fiction.

Second, Gomez is a vocal advocate for data privacy and sovereignty in AI. He has repeatedly argued that organizations should not be forced to send their sensitive data to a third-party API to use AI, and that deployment flexibility — the ability to run models on-premises, in a private cloud, or in specific geographic regions — is not a luxury feature but a fundamental requirement for responsible AI adoption. This stance resonates particularly strongly with customers in regulated industries like healthcare, finance, and government, where data residency and compliance requirements make API-only services impractical or impossible.

Third, Gomez has been outspoken about the importance of multilingual AI. While much of the AI industry's attention has been focused on English-language models, Gomez has argued that truly useful AI must work across languages and cultures. This conviction drove Cohere's investment in multilingual training data and the Aya project, which involved collaborators from over 100 countries building training datasets in their native languages.

Fourth, Gomez takes a measured position on AI safety. He has expressed concern about the long-term risks of advanced AI systems while also pushing back against what he sees as premature or counterproductive regulation. His view is that the best way to ensure AI is developed safely is to ensure that a diverse ecosystem of companies and researchers have access to the technology, rather than concentrating power in a small number of organizations. This perspective aligns with his support for open research and his skepticism toward the more alarmist narratives that have dominated public discourse about AI risk.

Legacy and Impact

Aidan Gomez's legacy is anchored in a single architectural innovation that reshaped the entire field of artificial intelligence — and in his subsequent work to make that technology practically useful for organizations around the world. As a co-author of the Transformer paper, Gomez is part of a small group of eight researchers whose work has been cited over 130,000 times and whose architecture underlies virtually every significant AI system in production today. The models built on the Transformer — GPT-4, Claude, Gemini, LLaMA, Mistral, and hundreds of others — collectively process billions of queries per day and have created an industry valued at hundreds of billions of dollars.

But Gomez's impact extends beyond the original paper. Through Cohere, he has helped define the enterprise AI market, demonstrating that there is a viable path between the open-source community's free-but-unsupported models and the API-only services of the largest AI labs. Cohere's emphasis on deployment flexibility, data privacy, and multilingual capability has pushed the entire industry to take these concerns more seriously. And through Cohere For AI and the Aya project, Gomez has contributed to the democratization of AI research in a way that benefits researchers and users in countries that are often overlooked by the major AI labs.

What makes Gomez's career particularly remarkable is its compression. He co-authored one of the most consequential papers in the history of computing as an undergraduate intern. He co-founded a company valued at over $5 billion before turning thirty. And he has done this while consistently advocating for a vision of AI development that is practical, inclusive, and responsible. Whether Cohere becomes one of the dominant AI platforms of the next decade or whether the enterprise AI market evolves in unexpected directions, Gomez's contributions — both technical and institutional — have already secured his place in the history of artificial intelligence alongside his co-authors Ashish Vaswani, Noam Shazeer, and the other architects of the Transformer.

The speed of Gomez's career also reflects a broader truth about the current moment in technology: the AI field is moving so quickly that the gap between research contribution and commercial impact has collapsed to almost nothing. When John Backus created Fortran in 1957, it took decades for the full impact of high-level programming languages to be felt. When Gomez co-created the Transformer in 2017, the architecture was powering multibillion-dollar products within five years. This acceleration creates both opportunities and responsibilities — and how Gomez and his generation of AI leaders navigate those responsibilities will shape the technology's impact for decades to come.

```python
"""
Cohere-style RAG (Retrieval-Augmented Generation) pipeline.

This demonstrates the enterprise AI approach that Gomez built
Cohere around: instead of relying solely on a model's training
data, ground responses in specific documents using embeddings
and retrieval. This pattern reduces the hallucination problem
that makes raw LLMs unreliable for enterprise use.
"""

import zlib
from typing import List, Tuple

import numpy as np


class SimpleEmbedder:
    """
    Simplified text embedding model (a toy stand-in for an
    embedding service such as Cohere's Embed API).

    Converts text into dense vector representations for
    semantic similarity search.
    """

    def __init__(self, vocab_size=10000, embed_dim=384, seed=0):
        self.embed_dim = embed_dim
        # In production, these weights are learned from
        # billions of text examples across 100+ languages.
        # Here they are random, seeded so that runs are repeatable.
        rng = np.random.default_rng(seed)
        self.token_embeddings = rng.standard_normal(
            (vocab_size, embed_dim)
        ) * 0.02

    def embed(self, text: str) -> np.ndarray:
        """Convert text to a fixed-size vector representation."""
        # Simplified: hash tokens to indices, average embeddings.
        # crc32 is used instead of hash() because Python salts
        # string hashes differently in every process.
        tokens = text.lower().split()
        if not tokens:
            return np.zeros(self.embed_dim)
        indices = [
            zlib.crc32(t.encode("utf-8")) % len(self.token_embeddings)
            for t in tokens
        ]
        token_vecs = self.token_embeddings[indices]

        # Mean pooling: average all token vectors
        doc_vector = np.mean(token_vecs, axis=0)

        # L2 normalize so that a dot product equals cosine similarity
        doc_vector /= np.linalg.norm(doc_vector) + 1e-8
        return doc_vector


class RAGPipeline:
    """
    Retrieval-Augmented Generation pipeline.

    Gomez recognized that enterprise customers need AI that
    answers from THEIR data, not from general training data.
    RAG addresses this by:

    1. Embedding documents into vector space
    2. Finding relevant documents for each query
    3. Providing those documents as context to the LLM
    """

    def __init__(self):
        self.embedder = SimpleEmbedder()
        self.documents: List[str] = []
        self.doc_vectors: List[np.ndarray] = []

    def index_documents(self, documents: List[str]):
        """Embed and store documents for retrieval."""
        self.documents = documents
        self.doc_vectors = [self.embedder.embed(doc) for doc in documents]
        print(f"Indexed {len(documents)} documents")

    def retrieve(self, query: str, top_k: int = 3) -> List[Tuple[str, float]]:
        """Find the most relevant documents for a query."""
        query_vec = self.embedder.embed(query)

        # Cosine similarity between query and all documents
        # (vectors are already L2-normalized)
        similarities = [
            np.dot(query_vec, doc_vec)
            for doc_vec in self.doc_vectors
        ]

        # Return top-k most similar documents
        ranked = sorted(
            enumerate(similarities), key=lambda x: x[1], reverse=True
        )
        return [
            (self.documents[idx], score)
            for idx, score in ranked[:top_k]
        ]

    def generate_with_context(self, query: str) -> str:
        """
        The full RAG pipeline:

        1. Retrieve relevant documents
        2. Build a grounded prompt
        3. Generate response (omitted here: the prompt is returned
           instead of being sent to a model)
        """
        results = self.retrieve(query, top_k=3)

        # Build context from retrieved documents
        context = "\n---\n".join([doc for doc, score in results])

        # In production, this prompt goes to Cohere's Command R
        prompt = (
            f"Answer based ONLY on the following documents:\n"
            f"{context}\n\n"
            f"Question: {query}\n"
            f"Answer:"
        )
        return prompt


# Example: Enterprise knowledge base
rag = RAGPipeline()
rag.index_documents([
    "Q3 revenue was $12.4M, up 34% year-over-year.",
    "The new API endpoint supports batch processing of up to 1000 requests.",
    "Employee satisfaction survey showed 87% positive responses.",
    "Data retention policy requires deletion after 90 days.",
])

# Query grounded in company-specific data
results = rag.retrieve("What was our quarterly revenue?")
for doc, score in results:
    print(f"[{score:.3f}] {doc}")
```

Key Facts

- Full name: Aidan Gomez

- Born: circa 1995, Canada

- Education: University of Toronto (Computer Science), studied under Geoffrey Hinton

- Key paper: "Attention Is All You Need" (2017) — co-author of the Transformer architecture

- Company: Co-founder and CEO of Cohere (founded 2019)

- Co-founders: Ivan Zhang, Nick Frosst

- Funding: Over $900 million raised (investors include Nvidia, Oracle, Salesforce)

- Valuation: Over $5.5 billion (as of 2025)

- Key products: Command (text generation), Embed (vector embeddings), Command R (RAG-optimized), Aya (multilingual)

- Research initiative: Cohere For AI — nonprofit research lab publishing open research

- Recognition: Forbes 30 Under 30, named one of the most influential people in AI

- Philosophy: Enterprise-first AI, deployment flexibility, multilingual access, open research

Frequently Asked Questions

What exactly was Aidan Gomez's role in creating the Transformer?

Gomez was one of eight co-authors of the 2017 paper "Attention Is All You Need," which introduced the Transformer architecture. His specific contribution was on the decoder side of the model, the component responsible for generating output sequences token by token using masked self-attention. He was an undergraduate intern at Google Brain at the time, making him the youngest member of the team. The decoder architecture he helped implement became the foundation for all autoregressive language models, including the GPT series, Claude, Gemini, and LLaMA. The Transformer was a collaborative effort: the paper credits all eight authors, among them Ashish Vaswani (the first listed author) and Noam Shazeer, as equal contributors. Within that effort, Gomez's work on the decoder was a critical part of the architecture that would prove most influential for generative AI.

How is Cohere different from OpenAI?

While both companies build large language models, their strategies differ significantly. OpenAI has focused primarily on building the most capable general-purpose models (GPT-4, GPT-4o) and serving them through a centralized API, targeting both consumers and developers. Cohere, by contrast, has focused exclusively on enterprise customers from the start, emphasizing deployment flexibility (on-premises, private cloud, and multi-cloud options), data privacy, fine-tuning on proprietary data, and multilingual support across over 100 languages. Cohere does not offer consumer products — it builds AI infrastructure for businesses. This enterprise-first approach means Cohere competes less on benchmark performance and more on the practical considerations that matter to large organizations: regulatory compliance, cost predictability, integration with existing systems, and the ability to maintain control over sensitive data.

What is the Aya project and why does it matter?

Aya is a massively multilingual AI research initiative led by Cohere For AI, the company's nonprofit research arm. The project assembled over 3,000 independent researchers from more than 100 countries to build high-quality training datasets in their native languages, then used those datasets to train language models that work across over 100 languages. The significance of Aya is both technical and social. Technically, it demonstrated that multilingual language models can achieve strong performance across a wide range of languages, including many that are underrepresented in conventional AI training data. Socially, it represented one of the largest collaborative open-science efforts in AI history, involving researchers from countries that are typically excluded from the AI development process. The Aya models and datasets were released openly, enabling researchers worldwide to build on the work. Gomez has described Aya as a reflection of his core belief that AI should be accessible to people regardless of what language they speak.

What can we expect from Gomez and Cohere in the coming years?

Cohere's trajectory suggests several likely directions. The company is expected to continue deepening its enterprise AI platform, with particular focus on retrieval-augmented generation (RAG) systems that allow organizations to build AI applications grounded in their specific data. Cohere has also signaled increasing investment in AI agents — systems that can not only generate text but take actions in the real world, such as executing workflows, querying databases, and interacting with enterprise software. Gomez has spoken publicly about his belief that the next phase of AI will be defined not by ever-larger models but by models that are more efficient, more specialized, and more deeply integrated into business processes. Given Cohere's strong position in the enterprise market and Gomez's technical credibility as a co-creator of the Transformer, the company is well positioned to be a major player in this next phase of AI development.