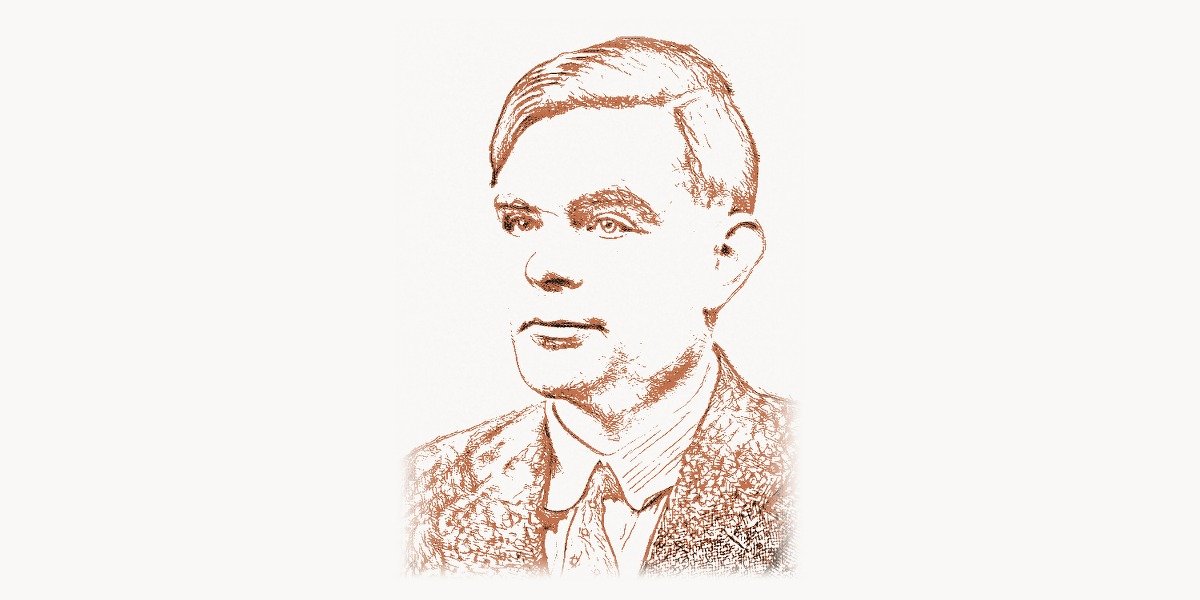

On June 8, 1954, a cleaning woman found Alan Turing dead in his bed at his home in Wilmslow, Cheshire, England. He was 41 years old. An inquest determined the cause of death to be cyanide poisoning; a half-eaten apple lay beside his bed. The coroner ruled it a suicide. Just twelve years earlier, Turing had been at the center of Britain's secret codebreaking effort at Bletchley Park during World War II. That work helped break the German Enigma cipher and, by some historical estimates, shortened the war by two to four years, saving millions of lives. Because it remained classified, the public knew nothing of it. By 1954, he had been prosecuted for homosexuality (then a criminal offense in England), stripped of his security clearance, and subjected to chemical castration, which he had accepted as a condition of probation in place of prison. The tragedy of Turing’s death has, justly, drawn enormous attention. But the tragedy must not overshadow what he built. Before his death, Turing laid the mathematical foundations of computer science, defined the concept of the algorithm, proved fundamental theorems about the limits of computation, designed one of the first stored-program computers, proposed an influential operational test for machine intelligence, and wrote pioneering work on mathematical biology. He did all of this in a career that spanned barely 20 years. Every computer in the world, from the phone in your pocket to the servers running web frameworks, is a physical realization, with finite memory, of the abstract machine Turing described in 1936.

Early Life and Path to Technology

Alan Mathison Turing was born on June 23, 1912, in Maida Vale, London. His father, Julius Mathison Turing, was a member of the Indian Civil Service, and Alan spent much of his early childhood separated from his parents, who were frequently in India. He was raised by foster families in England and showed an early and intense aptitude for mathematics and science. At Sherborne School, a traditional English boarding school, Turing was something of a misfit — brilliant at mathematics and science but indifferent to the classical curriculum the school emphasized.

In 1931, Turing entered King’s College, Cambridge, where he studied mathematics. He was elected a Fellow of King’s College in 1935 at the age of 22, based on a dissertation proving the central limit theorem (independently rediscovering a result previously obtained by Jarl Waldemar Lindeberg). But it was his work the following year that would change history. In 1936, Turing published “On Computable Numbers, with an Application to the Entscheidungsproblem” — a paper that established the theoretical foundation of computer science.

After Cambridge, Turing spent two years (1936–1938) at Princeton University, where he completed his Ph.D. under Alonzo Church. His doctoral thesis extended his work on computability and explored ordinal logics. At Princeton, he worked within a mathematical logic community centered on Church, in a field still absorbing Kurt Gödel's incompleteness theorems, and he came to know John von Neumann. Von Neumann was so impressed by Turing that he offered him a postdoctoral position at the Institute for Advanced Study, but Turing chose to return to England, a decision that would prove pivotal when war broke out in 1939.

The Breakthrough: The Turing Machine and Computability Theory

The Technical Innovation

Turing’s 1936 paper addressed the Entscheidungsproblem (decision problem), posed by David Hilbert and Wilhelm Ackermann in 1928: Is there a mechanical procedure that can determine, for any statement of first-order logic, whether it is provable? To answer this question, Turing first needed to define precisely what “mechanical procedure” meant, and in doing so he arrived at an abstract model of the general-purpose computer.

Turing described an abstract machine (now called a Turing machine) consisting of: an infinitely long tape divided into cells, each containing a symbol from a finite alphabet; a head that can read and write symbols on the tape and move one cell left or right; a state register that stores the current state of the machine; and a finite table of instructions (transition function) that determines, given the current state and the symbol being read, what symbol to write, which direction to move, and what state to enter next.

```python
# A Turing machine simulation in Python
# This demonstrates Turing's original concept from 1936

class TuringMachine:
    """
    The most fundamental model of computation.
    Any general-purpose computer can be simulated by one, given enough tape.
    """

    def __init__(self, tape, initial_state, transition_function, accept_states):
        self.tape = list(tape)
        self.head = 0
        self.state = initial_state
        # {(state, symbol): (new_state, write_symbol, direction)}, direction is 'L' or 'R'
        self.transitions = transition_function
        self.accept_states = accept_states

    def step(self):
        """Apply one transition; return False when the machine halts."""
        # Cells beyond the written part of the tape read as blank ('_')
        symbol = self.tape[self.head] if self.head < len(self.tape) else '_'
        key = (self.state, symbol)
        if key not in self.transitions:
            return False  # Halt: no transition defined
        new_state, write_symbol, direction = self.transitions[key]

        # Write (extend the tape on the right if the head is past its end)
        if self.head >= len(self.tape):
            self.tape.append('_')
        self.tape[self.head] = write_symbol

        # Move (extend the tape on the left if the head falls off the start)
        self.head += 1 if direction == 'R' else -1
        if self.head < 0:
            self.tape.insert(0, '_')
            self.head = 0

        # Transition
        self.state = new_state
        return True

    def run(self, max_steps=10000):
        """Run until the machine halts (or max_steps); accept if the final state is accepting."""
        steps = 0
        # Check the step budget first so the machine never exceeds max_steps
        while steps < max_steps and self.step():
            steps += 1
        return self.state in self.accept_states

# Example: a Turing machine that checks whether a binary number is even
# (scan right to the first blank, step back, and inspect the last digit)
transitions = {
    ('start', '0'): ('start', '0', 'R'),
    ('start', '1'): ('start', '1', 'R'),
    ('start', '_'): ('back', '_', 'L'),
    ('back', '0'): ('even', '0', 'R'),
    ('back', '1'): ('odd', '1', 'R'),
}

tm = TuringMachine("1100", 'start', transitions, {'even'})
print(tm.run())  # True: 1100 (binary 12) is even
```

The genius of this definition was its universality. Turing argued, by analyzing what a human clerk following fixed rules with pencil and paper can do, that any computation that can be carried out by a mechanical procedure can be carried out by a Turing machine. Together with Alonzo Church's equivalent proposal based on the lambda calculus, this claim is known as the Church-Turing thesis. It is a thesis rather than a theorem, because "mechanical procedure" is an informal notion, but it is the foundational working assumption of computer science. Turing then proved that a Universal Turing Machine could simulate any other Turing machine, given a description of that machine as input. This is precisely what a modern computer does: it is a general-purpose machine that executes different programs (descriptions of specific computations).

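Turing then used this framework to show that some well-defined problems cannot be solved by any machine. He proved that no Turing machine can decide, for every machine description, whether that machine behaves in a certain way, and from this he derived that the Entscheidungsproblem is unsolvable. The best-known modern form of the result is the Halting Problem: there is no algorithm that can determine, for every possible program and input, whether the program will eventually halt. (The name came later; Turing's own formulation asked whether a machine is "circle-free.") Its unsolvability is one of the most important results in mathematics and computer science. It established that there are fundamental limits to what any computer can do, limits that apply regardless of how fast the hardware is or how clever the software.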
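The core of the argument can be sketched in Python under loudly stated assumptions: a candidate decider is modeled as a deterministic Python function `halts(program)` that always returns `True` or `False`, and programs take no input. The names `make_contrary` and `refute` are hypothetical. Whatever decider is supplied, the "contrary" program built from it does the opposite of the decider's prediction about that very program, so the decider must be wrong at least once.

```python
from typing import Callable

Program = Callable[[], None]
Decider = Callable[[Program], bool]  # hypothetical "does this program halt?" oracle

def make_contrary(halts: Decider) -> Program:
    """Build a program that does the opposite of what `halts` predicts about it."""
    def contrary() -> None:
        if halts(contrary):
            while True:  # predicted to halt, so loop forever
                pass
        # predicted to loop forever, so return (halt) immediately
    return contrary

def refute(halts: Decider) -> tuple[bool, bool]:
    """Return (predicted_halts, actually_halts) for the decider's own contrary program."""
    program = make_contrary(halts)
    predicted = halts(program)
    if predicted:
        # By construction contrary() would enter `while True`, so we do not run it.
        return predicted, False
    program()  # safe: contrary() returns at once when the prediction is "loops"
    return predicted, True

# Try a few naive deciders; each is wrong about its own contrary program.
for name, decider in [("always halts", lambda p: True), ("always loops", lambda p: False)]:
    predicted, actual = refute(decider)
    print(f"{name}: predicted halts={predicted}, actually halts={actual}")
```

The sketch only checks particular deciders; the proof is that the same construction defeats every possible decider, which is why none can exist.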

Why It Mattered

Turing's 1936 paper created computer science as a mathematical discipline. Before Turing, there were people building calculating machines and people studying mathematical logic, but there was no formal theory connecting the two. Turing provided that connection. His work showed that computation was a well-defined mathematical concept, that there existed a universal computing machine, and that there were precise limits to what computation could achieve.

The practical implications were enormous. The stored-program computer, a machine that treats its own program as data that can be read, modified, and executed, embodies the same idea as the Universal Turing Machine, although historians still debate how directly Turing's paper shaped the first stored-program designs. When John McCarthy designed Lisp's eval function (a program that interprets other programs), he was in effect implementing a universal machine in software. When a JavaScript engine compiles and runs code at runtime, it plays the same role.
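To make the "program as data" idea concrete, here is a minimal Python sketch in the spirit of McCarthy's eval: a tiny interpreter for a Lisp-like language written as nested Python lists. The syntax, the name `evaluate`, and the built-ins in `GLOBAL_ENV` are assumptions for illustration; this is not the definition of eval from McCarthy's Lisp work.

```python
from typing import Any

Env = dict[str, Any]

def evaluate(expr: Any, env: Env) -> Any:
    """Evaluate a tiny Lisp-like program written as nested Python lists."""
    if isinstance(expr, (int, float)):
        return expr                      # numbers evaluate to themselves
    if isinstance(expr, str):
        return env[expr]                 # symbols are looked up in the environment
    head, *rest = expr
    if head == "if":                     # ["if", test, then, else]
        test, then_branch, else_branch = rest
        return evaluate(then_branch if evaluate(test, env) else else_branch, env)
    if head == "lambda":                 # ["lambda", [params...], body]
        params, body = rest
        # The body stays as plain data until the function is called
        return lambda *args: evaluate(body, {**env, **dict(zip(params, args))})
    func = evaluate(head, env)           # otherwise: function application
    return func(*[evaluate(arg, env) for arg in rest])

GLOBAL_ENV: Env = {
    "+": lambda a, b: a + b,
    "*": lambda a, b: a * b,
    "<": lambda a, b: a < b,
}

# A program is just a data structure: ((lambda (x) (* x x)) 7)
square_of_seven = [["lambda", ["x"], ["*", "x", "x"]], 7]
print(evaluate(square_of_seven, GLOBAL_ENV))  # 49
```

Because `square_of_seven` is an ordinary list, another program could build or rewrite it before handing it to `evaluate`; that interchangeability of code and data is what the universal machine captures.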

Beyond Computability: Other Contributions

During World War II, Turing worked at Bletchley Park, the British codebreaking center, from September 1939. He was a central figure in breaking the German Enigma cipher. Turing developed the Bombe, an electromechanical device that automated the search for Enigma settings. The Bombe built on earlier work by Polish mathematicians (Marian Rejewski, Jerzy Różycki, and Henryk Zygalski), including Rejewski's bomba, but Turing's design, particularly his method of detecting logical contradictions in candidate settings, made the search vastly more effective. At its peak, Bletchley Park was decrypting thousands of German messages per day, providing Allied commanders with intelligence that shaped major campaigns, including the Battle of the Atlantic and the planning of the D-Day invasion.
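The Bombe itself tested rotor settings for contradictions given a crib (a guessed fragment of plaintext). A much simpler contradiction test, applied when deciding where a crib could sit at all, rests on a known property of Enigma: it never enciphered a letter as itself, so any alignment in which a crib letter coincides with the ciphertext letter is impossible. The Python sketch below shows only that crib-placement filter, not the Bombe; the function name and the example strings are hypothetical.

```python
def possible_crib_positions(ciphertext: str, crib: str) -> list[int]:
    """Return offsets where `crib` could align with `ciphertext`, using the
    rule that Enigma never enciphered a letter as itself."""
    positions = []
    for offset in range(len(ciphertext) - len(crib) + 1):
        window = ciphertext[offset:offset + len(crib)]
        # Any coincidence (same letter in the same place) is a contradiction
        if all(c != p for c, p in zip(window, crib)):
            positions.append(offset)
    return positions

# "WETTER" (German for "weather") was a classic crib; the ciphertext is made up.
print(possible_crib_positions("XWETTERYZ", "WETTER"))  # [3]
```

Only the surviving offsets would be worth the far more expensive work of a Bombe run.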

After the war, Turing worked at the National Physical Laboratory (NPL) in London, where he designed the Automatic Computing Engine (ACE), one of the first detailed designs for a stored-program computer. The ACE design was remarkably advanced: Turing's report proposed dedicated instructions for calling and returning from subroutines, discussed floating-point arithmetic, and used the computer's memory to store both programs and data. The Pilot ACE, a reduced version built after Turing had left NPL, ran its first program in 1950 and was, by some accounts, the fastest computer in the world at the time.
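Two of the ideas credited to the ACE design here, a single memory holding both instructions and data and instructions for calling and returning from subroutines, can be illustrated with a toy machine. The instruction names (`LOAD`, `ADD`, `STORE`, `CALL`, `RET`, `HALT`), the accumulator, the return-address stack, and `run_machine` are all hypothetical; this is not ACE's actual instruction set.

```python
def run_machine(memory: list, pc: int = 0, max_steps: int = 1000) -> list:
    """Run a toy stored-program machine. Instructions (op, arg) and data (ints)
    share the same `memory` list; CALL/RET use a return-address stack."""
    acc = 0
    return_stack: list[int] = []
    for _ in range(max_steps):
        op, arg = memory[pc]
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "CALL":
            return_stack.append(pc)  # remember where to resume
            pc = arg
        elif op == "RET":
            pc = return_stack.pop()
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction {op!r}")
    raise RuntimeError("step limit reached")

program = [
    ("LOAD", 8),   # 0: acc = memory[8]
    ("CALL", 5),   # 1: call the add-ten subroutine
    ("CALL", 5),   # 2: call it again
    ("STORE", 9),  # 3: memory[9] = acc
    ("HALT", 0),   # 4: stop
    ("ADD", 7),    # 5: subroutine body: acc += memory[7]
    ("RET", 0),    # 6: return to the caller
    10,            # 7: data: the constant 10
    5,             # 8: data: input value
    0,             # 9: data: output cell
]
print(run_machine(program)[9])  # 25
```

Because instructions are ordinary memory cells, a `STORE` aimed at an instruction address would rewrite the program itself, which is exactly the flexibility (and the hazard) of the stored-program design.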

In 1948, Turing moved to the University of Manchester, where he worked on the Manchester Mark 1 computer and turned his attention to artificial intelligence. His 1950 paper "Computing Machinery and Intelligence," published in the journal Mind, proposed what is now known as the Turing Test. Rather than asking "Can machines think?" (a question he considered too vague to be useful), Turing proposed a practical test, which he called the imitation game: a human interrogator communicates through a text interface with both a machine and a human, and if the interrogator cannot reliably tell which is which, the machine should be considered intelligent. This operational definition of intelligence has shaped AI research for over 70 years and remains a subject of active debate in the age of large language models.
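Turing's paper leaves "reliably" informal; his own prediction was that within about fifty years an average interrogator would have no more than a 70 per cent chance of making the right identification after five minutes of questioning. One common modern way to make the criterion precise, offered here as an assumption rather than Turing's definition, is to ask whether an interrogator's identifications over repeated rounds are significantly better than coin-flipping. A minimal sketch using an exact one-sided binomial test; the function names and the 0.05 threshold are illustrative choices.

```python
from math import comb

def chance_p_value(correct: int, trials: int) -> float:
    """Exact one-sided binomial p-value: the probability of at least `correct`
    right identifications in `trials` rounds by pure guessing (p = 0.5)."""
    if not 0 <= correct <= trials:
        raise ValueError("need 0 <= correct <= trials")
    ways = sum(comb(trials, k) for k in range(correct, trials + 1))
    return ways / 2 ** trials

def reliably_distinguishes(correct: int, trials: int, alpha: float = 0.05) -> bool:
    """Assumed criterion: the interrogator tells machine from human "reliably"
    if their accuracy is significantly better than chance at level alpha."""
    return chance_p_value(correct, trials) < alpha

# Hypothetical results from 10 rounds of the imitation game
print(reliably_distinguishes(9, 10))  # True: 9/10 is unlikely by guessing
print(reliably_distinguishes(6, 10))  # False: 6/10 is consistent with guessing
```

Under this reading a machine "passes" when interrogators cannot beat chance by a statistically meaningful margin, which is a stricter bar than Turing's 70 per cent prediction.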

In the last years of his life, Turing turned to mathematical biology. His 1952 paper "The Chemical Basis of Morphogenesis" proposed a mathematical model (reaction-diffusion equations) for how patterns such as stripes, spots, and spirals form in biological organisms during development. This work was decades ahead of its time, and Turing-type mechanisms gained substantial experimental support in the 2000s and 2010s. Turing's morphogenesis model is now used in computer graphics, materials science, and developmental biology.
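Turing's central mathematical observation was that diffusion, normally a smoothing force, can destabilize a chemically stable uniform state when two substances diffuse at sufficiently different rates. For linearized kinetics with Jacobian J and diffusion coefficients du and dv, a perturbation with wavenumber k grows at a rate given by the largest real part of the eigenvalues of J - k²·diag(du, dv). The sketch below scans k for one illustrative J; the numbers are arbitrary and not taken from Turing's paper.

```python
import math

def growth_rate(jac, du, dv, k):
    """Largest real part of the eigenvalues of J - k^2 * diag(du, dv):
    the linear growth rate of a spatial perturbation with wavenumber k."""
    (a, b), (c, d) = jac
    m11 = a - du * k * k
    m22 = d - dv * k * k
    trace = m11 + m22
    det = m11 * m22 - b * c
    disc = trace * trace / 4 - det
    if disc >= 0:
        return trace / 2 + math.sqrt(disc)
    return trace / 2  # complex-conjugate pair: both have real part trace/2

# Illustrative linearized kinetics, stable without diffusion (trace -1, det 1)
J = ((1.0, -1.0), (3.0, -2.0))
wavenumbers = [i * 0.01 for i in range(301)]  # k from 0 to 3

equal = max(growth_rate(J, 1.0, 1.0, k) for k in wavenumbers)
unequal = max(growth_rate(J, 1.0, 20.0, k) for k in wavenumbers)
print(f"equal diffusion:   max growth {equal:.3f}")    # negative: uniform state stays stable
print(f"unequal diffusion: max growth {unequal:.3f}")  # positive: a band of wavelengths grows
```

The wavenumber with the largest positive growth rate sets the spacing of the emerging stripes or spots, which is why Turing's analysis predicts a characteristic pattern size.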

Philosophy and Engineering Approach

Key Principles

Turing's intellectual approach was characterized by a willingness to ask fundamental questions and follow them to their logical conclusions, regardless of where they led. His work on computability began with a philosophical question (What does it mean to compute?) and produced a mathematical answer that founded an entire discipline. His work on AI began with a philosophical question (Can machines think?) and produced an operational definition that reframed the debate entirely.

He was both a theorist and a practical engineer, comfortable working at both levels simultaneously. At Bletchley Park, he combined mathematical insight with engineering pragmatism, designing machines that had to work under real-world constraints. Around 1948, he and David Champernowne wrote a chess-playing program, Turochamp; because no available computer could run it, Turing later played a game by executing its rules by hand, simulating the computer's operations himself. It was a concrete, hands-on way of testing a theoretical idea.

Turing had a distinctive approach to problem-solving that his colleagues often described as unconventional. He frequently arrived at correct results through paths that others found difficult to follow, making intuitive leaps that he would then justify rigorously. His proofs were often original not just in their results but in their methods — the diagonalization argument in his 1936 paper, for instance, was a novel application of a technique from set theory to computation.
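Cantor's diagonal argument, the set-theory technique referred to here, fits in a few lines. Given any list of 0/1 sequences (represented, as a simplifying assumption, by the first n digits of n sequences), flipping the i-th digit of the i-th sequence produces a sequence that differs from every entry in the list. Turing applied the same move to an enumeration of machines and the sequences they compute. The function name `diagonal_flip` and the example table are hypothetical.

```python
def diagonal_flip(rows: list[list[int]]) -> list[int]:
    """Return a 0/1 sequence whose i-th digit differs from row i's i-th digit."""
    if any(len(row) < len(rows) for row in rows):
        raise ValueError("each row needs at least len(rows) digits")
    return [1 - rows[i][i] for i in range(len(rows))]

table = [
    [0, 1, 1, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 1],
    [1, 0, 1, 1],
]
new_row = diagonal_flip(table)
print(new_row)  # [1, 0, 1, 0]
# The new row disagrees with row i at position i, so it cannot appear in the table.
assert all(new_row[i] != row[i] for i, row in enumerate(table))
```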

He was also deeply interested in the nature of mind, which informed his work on AI. He argued that machines could in principle think, not merely simulate thinking, and that the distinction between "real" and "simulated" intelligence was less meaningful than most people assumed. This view, radical in the 1950s, has since become influential in cognitive science and the philosophy of mind.

Legacy and Modern Relevance

Turing's influence on modern computing is pervasive. Every computer, programming language, algorithm, and database rests on the theoretical framework he established in 1936. The concept of computational universality (that a single general-purpose machine can perform any computation) is the reason your laptop can run a web browser, a code editor, a game, and a spreadsheet. Turing's proof that such a universal machine is possible supplied the intellectual foundation for building one.

The Turing Test, proposed in 1950, has taken on new urgency in the era of large language models. When systems such as GPT-4, Claude, and Gemini produce conversation that is difficult to distinguish from human writing, they approach the scenario Turing described 75 years ago. The debate he started, about what it means for a machine to be intelligent and whether behavioral indistinguishability from humans is a sufficient criterion, is now one of the most active areas of AI research and philosophy.

In 2009, British Prime Minister Gordon Brown issued a formal apology for the persecution Turing suffered. In 2013, Queen Elizabeth II granted Turing a posthumous royal pardon. In 2019, the Bank of England announced that Turing would appear on the new 50-pound note, which entered circulation in June 2021. The Alan Turing Institute, the UK's national institute for data science and AI, was established in 2015 and named in his honor.

The ACM's Turing Award, established in 1966, is the highest honor in computer science and is often described as the Nobel Prize of computing. Recipients include John McCarthy (1971), Edsger Dijkstra (1972), Donald Knuth (1974), John Backus (1977), Dennis Ritchie and Ken Thompson (1983), Niklaus Wirth (1984), and Tim Berners-Lee (2016). The award is named after Turing because his work is the foundation on which all of theirs was built.

Turing's life was cut tragically short, and it is impossible to know what he might have accomplished had he lived. He was working on mathematical biology, artificial intelligence, and the foundations of computation, and any one of these might have yielded further breakthroughs. What he did accomplish in 41 years is staggering: he created the theoretical foundation of computer science, played a vital part in the Allied victory in the most destructive war in history, proposed the defining test of artificial intelligence, and pioneered mathematical biology. Few scientists in any field have had such a broad and lasting impact.

Key Facts

- Born: June 23, 1912, Maida Vale, London, England

- Died: June 7, 1954, Wilmslow, Cheshire, England

- Known for: Founding theoretical computer science, the Turing machine, breaking the Enigma code, the Turing Test, morphogenesis models

- Key projects: "On Computable Numbers" (1936), Bombe codebreaking machine (1939-1940), ACE computer design (1945), Turing Test (1950), morphogenesis theory (1952)

- Awards: Fellow of the Royal Society (1951), OBE (1946), posthumous Royal Pardon (2013)

- Education: B.A. from King's College, Cambridge (1934), Ph.D. from Princeton University (1938)

Frequently Asked Questions

Who is Alan Turing?

Alan Turing (1912–1954) was a British mathematician, logician, and computer scientist who is considered the father of theoretical computer science and artificial intelligence. He formalized the concept of computation with the Turing machine (1936), played a central role in breaking German codes during World War II at Bletchley Park, proposed the Turing Test for machine intelligence (1950), and made pioneering contributions to mathematical biology. The ACM Turing Award, the highest honor in computing, is named after him.

What did Alan Turing create?

Turing created the mathematical concept of the Turing machine (1936), which formalized what it means to compute and proved the existence of problems no algorithm can solve (the Halting Problem). He designed the Bombe, an electromechanical machine that helped break the German Enigma cipher during WWII. He designed the Automatic Computing Engine (ACE), one of the earliest stored-program computer designs. He proposed the Turing Test (1950), an operational definition of machine intelligence. He developed reaction-diffusion models for biological pattern formation (1952).

Why is Alan Turing important?

Turing is important because he established the mathematical foundation of all of computer science. Every general-purpose computer is, in principle, a physical implementation of his universal machine concept, limited only by its finite memory. His proof that the Halting Problem is undecidable defined fundamental limits of computation. His wartime codebreaking contributed directly to the Allied victory in World War II. His Turing Test framed the central question of AI research for 75 years and remains relevant in the era of large language models. He is arguably the single most important figure in the history of computing.