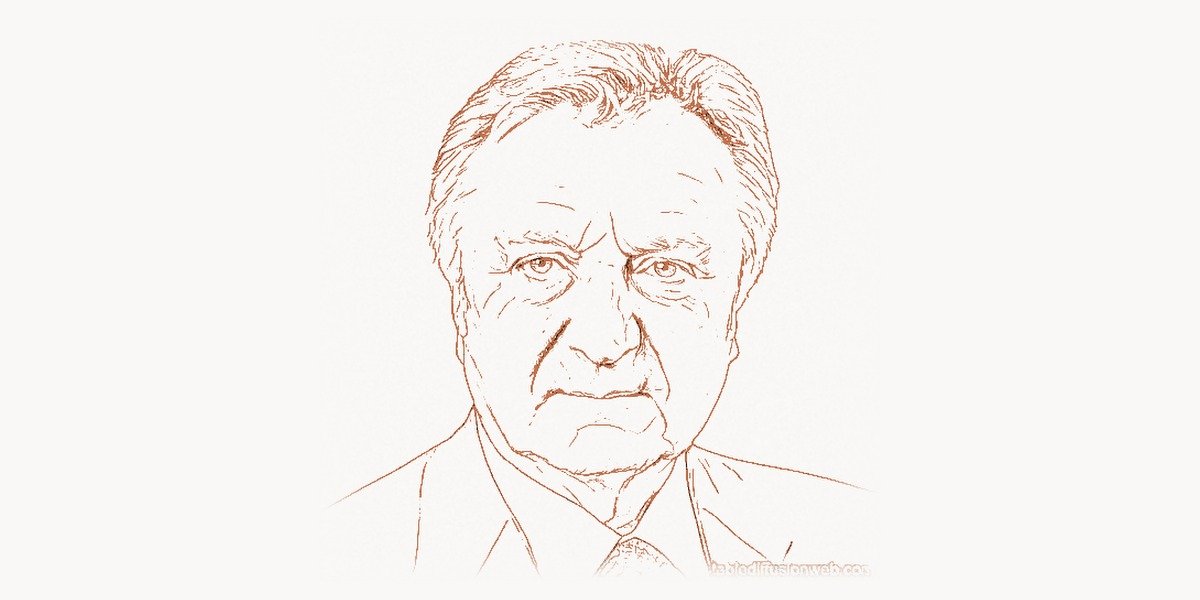

In the fall of 2012, a 26-year-old Ukrainian-Canadian graduate student entered a deep convolutional neural network into the ImageNet Large Scale Visual Recognition Challenge, an annual competition in which teams build systems that classify images into 1,000 categories, using a training set of about 1.2 million labeled images. The network, which later became known as AlexNet, achieved a top-5 error rate of 15.3%. The second-place entry, built on the hand-engineered feature extraction methods that represented decades of painstaking computer vision research, scored 26.2%. The gap of nearly eleven percentage points was so large that it did not look like an incremental improvement. It looked like a different kind of technology entirely.

The researcher’s name was Alex Krizhevsky. He was a Ph.D. student at the University of Toronto, working in the lab of Geoffrey Hinton. The paper he co-authored with Hinton and fellow student Ilya Sutskever, “ImageNet Classification with Deep Convolutional Neural Networks”, has been cited over 150,000 times and is widely regarded as one of the most important papers in the modern history of artificial intelligence.

AlexNet did not merely win a competition. It ended one era of AI research and began another. Before AlexNet, deep learning was a marginal pursuit championed by a small group of researchers whom the mainstream AI community largely ignored. After AlexNet, deep learning became the dominant paradigm in artificial intelligence, and the consequences, from self-driving cars to large language models to protein structure prediction, are still unfolding.

Early Life and Path to Technology

Alex Krizhevsky was born in 1986 in Nizhny Novgorod (then Gorky), in the former Soviet Union — the same city, coincidentally, where Ilya Sutskever was born in the same year. Krizhevsky’s family emigrated when he was young, eventually settling in Canada. He grew up in Toronto, where the University of Toronto’s computer science department would prove decisive for his career and for the future of artificial intelligence.

Krizhevsky enrolled at the University of Toronto, where he studied computer science. Even as an undergraduate, he was drawn to the problem of visual recognition — how to build systems that could look at an image and understand what it contained. This was one of the oldest and most stubborn problems in AI. Humans perform visual recognition effortlessly and unconsciously — we identify objects, faces, scenes, and spatial relationships in milliseconds. But building machines that could do the same had defeated researchers for decades. The difficulty lies in the gap between raw pixel data and semantic meaning: a photograph of a cat is, to a computer, nothing but a matrix of numbers. Extracting the concept “cat” from that matrix is a problem of staggering complexity.

Krizhevsky joined Geoffrey Hinton’s lab for his graduate work. Hinton, along with Yann LeCun and Yoshua Bengio, was one of the so-called “godfathers of deep learning” — researchers who had spent decades developing neural network methods when the rest of the AI community had largely abandoned them. In the early 2000s, the mainstream view was that neural networks were too difficult to train, too computationally expensive, and too prone to getting stuck in poor local minima. The dominant approaches to computer vision relied on hand-crafted features — algorithms like SIFT (Scale-Invariant Feature Transform) and HOG (Histogram of Oriented Gradients) that human researchers had carefully designed to detect edges, corners, textures, and shapes. These features were then fed into traditional machine learning classifiers like support vector machines.

Hinton’s lab operated on a different premise: that if you could build a deep enough neural network and train it on enough data, the network would learn to extract its own features — features that might be far better than anything humans could design. The problem was making this work in practice. Deep networks suffered from vanishing gradients (signals growing weaker as they propagated backward through many layers), slow training times, and overfitting (memorizing training data rather than learning generalizable patterns). Krizhevsky’s contribution was to solve these practical problems with a combination of architectural innovation, engineering ingenuity, and a crucial bet on hardware that almost nobody else was making.

The AlexNet Breakthrough

The Technical Innovation

AlexNet was a deep convolutional neural network with eight learned layers (five convolutional layers followed by three fully connected layers) containing approximately 60 million trainable parameters. By modern standards, this is minuscule: today’s large language models have hundreds of billions or trillions of parameters. But in 2012, it was one of the largest convolutional networks that had been trained on a real-world visual recognition task, and it required several innovations to make training feasible at all.
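The "approximately 60 million" figure can be checked with a little arithmetic. The sketch below is a rough tally, not an official accounting: the filter counts and sizes come from the architecture summary in this article, while the per-filter input depths (48 and 192 for the layers that only see one GPU's feature maps) and the 6×6×256 input to the first fully connected layer are assumptions based on the commonly cited two-GPU layout.

```python
# Rough parameter tally for AlexNet (weights + biases per layer).
# Assumption: Conv2, Conv4, and Conv5 filters only see the feature maps
# held on their own GPU, so their input depth is half the previous layer.
conv_layers = [
    # (name, num_filters, kernel_size, input_depth_seen_by_each_filter)
    ("Conv1", 96, 11, 3),
    ("Conv2", 256, 5, 48),
    ("Conv3", 384, 3, 256),
    ("Conv4", 384, 3, 192),
    ("Conv5", 256, 3, 192),
]
fc_layers = [
    # (name, inputs, outputs); 6*6*256 is the assumed flattened Conv5 output
    ("FC6", 6 * 6 * 256, 4096),
    ("FC7", 4096, 4096),
    ("FC8", 4096, 1000),
]


def count_parameters(conv_layers, fc_layers):
    """Return a dict mapping layer name to its number of weights and biases."""
    counts = {}
    for name, filters, k, depth in conv_layers:
        counts[name] = filters * (k * k * depth) + filters
    for name, n_in, n_out in fc_layers:
        counts[name] = n_in * n_out + n_out
    return counts


param_counts = count_parameters(conv_layers, fc_layers)
total_params = sum(param_counts.values())

for name, n in param_counts.items():
    print(f"{name}: {n:>12,}")
print(f"Total: {total_params:,}")
```

Under these assumptions the total comes to just under 61 million, and the three fully connected layers hold roughly 96% of it, which helps explain why dropout was applied there.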

The first key innovation was the use of ReLU (Rectified Linear Unit) activation functions. Previous neural networks typically used sigmoid or hyperbolic tangent (tanh) activation functions, which suffer from saturation: for inputs of large magnitude, whether positive or negative, the gradient approaches zero, effectively killing the learning signal. This vanishing gradient problem made it extremely difficult to train networks deeper than a few layers. The ReLU function, defined simply as f(x) = max(0, x), does not saturate for positive inputs. Its gradient is either 0 (for negative inputs) or 1 (for positive inputs), so the gradient passes through every active unit without being shrunk. Krizhevsky showed that networks with ReLU activations trained several times faster than equivalent networks with tanh activations, a speedup that was essential for making deep network training practical.
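Saturation matters more as networks get deeper, because backpropagation multiplies one activation derivative per layer. The sketch below is a deliberately simplified illustration: it ignores the weight matrices and assumes every layer sees the same hypothetical pre-activation value of 2.0, so it isolates only the effect of the activation function.

```python
import numpy as np


def sigmoid_grad(x):
    """Derivative of the logistic sigmoid; its maximum is 0.25 at x = 0."""
    s = 1.0 / (1.0 + np.exp(-x))
    return s * (1.0 - s)


def relu_grad(x):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return (x > 0).astype(float)


depth = 8  # same number of learned layers as AlexNet
pre_activation = np.full(depth, 2.0)  # hypothetical input to every layer

# Product of the per-layer activation derivatives along the backward path.
sigmoid_chain = np.prod(sigmoid_grad(pre_activation))
relu_chain = np.prod(relu_grad(pre_activation))

print(f"Sigmoid chain over {depth} layers: {sigmoid_chain:.3e}")
print(f"ReLU chain over {depth} layers:    {relu_chain:.1f}")
```

Even in the best case for the sigmoid (every pre-activation exactly 0), the product is bounded by 0.25 raised to the depth, whereas a path of active ReLU units contributes a factor of exactly 1.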

```python
import numpy as np

# Core innovations behind AlexNet (2012), the CNN that
# sparked the deep learning revolution

# ============================================
# Innovation 1: ReLU Activation Function
# ============================================
# Before AlexNet: sigmoid/tanh saturate, killing gradients
# After AlexNet: ReLU became the default for deep networks

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def relu(x):
    """The activation function that changed everything.
    Simple, fast, and gradient-preserving for positive inputs."""
    return np.maximum(0, x)

# Demonstrate the vanishing gradient problem
x = np.array([5.0])
print(f"Sigmoid gradient at x=5: {(sigmoid(x) * (1 - sigmoid(x)))[0]:.6f}")
print(f"ReLU gradient at x=5: {1.0}")
# Sigmoid gradient ≈ 0.0066 → gradient nearly vanishes
# ReLU gradient = 1.0 → gradient flows freely through deep layers

# ============================================
# Innovation 2: GPU-Parallelized Convolutions
# ============================================
# Krizhevsky split the network across TWO NVIDIA GTX 580 GPUs
# Each GPU handled half the feature maps (filters)
# Cross-GPU communication only at specific layers

def conv2d_simplified(image, kernel, stride=1):
    """Simplified 2D convolution, the core operation of a CNN.
    AlexNet used 96 filters (11x11x3) in the first layer alone.
    Krizhevsky wrote custom CUDA kernels to run these on GPUs."""
    h, w = image.shape
    kh, kw = kernel.shape
    out_h = (h - kh) // stride + 1
    out_w = (w - kw) // stride + 1
    output = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            region = image[
                i*stride : i*stride + kh,
                j*stride : j*stride + kw
            ]
            output[i, j] = np.sum(region * kernel)
    return output

# ============================================
# Innovation 3: Dropout Regularization
# ============================================
# Prevents overfitting by randomly zeroing 50% of neurons
# during training, which forces the network to learn redundant
# representations instead of co-adapting features

def dropout(layer_output, keep_prob=0.5, training=True):
    """Dropout as used in AlexNet's fully connected layers.
    At test time, multiply by keep_prob instead of dropping."""
    if not training:
        return layer_output * keep_prob
    # Training: zero each unit with probability 1 - keep_prob. No rescaling
    # here, because the test-time branch already multiplies by keep_prob.
    mask = np.random.binomial(1, keep_prob, size=layer_output.shape)
    return layer_output * mask

# ============================================
# Innovation 4: Data Augmentation
# ============================================
# Krizhevsky artificially expanded the training set by:
# - Random 224x224 crops from 256x256 images
# - Horizontal flips (doubles effective dataset)
# - PCA-based color jittering (altering RGB intensities)

def random_crop(image, crop_size=224, original_size=256):
    """Extract a random crop, one of AlexNet's augmentations.
    A 256x256 image yields many possible 224x224 crops,
    effectively multiplying the training data."""
    max_offset = original_size - crop_size
    # randint excludes the upper bound, so add 1 to allow the last offset
    y = np.random.randint(0, max_offset + 1)
    x = np.random.randint(0, max_offset + 1)
    return image[y:y+crop_size, x:x+crop_size]

def horizontal_flip(image, prob=0.5):
    """Random horizontal flip, which doubles the effective dataset."""
    if np.random.random() < prob:
        return image[:, ::-1]
    return image

# ============================================
# AlexNet Architecture Summary
# ============================================
alexnet_layers = [
    "Conv1: 96 filters (11×11), stride 4 → ReLU → LRN → MaxPool",
    "Conv2: 256 filters (5×5), pad 2 → ReLU → LRN → MaxPool",
    "Conv3: 384 filters (3×3), pad 1 → ReLU",
    "Conv4: 384 filters (3×3), pad 1 → ReLU",
    "Conv5: 256 filters (3×3), pad 1 → ReLU → MaxPool",
    "FC6: 4096 neurons → ReLU → Dropout(0.5)",
    "FC7: 4096 neurons → ReLU → Dropout(0.5)",
    "FC8: 1000 neurons (output classes) → Softmax",
]

print("\nAlexNet Architecture (Krizhevsky et al., 2012):")
print("Total parameters: ~60 million")
print("Training: ~90 epochs on 1.2M ImageNet images")
print("Hardware: 2× NVIDIA GTX 580 (3GB each), ~6 days training")
print("Result: 15.3% top-5 error (vs 26.2% for second place)\n")
for layer in alexnet_layers:
    print(f" {layer}")
```

The second innovation, and the one most personally associated with Krizhevsky, was the use of GPUs for neural network training. In 2012, GPUs were primarily used for rendering video games. They were designed to perform many simple mathematical operations in parallel, which is exactly the kind of computation that matrix multiplication requires, and matrix multiplication is the core operation of neural networks. Krizhevsky recognized that NVIDIA GPUs, originally designed for graphics, could accelerate neural network training by orders of magnitude compared to CPUs. He wrote custom CUDA kernels (low-level GPU programs) that implemented convolutional operations with exceptional efficiency. This was not a trivial engineering task. CUDA programming requires managing memory transfers between CPU and GPU, optimizing memory access patterns for GPU architecture, and handling the parallel execution model. Krizhevsky's CUDA implementation was efficient enough that it was widely reused and influenced the GPU-accelerated deep learning frameworks that followed. Today, NVIDIA's AI business rests on the insight that Krizhevsky demonstrated so publicly: that GPUs are the natural hardware for training neural networks.

AlexNet was trained on two NVIDIA GTX 580 graphics cards, each with only 3 GB of memory. Because the network was too large to fit on a single GPU, Krizhevsky devised an ingenious model-parallel architecture: he split the network across both GPUs, with each GPU responsible for half the feature maps at each layer. The GPUs communicated at certain layers (allowing filters on one GPU to access feature maps on the other) but operated independently at others. This split-GPU design was driven by hardware constraints, but it inadvertently demonstrated a principle — model parallelism — that would become central to training the massive models of the 2020s.
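The two kinds of layers described above can be sketched with plain matrix products. This is a toy illustration on the CPU, not Krizhevsky's implementation: the "devices" are just array slices, and the sizes, variable names, and random weights are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
in_features, out_features = 8, 6
x = rng.standard_normal(in_features)
W = rng.standard_normal((out_features, in_features))

# Communicating layer: each device owns half of the output units but
# reads ALL inputs, so the concatenated result equals the single-device one.
W_dev0, W_dev1 = np.split(W, 2, axis=0)
y_parallel = np.concatenate([W_dev0 @ x, W_dev1 @ x])
y_single = W @ x

# Independent layer: each device reads only its own half of the inputs,
# which removes cross-device traffic and halves the number of weights.
x_dev0, x_dev1 = np.split(x, 2)
W_local0 = rng.standard_normal((out_features // 2, in_features // 2))
W_local1 = rng.standard_normal((out_features // 2, in_features // 2))
y_local = np.concatenate([W_local0 @ x_dev0, W_local1 @ x_dev1])

full_weights = W.size
local_weights = W_local0.size + W_local1.size

print("Split result matches single device:", np.allclose(y_parallel, y_single))
print(f"Weights: full={full_weights}, independent halves={local_weights}")
```

The trade-off is visible in the counts: the independent layout cuts both communication and parameters, at the cost of each half never seeing the other half's features in that layer.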

The third major innovation was dropout regularization, a technique developed in Hinton's group that Krizhevsky applied to AlexNet's first two fully connected layers. During training, dropout randomly sets each neuron's output to zero with probability 0.5. This forces the network to learn redundant, distributed representations rather than relying on any single neuron. At test time, all neurons are active and their outputs are multiplied by 0.5, so that each output matches its average value under the random training-time masks. Dropout substantially reduced overfitting and went on to become one of the most widely used regularizers in deep learning.
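The reason for scaling outputs at test time can be checked numerically. The sketch below uses made-up activations and averages many random training-time masks; it shows that multiplying by the keep probability at test time reproduces the expected training-time output. The trial count and activation values are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
keep_prob = 0.5
activations = np.array([1.0, 2.0, 3.0, 4.0])  # hypothetical layer outputs

# Training time: each unit is kept with probability keep_prob, else zeroed.
num_trials = 20_000
masks = rng.binomial(1, keep_prob, size=(num_trials, activations.size))
train_mean = (masks * activations).mean(axis=0)

# Test time: keep every unit, but scale by keep_prob.
test_output = activations * keep_prob

print("Mean over random masks:", np.round(train_mean, 2))
print("Scaled test-time output:", test_output)
```

Many modern libraries instead divide by the keep probability during training ("inverted dropout") and leave test-time outputs untouched; the two conventions are equivalent in expectation but must not be combined.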

Additional innovations included local response normalization (a form of competitive inhibition between neurons), overlapping max pooling (using pooling windows that overlap rather than tile), and extensive data augmentation — random crops, horizontal flips, and PCA-based color perturbations that artificially expanded the training set and improved the network's ability to generalize.
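Two of these pieces are easy to sketch in NumPy. The pooling function below uses a 3×3 window with stride 2, so neighbouring windows share a row or column. The normalization function divides each activation by a term built from the squared activations of adjacent channels. The default hyperparameters (n=5, k=2, alpha=1e-4, beta=0.75) follow the values reported in the AlexNet paper, but treat them as assumptions here, since this article does not list them; the loops are written for clarity rather than speed.

```python
import numpy as np


def max_pool2d(x, window=3, stride=2):
    """Max pooling on a 2D map; window > stride makes the windows overlap."""
    h, w = x.shape
    out_h = (h - window) // stride + 1
    out_w = (w - window) // stride + 1
    out = np.empty((out_h, out_w), dtype=x.dtype)
    for i in range(out_h):
        for j in range(out_w):
            r, c = i * stride, j * stride
            out[i, j] = x[r:r + window, c:c + window].max()
    return out


def local_response_norm(a, n=5, k=2.0, alpha=1e-4, beta=0.75):
    """Normalize each channel by the squared activity of its n neighbours.

    a has shape (channels, height, width) and must be floating point.
    """
    channels = a.shape[0]
    squared = a ** 2
    out = np.empty_like(a)
    for i in range(channels):
        lo = max(0, i - n // 2)
        hi = min(channels - 1, i + n // 2)
        denom = (k + alpha * squared[lo:hi + 1].sum(axis=0)) ** beta
        out[i] = a[i] / denom
    return out


feature_map = np.arange(25, dtype=float).reshape(5, 5)
pooled = max_pool2d(feature_map)            # 5x5 input -> 2x2 output
normed = local_response_norm(np.ones((8, 4, 4)))

print(pooled)
print(normed.shape)
```

With a 3-wide window and stride 2, column 2 of the input contributes to both output columns, which is the overlap the text refers to.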

Why It Mattered

The significance of AlexNet is hard to overstate. Before 2012, the computer vision community had spent decades developing hand-engineered feature extraction pipelines. Teams of Ph.D. researchers would spend years designing algorithms to detect specific visual patterns: edges at particular orientations, texture gradients, color histograms, spatial relationships. These hand-crafted features were then fed into traditional machine learning classifiers. The state of the art on ImageNet improved only incrementally from year to year. AlexNet broke this paradigm. It showed that a neural network, given enough data and compute, could learn features directly from raw pixels that were dramatically superior to anything humans had designed.

The impact on the ImageNet competition was immediate: by 2014, every competitive entry used deep convolutional networks. More broadly, AlexNet triggered a cascade that transformed the entire field of artificial intelligence. Within five years of its publication, deep learning had become the dominant approach in computer vision, speech recognition, natural language processing, game playing, robotics, drug discovery, and scientific computing. The paper is the inflection point that separates the era of hand-engineered AI from the era of learned representations. Every neural network trained today — from the image classifiers in smartphone cameras to the large language models behind conversational AI — descends conceptually from AlexNet.

The GPU Computing Bet

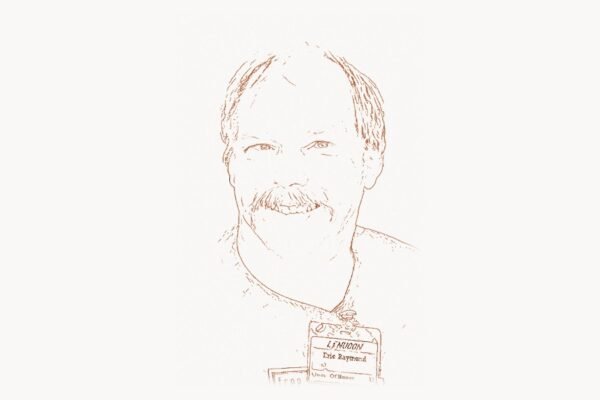

Krizhevsky's decision to use GPUs was not simply a pragmatic engineering choice. It was a bet against the mainstream that carried substantial technical risk. In 2010 and 2011, when Krizhevsky was developing the CUDA code that would power AlexNet, GPU computing for machine learning was still rare. NVIDIA's CUDA platform had been available since 2007, but it was used primarily for scientific and engineering simulations. The machine learning community overwhelmingly used CPUs, and most researchers did not know how to write GPU code.

Krizhevsky taught himself CUDA programming and wrote a highly optimized library for GPU-accelerated convolutional neural network operations. This library, called cuda-convnet (later cuda-convnet2), became the foundation for GPU-based deep learning. It implemented efficient convolution, pooling, activation, and backpropagation operations directly in CUDA, bypassing the overhead of higher-level frameworks. The library was released as open source and was widely adopted by researchers before being superseded by frameworks like Theano, Caffe, and eventually TensorFlow and PyTorch.
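One reason convolution maps so well onto GPUs is that it can be rewritten as a single large matrix multiplication. The sketch below shows the "im2col" trick in NumPy: every image patch becomes a row, every filter becomes a row, and one matrix product computes all outputs. This is a common formulation offered for illustration only; it is not a description of how cuda-convnet itself was implemented, and the function names are hypothetical.

```python
import numpy as np


def im2col(image, kh, kw, stride=1):
    """Unroll every kh x kw patch of a 2D image into one row of a matrix."""
    h, w = image.shape
    out_h = (h - kh) // stride + 1
    out_w = (w - kw) // stride + 1
    cols = np.empty((out_h * out_w, kh * kw), dtype=image.dtype)
    for i in range(out_h):
        for j in range(out_w):
            r, c = i * stride, j * stride
            cols[i * out_w + j] = image[r:r + kh, c:c + kw].ravel()
    return cols, (out_h, out_w)


def conv2d_as_matmul(image, kernels, stride=1):
    """Apply a bank of filters with one matrix product.

    kernels has shape (num_filters, kh, kw); the result has shape
    (num_filters, out_h, out_w).
    """
    num_filters, kh, kw = kernels.shape
    cols, (out_h, out_w) = im2col(image, kh, kw, stride)
    flat_kernels = kernels.reshape(num_filters, kh * kw)
    out = flat_kernels @ cols.T  # shape: (num_filters, out_h * out_w)
    return out.reshape(num_filters, out_h, out_w)


rng = np.random.default_rng(0)
image = rng.standard_normal((7, 7))
kernels = rng.standard_normal((4, 3, 3))
features = conv2d_as_matmul(image, kernels, stride=2)
print(features.shape)
```

The unrolled matrix duplicates overlapping pixels, so this approach trades extra memory for the ability to reuse highly optimized matrix-multiply routines.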

The cuda-convnet code was remarkable for its optimization. Krizhevsky exploited the memory hierarchy of NVIDIA GPUs (shared memory, texture memory, constant memory) to maximize throughput. He hand-tuned thread block sizes, memory access patterns, and kernel launch configurations for the specific operations needed by convolutional neural networks. This level of hardware-aware optimization was unusual in machine learning research, where most work was done at a much higher level of abstraction. Krizhevsky's willingness to work close to the metal, writing hand-tuned, low-level CUDA kernels in service of a machine learning goal, was a key factor in AlexNet's success. The training time for AlexNet was approximately five to six days on two GTX 580 GPUs. Without GPU acceleration, the same computation would have taken months or longer on the CPUs of the era, making the research impractical.

Beyond AlexNet: Career and Contributions

After the AlexNet breakthrough, Krizhevsky's career took a path that diverged from the high-profile trajectories of his collaborators. While Hinton became a vice president at Google and later won the Nobel Prize in Physics (2024) for foundational work on neural networks, and while Sutskever co-founded OpenAI and became one of the most prominent figures in AI research, Krizhevsky pursued a quieter path.

In 2012, Krizhevsky, Hinton, and Sutskever co-founded a company called DNNresearch, which Google acquired in March 2013. At Google, Krizhevsky worked on deep learning research, contributing to the rapidly growing effort to apply neural networks across Google's products. However, he left Google in 2017 and has maintained a relatively low public profile since then. He has not published extensively since the AlexNet paper, and he has given few interviews or public talks.

This trajectory is notable precisely because it contrasts with the celebrity culture that has grown around AI research. Krizhevsky built the system that started the deep learning revolution, then stepped away from the spotlight. His contribution was concentrated in a single, extraordinary moment of engineering and scientific insight — a moment that changed the world.

The AlexNet paper itself deserves attention as a piece of scientific writing. It is remarkably clear and specific. Every architectural decision is documented and justified. The experimental methodology is transparent. The results are presented without hype or exaggeration. The paper's first author — Krizhevsky — was responsible for the implementation, the GPU code, the network architecture, and the training procedure. It represents a complete engineering achievement: not just a theoretical insight, but a working system that demonstrably outperformed everything that came before it.

Philosophy and Engineering Approach

Key Principles

Krizhevsky's approach to research was characterized by deep engineering pragmatism. While theoretical elegance is valued in academic computer science, Krizhevsky's work demonstrated that practical engineering — getting the code to run fast enough, the memory usage low enough, the training stable enough — is often the decisive factor in research breakthroughs. AlexNet was not built on a new theoretical insight about neural networks. The convolutional neural network architecture had been developed by Yann LeCun in the late 1980s. The backpropagation algorithm had been popularized by Hinton and others in the 1980s. What was new about AlexNet was the engineering: the GPU implementation, the careful architecture tuning, the combination of regularization techniques, and the scale of training data made possible by the ImageNet dataset.

This engineering-first approach reflects a broader truth about the history of deep learning. Many of the ideas behind modern AI (neural networks, backpropagation, convolutional architectures, attention mechanisms) were proposed years or even decades before they became practically useful. What changed was not the theory but the engineering: faster hardware (GPUs), larger datasets (ImageNet, Common Crawl), better software frameworks (TensorFlow, PyTorch), and the accumulated engineering knowledge of how to train deep networks reliably. Krizhevsky's AlexNet was the proof that engineering could unlock the latent potential of ideas that had existed for decades.

A second characteristic of Krizhevsky's approach was willingness to work at the hardware level. Most machine learning researchers treat hardware as a black box — they write code in Python, use high-level libraries, and let the framework handle hardware optimization. Krizhevsky went in the opposite direction, writing CUDA kernels that directly controlled GPU thread execution. This willingness to cross the abstraction boundary between software and hardware gave him a performance advantage that was essential to AlexNet's success. It also established a pattern that continues today: the most important advances in AI often come from researchers who understand both the algorithms and the hardware they run on.

A third principle visible in Krizhevsky's work is the importance of scale. AlexNet was trained on the full ImageNet dataset — 1.2 million labeled images in 1,000 categories — which was the largest high-quality image dataset available at the time. Previous neural network research had been limited to much smaller datasets (tens of thousands of images), and on those smaller datasets, neural networks did not clearly outperform hand-crafted methods. It was the combination of a large, deep network with a large dataset that produced the breakthrough. This lesson — that scale matters, sometimes more than architectural innovation — has been the dominant theme of AI research ever since, culminating in the massive language models of the 2020s.

```python
import numpy as np

# The deep learning pipeline that AlexNet demonstrated:
# Raw Data → Learned Features → Classification
# This replaced decades of hand-engineered computer vision

# ============================================
# Before AlexNet: Hand-Engineered Features
# ============================================
# Researchers manually designed feature detectors like SIFT, HOG
# This took years of domain expertise per feature type

def hand_engineered_pipeline(image):
    """Traditional CV pipeline (pre-2012).
    Each step was manually designed by researchers.

    NOTE: the helper functions called below are illustrative names only
    and are not defined in this file. The function documents the shape of
    the pipeline and is never called."""
    edges = detect_edges_sobel(image)          # Step 1: edge detection
    gradients = compute_hog(edges)             # Step 2: gradient histograms
    keypoints = extract_sift(image)            # Step 3: keypoint matching
    features = bag_of_visual_words(keypoints)  # Step 4: feature encoding
    prediction = svm_classify(features)        # Step 5: SVM classifier
    return prediction

# Years of PhD research per step. Each step hand-tuned.
# Best ImageNet top-5 error: ~26% (2011)

# ============================================
# After AlexNet: End-to-End Learning
# ============================================
# The network learns its OWN features from raw pixels
# No human-designed feature extraction needed

class ConvolutionalBlock:
    """A single convolutional block, AlexNet's building unit.
    The network stacks these blocks to learn hierarchical features:
    Layer 1: edges, color blobs
    Layer 2: corners, textures
    Layer 3: object parts (eyes, wheels)
    Layer 4: object assemblies
    Layer 5: whole objects in context"""

    def __init__(self, in_channels, out_channels, kernel_size, stride=1):
        self.weights = np.random.randn(
            out_channels, in_channels, kernel_size, kernel_size
        ) * 0.01
        self.bias = np.zeros(out_channels)
        self.stride = stride

    def forward(self, x):
        # Convolution → ReLU → (optional pooling)
        # All parameters are LEARNED, not hand-designed
        conv_output = self._convolve(x)
        activated = np.maximum(0, conv_output)  # ReLU
        return activated

    def _convolve(self, x):
        """Naive unpadded convolution over a (channels, height, width) input."""
        out_channels, _, k, _ = self.weights.shape
        _, h, w = x.shape
        s = self.stride
        out_h = (h - k) // s + 1
        out_w = (w - k) // s + 1
        out = np.zeros((out_channels, out_h, out_w))
        for i in range(out_h):
            for j in range(out_w):
                patch = x[:, i*s:i*s + k, j*s:j*s + k]
                # Sum over (in_channels, k, k) for every filter at once
                out[:, i, j] = np.tensordot(self.weights, patch, axes=3) + self.bias
        return out

# ============================================
# The Key Insight: Features Emerge from Data
# ============================================
# When trained on 1.2 million images, the network discovers:
learned_features_by_layer = {
    "Layer 1": [
        "Gabor-like edge detectors at various orientations",
        "Color-opponent filters (red-green, blue-yellow)",
        "Frequency-selective filters",
    ],
    "Layer 2": [
        "Corner and junction detectors",
        "Texture patterns (striped, dotted, smooth)",
        "Color and edge combinations",
    ],
    "Layer 3-4": [
        "Object parts: eyes, noses, wheels, windows",
        "Repeated textures: fur, brick, fabric",
        "Spatial arrangements of lower-level features",
    ],
    "Layer 5": [
        "Whole-object detectors: faces, animals, vehicles",
        "Pose-invariant object representations",
        "Scene-level context features",
    ],
}

print("Features AlexNet learned WITHOUT human guidance:")
for layer, features in learned_features_by_layer.items():
    print(f"\n {layer}:")
    for f in features:
        print(f" → {f}")

print("\nThis hierarchy, from edges to objects, was discovered")
print("automatically by backpropagation through 60 million")
print("parameters over ~90 training epochs on ImageNet.")
```

Legacy and Modern Relevance

Alex Krizhevsky's legacy is defined by a single system that changed the course of technology. AlexNet did not just advance the state of the art in computer vision — it demonstrated that deep neural networks, trained on large datasets with GPU acceleration, could learn representations of the world that were fundamentally superior to anything humans could manually engineer. This demonstration triggered a chain reaction that has reshaped every domain of artificial intelligence and, increasingly, every domain of human activity that involves data.

The immediate descendants of AlexNet were a series of progressively deeper and more sophisticated convolutional networks. VGGNet (2014) showed that using smaller filters in deeper networks improved performance. GoogLeNet/Inception (2014) introduced multi-scale processing within a single layer. ResNet (2015) solved the degradation problem in very deep networks with skip connections, enabling networks with 152 layers, an order of magnitude deeper than AlexNet. Each of these architectures built directly on the foundation Krizhevsky established, and each pushed image recognition accuracy on ImageNet further toward and eventually beyond human-level performance.
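The skip-connection idea is simple enough to show in a few lines. The sketch below is a heavily simplified stand-in for a ResNet block: it uses dense matrices instead of convolutions and omits batch normalization, and the function names are hypothetical. It illustrates one intuition for why skip connections help: a residual block with all-zero weights passes its input straight through, so adding more blocks does not have to make the network worse.

```python
import numpy as np


def relu(x):
    return np.maximum(0, x)


def plain_block(x, W1, W2):
    """Two stacked layers with no shortcut."""
    return relu(W2 @ relu(W1 @ x))


def residual_block(x, W1, W2):
    """Same two layers, but the input is added back before the final ReLU."""
    return relu(x + W2 @ relu(W1 @ x))


x = np.array([1.0, 2.0, 3.0])
W_zero = np.zeros((3, 3))

print("Plain block, zero weights:   ", plain_block(x, W_zero, W_zero))
print("Residual block, zero weights:", residual_block(x, W_zero, W_zero))
```

With zero weights the plain block wipes out the signal, while the residual block returns the (non-negative) input unchanged, so the learned layers only need to model a correction to the identity.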

Beyond computer vision, the AlexNet moment catalyzed the application of deep learning to every corner of AI. In natural language processing, the success of learned representations in vision encouraged researchers to develop word embeddings (Word2Vec, 2013), recurrent neural network models for language, and eventually the Transformer architecture (2017) that powers today's large language models. In speech recognition, where deep neural networks had already begun to displace Gaussian mixture models in the years just before 2012, they quickly became the standard approach. In game playing, deep reinforcement learning produced systems that mastered Atari games (2013), Go (2016), and StarCraft (2019). In scientific computing, deep learning has accelerated drug discovery, protein structure prediction (AlphaFold), climate modeling, and materials science. All of these developments share a conceptual lineage with AlexNet's demonstration that learned representations outperform hand-engineered ones.

The GPU computing paradigm that Krizhevsky pioneered has become the hardware foundation of the entire AI industry. NVIDIA, whose gaming GPUs Krizhevsky repurposed for deep learning, has transformed into the world's most valuable semiconductor company, with a market capitalization exceeding $3 trillion. The company's AI-specific hardware (the A100, H100, and B200 data center GPUs) is designed specifically for the kind of matrix operations that Krizhevsky showed could be done on consumer graphics cards. Cloud providers like AWS, Google Cloud, and Azure now offer GPU clusters for AI training as a core service. The entire infrastructure of modern AI, from the hardware and the data centers to the power consumption and the supply chain, flows from the insight that Krizhevsky demonstrated in 2012: that neural networks and GPUs are natural partners.

Krizhevsky's open-source cuda-convnet library also helped establish a culture of open research in deep learning that persists today. By releasing his GPU code publicly, he enabled other researchers to build on his work without having to replicate months of CUDA optimization. This culture of open tools — continued by Theano, Caffe, TensorFlow, PyTorch, and Hugging Face — has been a key accelerator of AI progress. The contrast with previous eras of AI research, where implementations were often proprietary or unavailable, is stark. The open ecosystem that Krizhevsky helped initiate is one reason that AI has advanced so rapidly in the decade since AlexNet.

The story of AlexNet also illustrates a recurring pattern in the history of technology: breakthroughs often come not from entirely new ideas but from the right combination of existing ideas, applied at the right scale, at the right time. Convolutional neural networks had been developed by Yann LeCun in the late 1980s. Backpropagation had been popularized in the 1980s. GPU computing with CUDA had been available since 2007. The ImageNet dataset had been created by Fei-Fei Li and her team starting in 2007. What Krizhevsky did was combine all of these elements (deep CNNs, GPU training, large-scale data, and a set of practical training techniques) into a system that demonstrably worked. The individual components were not new; the synthesis was. This synthesis required both deep technical knowledge and exceptional engineering skill, and it is this combination that makes Krizhevsky's achievement so significant.

Alex Krizhevsky's name may be less well known to the general public than those of some of his contemporaries in AI. He has not led a major AI lab, co-founded a billion-dollar startup, or become a public commentator on AI policy. But within the field, his contribution is understood with crystalline clarity. He built the system that started the deep learning revolution. Every image classifier, every language model, every AI-powered application in use today exists in part because a graduate student in Toronto decided to train a deep neural network on GPUs and prove that it could outperform decades of hand-engineered computer vision. The AlexNet paper is the starting gun of the modern AI era, and Alex Krizhevsky is the person who pulled the trigger.

Key Facts

- Born: 1986, Nizhny Novgorod (Gorky), Soviet Union (now Russia)

- Nationality: Ukrainian-Canadian

- Known for: Creating AlexNet, the convolutional neural network that won ImageNet 2012 and launched the modern deep learning era

- Key paper: "ImageNet Classification with Deep Convolutional Neural Networks" (2012, 150,000+ citations), co-authored with Ilya Sutskever and Geoffrey Hinton

- Education: University of Toronto, Ph.D. student under Geoffrey Hinton

- Career: DNNresearch co-founder (2012; acquired by Google in 2013), Google (2013–2017)

- Key innovations: GPU-accelerated deep learning (cuda-convnet), ReLU activation in deep CNNs, split-GPU model parallelism, dropout regularization in practice

- Mentors: Geoffrey Hinton (Ph.D. advisor)

- Collaborators: Ilya Sutskever (AlexNet co-author), Geoffrey Hinton (AlexNet co-author and advisor)

Frequently Asked Questions

Who is Alex Krizhevsky?

Alex Krizhevsky is a Ukrainian-Canadian computer scientist who created AlexNet, the deep convolutional neural network that won the 2012 ImageNet Large Scale Visual Recognition Challenge and triggered the modern deep learning revolution. Born in 1986 in Nizhny Novgorod, Russia, Krizhevsky studied at the University of Toronto under Geoffrey Hinton. His AlexNet paper, co-authored with Ilya Sutskever and Hinton, has been cited over 150,000 times and is widely considered the most influential paper in recent AI history. Krizhevsky's key contributions included the GPU-accelerated implementation (cuda-convnet), the practical application of ReLU activations, and the demonstration that deep neural networks trained on large datasets could dramatically outperform hand-engineered computer vision methods.

What is AlexNet and why was it important?

AlexNet is a deep convolutional neural network with eight learned layers and approximately 60 million parameters. It won the 2012 ImageNet competition with a top-5 error rate of 15.3%, nearly eleven percentage points better than the second-place entry (26.2%), which used traditional hand-engineered features. This massive performance gap demonstrated conclusively that deep neural networks trained on GPUs could learn visual features far superior to anything humans could manually design. AlexNet is considered the starting point of the modern deep learning era. Within a few years of its publication, deep learning replaced traditional methods as the dominant approach in computer vision, natural language processing, speech recognition, and virtually every other area of artificial intelligence.
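For readers unfamiliar with the metric, "top-5 error" counts a prediction as wrong only when the true label is missing from the model's five highest-scoring classes. The sketch below is a minimal NumPy version of that calculation; the function name and the tiny score matrix are made up for illustration, and the example uses k=2 because it has only six classes.

```python
import numpy as np


def top_k_error(scores, labels, k=5):
    """Fraction of samples whose true label is not among the k top scores.

    scores: (num_samples, num_classes) array of class scores
    labels: (num_samples,) array of integer class indices
    """
    top_k = np.argsort(scores, axis=1)[:, -k:]  # indices of the k best classes
    hits = (top_k == labels[:, None]).any(axis=1)
    return 1.0 - hits.mean()


# Hypothetical scores for 3 images over 6 classes.
scores = np.array([
    [0.1, 0.2, 0.3, 0.4, 0.5, 0.6],   # best two classes: 5, 4
    [0.6, 0.5, 0.4, 0.3, 0.2, 0.1],   # best two classes: 0, 1
    [0.1, 0.9, 0.2, 0.8, 0.3, 0.7],   # best two classes: 1, 3
])
labels = np.array([4, 5, 3])

top2_error = top_k_error(scores, labels, k=2)
print(f"Top-2 error: {top2_error:.3f}")
```

Here the second image is the only miss, so one of three predictions counts as an error. On ImageNet the same calculation with k=5 over 1,000 classes gives the 15.3% and 26.2% figures quoted above.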

What were the key technical innovations in AlexNet?

AlexNet combined several techniques that became standard in deep learning. First, it used ReLU activation functions instead of sigmoid or tanh, which mitigated the vanishing gradient problem and dramatically accelerated training. Second, Krizhevsky wrote custom CUDA kernels to train the network on two NVIDIA GTX 580 GPUs, popularizing the use of GPU computing for deep learning, an approach that now underpins the entire AI hardware industry. Third, it employed dropout regularization in the fully connected layers, which reduced overfitting by randomly disabling neurons during training. Fourth, it used extensive data augmentation (random crops, horizontal flips, color perturbations) to artificially expand the training dataset. Fifth, it implemented a split-GPU architecture that distributed the network across two GPUs, an early form of model parallelism. These techniques, combined with training on the full 1.2-million-image ImageNet dataset, produced results that were qualitatively superior to any previous approach.

How did AlexNet influence modern AI?

AlexNet's influence on modern AI is pervasive. It directly inspired the series of progressively deeper convolutional networks — VGGNet, GoogLeNet, ResNet — that pushed image recognition beyond human-level accuracy. It catalyzed the application of deep learning to natural language processing, leading through a chain of innovations (word embeddings, RNNs, attention mechanisms, Transformers) to today's large language models like GPT. It established GPUs as the standard hardware for AI training, creating an industry now worth hundreds of billions of dollars led by NVIDIA. It demonstrated the principle that scale matters — that larger networks trained on more data produce qualitatively better results — which has been the dominant theme of AI research ever since. And its open-source cuda-convnet library helped establish the culture of open tooling that has accelerated AI progress. Every AI system in use today, from smartphone cameras to conversational assistants, is a descendant of the paradigm Krizhevsky established.