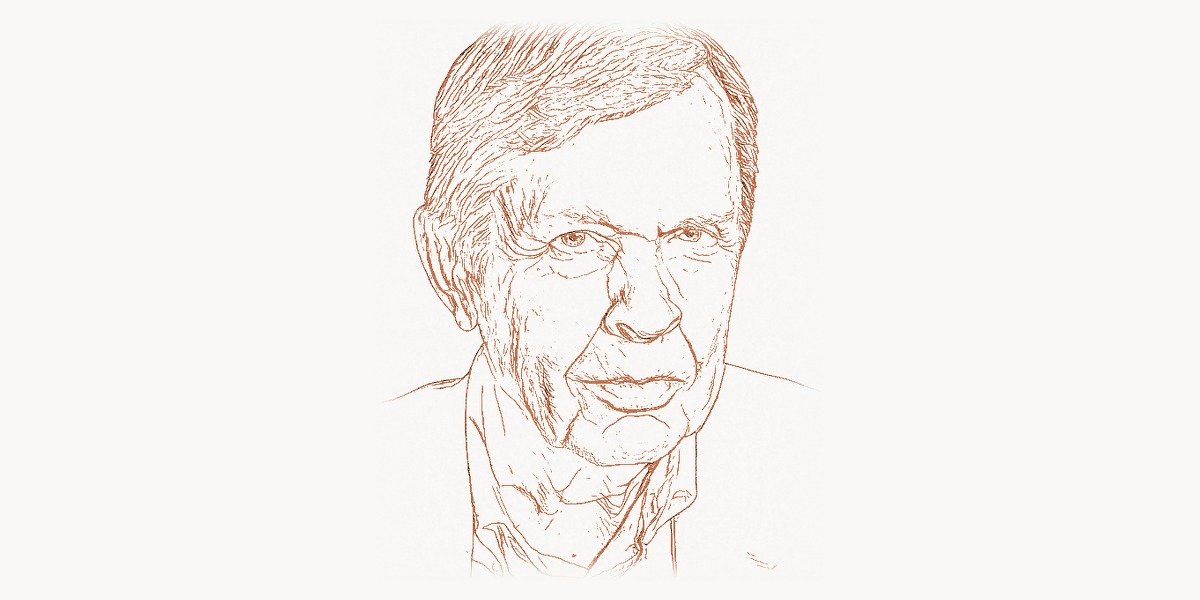

In 1977, three researchers at Bell Labs created a programming language so concise that its name was just three letters — AWK — formed from the initials of its creators: Alfred Aho, Peter Weinberger, and Brian Kernighan. The language was designed to solve a specific class of problems: processing structured text, extracting fields, and producing formatted reports. Nearly fifty years later, AWK remains installed on every Unix and Linux system in the world, still used daily by system administrators, data engineers, and developers who need to transform text quickly and reliably. But AWK, significant as it is, represents only a fraction of Alfred Aho’s contributions to computer science. His co-authorship of the “Dragon Book” — Compilers: Principles, Techniques, and Tools — defined how an entire generation of computer scientists learned to build compilers. His theoretical work on string matching algorithms produced the Aho-Corasick algorithm, a multi-pattern search method that powers antivirus scanners, intrusion detection systems, and network security tools worldwide. His contributions to the tools egrep and fgrep brought efficient regular expression matching to every Unix command line. And in 2020, the Association for Computing Machinery awarded Aho the Turing Award — computing’s highest honor — alongside Jeffrey Ullman, for “fundamental algorithms and theory underlying programming language implementation.” Alfred Aho is one of those rare figures whose work operates simultaneously at the highest levels of theoretical computer science and in the most practical tools that working programmers use every day.

Early Life and Path to Technology

Alfred Vaino Aho was born on August 9, 1941, in Timmins, Ontario, Canada — a mining town in northeastern Ontario, far from any major center of computing research. His parents were of Finnish descent, part of the Finnish immigrant community that had settled in northern Ontario’s mining regions in the early twentieth century. Growing up in Timmins, Aho attended local schools and developed an early aptitude for mathematics and science that would eventually carry him far from the nickel mines of his hometown.

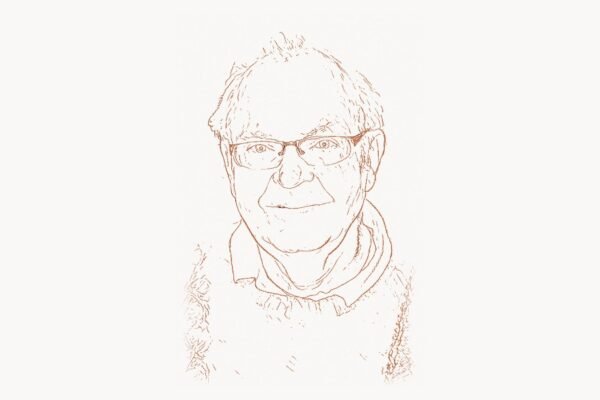

Aho pursued his undergraduate education at the University of Toronto, where he earned a Bachelor of Applied Science in Engineering Physics in 1963. The engineering physics program — the same rigorous, broadly based program that Brian Kernighan had attended just a year earlier — gave Aho a strong foundation in both theoretical mathematics and practical engineering. It was at Toronto that Aho first encountered computing and recognized that the intersection of mathematics and machines was where he wanted to build his career.

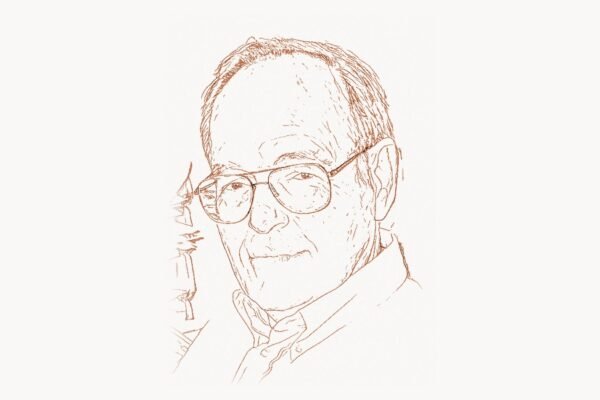

For graduate work, Aho went to Princeton University, one of the leading centers for theoretical computer science in the 1960s. He earned his Ph.D. in 1967 under the supervision of John Hopcroft, writing a dissertation on indexed grammars — a class of formal languages more powerful than context-free grammars but still amenable to mathematical analysis. This work placed Aho squarely at the intersection of formal language theory and practical computation, a position he would occupy for the rest of his career. The relationship with Hopcroft would prove fruitful: together with Jeffrey Ullman, they would co-author The Design and Analysis of Computer Algorithms (1974), one of the foundational textbooks in algorithms and complexity theory.

Upon completing his doctorate, Aho joined Bell Labs in Murray Hill, New Jersey — the legendary research institution where Dennis Ritchie and Ken Thompson were building Unix and where the C programming language was being developed. Bell Labs in the late 1960s and 1970s was the epicenter of systems programming innovation, and Aho found himself surrounded by colleagues whose work would define modern computing. It was in this environment, collaborating with Weinberger and Kernighan, that AWK was born. And it was at Bell Labs that Aho would do much of his most important work on pattern matching, compilers, and the tools that made Unix powerful.

The Breakthrough: AWK and the Dragon Book

AWK — A Language for Text Processing

By the mid-1970s, Unix had established itself as the operating system of choice in research labs and universities, but its text-processing capabilities had a gap. The shell could pipe data between programs. The tool sed could perform line-by-line text substitution. But there was no convenient way to do field-based processing — splitting each line of input into columns, performing computations on specific fields, and producing formatted output. Writing such programs in C was tedious and error-prone for what should have been simple tasks.

Aho, Weinberger, and Kernighan designed AWK to fill this gap. The language was built around a simple but powerful paradigm: pattern-action rules. An AWK program consists of a series of rules, each containing a pattern (a condition to test) and an action (code to execute when the pattern matches). AWK automatically reads input line by line, splits each line into fields (by default, separated by whitespace), and applies each rule. This implicit loop eliminated the boilerplate of opening files, reading lines, and parsing fields — the programmer could focus on the logic.

# AWK — Aho, Weinberger, Kernighan (1977)

# Demonstrates AWK's core pattern-action paradigm

# Example: Analyze a server log file

# Input format: timestamp ip_address status_code response_time url

# Rule 1: Count total requests and accumulate response times

{

total++

sum_time += $4

}

# Rule 2: Count errors (status codes 400-599)

$3 >= 400 {

errors++

error_urls[$5]++

}

# Rule 3: Track slowest requests (over 2 seconds)

$4 > 2.0 {

slow++

if ($4 > max_time) {

max_time = $4

slowest_url = $5

}

}

# END rule: Print summary report after all input is processed

END {

printf "\n=== Server Log Analysis ===\n"

printf "Total requests: %d\n", total

printf "Error responses: %d (%.1f%%)\n", errors, (errors/total)*100

printf "Slow requests: %d (%.1f%%)\n", slow, (slow/total)*100

printf "Average response: %.3f seconds\n", sum_time/total

printf "Slowest request: %.3f seconds (%s)\n", max_time, slowest_url

printf "\nTop error URLs:\n"

for (url in error_urls)

printf " %4d %s\n", error_urls[url], url

}AWK introduced several features that were ahead of their time. Associative arrays — what modern languages call dictionaries, hash maps, or objects — were a first-class data structure in AWK, years before they became standard in mainstream languages. The language supported regular expressions for pattern matching, formatted output with printf, and user-defined functions. It was interpreted, not compiled, which made it ideal for quick, exploratory data processing — the kind of work that modern developers might reach for Python or Perl to do.

The design of AWK reflected Aho’s deep understanding of formal languages and automata theory. The pattern-matching engine used efficient finite automaton techniques that Aho had developed in his theoretical work. This was not a coincidence — AWK was the product of a mind that could move fluidly between abstract theory and practical tool-building. The language was simultaneously a theoretical demonstration of how formal language techniques could be applied to text processing and a supremely practical tool for everyday Unix use.

The Dragon Book — Defining Compiler Education

If AWK demonstrated Aho’s ability to create practical tools, the “Dragon Book” demonstrated his ability to systematize and teach an entire field. Compilers: Principles, Techniques, and Tools, first published in 1986 by Aho, Ravi Sethi, and Jeffrey Ullman (and updated in a second edition in 2006 with Monica Lam as a fourth author), earned its nickname from the cover illustration depicting a knight (labeled “syntax-directed translation”) battling a dragon (labeled “complexity of compiler design”). The book became — and remains — the definitive textbook on compiler construction.

The Dragon Book covers the entire pipeline of compilation: lexical analysis (breaking source code into tokens), syntax analysis (parsing tokens into abstract syntax trees), semantic analysis (type checking and scope resolution), intermediate code generation, optimization, and final code generation. Each stage is presented with rigorous theoretical foundations — formal grammars, automata theory, type systems — alongside practical algorithms that could be directly implemented.

What made the Dragon Book exceptional was its balance between theory and practice. Aho and Ullman were both deeply rooted in theoretical computer science — their earlier textbook on automata theory and formal languages was equally influential. But the Dragon Book never let theory become an end in itself. Every theoretical concept was motivated by a practical compiler design problem, and every algorithm was presented with enough detail to implement. This approach produced a textbook that was used in virtually every university compiler course worldwide for decades. Computer science graduates from the 1980s through the 2020s — whether they went on to build compilers, design programming languages like Pascal and its successors, or write web applications — learned the fundamentals of language implementation from the Dragon Book.

The book’s influence extends beyond traditional compilers. The techniques it describes — lexical analysis with finite automata, parsing with context-free grammars, syntax-directed translation, data-flow analysis — are fundamental to tools like linters, formatters, IDEs, database query processors, and configuration file parsers. When a modern code editor highlights syntax errors or suggests completions, it uses techniques that the Dragon Book made accessible to generations of programmers.

The Aho-Corasick Algorithm and Pattern Matching

In 1975, Aho and Margaret Corasick published a paper describing an algorithm for simultaneously searching for multiple patterns in a text string. The Aho-Corasick algorithm constructs a finite automaton from a set of search patterns (called a “dictionary”) and then scans the input text in a single pass, identifying all occurrences of all patterns. The algorithm runs in O(n + m + z) time, where n is the length of the text, m is the total length of all patterns, and z is the number of matches found — it is optimal in the sense that it processes each character of the input exactly once, regardless of how many patterns are being searched for.

This was a significant theoretical and practical advance. Before Aho-Corasick, searching for multiple patterns typically required either running separate searches for each pattern (which multiplied the cost by the number of patterns) or using complex heuristics that did not guarantee optimal performance. The Aho-Corasick algorithm solved the problem definitively, and its practical applications turned out to be enormous.

Antivirus software uses Aho-Corasick to scan files for thousands of known malware signatures simultaneously. Network intrusion detection systems like Snort use it to scan network packets for attack patterns. The Unix tool fgrep — which Aho wrote — uses the algorithm to search for fixed strings efficiently. Content filtering systems, spam detectors, and DNA sequence analysis tools all employ variants of Aho-Corasick. The algorithm is a textbook example of how theoretical computer science — in this case, automata theory and algorithmic analysis — can produce solutions with massive real-world impact.

Aho also contributed to egrep, which implements extended regular expression matching using efficient nondeterministic-to-deterministic finite automaton conversion — another direct application of the formal language theory that Aho had mastered during his doctoral work at Princeton. The grep family of tools, with Aho’s contributions to fgrep and egrep, became indispensable components of the Unix toolkit and remain essential to every developer who works on Linux systems today.

Foundational Textbooks and Academic Work

Beyond the Dragon Book, Aho co-authored several other textbooks that became standard references in computer science education. The Design and Analysis of Computer Algorithms (1974), written with John Hopcroft and Jeffrey Ullman, was one of the first comprehensive treatments of algorithms and computational complexity. The book covered graph algorithms, sorting, searching, string matching, NP-completeness, and the mathematical techniques needed to analyze algorithm efficiency. It helped establish algorithms as a core subject in computer science curricula — work that Donald Knuth had begun with The Art of Computer Programming and that Aho, Hopcroft, and Ullman made accessible in textbook form.

Data Structures and Algorithms (1983), also with Hopcroft and Ullman, was a more introductory treatment aimed at undergraduates. Foundations of Computer Science (1992), with Ullman, covered the mathematical and theoretical underpinnings of computing — logic, sets, graphs, automata, and complexity theory. Together, these textbooks formed a coherent curriculum that shaped how computer science was taught at universities worldwide. Thousands of professors assigned these books, and hundreds of thousands of students learned algorithms, data structures, and theoretical computer science from Aho’s clear, rigorous exposition.

In 2000, Aho left Bell Labs (which had been reorganized following the breakup of AT&T and the formation of Lucent Technologies) and joined the faculty of Columbia University in New York City as the Lawrence Gussman Professor of Computer Science. At Columbia, he continued to teach and research, focusing on programming languages, compilers, and software engineering education. He also served as chair of the computer science department, guiding the next generation of computer scientists and bringing his decades of industry research experience into the academic environment.

Philosophy and Engineering Approach

Key Principles

Aho’s work is characterized by a distinctive approach: the application of rigorous mathematical theory to practical engineering problems. Where many researchers are either theoreticians or practitioners, Aho has consistently bridged the two worlds. His theoretical work on formal languages and automata directly informed his practical work on pattern matching tools and compiler construction. His textbooks translate deep theory into accessible, implementable knowledge.

This theory-to-practice pipeline is visible in everything Aho has done. The Aho-Corasick algorithm emerged from automata theory and became the engine of antivirus software. AWK emerged from formal language design principles and became a daily tool for millions of system administrators. The Dragon Book emerged from formal grammar theory and taught the world how to build compilers. In each case, Aho demonstrated that the most practical solutions often come from the deepest theoretical understanding.

Aho has spoken about the importance of choosing the right level of abstraction. A compiler, he has observed, is fundamentally a translator — it takes a program written in one language and produces an equivalent program in another language. This simple framing clarifies what might otherwise seem overwhelmingly complex. Similarly, AWK’s power comes from choosing the right abstraction: lines split into fields, with pattern-action rules applied to each line. The abstraction matches the problem domain so well that complex data-processing tasks become trivial to express.

His collaboration style — working closely with co-authors like Kernighan, Ullman, Hopcroft, Weinberger, and Sethi — reflects a belief that the best work emerges from combining different strengths. Kernighan brought clarity of exposition and a user-centered design sensibility to AWK. Ullman brought complementary theoretical depth to the textbooks. Hopcroft brought expertise in graph algorithms and complexity theory. Aho’s genius was partly in choosing the right collaborators and partly in synthesizing theoretical insights with practical needs.

For modern development teams working on complex software projects — whether building compilers, designing domain-specific languages, or developing text-processing pipelines — the principles Aho championed remain essential. Teams using project management platforms like Taskee to coordinate compiler development or language design work are engaging with the same collaborative patterns that produced AWK and the Dragon Book: dividing complex problems into manageable components, assigning work based on individual strengths, and integrating the results into a coherent whole.

The Turing Award and Later Recognition

In 2020, the Association for Computing Machinery awarded Alfred Aho and Jeffrey Ullman the A.M. Turing Award for “fundamental algorithms and theory underlying programming language implementation.” The citation specifically recognized their work on the theory of formal languages, the design and analysis of algorithms for compiler construction, and the authorship of textbooks that educated generations of computer scientists. The Turing Award — often called the “Nobel Prize of computing” — acknowledged that Aho and Ullman’s combined theoretical and practical contributions had fundamentally shaped how programming languages are designed, implemented, and understood.

The award was fitting recognition for a career that had consistently operated at the boundary between theory and practice. Aho’s algorithms power tools used by millions of people who have never heard his name. His textbooks trained the engineers who build modern programming languages, database systems, and development tools. His co-creation of AWK influenced the entire lineage of scripting languages — from Perl to Python to Ruby — that power modern web development and data science.

Aho is a member of the National Academy of Engineering and the National Academy of Sciences — dual membership that reflects the breadth of his contributions across both engineering practice and scientific theory. He is a Fellow of the ACM, a Fellow of the IEEE, and a Fellow of the American Association for the Advancement of Science. He has received the IEEE John von Neumann Medal and numerous other honors recognizing his lifetime of contributions to computing.

Legacy and Modern Relevance

Alfred Aho’s legacy is embedded in the infrastructure of modern computing in ways that most developers never see directly but encounter constantly. Every time a programmer uses grep to search through code, they benefit from Aho’s work on efficient pattern matching. Every time a compiler transforms source code into machine instructions, it uses techniques that Aho systematized and taught. Every time an antivirus scanner checks a file against thousands of malware signatures, it uses the Aho-Corasick algorithm. Every time a system administrator uses AWK to process a log file or extract data from a CSV, they are using a tool Aho co-designed nearly fifty years ago.

AWK’s influence extends well beyond its direct usage. Larry Wall explicitly cited AWK as one of the primary inspirations for Perl, and through Perl, AWK’s design ideas — pattern matching, associative arrays, implicit iteration, field splitting — flowed into PHP, Ruby, and the broader ecosystem of scripting languages that power the web. When a Python developer uses a dictionary, splits a string into fields, or iterates over lines of a file, they are using concepts that AWK pioneered in 1977.

The Dragon Book continues to be assigned in compiler courses at universities worldwide. While newer textbooks have appeared, Aho’s work remains the reference against which all others are measured. The techniques it describes — lexical analysis, parsing, type checking, code generation, optimization — are not only relevant to traditional compilers but also to the modern landscape of language server protocols, just-in-time compilation, transpilers (like TypeScript to JavaScript), and domain-specific languages. Web development agencies like Toimi that build sophisticated front-end toolchains rely on compilation concepts — bundling, minification, transpilation, tree-shaking — that trace their intellectual lineage to the foundations Aho helped establish.

/*

* Simplified Aho-Corasick: core concept demonstration

* Multi-pattern string matching in O(n + m + z) time

*

* This is the algorithm behind fgrep, antivirus scanners,

* and network intrusion detection systems

*/

#define ALPHABET_SIZE 256

#define MAX_STATES 1000

struct AhoCorasick {

int go_to[MAX_STATES][ALPHABET_SIZE]; /* goto function */

int failure[MAX_STATES]; /* failure links */

int output[MAX_STATES]; /* output function */

int num_states;

};

/*

* Key insight: build a trie from all patterns, then add

* "failure links" that allow the automaton to efficiently

* fall back when a partial match fails — without ever

* re-scanning characters already read.

*

* Result: scan the entire input ONCE, finding ALL

* occurrences of ALL patterns simultaneously.

*

* Usage in fgrep (written by Aho):

* fgrep -f patterns.txt logfile.txt

* Searches for thousands of patterns in a single pass.

*

* Without Aho-Corasick, searching for K patterns in text

* of length N would take O(N * K) time.

* With Aho-Corasick, it takes O(N + M + Z) regardless

* of K — where M is total pattern length, Z is matches.

*/Aho’s textbooks on algorithms and data structures — co-authored with Hopcroft and Ullman — helped establish the standard computer science curriculum. The concepts they covered — graph traversal, sorting, hashing, dynamic programming, NP-completeness — remain the core material tested in technical interviews at every major technology company and taught in every accredited computer science program. When Dijkstra’s shortest-path algorithm or depth-first search appears in an algorithms course, it is often presented using frameworks and notation that Aho and his co-authors popularized.

At Columbia University, Aho continues to influence the field through teaching and mentorship. His courses on programming languages and compilers bring the same theory-to-practice approach that characterized his Bell Labs work to new generations of students. Many of his former students and collaborators now hold influential positions in academia and industry, extending the impact of his intellectual tradition.

Perhaps the most remarkable aspect of Aho’s career is the durability of his contributions. AWK, designed in 1977, is still used daily in 2025. The Aho-Corasick algorithm, published in 1975, powers critical security infrastructure. The Dragon Book, first published in 1986, remains the standard compiler textbook. In a field where technologies often become obsolete within a decade, Aho’s work has endured for half a century — a testament to the power of building on deep theoretical foundations rather than chasing trends. Alfred Aho demonstrated that the most lasting practical impact comes from the most rigorous theoretical thinking, and that a tool built on the right abstractions can serve programmers for generations.

Key Facts

- Born: August 9, 1941, Timmins, Ontario, Canada

- Known for: Co-creating AWK, co-authoring the “Dragon Book,” Aho-Corasick algorithm, contributions to egrep/fgrep, foundational algorithms textbooks

- Key projects: AWK programming language (1977), Compilers: Principles, Techniques, and Tools (1986/2006), Aho-Corasick multi-pattern matching algorithm (1975), fgrep, egrep

- Awards: Turing Award (2020, with Jeffrey Ullman), IEEE John von Neumann Medal, Member of National Academy of Engineering, Member of National Academy of Sciences, ACM Fellow, IEEE Fellow

- Education: B.A.Sc. from University of Toronto (1963), Ph.D. from Princeton University (1967)

- Career: Bell Labs (1967–2000), Columbia University (2000–present)

Frequently Asked Questions

Who is Alfred Aho?

Alfred Vaino Aho (born August 9, 1941) is a Canadian-American computer scientist who spent over three decades at Bell Labs and is now the Lawrence Gussman Professor of Computer Science at Columbia University. He is best known for co-creating the AWK programming language (with Peter Weinberger and Brian Kernighan), co-authoring the “Dragon Book” on compiler design (with Jeffrey Ullman, Ravi Sethi, and Monica Lam), and inventing the Aho-Corasick multi-pattern string matching algorithm. He received the Turing Award in 2020 for fundamental contributions to programming language implementation.

What did Alfred Aho create?

Aho co-created AWK (1977), a text-processing language that remains a standard tool on every Unix and Linux system. He co-authored the Aho-Corasick algorithm (1975), used in antivirus software, intrusion detection systems, and the fgrep tool. He co-authored the Dragon Book, the definitive textbook on compiler construction. He also co-wrote foundational textbooks on algorithms, data structures, and formal language theory with Hopcroft and Ullman, and contributed to the Unix tools egrep and fgrep, which brought efficient pattern matching to the command line.

What is the Dragon Book?

Compilers: Principles, Techniques, and Tools, known as the “Dragon Book” for its cover illustration, is a textbook on compiler design first published in 1986 by Aho, Sethi, and Ullman (with a second edition in 2006 adding Monica Lam as co-author). It covers the complete compilation pipeline — lexical analysis, parsing, semantic analysis, code generation, and optimization — with rigorous theoretical foundations and practical algorithms. It has been the standard textbook for university compiler courses worldwide for nearly four decades.

What is the Aho-Corasick algorithm?

The Aho-Corasick algorithm (1975) is a string-searching algorithm that finds all occurrences of multiple patterns in a text simultaneously, in a single pass. It builds a finite automaton from the set of search patterns and then scans the input in O(n + m + z) time, where n is the text length, m is the total pattern length, and z is the number of matches. It is widely used in antivirus software, network intrusion detection, content filtering, and the Unix tool fgrep.

Why is Alfred Aho important for modern web development?

AWK, which Aho co-designed, directly influenced Perl, which in turn influenced PHP, Ruby, and Python — languages that power much of the modern web. The compiler construction techniques Aho systematized in the Dragon Book underpin the transpilers, bundlers, and build tools that modern web developers rely on daily. His pattern matching algorithms are used in code editors, search tools, and security systems. Every developer who uses grep, writes regular expressions, or benefits from syntax highlighting in their IDE is using technology rooted in Aho’s theoretical and practical contributions.