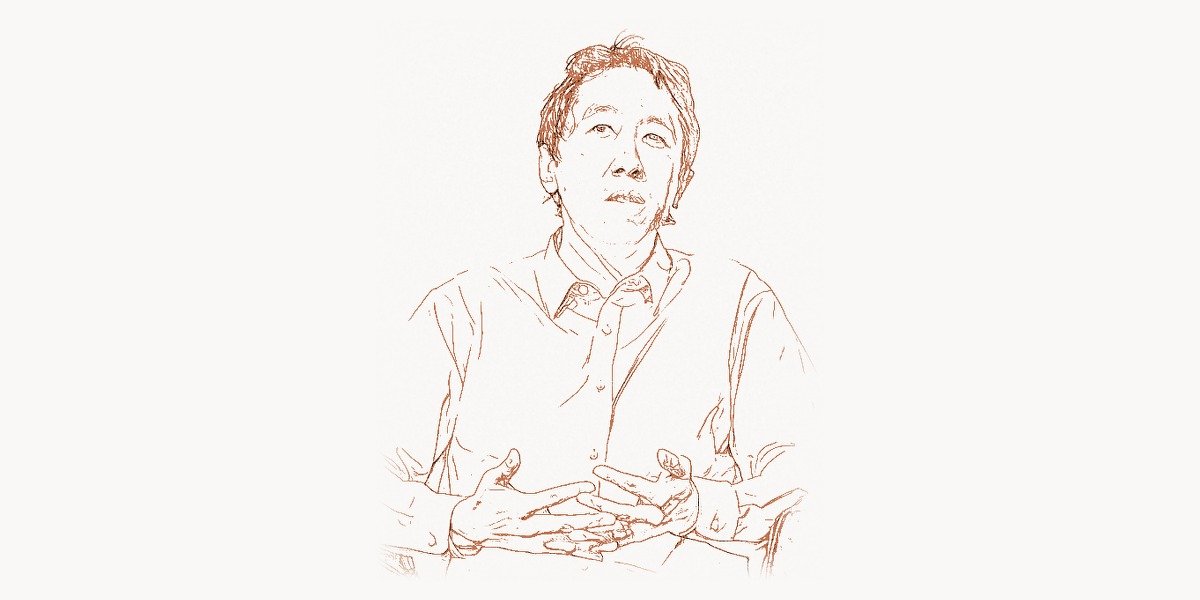

In 2011, a Stanford professor quietly uploaded a machine learning course to the internet. Within weeks, 100,000 students from 196 countries had enrolled, far more than he could have reached in a lifetime of classroom teaching. The professor was Andrew Ng, and that single experiment would reshape global education, launch one of the world’s largest online learning platforms, and prove that artificial intelligence expertise did not have to remain locked inside the halls of elite research universities.

But the course was only one chapter of a career that has consistently pushed the boundaries of what AI can do and who can learn to do it. From co-founding Google Brain, one of the most influential deep learning research groups ever assembled, to leading Baidu’s AI division through a period of explosive growth, to building deeplearning.ai and Landing AI to bridge the gap between cutting-edge research and real-world deployment, Andrew Ng has spent two decades making artificial intelligence simultaneously more powerful and more accessible. He has published over 200 research papers, trained millions of students, advised governments and Fortune 500 companies, and become one of the most recognized voices in the global AI community. His work sits at the intersection of three forces that are reshaping the modern world: the rapid advancement of deep learning algorithms, the democratization of technical education through online platforms, and the deployment of AI systems in industries that had never before considered machine intelligence relevant to their operations.

Early Life and Education

Andrew Yan-Tak Ng was born in London in 1976 to parents who had emigrated from Hong Kong. His father, Ronald Ng, was a physician, and his mother had a background in business. The family moved several times during Andrew’s childhood — from London to Hong Kong, then to Singapore — giving him an early exposure to different educational systems and cultures. This itinerant upbringing instilled adaptability and an awareness of how dramatically access to education varied across the world, themes that would later define much of his professional mission.

Ng showed exceptional aptitude for mathematics and science from a young age. He attended Raffles Institution in Singapore, one of the most academically rigorous schools in Southeast Asia, before moving to the United States for university. He earned his undergraduate degree from Carnegie Mellon University in 1997, studying computer science with a focus on statistical learning. Carnegie Mellon’s robotics and AI programs were among the strongest in the world, and the environment exposed Ng to both the theoretical foundations and practical ambitions of machine intelligence research.

He then pursued a master’s degree at the Massachusetts Institute of Technology, working under Michael Jordan (the machine learning researcher, not the basketball player — a clarification Ng has had to make more than once). Jordan’s group at MIT was at the forefront of probabilistic approaches to machine learning, and this training gave Ng a strong foundation in Bayesian methods, graphical models, and statistical inference. After MIT, Ng moved to the University of California, Berkeley, where he completed his Ph.D. in computer science in 2002. His doctoral dissertation focused on reinforcement learning — specifically, using reward shaping to guide autonomous helicopter flight. The helicopter project was characteristic of Ng’s approach: he combined rigorous theoretical work with a dramatic, visually compelling real-world demonstration. A helicopter that could perform autonomous aerobatic maneuvers made for compelling research and compelling footage, and the project brought early attention to Ng’s ability to bridge theory and application.

In 2002, at the age of 26, Ng joined the Stanford University faculty as an assistant professor in the computer science department. Stanford would become his intellectual home for the next decade and a half, and it was there that he would launch the projects that established him as one of the most influential figures in both AI research and technical education.

The Google Brain and Coursera Breakthrough

Technical Innovation

By the mid-2000s, Ng had established a productive research group at Stanford focused on machine learning applications in robotics, computer vision, and natural language processing. But the project that would transform both his career and the broader AI landscape began in 2011, when Ng partnered with Google to create what became known as Google Brain. The core idea was ambitious and simple: take the largest computing infrastructure in the world (Google’s data centers), apply it to train the largest neural networks anyone had ever attempted, and see what emerged.

The Google Brain team, which included Jeff Dean (one of Google’s most legendary engineers) and Greg Corrado, built a system using 16,000 CPU cores distributed across 1,000 machines. They trained a neural network with over a billion connections — orders of magnitude larger than anything that had been tried before — on unlabeled data from YouTube videos. The system was not told what to look for. It was simply exposed to millions of video frames and allowed to learn whatever statistical structure it could find. What it found was cats. The network spontaneously developed a neuron that responded specifically to cat faces, despite never having been told what a cat was. This result, published in 2012, was a vivid demonstration that large-scale unsupervised learning could discover meaningful concepts directly from raw data.
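To make the idea of learning from unlabeled data concrete, here is a minimal sketch of a single-hidden-layer autoencoder in NumPy. It is not the Google Brain architecture, which was vastly larger and more elaborate. The synthetic data, the layer sizes, and names such as `train_autoencoder` are assumptions chosen purely for illustration. The network is given inputs only, never labels, and learns by trying to reconstruct what it sees.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_autoencoder(X, hidden_units=3, learning_rate=0.1, iterations=300, seed=0):
    """Train a one-hidden-layer autoencoder with batch gradient descent.

    Returns (encoder_weights, losses), where losses[i] is the mean
    reconstruction error measured just before the i-th update.
    """
    rng = np.random.default_rng(seed)
    m, n = X.shape
    W1 = rng.normal(scale=0.1, size=(n, hidden_units))  # encoder weights
    b1 = np.zeros(hidden_units)
    W2 = rng.normal(scale=0.1, size=(hidden_units, n))  # decoder weights
    b2 = np.zeros(n)
    losses = []

    for _ in range(iterations):
        # Forward pass: compress to hidden features, then reconstruct
        H = sigmoid(X @ W1 + b1)
        X_hat = H @ W2 + b2
        error = X_hat - X
        losses.append(float(np.sum(error ** 2) / (2 * m)))

        # Backward pass: gradients of the reconstruction error
        d_out = error / m
        dW2 = H.T @ d_out
        db2 = d_out.sum(axis=0)
        d_hidden = (d_out @ W2.T) * H * (1 - H)
        dW1 = X.T @ d_hidden
        db1 = d_hidden.sum(axis=0)

        W1 -= learning_rate * dW1
        b1 -= learning_rate * db1
        W2 -= learning_rate * dW2
        b2 -= learning_rate * db2

    return W1, losses


# Synthetic unlabeled data (an assumption): 6 observed features driven by 2 hidden factors
rng = np.random.default_rng(42)
factors = rng.normal(size=(500, 2))
mixing = rng.normal(size=(2, 6))
X = factors @ mixing + 0.05 * rng.normal(size=(500, 6))
X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize each feature

encoder_weights, losses = train_autoencoder(X)
print(f"Reconstruction error: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

No label ever enters the training loop. The only signal is how well the network reproduces its own input, which is the sense in which such a system can find structure "on its own".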

The technical significance was profound. Previous neural network research had been constrained by limited data and limited compute. Ng and the Google Brain team demonstrated that scaling up both dimensions simultaneously — using massive datasets and massive parallel computation — could produce qualitatively different results. The network was not just better at the same tasks that smaller networks could do; it was capable of tasks that smaller networks simply could not perform. This insight — that scale itself is a critical variable in deep learning — has become one of the defining principles of modern AI research. It directly influenced the approach taken by later projects, including the large language models developed by OpenAI and other organizations.

The Google Brain project grew rapidly after its initial success. It became a permanent research division within Google and produced a stream of influential work in deep learning, reinforcement learning, and natural language processing. The team developed and open-sourced TensorFlow, which became the most widely used deep learning framework in the world for several years. Many members of the original Google Brain team went on to leadership positions across the AI industry, making the project not just a research success but an extraordinary talent incubator.

Why It Mattered

While Google Brain was advancing the state of the art in AI research, Ng was simultaneously launching a revolution in education. In the fall of 2011, Stanford offered three computer science courses online for free: Ng’s machine learning course, Peter Norvig and Sebastian Thrun’s artificial intelligence course, and Jennifer Widom’s databases course. The response was staggering. Ng’s machine learning course attracted 100,000 enrollees. The experience convinced Ng that online education could operate at a scale and with an impact that traditional universities simply could not match.

In 2012, Ng co-founded Coursera with fellow Stanford professor Daphne Koller. The platform launched with courses from Stanford, Princeton, the University of Michigan, and the University of Pennsylvania, and it grew rapidly to become one of the world’s largest online education platforms. The fundamental insight behind Coursera was that the marginal cost of educating one additional student online was essentially zero. A professor who spent a week recording a course could educate millions of students, whereas the same professor teaching in a physical classroom could reach at most a few hundred per year. This asymmetry meant that high-quality education could be distributed globally at a fraction of the traditional cost.

Ng’s machine learning course on Coursera, which evolved from his Stanford lectures, became one of the most popular online courses in history. Millions of students have completed it. For many engineers and aspiring data scientists around the world — particularly those in countries without strong AI research programs — this course was their introduction to machine learning. It taught the mathematical foundations (linear algebra, calculus, optimization) alongside practical implementation skills, and it did so with a clarity and enthusiasm that made complex material genuinely accessible. The pedagogical approach reflected Ng’s conviction that AI should not be an exclusive discipline accessible only to graduates of elite programs. Anyone willing to invest the time and effort should be able to learn it.

The dual impact of Google Brain and Coursera — advancing the frontier of AI research while simultaneously lowering the barriers to AI education — was a rare combination. Most researchers focus on pushing the boundary of what is technically possible. Most educators focus on communicating existing knowledge effectively. Ng did both simultaneously, and the combination amplified each effort: his research credibility gave his educational materials authority, while his educational outreach ensured that the ideas his research generated spread far more rapidly than they would have through academic publications alone. This model of simultaneous innovation and democratization would become the template for everything Ng did afterward.

Other Major Contributions

Stanford Machine Learning Course and CS229. Before Coursera existed, Ng’s CS229 (Machine Learning) at Stanford had already become one of the most popular courses in the university’s history. His lecture notes, which covered everything from linear regression and logistic regression to support vector machines and neural networks, were widely circulated online and became a de facto textbook for a generation of machine learning practitioners. The course was notable for its emphasis on mathematical rigor combined with practical intuition — Ng had a gift for explaining why an algorithm worked, not just how to implement it. The Python programming language, which Ng increasingly adopted in his teaching materials, became the lingua franca of machine learning in part because educators like Ng made it the language of instruction.

```python
"""
Logistic Regression with Gradient Descent: a foundational ML algorithm
taught in Andrew Ng's CS229 course and its online successors.
This implementation demonstrates the core optimization loop that
underpins much of modern machine learning.
"""

import numpy as np


def sigmoid(z):
    """The sigmoid activation function: maps any real number to (0, 1)."""
    # Clip the input so np.exp cannot overflow for extreme values
    return 1.0 / (1.0 + np.exp(-np.clip(z, -500, 500)))


def compute_cost(X, y, weights):
    """
    Binary cross-entropy loss function.
    Measures how far our predictions are from the true labels.
    Ng emphasized that understanding the cost function is the key
    to understanding any ML algorithm.
    """
    m = len(y)
    predictions = sigmoid(X @ weights)
    # Add a small epsilon inside the logs to avoid log(0)
    eps = 1e-7
    cost = -(1 / m) * (
        y.T @ np.log(predictions + eps)
        + (1 - y).T @ np.log(1 - predictions + eps)
    )
    # cost is a 1x1 array; .item() extracts the Python scalar
    return cost.item()


def gradient_descent(X, y, learning_rate=0.01, iterations=1000):
    """
    The optimization loop that Ng taught as the 'engine' of ML:
    1. Make predictions with current weights
    2. Compute the error (cost)
    3. Calculate the gradient (direction of steepest ascent)
    4. Update weights in the opposite direction
    5. Repeat until convergence
    """
    m, n = X.shape
    weights = np.zeros((n, 1))
    cost_history = []

    for i in range(iterations):
        # Forward pass: compute predictions
        predictions = sigmoid(X @ weights)
        # Compute gradient: how much each weight contributes to error
        gradient = (1 / m) * (X.T @ (predictions - y))
        # Update step: move weights against the gradient
        weights -= learning_rate * gradient
        # Log the cost every 100 iterations and after the final update
        if i % 100 == 0 or i == iterations - 1:
            cost_history.append(compute_cost(X, y, weights))

    return weights, cost_history


# Example: classifying data points
np.random.seed(42)

# Generate synthetic dataset
m_samples = 200
X_raw = np.random.randn(m_samples, 2)
y = ((X_raw[:, 0] + X_raw[:, 1]) > 0).astype(float).reshape(-1, 1)

# Add bias term (intercept)
X = np.hstack([np.ones((m_samples, 1)), X_raw])

# Train the model
learned_weights, costs = gradient_descent(X, y, learning_rate=0.5, iterations=2000)

print(f"Final cost: {costs[-1]:.4f}")
print(f"Learned weights: {learned_weights.flatten().round(3)}")
# The model learns that both features contribute positively to the decision
```

Baidu AI Group. In 2014, Ng made a surprising move: he joined Baidu, the Chinese technology giant, as Vice President and Chief Scientist. At Baidu, he led a team of over 1,300 researchers and engineers working on AI applications including speech recognition, natural language processing, computer vision, and autonomous driving. Under his leadership, Baidu’s speech recognition system, Deep Speech 2, achieved accuracy levels that rivaled human transcription in certain benchmarks. The system used end-to-end deep learning: raw audio was fed directly into a neural network that produced text, bypassing the traditional pipeline of handcrafted feature extraction and language modeling. Deep Speech 2 was recognized by MIT Technology Review as one of the ten breakthrough technologies of 2016.

Ng’s tenure at Baidu also gave him perspective on how AI was being deployed at scale in one of the world’s largest technology markets. China’s combination of massive datasets (from hundreds of millions of mobile users), significant government investment in AI, and a highly competitive technology sector created an environment where AI applications moved from research to production faster than almost anywhere else in the world. This experience informed Ng’s later thinking about how organizations should approach AI adoption — an issue he would address directly with his next ventures.

deeplearning.ai. After leaving Baidu in 2017, Ng founded deeplearning.ai, an education technology company focused specifically on AI education. Where Coursera was a broad platform offering courses across many disciplines, deeplearning.ai was focused: it produced a curated sequence of deep learning courses designed to take a student from basic neural network concepts to advanced topics like sequence models, generative adversarial networks, and transformer architectures. The Deep Learning Specialization on Coursera, produced by deeplearning.ai, has been completed by millions of students and is widely regarded as one of the best introductions to deep learning available anywhere. Ng also launched the AI Fund, a venture studio that builds AI-focused startups, and the AI Transformation Playbook, a guide for enterprises seeking to integrate AI into their operations.

Landing AI. In 2017, Ng also founded Landing AI, a company focused on bringing AI to manufacturing and industrial applications. While most AI attention and investment has focused on consumer internet applications (recommendation systems, advertising, social media), Ng recognized that manufacturing, which accounts for roughly 16% of global GDP, had been largely untouched by the deep learning revolution. Landing AI developed tools for visual inspection (using computer vision to detect defects in manufactured products), predictive maintenance, and quality control. The company’s approach emphasized what Ng calls “data-centric AI”: the idea that improving the quality and consistency of training data is often more effective than improving the model architecture. This was a significant philosophical shift from the prevailing “model-centric” approach in academic AI research, and it reflected Ng’s practical experience deploying AI in environments where data is messy, limited, and expensive to collect.

Philosophy and Approach

Key Principles

Andrew Ng’s career has been guided by several principles that set him apart from many of his contemporaries in AI research.

AI is the new electricity. Ng’s most famous analogy compares artificial intelligence to electricity: just as electricity transformed every major industry roughly a century ago, AI will transform every major industry in the coming decades. The comparison is not merely rhetorical. Electricity did not just enable new products (light bulbs, radios); it fundamentally restructured how factories operated, how cities were designed, how work was organized, and how people lived. Ng argues that AI will have the same breadth of impact, and that most of the value will come not from AI-native companies but from existing companies that successfully integrate AI into their operations. This perspective has shaped his focus on education (everyone needs to understand AI, not just researchers) and on enterprise adoption (the real economic impact of AI will come from traditional industries like manufacturing, healthcare, and agriculture).

This vision connects to the work of the pioneers who built the foundations Ng relies on. The deep learning algorithms at the heart of modern AI trace back to Geoffrey Hinton’s backpropagation work, the convolutional architectures developed by Yann LeCun, and the theoretical frameworks established by Yoshua Bengio. Ng built upon their research and made it accessible to the world.

Data-centric AI. While much of the AI research community focuses on developing better algorithms and architectures, Ng has increasingly advocated for a “data-centric” approach — focusing on improving the quality, consistency, and relevance of training data rather than solely on improving models. He argues that in many real-world applications, the model architecture is already good enough, and the bottleneck is the data. A well-curated dataset with consistent labels can often produce better results than a state-of-the-art model trained on noisy, inconsistent data. This perspective emerged from his experience at Landing AI, where manufacturing clients often had limited amounts of data with significant labeling inconsistencies.
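One data-centric step can be sketched in a few lines: when several annotators label the same examples, disagreements point to ambiguous labeling instructions or outright mistakes. The sketch below is an illustration only. The function name `find_inconsistent_labels`, the agreement threshold, and the toy inspection labels are all assumptions, not part of any Landing AI tool.

```python
from collections import Counter


def find_inconsistent_labels(annotations, min_agreement=1.0):
    """Return majority labels and the ids of examples whose annotators disagree.

    annotations: dict mapping example id -> list of labels from annotators.
    min_agreement: fraction of annotators that must match the majority label.
    """
    majority = {}
    flagged = []
    for example_id, labels in annotations.items():
        top_label, top_count = Counter(labels).most_common(1)[0]
        majority[example_id] = top_label
        if top_count / len(labels) < min_agreement:
            flagged.append(example_id)
    return majority, flagged


# Hypothetical visual-inspection labels from three annotators per image
annotations = {
    "img_001": ["scratch", "scratch", "scratch"],
    "img_002": ["scratch", "dent", "scratch"],
    "img_003": ["ok", "ok", "ok"],
    "img_004": ["dent", "ok", "scratch"],
}

majority, flagged = find_inconsistent_labels(annotations)
print("Needs review:", flagged)
```

Flagged examples would go back to a domain expert for relabeling, or prompt a clearer labeling guideline, before any change is made to the model.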

The 70% rule. Ng has spoken about his approach to decision-making: if you are 70% sure that something is the right thing to do, you should do it rather than waiting until you are 90% or 95% sure. The cost of moving too slowly, he argues, is generally higher than the cost of occasionally making a wrong decision that can be corrected. This bias toward action has characterized both his career decisions (moving from academia to Google to Baidu and back to entrepreneurship) and his advice to others. It reflects a broader engineering sensibility: in a fast-moving field, the ability to iterate quickly is more valuable than the ability to plan perfectly.

Education as the multiplier. Perhaps the most distinctive aspect of Ng’s philosophy is his deep belief in education as a force multiplier for technological progress. He has argued repeatedly that the limiting factor in AI progress is not algorithms, data, or compute — it is the number of people who understand how to apply AI effectively. By educating millions of people in machine learning fundamentals, Ng has seeded AI capabilities across thousands of organizations and dozens of countries that would otherwise have had no access to this expertise. The downstream effects of this educational investment are difficult to quantify but almost certainly represent the largest impact of his career.

Legacy and Impact

Andrew Ng occupies a unique position in the history of artificial intelligence. He is not primarily known for a single theoretical breakthrough — his research contributions, while substantial, are part of a larger body of work in machine learning rather than a singular paradigm-shifting discovery like Hinton’s backpropagation or LeCun’s convolutional neural networks. Instead, Ng’s legacy rests on three interconnected achievements that together have shaped the modern AI landscape more broadly than any single paper could.

First, he demonstrated that scale matters. The Google Brain project, with 16,000 CPU cores training a network of more than a billion connections on millions of unlabeled video frames, was an early and influential proof that scaling up neural networks produces qualitatively new capabilities. This insight has been the driving force behind the last decade of AI progress, from AlphaGo to GPT-4 and beyond. Every major AI lab in the world now operates on the principle that more data and more compute yield better models, and Ng was among the first to demonstrate it empirically.

Second, he democratized AI education on a scale that no one had previously achieved or even attempted. The millions of students who have completed his courses represent a global workforce that did not exist before. Companies in countries with no tradition of AI research can now hire engineers who learned machine learning from Ng’s Coursera courses. Startups in emerging markets can build AI-powered products because their founders took Ng’s Deep Learning Specialization. The economic and social impact of this educational contribution will compound for decades.

Third, he has consistently pushed for AI to be deployed in industries beyond the technology sector. His work at Landing AI, his AI Transformation Playbook, and his public advocacy have all been aimed at convincing traditional industries — manufacturing, agriculture, healthcare — that AI is not just a Silicon Valley phenomenon but a general-purpose technology that can improve any process where data is generated and decisions are made. This perspective, which treats AI as infrastructure rather than product, may ultimately prove to be his most consequential insight.

Ng has received numerous honors and recognitions throughout his career. He was named to the Time 100 list of most influential people. He has received the AAAI Feigenbaum Prize and has been recognized as one of the most influential computer scientists in the world by multiple rankings. He is a member of the National Academy of Engineering. But the metrics that probably matter most to Ng himself are the ones that reflect reach: the millions of course completions, the thousands of companies that have adopted AI practices he advocated, and the global community of AI practitioners that his educational efforts helped create.

At a time when artificial intelligence is simultaneously the most promising and most debated technology in the world, Andrew Ng’s career offers a model for how technical excellence and a commitment to broad access can reinforce each other. He has shown that making powerful technology more accessible does not dilute it — it amplifies it. And he has demonstrated that the most important contribution a scientist can make is not always a paper or a patent, but a platform that allows millions of other people to build on the same foundations.

Key Facts

- Born: 1976, London, United Kingdom

- Education: B.S. from Carnegie Mellon University, M.S. from MIT, Ph.D. from UC Berkeley (2002)

- Stanford appointment: Joined the computer science faculty as an assistant professor in 2002

- Google Brain: Co-founded in 2011 with Jeff Dean and Greg Corrado; trained billion-parameter neural networks on Google’s infrastructure

- Coursera: Co-founded in 2012 with Daphne Koller; grew to 100+ million registered learners worldwide

- Baidu: Vice President and Chief Scientist (2014–2017); led 1,300+ person AI team

- deeplearning.ai: Founded 2017; Deep Learning Specialization completed by millions of students

- Landing AI: Founded 2017; pioneered data-centric AI approach for manufacturing

- AI Fund: Venture studio building AI-focused startups across multiple industries

- Key quote: “AI is the new electricity” — articulating the transformative breadth of AI’s impact across all industries

- Publications: 200+ research papers in machine learning, robotics, and AI applications

- Honors: Time 100, AAAI Feigenbaum Prize, National Academy of Engineering member

FAQ

What is Andrew Ng most famous for?

Andrew Ng is most widely known for three accomplishments: co-founding Coursera, which became one of the world’s largest online education platforms with over 100 million learners; co-founding Google Brain, the deep learning research group at Google that demonstrated the power of large-scale neural network training and helped develop TensorFlow; and creating the most popular machine learning course in history, originally taught at Stanford and later offered on Coursera, which has introduced millions of people to the fundamentals of AI and machine learning. His career uniquely combines frontier research contributions with a sustained commitment to making AI accessible to a global audience, which is why he is often called the most influential educator in artificial intelligence.

How did Andrew Ng contribute to the development of deep learning?

Ng’s primary contribution to deep learning was demonstrating that scale — large datasets combined with massive computational resources — could produce qualitatively new capabilities in neural networks. The Google Brain project in 2011–2012 trained neural networks with over a billion parameters on Google’s distributed computing infrastructure, producing results (like unsupervised cat face detection) that smaller networks simply could not achieve. This work helped establish the scaling hypothesis that has driven the last decade of AI progress. Ng also contributed important research in self-supervised learning, transfer learning, and reinforcement learning for robotics (including his influential autonomous helicopter work at Stanford). His research on sparse autoencoding and feature learning helped advance unsupervised representation learning. While theoretical pioneers like Geoffrey Hinton, Yann LeCun, and Yoshua Bengio laid the algorithmic foundations, Ng played a critical role in demonstrating that those foundations could support systems of unprecedented scale and capability.

What is the data-centric AI approach that Andrew Ng advocates?

Data-centric AI is a methodology that Ng has championed since approximately 2020, based on his experience deploying AI in manufacturing and other real-world applications through Landing AI. The core idea is that in many practical AI applications, the model architecture is already adequate — the bottleneck to better performance is the quality and consistency of the training data. Traditional machine learning workflows fix the data and iterate on the model (trying different architectures, hyperparameters, and training procedures). Data-centric AI inverts this: it fixes the model and iterates on the data — improving labels, removing inconsistencies, augmenting underrepresented cases, and ensuring that every example in the training set conveys clear and consistent information. This approach is particularly relevant in domains like manufacturing inspection, medical imaging, and agricultural monitoring, where datasets are often small, labeling requires domain expertise, and inconsistent labels are common. Ng has organized data-centric AI competitions, published research on the approach, and built tools at Landing AI that implement these principles, making the methodology increasingly influential in applied machine learning.
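The "fix the model, iterate on the data" idea can be illustrated with a small experiment. The sketch below keeps one plain logistic regression model unchanged and trains it twice: once on labels with a simulated systematic error, and once on corrected labels. The synthetic data, the 40% error rate, and the helper names (`train_logistic`, `accuracy`, `make_data`) are assumptions made for illustration, not results from any real deployment.

```python
import numpy as np


def train_logistic(X, y, learning_rate=0.5, iterations=500):
    """The fixed model: plain logistic regression trained by gradient descent."""
    weights = np.zeros(X.shape[1])
    for _ in range(iterations):
        predictions = 1.0 / (1.0 + np.exp(-(X @ weights)))
        weights -= learning_rate * X.T @ (predictions - y) / len(y)
    return weights


def accuracy(X, y, weights):
    """Fraction of examples whose predicted class matches the label."""
    return float(np.mean((X @ weights > 0) == (y == 1)))


def make_data(rng, m):
    """Two random features plus a bias column; the true label is x0 + x1 > 0."""
    features = rng.normal(size=(m, 2))
    X = np.hstack([np.ones((m, 1)), features])
    y = (features[:, 0] + features[:, 1] > 0).astype(float)
    return X, y


rng = np.random.default_rng(0)
X_train, y_true = make_data(rng, 400)
X_test, y_test = make_data(rng, 400)

# Simulate a systematic labeling error: 40% of true positives marked negative
y_noisy = y_true.copy()
positives = np.flatnonzero(y_true == 1)
flipped = rng.choice(positives, size=int(0.4 * len(positives)), replace=False)
y_noisy[flipped] = 0.0

# Same model and hyperparameters both times; only the training labels differ
acc_noisy = accuracy(X_test, y_test, train_logistic(X_train, y_noisy))
acc_clean = accuracy(X_test, y_test, train_logistic(X_train, y_true))
print(f"Same model, noisy labels:   {acc_noisy:.3f}")
print(f"Same model, cleaned labels: {acc_clean:.3f}")
```

Because the architecture and hyperparameters never change, any difference between the two test accuracies comes from the labels alone.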