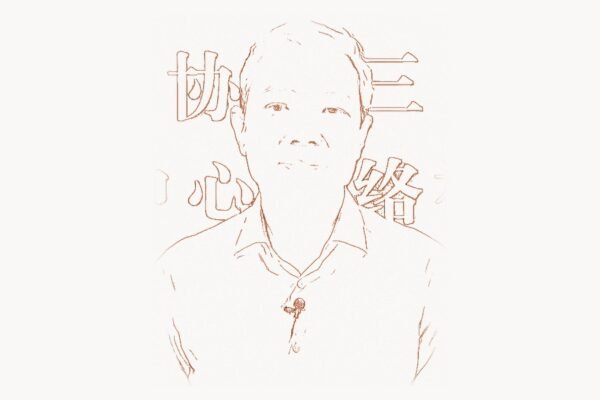

On June 12, 2017, a team of eight researchers at Google published a paper on arXiv titled “Attention Is All You Need.” The paper proposed a new neural network architecture called the Transformer, a model that replaced the recurrent and convolutional layers that had dominated sequence modeling for a decade with a mechanism called self-attention. The lead author was Ashish Vaswani, a 30-year-old researcher at Google Brain who had spent years studying attention mechanisms in neural networks. The paper was presented at the 31st Conference on Neural Information Processing Systems (NeurIPS) in December 2017. Within a few years, the Transformer architecture would become the foundation of virtually every major breakthrough in artificial intelligence: GPT-4, BERT, PaLM, LLaMA, Claude, Gemini, DALL-E, Stable Diffusion, AlphaFold 2, Codex, Whisper. The entire modern AI industry, a sector valued at hundreds of billions of dollars by 2025, runs on the architecture that Vaswani and his co-authors introduced in that 15-page paper. It is, by any reasonable measure, one of the most consequential technical papers in the history of computing, ranking alongside Turing’s 1936 paper on computable numbers and Shannon’s 1948 paper on information theory in its impact on the field.

Early Life and Education

Ashish Vaswani grew up in India, where he developed an early interest in mathematics and computer science. He pursued his undergraduate studies in India before moving to the United States for graduate work. Vaswani enrolled at the University of Southern California (USC), where he completed his PhD in computer science, focusing on natural language processing and machine learning. His doctoral research centered on statistical approaches to language modeling and sequence transduction — the problem of converting one sequence of symbols into another, which underlies tasks like machine translation, speech recognition, and text summarization.

During his PhD work, Vaswani became deeply familiar with the limitations of the dominant architectures for sequence modeling. Recurrent neural networks (RNNs), and their more sophisticated variants — Long Short-Term Memory networks (LSTMs, introduced by Sepp Hochreiter and Jurgen Schmidhuber in 1997) and Gated Recurrent Units (GRUs) — processed sequences one element at a time, from left to right. This sequential nature created two fundamental problems: first, the models were slow to train because they could not process sequence elements in parallel; second, they struggled with long-range dependencies because information had to pass through many sequential steps to travel from one part of a sequence to another, degrading along the way. These problems were well known in the research community, and various approaches had been tried to mitigate them — bidirectional RNNs, attention mechanisms layered on top of recurrent models, convolutional approaches to sequence modeling — but none had solved the fundamental bottleneck of sequential computation.
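The sequential bottleneck described above can be made concrete with a minimal sketch of a vanilla RNN forward pass. The function name, weight names, sizes, and the tanh nonlinearity are illustrative assumptions, not taken from any specific system discussed here.

```python
import numpy as np

def rnn_forward(X, W_xh, W_hh, b_h):
    """Run a vanilla RNN over X of shape (seq_len, d_in); return all hidden states."""
    seq_len = X.shape[0]
    d_hidden = W_hh.shape[0]
    h = np.zeros(d_hidden)
    states = []
    for t in range(seq_len):
        # Step t needs the hidden state from step t-1, so this loop
        # cannot be parallelized across positions.
        h = np.tanh(X[t] @ W_xh + h @ W_hh + b_h)
        states.append(h)
    return np.stack(states)

# Toy sizes chosen only for illustration.
rng = np.random.default_rng(0)
X = rng.normal(size=(5, 3))
W_xh = rng.normal(size=(3, 4)) * 0.1
W_hh = rng.normal(size=(4, 4)) * 0.1
b_h = np.zeros(4)
H = rnn_forward(X, W_xh, W_hh, b_h)
print(H.shape)
```

Information from the first input reaches the last hidden state only by passing through every intermediate update, which is where long-range signals tend to degrade.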

After completing his PhD, Vaswani joined Google Brain, the company’s deep learning research division. Google Brain, started in 2011 by Jeff Dean, Greg Corrado, and Andrew Ng, had become one of the world’s leading AI research labs, with a culture that encouraged ambitious, high-risk research projects. It was at Google Brain that Vaswani would collaborate with Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan Gomez, Lukasz Kaiser, and Illia Polosukhin on the work that would reshape the field of artificial intelligence.

The Transformer Breakthrough

Technical Innovation

The core insight of the Transformer architecture was radical in its simplicity: attention is all you need. Rather than processing sequences through recurrent connections (where each step depends on the previous step) or through convolutional filters (which process local windows of the sequence), the Transformer processes the entire sequence simultaneously using a mechanism called self-attention. Self-attention allows every element in a sequence to directly attend to every other element, computing a weighted sum of their representations based on their relevance to each other. This means that a word at position 1 can directly interact with a word at position 500 in a single computational step, without the information having to pass through 499 intermediate steps as it would in an RNN.

The self-attention mechanism works through three learned linear projections: queries (Q), keys (K), and values (V). For each element in the sequence, the model computes a query vector (representing what information the element is looking for), a key vector (representing what information the element contains), and a value vector (representing the actual information to be transmitted). Attention scores are computed by taking the dot product of each query with all keys, dividing by the square root of the key dimension d_k, and applying a softmax function over the keys to obtain weights. The division keeps the dot products from growing with d_k, which would otherwise push the softmax into regions with very small gradients. These weights are then used to compute a weighted sum of the value vectors, so the whole operation is Attention(Q, K, V) = softmax(QK^T / sqrt(d_k)) V.

```python
import numpy as np

def scaled_dot_product_attention(Q, K, V, mask=None):
    """
    The core computation of the Transformer architecture.
    This single function is the foundation of GPT, BERT,
    and every modern large language model.

    Q: Queries, shape (seq_len, d_k): "what am I looking for?"
    K: Keys, shape (seq_len, d_k): "what do I contain?"
    V: Values, shape (seq_len, d_v): "what information do I carry?"
    mask: optional (seq_len, seq_len) array; entries equal to 0 are blocked.

    Vaswani et al. showed that this mechanism alone,
    without any recurrence or convolution, could model
    complex sequential dependencies.
    """
    d_k = K.shape[-1]

    # Step 1: Compute attention scores
    # Each query asks: "how relevant is each key to me?"
    scores = np.matmul(Q, K.T) / np.sqrt(d_k)

    # Step 2: Optional masking (for autoregressive decoding)
    # Prevents attending to future positions during generation
    if mask is not None:
        scores = np.where(mask == 0, -1e9, scores)

    # Step 3: Softmax normalization, converting scores to probabilities.
    # Subtracting the row maximum does not change the result but keeps
    # np.exp from overflowing on large scores.
    scores = scores - np.max(scores, axis=-1, keepdims=True)
    exp_scores = np.exp(scores)
    attention_weights = exp_scores / np.sum(exp_scores, axis=-1, keepdims=True)

    # Step 4: Weighted sum of values
    # Each position gathers information from the entire sequence
    output = np.matmul(attention_weights, V)
    return output, attention_weights


def multi_head_attention(X, W_q, W_k, W_v, W_o, n_heads):
    """
    Multi-head attention: run multiple attention operations in parallel,
    each with different learned projections.

    X: input, shape (seq_len, d_model)
    W_q, W_k, W_v: per-head projections, each W_*[i] of shape (d_model, d_k)
    W_o: output projection, shape (n_heads * d_k, d_model)

    The idea behind multiple heads: different heads can learn to
    attend to different types of relationships:
    - Head 1 might learn syntactic dependencies (subject-verb)
    - Head 2 might learn coreference (pronoun-antecedent)
    - Head 3 might learn semantic similarity
    - Head 4 might learn positional patterns

    This is analogous to having multiple "experts" each
    examining the sequence from a different perspective.
    """
    d_model = X.shape[-1]
    d_k = d_model // n_heads  # per-head width expected of each projection

    heads = []
    for i in range(n_heads):
        # Each head gets its own Q, K, V projections
        Q = np.matmul(X, W_q[i])  # Project into query space
        K = np.matmul(X, W_k[i])  # Project into key space
        V = np.matmul(X, W_v[i])  # Project into value space
        head_output, _ = scaled_dot_product_attention(Q, K, V)
        heads.append(head_output)

    # Concatenate all heads and project back to model dimension
    concatenated = np.concatenate(heads, axis=-1)
    output = np.matmul(concatenated, W_o)
    return output
```

The Transformer introduced multi-head attention, which runs multiple self-attention operations in parallel, each with different learned projections. This allows the model to attend to information from different representation subspaces at different positions simultaneously. In practice, different attention heads tend to capture different types of relationships: some heads learn syntactic structure, others learn coreference resolution, and others learn semantic similarity. The original base Transformer used 8 attention heads (16 in the larger variant); modern large language models use 32, 64, or even 128 heads.
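As a rough illustration of how the heads run "in parallel" in practice, the sketch below projects once and then splits the feature axis into heads with a reshape, instead of looping over heads. The function names, the random weights, and the sizes (10 tokens, model width 512, 8 heads) are assumptions chosen for the example; this is one common way to organize the computation, not necessarily the authors' implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the maximum for numerical stability.
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def multi_head_self_attention(X, W_q, W_k, W_v, W_o, n_heads):
    seq_len, d_model = X.shape
    d_k = d_model // n_heads

    def split_heads(M):
        # (seq_len, d_model) -> (n_heads, seq_len, d_k)
        return M.reshape(seq_len, n_heads, d_k).transpose(1, 0, 2)

    Q, K, V = split_heads(X @ W_q), split_heads(X @ W_k), split_heads(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_k)   # (n_heads, seq_len, seq_len)
    weights = softmax(scores, axis=-1)
    heads = weights @ V                                  # (n_heads, seq_len, d_k)
    # Concatenate heads back along the feature axis, then apply W_o.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ W_o, weights

rng = np.random.default_rng(0)
seq_len, d_model, n_heads = 10, 512, 8
X = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v, W_o = (
    rng.normal(size=(d_model, d_model)) / np.sqrt(d_model) for _ in range(4)
)
out, weights = multi_head_self_attention(X, W_q, W_k, W_v, W_o, n_heads)
print(out.shape)      # (10, 512)
print(weights.shape)  # (8, 10, 10)
```

Each of the 8 heads works in a 64-dimensional subspace and produces its own 10-by-10 attention pattern, while the output keeps the model width of 512.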

Because the Transformer processes all positions simultaneously rather than sequentially, it has no inherent notion of position — it cannot distinguish between the first and last elements of a sequence. To solve this, Vaswani and his co-authors introduced positional encoding: sinusoidal functions of different frequencies that are added to the input embeddings to inject information about the absolute position of each element. The choice of sinusoidal functions was deliberate — the authors hypothesized that this encoding would allow the model to learn to attend to relative positions, because for any fixed offset k, the positional encoding at position p+k can be represented as a linear function of the encoding at position p.
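The relative-position property can be checked numerically. The sketch below uses the sine and cosine form with base 10000 from the original paper (a detail not spelled out in this article); the function names and the small sizes are assumptions for illustration. For a fixed offset k, a single matrix that depends only on k maps the encoding at every position p to the encoding at p + k.

```python
import numpy as np

def sinusoidal_positional_encoding(max_len, d_model):
    """Return (encodings, frequencies); d_model is assumed to be even."""
    positions = np.arange(max_len)[:, None]                          # (max_len, 1)
    freqs = 1.0 / (10000 ** (np.arange(0, d_model, 2) / d_model))    # (d_model / 2,)
    angles = positions * freqs                                       # (max_len, d_model / 2)
    pe = np.zeros((max_len, d_model))
    pe[:, 0::2] = np.sin(angles)   # even dimensions
    pe[:, 1::2] = np.cos(angles)   # odd dimensions
    return pe, freqs

def shift_matrix(freqs, k):
    """Block-diagonal rotation that maps the encoding at p to the encoding at p + k."""
    d_model = 2 * len(freqs)
    M = np.zeros((d_model, d_model))
    for i, w in enumerate(freqs):
        c, s = np.cos(w * k), np.sin(w * k)
        M[2 * i:2 * i + 2, 2 * i:2 * i + 2] = [[c, s], [-s, c]]
    return M

pe, freqs = sinusoidal_positional_encoding(max_len=100, d_model=16)
M = shift_matrix(freqs, k=7)
# The same matrix M works for every starting position p.
print(np.allclose(pe[:-7] @ M.T, pe[7:]))  # True
```

The matrix is built from the angle-addition identities for sine and cosine, which is why it does not depend on p.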

The complete Transformer architecture consists of an encoder and a decoder, each made of stacked layers. Each encoder layer contains a multi-head self-attention sublayer and a position-wise feed-forward network, with residual connections and layer normalization around each sublayer. The decoder is similar but includes an additional cross-attention sublayer that attends to the encoder output, and uses masked self-attention to prevent attending to future positions during autoregressive generation. The original paper used 6 layers for both encoder and decoder, with a model dimension of 512 and 8 attention heads — about 65 million parameters total.
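The figure of about 65 million parameters can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below assumes a feed-forward inner width of 2048 and a shared vocabulary of about 37,000 tokens, both taken from the original paper rather than from this article, and it ignores biases and layer-normalization parameters, so it lands slightly under the reported number. The function name is hypothetical.

```python
def transformer_base_param_count(d_model=512, d_ff=2048, n_layers=6, vocab_size=37000):
    attention = 4 * d_model * d_model              # W_q, W_k, W_v, W_o
    feed_forward = 2 * d_model * d_ff              # two linear maps
    encoder_layer = attention + feed_forward
    decoder_layer = 2 * attention + feed_forward   # self-attention plus cross-attention
    # Assumes one embedding matrix shared by the input, output, and softmax layers.
    embeddings = vocab_size * d_model
    return n_layers * (encoder_layer + decoder_layer) + embeddings

total = transformer_base_param_count()
print(f"{total:,} parameters (~{total / 1e6:.1f}M)")  # 62,984,192 parameters (~63.0M)
```

Roughly 44 million of these parameters sit in the 12 stacked layers and about 19 million in the embedding table.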

Why It Mattered

The Transformer’s impact was immediate and transformative. On the WMT 2014 English-to-German translation benchmark, the larger ("big") Transformer achieved a BLEU score of 28.4, improving over the previous best results, ensembles included, by more than 2 BLEU points while requiring significantly less training time. The English-to-French model reached 41.8 BLEU in the final version of the paper (the first arXiv draft reported 41.0), establishing a new single-model state of the art. But more important than the benchmark scores was the training efficiency: because self-attention processes all positions in parallel, Transformers could be trained on GPUs far more efficiently than RNNs. The paper reported that the base model required only 12 hours of training on 8 NVIDIA P100 GPUs, and the big model 3.5 days, a fraction of the time required for comparable recurrent models.

This parallelizability was the key that unlocked the scaling revolution in AI. RNNs could not be efficiently scaled because their sequential nature created a fundamental bottleneck: each step had to wait for the previous one, so doubling the sequence length roughly doubled the training time no matter how much hardware was available. In Transformers, by contrast, the cost of attention grows with the square of the sequence length (due to the all-to-all attention computation), but that computation can be distributed across many parallel processors. This meant that researchers could train much larger models on much more data by adding more GPUs, an insight that would lead directly to the era of large language models.
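The trade-off can be put into rough numbers. The sketch below uses the order-of-magnitude per-layer costs given in the original paper's complexity comparison (n squared times d for self-attention, n times d squared for a recurrent layer) with all constant factors dropped; the function name and the chosen sizes are assumptions for illustration.

```python
def per_layer_cost(n, d):
    """Order-of-magnitude cost per layer for sequence length n and width d."""
    return {
        # All n x n pairs interact, but every pair can be computed at once.
        "self_attention": {"operations": n * n * d, "sequential_steps": 1},
        # Fewer operations for long sequences, but n steps must run in order.
        "recurrent": {"operations": n * d * d, "sequential_steps": n},
    }

for n in (128, 512, 2048):
    cost = per_layer_cost(n, d=512)
    attn, rnn = cost["self_attention"], cost["recurrent"]
    print(
        f"n={n:>4}: attention ops={attn['operations']:.2e} in {attn['sequential_steps']} step, "
        f"recurrent ops={rnn['operations']:.2e} in {rnn['sequential_steps']} steps"
    )
```

When n is smaller than d, self-attention does less total work and needs a single parallel step; when n is larger than d, it does more total work but still avoids the n-step sequential chain.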

In 2018, Google released BERT (Bidirectional Encoder Representations from Transformers), which used only the encoder portion of the Transformer to create powerful language representations by pre-training on massive text corpora. BERT achieved state-of-the-art results on 11 NLP benchmarks simultaneously and became the dominant approach to natural language understanding. That same year, OpenAI — under the leadership of Sam Altman — released GPT (Generative Pre-trained Transformer), which used only the decoder portion to generate text autoregressively. GPT-2 (2019) showed that scaling the Transformer to 1.5 billion parameters produced surprisingly coherent text generation. GPT-3 (2020), at 175 billion parameters, demonstrated that sufficiently large Transformers could perform tasks they were never explicitly trained for — few-shot and zero-shot learning. GPT-4 (2023) and its successors proved that further scaling, combined with techniques like reinforcement learning from human feedback (RLHF), could produce systems with broad, general-purpose reasoning capabilities.
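Autoregressive generation in decoder-only models relies on a causal mask, which lets each position attend only to itself and earlier positions. The following is a minimal sketch of that idea; the function names are hypothetical, and the convention that 0 marks a blocked position is an assumption made for this example.

```python
import numpy as np

def causal_mask(seq_len):
    # Lower-triangular matrix: row i has 1s for columns 0..i and 0s afterwards.
    return np.tril(np.ones((seq_len, seq_len), dtype=int))

def masked_attention_weights(scores, mask):
    # Blocked positions get a very negative score, so softmax gives them ~0 weight.
    scores = np.where(mask == 0, -1e9, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)
    w = np.exp(scores)
    return w / w.sum(axis=-1, keepdims=True)

mask = causal_mask(4)
# Uniform scores make the effect of the mask easy to see.
weights = masked_attention_weights(np.zeros((4, 4)), mask)
print(np.round(weights, 2))
# [[1.   0.   0.   0.  ]
#  [0.5  0.5  0.   0.  ]
#  [0.33 0.33 0.33 0.  ]
#  [0.25 0.25 0.25 0.25]]
```

Because no position can see the tokens that follow it, the model can be trained to predict every next token of a sequence in one parallel pass.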

The Transformer also proved remarkably versatile beyond language. Vision Transformers (ViT, 2020) applied the architecture to image recognition by treating image patches as sequence elements, challenging the decades-long dominance of convolutional neural networks in computer vision. The same architecture powers DeepMind’s AlphaFold 2, which solved the protein structure prediction problem — one of the grand challenges of biology. Transformers generate images (DALL-E, Stable Diffusion), transcribe speech (Whisper), write code (Codex, Copilot), compose music, play games, and control robots. The architecture that Vaswani and his co-authors designed for machine translation turned out to be a universal computation engine for learning patterns in sequential data of virtually any kind.
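Treating image patches as sequence elements amounts to a reshape. The sketch below cuts an image into non-overlapping square patches and flattens each one into a vector; the function name, the 224-by-224 image size, and the 16-pixel patch size are illustrative assumptions, and a real model would follow this with a learned linear projection and positional information.

```python
import numpy as np

def image_to_patch_sequence(image, patch_size):
    """Split an (H, W, C) image into a (num_patches, patch_size * patch_size * C) sequence."""
    H, W, C = image.shape
    assert H % patch_size == 0 and W % patch_size == 0, "image must tile evenly"
    gh, gw = H // patch_size, W // patch_size
    patches = image.reshape(gh, patch_size, gw, patch_size, C)
    patches = patches.transpose(0, 2, 1, 3, 4)      # (gh, gw, patch, patch, C)
    return patches.reshape(gh * gw, patch_size * patch_size * C)

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))
sequence = image_to_patch_sequence(image, patch_size=16)
print(sequence.shape)  # (196, 768)
```

The image becomes a sequence of 196 "tokens", each a 768-dimensional vector, which self-attention can then process like any other sequence.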

The research community recognized this impact quickly. “Attention Is All You Need” became one of the most cited papers in the history of computer science, accumulating over 100,000 citations by 2025. Among the paper’s eight co-authors, several went on to found major AI companies: Noam Shazeer co-founded Character.AI; Aidan Gomez co-founded Cohere; and Llion Jones co-founded Sakana AI. The paper’s influence also reached well beyond its author list: Ilya Sutskever, who was not a co-author but was deeply influenced by the Transformer architecture, served as chief scientist at OpenAI during the development of GPT-3 and GPT-4.

Beyond the Transformer: Other Contributions

Before the Transformer paper, Vaswani had contributed to research on attention mechanisms in neural machine translation. His earlier work at Google explored how attention could be applied to various sequence-to-sequence tasks, building the theoretical and empirical foundation that would culminate in the Transformer. He contributed to research on neural machine translation at Google that improved Google Translate’s quality, moving it from phrase-based statistical approaches to neural network-based methods.

After the Transformer’s publication, Vaswani continued working on advancing the architecture. He contributed to research on scaling Transformer models, exploring how increased model size and training data affected performance across different tasks. His work at Google Brain during this period helped establish the empirical scaling laws that would later guide the development of large language models — the observation that model performance improves predictably as a function of model size, dataset size, and compute budget.

In 2022, Vaswani co-founded Essential AI alongside Niki Parmar, another co-author of the Transformer paper. Essential AI focuses on building enterprise AI products powered by foundation models, large-scale AI systems that can be adapted to specific business applications. The company represents Vaswani’s transition from pure research to applied AI, aiming to bring the power of Transformer-based models into practical enterprise workflows. Essential AI raised significant venture capital funding, reflecting the market’s confidence in Vaswani’s ability to translate research breakthroughs into commercial products.

Vaswani’s work on sequence modeling extends beyond the Transformer itself. He has contributed to research on efficient attention mechanisms that address one of the Transformer’s key limitations: the quadratic computational cost of self-attention with respect to sequence length. Processing a sequence of length n requires computing n-by-n attention matrices, which becomes prohibitively expensive for very long sequences. Various approaches to linear or sub-quadratic attention have been explored by the research community, and Vaswani’s insights on the fundamental trade-offs in attention computation have informed this ongoing line of research.
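A quick calculation shows why the n-by-n attention matrix becomes a problem for long sequences. The sketch below counts only the memory needed to hold one attention matrix per head if it is stored in full; the function name, the 4-byte (float32) assumption, and the example lengths are illustrative choices.

```python
def attention_matrix_bytes(seq_len, n_heads=1, bytes_per_value=4):
    """Memory needed to store full attention matrices for one layer."""
    return seq_len * seq_len * n_heads * bytes_per_value

for n in (1_000, 10_000, 100_000):
    mib = attention_matrix_bytes(n) / 2**20
    print(f"n={n:>7,}: {mib:,.2f} MiB per head")
```

Each tenfold increase in sequence length multiplies the memory by one hundred, which is the pressure that linear and sub-quadratic attention variants try to relieve.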

Philosophy and Approach to Research

Key Principles

Vaswani’s research philosophy is characterized by a willingness to challenge fundamental assumptions. The dominant assumption in sequence modeling before 2017 was that recurrence was essential — that you needed some form of sequential processing to model sequential data. The Transformer paper’s title, “Attention Is All You Need,” was itself a provocation: it claimed that the entire recurrent machinery that the field had spent decades developing could be replaced by a single mechanism. This kind of radical simplification — stripping away complexity to find the essential core of a problem — is a hallmark of breakthrough research in computer science, echoing the tradition of researchers like Geoffrey Hinton, who repeatedly overturned conventional wisdom about what neural networks could and could not do.

A second principle evident in Vaswani’s work is the importance of computational efficiency as a design constraint. The Transformer was not just a better model — it was a more trainable model. By eliminating sequential dependencies, it could leverage the massive parallelism of modern GPU hardware in ways that RNNs could not. This awareness of the relationship between model architecture and hardware capabilities — designing models that align with the strengths of available compute infrastructure — has become a central principle in modern AI research. The best architecture is not the one that is most theoretically elegant but the one that most effectively converts available compute into model capability.

Vaswani’s collaborative approach to research is also notable. The Transformer paper had eight co-authors, and the paper itself states that they contributed equally and that the listing order is random. Its contribution note credits Uszkoreit with proposing that RNNs be replaced by self-attention; Vaswani, together with Polosukhin, with designing and implementing the first Transformer models; Shazeer with proposing scaled dot-product attention, multi-head attention, and the parameter-free position representation; Parmar with designing, tuning, and evaluating many model variants; Jones with the initial codebase, efficient inference, and visualizations; and Kaiser and Gomez with designing and implementing the tensor2tensor library that replaced the earlier codebase. The paper was a product of genuine intellectual collaboration, with different team members contributing different components of the architecture. This collaborative model, bringing together researchers with complementary expertise to tackle a fundamental problem, has become the standard approach to large-scale AI research, exemplified by the massive teams that have produced models like GPT-4 and Gemini.

A third principle in Vaswani’s approach is the pursuit of generality. The Transformer was designed for machine translation, but its architecture contained no translation-specific assumptions. It was a general mechanism for learning relationships between elements in sequences. This generality, the absence of task-specific inductive biases, turned out to be the architecture’s greatest strength, allowing it to be applied to language understanding, image recognition, protein structure prediction, code generation, and dozens of other domains. The lesson, which has shaped the entire field of AI research since 2017, is that general-purpose architectures trained on large amounts of data often outperform specialized architectures designed for specific tasks.

Legacy and Modern Relevance

The Transformer architecture is, as of 2025, the single most important technical contribution to the field of artificial intelligence since the backpropagation algorithm. It is the foundation of large language models (GPT-4, Claude, Gemini, LLaMA), image generation models (DALL-E, Stable Diffusion, Midjourney), multimodal models (GPT-4V, Gemini Ultra), speech models (Whisper), code models (Codex, AlphaCode), scientific models (AlphaFold 2, ESMFold), and countless other applications. The architecture has not been superseded in the eight years since its publication — it has been extended, scaled, and refined, but the core mechanism of multi-head self-attention remains at the heart of virtually every state-of-the-art AI system.

The economic impact is staggering. The AI industry, built almost entirely on Transformer-based models, reached hundreds of billions of dollars in market value by 2025. Companies founded specifically to build or deploy Transformer-based systems — OpenAI, Anthropic, Cohere, Character.AI, Mistral, Inflection, and many others — have collectively raised tens of billions in venture capital. Existing technology companies — Google, Microsoft, Meta, Amazon, Apple — have reoriented their strategies around Transformer-based AI. The chip industry, led by NVIDIA, has been transformed by the demand for hardware optimized for Transformer training and inference, with NVIDIA’s market capitalization exceeding $3 trillion driven largely by AI chip demand.

Vaswani’s personal trajectory — from Google Brain researcher to co-founder of Essential AI — mirrors the broader shift in the AI field from academic research to commercial application. The Transformer paper was pure research, published openly and without commercial intent. But the technology it described became so powerful and so broadly applicable that it spawned an entire industry. The researchers who created the architecture have become some of the most sought-after figures in technology, and the companies they founded are at the forefront of the effort to build practical AI systems.

The Transformer also catalyzed a philosophical reckoning about the nature of intelligence and the future of AI-assisted software development. When GPT-3 demonstrated that a sufficiently large Transformer trained on enough text could generate coherent, contextually appropriate responses to virtually any prompt, it raised questions about the nature of language understanding that had previously been confined to philosophy and cognitive science. Are these models merely sophisticated pattern matchers, or is something more fundamental happening? The debate continues, but the Transformer made it unavoidable by producing systems whose behavior is difficult to distinguish from understanding in many contexts.

The research legacy extends to the methodology as well. The Transformer paper demonstrated that a small team with a clear insight and rigorous execution could change an entire field overnight. It validated the approach of bold architectural innovation over incremental improvement. And it showed that the most impactful research sometimes comes not from inventing entirely new components but from combining existing ideas — attention, residual connections, layer normalization, positional encoding — in the right way. Every one of the Transformer’s individual components existed before the paper. What Vaswani and his co-authors contributed was the insight that these components, assembled in the right architecture without recurrence, were sufficient to achieve state-of-the-art results — and that the resulting model could be scaled to capabilities that no one had imagined.

For developers working with modern AI tools and frameworks, understanding the Transformer architecture is now as fundamental as understanding HTTP or SQL. Whether you are building applications with the latest development tools, training custom models, or integrating AI APIs into web applications, the Transformer is the engine under the hood. And for researchers pushing the boundaries of what AI can do, the Transformer remains the starting point — the architecture that every new approach must either build upon or convincingly surpass.

Key Facts

- Full name: Ashish Vaswani

- Known for: Lead author of “Attention Is All You Need” (2017), co-creator of the Transformer architecture

- Education: PhD in Computer Science, University of Southern California (USC)

- Key affiliations: Google Brain (researcher), Essential AI (co-founder, 2022)

- Landmark paper: “Attention Is All You Need” — presented at NeurIPS 2017, 100,000+ citations by 2025

- Technical contributions: Self-attention mechanism, multi-head attention, positional encoding, encoder-decoder Transformer architecture

- Impact: Foundation of GPT, BERT, PaLM, LLaMA, Claude, Gemini, DALL-E, AlphaFold 2, Codex, Whisper, and virtually all modern AI systems

- Co-authors: Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan Gomez, Lukasz Kaiser, Illia Polosukhin

Frequently Asked Questions

Who is Ashish Vaswani?

Ashish Vaswani is an Indian-American computer scientist and AI researcher who is best known as the lead author of the 2017 paper “Attention Is All You Need,” which introduced the Transformer architecture. This architecture became the foundation of all modern large language models, including GPT-4, BERT, Claude, and Gemini. Vaswani completed his PhD at the University of Southern California and worked as a researcher at Google Brain before co-founding Essential AI in 2022. His work on the Transformer is widely regarded as one of the most consequential contributions to artificial intelligence in the 21st century, enabling the development of AI systems that can generate text, translate languages, write code, create images, predict protein structures, and perform hundreds of other tasks.

What is the Transformer architecture and why is it important?

The Transformer is a neural network architecture that processes sequences using a mechanism called self-attention, which allows every element in a sequence to directly attend to every other element in parallel. Before the Transformer, sequence models like RNNs and LSTMs processed elements one at a time, making them slow to train and poor at capturing long-range dependencies. The Transformer eliminated this sequential bottleneck entirely, enabling massive parallelization on GPU hardware. This parallelizability was the key insight that enabled the scaling of AI models to billions and then trillions of parameters, the approach that produced systems like GPT-4 and Claude. The Transformer’s generality (it contains no task-specific assumptions) also allowed it to be applied far beyond its original machine translation purpose, powering breakthroughs in computer vision, biology, speech recognition, and code generation.

What is Essential AI and what does it do?

Essential AI is an artificial intelligence company co-founded by Ashish Vaswani and Niki Parmar in 2022. Both co-founders were among the eight authors of the original Transformer paper. Essential AI builds enterprise AI products powered by foundation models — large-scale AI systems based on the Transformer architecture that can be customized for specific business applications. The company represents the transition of Transformer technology from research labs to practical commercial deployment. Essential AI has raised significant venture capital funding and is focused on developing AI systems that can be integrated into enterprise workflows, reflecting the broader industry trend of applying large language model capabilities to real-world business problems. The company builds on the deep technical expertise that Vaswani and Parmar developed during their years of foundational research at Google Brain, bringing that knowledge to bear on the challenge of making AI practically useful for organizations at scale.