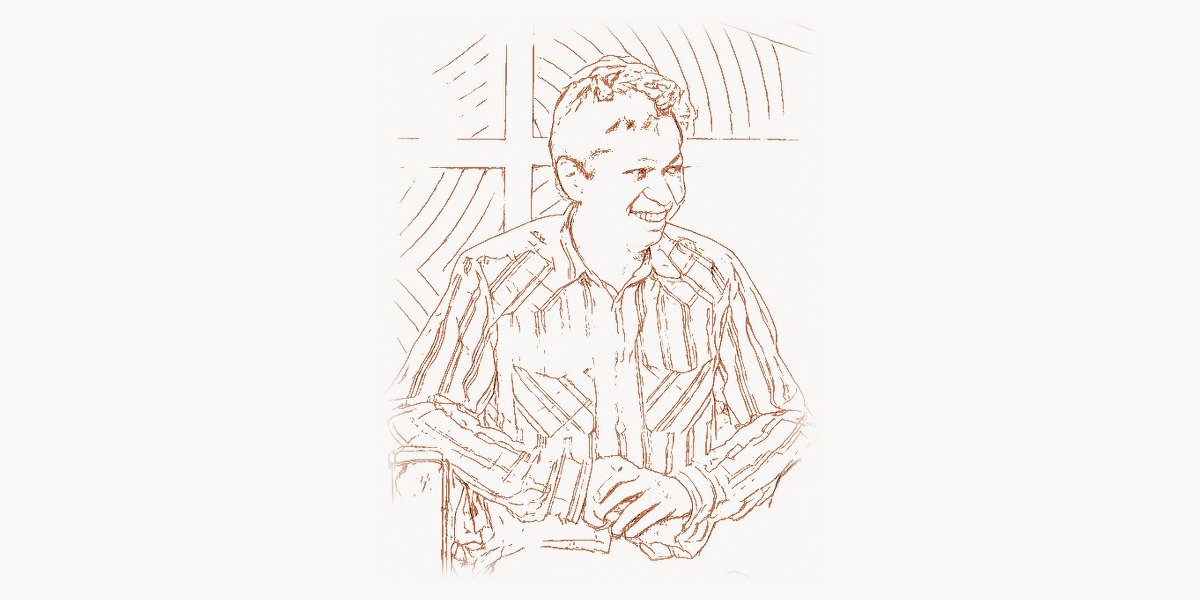

In 2009, a 27-year-old former Google employee sat in a small apartment in Palo Alto, building a website that nobody asked for. The product had no viral loop, no celebrity endorsements, and no obvious revenue model. When it launched in March 2010 as an invite-only beta, it attracted just 200 users in its first few months. Silicon Valley investors passed on it repeatedly: the idea of a digital pinboard where people could save images of things they found beautiful or useful seemed trivially simple in an era dominated by real-time social feeds and status updates. But Ben Silbermann kept building. He hand-wrote personal emails to those first 200 users, asking what they liked and what frustrated them. He drove to local craft fairs and design meetups, handing out business cards. Over the following decade, that quiet, stubborn persistence produced one of the most influential platforms on the internet: a visual discovery engine used by over 400 million people, responsible for driving more referral traffic to retail websites than any social network except Facebook, and a company valued at roughly $10 billion at its 2019 IPO. Silbermann did not invent social networking. He did something more subtle: he reimagined what a social platform could be when it was organized not around people, but around ideas.

Early Life and the Path to Silicon Valley

Ben Silbermann was born on July 14, 1982, in Des Moines, Iowa. His parents were both doctors — his father an ophthalmologist and his mother a dermatologist — and the expectation was that Ben would follow them into medicine. As a child, Silbermann was an avid collector — stamps, insects, coins, baseball cards — a tendency that would later prove surprisingly relevant to his life’s work. There was something about finding interesting objects, organizing them into categories, and returning to examine them that appealed deeply to him.

Silbermann attended Yale University, where he studied political science rather than computer science. During his junior year, he attended a talk by a Google recruiter that changed his trajectory. The idea that technology companies were building products used by millions of people captivated him. After graduating in 2003, he worked as a consultant in Washington, D.C., before joining Google as a product specialist, working on advertising products including Google Shopping.

At Google, Silbermann spent three years gaining a front-row seat to how people searched for and discovered products online. The experience planted the seeds of an insight he would later build Pinterest around: existing discovery tools were fundamentally text-based, but human desire — what people wanted to buy, build, cook, or wear — was fundamentally visual. Google was extraordinarily good at finding answers to questions you could articulate in words, but it was not designed to help you explore ideas you could not yet name.

In 2008, Silbermann left Google and co-founded a small company with college friend Paul Sciarra, building an iPhone app called Tote — a mobile shopping catalog. Tote failed commercially, but it revealed something important: users were more interested in saving and collecting products they liked than in buying them immediately. People wanted to curate. Tote pointed directly toward Pinterest.

Building Pinterest: From Pinboard to Visual Discovery Engine

The Technical Foundation

In late 2009, Silbermann recruited Evan Sharp, an architecture student at Columbia with a design background, to join him and Sciarra. The core concept was deceptively simple: users could create virtual pinboards organized by topic and “pin” images from anywhere on the web. Other users could follow boards, repin content, and discover new ideas through collections others had curated.

The technical challenges behind this simplicity were substantial. Pinterest needed to crawl and index billions of images, extract meaningful signals about what each image contained, and build a recommendation system that could surface relevant content based on each user’s interests. One of the earliest critical decisions was investing heavily in image processing and visual similarity algorithms. While most social platforms in 2010 treated images as attachments to text, Pinterest treated images as the primary unit of content — meaning its search and discovery systems needed to understand images at a semantic level.

```python
# Simplified visual similarity engine concept.
# Pinterest pioneered visual search at scale, using deep learning
# to understand image content and recommend related pins.

from collections import defaultdict

import numpy as np


class VisualSearchEngine:
    """
    Pinterest's visual search uses convolutional neural networks
    to generate embedding vectors for each image. Similar images
    produce similar vectors, enabling content-based recommendations.

    Core workflow:
    1. Generate feature embeddings for each image
    2. Build an index for fast approximate nearest neighbor search
    3. Retrieve visually similar pins for recommendations
    """

    def __init__(self, embedding_dim=128):
        self.embedding_dim = embedding_dim
        self.pin_embeddings = {}              # pin_id -> embedding vector
        self.board_index = defaultdict(list)  # board_id -> [pin_ids]
        self.category_signals = {}            # pin_id -> category scores

    def compute_embedding(self, image_data):
        """
        In production, Pinterest uses a deep CNN (based on
        architectures like ResNet or EfficientNet) trained on
        billions of pins to generate 128-dimensional embeddings.

        The model learns to place visually similar images
        close together in embedding space: a mid-century
        modern chair and a Scandinavian desk end up near each
        other, while a tropical beach photo is far away.
        """
        # Placeholder: a random unit vector stands in for the CNN output,
        # so image_data is ignored in this sketch.
        embedding = np.random.randn(self.embedding_dim)
        return embedding / np.linalg.norm(embedding)

    def index_pin(self, pin_id, image_data, board_id, metadata):
        """Index a new pin for visual search and recommendations."""
        embedding = self.compute_embedding(image_data)
        self.pin_embeddings[pin_id] = embedding
        self.board_index[board_id].append(pin_id)
        self.category_signals[pin_id] = {
            'visual_category': metadata.get('category'),
            'save_count': 0,
            'click_through_rate': 0.0,
            'freshness_score': 1.0,
        }

    def find_similar_pins(self, query_pin_id, top_k=20):
        """
        Find the top-K most visually similar pins.

        Production Pinterest uses approximate nearest neighbor
        search (e.g., HNSW or ScaNN) for low-latency lookups
        across billions of pins. This sketch scans every pin instead.
        """
        query_vec = self.pin_embeddings[query_pin_id]
        similarities = []
        for pin_id, emb in self.pin_embeddings.items():
            if pin_id == query_pin_id:
                continue
            # Embeddings are unit vectors, so the dot product is cosine similarity.
            score = float(np.dot(query_vec, emb))
            similarities.append((pin_id, score))
        similarities.sort(key=lambda x: x[1], reverse=True)
        return similarities[:top_k]

    def generate_home_feed(self, user_interests, num_pins=50):
        """
        Pinterest's home feed blends multiple signals:
        - Visual similarity to pins the user has saved
        - Board co-occurrence (pins often saved together)
        - Engagement signals (saves, clicks, close-ups)
        - Freshness and content quality scores
        - Advertiser relevance (promoted pins)

        This sketch scores only visual similarity, click-through rate,
        save count, and freshness, with illustrative weights.
        """
        candidate_pins = []
        for interest_pin_id in user_interests:
            similar = self.find_similar_pins(interest_pin_id, top_k=10)
            for pin_id, visual_score in similar:
                signals = self.category_signals.get(pin_id, {})
                final_score = (
                    0.4 * visual_score
                    + 0.25 * signals.get('click_through_rate', 0)
                    + 0.2 * min(signals.get('save_count', 0) / 1000, 1.0)
                    + 0.15 * signals.get('freshness_score', 0)
                )
                candidate_pins.append((pin_id, final_score))

        # Rank by score, then keep each pin once at its best position.
        candidate_pins.sort(key=lambda x: x[1], reverse=True)
        seen = set()
        results = []
        for pin_id, score in candidate_pins:
            if pin_id not in seen:
                seen.add(pin_id)
                results.append(pin_id)
            if len(results) >= num_pins:
                break
        return results
```

In 2015, Pinterest introduced visual search inside pins, and in 2017 it launched Pinterest Lens, a visual search tool that allowed users to point their phone camera at a physical object and find similar items on Pinterest. This technology, built on deep convolutional neural networks trained on billions of pins, was among the first large-scale deployments of visual search in a consumer product, and it reached users shortly before Google announced Google Lens.
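The similarity lookup in the sketch above compares the query against every stored embedding in a Python loop. As a rough illustration of the first step toward something faster, the same exact search can be written as one matrix product with NumPy. The function name `top_k_similar` and the array layout (one embedding per row) are assumptions made for this example, and it is still exact search rather than the approximate nearest-neighbor indexing a production system would need.

```python
import numpy as np


def top_k_similar(embeddings: np.ndarray, query_index: int, k: int = 5):
    """Return (row_index, cosine_similarity) pairs for the k rows closest to the query row."""
    # Normalize each row so that a dot product equals cosine similarity.
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)

    # One matrix-vector product scores every row against the query.
    scores = unit @ unit[query_index]
    scores[query_index] = -np.inf  # never return the query itself

    k = min(k, len(scores) - 1)
    if k <= 0:
        return []

    # argpartition selects the k best scores without sorting the whole array;
    # only those k candidates are then sorted.
    top = np.argpartition(-scores, k - 1)[:k]
    top = top[np.argsort(-scores[top])]
    return [(int(i), float(scores[i])) for i in top]
```

Selecting with `np.argpartition` keeps the cost of picking the winners linear in the number of pins, which matters more as the corpus grows, although the scoring step still touches every embedding.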

Growth and the Power of Curation

Pinterest’s early growth was slow by Silicon Valley standards. Silbermann personally emailed every one of the first 5,000 users and gave out his personal phone number. He organized meetups — which he called “Pinups” — in local boutiques and cafes. This approach was radically different from the growth-hacking tactics favored by most startups. By December 2011, Pinterest had become one of the top 10 social network services. By early 2012, comScore reported 11.7 million unique monthly visitors, making it the fastest site in history to break the 10-million threshold at the time.

What made Pinterest different from Facebook, Twitter, or Instagram was its organizing principle. Other social networks were organized around people and their relationships. Pinterest was organized around interests. Your home feed showed you ideas related to things you cared about — home renovation, meal planning, travel destinations — regardless of whether you had any social connection to the person who originally pinned the content.

The interest-based architecture also meant Pinterest content had an unusually long shelf life. A tweet had a half-life measured in minutes. But a pin — a recipe, a home design idea, a workout routine — could continue generating engagement for months or years. This made Pinterest uniquely valuable for businesses, because the return on creating high-quality visual content compounded over time.

Technical Innovations at Scale

As Pinterest grew to hundreds of millions of users, the engineering challenges became enormous. Early infrastructure relied on Amazon Web Services, MySQL sharded across hundreds of instances, Redis for caching, and custom-built image processing pipelines. The team developed novel approaches to image storage and serving that minimized latency while handling massive scale — challenges directly analogous to web performance optimization problems in modern applications.
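To make the sharding idea concrete, here is a minimal sketch of one common way to route reads across many MySQL instances: encode the shard number inside every object ID, so that an ID alone identifies the database that holds the row. The 16/10/36 bit split, the helper names, and the host names are all assumptions chosen for illustration; the article does not describe the actual scheme.

```python
from dataclasses import dataclass

# Hypothetical 64-bit ID layout (an assumption for this sketch):
# [ shard: 16 bits | object type: 10 bits | local row id: 36 bits ]
SHARD_BITS = 16
TYPE_BITS = 10
LOCAL_BITS = 36


@dataclass(frozen=True)
class ObjectId:
    shard_id: int
    type_id: int
    local_id: int


def pack_id(shard_id: int, type_id: int, local_id: int) -> int:
    """Combine the three fields into one integer ID."""
    if not 0 <= shard_id < (1 << SHARD_BITS):
        raise ValueError("shard_id out of range")
    if not 0 <= type_id < (1 << TYPE_BITS):
        raise ValueError("type_id out of range")
    if not 0 <= local_id < (1 << LOCAL_BITS):
        raise ValueError("local_id out of range")
    return (shard_id << (TYPE_BITS + LOCAL_BITS)) | (type_id << LOCAL_BITS) | local_id


def unpack_id(packed: int) -> ObjectId:
    """Recover the shard, type, and local row id from a packed ID."""
    local_id = packed & ((1 << LOCAL_BITS) - 1)
    type_id = (packed >> LOCAL_BITS) & ((1 << TYPE_BITS) - 1)
    shard_id = (packed >> (TYPE_BITS + LOCAL_BITS)) & ((1 << SHARD_BITS) - 1)
    return ObjectId(shard_id, type_id, local_id)


def host_for_shard(shard_id: int, shard_ranges: dict) -> str:
    """Look up which database host owns a shard, given host -> range-of-shards."""
    for host, shards in shard_ranges.items():
        if shard_id in shards:
            return host
    raise KeyError(f"no host configured for shard {shard_id}")


# Example with made-up hosts: three machines own contiguous shard ranges.
hosts = {"db001": range(0, 512), "db002": range(512, 1024), "db003": range(1024, 2048)}
pin_id = pack_id(shard_id=1241, type_id=1, local_id=7075733)
print(host_for_shard(unpack_id(pin_id).shard_id, hosts))  # db003
```

Because the shard number travels with the ID, moving a shard to a new machine only requires updating the host mapping, not rewriting any IDs.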

One of Pinterest’s most impactful technical contributions was PinSage, a graph convolutional network for generating embeddings at web scale. Published in 2018, PinSage was among the first industrial applications of graph neural networks to a graph with billions of nodes and edges. The system learned to represent each pin as a dense vector by aggregating information from its neighborhood in the Pinterest graph: the boards it appeared on, other pins on those boards, and the visual features of the image itself.

```python
# PinSage-inspired graph-based recommendation concept:
# a toy version of Pinterest's graph neural network for web-scale recommendations.

import random
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Pin:
    pin_id: str
    visual_embedding: List[float]
    boards: Set[str] = field(default_factory=set)


@dataclass
class Board:
    board_id: str
    pins: List[str] = field(default_factory=list)
    topic: str = ""


class PinSageRecommender:
    """
    PinSage uses localized graph convolutions to learn embeddings
    that capture both visual content and collaborative signals
    from how users organize pins into boards.

    Key insight: pins that frequently co-occur on the same boards
    are likely related, even if they look different visually.

    The algorithm:
    1. Sample neighborhood via random walks on the pin-board graph
    2. Aggregate neighbor features using importance pooling
    3. Combine with the pin's own visual/text features
    4. Train using a max-margin ranking loss

    This sketch covers steps 1-3 with fixed weights; nothing is trained.
    """

    def __init__(self, embedding_dim=256, num_walks=500):
        self.embedding_dim = embedding_dim
        self.num_walks = num_walks
        self.pins: Dict[str, Pin] = {}
        self.boards: Dict[str, Board] = {}
        self.learned_embeddings: Dict[str, List[float]] = {}

    def sample_neighborhood(self, pin_id: str) -> Dict[str, float]:
        """
        Sample local neighborhood using random walks.

        This is the key scalability trick: instead of aggregating
        ALL neighbors (billions of pins), PinSage samples a
        fixed-size neighborhood, weighting by visit frequency.
        Each walk here is a single pin -> board -> pin hop.
        """
        visit_counts: Dict[str, int] = {}
        for _ in range(self.num_walks):
            current_pin = pin_id
            # Step 1: move to a random board containing this pin
            current_boards = list(self.pins[current_pin].boards)
            if not current_boards:
                break
            board_id = random.choice(current_boards)
            # Step 2: move to a random pin on that board
            board_pins = self.boards[board_id].pins
            if board_pins:
                next_pin = random.choice(board_pins)
                if next_pin != pin_id:
                    visit_counts[next_pin] = visit_counts.get(next_pin, 0) + 1

        total = sum(visit_counts.values())
        if total == 0:
            return {}
        return {pid: c / total for pid, c in visit_counts.items()}

    def compute_embedding(self, pin_id: str) -> List[float]:
        """
        Compute PinSage embedding by aggregating neighborhood
        features with importance pooling. The final embedding
        captures visual content, text features, and graph context.
        """
        neighborhood = self.sample_neighborhood(pin_id)
        agg = [0.0] * self.embedding_dim
        for neighbor_id, weight in neighborhood.items():
            neighbor = self.pins.get(neighbor_id)
            if neighbor and neighbor.visual_embedding:
                for i in range(min(len(neighbor.visual_embedding),
                                   self.embedding_dim)):
                    agg[i] += weight * neighbor.visual_embedding[i]

        pin = self.pins[pin_id]
        # Truncate or zero-pad the pin's own features to embedding_dim so the
        # combination below cannot index past the end of a shorter vector.
        own = list((pin.visual_embedding or [])[:self.embedding_dim])
        own += [0.0] * (self.embedding_dim - len(own))
        combined = [0.6 * own[i] + 0.4 * agg[i]
                    for i in range(self.embedding_dim)]
        self.learned_embeddings[pin_id] = combined
        return combined

    def recommend(self, query_pin_id: str,
                  top_k: int = 25) -> List[Tuple[str, float]]:
        """Find pins with the most similar PinSage embeddings."""
        # Make sure every pin has an embedding; otherwise there would be
        # nothing to compare the query against on a first call.
        for pid in self.pins:
            if pid not in self.learned_embeddings:
                self.compute_embedding(pid)

        query = self.learned_embeddings[query_pin_id]
        scores = []
        for pid, emb in self.learned_embeddings.items():
            if pid == query_pin_id:
                continue
            # Cosine similarity between the query and candidate embeddings.
            dot = sum(a * b for a, b in zip(query, emb))
            nq = sum(a ** 2 for a in query) ** 0.5
            ne = sum(b ** 2 for b in emb) ** 0.5
            if nq > 0 and ne > 0:
                scores.append((pid, dot / (nq * ne)))
        scores.sort(key=lambda x: x[1], reverse=True)
        return scores[:top_k]
```

PinSage improved Pinterest’s recommendation quality dramatically and influenced subsequent research in graph-based machine learning across the industry.
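The PinSage listing names a max-margin ranking loss as its training step but does not show it. As a minimal sketch, assuming dot-product scoring between embeddings and a hand-picked margin, the loss for one query pin can be computed as follows. The function name, the margin value, and the toy vectors are illustrative assumptions, not Pinterest's training code.

```python
import numpy as np


def max_margin_loss(query, positive, negatives, margin=0.1):
    """
    Hinge-style ranking loss for one training example.

    query:     embedding of the query pin, shape (d,)
    positive:  embedding of a pin known to be related, shape (d,)
    negatives: embeddings of sampled unrelated pins, shape (n, d)

    The loss is zero once the positive pin outscores every negative
    by at least `margin`; otherwise it grows with the size of the violation.
    """
    query = np.asarray(query, dtype=float)
    positive = np.asarray(positive, dtype=float)
    negatives = np.asarray(negatives, dtype=float)

    pos_score = float(np.dot(query, positive))
    neg_scores = negatives @ query
    return float(np.mean(np.maximum(0.0, neg_scores - pos_score + margin)))


# A well-separated example: the positive matches the query, the negatives do not.
print(max_margin_loss([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0], [-1.0, 0.0]]))  # 0.0
```

Minimizing this quantity over many (query, positive, negatives) triples pushes related pins together and unrelated pins apart in embedding space, which is what makes nearest-neighbor lookup useful for recommendations.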

Monetization and the Commerce Vision

Building a business model for Pinterest was one of Silbermann’s most challenging tasks. The solution was Promoted Pins — advertisements that looked and behaved exactly like organic pins, appearing in search results and feeds based on user interest relevance. Launched in 2014, Promoted Pins proved remarkably effective because Pinterest users were often in a planning or shopping mindset, translating to significantly higher commercial intent than most social platforms.

Silbermann pushed further into commerce with Shop the Look (allowing users to tap items in a pin to buy them), Product Pins with real-time pricing, and a full shopping search experience. By the early 2020s, Pinterest had evolved from a digital scrapbook into a visual commerce platform. This evolution was consistent with Silbermann’s original insight at Google: the most natural path from wanting something to buying it runs through images, not text queries.

Philosophy and Leadership Approach

The Anti-Social Social Network

Silbermann’s vision was shaped by a belief that technology platforms could serve human flourishing rather than merely capture attention. In an era when most social media companies used algorithmic feeds and notification spam to maximize time on platform, Silbermann took a deliberately different approach. He frequently described Pinterest as a “catalog of ideas” rather than a social network.

Pinterest did not have public like counts that could drive social comparison. The platform did not optimize its feed for controversy or emotional arousal. Instead, Pinterest optimized for what Silbermann called “actionability” — whether users actually did something useful with the content they found. This made Pinterest an outlier: while other platforms faced scrutiny over mental health and political polarization, Pinterest largely avoided these controversies.

Transition and Legacy

In 2022, Silbermann stepped down as CEO, handing the role to Bill Ready, a former Google executive with deep commerce expertise. Silbermann transitioned to Executive Chairman, maintaining strategic oversight while accelerating the company’s commerce ambitions. The decision reflected a maturity rare among founder-CEOs: a willingness to recognize that building a company from zero requires different skills than scaling it.

Impact and Legacy

Silbermann demonstrated that a technology platform could be built around positive human aspirations — creativity, planning, self-improvement — rather than around addictive engagement patterns. Pinterest’s technical contributions have been equally significant: the company’s work on visual search helped pioneer deep learning for visual discovery at consumer scale, and PinSage advanced the state of the art in recommendation systems.

Pinterest’s influence on web design has been enormous. The platform’s masonry-style grid layout became one of the most widely imitated design patterns on the web. Its demonstration that visual content could drive commerce more effectively than text-based search helped accelerate the shift toward visual-first product discovery across e-commerce.
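The masonry pattern is simple to state: give every column the same width, then drop each new item into whichever column is currently shortest. The sketch below shows that placement rule under stated assumptions; the `Placement` record, the function name, and the use of height-to-width aspect ratios as input are choices made for this example rather than a description of Pinterest's front-end code.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Placement:
    item_index: int
    column: int
    x: float
    y: float
    height: float


def masonry_layout(aspect_ratios: Sequence[float], num_columns: int,
                   column_width: float, gap: float = 0.0) -> List[Placement]:
    """
    Place items into equal-width columns, always choosing the shortest column.

    aspect_ratios holds height / width for each item, so an item's rendered
    height is its ratio multiplied by the column width.
    """
    if num_columns < 1:
        raise ValueError("num_columns must be at least 1")

    column_heights = [0.0] * num_columns
    placements = []
    for index, ratio in enumerate(aspect_ratios):
        # Ties go to the leftmost column because min() returns the first minimum.
        column = min(range(num_columns), key=column_heights.__getitem__)
        height = ratio * column_width
        placements.append(Placement(
            item_index=index,
            column=column,
            x=column * (column_width + gap),
            y=column_heights[column],
            height=height,
        ))
        column_heights[column] += height + gap
    return placements


layout = masonry_layout([1.0, 2.0, 0.5, 1.0], num_columns=2, column_width=100)
print([p.column for p in layout])  # [0, 1, 0, 0]
```

Because items keep their natural aspect ratios instead of being cropped to a uniform row height, the grid stays dense even when tall and short images are mixed, which is much of the pattern's visual appeal.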

Perhaps most importantly, Silbermann proved that patience could be a competitive advantage. In an industry obsessed with hockey-stick growth and blitzscaling, he built Pinterest slowly and deliberately. The platform’s gradual growth produced a user base genuinely invested in the product — a community of creators and curators who used Pinterest as an integral part of their creative processes. That community, built through thousands of personal emails and dozens of in-person meetups, became the foundation of a business that weathered multiple economic cycles and industry shifts.

Silbermann’s story is a reminder that the most impactful technology founders are not always the ones with the loudest voices. Sometimes the people who change the world are the quiet collectors — the ones who notice that human beings have a deep need to find, save, and organize beautiful things, and who have the patience to build a tool that serves that need with care and precision.

Frequently Asked Questions

What did Ben Silbermann do before founding Pinterest?

Before founding Pinterest, Silbermann worked at Google for three years as a product specialist on advertising and shopping products. After leaving Google in 2008, he co-founded a company with college friend Paul Sciarra that built an iPhone shopping app called Tote. While Tote failed commercially, it revealed user behaviors around collecting and saving products that directly inspired Pinterest. Silbermann studied political science at Yale and had no formal computer science training, a background that gave him an unusually human-centered perspective on product design.

How did Pinterest’s visual search technology work?

Pinterest’s visual search used deep convolutional neural networks trained on billions of pin images to generate dense vector embeddings — numerical representations capturing each image’s semantic content. When a user pointed their camera at an object, the system computed an embedding and performed approximate nearest-neighbor search across the entire pin corpus to find visually similar content. This was enhanced by PinSage, a graph neural network combining visual features with collaborative signals from the pin-board graph. The approach was among the first industrial deployments of graph neural networks at web scale.

Why is Pinterest considered different from other social media platforms?

Pinterest is organized around interests and ideas rather than people and social relationships. While Facebook and Instagram center on following friends, Pinterest centers on discovering content related to specific topics. This produces several distinctive characteristics: content has a much longer shelf life (pins remain relevant for months or years), commercial intent is significantly higher (people use Pinterest to plan purchases), and the platform has largely avoided controversies around mental health and political polarization that affected other social networks.

What was PinSage and why was it important?

PinSage was a graph convolutional neural network developed by Pinterest’s engineering team and published in 2018. It was among the first industrial applications of graph neural networks at the scale of billions of nodes and edges. PinSage learned to represent each pin by aggregating information from its neighborhood in the Pinterest graph: the boards it appeared on, other pins on those boards, and each image’s visual features. The system used random-walk-based neighborhood sampling for scalability, enabling it to process web-scale graphs that were orders of magnitude larger than previous graph neural network applications. PinSage significantly improved recommendation quality and influenced graph-based machine learning research across the industry.

What is Pinterest’s business model?

Pinterest generates revenue primarily through Promoted Pins — paid advertisements visually identical to organic pins that appear in search results and home feeds based on interest relevance. The model is particularly effective because Pinterest users are often in a planning or shopping mindset, translating to higher commercial intent and better conversion rates. Pinterest expanded into direct commerce with Shop the Look, Product Pins with real-time pricing, and visual shopping search. The company went public in April 2019 and has continued growing its advertising and commerce capabilities. The alignment between user intent and business model is one of Pinterest’s strongest competitive advantages.