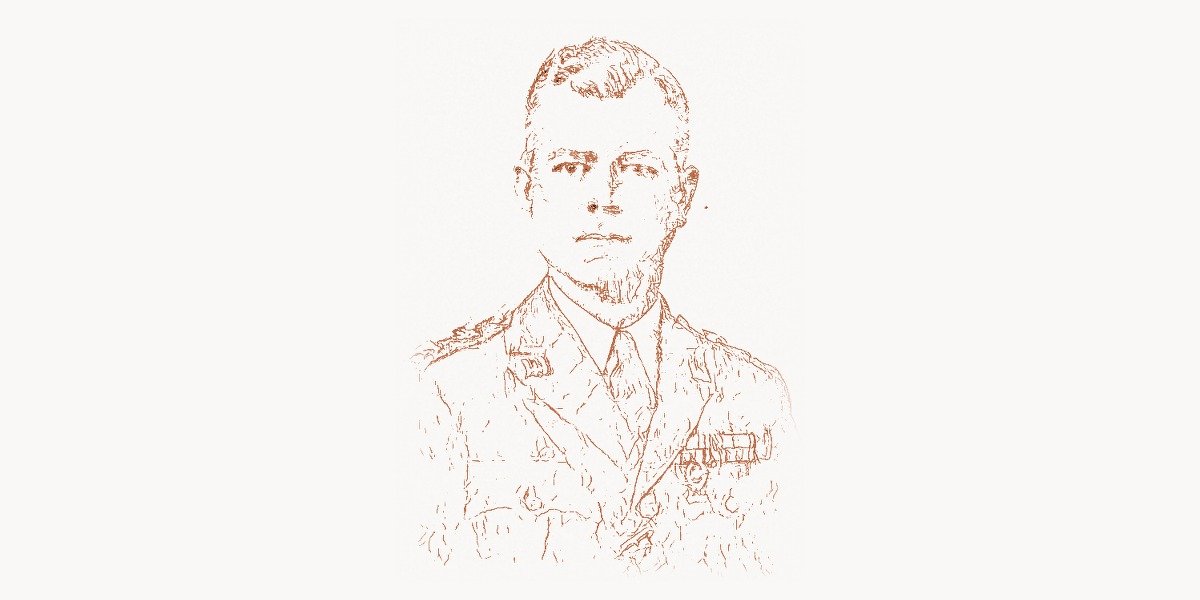

In the summer of 2013, Brendan Burns was a software engineer at Google working on the Borg cluster management system, the internal platform that scheduled and ran virtually every production workload across Google’s global data centers. Burns had spent years watching Borg transform how Google operated at scale: a single declarative configuration file could deploy thousands of containers across multiple continents, and the system would automatically handle failures, scaling, and resource allocation. The problem was that nobody outside Google could use any of it. The concepts were locked inside one of the most secretive infrastructure organizations in the world. Burns, along with colleagues Joe Beda and Craig McLuckie, proposed something radical: take the core ideas behind Borg, redesign them for the open-source world, and give them away. The project was code-named “Project Seven” internally, after Seven of Nine, the former Borg drone in Star Trek: Voyager, and in June 2014 Google released it as Kubernetes, from the Greek word for “helmsman.” Within five years, Kubernetes became the dominant container orchestration platform on the planet, running production workloads at more than 70% of Fortune 100 companies and fundamentally changing how software is built, deployed, and operated.

Early Life and Education

Brendan Burns grew up in the United States with an early fascination for computers and mathematics. He attended Williams College in Massachusetts, a liberal arts institution known for its rigorous academic programs, where he earned a Bachelor of Arts. His intellectual curiosity extended beyond computer science — the liberal arts environment shaped a broad, interdisciplinary perspective that would later influence his approach to systems design, where human factors and developer experience mattered as much as raw technical capability.

Burns went on to earn a Ph.D. in computer science at the University of Massachusetts Amherst, where he specialized in robotics. Although that research was not about cluster infrastructure, the grounding in algorithms and systems thinking would prove valuable when he later confronted the problem of orchestrating thousands of containers across a global infrastructure.

After completing his doctorate and a stint teaching computer science at Union College, Burns joined Google, where he worked on various infrastructure projects before landing on the team that maintained and extended Google’s internal Borg system. It was there that he saw firsthand how declarative, container-based infrastructure management could transform software operations, and began to wonder why the rest of the industry was still deploying software the hard way.

The Road to Kubernetes: From Borg to Open Source

Google’s Internal Infrastructure

To understand Kubernetes, you first need to understand what Google had been running internally for over a decade. Borg, Google’s cluster management system (first described publicly in a 2015 paper titled “Large-scale cluster management at Google with Borg”), handled the scheduling and lifecycle management of hundreds of thousands of jobs across tens of thousands of machines. Every Google service — Search, Gmail, YouTube, Maps — ran as containers managed by Borg. The system provided automatic bin-packing (fitting workloads efficiently onto machines), self-healing (restarting failed tasks), and declarative configuration (engineers described the desired state, and Borg made it happen).
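To make the bin-packing idea concrete, here is a minimal sketch in Go of first-fit decreasing, a textbook packing heuristic. It is not Borg’s scheduler, which weighs many more constraints; the function name, the millicore units, and the single-resource model are assumptions made for illustration.

```go
package main

import (
	"fmt"
	"sort"
)

// packFirstFitDecreasing places each task (a CPU request in millicores) on the
// first machine that still has room, opening a new machine when none fits.
// It returns the free capacity left on every machine that was opened.
// Assumption: no single task is larger than one machine's capacity.
func packFirstFitDecreasing(tasks []int, capacity int) []int {
	// Sort a copy, largest first, so big tasks claim space before small ones.
	sorted := append([]int(nil), tasks...)
	sort.Sort(sort.Reverse(sort.IntSlice(sorted)))

	var free []int // free[i] is the unused capacity on machine i
	for _, t := range sorted {
		placed := false
		for i := range free {
			if free[i] >= t {
				free[i] -= t
				placed = true
				break
			}
		}
		if !placed {
			free = append(free, capacity-t)
		}
	}
	return free
}

func main() {
	tasks := []int{500, 250, 750, 250, 250}
	free := packFirstFitDecreasing(tasks, 1000)
	fmt.Println("machines used:", len(free), "free capacity:", free)
}
```

Sorting largest-first is a design choice that tends to reduce wasted space, because small tasks can fill the gaps left behind by large ones.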

Burns, Beda, and McLuckie recognized that the broader industry was beginning to discover containers through Docker, released by Solomon Hykes in March 2013. Docker had made Linux containers accessible to ordinary developers: you could package an application with its dependencies into an image and run it anywhere. But Docker solved only the single-machine problem. If you wanted to run containers across a fleet of hundreds or thousands of servers, handle networking between them, manage rolling updates without downtime, and recover automatically from hardware failures, you needed an orchestration layer. No widely adopted open-source project offered that layer for Docker containers at the time.

Burns championed the idea of building an open-source orchestration system based on the principles Google had learned from Borg. The proposal was not without internal resistance — sharing infrastructure secrets with the world was a bold move for a company that had treated its operational tooling as a competitive advantage. But Burns, Beda, and McLuckie argued that the real advantage was not the software itself but Google’s operational expertise, and that an open-source standard for container orchestration would drive adoption of Google Cloud Platform. The argument won, and the Kubernetes project was born.

Designing Kubernetes

Kubernetes was not a port of Borg. Burns and the founding team made deliberate decisions to rethink Borg’s architecture for a different audience. Borg was designed for Google engineers who had years of internal training and tooling. Kubernetes needed to be usable by any developer, anywhere. Several key design choices reflected this philosophy.

First, Kubernetes used a declarative model with YAML-based configuration. Instead of writing imperative scripts that said “start this container on this machine,” engineers described the desired state of their application — how many replicas, what resources they needed, how they should be networked — and Kubernetes continuously reconciled actual state with desired state. This is the core control loop pattern:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-application
  labels:
    app: web
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: web
        image: myregistry/web-app:2.4.1
        ports:
        - containerPort: 8080
        resources:
          requests:
            memory: "128Mi"
            cpu: "250m"
          limits:
            memory: "256Mi"
            cpu: "500m"
        readinessProbe:
          httpGet:
            path: /healthz
            port: 8080
          initialDelaySeconds: 5
          periodSeconds: 10
        livenessProbe:
          httpGet:
            path: /healthz
            port: 8080
          initialDelaySeconds: 15
          periodSeconds: 20
```

This YAML file tells Kubernetes: “I want three replicas of my web application, each with specific resource limits, health checks, and a rolling update strategy that ensures zero downtime.” Kubernetes takes this desired state and continuously works to make it real: scheduling pods onto available nodes, restarting crashed containers, and gradually rolling out new versions.
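The effect of `maxSurge: 1` and `maxUnavailable: 0` can be illustrated with a small simulation. The Go sketch below is a simplified model, not the Deployment controller’s actual algorithm: the `rollout` function is hypothetical, and it assumes every new pod passes its readiness probe immediately.

```go
package main

import "fmt"

// rollout simulates replacing old pods with new ones under the given limits.
// It returns the lowest pod count seen after any step and whether the update
// finished. Assumption: every new pod becomes ready immediately.
func rollout(replicas, maxSurge, maxUnavailable int) (minReady int, completed bool) {
	oldPods, newPods := replicas, 0
	minReady = replicas
	for oldPods > 0 || newPods < replicas {
		progressed := false
		// Surge: add a new pod while the total stays within replicas+maxSurge.
		if newPods < replicas && oldPods+newPods < replicas+maxSurge {
			newPods++
			progressed = true
		}
		// Drain: remove an old pod only if enough pods stay available.
		if oldPods > 0 && oldPods+newPods-1 >= replicas-maxUnavailable {
			oldPods--
			progressed = true
		}
		if !progressed {
			// Neither step is allowed, for example when both limits are zero.
			return minReady, false
		}
		if oldPods+newPods < minReady {
			minReady = oldPods + newPods
		}
	}
	return minReady, true
}

func main() {
	minReady, ok := rollout(3, 1, 0)
	fmt.Println("lowest ready count:", minReady, "completed:", ok)
}
```

With the values from the manifest, the simulated pod count never drops below three, which is the sense in which the strategy avoids downtime. Swapping the limits (`maxSurge: 0`, `maxUnavailable: 1`) still completes the update but briefly runs with two pods.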

Second, Kubernetes introduced the Pod as its fundamental scheduling unit — a group of one or more containers that share network and storage resources. This was a departure from Borg’s task model and reflected a common pattern where sidecar containers (for logging, monitoring, or proxying) ran alongside the main application container. The Pod abstraction made it natural to compose complex applications from simple, single-purpose containers.

Third, Kubernetes was designed to be extensible through a uniform API model. Every resource in Kubernetes (Pods, Services, Deployments, ConfigMaps) was a declarative object accessible through a REST API. This uniformity meant that new object types could be added through CustomResourceDefinitions (CRDs), and operators could encode domain-specific operational knowledge into custom controllers that act on those objects. The result was a platform that could be adapted to virtually any workload.
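One consequence of the uniform model is that generic tooling can handle any object by reading only the fields all objects share. The Go sketch below illustrates this with the standard library alone; `objectHeader` is a simplified stand-in for the real Kubernetes metadata types, and the `example.com/v1` `WebApp` resource is hypothetical.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// objectHeader holds the fields that every Kubernetes object carries.
// It is a simplified stand-in, not the real TypeMeta/ObjectMeta types.
type objectHeader struct {
	APIVersion string `json:"apiVersion"`
	Kind       string `json:"kind"`
	Metadata   struct {
		Name      string            `json:"name"`
		Namespace string            `json:"namespace"`
		Labels    map[string]string `json:"labels"`
	} `json:"metadata"`
}

// describe reads only the shared header, so it works the same way for
// built-in kinds and for custom resources it has never seen before.
func describe(manifest []byte) (string, error) {
	var h objectHeader
	if err := json.Unmarshal(manifest, &h); err != nil {
		return "", err
	}
	return fmt.Sprintf("%s (%s) %s", h.Kind, h.APIVersion, h.Metadata.Name), nil
}

func main() {
	manifests := []string{
		`{"apiVersion":"apps/v1","kind":"Deployment","metadata":{"name":"web-application"}}`,
		// Hypothetical custom resource; the spec is ignored by describe.
		`{"apiVersion":"example.com/v1","kind":"WebApp","metadata":{"name":"demo"},"spec":{"replicas":3}}`,
	}
	for _, m := range manifests {
		summary, err := describe([]byte(m))
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(summary)
	}
}
```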

Architecture and Technical Contributions

The Controller Pattern

Burns’s most enduring technical contribution to Kubernetes is arguably the controller pattern: a reconciliation loop in which each controller watches the desired state (exposed through the API server and persisted in etcd, a distributed key-value store) and continuously takes actions to drive the actual state toward it. This pattern is deceptively simple but extraordinarily powerful. It makes Kubernetes self-healing: if a node dies, the ReplicaSet controller notices that the actual number of pods is less than the desired number and creates replacements, which the scheduler then places on healthy nodes. If a deployment specifies a new container image, the Deployment controller performs a rolling update, gradually replacing old pods with new ones.
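Stripped of everything Kubernetes-specific, the reconciliation loop can be sketched in a few lines of Go. The `cluster` type below is a toy in-memory model invented for illustration; a real controller reads state through the API server rather than from a struct.

```go
package main

import "fmt"

// cluster is a toy in-memory model of desired versus observed state.
type cluster struct {
	desired int // replicas the user asked for
	running int // replicas currently observed
}

// reconcile performs one pass of the loop: compare, then take a single
// corrective step. It returns a description of the action it took.
func (c *cluster) reconcile() string {
	switch {
	case c.running < c.desired:
		c.running++
		return "create pod"
	case c.running > c.desired:
		c.running--
		return "delete pod"
	default:
		return "in sync"
	}
}

func main() {
	c := &cluster{desired: 3, running: 3}
	c.running = 1 // simulate a node failure that takes out two pods

	for {
		action := c.reconcile()
		fmt.Println(action, "-> running:", c.running)
		if action == "in sync" {
			break
		}
	}
}
```

The loop never needs to know why the state drifted. It only compares and corrects, which is what makes the same code handle crashes, manual deletions, and scale changes alike.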

The Go programming language, created by Rob Pike, Ken Thompson, and Robert Griesemer at Google, was the natural choice for implementing Kubernetes. Go’s goroutines, lightweight threads scheduled by the Go runtime whose stacks start at roughly 2 KB and grow on demand, allowed each controller to run as an independent reconciliation loop. Go’s static compilation produced single binaries with no external dependencies, making Kubernetes components easy to deploy. And Go’s straightforward concurrency model with channels made it manageable to build the complex state-management logic that orchestration required.
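As a rough illustration of that concurrency model, the sketch below runs one goroutine per controller and uses channels to deliver events and collect results. The `event` type, the function names, and the controller names are hypothetical and far simpler than the informer machinery Kubernetes actually uses.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
)

// event is a hypothetical change notification delivered to a controller.
type event struct{ object string }

// runController processes events in its own goroutine until the events
// channel is closed, reporting each reconciled object on results.
func runController(name string, events <-chan event, results chan<- string, wg *sync.WaitGroup) {
	defer wg.Done()
	for ev := range events {
		results <- name + " reconciled " + ev.object
	}
}

// runAll starts one goroutine per controller, sends each a single event,
// waits for all of them to finish, and returns the results in sorted order.
func runAll(names []string) []string {
	results := make(chan string, len(names)) // buffered so senders never block
	var wg sync.WaitGroup
	for _, n := range names {
		events := make(chan event, 1)
		wg.Add(1)
		go runController(n, events, results, &wg)
		events <- event{object: "web-application"}
		close(events)
	}
	wg.Wait()
	close(results)

	var out []string
	for r := range results {
		out = append(out, r)
	}
	sort.Strings(out) // goroutine completion order is not deterministic
	return out
}

func main() {
	for _, line := range runAll([]string{"deployment", "replicaset"}) {
		fmt.Println(line)
	}
}
```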

The Broader Ecosystem

Burns understood early that Kubernetes could not succeed as a monolithic product; it needed an ecosystem. He was instrumental in the decision to donate Kubernetes to the Cloud Native Computing Foundation (CNCF) in 2015, rather than keeping it under Google’s sole control. This move was critical: it gave competing vendors (Microsoft, Amazon Web Services, Red Hat) the confidence to invest in Kubernetes without fear of Google pulling the rug. The CNCF grew into the steward of an entire cloud-native ecosystem, with projects like Prometheus (monitoring), Envoy (service proxy), Helm (package management), and Istio (service mesh) forming a comprehensive platform stack.

The container orchestration landscape in 2014-2016 was a competitive battlefield. Docker Swarm, Apache Mesos with Marathon, and Kubernetes all vied for dominance. Kubernetes won decisively, and Burns’s decision to open-source the project and give it to a neutral foundation was a major factor. By 2018, Docker itself had added native Kubernetes support, and Amazon — which had initially pushed its own ECS service — launched Amazon EKS for managed Kubernetes. Today, every major cloud provider offers a managed Kubernetes service, and the project has over 3,000 contributors from hundreds of organizations.

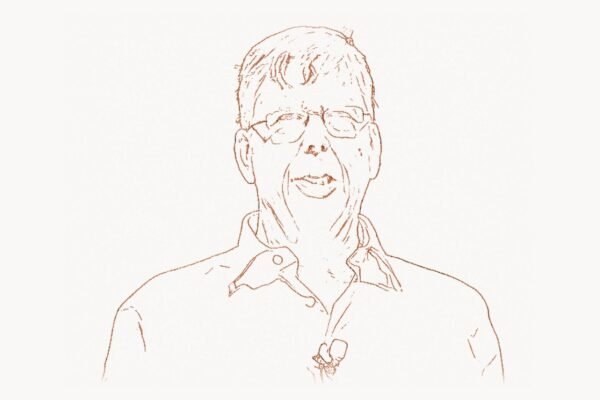

The Move to Microsoft and Continued Impact

In 2016, Burns made a surprising move: he left Google for Microsoft, joining the Azure team, where he went on to become a Corporate Vice President. The move was widely discussed in the cloud-native community. Why would a co-creator of Kubernetes leave the company that had built it? Burns explained that Microsoft’s commitment to open source under CEO Satya Nadella was genuine and that Azure offered an opportunity to bring Kubernetes to enterprise customers at an unprecedented scale.

At Microsoft, Burns led the development of Azure Kubernetes Service (AKS), which became one of the most widely used managed Kubernetes platforms. He also continued to contribute to the broader Kubernetes community and became a prolific author and speaker on cloud-native architecture. His book Designing Distributed Systems (O’Reilly, 2018) codified common patterns for containerized applications — sidecars, ambassadors, adapters, leader election, work queues — into a vocabulary that developers could use to reason about distributed system design. It was the kind of book that Burns was uniquely positioned to write: practical enough for working engineers, grounded in the hard lessons learned from building both Borg and Kubernetes.

Under Burns’s leadership, Microsoft Azure also invested heavily in developer tooling for Kubernetes. Projects like Virtual Kubelet (which allows Kubernetes to schedule pods onto serverless container platforms), Draft (which streamlined the development-to-Kubernetes deployment cycle), and Dapr (Distributed Application Runtime, which provides building blocks for microservice development) all emerged from Burns’s Azure team. These tools reflected his consistent philosophy: make the complex simple, lower the barrier to entry, and let developers focus on their applications rather than their infrastructure.

Philosophy and Engineering Principles

Developer Experience as a First-Class Concern

Burns has consistently argued that infrastructure software fails when it ignores the humans who use it. Borg worked brilliantly at Google because Google invested heavily in training, documentation, and internal tooling. The open-source world does not have that luxury. Kubernetes had to be usable by a developer who had never operated a cluster before — and while Kubernetes has often been criticized for its complexity (the “YAML engineer” meme reflects genuine frustration), the declarative model that Burns championed is fundamentally simpler than the alternative of writing imperative deployment scripts for every environment.

His philosophy aligns with a broader principle in modern software engineering: the best infrastructure is invisible. A well-configured Kubernetes cluster should allow a developer to push code, have it automatically tested and built into a container image, deployed with a rolling update, and monitored for health — without the developer thinking about which physical or virtual machine it runs on. This is the promise of platform engineering, and Kubernetes is its foundation.

Declarative Over Imperative

Burns is a vocal advocate for declarative infrastructure — describing what you want, not how to get there. This principle, borrowed from Borg, is embedded in every layer of Kubernetes. A Deployment declares the desired number of replicas; a Service declares how traffic should reach pods; a PersistentVolumeClaim declares storage requirements. The system’s controllers handle the “how.” This approach has several advantages: configurations can be version-controlled, diffed, and reviewed like code; the system can self-heal because it always knows the intended state; and operations become reproducible across environments.

This declarative model also enabled GitOps, the practice of using Git repositories as the source of truth for infrastructure configuration. Tools like Argo CD and Flux watch Git repositories and automatically reconcile Kubernetes cluster state with the committed configuration. Because every change is a commit, the deployment pipeline becomes auditable and reversible, and changes can be reviewed and tested before they reach a cluster, which matters to organizations managing complex development toolchains.
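At its core, a GitOps reconciliation is a comparison between what is committed and what is running. The Go sketch below shows that comparison over two maps; it is not how Argo CD or Flux are implemented, and the `plan` function, the resource names, and the version strings are assumptions for illustration. Deleting resources that are absent from Git (pruning) is treated here as always enabled, which real tools typically make optional.

```go
package main

import (
	"fmt"
	"sort"
)

// plan compares the configuration committed to Git with the live cluster
// state and lists the changes needed to make the cluster match Git.
// Both maps go from a resource name to a string identifying its spec.
func plan(committed, live map[string]string) []string {
	var changes []string
	for name, want := range committed {
		got, exists := live[name]
		switch {
		case !exists:
			changes = append(changes, "create "+name)
		case got != want:
			changes = append(changes, "update "+name)
		}
	}
	// Anything running that Git does not mention is drift to be pruned.
	for name := range live {
		if _, ok := committed[name]; !ok {
			changes = append(changes, "delete "+name)
		}
	}
	sort.Strings(changes) // map iteration order is random, so sort for stable output
	return changes
}

func main() {
	committed := map[string]string{
		"deployment/web": "image:2.4.1",
		"service/web":    "port:80",
	}
	live := map[string]string{
		"deployment/web": "image:2.4.0",
		"configmap/old":  "v1",
	}
	for _, change := range plan(committed, live) {
		fmt.Println(change)
	}
}
```

Rolling back then amounts to reverting the commit: the next comparison produces the changes that restore the earlier state.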

The Kubernetes Operator Pattern

One of the most powerful ideas to emerge from the Kubernetes ecosystem is the operator pattern, which Burns has championed as the natural extension of Kubernetes’s controller model. An operator is a custom controller that encodes domain-specific operational knowledge — how to deploy a PostgreSQL cluster, how to manage certificate rotation, how to scale an Elasticsearch cluster — into software. Instead of writing runbooks that humans follow, you write operators that Kubernetes runs automatically. Here is a simplified example of what a custom operator’s reconciliation loop looks like:

```go
import (
	"context"
	"time"

	appsv1 "k8s.io/api/apps/v1"
	apierrors "k8s.io/apimachinery/pkg/api/errors"
	"k8s.io/apimachinery/pkg/types"
	ctrl "sigs.k8s.io/controller-runtime"
	"sigs.k8s.io/controller-runtime/pkg/client"
)

// Reconcile drives the Deployment that backs a WebApp toward the WebApp's spec.
// WebAppReconciler, the myv1 API package, and constructDeployment are assumed
// to be defined elsewhere in the operator.
func (r *WebAppReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {
	// Load the custom resource; ignore it if it was deleted in the meantime.
	var app myv1.WebApp
	if err := r.Get(ctx, req.NamespacedName, &app); err != nil {
		return ctrl.Result{}, client.IgnoreNotFound(err)
	}

	// Ensure the deployment exists with correct replicas
	deployment := &appsv1.Deployment{}
	err := r.Get(ctx, types.NamespacedName{
		Name:      app.Name,
		Namespace: app.Namespace,
	}, deployment)
	if apierrors.IsNotFound(err) {
		// Create the deployment
		dep := r.constructDeployment(&app)
		if err := r.Create(ctx, dep); err != nil {
			return ctrl.Result{}, err
		}
		return ctrl.Result{RequeueAfter: 30 * time.Second}, nil
	}
	if err != nil {
		// Any other lookup failure: return it so the request is retried.
		return ctrl.Result{}, err
	}

	// Update replicas if they differ from desired state
	if deployment.Spec.Replicas == nil || *deployment.Spec.Replicas != app.Spec.Replicas {
		deployment.Spec.Replicas = &app.Spec.Replicas
		if err := r.Update(ctx, deployment); err != nil {
			return ctrl.Result{}, err
		}
	}

	return ctrl.Result{RequeueAfter: 60 * time.Second}, nil
}
```

This pattern has spawned an entire ecosystem. The OperatorHub.io registry lists hundreds of community-built operators, and frameworks like Kubebuilder and Operator SDK (both written in Go) make it straightforward to build custom operators. The operator pattern represents Burns’s vision of infrastructure as software: not scripts or manual procedures, but continuously running programs that maintain the desired state of complex systems.

Legacy and Modern Relevance

By 2026, Kubernetes is not just a tool — it is the operating system of the cloud. The CNCF’s annual surveys consistently show Kubernetes adoption above 80% among organizations using containers, with managed services like AKS, EKS, and GKE handling the operational burden for most teams. The ecosystem that grew around Kubernetes — service meshes, observability platforms, security tools, progressive delivery systems — represents billions of dollars in value and employs hundreds of thousands of engineers worldwide.

Burns’s impact extends beyond the code he wrote. He helped establish the model for how large technology companies can successfully open-source infrastructure projects: build something genuinely useful, donate it to a neutral foundation, attract competing companies as co-investors, and let the community drive adoption. This playbook has been repeated by projects from TensorFlow to OpenTelemetry.

The impact on how software teams operate has been equally profound. Before Kubernetes, deploying an application to production at scale required deep expertise in server provisioning, configuration management, networking, and monitoring — each handled by different tools with different paradigms. Kubernetes unified these concerns under a single API and declarative model. A developer who understands Pods, Services, Deployments, and ConfigMaps can reason about the full lifecycle of their application, from development to production, across any cloud provider. This portability — write your configuration once and deploy it on AWS, Azure, GCP, or on-premises — was central to Burns’s vision and remains one of Kubernetes’s most valuable properties.

Burns continues to work at Microsoft Azure, where he leads teams building the next generation of cloud-native developer tools. He remains active in the Kubernetes community as a speaker, author, and mentor, consistently advocating for simplicity, developer experience, and the power of declarative systems. His trajectory — from academic researcher to Google infrastructure engineer to open-source pioneer to Microsoft executive — reflects the path of container orchestration itself: an idea that started inside one company’s data centers and grew to become the foundation of modern cloud-native application deployment.

What Linus Torvalds did for operating systems with Linux, and what Jeff Dean did for distributed data processing with MapReduce, Brendan Burns did for application deployment with Kubernetes. He took a set of ideas that existed inside Google’s walled garden and transformed them into a public good that changed how the entire industry builds and operates software. The helmsman set the course, and the industry followed.

Key Facts

- Education: B.A. from Williams College; Ph.D. in Computer Science from University of Massachusetts Amherst

- Known for: Co-creating Kubernetes, pioneering container orchestration for the open-source world

- Career: Google (Borg/Kubernetes team) → Microsoft Azure (Corporate Vice President)

- Key projects: Kubernetes (2014), Azure Kubernetes Service (AKS), Virtual Kubelet, Dapr

- Notable publications: Designing Distributed Systems (O’Reilly, 2018); co-author of “Borg, Omega, and Kubernetes” (ACM Queue, 2016)

- CNCF: Instrumental in donating Kubernetes to the Cloud Native Computing Foundation in 2015

- Impact: Kubernetes runs production workloads at over 70% of Fortune 100 companies

Frequently Asked Questions

What is Kubernetes and why did Brendan Burns create it?

Kubernetes is an open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications across clusters of machines. Burns co-created it at Google in 2014 (with Joe Beda and Craig McLuckie) to bring the core ideas behind Google’s internal Borg cluster management system to the open-source world. The goal was to give every developer access to the same infrastructure automation that Google had used internally for over a decade.

What is the difference between Kubernetes and Docker?

Docker solves the packaging problem: it allows you to build a container image that bundles your application with its dependencies and runs consistently on any machine. Kubernetes solves the orchestration problem: it manages fleets of containers across many machines, handling scheduling, networking, scaling, self-healing, and rolling updates. Docker creates the containers; Kubernetes runs them at scale. In modern deployments, container runtimes like containerd (originally extracted from the Docker engine) handle the low-level container execution, while Kubernetes manages everything above that layer.

Why did Brendan Burns leave Google for Microsoft?

Burns joined Microsoft’s Azure organization in 2016 and later became a Corporate Vice President. He has stated that Microsoft’s commitment to open source under Satya Nadella’s leadership and the opportunity to bring Kubernetes to enterprise customers at scale were primary motivations. At Microsoft, he led the development of Azure Kubernetes Service (AKS) and contributed to projects like Dapr and Virtual Kubelet. The move proved strategically significant for both Burns and Microsoft, as Azure became one of the leading platforms for Kubernetes workloads.

What programming language is Kubernetes written in?

Kubernetes is written in Go (Golang). Go’s lightweight goroutines, static compilation into single binaries, fast build times, and straightforward concurrency model made it ideal for building the complex controller loops and API servers that Kubernetes requires. Much of the cloud-native ecosystem, including tools like Prometheus, Terraform, and etcd, is also written in Go.

What is the Kubernetes operator pattern?

The operator pattern is a method of extending Kubernetes by writing custom controllers that encode application-specific operational knowledge. Instead of following manual runbooks to manage complex software (like databases or message queues), an operator automates those operations as a Kubernetes-native program. Operators watch for changes to custom resources and continuously reconcile the actual state of the system with the desired state, following the same controller pattern that powers Kubernetes itself.

How has Kubernetes changed software deployment?

Before Kubernetes, deploying applications at scale required stitching together multiple tools for provisioning, configuration, networking, and monitoring. Kubernetes unified these concerns under a single declarative API. Engineers describe what they want (replicas, resources, health checks), and Kubernetes handles the how (scheduling, scaling, recovery). This model enabled practices like GitOps, where infrastructure configuration is stored in Git and automatically applied, and platform engineering, where internal teams build self-service deployment platforms on top of Kubernetes for their organizations.