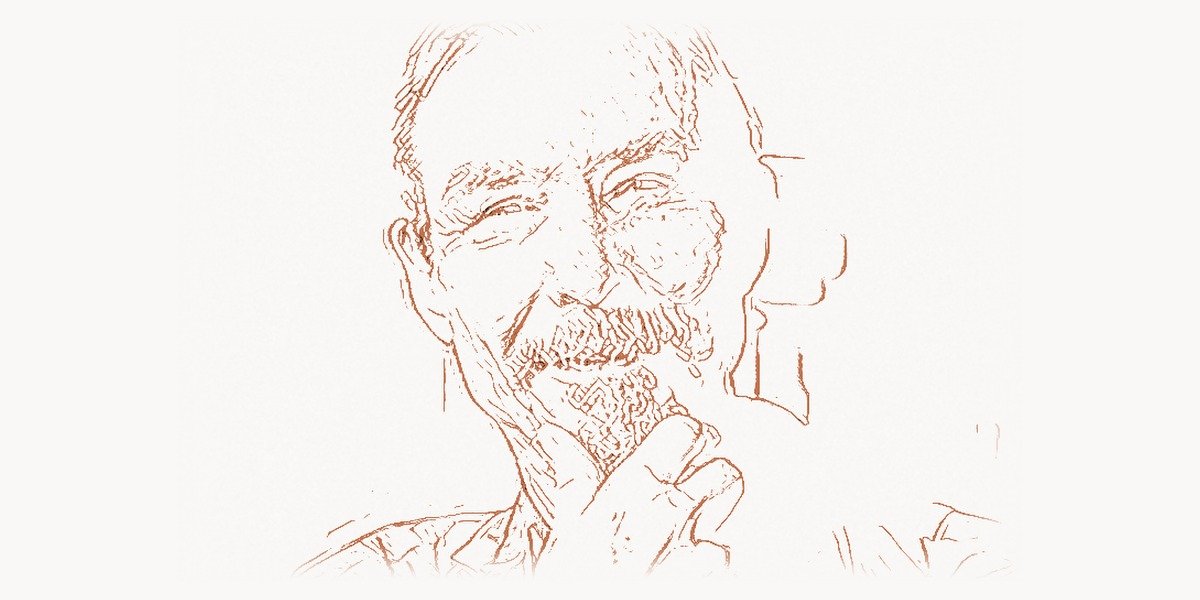

In 1980, Caltech professor Carver Mead and Xerox PARC researcher Lynn Conway published a textbook that turned the arcane art of integrated circuit design into a discipline that computer scientists could learn. Before “Introduction to VLSI Systems,” chip design was a priesthood: a craft practiced by a small number of specialists at companies like Intel and Texas Instruments, who laid out circuits by hand with rubylith and light tables. After the book, thousands of university students could design their own silicon, and the modern semiconductor revolution shifted into overdrive. But VLSI design was only the beginning. Mead went on to pioneer neuromorphic computing, building electronic systems that mimic the nervous system, decades before artificial intelligence became the most talked-about technology on the planet. His work at the intersection of physics, engineering, and biology has shaped everything from how the chips in your smartphone are designed to the search for more energy-efficient AI hardware. Few living scientists have changed computing so fundamentally, and so quietly.

Early Life and Education

Carver Andress Mead was born on May 1, 1934, in Bakersfield, California. He grew up in the Sierra Nevada foothills, where his father worked at hydroelectric power plants. Building things and understanding how the physical world worked were everyday activities in the household. From a young age, he was drawn to electronics: he took apart radios, built crystal sets, and experimented with anything he could find that carried an electrical current. Growing up in rural California in the 1940s, far from the academic centers of the East Coast, he developed an independence of mind that would later define his career.

Mead attended the California Institute of Technology (Caltech) for his undergraduate studies, earning his B.S. in electrical engineering in 1956. He stayed at Caltech for his graduate work, completing his M.S. in 1957 and his Ph.D. in electrical engineering in 1960. His doctoral research focused on the physics of semiconductor devices — specifically, the quantum mechanical tunneling phenomena in thin insulating films. This was not merely an academic exercise: understanding how electrons behave at the nanoscale in solid-state materials would become the foundation of his entire career.

After receiving his doctorate, Mead joined the Caltech faculty as an assistant professor in 1960. He would remain at Caltech for his entire career, eventually becoming the Gordon and Betty Moore Professor of Engineering and Applied Science — a chair named after Gordon Moore, the Intel co-founder whose famous law about transistor density Mead himself helped name and promote. That lifelong connection to a single institution gave Mead the intellectual freedom to pursue ideas that crossed disciplinary boundaries in ways that would have been difficult at a more traditional university.

The VLSI Design Breakthrough

Technical Innovation

By the late 1970s, integrated circuits were becoming dramatically more complex. The Intel 4004 — the first commercial microprocessor, designed by Federico Faggin and his team — had contained 2,300 transistors in 1971. By 1978, Intel’s 8086 processor held 29,000. Moore’s Law was driving exponential growth in transistor counts, but the design methodology had not kept pace. Chips were still designed at the transistor level by specialists who understood both the physics and the fabrication processes intimately. There was no abstraction layer, no systematic design methodology that would allow the process to scale.

Carver Mead saw the problem clearly. He understood that the bottleneck in semiconductor progress was not physics or fabrication. It was design. There were not enough human designers who could work at the transistor level, and the complexity of chips was doubling roughly every two years. If chip design remained a craft, it would hit a wall long before physics imposed any limit.
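The doubling arithmetic is easy to check against the transistor counts quoted above. The Python sketch below assumes a clean exponential with a fixed doubling period; real product counts scatter around any such curve. Projecting the 4004’s 2,300 transistors forward seven years at a two-year doubling period gives about 26,000, which is close to the 8086’s 29,000. The two chips together imply a doubling period of a little under two years. The function names are illustrative.

```python
import math

def projected_transistors(start_count, start_year, end_year, doubling_years=2.0):
    """Project a transistor count forward, assuming a fixed doubling period."""
    doublings = (end_year - start_year) / doubling_years
    return start_count * 2 ** doublings

def implied_doubling_period(count_a, year_a, count_b, year_b):
    """Doubling period (in years) implied by two points on an exponential curve."""
    return (year_b - year_a) / math.log2(count_b / count_a)

# Transistor counts quoted in the text.
count_4004, year_4004 = 2300, 1971    # Intel 4004
count_8086, year_8086 = 29000, 1978   # Intel 8086

projected_1978 = projected_transistors(count_4004, year_4004, year_8086)
implied_period = implied_doubling_period(count_4004, year_4004, count_8086, year_8086)

print(f"Projected 1978 count at 2-year doubling: {projected_1978:,.0f}")  # 26,022
print(f"Actual 8086 count: {count_8086:,}")
print(f"Doubling period implied by the two chips: {implied_period:.2f} years")  # 1.91
```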

Working with Lynn Conway of Xerox PARC, Mead developed a systematic methodology for Very Large Scale Integration (VLSI) design. Their approach introduced several key concepts. First, design rules that abstracted away the messy details of fabrication processes, allowing designers to work with simple geometric rules rather than needing to understand the physics of each specific foundry. Second, a hierarchical design approach where complex chips could be built from standardized building blocks — much like how software is built from functions, modules, and libraries. Third, a clean separation between design and fabrication that meant a chip designed at one university could be manufactured at any compatible foundry.
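The hierarchical idea can be sketched in a few lines of Python. Each cell is defined once and then instantiated inside larger cells. A designer can therefore reason about a half adder or an array of adders without redrawing individual transistors. The `Cell` class and the cell names are hypothetical, and the leaf counts assume standard static-CMOS gates. This models the bookkeeping only, not any real layout tool.

```python
from dataclasses import dataclass, field

@dataclass
class Cell:
    """A layout cell: primitive transistors plus instances of other cells."""
    name: str
    own_transistors: int = 0
    subcells: list = field(default_factory=list)  # (Cell, instance_count) pairs

    def transistor_count(self) -> int:
        """Total transistors, found by walking the hierarchy recursively."""
        total = self.own_transistors
        for cell, count in self.subcells:
            total += count * cell.transistor_count()
        return total

# Leaf cells with standard static-CMOS transistor counts (an assumption:
# the 1980 textbook itself worked mainly in nMOS).
inv = Cell("inv", own_transistors=2)
nand2 = Cell("nand2", own_transistors=4)

# Higher-level cells are defined only in terms of lower-level ones.
xor2 = Cell("xor2", subcells=[(nand2, 4)])             # classic four-NAND XOR
and2 = Cell("and2", subcells=[(nand2, 1), (inv, 1)])   # NAND followed by inverter
half_adder = Cell("half_adder", subcells=[(xor2, 1), (and2, 1)])
ha_x8 = Cell("ha_x8", subcells=[(half_adder, 8)])      # eight independent half adders

for cell in (inv, nand2, xor2, and2, half_adder, ha_x8):
    print(f"{cell.name:10s} {cell.transistor_count():4d} transistors")
```

Every higher-level count is derived from the leaf cells, so changing one leaf definition propagates through the whole design. That property is what makes hierarchical reuse scale.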

The landmark 1980 textbook “Introduction to VLSI Systems” codified this methodology and made it teachable. It was not a gentle introduction — the book was dense, rigorous, and demanding. But it transformed VLSI design from an apprenticeship into an engineering discipline with clear rules and principles.

```python
# VLSI design rule abstraction: Mead-Conway methodology
# Demonstrates lambda-based scalable design rules

class VLSIDesignRules:
    """
    Mead-Conway design rules use a single parameter (lambda)
    to abstract away foundry-specific dimensions.
    All geometric constraints are expressed as multiples of lambda.
    This abstraction was revolutionary: in principle, the same design
    could be fabricated at any compatible foundry simply
    by changing the value of lambda.
    """

    # Minimum widths (in lambda units)
    METAL_WIDTH = 3       # Minimum metal wire width: 3 lambda
    POLY_WIDTH = 2        # Polysilicon gate width: 2 lambda
    DIFFUSION_WIDTH = 2   # Diffusion region width: 2 lambda
    CONTACT_SIZE = 2      # Contact/via size: 2 lambda x 2 lambda

    # Minimum spacings (in lambda units)
    METAL_SPACING = 3     # Metal-to-metal spacing: 3 lambda
    POLY_SPACING = 2      # Poly-to-poly spacing: 2 lambda
    DIFF_SPACING = 3      # Diffusion-to-diffusion spacing: 3 lambda

    # Overlap rules
    POLY_OVER_DIFF = 2    # Poly must extend 2 lambda beyond diffusion
    CONTACT_SURROUND = 1  # Metal must surround a contact by 1 lambda

    def __init__(self, lambda_nm):
        """
        Initialize with a technology-specific lambda value.
        lambda_nm: half the minimum feature size, in nanometers.
        Example: for a 1-micron process, lambda = 500 nm.
        """
        self.lam = lambda_nm  # lambda in nanometers

    def to_nm(self, lambda_units):
        """Convert lambda units to nanometers."""
        return lambda_units * self.lam

    def min_gate_length(self):
        """Minimum drawn MOSFET gate length (poly width over diffusion)."""
        return self.to_nm(self.POLY_WIDTH)

    def min_wire_pitch(self):
        """Minimum metal pitch = width + spacing."""
        return self.to_nm(self.METAL_WIDTH + self.METAL_SPACING)

    def estimate_transistor_area(self):
        """Rough bounding box of a minimum transistor, in nm^2.

        Width: diffusion width plus the poly overhang on both sides (6 lambda).
        Height: poly width plus diffusion spacing on both sides (8 lambda).
        """
        w = self.to_nm(self.DIFFUSION_WIDTH + 2 * self.POLY_OVER_DIFF)
        h = self.to_nm(self.POLY_WIDTH + 2 * self.DIFF_SPACING)
        return w * h  # 48 lambda^2, expressed in nm^2


# Compare technology nodes across decades.
# Caveat: scalable lambda rules grew increasingly conservative as processes
# shrank, and modern processes use foundry-specific rules. Node names such as
# "5nm" no longer correspond to a physical gate length, so the later rows are
# illustrative extrapolations only.
for node_name, lam in [("1 micron (1980s)", 500),
                       ("250nm (1990s)", 125),
                       ("45nm (2007)", 22),
                       ("5nm (2020s)", 2)]:
    rules = VLSIDesignRules(lam)
    area = rules.estimate_transistor_area()
    print(f"{node_name}: lambda={lam}nm, "
          f"min gate={rules.min_gate_length()}nm, "
          f"wire pitch={rules.min_wire_pitch()}nm, "
          f"transistor area={area}nm2")

# Output:
# 1 micron (1980s): lambda=500nm, min gate=1000nm, wire pitch=3000nm, transistor area=12000000nm2
# 250nm (1990s): lambda=125nm, min gate=250nm, wire pitch=750nm, transistor area=750000nm2
# 45nm (2007): lambda=22nm, min gate=44nm, wire pitch=132nm, transistor area=23232nm2
# 5nm (2020s): lambda=2nm, min gate=4nm, wire pitch=12nm, transistor area=192nm2
```

Why It Mattered

The impact of the Mead-Conway methodology was immediate and profound. Before their work, only a small community of specialists, mostly inside semiconductor companies, could design integrated circuits. Within a few years of the textbook’s publication, dozens of universities had adopted it for courses, and thousands of students were designing chips as part of their education. Conway’s 1979 multi-project chip experiment (MPC79) showed that many student designs could share a single fabrication run. In 1981 the idea became MOSIS (Metal Oxide Semiconductor Implementation Service), a DARPA-funded service run by the University of Southern California’s Information Sciences Institute. By pooling designs onto shared wafers, MOSIS made it economically feasible for students to have their designs actually manufactured.
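The economics of a shared run can be illustrated with simple arithmetic. In this Python sketch the run cost, the project names, and the die areas are all hypothetical. The area-proportional split is a simplifying assumption, not MOSIS’s actual pricing scheme. The point is only that a fixed fabrication cost, divided among many small designs, leaves each one a manageable fraction.

```python
def share_run_cost(run_cost, die_areas_mm2):
    """Split one fabrication run's cost across designs in proportion to die area."""
    total_area = sum(die_areas_mm2.values())
    return {name: run_cost * area / total_area
            for name, area in die_areas_mm2.items()}

# Hypothetical numbers for illustration only: one run costing 100,000
# (any currency) shared by four student projects with these die areas.
die_areas_mm2 = {"team_a": 4.0, "team_b": 4.0, "team_c": 2.0, "team_d": 10.0}
shares = share_run_cost(100_000, die_areas_mm2)

for name, cost in shares.items():
    print(f"{name}: {cost:,.0f} ({die_areas_mm2[name]} mm^2)")
```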

This democratization of chip design had cascading effects throughout the technology industry. The fabless semiconductor companies of the 1980s and 1990s design chips but do not own fabrication facilities, and their explosion owes much to the Mead-Conway separation of design from manufacturing. Companies like Qualcomm, Nvidia, and Broadcom built their business models on the design-manufacturing split that Mead and Conway helped establish. The modern semiconductor ecosystem rests on this abstraction: specialized design houses send their layouts to foundries like TSMC. If you use a smartphone, a laptop, or any device with a custom chip inside, you are benefiting from Carver Mead’s work on VLSI design methodology.

Other Major Contributions

While VLSI design would have been enough to secure a lasting legacy, Mead’s intellectual curiosity drove him into entirely new territory. His contributions span neuromorphic engineering, optical sensing, physics, and the foundations of modern AI hardware.

Neuromorphic Engineering

In the late 1980s, Mead coined the term “neuromorphic engineering” and launched a field that is now at the cutting edge of computing research. The core idea was provocative: instead of building computers that simulate neural networks in software (which is what modern deep learning systems do), why not build hardware that physically operates like biological neural tissue? Biological neurons compute with analog signals, consume minuscule amounts of energy, and process information in massively parallel architectures. Digital computers, by contrast, shuttle binary data through sequential bottlenecks and consume enormous amounts of power doing so.

Mead’s insight was that the physics governing transistors in subthreshold operation (when they are barely turned on and carrying tiny currents) closely mirrors the physics of ion channels in biological neurons. In both cases, current depends exponentially on voltage, because charge carriers must cross an energy barrier according to Boltzmann statistics. Conventional digital designers treated this regime as leakage to be avoided. By operating transistors in it, Mead could build circuits that behaved like real neurons and synapses, processing information with analog signals at extremely low power. His 1989 book “Analog VLSI and Neural Systems” laid out the theoretical and practical foundations for this approach.
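The exponential behavior Mead exploited can be written down directly. An idealized saturated subthreshold nFET obeys I_d ≈ I_0 exp(κ V_g / U_T), where U_T = kT/q is the thermal voltage. The Python sketch below assumes κ = 0.7 and I_0 = 1e-15 A; both values are process-dependent and illustrative. It also ignores drain-voltage effects. It shows the key property: every U_T ln 10 / κ of extra gate voltage (about 85 mV here) multiplies the current by ten. This is the same kind of Boltzmann exponential that governs voltage-dependent ion channels.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant (J/K)
Q_E = 1.602176634e-19  # elementary charge (C)
KAPPA = 0.7            # assumed gate coupling coefficient (below 1 in practice)
I_0 = 1e-15            # assumed pre-exponential current (A), process dependent

def thermal_voltage(temp_k=300.0):
    """U_T = kT/q, about 25.9 mV at room temperature."""
    return K_B * temp_k / Q_E

def subthreshold_current(v_gate, temp_k=300.0):
    """Idealized saturated subthreshold nFET: I = I_0 * exp(KAPPA * V_g / U_T).

    Assumes a grounded source and a drain voltage large enough (a few U_T)
    that the drain dependence can be ignored.
    """
    return I_0 * math.exp(KAPPA * v_gate / thermal_voltage(temp_k))

ut = thermal_voltage()
mv_per_decade = 1000 * ut * math.log(10) / KAPPA  # gate swing for a 10x current change
print(f"U_T = {ut * 1000:.2f} mV; {mv_per_decade:.1f} mV of gate voltage per decade of current")
for vg in (0.0, 0.1, 0.2, 0.3):
    print(f"V_g = {vg:.1f} V -> I_d = {subthreshold_current(vg):.2e} A")
```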

The field Mead founded has grown into a major area of computing research. Intel’s Loihi chip, IBM’s TrueNorth, and a host of academic neuromorphic processors build on the agenda Mead launched, even though many of them implement spiking neurons with digital rather than analog circuits. As AI workloads push the limits of conventional processor architectures, neuromorphic computing offers a fundamentally different path. It could deliver orders-of-magnitude improvements in energy efficiency for tasks like pattern recognition, sensor processing, and autonomous navigation.

The Silicon Retina

One of Mead’s most remarkable demonstrations of neuromorphic principles was the silicon retina, which he developed with his graduate student Misha Mahowald in the late 1980s and early 1990s. This analog VLSI chip mimicked the processing that occurs in the human retina. Rather than just detecting light, it adapted to the overall light level and enhanced contrast and edges directly in the silicon, much as retinal neurons do before sending signals to the brain.

The silicon retina did not capture images the way a conventional camera sensor does (by recording the absolute brightness at each pixel). Instead, it responded to changes and edges, signaling where brightness transitions occurred and largely ignoring uniform regions. Biological retinas behave much the same way: they are highly sensitive to changes in their visual field and largely ignore static backgrounds. The result was a sensor that could process visual information with extraordinary efficiency, using a fraction of the power and bandwidth that conventional image processing requires.
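The center-surround computation can be sketched in one dimension. In the Mahowald-Mead design, photoreceptors produce a roughly logarithmic response. A resistive network, playing the role of the retina’s horizontal cells, computes a spatially smoothed version of that response. The output is the difference between the two. The Python below is a simplified steady-state model with assumed conductance values. It is solved numerically with Jacobi iteration rather than by circuit physics, and the function name is hypothetical.

```python
import math

def resistive_network(inputs, g_in=1.0, g_link=4.0, iterations=500):
    """Steady state of a 1-D resistive grid.

    Each node connects to its own input through conductance g_in and to each
    neighbor through g_link. Kirchhoff's current law at node i gives
        v[i] = (g_in*inputs[i] + g_link*sum(neighbors)) / (g_in + g_link*len(neighbors)),
    which is solved here by Jacobi iteration.
    """
    n = len(inputs)
    v = list(inputs)
    for _ in range(iterations):
        new_v = []
        for i in range(n):
            neighbors = [v[j] for j in (i - 1, i + 1) if 0 <= j < n]
            total_g = g_in + g_link * len(neighbors)
            new_v.append((g_in * inputs[i] + g_link * sum(neighbors)) / total_g)
        v = new_v
    return v

# A step edge in light intensity: dim on the left, bright on the right.
intensity = [10.0] * 8 + [100.0] * 8
photoreceptor = [math.log(x) for x in intensity]  # logarithmic photoreceptor
surround = resistive_network(photoreceptor)       # smoothed "horizontal cell" signal
output = [p - s for p, s in zip(photoreceptor, surround)]

for i, out in enumerate(output):
    print(f"pixel {i:2d}: {out:+.3f}")
```

Far from the edge the output is close to zero, because the smoothed signal matches the local input. Next to the edge, the dim side goes negative and the bright side goes positive. Raising `g_link` relative to `g_in` widens the surround.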

This work anticipated by decades the event-driven cameras now being developed for autonomous vehicles, robotics, and augmented reality systems. Modern dynamic vision sensors (DVS) operate on principles remarkably similar to Mead’s original silicon retina.
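A dynamic vision sensor’s basic rule is simple. Each pixel independently reports when the logarithm of its light intensity has changed by more than a contrast threshold since its last report. The Python sketch below applies that rule to a sequence of sampled frames. The threshold value and the function name are assumptions. Real sensors also work asynchronously in continuous time rather than frame by frame.

```python
import math

def dvs_events(frames, threshold=0.2):
    """Simplified event-camera model.

    A pixel emits an event (time, pixel, polarity) when its log intensity has
    changed by at least `threshold` since that pixel's last event; the pixel's
    reference level is then reset to the current value.
    """
    reference = [math.log(x) for x in frames[0]]
    events = []
    for t, frame in enumerate(frames[1:], start=1):
        for i, value in enumerate(frame):
            delta = math.log(value) - reference[i]
            if abs(delta) >= threshold:
                events.append((t, i, 1 if delta > 0 else -1))
                reference[i] = math.log(value)
    return events

# A bright spot moving right across a static background, then stopping.
frames = [
    [50, 50, 200, 50, 50],
    [50, 50, 50, 200, 50],
    [50, 50, 50, 50, 200],
    [50, 50, 50, 50, 200],  # nothing moves: no events at t = 3
]
events = dvs_events(frames)
print(events)  # [(1, 2, -1), (1, 3, 1), (2, 3, -1), (2, 4, 1)]
```

Only pixels where something changed produce output. Once the spot stops moving, the sensor falls silent, which is the bandwidth saving that event-driven vision relies on.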

Foveon and Computational Imaging

Mead’s work on image sensors led him to co-found Foveon, Inc. in 1997. The company developed a revolutionary image sensor technology based on the principle that silicon absorbs different wavelengths of light at different depths. Conventional digital camera sensors use a Bayer filter mosaic — a pattern of colored filters placed over the pixels — to capture color information, which means each pixel only records one color and the rest must be interpolated. The Foveon X3 sensor, by contrast, captured all three color channels (red, green, blue) at every pixel location by stacking three layers of photodetectors at different depths in the silicon.
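The depth-separation principle follows from Beer-Lambert absorption. The fraction of light absorbed between depths z0 and z1 is exp(-z0/d) - exp(-z1/d), where d is the wavelength-dependent absorption depth. The Python sketch below uses rough, illustrative absorption depths for silicon and hypothetical layer boundaries. These are not Foveon’s actual device geometry.

```python
import math

# Approximate 1/e absorption depths of light in crystalline silicon, in
# micrometers (illustrative magnitudes, not measured sensor data).
ABSORPTION_DEPTH_UM = {"blue": 0.4,   # ~450 nm
                       "green": 1.5,  # ~550 nm
                       "red": 3.5}    # ~650 nm

# Hypothetical collection layers: (top depth, bottom depth) in micrometers.
LAYERS_UM = {"top": (0.0, 0.5), "middle": (0.5, 1.5), "bottom": (1.5, 5.0)}

def layer_shares(depth_um):
    """Beer-Lambert: fraction of incoming photons absorbed within each layer."""
    return {name: math.exp(-z0 / depth_um) - math.exp(-z1 / depth_um)
            for name, (z0, z1) in LAYERS_UM.items()}

for color, depth in ABSORPTION_DEPTH_UM.items():
    shares = layer_shares(depth)
    print(f"{color:6s}" + "  ".join(f"{n}={s:.2f}" for n, s in shares.items()))
```

In this model, blue light is absorbed mostly in the top layer and red light reaches deepest. Each layer still collects a mix of colors, however, especially green. That overlap is why a stacked sensor’s three layer signals have to be converted computationally into red, green, and blue values.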

The result was full color information at every pixel location, with no interpolation between neighboring pixels, giving sharper color detail at a given pixel count. Sigma Corporation adopted the Foveon sensor for its line of cameras, and the technology gained a devoted following among photographers who valued its distinctive image quality. Sigma acquired Foveon in 2008.

Physics of Computation and Collective Electrodynamics

Mead’s contributions to the theoretical foundations of computing are less widely known but equally significant. He spent decades thinking deeply about the physics that underlies computation itself: not just how to build faster chips, but what the fundamental physical limits of computation are. His 2000 book “Collective Electrodynamics” presented a radical rethinking of electromagnetic theory. It rebuilt the theory on quantum foundations, starting from coherent systems such as superconductors and treating the vector potential, rather than the electric and magnetic fields, as the central quantity. The work was controversial among physicists but demonstrated the depth and originality of Mead’s thinking about the relationship between physics and information.

He also helped give Moore’s Law its name and its physical footing. Gordon Moore credited Mead with coining the term “Moore’s Law” around 1975. Mead’s 1972 analysis with Bruce Hoeneisen of the fundamental limits of MOS technology had argued that transistors could be scaled far below the dimensions then in production. This relationship between theorist and industrialist, Mead at Caltech and Moore at Intel, proved extraordinarily productive for the entire semiconductor industry. Moore supplied the empirical trend, and Mead supplied physical reasoning that chip complexity could keep scaling for decades.

Philosophy and Approach

Carver Mead’s career is defined not just by specific technical achievements but by a distinctive intellectual philosophy that guided all of his work. He consistently challenged the boundaries between disciplines, and his most important contributions came from seeing connections that specialists in any one field had missed.

Key Principles

Listen to the physics. Mead’s most famous maxim was that engineers should “listen to the technology” — meaning that the physical properties of silicon and electrons should guide design decisions, rather than forcing hardware to conform to abstract mathematical models. When he noticed that subthreshold transistor behavior mimicked neural ion channels, he did not dismiss it as an inconvenience; he built an entire field around it. This principle echoes the approach of other great system thinkers like Claude Shannon, who found deep structure in seemingly disparate phenomena.

Democratize knowledge. The Mead-Conway textbook was not just a technical contribution; it was a deliberate act of democratization. Mead believed that progress was being throttled by the concentration of chip design expertise in a few corporate labs. By making the methodology teachable and accessible, he unleashed an entire generation of designers. The MOSIS service extended this further by giving students access to actual fabrication. This philosophy of breaking down barriers to entry has parallels throughout computing history — from Grace Hopper’s work making programming accessible through compilers to the open-source movement that would transform software decades later.

Think across boundaries. Mead’s most profound contributions came from importing ideas between fields: biology into circuit design, quantum mechanics into electromagnetic theory, physics into computer architecture. He was never content to optimize within the existing paradigm; he always asked whether the paradigm itself was the problem.

Build, do not just theorize. Despite his deep theoretical inclinations, Mead always insisted on building physical systems. His neuromorphic chips were not simulations; they were working silicon. His silicon retina was a real sensor that processed real visual information. This commitment to embodiment — to proving ideas in hardware — gave his theoretical work a credibility and impact that purely mathematical contributions often lack. As John von Neumann demonstrated with the stored-program computer, the gap between theory and implementation is where the most important engineering breakthroughs occur.

```python
# Neuromorphic computing: leaky integrate-and-fire neuron
# A software analogue of the kind of analog neuron circuit Mead pioneered

import math

class LeakyIntegrateFireNeuron:
    """
    Software model of a leaky integrate-and-fire neuron.

    In Mead-style analog VLSI, the same behavior can emerge from
    circuit physics:
      - Membrane capacitance -> a capacitor on the membrane node
      - Ion-channel leak     -> a transistor conducting subthreshold current
      - Spike threshold      -> an amplifier's switching point

    Units: input_current is expressed directly as the voltage change it
    contributes per millisecond (mV/ms), to keep the model simple.
    """

    def __init__(self, tau_ms=20.0, threshold=-55.0,
                 v_rest=-70.0, v_reset=-75.0):
        self.tau = tau_ms           # Membrane time constant (ms)
        self.threshold = threshold  # Spike threshold (mV)
        self.v_rest = v_rest        # Resting potential (mV)
        self.v_reset = v_reset      # Post-spike reset (mV)
        self.v_membrane = v_rest    # Current membrane voltage (mV)
        self.spike_times = []       # Times (ms) at which spikes occurred

    def step(self, input_current, dt_ms=1.0, time_ms=0.0):
        """
        Simulate one timestep of the neuron.

        The leak pulls the voltage back toward rest, which gives exponential
        relaxation in time; in Mead's circuits a subthreshold transistor
        can provide that leak conductance.
        """
        # Leaky integration: exact decay toward rest, plus this step's input
        decay = math.exp(-dt_ms / self.tau)
        self.v_membrane = (self.v_rest
                           + (self.v_membrane - self.v_rest) * decay
                           + input_current * dt_ms)

        # Threshold detection: record a spike and reset the membrane
        if self.v_membrane >= self.threshold:
            self.spike_times.append(time_ms)
            self.v_membrane = self.v_reset
            return True
        return False

    def firing_rate(self, t_end_ms, window_ms=1000.0):
        """Average firing rate (Hz) over the window ending at t_end_ms."""
        recent = [t for t in self.spike_times
                  if t_end_ms - window_ms <= t < t_end_ms]
        return len(recent) * 1000.0 / window_ms


# Drive five independent neurons with a graded stimulus profile that is
# strongest in the center. There is no lateral wiring here, so each
# neuron's rate simply tracks its own input. In the silicon retina,
# center-surround edge enhancement came from lateral interactions
# (a resistive network), which this example does not model.
neurons = [LeakyIntegrateFireNeuron() for _ in range(5)]
input_pattern = [0.5, 2.0, 8.0, 2.0, 0.5]  # mV/ms for each neuron

duration_ms = 100
for t_ms in range(duration_ms):
    for i, neuron in enumerate(neurons):
        neuron.step(input_pattern[i], dt_ms=1.0, time_ms=t_ms)

for i, neuron in enumerate(neurons):
    rate = neuron.firing_rate(t_end_ms=duration_ms, window_ms=duration_ms)
    bar = "#" * int(rate / 10)
    print(f"Neuron {i}: {len(neuron.spike_times):2d} spikes, "
          f"{rate:5.0f} Hz | {bar}")

# Output: the weakest input (0.5 mV/ms) never reaches threshold, and
# the firing rate rises steeply with input strength:
#
# Neuron 0:  0 spikes,     0 Hz |
# Neuron 1:  8 spikes,    80 Hz | ########
# Neuron 2: 33 spikes,   330 Hz | #################################
# Neuron 3:  8 spikes,    80 Hz | ########
# Neuron 4:  0 spikes,     0 Hz |
```

Legacy and Impact

Carver Mead’s influence on modern technology is vast, though it often operates beneath the surface. Companies worth hundreds of billions of dollars or more (Qualcomm, Nvidia, AMD, Apple’s chip division) run on the fabless semiconductor model. That model rests on the separation of chip design from chip manufacturing that Mead and Conway championed. The custom chips in smartphones, the GPUs in data centers, and the AI accelerators that train large language models are all designed with layered abstractions, design rules, and automated tools that descend from the Mead-Conway framework.

His neuromorphic work is experiencing a renaissance. As the limitations of scaling traditional digital architectures become apparent — power consumption, memory bandwidth bottlenecks, the end of Dennard scaling — the brain-inspired approach Mead championed looks increasingly prescient. Intel’s neuromorphic research lab, academic programs at dozens of universities worldwide, and startup companies working on analog AI accelerators all trace their intellectual lineage to Mead’s 1989 work. The energy efficiency advantages of neuromorphic approaches — potentially 100 to 1,000 times better than conventional digital processors for certain AI tasks — make this work more relevant than ever.
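The end of Dennard scaling can be seen in a few lines of arithmetic. Robert Dennard and colleagues published first-order constant-field scaling rules in 1974. Under those rules, shrinking all dimensions and the supply voltage by a factor k keeps power density constant. The Python sketch below uses those first-order relations. It then holds the voltage fixed while still, unrealistically, raising the clock by k, which shows why power density climbs once voltage stops scaling.

```python
def scaled_metrics(k, scale_voltage=True):
    """Relative metrics after shrinking every linear dimension by a factor 1/k.

    Ideal constant-field (Dennard) scaling also divides the supply voltage
    by k. With scale_voltage=False the voltage is held fixed, a simplified
    stand-in for the post-Dennard era; this sketch still assumes the clock
    rises by k, which real chips could not sustain either.
    """
    voltage = 1 / k if scale_voltage else 1.0
    capacitance = 1 / k   # C ~ area / oxide thickness ~ (1/k^2) / (1/k)
    frequency = k         # gate delay ~ 1/k, so achievable clock ~ k
    area = 1 / k ** 2     # footprint per transistor
    power = capacitance * voltage ** 2 * frequency  # dynamic power ~ C V^2 f
    return {"area": area, "power_per_device": power, "power_density": power / area}

for label, flag in (("Dennard (V scales)", True), ("V held fixed", False)):
    m = scaled_metrics(2.0, scale_voltage=flag)
    print(f"{label:20s} area x{m['area']:.2f}, "
          f"power/device x{m['power_per_device']:.2f}, "
          f"power density x{m['power_density']:.2f}")
```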

The companies Mead helped create have left their own marks on the industry. He co-founded Synaptics with Federico Faggin in 1986 to develop neural-network chips for pattern recognition. The company later found its biggest market in touchpads and became the dominant supplier of laptop touchpads; if you have used a laptop in the past two decades, you have likely used Synaptics hardware. Impinj, which develops RFID technology, went public in 2016 and is a leader in Internet of Things infrastructure. Foveon’s stacked color sensor, though niche, showed that color could be separated by depth in silicon rather than by filters. Silicon Compilers helped pioneer the electronic design automation (EDA) industry that is now essential to all chip development.

Mead’s honors reflect the breadth of his contributions. He received the National Medal of Technology and Innovation in 2002, the IEEE John von Neumann Medal, the Lemelson-MIT Prize, and numerous other awards. He is a member of the National Academy of Sciences, the National Academy of Engineering, and the American Academy of Arts and Sciences. He holds over 100 patents across semiconductor design, neural systems, and imaging technology.

Perhaps most importantly, Mead trained generations of students at Caltech who went on to lead research groups and companies across the semiconductor and computing industries. His approach — rigorous physics, bold interdisciplinary thinking, insistence on building real systems — became a template for how breakthrough engineering research should be done. In an era when computing faces fundamental challenges of power consumption, architectural bottlenecks, and the limits of digital scaling, the intellectual tools and physical insights Carver Mead developed are more valuable than they have ever been.

Key Facts

- Full name: Carver Andress Mead

- Born: May 1, 1934, Bakersfield, California, USA

- Education: B.S. (1956), M.S. (1957), Ph.D. (1960) in Electrical Engineering, California Institute of Technology

- Position: Gordon and Betty Moore Professor of Engineering and Applied Science (Emeritus), Caltech

- Key publications: “Introduction to VLSI Systems” (1980, with Lynn Conway), “Analog VLSI and Neural Systems” (1989), “Collective Electrodynamics” (2000)

- Founded/co-founded: Foveon, Inc. (image sensors), Synaptics (touchpad technology), Impinj (RFID), Silicon Compilers

- Awards: National Medal of Technology and Innovation (2002), IEEE John von Neumann Medal, Lemelson-MIT Prize, Phil Kaufman Award

- Patents: Over 100 across semiconductor design, neural systems, and imaging

- Coined terms: “Moore’s Law” (popularized and named the concept), “neuromorphic engineering”

- Key legacy: Made VLSI chip design teachable and scalable; pioneered brain-inspired computing hardware

FAQ

What is VLSI design and why was Carver Mead’s contribution so important?

VLSI stands for Very Large Scale Integration: the process of creating integrated circuits by combining thousands to billions of transistors on a single chip. Before Mead and Lynn Conway published their 1980 textbook, chip design was an artisanal craft practiced by a small number of specialists at semiconductor companies. Their contribution was a systematic, teachable methodology with abstracted design rules (the lambda-based approach) that separated the act of designing a chip from the specifics of any particular fabrication process. This democratized chip design, enabled university-level teaching of IC design, and helped make possible the fabless semiconductor business model that dominates the industry today. Companies like Qualcomm, Nvidia, and Apple’s chip division build on the design-fabrication separation Mead and Conway helped establish.

What is neuromorphic computing and how does it differ from traditional AI?

Neuromorphic computing is an approach to building computer hardware that physically mimics the structure and operation of biological neural systems such as the brain and retina. Traditional AI runs neural network algorithms on conventional digital processors (CPUs and GPUs), which simulate neurons and synapses with numerical arithmetic. Neuromorphic chips, by contrast, use circuits that inherently behave like neurons. They integrate input signals over time, fire when a threshold is reached, and communicate through discrete spikes rather than dense streams of numbers. Mead’s original designs were analog; many newer chips, such as Intel’s Loihi, implement the same ideas digitally. The potential advantage is energy efficiency: a human brain runs on about 20 watts while performing tasks that demand far more power on conventional AI hardware. Mead pioneered this field in the late 1980s by recognizing that subthreshold transistor physics naturally mimics the behavior of biological ion channels.

How did Carver Mead influence Moore’s Law?

Carver Mead had a unique dual role in the history of Moore’s Law. First, he is widely credited with coining the term “Moore’s Law” to describe Gordon Moore’s 1965 observation that transistor counts on integrated circuits were doubling at regular intervals. Moore himself acknowledged Mead’s role in naming and popularizing the concept. Second, and more substantively, Mead’s VLSI design methodology was one of the key enablers that allowed Moore’s Law to continue for decades. By creating scalable design rules and democratizing chip design, Mead helped ensure that the semiconductor industry could actually exploit the growing transistor budgets that Moore’s Law predicted. Without scalable design methodologies, the exponential growth in chip complexity would have been far harder to put to use.

What companies did Carver Mead help create?

Mead was involved in founding or co-founding several technology companies that commercialized his research. Synaptics, founded in 1986, began by developing neural-network chips and became known for its touchpad technology; the touchpad on your laptop likely descends from Synaptics’ work. Foveon, co-founded in 1997, developed a three-layer image sensor technology and was later acquired by Sigma Corporation. Silicon Compilers, founded in the early 1980s, built tools for automated chip design. Impinj, which went public in 2016, develops RFID technology. In addition, Mead’s work on VLSI design methodology indirectly enabled the fabless semiconductor industry. That sector includes companies like Qualcomm, Nvidia, Broadcom, AMD, and Apple’s chip design division, which are collectively worth trillions of dollars.