In the early 1970s, computer science faced an existential crisis. Programs were becoming more complex, yet there was no rigorous mathematical foundation for reasoning about what they actually meant. Languages like LISP and ALGOL allowed programmers to write recursive functions and self-referential structures, but mathematicians could not explain why these constructs worked — or prove that they were correct. Enter Dana Stewart Scott, a logician whose breathtaking insight was to build an entirely new branch of mathematics — domain theory — that finally gave programs a solid semantic foundation. His work did not just solve a theoretical puzzle; it reshaped how we understand computation itself, from type systems in modern functional languages to the formal verification tools that safeguard today’s critical software infrastructure.

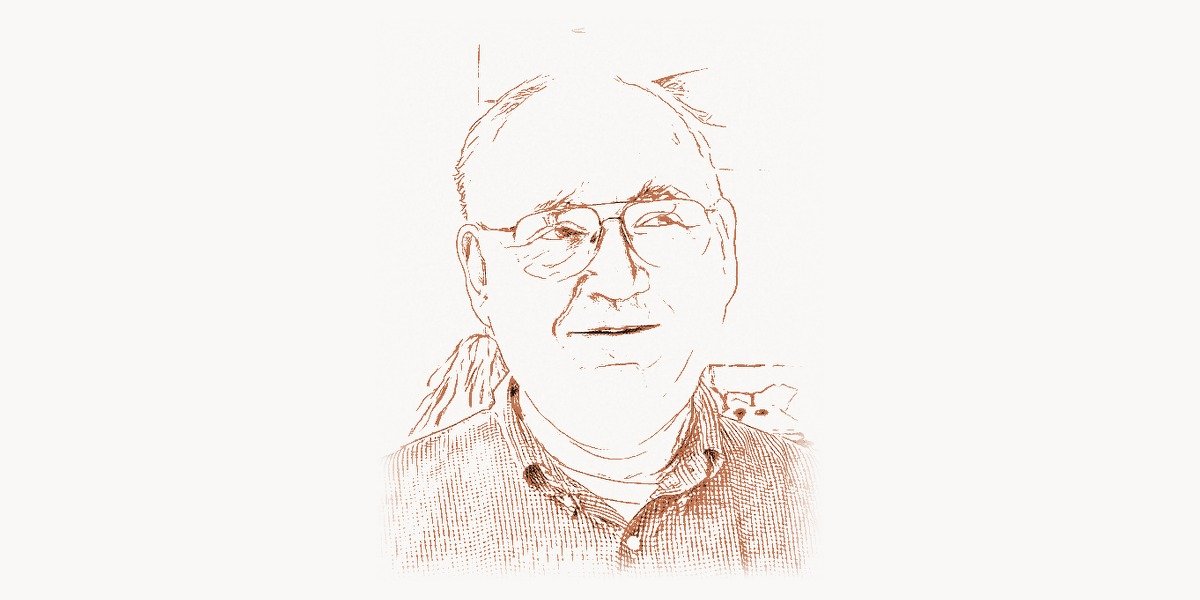

Early Life and the Making of a Mathematical Mind

Dana Stewart Scott was born on October 11, 1932, in Berkeley, California. Growing up in a university town steeped in intellectual culture, Scott showed early promise in mathematics and logic. He enrolled at the University of California, Berkeley, where he earned his bachelor’s degree in 1954. It was at Berkeley that Scott first encountered the formal logic tradition that would shape his entire career — the legacy of Alfred Tarski, one of the twentieth century’s greatest logicians.

Scott then moved to Princeton University for his doctoral work, studying under the legendary Alan Turing collaborator Alonzo Church. Church, the inventor of the lambda calculus and the man who independently arrived at the same fundamental results as Turing on computability, was the perfect mentor for a mind drawn to the deepest questions about mathematics and computation. Under Church’s supervision, Scott completed his Ph.D. in 1958 with a dissertation on convergent sequences of complete theories — a topic in mathematical logic that demonstrated his ability to navigate the most abstract terrain of formal reasoning.

What made Scott exceptional even among Princeton’s elite was his ability to move fluidly between pure logic, algebra, and topology. He did not see these as separate disciplines but as different lenses for examining the same underlying structures. This cross-disciplinary vision would prove essential when he later tackled the problem of giving meaning to computer programs.

The Turing Award and Nondeterministic Automata Theory

In 1976, Dana Scott shared the ACM Turing Award with Michael O. Rabin for their joint paper “Finite Automata and Their Decision Problems,” published in 1959. This work, written when both men were barely in their mid-twenties, fundamentally reshaped theoretical computer science.

The key innovation was the concept of nondeterministic finite automata (NFA). Before Scott and Rabin, automata theory dealt exclusively with deterministic machines — devices that, given a current state and an input symbol, transitioned to exactly one next state. Scott and Rabin showed that allowing a machine to be in multiple states simultaneously — or equivalently, to “guess” the right transition — did not increase the computational power of finite automata. Every nondeterministic finite automaton could be converted into an equivalent deterministic one, though potentially at an exponential blowup in the number of states.

This result was far more than a curiosity. It established a foundational paradigm: nondeterminism as a conceptual tool that simplifies descriptions without changing fundamental capabilities. The subset construction they introduced — converting an NFA to a DFA by treating sets of NFA states as single DFA states — remains one of the most elegant and widely taught algorithms in computer science. Every student who has taken a course in formal languages or compiler design has encountered this technique.

Here is a simplified illustration of the subset construction, showing how an NFA’s state transitions map to a corresponding DFA:

# Subset construction: NFA → DFA conversion

# Demonstrates the Scott-Rabin powerset construction

def nfa_to_dfa(nfa_transitions, nfa_start, nfa_accept, alphabet):

"""

Convert NFA to DFA via subset construction.

nfa_transitions: dict mapping (state, symbol) -> set of states

"""

from collections import deque

dfa_start = frozenset([nfa_start])

dfa_transitions = {}

dfa_accept = set()

visited = set()

queue = deque([dfa_start])

while queue:

current = queue.popleft()

if current in visited:

continue

visited.add(current)

# A DFA state is accepting if it contains any NFA accept state

if current & nfa_accept:

dfa_accept.add(current)

for symbol in alphabet:

# Compute the union of all NFA transitions

next_states = frozenset(

s for nfa_state in current

for s in nfa_transitions.get((nfa_state, symbol), set())

)

if next_states:

dfa_transitions[(current, symbol)] = next_states

if next_states not in visited:

queue.append(next_states)

return dfa_transitions, dfa_start, dfa_accept

# Example: NFA that accepts strings ending in "ab"

nfa_trans = {

('q0', 'a'): {'q0', 'q1'},

('q0', 'b'): {'q0'},

('q1', 'b'): {'q2'},

}

nfa_start = 'q0'

nfa_accept = frozenset(['q2'])

alphabet = {'a', 'b'}

dfa_trans, dfa_start, dfa_acc = nfa_to_dfa(

nfa_trans, nfa_start, nfa_accept, alphabet

)

print(f"DFA start: {set(dfa_start)}")

print(f"DFA accepting states: {[set(s) for s in dfa_acc]}")

for (state, sym), target in sorted(dfa_trans.items(), key=str):

print(f" {set(state)} --{sym}--> {set(target)}")The paper also introduced the concept of nondeterministic Turing machines — a formalization that would later become central to complexity theory and the famous P vs NP problem. When Stephen Cook defined the class NP in 1971, he built directly on the framework Scott and Rabin had established. The very “N” in NP stands for nondeterministic, a tribute to the conceptual apparatus these two young mathematicians created.

Domain Theory: Giving Meaning to Computation

If the work with Rabin established Scott’s reputation, domain theory cemented his legacy as one of the most profound thinkers in the history of computer science. The problem Scott tackled in the late 1960s was deceptively simple to state: how do you assign mathematical meaning to a computer program?

Consider a recursive function definition. In a language like LISP — the brainchild of John McCarthy — you could write a function that calls itself. But from a mathematical standpoint, this was deeply problematic. A function is supposed to be a well-defined mapping from inputs to outputs. A recursive definition is circular: the function is defined in terms of itself. How can you guarantee such a thing even exists?

The solution came to Scott during a pivotal period in the late 1960s and early 1970s. Working alongside Christopher Strachey at Oxford University, Scott developed denotational semantics — a framework that assigns a mathematical object (a “denotation”) to every construct in a programming language. The key insight was that programs should be interpreted as continuous functions on specially structured mathematical spaces called domains.

The Lattice-Theoretic Foundation

A Scott domain (more precisely, a continuous lattice or directed-complete partial order, or dcpo) is a partially ordered set with certain completeness properties. The ordering represents “information content” — one element is below another if the second contains at least as much information as the first. At the bottom of every domain sits a special element ⊥ (pronounced “bottom”), representing complete absence of information — the undefined or non-terminating computation.

The brilliance of this approach is that recursive definitions become fixed-point equations. A recursive function definition like f(x) = if x == 0 then 1 else x * f(x-1) is interpreted as: find a function f that is a fixed point of a certain operator. Scott’s fixed-point theorem guarantees that such a fixed point always exists and can be obtained as the limit of an ascending chain of approximations, starting from ⊥.

This is not merely abstract elegance. It provides a rigorous basis for proving that programs are correct, that compilers preserve program meaning, and that program transformations (optimizations) do not change behavior. The entire field of formal verification — from tools used to verify microprocessor designs to those that check safety-critical software in aviation and medicine — traces its mathematical foundations back to Scott’s domains.

Scott Continuity and the Lambda Calculus

One of Scott’s most celebrated achievements was providing the first mathematical model of the untyped lambda calculus. Church’s lambda calculus, the theoretical foundation behind functional programming, required a space D such that the function space D → D could be embedded back into D. In conventional set theory, this is impossible for any non-trivial set (by cardinality arguments). Scott’s ingenious solution was to work with continuous lattices, where “function” means “Scott-continuous function” — a function that preserves directed suprema. In this setting, the space of continuous functions from D to D can indeed be represented within D itself.

The resulting model, known as D∞ (D-infinity), was constructed as an inverse limit of an ascending sequence of domains. This construction showed that the lambda calculus — and by extension, functional programming languages — rests on solid mathematical ground. Here is a conceptual sketch of how fixed-point semantics works in practice:

-- Denotational semantics: fixed-point construction for recursive functions

-- Demonstrates Scott's least fixed-point theorem (Kleene chain)

-- The "bottom" element represents non-termination

data Lifted a = Bottom | Value a deriving (Show, Eq)

-- A functional that represents the recursive definition of factorial:

-- fact(n) = if n == 0 then 1 else n * fact(n-1)

-- We abstract over the recursive call as a parameter 'f'

factFunctional :: (Integer -> Lifted Integer) -> (Integer -> Lifted Integer)

factFunctional f n

| n < 0 = Bottom -- undefined for negative inputs

| n == 0 = Value 1 -- base case

| otherwise = case f (n - 1) of

Bottom -> Bottom -- if recursive call diverges, so do we

Value r -> Value (n * r) -- otherwise, multiply

-- Compute the least fixed point by iterating from ⊥

-- Each iteration "unfolds" one more level of recursion

-- ⊥, F(⊥), F(F(⊥)), F(F(F(⊥))), ... converges to the fixed point

lfp :: ((Integer -> Lifted Integer) -> (Integer -> Lifted Integer))

-> Integer

-> Lifted Integer

lfp functional = limit

where

-- The bottom function: undefined everywhere

bot _ = Bottom

-- Generate the Kleene chain: [⊥, F(⊥), F²(⊥), ...]

chain = iterate functional bot

-- The limit: try successive approximations until one gives a value

limit n = head [ r | approx <- chain, let r = approx n, r /= Bottom ]

-- Usage: lfp factFunctional computes factorial via Scott's construction

-- lfp factFunctional 5 => Value 120

-- At iteration k, the approximation handles inputs 0..k-1

-- The chain converges pointwise to the true factorial functionThis fixed-point approach became the standard technique in denotational semantics. It influenced the design of languages like Haskell, where lazy evaluation is deeply connected to the idea of computing with partial information and converging toward complete results — precisely the operational manifestation of Scott’s domain-theoretic framework.

Collaboration with Christopher Strachey

The partnership between Dana Scott and Christopher Strachey at Oxford represents one of the most fruitful collaborations in the history of programming language theory. Strachey, a brilliant and eccentric British computer scientist, had been working on making programming languages more rigorous. He had deep intuitions about language design but lacked the mathematical machinery to formalize them.

Scott provided exactly that machinery. Together, they developed the Scott-Strachey approach to denotational semantics, first outlined in their 1971 technical monograph “Toward a Mathematical Semantics for Computer Languages.” Their framework gave precise mathematical meaning to features that had previously resisted formalization: assignment statements, goto statements, exception handling, and concurrency primitives.

The influence of this collaboration extends far beyond academia. Modern type systems — from the Hindley-Milner type inference used in ML (created by Robin Milner) and Haskell to the sophisticated dependent types in Agda and Idris — all rest on semantic foundations that trace back to Scott and Strachey’s work. When a compiler checks that your types are consistent, it is implicitly relying on mathematical structures that Scott helped define.

Contributions to Logic and Set Theory

Scott’s contributions extend well beyond computer science into pure mathematics and logic. His work on Boolean-valued models of set theory provided an alternative approach to Paul Cohen’s forcing technique for proving the independence of the continuum hypothesis. While Cohen’s method used generic filters, Scott showed that the same results could be obtained using Boolean-valued models — a perspective that many logicians find more intuitive and algebraically natural.

Scott also made fundamental contributions to modal logic, developing the concept of canonical models and proving completeness theorems that remain central to the field. His work on the topological interpretation of modal logic connected this branch of philosophy and mathematics to the same lattice-theoretic structures that underlie domain theory, revealing deep unities across seemingly disparate fields.

In set theory, Scott proved that measurable cardinals cannot exist in Gödel’s constructible universe L — a result that established a fundamental boundary between different conceptions of the set-theoretic universe. This theorem showed that large cardinal axioms genuinely expand the mathematical universe beyond what can be constructed, a philosophical insight with implications that mathematicians continue to explore.

Influence on Modern Programming Languages

The practical impact of Scott’s theoretical work is visible across the entire landscape of modern programming. Every functional programming language — from Haskell and OCaml to Scala and F# — embodies ideas that Scott helped formalize. The concept of lazy evaluation in Haskell, where expressions are only computed when their values are needed, is a direct computational realization of Scott’s continuous functions on domains.

Type theory, which has become central to modern language design, was profoundly shaped by Scott’s semantic insights. When Anders Hejlsberg designed TypeScript’s type system or when the Rust language (conceived by Graydon Hoare) uses its ownership model to guarantee memory safety, the underlying type-theoretic frameworks owe a substantial debt to the semantic foundations Scott established.

Program verification and formal methods — fields that have become increasingly important as software controls everything from medical devices to autonomous vehicles — are built on denotational semantics. Tools like Coq, Isabelle, and Lean use type theories and logical frameworks that descend from Scott’s work. When engineers at companies like Amazon and Microsoft use formal methods to verify their cloud infrastructure, they are applying, at several removes, the mathematical structures Dana Scott created.

Even in practical software project management, the principles of formal specification and rigorous reasoning that Scott championed have indirect effects. Modern teams using Taskee to manage complex development workflows benefit from decades of research into how software systems can be specified, verified, and reliably built — a research tradition that Scott’s semantic foundations made possible.

Academic Career and Mentorship

Scott held positions at some of the world’s most prestigious institutions. After Princeton, he worked at the University of Chicago, Stanford University, and the University of Amsterdam before settling at Carnegie Mellon University, where he spent the bulk of his later career as a University Professor of Computer Science, Philosophy, and Mathematical Logic — a triple appointment that reflected the remarkable breadth of his contributions.

At Oxford in the early 1970s, Scott was instrumental in establishing the Programming Research Group as a world center for programming language theory. His time at Oxford not only produced the foundational work on denotational semantics but also attracted and inspired a generation of researchers who went on to shape the field. The British tradition of programming language semantics — which includes luminaries like Tony Hoare, Robin Milner, and Peter Landin — was profoundly enriched by Scott’s presence.

At Carnegie Mellon, Scott continued to bridge logic, mathematics, and computer science. He mentored doctoral students who themselves became leaders in formal methods, type theory, and programming language research. His approach — always seeking the deepest mathematical structure beneath practical problems — set a standard for theoretical computer science that endures today.

Honors and Recognition

Beyond the Turing Award, Scott received virtually every major honor available to a mathematician and computer scientist. He was elected to the National Academy of Sciences, the American Academy of Arts and Sciences, and the British Academy. He received the LeRoy P. Steele Prize from the American Mathematical Society and numerous honorary doctorates from universities around the world.

In 2012, Scott was awarded the Rolf Schock Prize in Logic and Philosophy — one of the most prestigious awards in the field — recognizing the breadth and depth of his contributions to logic, from domain theory to modal logic to set theory. The award citation highlighted his unique ability to create mathematical frameworks that illuminate both philosophical questions and practical computational problems.

The Living Legacy of Domain Theory

Domain theory, Scott’s most enduring creation, has evolved far beyond its original purpose. While it was initially developed to provide semantics for programming languages, it has found applications across mathematics, physics, and information science. In theoretical computer science, domain theory underpins the study of abstract interpretation — the technique used in static analysis tools that detect bugs in software without running it.

The connections between domain theory and topology have led to productive interactions between computer science and pure mathematics. The Scott topology on a domain — where the open sets are precisely the upward-closed, inaccessible-by-directed-joins sets — provides a topological perspective on computation that has inspired work in constructive mathematics and computable analysis.

Modern research continues to extend Scott’s ideas. Probabilistic programming, quantum computing semantics, and concurrent process calculi all use domain-theoretic techniques or their descendants. When researchers seek to give rigorous meaning to new computational paradigms, they invariably begin with the conceptual toolkit that Scott forged.

For development teams building complex software systems, the practical downstream effects of Scott’s work are everywhere. Organizations using Toimi to coordinate digital projects operate in an ecosystem where compilers, type checkers, and verification tools all function reliably because of the mathematical foundations Scott established decades ago.

Scott’s Philosophy of Mathematics and Computation

What distinguishes Scott from many theoretical computer scientists is his deep engagement with philosophical questions. His work on the foundations of mathematics — particularly his Boolean-valued models and his contributions to the debate over constructive versus classical mathematics — reflects a thinker who cares not just about what we can prove but about what proof means.

Scott has argued that computer science needs to take its mathematical foundations more seriously, that the gap between theory and practice is not a sign that theory is irrelevant but that it has not yet been made accessible enough. This conviction drove his work on domain theory: he wanted to create mathematical structures that were not just correct in the abstract but genuinely useful for reasoning about real programs.

This philosophy — that deep theory and practical utility are not enemies but allies — is perhaps Scott’s most important lesson for the field. In an era where software increasingly controls critical infrastructure, the rigorous foundations he built are more relevant than ever. The formal methods community, the programming language design community, and the verification community all stand on ground that Dana Scott helped prepare.

His influence can be traced through the work of Edsger Dijkstra, who shared Scott’s insistence on mathematical rigor in programming, and Donald Knuth, whose monumental analysis of algorithms complemented Scott’s semantic approach to understanding programs. Together, these pioneers built the theoretical infrastructure that makes modern software engineering possible.

Frequently Asked Questions

What did Dana Scott win the Turing Award for?

Dana Scott shared the 1976 ACM Turing Award with Michael O. Rabin for their joint paper “Finite Automata and Their Decision Problems” (1959). The paper introduced nondeterministic finite automata and proved that they are equivalent in power to deterministic finite automata via the subset construction. This work laid conceptual foundations for complexity theory and the P vs NP problem.

What is domain theory and why does it matter?

Domain theory is a branch of mathematics developed by Dana Scott in the late 1960s and early 1970s to provide rigorous mathematical semantics for programming languages. A domain is a partially ordered set with completeness properties that can model computational processes, including recursion and non-termination. It matters because it provides the theoretical foundation for type systems, program verification, compiler correctness proofs, and the design of functional programming languages like Haskell.

How does Scott’s work relate to modern programming languages?

Scott’s denotational semantics and domain theory directly influenced the design of functional programming languages, type systems, and formal verification tools. Lazy evaluation in Haskell, type inference in ML and its descendants, and the mathematical models underlying program correctness all trace back to Scott’s contributions. Modern type checkers and static analysis tools rely on fixed-point computations that Scott’s theorems guarantee to be well-defined.

What is the difference between denotational and operational semantics?

Operational semantics describes program meaning by specifying how a program executes step by step — essentially defining an abstract machine. Denotational semantics, developed by Scott and Strachey, assigns a mathematical object (like a function on a domain) to each program construct, defining meaning compositionally without reference to execution. The two approaches are complementary: operational semantics is closer to implementation, while denotational semantics supports mathematical reasoning and proof. Establishing the equivalence between the two is a key goal in programming language theory.

What is the significance of Scott’s D∞ model?

The D∞ model was the first mathematical model of the untyped lambda calculus, Alonzo Church’s foundational formalism for computation. The challenge was to find a mathematical space D where the function space D → D embeds into D — impossible with ordinary sets due to cardinality constraints. Scott solved this by constructing D∞ as an inverse limit of continuous lattices, where only Scott-continuous functions are considered. This proved that the lambda calculus and functional programming rest on consistent mathematical foundations, resolving a decades-old open question in the theory of computation.