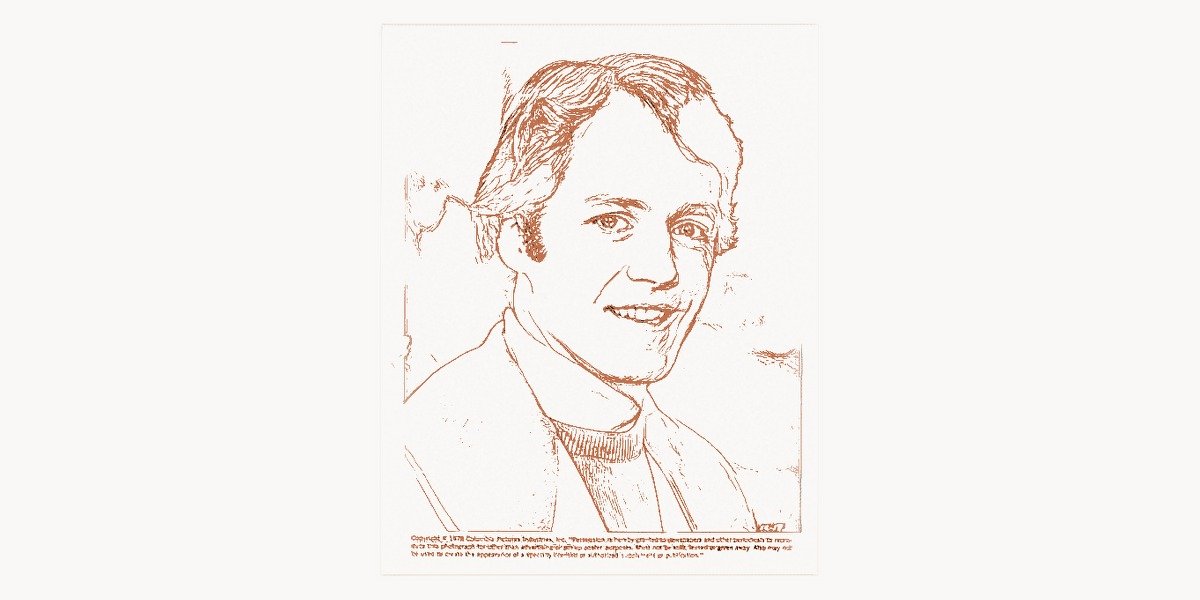

In 1951, a graduate student at MIT faced a choice: write a term paper for Robert Fano’s information theory class or take the final exam. David Albert Huffman chose the paper — and in doing so, invented an algorithm that would become one of the most widely used encoding methods in all of computer science. Every JPEG image you view, every MP3 file you play, every ZIP archive you decompress owes a quiet debt to the elegant binary trees that Huffman sketched out in his MIT dormitory more than seven decades ago. His prefix-free coding scheme did not merely solve an academic problem; it fundamentally reshaped how humanity stores and transmits digital information.

Early Life and the Road to MIT

David Albert Huffman was born on August 9, 1925, in Alliance, Ohio. From an early age, he displayed the kind of restless intellectual curiosity that would later define his career. He earned his bachelor’s degree in electrical engineering from Ohio State University at the age of eighteen in 1944, a pace that reflected both the wartime acceleration of academic programs and Huffman’s own exceptional aptitude. After serving in the U.S. Navy as a radar maintenance officer during World War II and the subsequent occupation of Japan, Huffman returned to academic life with a deepened understanding of signal processing and electronic systems.

He completed his master’s degree at Ohio State before moving to the Massachusetts Institute of Technology for doctoral studies. It was at MIT, under the mentorship of some of the era’s greatest minds in information theory and electrical engineering, that Huffman would make his defining contribution. The intellectual atmosphere at MIT in the early 1950s was electric — Claude Shannon had recently published his groundbreaking paper on the mathematical theory of communication, and the entire field was buzzing with new possibilities for encoding, transmitting, and compressing data.

The Famous Term Paper That Changed Computing

The story of Huffman coding’s invention is one of the most celebrated anecdotes in computer science history. In the fall of 1951, Huffman was enrolled in an information theory course taught by Professor Robert Fano. Fano gave his students a choice: they could either take the final exam or write a term paper on the problem of finding the most efficient binary code for a given set of symbol frequencies. What Fano did not tell his students was that he himself had been working on this very problem alongside Claude Shannon, and neither of them had been able to prove that their approach — known as Shannon-Fano coding — was truly optimal.

Huffman struggled with the problem for months, filling pages with attempts and discarding them. He was on the verge of giving up and taking the final exam instead when, according to his own recollection, inspiration struck while he was about to throw his notes in the trash. He realized that by building a binary tree from the bottom up — starting with the least frequent symbols and merging them step by step — he could construct a provably optimal prefix-free code. This was the inverse of the top-down approach that Shannon and Fano had been using, and it was precisely this reversal of perspective that yielded the proof of optimality.

The resulting paper, “A Method for the Construction of Minimum-Redundancy Codes,” was published in the Proceedings of the Institute of Radio Engineers in September 1952. It was concise, elegant, and revolutionary. At just a few pages long, it presented an algorithm that a graduate student had derived for a class assignment — and it surpassed the work of two of the most brilliant minds in information theory. The irony was not lost on anyone, least of all Fano, who graciously acknowledged the superiority of Huffman’s approach. This kind of foundational algorithmic work parallels the contributions of Edsger Dijkstra, whose own graph algorithms emerged from similarly elegant reasoning about optimal structures.

How Huffman Coding Works

At its core, Huffman coding is a variable-length encoding scheme that assigns shorter binary codes to more frequently occurring symbols and longer codes to less frequent ones. The key insight is that the code must be prefix-free — no codeword can be a prefix of another codeword. This property ensures that encoded messages can be decoded unambiguously without any delimiters between symbols.

The Greedy Algorithm

The Huffman algorithm is a classic example of a greedy strategy, one of the foundational paradigms covered extensively in Donald Knuth’s monumental work, The Art of Computer Programming. The steps are deceptively simple:

- Count the frequency of each symbol in the input data.

- Create a leaf node for each symbol and add it to a priority queue (min-heap) ordered by frequency.

- While there is more than one node in the queue: remove the two nodes with the lowest frequency, create a new internal node with these two as children and a frequency equal to their sum, then add this new node back to the queue.

- The remaining node is the root of the Huffman tree. Assign binary codes by traversing the tree: every left branch is a 0, every right branch is a 1.

The result is a binary tree where the most common symbols live closest to the root (short codes) and the rarest symbols are pushed to the leaves farthest from the root (long codes). This produces a mathematically provable minimum-redundancy code for any given frequency distribution.

Implementation in Python

The following implementation demonstrates the complete Huffman coding pipeline — building the tree from a frequency table and generating the prefix-free codebook:

import heapq

from collections import Counter

class HuffmanNode:

def __init__(self, char=None, freq=0, left=None, right=None):

self.char = char

self.freq = freq

self.left = left

self.right = right

def __lt__(self, other):

return self.freq < other.freq

def build_huffman_tree(text):

"""Build a Huffman tree from input text and return the root node."""

frequency = Counter(text)

# Create a min-heap of leaf nodes

heap = [HuffmanNode(char=ch, freq=f) for ch, f in frequency.items()]

heapq.heapify(heap)

# Edge case: single unique character

if len(heap) == 1:

node = heapq.heappop(heap)

root = HuffmanNode(freq=node.freq, left=node)

return root

# Merge the two lowest-frequency nodes until one tree remains

while len(heap) > 1:

left = heapq.heappop(heap)

right = heapq.heappop(heap)

merged = HuffmanNode(

freq=left.freq + right.freq,

left=left,

right=right

)

heapq.heappush(heap, merged)

return heap[0]

def generate_codes(node, prefix="", codebook=None):

"""Traverse the Huffman tree to build the codebook."""

if codebook is None:

codebook = {}

if node is None:

return codebook

# Leaf node — assign the accumulated prefix as its code

if node.char is not None:

codebook[node.char] = prefix if prefix else "0"

return codebook

generate_codes(node.left, prefix + "0", codebook)

generate_codes(node.right, prefix + "1", codebook)

return codebook

def huffman_encode(text):

"""Encode text using Huffman coding. Return encoded string and codebook."""

root = build_huffman_tree(text)

codebook = generate_codes(root)

encoded = "".join(codebook[ch] for ch in text)

return encoded, codebook

# --- Example usage ---

sample = "huffman coding is elegant and efficient"

encoded_bits, codes = huffman_encode(sample)

print("Symbol | Frequency | Huffman Code")

print("-" * 40)

for char, code in sorted(codes.items(), key=lambda x: len(x[1])):

freq = sample.count(char)

display = repr(char)

print(f" {display:6s} | {freq:9d} | {code}")

print(f"\nOriginal size: {len(sample) * 8} bits (ASCII)")

print(f"Encoded size: {len(encoded_bits)} bits")

print(f"Compression: {(1 - len(encoded_bits)/(len(sample)*8))*100:.1f}%")Running this code reveals the essential beauty of Huffman coding: common characters like spaces and the letter ‘e’ receive short codes (perhaps 3-4 bits), while rare characters receive longer codes. The overall encoded message is significantly shorter than the fixed-width ASCII representation.

Prefix-Free Property and Decoding

The prefix-free property is what makes Huffman codes uniquely decodable. Because no codeword is a prefix of any other, you can decode a stream of bits by walking the Huffman tree from root to leaf. The moment you reach a leaf, you output that symbol and start again from the root. No lookahead, no backtracking, no ambiguity. This elegant property connects Huffman’s work to broader principles in formal language theory that were being explored simultaneously by pioneers like John Backus, whose work on BNF grammars addressed similar questions of unambiguous parsing.

Here is a compact decoder that demonstrates this tree-walking approach:

def huffman_decode(encoded_bits, root):

"""Decode a Huffman-encoded bit string using the Huffman tree."""

decoded_chars = []

current = root

for bit in encoded_bits:

# Walk left on '0', right on '1'

if bit == '0':

current = current.left

else:

current = current.right

# Reached a leaf — emit the character and reset to root

if current.char is not None:

decoded_chars.append(current.char)

current = root

return "".join(decoded_chars)

# --- Verify round-trip ---

sample = "huffman coding is elegant and efficient"

root = build_huffman_tree(sample)

codebook = generate_codes(root)

encoded = "".join(codebook[ch] for ch in sample)

decoded = huffman_decode(encoded, root)

assert decoded == sample, "Round-trip failed!"

print(f"Decoded: {decoded}")

print("Round-trip verification: PASSED")

# --- Demonstrate the prefix-free property ---

print("\nPrefix-free verification:")

for c1, code1 in codebook.items():

for c2, code2 in codebook.items():

if c1 != c2 and code2.startswith(code1):

print(f" VIOLATION: '{c1}' ({code1}) is prefix of '{c2}' ({code2})")

break

else:

continue

break

else:

print(" No codeword is a prefix of another — property holds.")Optimality and Theoretical Foundations

What set Huffman’s method apart from Shannon-Fano coding was not just that it produced shorter codes in practice, but that Huffman could prove his approach was optimal. Specifically, Huffman coding produces the shortest possible prefix-free code for a given set of symbol frequencies among all integer-bit-length codes. The proof proceeds by showing that in any optimal code, the two least frequent symbols must be at the maximum depth of the tree and must be siblings — exactly the condition that the Huffman algorithm enforces at every step.

It is worth noting that Huffman coding achieves optimality only within the constraint of assigning whole-bit codeword lengths. The theoretical lower bound for compression is the entropy of the source, as defined by Shannon’s famous formula H = -Σ p(x) log₂ p(x). Huffman coding can approach this bound but cannot go below it. Later techniques like arithmetic coding would break the integer-bit barrier by effectively assigning fractional bit lengths to symbols, achieving even closer approximations to the entropy limit. Nevertheless, Huffman coding remains preferred in many applications because of its simplicity, speed, and patent-free status.

Impact on Modern Technology

The practical impact of Huffman coding is almost impossible to overstate. It forms a critical component of many of the compression standards that underpin the modern digital world.

Image Compression

The JPEG image format, which dominates digital photography and web imagery, uses Huffman coding as its entropy encoding stage. After an image is transformed into frequency-domain coefficients via the discrete cosine transform, those coefficients are quantized and then Huffman-encoded to produce the final compressed file. Every time you view a photograph on the web, Huffman’s algorithm is at work behind the scenes. For teams managing large volumes of digital assets in production workflows, tools like Taskee help coordinate the complex pipelines where compressed media moves from creation through review to deployment.

File Compression

The DEFLATE algorithm, used in ZIP files, gzip, and PNG images, combines LZ77 dictionary-based compression with Huffman coding. The first stage finds repeated patterns in the data; the second stage Huffman-encodes the resulting symbols for maximum compactness. This two-stage approach, blending dictionary methods with statistical encoding, became the gold standard for lossless compression throughout the 1990s and 2000s.

Audio and Video

MP3 audio compression uses Huffman coding after its psychoacoustic model removes inaudible frequency components. The H.264 and H.265 video codecs also rely on variants of Huffman coding (specifically, context-adaptive techniques like CAVLC) for portions of their entropy coding stages. Streaming video, video calls, and digital television all benefit directly from the foundation that Huffman laid.

Network Protocols

HTTP/2, the protocol that powers the modern web, uses Huffman coding in its HPACK header compression scheme. Every HTTP header exchanged between browsers and servers is Huffman-encoded using a static table derived from real-world frequency analysis of header values. This reduces bandwidth overhead and accelerates page loads for billions of users daily.

Academic Career and Other Contributions

While Huffman coding alone would have secured his legacy, David Huffman’s academic career extended far beyond that single algorithm. After completing his Ph.D. at MIT in 1953, he joined the faculty at MIT where he taught for several years before moving to the University of California, Santa Cruz in 1967. At UCSC, he became one of the founding members of the computer science department and served as its first chairman.

Signal Analysis and Folding

Huffman made significant contributions to the study of signal design and mathematical origami. He developed methods for analyzing the properties of surfaces that can be folded from flat sheets — a problem that may seem distant from coding theory but shares deep connections to computational geometry and optimization. His work on the mathematical properties of zero-curvature surfaces anticipated developments in computational origami by decades.

Teaching and Mentorship

Colleagues and students consistently described Huffman as a dedicated and inspiring teacher. He brought the same clarity and rigor to his lectures that characterized his research. At UC Santa Cruz, he helped shape the computer science curriculum during a formative period for the discipline. His emphasis on mathematical foundations and algorithmic thinking influenced generations of students who went on to careers in academia and industry. This tradition of rigorous pedagogy echoes the approach championed by Niklaus Wirth, who similarly believed that clean algorithmic thinking was the cornerstone of computer science education.

Huffman’s Algorithm in the Broader Compression Landscape

Understanding where Huffman coding fits in the larger tapestry of data compression requires appreciating the distinction between statistical methods and dictionary-based methods. Huffman coding is the quintessential statistical approach — it examines the frequency distribution of symbols and assigns codes accordingly. Dictionary-based methods like LZ77 and LZ78, developed by Abraham Lempel and Jacob Ziv in the late 1970s, take a fundamentally different approach by replacing repeated sequences with references to earlier occurrences.

In practice, the most powerful compression systems combine both approaches. DEFLATE, as mentioned, pairs LZ77 with Huffman coding. More modern algorithms like Zstandard (zstd) and Brotli use variants of this hybrid strategy, often replacing Huffman coding with more sophisticated entropy coders like asymmetric numeral systems (ANS) or arithmetic coding for marginal gains in compression ratio. Yet the conceptual framework that Huffman established — variable-length prefix-free codes derived from symbol statistics — remains the foundation upon which all these advances are built.

The theoretical groundwork for understanding compression limits was, of course, laid by Claude Shannon in his 1948 paper. Shannon proved that there exists a fundamental limit to how much data can be compressed without loss — the entropy rate. Huffman’s contribution was to provide a practical, efficient algorithm that achieves this limit as closely as any integer-bit code can. The interplay between Shannon’s theory and Huffman’s practice became a template for the entire field of information theory: first establish the theoretical bounds, then design algorithms that approach them.

Legacy and Recognition

David Huffman received numerous honors throughout his career, including the IEEE Richard W. Hamming Medal in 1999 — an award named after another pioneer of coding theory. He was a fellow of the IEEE and received the Louis E. Levy Medal of the Franklin Institute. Despite the enormous practical significance of his coding algorithm, Huffman never patented it, allowing it to be freely used in countless applications. This decision, whether deliberate or simply a reflection of the academic culture of the time, contributed enormously to the algorithm’s ubiquity.

Huffman passed away on October 7, 1999, at the age of 74 in Santa Cruz, California. By that time, his algorithm had been embedded into standards and systems used by billions of people. The compression of the modern digital world — from the images on your screen to the files in your cloud storage — rests in no small part on the work of a graduate student who chose a term paper over a final exam.

In the landscape of algorithmic pioneers, Huffman occupies a unique position. Like Alan Turing, who defined the theoretical foundations of computation, Huffman provided a fundamental building block that transcended any single application. His algorithm is taught in every computer science curriculum, implemented in every major programming language, and executed trillions of times daily across the global digital infrastructure. For organizations navigating complex technology landscapes, understanding these foundational innovations provides critical context — the kind of strategic technological insight that agencies like Toimi bring to their digital consulting engagements.

Frequently Asked Questions

What is Huffman coding and why is it important?

Huffman coding is a lossless data compression algorithm that assigns variable-length binary codes to symbols based on their frequency of occurrence. More frequent symbols receive shorter codes, and less frequent symbols receive longer codes. It is important because it produces the mathematically optimal prefix-free code for any given frequency distribution, making it a fundamental building block in formats like JPEG, MP3, ZIP, PNG, and modern web protocols like HTTP/2. Virtually every digital device in use today relies on Huffman coding in some form.

How does Huffman coding differ from Shannon-Fano coding?

Both Huffman coding and Shannon-Fano coding are variable-length prefix-free coding methods, but they differ in their construction approach. Shannon-Fano coding builds the code tree from the top down by repeatedly dividing the symbol set into two groups of roughly equal total probability. Huffman coding builds the tree from the bottom up by repeatedly merging the two least frequent symbols. This bottom-up approach allows Huffman coding to produce provably optimal codes, whereas Shannon-Fano coding can sometimes produce suboptimal results. Huffman’s method guarantees minimum redundancy; Shannon-Fano does not.

Is Huffman coding still used in modern software and hardware?

Yes, Huffman coding remains extremely widely used. It is a core component of the JPEG image standard, the DEFLATE algorithm used in ZIP and gzip, MP3 audio compression, and the HPACK header compression in HTTP/2. Many hardware implementations in networking equipment, storage controllers, and embedded systems include dedicated Huffman encoding and decoding circuits for performance. While newer entropy coding methods like arithmetic coding and asymmetric numeral systems offer marginally better compression ratios, Huffman coding’s simplicity, speed, and freedom from patent restrictions ensure its continued relevance.

What does prefix-free mean in the context of Huffman codes?

A prefix-free code (also called a prefix code) is one in which no codeword is a prefix of any other codeword. For example, if the code for the letter ‘a’ is “01”, then no other character’s code can start with “01”. This property is essential because it allows a compressed bitstream to be decoded unambiguously by reading bits one at a time and following a path through the Huffman tree. The moment a leaf node is reached, the corresponding symbol is output and decoding restarts from the root. No separators, delimiters, or lookahead are required, making decoding both simple and fast.

What are the limitations of Huffman coding compared to arithmetic coding?

The primary limitation of Huffman coding is that it assigns whole-number bit lengths to each symbol. If the optimal code length for a symbol is, say, 2.3 bits, Huffman coding must round to either 2 or 3 bits. Arithmetic coding overcomes this limitation by effectively encoding the entire message as a single fractional number, achieving bit lengths that can be arbitrarily close to the entropy lower bound. In practice, this means arithmetic coding can compress data a few percent more efficiently than Huffman coding, particularly when symbol probabilities are heavily skewed. However, arithmetic coding is more computationally complex and was historically encumbered by patents, which is why Huffman coding remains dominant in many standards.