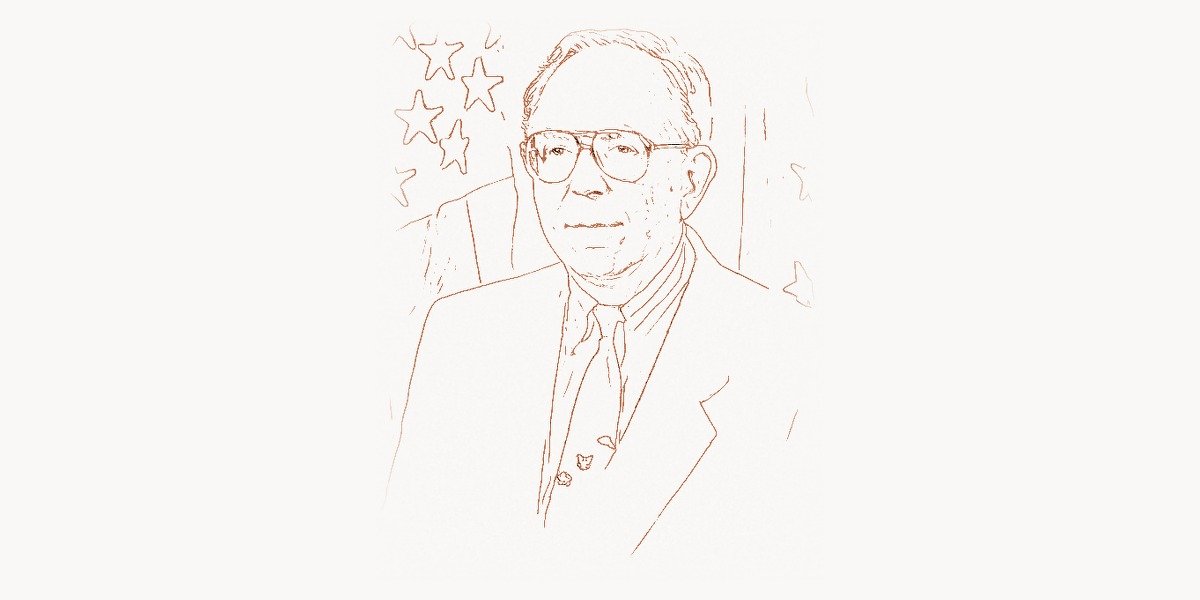

In 1965, while most computer scientists were obsessed with making machines calculate faster, Edward Feigenbaum asked a radically different question: what if computers could know things? Not just crunch numbers, but reason through problems the way a human expert would: diagnosing diseases, analyzing chemical compounds, or configuring complex systems. That question launched the field of expert systems, changed how industries from medicine to manufacturing operated, and earned Feigenbaum the nickname “Father of Expert Systems.” His work made the case that much of the practical power of artificial intelligence lies not in general-purpose reasoning, but in capturing and applying the specialized knowledge of human experts.

Early Life and Education

Edward Albert Feigenbaum was born on January 20, 1936, in Weehawken, New Jersey. His father was an immigrant baker, and the family lived modestly. From an early age, Feigenbaum showed a fascination with how things worked — a curiosity that would eventually lead him to one of the most consequential ideas in computing history.

Feigenbaum attended the Carnegie Institute of Technology (now Carnegie Mellon University), where he earned his bachelor’s degree in electrical engineering in 1956. It was during his undergraduate years that he encountered something that would reshape his entire trajectory: Herbert Simon’s pioneering work on artificial intelligence. Simon, along with Allen Newell, was building some of the first programs that could simulate aspects of human thought. Feigenbaum was captivated. He stayed at Carnegie to pursue his Ph.D. under Simon’s supervision, completing it in 1960.

His doctoral dissertation focused on EPAM (Elementary Perceiver and Memorizer), a computer model of human verbal learning. EPAM was one of the first computational models of memory and learning, simulating how people learn to associate nonsense syllables — a classic task in experimental psychology. The program built a discrimination net, a kind of decision tree that grew as it encountered new stimuli, much like the way humans develop categories through experience. EPAM was groundbreaking because it showed that a computer could model not just logical reasoning, but the messy, incremental process of human learning. This work connected Feigenbaum to the emerging field of cognitive science and planted the seeds for his later focus on knowledge representation. After all, if you could model how humans learn, why not model what they know?
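The discrimination-net idea can be illustrated with a toy sketch. This is not Feigenbaum's EPAM implementation: the class names, the single-letter tests, and the assumption that every stimulus is a string of the same length are simplifications chosen here for illustration.

```python
class Node:
    """A node in a toy discrimination net (hypothetical structure)."""

    def __init__(self, image=None):
        self.image = image      # stimulus stored at a leaf, if any
        self.position = None    # letter position tested by an internal node
        self.children = {}      # letter at that position -> child Node


class DiscriminationNet:
    """Grows a tree of letter tests as new stimuli are encountered."""

    def __init__(self):
        self.root = Node()

    def learn(self, stimulus):
        # Sort the stimulus down the existing tests, adding empty
        # branches for letters that have not been seen before.
        node = self.root
        while node.position is not None:
            letter = stimulus[node.position]
            node = node.children.setdefault(letter, Node())
        if node.image is None:
            node.image = stimulus          # empty leaf: just store it
        elif node.image != stimulus:
            # Two different stimuli reached the same leaf: grow a new
            # test at the first letter position where they differ.
            old = node.image
            pos = next(i for i, (a, b) in enumerate(zip(old, stimulus)) if a != b)
            node.image = None
            node.position = pos
            node.children[old[pos]] = Node(old)
            node.children[stimulus[pos]] = Node(stimulus)

    def recognize(self, stimulus):
        # Follow the tests without modifying the net.
        node = self.root
        while node.position is not None:
            node = node.children.get(stimulus[node.position])
            if node is None:
                return None                # no branch for this letter
        return node.image


net = DiscriminationNet()
for syllable in ["DAX", "BUP", "DAK"]:
    net.learn(syllable)

print(net.recognize("DAK"))  # DAK
print(net.recognize("BIP"))  # BUP
```

In this sketch the unfamiliar syllable "BIP" sorts to the leaf that holds "BUP", because the net has so far only needed to test the first letter to tell its stored items apart. The tree adds a test only when two stimuli collide, which is the incremental growth the paragraph above describes.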

Career and the Rise of Expert Systems

After completing his Ph.D., Feigenbaum joined the faculty at the University of California, Berkeley, before moving to Stanford University in 1965. It was at Stanford that his most transformative work began. The mid-1960s were a pivotal time for AI research. Early enthusiasm about general-purpose reasoning systems was giving way to frustration. Programs like the General Problem Solver, developed by Newell and Simon, could handle toy problems but struggled with real-world complexity. Feigenbaum had an insight that would define his career: the bottleneck was not reasoning power, but knowledge.

Technical Innovation

In 1965, Feigenbaum partnered with geneticist Joshua Lederberg and chemist Carl Djerassi to create DENDRAL, widely recognized as the first expert system. The problem DENDRAL tackled was chemical analysis: given mass spectrometry data, could a computer determine the molecular structure of an unknown compound? This was a task that required deep expertise in organic chemistry — exactly the kind of specialized knowledge that Feigenbaum believed computers could encode.

DENDRAL’s architecture was revolutionary. Instead of relying on general-purpose search algorithms, it encoded the heuristic rules that expert chemists used when analyzing spectra. These rules — things like “if you see a peak at mass X, consider bond Y” — were formalized as production rules in a knowledge base. A separate inference engine applied these rules to the input data, generating and pruning hypotheses about molecular structure.

    ;; Simplified representation of a DENDRAL-style production rule
    ;; for mass spectrometry analysis
    (defrule identify-ketone-fragment
      "If a mass spectrum shows a peak at M-28, consider a CO loss (ketone group)"
      (peak ?spectrum :mass-loss 28 :intensity high)
      (molecular-formula ?compound :contains-oxygen true)
      =>
      (assert (candidate-substructure ?compound :group ketone
                                      :confidence 0.85))
      (prune-candidates ?compound :incompatible-with
                        (no-oxygen-functional-groups)))

    ;; The inference engine would chain these rules together,
    ;; building up a structural hypothesis from spectral evidence
    ;; Each rule encodes domain expertise from chemists

The results were remarkable. DENDRAL could identify molecular structures as accurately as experienced chemists, and in some cases it discovered valid structures that human experts had overlooked. The system demonstrated that encoding domain-specific expertise into a computer program was not only possible but could match and occasionally surpass human performance on well-defined tasks.
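The generate-and-prune loop described earlier can be sketched in a few lines of Python. This is a deliberately crude toy, not DENDRAL's actual generator: the fragment table, the function names, and the idea of treating a molecule as an unordered bag of fragments (ignoring bonding and valence entirely) are assumptions made for illustration. Only the nominal fragment masses are real.

```python
from itertools import combinations_with_replacement

# Nominal masses of a few common fragments (toy vocabulary).
FRAGMENT_MASS = {"CH3": 15, "CH2": 14, "CO": 28, "OH": 17}


def generate_candidates(target_mass, max_fragments=4):
    """Enumerate every bag of fragments whose masses sum to target_mass."""
    names = sorted(FRAGMENT_MASS)
    for size in range(1, max_fragments + 1):
        for combo in combinations_with_replacement(names, size):
            if sum(FRAGMENT_MASS[name] for name in combo) == target_mass:
                yield combo


def contains_oxygen(candidate):
    """Hypothetical expert constraint: the sample is known to contain oxygen."""
    return any(name in ("CO", "OH") for name in candidate)


def generate_and_test(target_mass, constraints):
    """Keep only the generated candidates that satisfy every constraint."""
    return [c for c in generate_candidates(target_mass)
            if all(test(c) for test in constraints)]


# With no constraints, two bags of fragments reach nominal mass 58.
print(generate_and_test(58, []))
# [('CH3', 'CH3', 'CO'), ('CH2', 'CH2', 'CH3', 'CH3')]

# One piece of expert knowledge prunes the hydrocarbon candidate.
print(generate_and_test(58, [contains_oxygen]))
# [('CH3', 'CH3', 'CO')]
```

The point of the sketch is the division of labor: the generator is exhaustive and knows no chemistry, while each constraint function stands in for a heuristic an expert would supply. Adding constraints shrinks the candidate list without any change to the generator.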

Building on DENDRAL’s success, Feigenbaum’s colleagues at Stanford developed MYCIN in the early 1970s; the project was led by Edward Shortliffe under Feigenbaum’s mentorship. MYCIN diagnosed bacterial infections and recommended antibiotics. It introduced certainty factors, numeric confidence values between -1 and 1 attached to each rule, which allowed the system to reason under uncertainty, an essential capability for medical diagnosis.

    # Modern illustration of a certainty-factor inference engine
    # inspired by MYCIN's approach to uncertain reasoning

    class ExpertSystem:
        def __init__(self):
            self.facts = {}        # known evidence
            self.rules = []        # production rules
            self.hypotheses = {}   # candidate conclusions + CF

        def add_rule(self, conditions, conclusion, cf):
            """Each rule maps conditions -> conclusion with certainty factor."""
            self.rules.append({
                'if': conditions,    # list of (fact, expected_value) tuples
                'then': conclusion,
                'cf': cf             # certainty factor: -1.0 to 1.0
            })

        def combine_cf(self, cf1, cf2):
            """MYCIN's certainty-factor combination formula."""
            if cf1 > 0 and cf2 > 0:
                return cf1 + cf2 * (1 - cf1)
            elif cf1 < 0 and cf2 < 0:
                return cf1 + cf2 * (1 + cf1)
            else:
                # Mixed signs; undefined when combining +1.0 with -1.0
                return (cf1 + cf2) / (1 - min(abs(cf1), abs(cf2)))

        def infer(self):
            """Make one pass over the rules, accumulating evidence per conclusion."""
            self.hypotheses = {}  # start fresh so repeated calls do not double-count
            for rule in self.rules:
                if all(self.facts.get(f) == v for f, v in rule['if']):
                    conclusion = rule['then']
                    new_cf = rule['cf']
                    if conclusion in self.hypotheses:
                        old_cf = self.hypotheses[conclusion]
                        self.hypotheses[conclusion] = self.combine_cf(old_cf, new_cf)
                    else:
                        self.hypotheses[conclusion] = new_cf
            return self.hypotheses

MYCIN's diagnostic accuracy was tested rigorously: in a blinded evaluation, outside specialists rated its antibiotic recommendations acceptable at least as often as those of the Stanford infectious-disease faculty it was compared against. This was a landmark result: a computer program matching or besting human experts in their own domain.
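To make the certainty-factor arithmetic concrete, here is a small worked example of the combination formula used in the illustration above. The standalone function names `combine_cf` and `combine_all` and the sample values are chosen here for illustration and do not come from MYCIN itself.

```python
from functools import reduce


def combine_cf(cf1, cf2):
    """Combine two certainty factors in [-1, 1] for the same conclusion."""
    if cf1 > 0 and cf2 > 0:
        return cf1 + cf2 * (1 - cf1)      # two confirming pieces of evidence
    if cf1 < 0 and cf2 < 0:
        return cf1 + cf2 * (1 + cf1)      # two disconfirming pieces
    # Conflicting (or zero) evidence; undefined for +1.0 combined with -1.0.
    return (cf1 + cf2) / (1 - min(abs(cf1), abs(cf2)))


def combine_all(cfs):
    """Fold a sequence of certainty factors into a single value."""
    return reduce(combine_cf, cfs)


# Two supporting rules: 0.6 + 0.4 * (1 - 0.6) = 0.76
print(round(combine_cf(0.6, 0.4), 3))      # 0.76

# Conflicting rules: (0.8 - 0.3) / (1 - 0.3) = 0.714...
print(round(combine_cf(0.8, -0.3), 3))     # 0.714

# Two rules against: -0.5 + -0.5 * (1 - 0.5) = -0.75
print(round(combine_cf(-0.5, -0.5), 3))    # -0.75
```

Two supporting rules never push the combined value past 1, since each new piece of evidence only closes part of the remaining gap. For the sample values here, combining 0.6, 0.4, and -0.3 gives the same result regardless of the order in which the rules fire.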

Why It Mattered

Feigenbaum's expert systems were revolutionary for several reasons. First, they shifted AI's focus from general reasoning to knowledge engineering — the disciplined process of extracting, structuring, and encoding expert knowledge. Feigenbaum articulated what he called the Knowledge Principle: a system's intelligence comes primarily from the knowledge it possesses, not from the sophistication of its inference mechanisms. This was a direct challenge to the prevailing AI orthodoxy, which focused on ever-more-powerful search and reasoning algorithms.

Second, expert systems proved that AI could deliver practical, commercial value. By the 1980s, expert systems were being deployed across industries. Digital Equipment Corporation's XCON (also known as R1) configured VAX computer orders and saved the company an estimated $40 million per year. Medical expert systems helped diagnose diseases. Financial expert systems evaluated loan applications. The expert systems boom became the first major wave of commercial AI, generating billions of dollars in revenue and spawning an entire industry of knowledge-engineering tools and shell systems.

Third, Feigenbaum's work established fundamental ideas about knowledge representation that remain central to AI today. The separation of knowledge base from inference engine, the use of production rules, the concept of certainty factors, and the focus on domain-specific rather than general-purpose intelligence — these ideas influenced everything from business rule engines to the knowledge graphs that power modern search and recommendation systems. Even today's large language models can be understood partly through Feigenbaum's lens: they encode vast amounts of knowledge, and their performance depends on the breadth and quality of that knowledge, just as he predicted.

Other Major Contributions

Beyond DENDRAL and MYCIN, Feigenbaum's influence extended across multiple dimensions of computer science and technology policy. In the 1970s and 1980s, he played a central role in building Stanford's Knowledge Systems Laboratory (KSL) into one of the world's premier AI research centers. KSL produced a stream of foundational work on knowledge representation, ontology engineering, and automated reasoning.

Feigenbaum was also a key figure in the national debate over America's technological competitiveness. In 1983, he co-authored (with Pamela McCorduck) The Fifth Generation: Artificial Intelligence and Japan's Computer Challenge to the World, a widely read book that warned about Japan's ambitious Fifth Generation Computer Systems project. The book argued that Japan's government-funded effort to build knowledge-processing computers could give it a decisive lead in AI, and urged the United States to invest more aggressively in AI research. While the Japanese Fifth Generation project ultimately fell short of its goals, Feigenbaum's book played an important role in catalyzing increased U.S. funding for AI and sparked a broader conversation about the strategic importance of computer science research.

Feigenbaum served as Chief Scientist of the U.S. Air Force from 1994 to 1997, advising on the application of information technology and AI to defense challenges. He was an early advocate for the use of the internet in scientific collaboration and knowledge sharing, and he contributed to the development of early digital libraries and knowledge management systems.

Feigenbaum's knowledge-centered view was one strand in a broader effort to build intelligent machines. Geoffrey Hinton pursued a very different route through neural networks, and Danny Hillis built massively parallel hardware with AI workloads in mind. While pioneers like Michael Stonebraker were revolutionizing how we store data in databases, Feigenbaum was working out how to make stored knowledge support reasoning.

He also mentored generations of AI researchers, many of whom went on to build key systems and lead major research groups. His students and collaborators have shaped fields from natural language processing to robotics, and the knowledge-engineering methodology he developed became the foundation for an entire discipline.

Philosophy and Approach

Feigenbaum's approach to AI was rooted in a deeply practical philosophy. He was not interested in building abstract thinking machines; he wanted to create systems that could do useful work in the real world. This pragmatism, combined with a deep respect for human expertise, defined his intellectual legacy.

Key Principles

- The Knowledge Principle: An intelligent system's power comes from the knowledge it possesses, not from the particular reasoning schemes it employs. General-purpose problem-solving methods are weak; domain-specific knowledge is what makes systems perform at expert levels.

- Knowledge is the scarce resource: The real bottleneck in building intelligent systems is not computing power or algorithmic sophistication — it is acquiring and representing the right knowledge. This insight drove the entire knowledge-engineering methodology.

- Separation of knowledge and inference: A well-designed expert system keeps its knowledge base separate from its inference engine. This makes the system transparent, maintainable, and adaptable — you can update the knowledge without rewriting the reasoning mechanism.

- Collaboration with domain experts is essential: Building an expert system is fundamentally a collaborative process between a knowledge engineer and a domain expert. The knowledge engineer's job is to elicit, structure, and formalize the expert's tacit knowledge — a process that is as much art as science.

- Practical impact over theoretical elegance: Feigenbaum consistently prioritized systems that could solve real problems over those that demonstrated clever algorithms. He measured success by whether a system could match or exceed human expert performance on genuine tasks.

- Transparency and explainability: Expert systems should be able to explain their reasoning. Unlike black-box models, a well-built expert system can trace its chain of inference and tell the user why it reached a particular conclusion — a principle that resonates strongly with today's emphasis on explainable AI in production environments.
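The separation and explainability principles in the list above can be illustrated together with a minimal sketch. Everything in it is hypothetical: the rule names, the toy hardware-configuration facts, and the `forward_chain` and `explain` helpers are invented for illustration and are not taken from any of Feigenbaum's systems.

```python
# Knowledge base: plain data that can be edited without touching the engine.
RULES = [
    {"name": "needs-fan", "if": ["cpu_high_power"], "then": "add_fan"},
    {"name": "needs-bigger-psu", "if": ["add_fan", "has_gpu"], "then": "upgrade_psu"},
]


def forward_chain(rules, facts):
    """Generic inference engine: fire rules until nothing new is derived."""
    known = set(facts)
    why = {}                      # derived fact -> rule that produced it
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule["then"] not in known and all(c in known for c in rule["if"]):
                known.add(rule["then"])
                why[rule["then"]] = rule
                changed = True
    return known, why


def explain(fact, why, depth=0):
    """Trace a conclusion back to the given facts, one line per step."""
    indent = "  " * depth
    rule = why.get(fact)
    if rule is None:
        return [f"{indent}{fact}: given"]
    lines = [f"{indent}{fact}: by rule {rule['name']}"]
    for condition in rule["if"]:
        lines.extend(explain(condition, why, depth + 1))
    return lines


known, why = forward_chain(RULES, {"cpu_high_power", "has_gpu"})
print("\n".join(explain("upgrade_psu", why)))
# upgrade_psu: by rule needs-bigger-psu
#   add_fan: by rule needs-fan
#     cpu_high_power: given
#   has_gpu: given
```

Because the engine only sees `RULES` as data, a different rule list can be swapped in without changing `forward_chain`. Because the engine records which rule produced each fact, the explanation comes from simple bookkeeping and needs no separate component.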

Feigenbaum's philosophy stands as a bridge between the early symbolic AI tradition and the modern era. His emphasis on knowledge, domain expertise, and practical deployment anticipated many of the challenges that today's machine learning practitioners face, including the critical importance of high-quality training data, which plays a role loosely analogous to Feigenbaum's knowledge base.

Legacy and Impact

Edward Feigenbaum's contributions have been recognized with the highest honors in computer science. In 1994, he received the ACM A.M. Turing Award, jointly with Raj Reddy, for their pioneering work in the design and construction of large-scale artificial intelligence systems. The Turing Award is often called the Nobel Prize of computing, and Feigenbaum's receipt of it underscored the fundamental importance of expert systems to the field.

His legacy extends far beyond the specific systems he built. The expert systems industry he helped create laid the groundwork for the broader enterprise AI market that exists today. Business rule engines, clinical decision support systems, configuration tools, and knowledge management platforms all trace their lineage back to the ideas Feigenbaum and his colleagues developed. Organizations that build web systems around AI-driven knowledge bases today owe a conceptual debt to the architecture Feigenbaum established decades ago.

The methodology of knowledge engineering, the systematic process of extracting and encoding expertise, remains relevant in the age of machine learning. While modern AI systems learn from data rather than hand-coded rules, the challenge of capturing the right knowledge in the right form persists. The current emphasis on data quality, feature engineering, and domain adaptation echoes Feigenbaum's insight that knowledge is the key bottleneck. Work such as Tomas Mikolov's Word2Vec, which lets machines learn word-level knowledge from raw text, approaches the same problem of capturing knowledge from the opposite, data-driven direction.

More broadly, Feigenbaum's work shaped how we think about the relationship between humans and machines. His expert systems were not designed to replace human experts but to amplify and preserve their knowledge — making it available to non-experts, ensuring it survived beyond any individual's career, and enabling consistent application of best practices at scale. This vision of AI as a tool for knowledge democratization remains one of the most compelling and humane framings of what artificial intelligence can accomplish.

His influence on AI research extended across the Pacific as well, through the intense intellectual competition with Japan's Fifth Generation project. And within the American computing community, Feigenbaum's students and Stanford contemporaries carried forward the tradition of building systems that combine theoretical depth with practical impact. Barbara Liskov, who earned her Stanford Ph.D. under John McCarthy, brought similar rigor to software engineering principles.

Key Facts

- Full name: Edward Albert Feigenbaum

- Born: January 20, 1936, Weehawken, New Jersey, USA

- Education: B.S. in Electrical Engineering (1956), Ph.D. in Industrial Administration (1960), both from Carnegie Institute of Technology

- Known for: Expert systems, DENDRAL, knowledge engineering, the Knowledge Principle

- Major award: ACM A.M. Turing Award (1994, shared with Raj Reddy)

- Key systems and projects: DENDRAL (1965), MYCIN (1970s, as mentor), Heuristic Programming Project

- Notable book: The Fifth Generation (1983, with Pamela McCorduck)

- Positions: Professor at Stanford, Chief Scientist of the U.S. Air Force (1994–1997)

- Doctoral advisor: Herbert A. Simon (Nobel laureate)

- Nickname: "Father of Expert Systems"

FAQ

What is an expert system and why was it important?

An expert system is a computer program that emulates the decision-making ability of a human expert in a specific domain. It consists of two main components: a knowledge base containing domain-specific rules and facts, and an inference engine that applies those rules to new situations. Expert systems were important because they represented the first successful approach to making AI practically useful in the real world. Before expert systems, AI was largely confined to academic research on general-purpose reasoning. Feigenbaum's insight — that encoding specialized knowledge was more valuable than building general reasoners — transformed AI from a theoretical pursuit into a commercially viable technology. Systems like DENDRAL, MYCIN, and XCON proved that computers could perform at expert levels in medicine, chemistry, and engineering, saving millions of dollars and opening entirely new markets for AI technology.

How did Feigenbaum's work influence modern AI and machine learning?

While today's deep learning systems differ architecturally from classical expert systems, Feigenbaum's core insights remain deeply relevant. His Knowledge Principle — that intelligence depends on knowledge, not just reasoning — anticipated the modern understanding that large language models succeed because of the vast knowledge they absorb from training data. The knowledge-engineering process he developed, which involves carefully extracting, validating, and structuring domain expertise, parallels today's practices of data curation, annotation, and quality control. Furthermore, his emphasis on explainability and transparency in AI reasoning has gained new urgency as society grapples with the opacity of neural networks. The expert systems tradition directly informed the development of knowledge graphs, ontologies, and semantic web technologies that remain vital infrastructure for search engines and enterprise AI.

What was the relationship between DENDRAL and MYCIN?

DENDRAL (1965) was the first expert system, designed to identify molecular structures from mass spectrometry data. It proved that encoding domain expertise in production rules could produce expert-level performance. MYCIN (early 1970s) was the second major expert system, built by Edward Shortliffe under Feigenbaum's mentorship, focused on diagnosing bacterial infections and recommending antibiotics. MYCIN advanced beyond DENDRAL by introducing certainty factors for reasoning under uncertainty, which was crucial for medical diagnosis where evidence is often incomplete. Together, these two systems established the architectural template for expert systems — a separate knowledge base and inference engine — and demonstrated the approach's viability across very different domains. DENDRAL showed it could work; MYCIN showed it could generalize.

Why did the expert systems industry decline in the late 1980s?

The expert systems industry experienced a significant contraction in the late 1980s and early 1990s, a period often called the second "AI winter." Several factors contributed. First, expert systems were expensive and time-consuming to build: the knowledge-engineering process required months of intensive collaboration with domain experts. Second, they were brittle: outside their narrow domain, they failed completely, and they could not learn from new data. Third, the specialized hardware (like Lisp machines) that many expert systems ran on became obsolete as general-purpose workstations grew more powerful. Fourth, expectations had been inflated by hype, leading to inevitable disappointment. However, the decline was not the end of the story. Many expert system concepts were absorbed into mainstream software engineering as business rule engines, configuration systems, and clinical decision support tools. The ideas survived even as the label fell out of fashion, and they continue to influence modern AI system design.