In September 2012, a convolutional neural network called AlexNet won the ImageNet Large Scale Visual Recognition Challenge by a staggering margin, cutting the top-5 error rate from 25.8% for the previous year’s best entry to 15.3%. That single result ignited the deep learning revolution that has reshaped every corner of technology, from self-driving cars to medical diagnostics to the large language models powering today’s AI assistants. AlexNet was built by Geoffrey Hinton and his students Alex Krizhevsky and Ilya Sutskever. But the competition itself, and the massive, meticulously labeled dataset of over 14 million images behind it that made the breakthrough possible, were built under Fei-Fei Li’s leadership. Without ImageNet, deep learning would have lacked a proving ground on which to demonstrate its superiority. Without Li’s years of painstaking work assembling, organizing, and labeling millions of images, the neural network revolution might have been delayed by years or taken an entirely different path. Li did not just build a dataset; she built the infrastructure on which modern computer vision was born.

Early Life and Education

Fei-Fei Li was born in 1976 in Beijing, China, and grew up in Chengdu, the capital of Sichuan province. Her parents were intellectuals (her father a photographer and her mother an aeronautical engineer) who valued education deeply but faced limited opportunities in China during that period. In 1992, when Li was 16 years old, her family immigrated to Parsippany, New Jersey, in the United States. They arrived with little money and limited English. Li’s parents took whatever work they could find: her mother worked as a cashier, and her father repaired cameras. Li herself worked nights and weekends at a dry cleaner to help support the family.

Despite these hardships, Li excelled academically. She graduated from Parsippany High School in 1995 and received a full scholarship to Princeton University, where she studied physics. At Princeton, she was drawn to the intersection of physical science and computation — the idea that the principles governing the natural world could be understood through mathematical modeling and algorithmic analysis. She graduated with a bachelor’s degree in physics in 1999.

Li then pursued graduate work at the California Institute of Technology (Caltech), where she earned her PhD in electrical engineering in 2005. Her doctoral research focused on visual recognition — specifically, how computational systems could learn to categorize and understand visual scenes the way humans do. This question — how machines can learn to see — would define her entire career. At Caltech, she studied under Pietro Perona and Christof Koch, working at the boundary between computational neuroscience and machine learning. Her early research explored one-shot learning: the ability to recognize objects from very few examples, inspired by how children learn to identify new objects almost instantly.

After completing her PhD, Li joined the faculty at the University of Illinois at Urbana-Champaign and then at Princeton before moving to Stanford University in 2009, where she became an associate professor (and later full professor) of computer science. The project that would change the field, however, had begun before she arrived at Stanford.

The ImageNet Breakthrough

Technical Innovation

The core problem Li identified was deceptively simple: machine learning algorithms for visual recognition were being trained and evaluated on datasets that were far too small and too narrow to capture the complexity of the real visual world. In the mid-2000s, the standard object recognition benchmarks held at most tens of thousands of images spread over a few dozen to a few hundred categories; Caltech-101, for example, contained roughly 9,000 images in 101 categories, and the PASCAL VOC challenge covered at most 20 object classes. Researchers would train their models on these small datasets, achieve incrementally better results, and publish papers. But the models were brittle: they worked on the benchmark images and failed spectacularly on anything else. The field was stuck in a loop of marginal improvements on toy problems.

Li’s insight was radical for its time: the bottleneck was not the algorithms but the data. If researchers had access to a massive, diverse, and accurately labeled dataset — one that captured the true variety of the visual world — then algorithms that seemed mediocre on small datasets might reveal unexpected power at scale. This was a contrarian bet. Most researchers in the mid-2000s focused on designing cleverer algorithms. Li bet on building a bigger, better dataset and letting the data drive progress.

In 2006, Li and her collaborators began constructing ImageNet. The project was structured around the WordNet lexical database, a hierarchical organization of English words and concepts developed at Princeton. ImageNet mapped thousands of WordNet noun categories to corresponding images scraped from the internet using search engines. Each image then had to be verified and labeled by human annotators to confirm that it actually depicted the intended category. This labeling task was enormous — millions of images needed human judgment.
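To make the WordNet-based organization concrete, here is a minimal sketch of a noun hierarchy in which each category (a WordNet "synset") points to its parent, so an image filed under a fine-grained category also counts toward every broader category above it. The category names, the tiny tree, and the helper functions are hypothetical illustrations, not ImageNet's actual data or tooling.

```python
# A toy synset hierarchy in the spirit of ImageNet's WordNet backbone.
# The tree below is hypothetical and heavily simplified.
PARENT = {
    "animal": None,
    "dog": "animal",
    "cat": "animal",
    "husky": "dog",
    "dalmatian": "dog",
    "tabby": "cat",
}

def ancestors(synset):
    """Return the chain from a synset up to the root, nearest first."""
    chain = []
    node = PARENT[synset]
    while node is not None:
        chain.append(node)
        node = PARENT[node]
    return chain

def leaves_under(synset):
    """Return every leaf category that falls beneath `synset`."""
    children = [c for c, p in PARENT.items() if p == synset]
    if not children:
        return [synset]
    result = []
    for child in children:
        result.extend(leaves_under(child))
    return result

# In this sketch, an image labeled "husky" is also evidence for "dog" and "animal".
print(ancestors("husky"))              # ['dog', 'animal']
print(sorted(leaves_under("animal")))  # ['dalmatian', 'husky', 'tabby']
```

The hierarchy is what lets a single labeled image serve questions at several levels of granularity, from "is this an animal?" down to "is this a husky?".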

Li’s team pioneered the use of Amazon Mechanical Turk (AMT) for large-scale image annotation. They developed quality-control protocols to ensure labeling accuracy: each image was shown to multiple annotators, and consensus-based methods filtered out errors. Over several years, the team assembled a dataset of over 14 million images spanning more than 21,000 categories, with approximately 1 million images annotated with bounding boxes indicating the precise location of objects within the image.
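The consensus idea can be illustrated with a simplified majority-vote filter: each candidate image collects yes/no answers from several annotators ("does this image show the category?"), and it is kept only if enough annotators voted and a large enough share said yes. The minimum vote count and agreement threshold below are assumptions chosen for illustration; this is a sketch of the general technique, not the exact procedure the ImageNet team used.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    image_id: str
    votes: List[bool]  # True = annotator says the image shows the category

def accept(c, min_votes=3, threshold=0.7):
    """Keep an image only if enough annotators agree it matches the category.

    min_votes and threshold are illustrative values, not ImageNet's settings.
    """
    if len(c.votes) < min_votes:
        return False  # not enough evidence yet; more votes would be collected
    return sum(c.votes) / len(c.votes) >= threshold

candidates = [
    Candidate("img_001", [True, True, True, False]),  # 75% yes -> kept
    Candidate("img_002", [True, False, False]),       # 33% yes -> rejected
    Candidate("img_003", [True, True]),               # too few votes so far
]
kept = [c.image_id for c in candidates if accept(c)]
print(kept)  # ['img_001']
```

Requiring several independent votes trades annotation cost for accuracy: a single careless worker cannot push a mislabeled image into the dataset on their own.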

```python
# ImageNet-style image classification with a pre-trained model.
# This workflow exists because Fei-Fei Li built ImageNet.
import torch
import torchvision.transforms as transforms
from PIL import Image
from torchvision import models

# Load a model pre-trained on ImageNet (1,000 classes).
# These weights encode visual knowledge learned from
# the 1.2 million training images Li's team curated.
weights = models.ResNet50_Weights.IMAGENET1K_V2
model = models.resnet50(weights=weights)
model.eval()

# ImageNet normalization: these specific mean/std values
# come directly from the ImageNet dataset statistics.
# (weights.transforms() returns the exact evaluation pipeline for these weights.)
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),  # ImageNet standard: 224x224
    transforms.ToTensor(),
    transforms.Normalize(
        mean=[0.485, 0.456, 0.406],  # ImageNet RGB means
        std=[0.229, 0.224, 0.225],   # ImageNet RGB stds
    ),
])

# Load and classify an image; convert to RGB in case it is grayscale or has alpha
img = Image.open("example.jpg").convert("RGB")
input_tensor = preprocess(img).unsqueeze(0)  # add a batch dimension

with torch.no_grad():
    output = model(input_tensor)

# Get top-5 predictions (ImageNet convention, also from Li's ILSVRC)
probabilities = torch.nn.functional.softmax(output[0], dim=0)
top5_prob, top5_idx = torch.topk(probabilities, 5)
categories = weights.meta["categories"]  # human-readable ImageNet class names
for prob, idx in zip(top5_prob, top5_idx):
    print(f"{idx.item():>4d} {categories[idx.item()]}: {prob.item():.4f}")
```

The dataset alone would have been a significant contribution. But Li went further. In 2010, she and her collaborators launched the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), an annual competition that invited researchers worldwide to build systems that could classify and detect objects in a subset of ImageNet containing 1.2 million training images across 1,000 categories. The competition provided a standardized benchmark, with the same training data, the same test data, and the same evaluation metrics for every entrant, that allowed direct, objective comparison of different approaches. This was exactly what the field needed: a rigorous, large-scale proving ground.

Why It Mattered — AlexNet 2012

For the first two years of the ILSVRC (2010 and 2011), the winning entries used traditional computer vision techniques — hand-engineered features like SIFT (Scale-Invariant Feature Transform) and HOG (Histogram of Oriented Gradients) combined with support vector machines or other classical machine learning classifiers. These systems achieved top-5 error rates of around 28% in 2010 and 25.8% in 2011. Progress was steady but incremental.
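Top-5 error, the headline ILSVRC classification metric quoted above, counts a test image as a mistake only when its true label is missing from the system's five highest-scoring guesses. A minimal pure-Python sketch of the computation follows; the scores and labels are made-up toy values, and `top_k_error` is a hypothetical helper name.

```python
def top_k_error(scores, labels, k=5):
    """Fraction of examples whose true label is not among the k highest scores.

    scores: one list of per-class scores per example
    labels: the true class index for each example
    """
    misses = 0
    for row, label in zip(scores, labels):
        # Indices of the k highest-scoring classes for this example
        top_k = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        if label not in top_k:
            misses += 1
    return misses / len(labels)

# Toy example: 3 images, 7 classes (made-up numbers)
scores = [
    [0.10, 0.05, 0.30, 0.20, 0.15, 0.12, 0.08],  # true class 2 ranked 1st
    [0.40, 0.20, 0.10, 0.09, 0.08, 0.07, 0.06],  # true class 6 ranked 7th
    [0.02, 0.25, 0.18, 0.22, 0.13, 0.11, 0.09],  # true class 4 ranked 4th
]
labels = [2, 6, 4]

print(top_k_error(scores, labels, k=1))  # 2 of 3 wrong at top-1
print(top_k_error(scores, labels, k=5))  # only the second image misses
```

Scoring against five guesses rather than one was a deliberate leniency: many ImageNet photos contain several plausible objects, so a single "correct" label would penalize reasonable answers.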

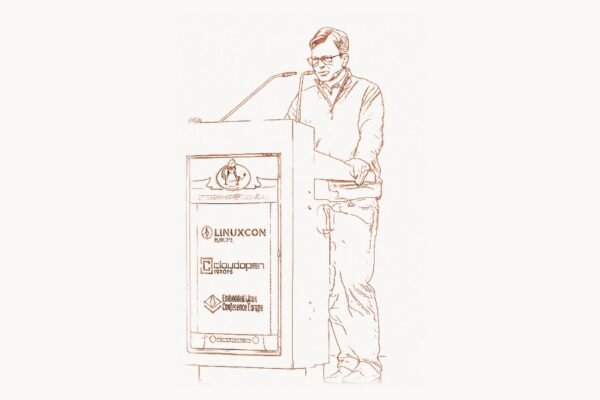

Then came 2012. Geoffrey Hinton’s team from the University of Toronto submitted AlexNet, a deep convolutional neural network with eight layers, 60 million parameters, trained on two NVIDIA GPUs. AlexNet achieved a top-5 error rate of 15.3% — a gap of over 10 percentage points compared to the second-place entry. This was not an incremental improvement; it was a discontinuous leap that made it immediately clear that deep neural networks, given sufficient data and compute, could dramatically outperform all other approaches to visual recognition.
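The "60 million parameters" figure can be checked with simple arithmetic. The sketch below tallies weights and biases layer by layer, using the layer shapes as we read them from the original AlexNet paper: five convolutional layers and three fully connected ones, where the second, fourth, and fifth convolutional layers see only the half of the previous layer's feature maps that lived on the same GPU. Treat the shapes as our reading of that paper rather than an authoritative specification; the helper names are our own.

```python
def conv_params(kernel, in_channels, out_channels):
    # Each output channel has a kernel x kernel x in_channels weight tensor plus a bias.
    return kernel * kernel * in_channels * out_channels + out_channels

def fc_params(in_features, out_features):
    # A dense weight matrix plus one bias per output unit.
    return in_features * out_features + out_features

layers = {
    # in_channels for conv2/4/5 is halved because of the two-GPU split.
    "conv1": conv_params(11, 3, 96),
    "conv2": conv_params(5, 48, 256),
    "conv3": conv_params(3, 256, 384),
    "conv4": conv_params(3, 192, 384),
    "conv5": conv_params(3, 192, 256),
    "fc6": fc_params(6 * 6 * 256, 4096),  # flattened 6x6x256 feature map
    "fc7": fc_params(4096, 4096),
    "fc8": fc_params(4096, 1000),         # one output per ILSVRC class
}

for name, count in layers.items():
    print(f"{name}: {count:,}")
total = sum(layers.values())
print(f"total: {total:,}")  # 60,965,224, i.e. roughly 61 million
```

Almost all of those parameters (about 58.6 million) sit in the three fully connected layers; the five convolutional layers account for only about 2.3 million.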

The impact was seismic. Within a year, virtually every competitive entry in the ILSVRC was based on deep learning. Within two years, researchers at Google, Facebook, Microsoft, and Baidu had reorganized their AI teams around deep neural networks. Yann LeCun, who had been developing convolutional neural networks since the late 1980s, was hired to lead Facebook’s AI Research lab. Hinton split his time between Google and the University of Toronto. The deep learning revolution — the transformation that gave us modern speech recognition, machine translation, autonomous vehicles, and generative AI — traces its ignition point directly to the 2012 ImageNet competition.

And it is hard to imagine any of it happening when it did without Li’s dataset and competition. The deep learning architectures that Hinton, LeCun, and others had been developing needed a large-scale benchmark to demonstrate their potential. ImageNet provided that benchmark. The ILSVRC provided the stage. Fei-Fei Li built the arena in which deep learning proved itself.

Other Contributions

While ImageNet is Li’s most famous achievement, her contributions extend well beyond it. At Stanford, she directs the Stanford Vision Lab, which has produced influential research on visual understanding, scene recognition, image captioning (generating natural language descriptions of images), and visual relationship detection (understanding how objects in an image relate to each other). Her lab’s work on dense image captioning and Visual Genome — a dataset of images annotated with detailed scene graphs describing objects, attributes, and relationships — has been foundational for the field of visual question answering and multimodal AI.
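A scene graph of the kind Visual Genome popularized can be pictured as objects that carry attributes, plus (subject, predicate, object) relationship triples linking them. The minimal sketch below uses a hypothetical schema of our own, not Visual Genome's actual file format, to show how such a graph can answer a simple visual question such as "what is the man riding?".

```python
from dataclasses import dataclass, field
from typing import List, Tuple

@dataclass
class SceneObject:
    name: str
    attributes: List[str] = field(default_factory=list)

@dataclass
class SceneGraph:
    objects: List[SceneObject]
    # Relationships as (subject index, predicate, object index) triples
    relations: List[Tuple[int, str, int]]

    def query(self, subject, predicate):
        """Return names of objects linked from `subject` by `predicate`."""
        return [
            self.objects[o].name
            for s, p, o in self.relations
            if self.objects[s].name == subject and p == predicate
        ]

# Hypothetical annotation for a single image
graph = SceneGraph(
    objects=[
        SceneObject("man", ["smiling"]),
        SceneObject("horse", ["brown"]),
        SceneObject("hat", ["red"]),
    ],
    relations=[(0, "riding", 1), (0, "wearing", 2)],
)

print(graph.query("man", "riding"))   # ['horse']
print(graph.query("man", "wearing"))  # ['hat']
```

Storing relationships explicitly, rather than only a list of object labels, is what lets a model or a query reason about how the things in an image relate to each other.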

From January 2017 to September 2018, Li served as Chief Scientist of AI/ML at Google Cloud. During her tenure, she worked on democratizing AI tools — making machine learning capabilities accessible to businesses and developers who lacked the resources to build their own AI infrastructure. She oversaw the development of Google Cloud’s AutoML Vision and other AI services that allowed non-experts to train custom image recognition models. This work reflected her belief that AI should serve a broad range of users and applications, not just elite research labs.

In 2019, Li co-founded the Stanford Institute for Human-Centered Artificial Intelligence (HAI), along with former Stanford provost John Etchemendy. HAI brings together researchers from computer science, medicine, law, philosophy, economics, political science, and other disciplines to study AI’s societal implications and guide its development in beneficial directions. The institute has become one of the world’s leading centers for interdisciplinary AI research and policy, producing influential reports on AI governance, AI’s economic impact, the annual AI Index (a comprehensive report tracking AI progress across multiple dimensions), and the ethical considerations surrounding autonomous systems.

Li also co-founded AI4ALL, a nonprofit organization dedicated to increasing diversity and inclusion in artificial intelligence. AI4ALL runs summer programs for underrepresented high school students at universities across the United States and Canada, exposing them to AI research and mentorship. The organization has served thousands of students since its founding in 2015 (originally as SAILORS — Stanford Artificial Intelligence Laboratory’s Outreach Summer program) and has expanded to multiple university campuses. Li’s advocacy for diversity in AI is rooted in her conviction that the people who build AI systems must reflect the full diversity of the people those systems will serve.

Philosophy and Approach

Key Principles

Fei-Fei Li’s approach to AI is organized around several core principles that distinguish her from many of her contemporaries. The first is the primacy of data. While most AI researchers in the mid-2000s believed that progress depended on designing better algorithms, Li argued that the quality and scale of training data were at least as important — and possibly more important — than algorithmic sophistication. ImageNet was a deliberate test of this hypothesis, and the results vindicated her data-centric philosophy decisively. The modern AI paradigm — in which scaling data and compute produces emergent capabilities — owes much to Li’s early insistence that data matters.

The second principle is human-centered AI. Li has consistently argued that AI should be developed to augment and complement human capabilities, not to replace them. She has been vocal about the risks of developing AI without considering its social, ethical, and economic implications. Her founding of Stanford HAI was a direct expression of this principle: an institutional commitment to ensuring that AI development is informed by humanistic values, social science research, and policy analysis.

The third principle is diversity and inclusion. Li’s own experience as an immigrant — arriving in the United States as a teenager with limited English, working through economic hardship, navigating a field dominated by a narrow demographic — shaped her conviction that AI will only serve humanity well if the people building it represent humanity’s full diversity. She has written and spoken extensively about the risks of AI systems that encode biases because they were built by homogeneous teams drawing on unrepresentative data.

Her public communication style is notable for its clarity and accessibility. Li regularly writes for mainstream publications and speaks at non-technical venues, translating complex AI concepts for policymakers, business leaders, and the general public. She sees public communication as an essential part of an AI researcher’s responsibility — a recognition that the decisions shaping AI’s future will be made not just by engineers but by legislators, executives, educators, and citizens who need to understand what AI is and is not.

```python
# Transfer learning: the practical legacy of ImageNet.
# Many modern vision models start from ImageNet pre-training
# and are then fine-tuned on a specific task.
import torch
import torch.nn as nn
from torch.utils.data import DataLoader
from torchvision import datasets, models

# Step 1: Load a model pre-trained on ImageNet.
# These weights were learned on the 1,000-class ILSVRC subset
# (about 1.2 million images) of the dataset Li's team assembled.
weights = models.ResNet50_Weights.IMAGENET1K_V2
model = models.resnet50(weights=weights)

# Step 2: Replace the final layer for your specific task.
# ImageNet-1k has 1,000 classes; your task might have 10
# (this must match the number of class folders in your dataset).
num_classes = 10
model.fc = nn.Linear(model.fc.in_features, num_classes)

# Step 3: Freeze everything (early layers capture general features such as
# edges, textures, and shapes), then unfreeze the last stage and new classifier.
for param in model.parameters():
    param.requires_grad = False
for param in model.layer4.parameters():
    param.requires_grad = True
for param in model.fc.parameters():
    param.requires_grad = True

# Step 4: Train on your (much smaller) dataset.
# Only the unfrozen parameters are handed to the optimizer.
optimizer = torch.optim.Adam(
    filter(lambda p: p.requires_grad, model.parameters()),
    lr=0.001,
)
criterion = nn.CrossEntropyLoss()

# "data/train" is a placeholder: an ImageFolder-style directory with one
# subfolder per class. A few hundred images per class is often enough when
# starting from ImageNet weights; that is the practical payoff of transfer learning.
train_set = datasets.ImageFolder("data/train", transform=weights.transforms())
train_loader = DataLoader(train_set, batch_size=32, shuffle=True)

model.train()
for images, labels in train_loader:  # a single epoch
    optimizer.zero_grad()
    loss = criterion(model(images), labels)
    loss.backward()
    optimizer.step()
```

Legacy and Modern Relevance

Fei-Fei Li’s impact on the field of artificial intelligence is difficult to overstate. ImageNet did not merely provide a useful dataset — it fundamentally changed how AI research is conducted. Before ImageNet, computer vision was a field of clever hand-engineered features and small-scale experiments. After ImageNet, it became a field driven by data, scale, and deep learning. The “ImageNet moment” of 2012 triggered a chain reaction: the success of deep learning on vision tasks led researchers to apply the same approach to speech recognition, natural language processing, game playing, protein folding, and eventually to the large language models and generative AI systems that dominate today’s technology landscape.

The concept of transfer learning — training a model on a large general dataset and then fine-tuning it for specific tasks — was proven at scale by ImageNet and has become the dominant paradigm in modern AI. Every vision model that uses pre-trained weights, every language model fine-tuned for a specific application, every foundation model adapted to a downstream task follows a pattern that ImageNet established. When Andrew Ng describes transfer learning as one of the most important ideas in machine learning, he is describing a practice that ImageNet made standard.

Li’s influence extends beyond technical contributions to the culture of AI research. Her emphasis on data quality, her insistence on human-centered development, her advocacy for diversity, and her engagement with policy and ethics have helped shape how the broader AI community thinks about its responsibilities. At a time when many AI researchers focused exclusively on technical metrics, Li consistently argued that the social context of AI development matters — that who builds AI, what data it is trained on, and whose interests it serves are not peripheral concerns but central ones.

She has received numerous honors recognizing her contributions: she was elected to the National Academy of Engineering, the National Academy of Medicine, and the American Academy of Arts and Sciences. She is an ACM Fellow. Time magazine named her one of the 100 most influential people in the world. In 2023, she published The Worlds I See, a memoir tracing her journey from immigrant teenager to one of the architects of the AI revolution.

The questions Li raises about human-centered AI, data ethics, and inclusive development are not going away. As AI systems become more powerful and pervasive, embedded in healthcare, education, criminal justice, hiring, and infrastructure, the principles she champions will only grow more urgent. Fei-Fei Li built the dataset that launched the deep learning era. And then she devoted her career to ensuring that the revolution she helped ignite serves all of humanity, not just those with the resources to build it. As developers continue to build on her foundational work, the principles of data quality and human-centered design she championed remain as relevant as ever.

Key Facts

- Born: 1976, Beijing, China

- Education: BS Physics, Princeton University (1999); PhD Electrical Engineering, Caltech (2005)

- Known for: Creating ImageNet dataset and ILSVRC competition, co-founding Stanford HAI, co-founding AI4ALL

- Key roles: Professor of Computer Science, Stanford University; Chief Scientist of AI/ML, Google Cloud (2017–2018); Co-Director, Stanford HAI

- ImageNet: 14+ million images, 21,000+ categories, launched 2009; ILSVRC competition ran 2010–2017

- Awards: National Academy of Engineering, National Academy of Medicine, American Academy of Arts and Sciences, ACM Fellow, Time 100 Most Influential People

- Publications: Over 300 peer-reviewed papers; memoir The Worlds I See (2023)

- AI4ALL: Co-founded 2015 (originally SAILORS), now operates at 16+ university campuses across the US and Canada

Frequently Asked Questions

What is ImageNet and why was it so important for AI?

ImageNet is a large-scale visual recognition dataset created by Fei-Fei Li and her team beginning in 2006. It contains over 14 million images organized into more than 21,000 categories based on the WordNet lexical hierarchy. The associated ImageNet Large Scale Visual Recognition Challenge (ILSVRC), which ran annually from 2010 to 2017, provided a standardized benchmark for evaluating computer vision algorithms. ImageNet was important because it provided the scale of data that deep neural networks needed to demonstrate their superiority over traditional approaches. The 2012 AlexNet result, in which a deep convolutional neural network cut the top-5 error rate from 25.8% (the previous year’s best) to 15.3%, depended on the 1.2 million labeled training images that ImageNet provided. This result triggered the deep learning revolution that has transformed all of artificial intelligence.

How did Fei-Fei Li’s immigration story shape her approach to AI?

Li immigrated to the United States from China at age 16, arriving with her family in New Jersey with limited English and limited financial resources. She worked through high school and college to support her family while pursuing her education. This experience — navigating a new culture, overcoming economic hardship, excelling despite systemic barriers — directly influenced her commitment to diversity and inclusion in AI. She co-founded AI4ALL specifically to ensure that underrepresented students have access to AI education and mentorship. Her broader philosophy of human-centered AI is also rooted in her lived experience: having seen firsthand how structural barriers can limit opportunity, she advocates for AI development that considers the needs and perspectives of all communities, not just those already represented in the technology industry.

What is Stanford HAI and what does it do?

The Stanford Institute for Human-Centered Artificial Intelligence (HAI) is a research institute co-founded by Fei-Fei Li and John Etchemendy at Stanford University in 2019. HAI brings together researchers from across the university (computer science, law, medicine, philosophy, political science, economics, education, and other fields) to study AI’s societal implications and guide its development. The institute produces the annual AI Index report, one of the most comprehensive public efforts to track AI progress across technical performance, economic impact, policy developments, and public perception. HAI also funds interdisciplinary research projects, hosts policy workshops, and engages with government agencies and international organizations on AI governance. Its central premise is that AI development should be guided not just by technical capability but by human values, social science research, and policy analysis, a reflection of Li’s conviction that the people who shape AI must include voices from far beyond the engineering lab.