Every time you type a password to unlock your computer, log into a website, or access a cloud service, you are using an invention that traces directly back to one man: Fernando Jose Corbato. In 1961, at the Massachusetts Institute of Technology, Corbato and his team built the Compatible Time-Sharing System — CTSS — the first practical operating system that allowed multiple users to share a single computer simultaneously. To keep each user’s files private from the others, Corbato introduced something deceptively simple: the computer password. That single innovation, born from a pragmatic need on a university mainframe, became a foundational mechanism of digital security that persists to this day. But passwords were only the beginning. Corbato went on to lead the Multics project, one of the most ambitious operating system efforts in computing history, which pioneered hierarchical file systems, dynamic linking, memory-mapped files, and security rings — concepts that live on in every modern operating system. His work earned him the 1990 Turing Award and shaped the trajectory of computing from the centralized mainframe era through the dawn of personal and networked systems. Corbato’s career demonstrates that the most profound innovations often emerge not from a desire to be revolutionary, but from the disciplined pursuit of practical solutions to real engineering problems.

Early Life and Path to Technology

Fernando Jose Corbato was born on July 1, 1926, in Oakland, California. His father was a Spanish-born professor of literature, and his mother was of American descent. The family environment combined the humanities with intellectual rigor — a background that instilled in young Fernando both analytical discipline and an appreciation for clarity of thought. Growing up on the West Coast during the Great Depression and World War II, Corbato developed the pragmatic, no-nonsense engineering sensibility that would later define his approach to computing.

Corbato attended the California Institute of Technology (Caltech), where he earned his bachelor’s degree in physics in 1950. The choice of physics was significant: it gave him a deep grounding in mathematical reasoning, systems thinking, and experimental methodology. He then moved to the Massachusetts Institute of Technology for graduate study and earned his PhD in physics in 1956, but his interests were already shifting toward the emerging field of computing. His doctoral work involved computational problems that required extensive use of MIT’s early computers, and this hands-on experience showed Corbato both the potential and the limitations of the computing systems available at the time.

At MIT, Corbato joined the Computation Center, which housed the IBM 704 and later the IBM 709 and 7090 — machines that represented the state of the art in academic computing. Working in this environment, Corbato experienced firsthand the frustration that defined mid-century computing: batch processing. Users would submit programs on punched cards, wait hours or even days for their turn on the machine, and then receive output that might reveal a single typographical error. This staggeringly inefficient workflow wasted both human time and machine capacity. For someone with Corbato’s practical engineering temperament, the problem was not just an inconvenience — it was a fundamental barrier to scientific progress. The entire research community at MIT was bottlenecked by a computing model that treated the most expensive machine in the building as if it could only serve one person at a time.

This frustration became the seed of an idea that would revolutionize computing: what if a single powerful computer could serve many users at once, giving each the illusion of having the machine to themselves? This concept, time-sharing, would become Corbato’s defining contribution to the field. He was not alone in pursuing it: John McCarthy had proposed time-sharing at MIT in a 1959 memo, and his advocacy of interactive computing helped set the stage for Corbato’s work.

The Breakthrough: CTSS and the Birth of Time-Sharing

In November 1961, Corbato and his small team at the MIT Computation Center demonstrated the Compatible Time-Sharing System (CTSS) on the center’s IBM 709; the system later moved to the IBM 7090 and 7094. The word “compatible” in the name was deliberate and important: CTSS was designed to coexist with the existing batch-processing system, not replace it. This pragmatic approach ensured that the new system could be tested and refined without disrupting ongoing research, a masterclass in incremental engineering that avoided the political and practical pitfalls of demanding a wholesale transition.

The Technical Innovation

CTSS was a remarkable piece of engineering given the hardware constraints of the era. A stock IBM 7090 had no memory protection between users, no virtual memory, and no built-in concept of user accounts. CTSS therefore relied on a set of IBM hardware modifications: a second 32K-word bank of core memory (one bank for the supervisor program, that is, the operating system, and one for the current user’s program), memory protection and relocation registers, and an interval timer. Everything else, from user accounts to scheduling, Corbato and his team built in software. The timer interrupted the running program at regular intervals, giving the supervisor the opportunity to save the current user’s state and switch to another user. This technique, preemptive multitasking driven by a clock interrupt, became the basic mechanism behind later time-sharing and multitasking operating systems.

The basic structure of CTSS’s time-sharing mechanism can be understood through its scheduling logic:

CTSS Supervisor: Time-Sharing Scheduling Loop (conceptual)

```text
REPEAT FOREVER:
    Wait for clock interrupt (every ~200 milliseconds)
    Save current user's registers
    Record time quantum consumed by current user
    IF current user's program completed:
        Mark job as DONE
        Notify user via console typewriter
    Select next user from ready queue:
        Priority based on:
          - Time waiting (longer wait = higher priority)
          - Time consumed (less CPU used = higher priority)
          - Interactive vs. background classification
    IF selected user's program is not in core memory:
        Swap current user's program out to drum storage
        Swap selected user's program from drum into core memory
        (These swaps were the critical bottleneck:
         drum latency dominated response time)
    Restore selected user's registers
    Transfer control to selected user's program
    User sees response on console typewriter within 1-3 seconds
    (compared to hours or days under batch processing)
```

The system initially supported four simultaneous users on console typewriters (electric typewriters wired to the computer as terminals), but this was soon expanded to handle over thirty concurrent sessions. Users could edit programs, compile code, and run calculations interactively, a transformative experience for researchers accustomed to the batch-processing grind. For the first time, a programmer could type a command, see the result within seconds, fix errors immediately, and iterate rapidly. This interactive workflow was so vastly superior to batch processing that it changed not just how people used computers, but how they thought about computing itself.
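The conceptual loop above leaves the scheduling policy vague. CTSS is generally credited with the first multilevel feedback queue scheduler, and the Python sketch below shows that idea in simplified form. It is an illustration under stated assumptions, not a reconstruction of the CTSS supervisor: the `Job` class, the `mlfq` function, the number of levels, and the quantum that doubles at each lower level are all choices made for this example, and swapping costs are ignored.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    remaining: int  # CPU time still needed, in abstract clock ticks


def mlfq(jobs, levels=4, base_quantum=1):
    """Simplified multilevel feedback queue (hypothetical parameters).

    Level 0 has the highest priority. A job that uses its whole quantum
    is demoted one level, where the quantum is twice as long, so short
    interactive requests stay near the top and long jobs sink.
    Returns (finish_order, trace); finish_order lists (name, finish_time).
    """
    queues = [deque() for _ in range(levels)]
    for job in jobs:
        queues[0].append(job)  # this sketch starts every job at the top level
    clock = 0
    finished, trace = [], []
    while any(queues):
        # always serve the highest-priority non-empty queue (round-robin within it)
        level = next(i for i, q in enumerate(queues) if q)
        job = queues[level].popleft()
        quantum = base_quantum * 2 ** level
        ran = min(quantum, job.remaining)
        clock += ran
        job.remaining -= ran
        trace.append((job.name, level, ran))
        if job.remaining == 0:
            finished.append((job.name, clock))
        else:
            # the job consumed its full quantum: demote it, but not past the last level
            queues[min(level + 1, levels - 1)].append(job)
    return finished, trace


if __name__ == "__main__":
    done, _ = mlfq([Job("edit", 1), Job("compile", 6), Job("sim", 3)])
    print(done)  # [('edit', 1), ('sim', 7), ('compile', 10)]
```

The short "edit" request finishes after a single tick even though it arrived alongside longer jobs, which is the interactive responsiveness the supervisor was built to deliver.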

One of the challenges that immediately arose with multiple users sharing a single system was privacy. Researchers needed assurance that their files and data could not be accessed — accidentally or deliberately — by other users. Corbato’s solution was elegant in its simplicity: assign each user a unique identifier and require them to authenticate with a secret word before accessing their files. This was the world’s first implementation of the computer password. The concept seems so obvious today that it is easy to forget that someone had to invent it. Corbato himself later expressed ambivalence about this particular innovation, acknowledging that passwords had become an increasingly inadequate security mechanism as systems grew more complex — but in 1961, for a university time-sharing system, it was exactly the right solution.
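The model described here, a unique identifier plus a secret checked before any file access, fits in a few lines of Python. This is a hypothetical illustration, not CTSS code: CTSS kept its passwords in an ordinary system file, whereas this sketch stores a salted PBKDF2 hash (a modern practice) and compares digests with `hmac.compare_digest`. The `AccountTable` class and its method names are invented for the example.

```python
import hashlib
import hmac
import os


class AccountTable:
    """Hypothetical per-user accounts guarding per-user files."""

    def __init__(self):
        self._accounts = {}  # user_id -> (salt, password hash)
        self._files = {}     # (owner, filename) -> contents

    @staticmethod
    def _hash(password, salt):
        # Modern practice, not CTSS's: store a slow salted hash, never the password.
        return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)

    def add_user(self, user_id, password):
        salt = os.urandom(16)
        self._accounts[user_id] = (salt, self._hash(password, salt))

    def login(self, user_id, password):
        """Return the user ID to use as a session if the secret matches, else None."""
        record = self._accounts.get(user_id)
        if record is None:
            return None
        salt, stored = record
        if hmac.compare_digest(stored, self._hash(password, salt)):
            return user_id
        return None

    def write_file(self, session, name, contents):
        if session is None:
            raise PermissionError("not logged in")
        self._files[(session, name)] = contents

    def read_file(self, session, owner, name):
        # Only the authenticated owner may read a file.
        if session is None or session != owner:
            raise PermissionError(f"{session!r} may not read {owner}'s files")
        return self._files[(owner, name)]


if __name__ == "__main__":
    table = AccountTable()
    table.add_user("corbato", "secret")
    me = table.login("corbato", "secret")
    table.write_file(me, "notes", "private notes")
    print(table.read_file(me, "corbato", "notes"))  # private notes
    print(table.login("corbato", "wrong"))          # None
```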

Why It Mattered

CTSS’s impact on computing cannot be overstated. It demonstrated conclusively that time-sharing was not merely a theoretical curiosity but a practical, superior way to use computing resources. Before CTSS, the idea that an expensive mainframe should be divided among multiple simultaneous users was controversial — many experts believed that the overhead of switching between users would waste too much of the machine’s precious capacity. Corbato’s implementation proved that the productivity gains from interactive use far outweighed the overhead costs.

The system also served as a crucible for innovations that extended far beyond time-sharing itself. On CTSS, users interacted with the system by typing commands at a terminal, a precursor to the command-line interfaces that would dominate computing for decades. The system’s file management capabilities influenced later operating systems’ approaches to organizing user data. And the notion of distinct user accounts with authentication, Corbato’s password system, established the security paradigm that, for better or worse, remains the primary mechanism for digital identity verification more than sixty years later.

CTSS was first demonstrated in 1961, entered regular service for MIT users in 1963, and remained in operation until 1973, serving as a primary computing platform for the university’s research community. It was one of the first systems to support electronic mail, allowing users to leave messages for one another: a rudimentary but recognizable precursor to modern email. The system inspired similar time-sharing efforts at other institutions and commercial organizations, kickstarting a movement that would eventually lead to the interactive computing paradigm we take for granted today. Figures like Margaret Hamilton, who was also working at MIT during this era, operated in an environment that CTSS helped define, one where software was becoming recognized as a discipline in its own right, worthy of the same rigor applied to hardware engineering.

Multics: The Most Ambitious Operating System of Its Era

Even before CTSS had reached its full maturity, Corbato was already thinking about its successor. The limitations of CTSS — its dependence on a single hardware platform, its limited security model, its ad hoc design — made it clear that a more principled, more ambitious system was needed. In 1964, Corbato began leading Project MAC’s effort to develop Multics (Multiplexed Information and Computing Service), a collaboration between MIT, General Electric, and Bell Telephone Laboratories.

Multics was conceived as nothing less than a computing utility — a system that would provide computing power as reliably and ubiquitously as the electric power grid provided electricity. Users would connect from terminals anywhere, access their files and programs, and pay only for the resources they consumed. This vision, articulated in the mid-1960s, anticipated cloud computing by roughly four decades.

The technical ambitions of Multics were staggering. The system introduced or refined an extraordinary number of concepts that became standard in subsequent operating systems:

Hierarchical file system. Multics implemented a tree-structured file system with directories that could contain both files and other directories — the organizational model used by every modern operating system. Before Multics, file systems were typically flat, with all files existing in a single namespace.
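A tree-structured namespace is easy to model: each directory maps names to either files or further directories, and a path is resolved one component at a time from the root. The Python sketch below assumes Multics-style absolute paths, which used `>` as the separator (for example `>udd>Proj>notes`); the `Directory` class and `resolve` function are illustrative, not Multics interfaces.

```python
from dataclasses import dataclass, field


@dataclass
class Directory:
    name: str
    # each entry maps a name to a child Directory or to a file's contents (str)
    entries: dict = field(default_factory=dict)

    def mkdir(self, name):
        child = Directory(name)
        self.entries[name] = child
        return child


def resolve(root, path):
    """Walk an absolute path such as '>udd>Proj>notes' down from the root."""
    if not path.startswith(">"):
        raise ValueError("absolute paths start with '>'")
    node = root
    for part in path[1:].split(">"):
        if not part:
            continue  # tolerate '>' alone (the root) or doubled separators
        if not isinstance(node, Directory) or part not in node.entries:
            raise FileNotFoundError(path)
        node = node.entries[part]
    return node


if __name__ == "__main__":
    root = Directory(">")
    udd = root.mkdir("udd")
    udd.mkdir("Alice").entries["notes"] = "alice's notes"
    udd.mkdir("Bob").entries["notes"] = "bob's notes"  # same name, no clash
    print(resolve(root, ">udd>Bob>notes"))  # bob's notes
```

Because names only need to be unique within one directory, two users can each keep a file called `notes`, which a single flat namespace cannot allow.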

Security rings. Multics introduced the concept of hardware-enforced protection rings, where the operating system kernel ran at the most privileged level and user programs ran at less privileged levels. This architecture prevented user programs from directly accessing or corrupting the operating system — a principle that remains fundamental to modern CPU architecture (Intel’s x86 processors still implement ring-based protection).
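Multics expressed ring permissions per segment as “ring brackets.” The Python sketch below is a simplified model, loosely following published descriptions of that scheme and resting on several assumptions: eight rings numbered 0 to 7 with ring 0 most privileged; each segment carries three numbers r1 ≤ r2 ≤ r3; writes are allowed from rings up to r1, reads from rings up to r2, direct calls from rings r1 through r2, and calls from rings above r2 up to r3 must enter through a gate and then run in ring r2. The `Segment` class and helper names are hypothetical, and the real hardware rules covered more cases (outward calls, for instance, are simply refused here).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    r1: int  # highest ring allowed to write (top of the write bracket)
    r2: int  # highest ring allowed to read or execute directly
    r3: int  # highest ring allowed to call in through a gate

    def __post_init__(self):
        if not 0 <= self.r1 <= self.r2 <= self.r3 <= 7:
            raise ValueError("ring brackets need 0 <= r1 <= r2 <= r3 <= 7")


def can_write(ring, seg):
    return ring <= seg.r1


def can_read(ring, seg):
    return ring <= seg.r2


def call_ring(ring, seg):
    """Return the ring in which a call from `ring` into `seg` would run."""
    if seg.r1 <= ring <= seg.r2:
        return ring  # inside the execute bracket: no ring change
    if seg.r2 < ring <= seg.r3:
        return seg.r2  # gate entry: the call moves inward to the privileged ring
    raise PermissionError(f"ring {ring} may not call {seg.name}")


if __name__ == "__main__":
    # Hypothetical supervisor entry point that user code in ring 4 may call.
    gate = Segment("supervisor_gate", r1=0, r2=0, r3=5)
    print(call_ring(4, gate))  # 0
    print(can_write(4, gate))  # False
```

The key property is that less privileged code can enter the supervisor only at its gates and can never write supervisor segments directly.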

Dynamic linking. Multics supported shared code that could be loaded into memory once and used by multiple programs simultaneously, with links to external procedures resolved at run time, on first use, rather than fixed when the program was built. This dramatically reduced memory usage and enabled more flexible software architectures, a technique that every modern operating system employs.
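Run-time linking can be illustrated with a lookup that happens on first use and is cached afterward. In Multics terms, the first reference to an unresolved link caused a linkage fault, after which the link was “snapped” so later calls went straight to the target; modern ELF systems do something similar with lazy binding through the procedure linkage table. The Python sketch below models only that first-use-then-cache behavior; `LinkageSection`, its search path of dictionaries, and the `faults` counter are hypothetical stand-ins, not a real loader.

```python
class LinkageSection:
    """Hypothetical per-program link table whose entries are resolved on first use."""

    def __init__(self, search_path):
        self._search_path = search_path  # shared "libraries": dicts of name -> function
        self._snapped = {}               # symbol -> resolved target
        self.faults = 0                  # how many first-use resolutions happened

    def call(self, symbol, *args):
        target = self._snapped.get(symbol)
        if target is None:
            self.faults += 1             # first reference: resolve now ("linkage fault")
            target = self._resolve(symbol)
            self._snapped[symbol] = target  # "snap" the link for later calls
        return target(*args)

    def _resolve(self, symbol):
        for library in self._search_path:
            if symbol in library:
                return library[symbol]
        raise LookupError(f"unresolved symbol: {symbol}")


if __name__ == "__main__":
    mathlib = {"square": lambda x: x * x}  # one shared copy used by both programs
    prog_a = LinkageSection([mathlib])
    prog_b = LinkageSection([mathlib])
    print(prog_a.call("square", 3), prog_a.call("square", 4))  # 9 16
    print(prog_a.faults, prog_b.faults)  # 1 0
```

Both programs point at the same `mathlib` object, mirroring how one copy of shared code can serve many programs, and each pays the lookup cost only once per symbol.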

Memory-mapped files. Multics unified its file system and virtual memory system so that files could be accessed as if they were in memory, with the operating system handling the details of reading from and writing to disk. This elegant abstraction simplified programming and improved performance for many workloads.
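Modern operating systems expose the same idea through memory-mapping calls. As a rough modern analogue (not Multics code), Python’s standard `mmap` module maps a file into the process’s address space so that an edit is just a slice assignment on bytes, and `flush()` asks the operating system to write the changed pages back to the file. The `replace_in_place` helper is hypothetical and only handles equal-length replacements, because this simple mapping does not change the file’s size.

```python
import mmap
import os
import tempfile


def replace_in_place(path, old: bytes, new: bytes) -> bool:
    """Overwrite the first occurrence of `old` with `new` through a memory map."""
    if len(old) != len(new):
        raise ValueError("this sketch only supports equal-length replacements")
    with open(path, "r+b") as f, mmap.mmap(f.fileno(), 0) as mem:  # 0 = map whole file
        pos = mem.find(old)
        if pos == -1:
            return False
        mem[pos:pos + len(new)] = new  # an ordinary in-memory slice assignment
        mem.flush()                     # push the dirty pages back to the file
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "memo.txt")
        with open(path, "wb") as f:
            f.write(b"hello multics")
        replace_in_place(path, b"multics", b"MULTICS")
        with open(path, "rb") as f:
            print(f.read())  # b'hello MULTICS'
```

No explicit read or write of file contents appears in `replace_in_place`; the program simply treats the file as memory, which is the abstraction the paragraph describes.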

Hot swapping and continuous operation. Multics was designed to run continuously without scheduled downtime — a requirement driven by the “computing utility” vision. Hardware components could be added, removed, or replaced while the system was running. This capability, routine in modern data centers, was revolutionary in the 1960s.

Managing a project of this scope was itself uncharted territory. With development divided among MIT, General Electric, and Bell Labs, Corbato and his collaborators had to invent their own processes for coordinating a software effort of unprecedented size.

Multics was also, famously, a troubled project. Its ambition outstripped the hardware capabilities of the era, and the system was chronically late and over budget. Bell Labs withdrew from the project in 1969, frustrated by the slow progress. This departure had an unexpected consequence that would reshape computing history: two Bell Labs researchers who had worked on Multics, Ken Thompson and Dennis Ritchie, went on to create Unix, a deliberately simpler operating system whose very name was a pun on Multics (originally “Unics,” for Uniplexed Information and Computing Service). Unix adopted several of Multics’s best ideas, such as the hierarchical file system and a command shell, while deliberately abandoning the complexity that had made Multics so difficult to build. (Other signature Unix features, such as pipes, originated at Bell Labs rather than in Multics.) The irony is that Multics’s most lasting legacy may be the system it inspired partly by negative example: Unix, whose descendants include BSD and macOS and whose design Linux later reimplemented, together forming the family of Unix-like systems that dominate modern computing.

Despite its reputation as a cautionary tale of overengineering, Multics itself was a working, production system. It ran at MIT, at Honeywell (which acquired GE’s computer division), at the Ford Motor Company, at various military installations, and at several universities. The last Multics system was shut down in October 2000 at the Canadian Department of National Defence, more than thirty years after Multics first entered service. Its security was also exceptional for its time. A 1974 U.S. Air Force evaluation by Paul Karger and Roger Schell did find exploitable flaws, but concluded that Multics was nonetheless significantly more secure than other contemporary systems, and in 1985 Multics became the first operating system to receive a B2 rating under the U.S. government’s Trusted Computer System Evaluation Criteria.

Corbato’s Law and Software Engineering Wisdom

Beyond his direct technical contributions, Corbato articulated insights about software development that have only grown more relevant with time. The most famous of these is what became known as Corbato’s Law: “The number of lines of code a programmer can write in a day is the same regardless of the programming language.” This observation — which Corbato made based on empirical measurements of programmer productivity — has profound implications for software engineering strategy.

If a programmer produces roughly the same number of lines per day whether writing in assembly language or a high-level language, then the choice of language determines how much functionality can be delivered per unit of time. A single line of a high-level language typically accomplishes far more than a single line of assembly. Corbato’s Law thus provides a quantitative argument for using the highest-level language appropriate for a given task — an argument that resonates with the work of John Backus, who created FORTRAN precisely to free scientists from the tedium of assembly programming, and with Edsger Dijkstra, who spent decades advocating for programming languages and methodologies that elevated the level of abstraction at which programmers worked.

Corbato’s Law also carries a more sobering implication, usually drawn as a corollary: if bugs are introduced at a roughly constant rate per line written, then the number of bugs grows with the size of a system, regardless of language. This means that managing complexity through modular design, rigorous testing, and clear interfaces is not optional but essential. The principles of structured programming, information hiding, and separation of concerns that emerged from the work of Dijkstra, Donald Knuth, and others were not merely academic preferences but practical necessities driven by the realities that Corbato’s Law describes.

Corbato's Law: Implications for Software Engineering

```text
Observation:
    Lines of code per programmer per day ≈ constant K
    (regardless of programming language)

Therefore:
    Functionality per day ≈ K × (expressiveness of language)
    In assembly: K lines → small functionality increment
    In C:        K lines → moderate functionality increment
    In Python:   K lines → large functionality increment

Corollary (for system reliability):
    Bugs introduced per day ≈ constant B (proportional to K)
    System of N lines → about N/K days of work → about (N/K) × B total bugs
    Reducing N (via higher-level languages or better abstractions)
    is the most direct lever on total bug count

This is why Multics used PL/I (a high-level language)
instead of assembly, a controversial choice in the 1960s
that Corbato's own data justified.
```

Multics’s deliberate choice of PL/I as its implementation language, rather than assembly, was a direct application of this principle. At the time, writing an operating system in a high-level language was considered reckless by many in the field. Corbato’s team demonstrated that it was not only feasible but advantageous, producing a more maintainable and more reliable system than would have been possible in assembly. This decision directly influenced Thompson and Ritchie, who in 1973 rewrote Unix, originally written in assembly language, in C; that rewrite in turn made Unix portable across hardware platforms and enabled its explosive proliferation.
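The arithmetic in the corollary can be checked directly. The Python sketch below uses purely hypothetical numbers, not measurements: a feature that takes 5,000 lines of assembly or 1,000 lines of a higher-level language, written at K = 50 lines per day, with B = 1 bug introduced per day. The function name `corbato_estimate` is invented for the example.

```python
def corbato_estimate(lines_needed, lines_per_day, bugs_per_day):
    """Return (days of work, expected bugs) for N lines under Corbato's Law.

    days = N / K and bugs = (N / K) * B.
    """
    if lines_per_day <= 0:
        raise ValueError("lines_per_day must be positive")
    days = lines_needed / lines_per_day
    bugs = days * bugs_per_day
    return days, bugs


if __name__ == "__main__":
    K, B = 50, 1  # hypothetical productivity and bug rate
    for language, lines in [("assembly", 5_000), ("high-level", 1_000)]:
        days, bugs = corbato_estimate(lines, K, B)
        print(f"{language}: {days:.0f} days, about {bugs:.0f} bugs")
    # assembly: 100 days, about 100 bugs
    # high-level: 20 days, about 20 bugs
```

Whatever the true ratio between two languages, the structure of the argument survives: with K and B held fixed, both effort and expected bug count scale linearly with N.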

Philosophy and Engineering Approach

Corbato’s engineering philosophy was shaped by decades of building and operating complex systems. His approach combined theoretical rigor with unflinching pragmatism — a balance that is easy to describe but extraordinarily difficult to practice consistently across a career spanning over forty years.

Key Principles

Simplicity as a discipline. Despite leading one of the most complex software projects of its era, Corbato was a vocal advocate for simplicity. He understood, perhaps better than anyone, the costs of unnecessary complexity. His experience with Multics taught him that every feature added to a system carries not just its own implementation cost but an ongoing tax in terms of maintenance, debugging, and interaction with every other feature. This principle is echoed in the Unix philosophy of doing one thing well — a philosophy that emerged directly from the Multics experience.

Measure before you optimize. Corbato’s Law itself was the product of empirical measurement, not theoretical speculation. He insisted on measuring actual programmer productivity, actual system performance, and actual user behavior before making design decisions. This evidence-based approach to engineering was ahead of its time and anticipated the data-driven methodologies that dominate modern software development.

Design for the user, not the machine. The entire motivation behind time-sharing was to make computing serve human needs rather than forcing humans to accommodate the machine’s limitations. Corbato’s systems were designed around the principle that human time is more valuable than machine time — a radical idea in an era when a single computer cost millions of dollars and a programmer’s annual salary was a tiny fraction of that. This human-centered design philosophy now seems obvious, but in the 1960s it represented a fundamental shift in thinking about the relationship between people and machines.

Security is not optional. Multics’s ring-based security architecture reflected Corbato’s conviction that security must be designed into a system from the ground up, not bolted on after the fact. Multics’s security record, strong for its era though not flawless, stands in contrast to the constant stream of vulnerabilities in systems that treated security as an afterthought, and supports this principle.

Invest in infrastructure. Both CTSS and Multics were infrastructure projects — they did not solve any specific application problem but instead created platforms on which others could build solutions. Corbato understood that the highest leverage contributions in computing are often at the infrastructure level, where a single improvement benefits every application built on top of it. This principle guided his career focus on operating systems rather than applications.

Legacy and Modern Relevance

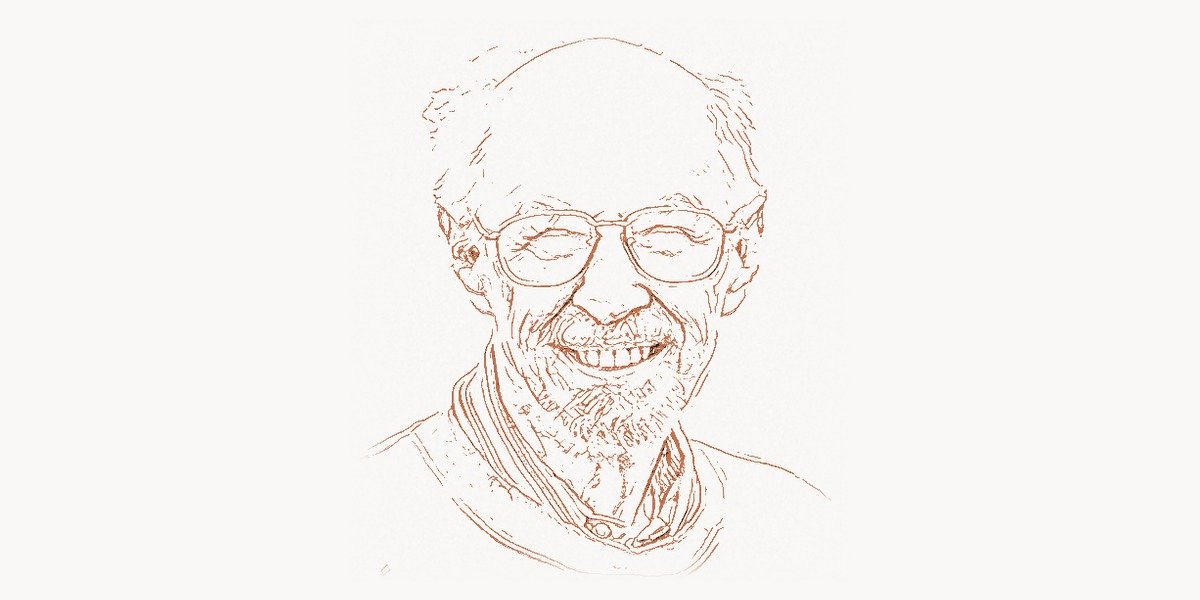

Fernando Corbato passed away on July 12, 2019, at the age of 93. His contributions to computing were recognized with the ACM Turing Award in 1990, often called the Nobel Prize of computing, for his “pioneering work organizing the concepts and leading the development of the general-purpose, large-scale, time-sharing and resource-sharing computer systems, CTSS and Multics.” But the formal recognition only hints at the depth and breadth of his influence.

The lineage from Corbato’s work to modern computing is direct and unbroken. CTSS proved that time-sharing worked, establishing the paradigm that evolved into modern multitasking operating systems. Multics pioneered the hierarchical file system, security rings, dynamic linking, and the computing utility model. Unix, created by researchers who had worked on Multics and were inspired by both its successes and its failures, became the ancestor or model of Linux, macOS, iOS, Android, and most of the servers on the internet. When you navigate a directory tree on your laptop, you are using a concept that Multics introduced. When your operating system prevents a user application from corrupting kernel memory, it is relying on the kind of hardware-enforced privilege separation that Multics pioneered. When you access a cloud computing service, you are using a computing utility model that Corbato envisioned in 1964.

The computer password, Corbato’s most quotidian invention, has become both ubiquitous and controversial. Corbato himself acknowledged that passwords had become an inadequate security mechanism, and the modern push toward multi-factor authentication, biometrics, and passkeys represents an ongoing effort to solve the problem he first addressed in 1961. Yet even as passwords are supplemented by newer mechanisms, the fundamental concept of authenticating a user’s identity before granting access to resources — the concept Corbato introduced — remains the bedrock of digital security.

Corbato’s Law continues to inform software engineering decisions. The ongoing trend toward higher-level programming languages, more expressive frameworks, and more powerful abstractions follows from the insight Corbato articulated: if programmer output measured in lines of code is roughly constant, then the main lever for increasing delivered functionality is to make each line do more. This principle justifies investments in better tools, better languages, and better development environments.

Perhaps most importantly, Corbato’s career embodies a truth about technological progress that is easy to forget in an era obsessed with disruption and novelty: the most transformative innovations are often those that make existing capabilities more accessible. Time-sharing did not create new computational power — it made existing power available to more people, more efficiently. The password did not create security — it made shared systems practical by giving users confidence that their work was protected. Multics did not invent the concept of an operating system — it articulated and implemented the principles on which all subsequent operating systems would be built. In each case, Corbato’s genius lay not in imagining something entirely new, but in engineering practical solutions that unlocked the latent potential of existing technology. That is a kind of innovation that endures.

Key Facts

- Born Fernando Jose Corbato on July 1, 1926, in Oakland, California

- Earned a bachelor’s degree in physics from Caltech (1950) and a PhD in physics from MIT (1956)

- Created the Compatible Time-Sharing System (CTSS) in 1961 — the first practical time-sharing operating system

- Invented the computer password in 1961 to protect user accounts on CTSS

- Led the Multics project (1964-onward) in collaboration with General Electric and Bell Labs

- Multics pioneered hierarchical file systems, security rings, dynamic linking, and memory-mapped files

- Bell Labs researchers who left the Multics project went on to create Unix — whose name is a pun on Multics

- Formulated Corbato’s Law: the number of lines of code a programmer writes per day is constant regardless of language

- Received the ACM Turing Award in 1990 for pioneering time-sharing and resource-sharing systems

- Spent his entire academic career at MIT, serving as a professor of computer science

- Multics was regarded as one of the most secure systems of its era and in 1985 became the first operating system to earn a B2 security rating

- The last Multics system ran until October 2000, more than 30 years after Multics entered service

- Passed away on July 12, 2019, at the age of 93

Frequently Asked Questions

Why is Fernando Corbato considered the inventor of the computer password?

When Corbato’s team built CTSS in 1961, they faced a problem that had never arisen before in computing: multiple users needed to store private files on the same machine. In the batch-processing era, each user’s job ran in isolation and was physically separated on punched cards and printed output. But a time-sharing system, where users logged in through terminals and their files coexisted on the same disk, required a mechanism to verify each user’s identity and restrict access to their files. Corbato’s solution was to assign each user a unique login name and a secret password stored in a system file. When a user connected to CTSS, they had to provide this password before gaining access. This was the first implementation of password-based authentication on a computer system. While the concept seems obvious in retrospect, it was a genuine invention — no prior computer system had needed or implemented such a mechanism. Corbato himself later became critical of passwords, noting that they had become a poor security solution as systems grew more complex. He acknowledged that the proliferation of passwords across dozens of services had made them unmanageable for most users. Nevertheless, the basic model he invented — authenticating identity with a secret known only to the user — remains the foundation of digital security, even as it is augmented by newer technologies such as biometrics and hardware security keys.

How did Multics influence the creation of Unix, and why does the relationship matter?

The connection between Multics and Unix is one of the most consequential relationships in computing history. Ken Thompson and Dennis Ritchie both worked on the Multics project at Bell Labs before Bell withdrew from the collaboration in 1969. Frustrated but inspired, Thompson began writing a new operating system on a spare PDP-7 minicomputer, applying the lessons he had learned from Multics. The resulting system — originally called Unics (a pun on Multics, suggesting it was a simpler, “uni” version of the “multi” system) and later respelled Unix — adopted many of Multics’s core ideas: a hierarchical file system, a command shell, the concept of treating devices as files, and the use of a high-level language (C, instead of Multics’s PL/I) for implementation. However, Unix deliberately rejected Multics’s complexity, favoring small, composable tools over monolithic integrated systems. This relationship matters because Unix became the ancestor of an entire family of operating systems — including Linux, macOS, iOS, and Android — that collectively run the majority of the world’s computing devices. In a very real sense, Multics’s DNA, filtered through Unix, is present in virtually every computer and smartphone on the planet. Corbato’s work on Multics thus influenced modern computing both directly (through the concepts Multics pioneered) and indirectly (through Unix’s selective adoption and simplification of those concepts).

What was Corbato’s Law and why does it still matter for modern software engineering?

Corbato’s Law is the empirical observation that the number of lines of code a programmer can write in a day remains roughly constant regardless of the programming language being used. Whether writing assembly language, C, Java, or Python, a programmer produces approximately the same quantity of code per day. The implications of this observation are significant and enduring. First, it provides a powerful argument for using higher-level programming languages: if each programmer writes the same number of lines per day, and each line of a higher-level language accomplishes more than a line of assembly, then choosing a more expressive language directly increases the amount of functionality delivered per unit of developer time. This principle drove Corbato’s decision to implement Multics in PL/I and influenced Thompson and Ritchie’s decision to write Unix in C. Second, Corbato’s Law implies that the total number of bugs in a system is roughly proportional to its total lines of code, since bugs are introduced at a roughly constant rate per line. This means that reducing code volume — through better abstractions, code reuse, and higher-level languages — is the most effective strategy for improving software reliability. In the modern era, this principle justifies investments in frameworks, libraries, code generation tools, and increasingly, AI-assisted development. Corbato’s insight, derived from careful measurement of 1960s programming practices, remains one of the most practically useful observations in all of software engineering.