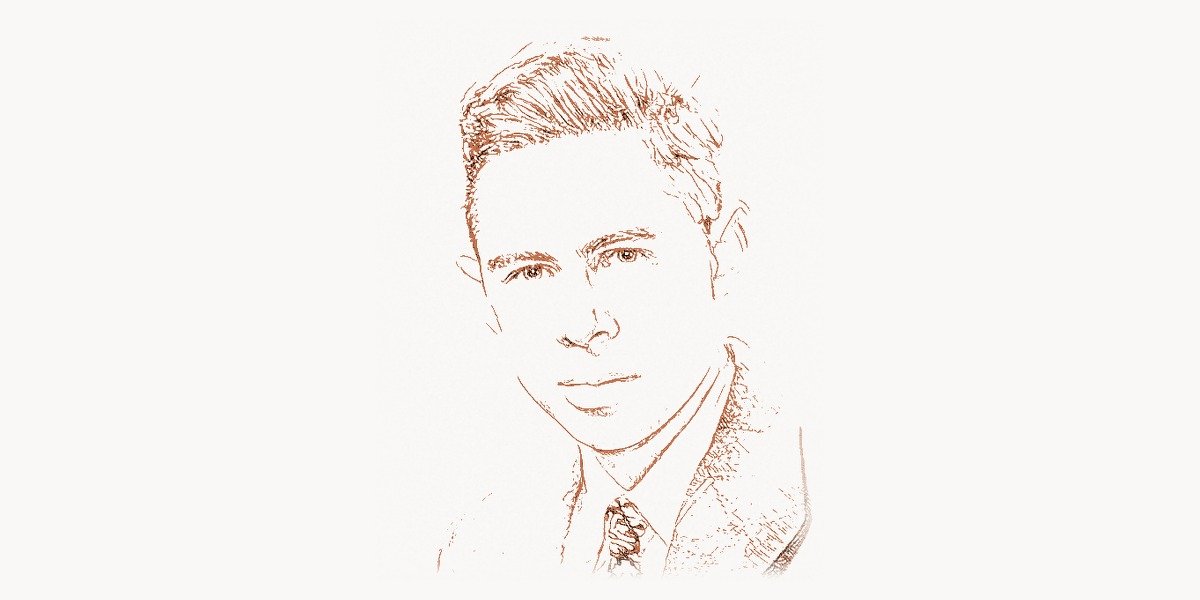

In the summer of 1958, a 29-year-old psychologist at Cornell Aeronautical Laboratory stood before a room full of reporters and military officials and demonstrated a machine that could learn. It was not programmed with explicit rules. It was not told what to look for. Instead, it was shown a series of images, and it figured out, on its own, how to tell them apart. The machine was called the Mark I Perceptron, and the man who built it was Frank Rosenblatt. The New York Times reported the event with a headline suggesting the Navy had revealed a computer that would one day walk, talk, and be conscious. The claim was wildly exaggerated. But the underlying idea, that a machine could learn from experience rather than following fixed instructions, was not exaggerated at all. It was, in fact, the foundational insight of modern deep learning, neural networks, and artificial intelligence as we know it today. Frank Rosenblatt saw it six decades before the rest of the world caught up.

Early Life and Education

Frank Rosenblatt was born on July 11, 1928, in New Rochelle, New York. His father was of Russian-Jewish origin, having emigrated to the United States, and worked as a civil engineer. Rosenblatt grew up in a household that valued intellectual achievement, and he showed an early aptitude for both science and the humanities. He attended the Bronx High School of Science, one of the most prestigious public high schools in the United States, known for producing an extraordinary number of Nobel laureates and leading scientists.

After graduating from the Bronx High School of Science, Rosenblatt enrolled at Cornell University, where he would spend the majority of his academic and professional life. He earned his bachelor’s degree in 1950 and then pursued graduate studies in psychology, not computer science or electrical engineering. This distinction is critical to understanding Rosenblatt’s approach. He was not primarily interested in building faster calculators or more efficient circuits. He was interested in the brain: how biological organisms perceive, learn, and remember. His doctoral research developed a statistical technique for the multivariate analysis of psychological data, and he received his Ph.D. in psychology from Cornell in 1956.

After completing his doctorate, Rosenblatt joined the Cornell Aeronautical Laboratory in Buffalo, New York, a research facility affiliated with Cornell University that conducted work for the U.S. military. It was there, at the intersection of psychology, neuroscience, and engineering, that Rosenblatt began the work that would define his career. He was hired to work on cognitive science and perception — specifically, to investigate whether machines could be built that perceived and learned in ways analogous to biological neural systems. The laboratory’s connection to military funding gave him access to substantial resources, including the hardware needed to build physical implementations of his theoretical models.

The Perceptron Breakthrough

Technical Innovation

In 1957, Rosenblatt published a technical report at the Cornell Aeronautical Laboratory titled “The Perceptron: A Perceiving and Recognizing Automaton.” The following year, he published the formal academic paper “The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain” in the journal Psychological Review. The paper proposed a fundamentally new approach to machine intelligence — one based not on symbolic logic or explicit programming, but on the architecture of the brain itself.

The perceptron was a mathematical model of a single neuron. In its simplest form, it takes a set of numerical inputs (analogous to signals arriving at a biological neuron through its dendrites), multiplies each input by a corresponding weight (analogous to the strength of a synaptic connection), sums the weighted inputs, and passes the result through a threshold function (analogous to the neuron’s firing threshold). If the weighted sum exceeds the threshold, the perceptron outputs 1 (the neuron fires); otherwise, it outputs 0.
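The description above can be written compactly. The symbols here (inputs x, weights w, threshold θ, bias b) are notation chosen for this sketch, not Rosenblatt's own:

```latex
y =
\begin{cases}
1 & \text{if } \sum_{i=1}^{n} w_i x_i > \theta, \\
0 & \text{otherwise,}
\end{cases}
\qquad \text{or equivalently} \qquad
y = \mathbb{1}\!\left[\, \mathbf{w} \cdot \mathbf{x} + b > 0 \,\right], \quad b = -\theta .
```

Folding the threshold into a bias term b is a common convenience: the unit then always compares against zero, and the threshold can be learned like any other weight.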

    import numpy as np


    class Perceptron:
        """
        Frank Rosenblatt's perceptron (1958).

        The fundamental building block of neural networks.
        A single artificial neuron that learns through iterative
        weight adjustment: the first machine learning algorithm
        proven to converge on linearly separable data.
        """

        def __init__(self, n_inputs, learning_rate=0.1):
            # Initialize weights to small random values
            # Rosenblatt used random initial weights in the Mark I
            self.weights = np.random.randn(n_inputs) * 0.01
            self.bias = 0.0
            self.learning_rate = learning_rate

        def predict(self, inputs):
            """Compute weighted sum and apply threshold function."""
            weighted_sum = np.dot(inputs, self.weights) + self.bias
            # Step activation: fire (1) if above threshold, else 0
            return 1 if weighted_sum > 0 else 0

        def train(self, training_data, labels, epochs=100):
            """
            The perceptron learning rule:
            w_new = w_old + learning_rate * (target - prediction) * input

            Rosenblatt proved this converges for linearly separable data:
            the Perceptron Convergence Theorem (1962).
            """
            for epoch in range(epochs):
                errors = 0
                for inputs, target in zip(training_data, labels):
                    prediction = self.predict(inputs)
                    error = target - prediction
                    if error != 0:
                        # Adjust weights proportional to error and input
                        self.weights += self.learning_rate * error * inputs
                        self.bias += self.learning_rate * error
                        errors += 1
                if errors == 0:
                    print(f"Converged after {epoch + 1} epochs")
                    return
            print(f"Training completed after {epochs} epochs")


    # Example: Teaching a perceptron to perform logical AND
    # This is the type of pattern classification Rosenblatt demonstrated
    X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]])
    y = np.array([0, 0, 0, 1])  # AND truth table

    perceptron = Perceptron(n_inputs=2, learning_rate=0.1)
    perceptron.train(X, y, epochs=50)

    # Test the trained perceptron
    for inputs, label in zip(X, y):
        prediction = perceptron.predict(inputs)
        print(f"Input: {inputs}, Expected: {label}, Predicted: {prediction}")

What made the perceptron revolutionary was not just its structure but its learning algorithm. Rosenblatt proposed that the weights could be adjusted automatically based on the difference between the perceptron’s output and the correct answer. When the perceptron made an error, the weights were nudged in the direction that would reduce the error. This process, repeated over many examples, caused the perceptron to gradually converge on a set of weights that correctly classified the training data. Rosenblatt proved mathematically that this convergence was guaranteed for any problem where a correct set of weights existed (the Perceptron Convergence Theorem, published in his 1962 book “Principles of Neurodynamics”).
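The convergence guarantee can be made concrete with a small experiment. The sketch below assumes the textbook form of the result, in which the number of weight updates is at most (R/γ)², where R is the largest input norm and γ is the margin of some unit-length separating weight vector. That form is usually credited to Novikoff's 1962 proof and is not quoted from the article. The labels are recoded to ±1, the bias is handled by appending a constant 1 to each input, and `w_star` is a hand-picked separator used only to evaluate the bound.

```python
import numpy as np

# Logical AND with labels in {-1, +1}. The trailing 1 in each row lets the
# bias be learned as an ordinary weight.
X = np.array([[0, 0, 1], [0, 1, 1], [1, 0, 1], [1, 1, 1]], dtype=float)
y = np.array([-1, -1, -1, 1], dtype=float)

# Hand-picked separating vector (an assumption for illustration), unit length.
w_star = np.array([2.0, 2.0, -3.0])
w_star /= np.linalg.norm(w_star)

R = np.max(np.linalg.norm(X, axis=1))  # largest input norm
gamma = np.min(y * (X @ w_star))       # smallest margin under w_star
bound = (R / gamma) ** 2               # upper limit on the number of updates

# Perceptron rule from zero weights: update only on misclassified examples.
w = np.zeros(3)
mistakes = 0
for _ in range(1000):
    clean_pass = True
    for x_i, y_i in zip(X, y):
        if y_i * (w @ x_i) <= 0:
            w += y_i * x_i
            mistakes += 1
            clean_pass = False
    if clean_pass:
        break

print(f"updates made: {mistakes}, theoretical limit: {bound:.1f}")
```

The limit depends only on the geometry of the data (R and γ), not on the order of presentation, which is what makes the theorem a guarantee rather than an empirical observation.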

This was a paradigm shift. Before Rosenblatt, the dominant approach to artificial intelligence — championed by researchers like John McCarthy and Marvin Minsky — was symbolic AI: writing explicit rules and logical statements that a computer would follow. Rosenblatt proposed something entirely different: a system that learned its own rules from data. The implications were profound. If a machine could learn to recognize patterns from examples, rather than having patterns hand-coded by programmers, it could potentially learn to do things that no programmer knew how to specify explicitly — like recognizing faces, understanding speech, or interpreting complex visual scenes.

Why It Mattered

The perceptron mattered because it was the first formal, mathematically grounded demonstration that a machine could learn. Earlier cybernetics work by Warren McCulloch and Walter Pitts (1943) had shown that networks of simple units could compute logical functions, and Donald Hebb (1949) had proposed that synaptic connections could be strengthened through use. But Rosenblatt was the first to combine these ideas into a concrete, trainable model with a proven convergence guarantee and a working physical implementation.

The perceptron also mattered because of its biological plausibility. Unlike symbolic AI, which had no meaningful connection to how actual brains work, the perceptron was inspired by and loosely modeled on real neural architecture. Rosenblatt was explicit about this: his goal was not just to build a useful engineering tool but to develop computational theories of how biological perception worked. This dual emphasis on engineering utility and biological insight established a research tradition that continues in modern computational neuroscience.

The perceptron learning rule introduced an idea that remains central to modern machine learning: a machine should adjust its internal parameters based on the discrepancy between its output and the correct answer. Every neural network trained today, from the convolutional networks that Yann LeCun developed for image recognition to the transformers behind ChatGPT, learns by the same fundamental principle that Rosenblatt formalized in 1958: compute an error and adjust the weights to reduce it. Backpropagation, the algorithm that made deep learning possible, extends this error-driven update to multilayer networks by propagating the error backward through the hidden layers.

Other Major Contributions

Rosenblatt’s contributions extended well beyond the single-layer perceptron. His work encompassed hardware implementation, theoretical extensions to multilayer networks, and foundational contributions to pattern recognition as a discipline.

The Mark I Perceptron, completed in 1958 at the Cornell Aeronautical Laboratory with funding from the U.S. Office of Naval Research, was not a software simulation. It was a physical machine. The Mark I used a 20×20 grid of cadmium sulfide photocells as its input “retina” — 400 sensors that detected light and dark regions in simple images. These inputs were connected through a patch panel to a layer of 512 “association units” with randomly wired connections. The association units fed into 8 output units whose weights were adjusted by electric motors controlled by the learning algorithm. The machine literally turned physical knobs to learn. It weighed several tons and filled an entire room, but it worked. It learned to distinguish between simple shapes — triangles, squares, and circles — after being shown a few dozen examples of each. This was the first time in history that a physical machine had learned to classify visual patterns from examples.
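The three-stage layout described above can be sketched in software. This is an illustration rather than a reconstruction of the hardware: the layer sizes (400, 512, 8) come from the text, while the class name `MarkISketch`, the fan-in of 40 connections per association unit, the ±1 connection strengths, and the zero thresholds are all assumptions made for the example.

```python
import numpy as np


class MarkISketch:
    """400 sensors -> 512 randomly wired association units -> 8 trained outputs."""

    def __init__(self, n_sensors=400, n_assoc=512, n_out=8, fan_in=40, seed=0):
        rng = np.random.default_rng(seed)
        # Fixed random wiring (never trained): each association unit taps
        # `fan_in` sensors with excitatory (+1) or inhibitory (-1) links.
        self.wiring = np.zeros((n_sensors, n_assoc))
        for j in range(n_assoc):
            taps = rng.choice(n_sensors, size=fan_in, replace=False)
            self.wiring[taps, j] = rng.choice([-1.0, 1.0], size=fan_in)
        # Trainable output weights, standing in for the motor-driven knobs.
        self.W = np.zeros((n_assoc, n_out))
        self.b = np.zeros(n_out)

    def associate(self, image):
        """Map a 20x20 binary image to binary association-unit activity."""
        return (image.reshape(-1) @ self.wiring > 0).astype(float)

    def respond(self, image):
        """Threshold each output unit's weighted sum of association activity."""
        return (self.associate(image) @ self.W + self.b > 0).astype(int)

    def train_step(self, image, target):
        """Perceptron rule applied to the output layer only."""
        a = self.associate(image)
        error = target - self.respond(image)
        self.W += np.outer(a, error)
        self.b += error


# Two toy "retina" images: left half lit and right half lit.
left = np.zeros((20, 20))
left[:, :10] = 1
right = np.zeros((20, 20))
right[:, 10:] = 1
t_left = np.eye(8, dtype=int)[0]   # output unit 0 should fire for "left"
t_right = np.eye(8, dtype=int)[1]  # output unit 1 should fire for "right"

machine = MarkISketch()
for _ in range(10):
    machine.train_step(left, t_left)
    machine.train_step(right, t_right)

print("left  ->", machine.respond(left))
print("right ->", machine.respond(right))
```

Only `W` and `b` change during training. The random wiring stays fixed, which mirrors the division of labor in the text between the patch-panel connections and the adjustable output weights.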

Rosenblatt did not stop at single-layer perceptrons. In his 1962 book “Principles of Neurodynamics: Perceptrons and the Theory of Brain Mechanisms,” he described a range of perceptron architectures, including multilayer networks that he called “cross-coupled” and “back-coupled” systems. He discussed perceptrons with multiple layers of trainable weights, feedback connections, and temporal processing capabilities. He understood that multilayer networks could solve problems that single-layer perceptrons could not — a point that would become the center of a famous controversy. The book laid out a theoretical framework for neural computation that was decades ahead of its time, anticipating concepts that would later be central to the deep learning revolution of the 2010s.

Rosenblatt also made significant contributions to pattern recognition theory more broadly. He developed statistical methods for analyzing the capacity and generalization properties of learning machines — questions about how much a network could learn, how well it could generalize from training examples to new data, and what factors determined its performance. These questions are still at the center of modern machine learning research, and Rosenblatt’s early theoretical work on them provided a foundation for later developments in computational learning theory.

His work on random connectivity was another prescient insight: the initial wiring of a neural network did not need to be carefully designed but could be random, with learning handling the fine-tuning. Modern techniques like random initialization of neural network weights, random feature extraction, and reservoir computing all build on the principle that Rosenblatt explored: that randomness, combined with learning, can be a powerful computational strategy. Researchers today still grapple with weight initialization questions that Rosenblatt first raised in the 1950s.

Philosophy and Approach

Key Principles

Rosenblatt’s intellectual framework was defined by several principles that set him apart from his contemporaries and that have been vindicated by the subsequent history of AI.

First, he believed that intelligence was fundamentally about learning, not about logic. While the dominant symbolic AI school — led by McCarthy, Minsky, Allen Newell, and Herbert Simon — focused on encoding human knowledge as logical rules and search procedures, Rosenblatt argued that the essence of intelligence was the ability to extract patterns from experience. He did not deny that logic and reasoning were important, but he believed they were secondary to perception and learning. This bottom-up, data-driven view of intelligence was a minority position in the 1960s. It is the consensus position in AI today.

Second, Rosenblatt believed that the brain was the right model for intelligent machines. He was not interested in building systems that were merely useful — he wanted to understand how biological neural systems actually worked and to build artificial systems based on those principles. This commitment to biological plausibility separated him from both the symbolic AI camp (which had no interest in the brain) and from most engineers (who simply wanted systems that worked, regardless of mechanism). Modern computational neuroscience and neuromorphic computing owe a direct intellectual debt to Rosenblatt’s insistence on taking the brain seriously as a computational architecture.

Third, Rosenblatt was committed to building working hardware, not just writing theoretical papers. The Mark I Perceptron was a physical machine that demonstrated his ideas in concrete, undeniable terms. This emphasis on implementation was important both scientifically (it proved the concept worked) and rhetorically (it captured the imagination of the public and funders). In today’s AI landscape, where practical implementations of large-scale neural networks have driven progress far more than pure theory, Rosenblatt’s bias toward building is especially relevant.

Fourth, Rosenblatt was intellectually courageous. He made bold claims about the potential of perceptrons and neural networks at a time when the establishment was skeptical. When Minsky and Seymour Papert published “Perceptrons” in 1969 — a book that proved fundamental limitations of single-layer perceptrons and was widely (mis)interpreted as a refutation of all neural network research — Rosenblatt continued to defend and develop his ideas. The criticism was mathematically valid for single-layer networks, but Rosenblatt had always argued that multilayer networks could overcome those limitations. History proved him right, though he did not live to see it.

Legacy and Impact

Frank Rosenblatt died on July 11, 1971 — his 43rd birthday — in a boating accident on Chesapeake Bay. His death came at the worst possible time for his legacy. The publication of Minsky and Papert’s “Perceptrons” in 1969 had triggered what historians of AI call the first “AI winter” for neural networks. Funding dried up. Researchers moved to other areas. The perceptron was widely seen as a dead end. Without Rosenblatt alive to advocate for his ideas and push the research forward, neural network research entered a period of dormancy that lasted over a decade.

The revival began in the 1980s, when researchers including Geoffrey Hinton, David Rumelhart, and Ronald Williams demonstrated that the backpropagation algorithm could train multilayer perceptrons effectively — solving exactly the problem that Minsky and Papert had identified. Backpropagation extended Rosenblatt’s perceptron learning rule to networks with hidden layers, allowing them to learn complex, nonlinear patterns that single-layer perceptrons could not represent. The multilayer perceptron, trained by backpropagation, became the workhorse of neural network research for two decades.

The deep learning revolution of the 2010s, driven by Hinton, Yann LeCun, Yoshua Bengio, and Jürgen Schmidhuber, among others, was the ultimate vindication of Rosenblatt’s vision. When convolutional neural networks began matching or exceeding human-level performance on image recognition benchmarks, when recurrent networks learned to translate languages, and when transformers learned to generate coherent text, they were all doing what Rosenblatt had predicted: learning complex patterns from data using networks of simple, interconnected units with adjustable weights. The architecture was more sophisticated, the training algorithms more powerful, and the hardware incomparably faster, but the fundamental principle was Rosenblatt’s.

Today, every major AI system, from image classifiers to language models, from autonomous vehicles to protein structure predictors, is a descendant of Rosenblatt’s perceptron. The artificial neurons in a modern transformer model process inputs, apply weights, sum results, and pass them through activation functions in exactly the pattern Rosenblatt described. The learning algorithms adjust those weights based on prediction errors, following the logic Rosenblatt formalized. The field has added enormous sophistication (attention mechanisms, normalization layers, residual connections, massive datasets, specialized hardware), but the conceptual core remains unchanged.

    """
    From Rosenblatt's Perceptron to a Modern Neural Network

    This code shows how a multilayer perceptron (MLP), the direct
    descendant of Rosenblatt's single-layer perceptron, is built.
    Each layer is a collection of perceptrons.
    """
    import numpy as np


    class MultilayerPerceptron:
        """
        A simple multilayer perceptron (MLP).

        Rosenblatt described multilayer architectures in
        'Principles of Neurodynamics' (1962), but lacked
        an efficient training algorithm for hidden layers.
        Backpropagation (Rumelhart, Hinton, Williams, 1986)
        solved this problem and unlocked deep learning.
        """

        def __init__(self, layer_sizes):
            self.weights = []
            self.biases = []
            for i in range(len(layer_sizes) - 1):
                w = np.random.randn(layer_sizes[i], layer_sizes[i+1]) * 0.1
                b = np.zeros((1, layer_sizes[i+1]))
                self.weights.append(w)
                self.biases.append(b)

        def sigmoid(self, z):
            """Smooth activation that replaces Rosenblatt's hard threshold."""
            return 1 / (1 + np.exp(-np.clip(z, -500, 500)))

        def sigmoid_derivative(self, a):
            # Expects the sigmoid output a, not the pre-activation z
            return a * (1 - a)

        def forward(self, X):
            """Forward pass through all layers."""
            self.activations = [X]
            current = X
            for w, b in zip(self.weights, self.biases):
                z = current @ w + b
                current = self.sigmoid(z)
                self.activations.append(current)
            return current

        def backward(self, y, learning_rate=0.5):
            """
            Backpropagation: the generalization of Rosenblatt's
            learning rule to networks with hidden layers.
            """
            m = y.shape[0]
            delta = (self.activations[-1] - y) * \
                self.sigmoid_derivative(self.activations[-1])
            for i in range(len(self.weights) - 1, -1, -1):
                dw = self.activations[i].T @ delta / m
                db = np.sum(delta, axis=0, keepdims=True) / m
                if i > 0:
                    # Pass the error to the previous layer before updating weights[i]
                    delta = (delta @ self.weights[i].T) * \
                        self.sigmoid_derivative(self.activations[i])
                self.weights[i] -= learning_rate * dw
                self.biases[i] -= learning_rate * db


    # XOR: the problem Minsky and Papert proved a single perceptron cannot solve.
    # A two-layer perceptron handles it easily.
    X = np.array([[0,0],[0,1],[1,0],[1,1]])
    y = np.array([[0],[1],[1],[0]])

    mlp = MultilayerPerceptron([2, 4, 1])
    for epoch in range(5000):
        mlp.forward(X)  # caches the activations used by backward()
        mlp.backward(y, learning_rate=2.0)

    print("XOR predictions after training:")
    for inputs, prediction in zip(X, mlp.forward(X)):
        print(f" {inputs} -> {prediction[0]:.4f}")

Rosenblatt’s influence extends beyond the technical. His insistence that machines could learn, and that learning was the path to intelligence, reshaped the philosophical landscape of AI. The question is no longer whether machines can learn (they obviously can) but how far that learning can go and what it means. Modern discussions about AI alignment, safety, and the nature of machine understanding all trace back to the research program Rosenblatt initiated. He did not live to see the vindication of his ideas. He missed the backpropagation breakthrough by 15 years, the deep learning revolution by 40 years, and the era of large language models by over 50 years. But every one of those breakthroughs was built on the foundation he laid. The perceptron was the seed from which the entire field of neural network research grew. Rosenblatt planted it, defended it against fierce criticism, and died before it bore fruit. The fruit, when it came, changed the world.

Key Facts

- Born: July 11, 1928, New Rochelle, New York, United States

- Died: July 11, 1971, Chesapeake Bay, United States (boating accident, on his 43rd birthday)

- Known for: Inventing the perceptron, founding neural network research, the Perceptron Convergence Theorem

- Key works: “The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain” (1958), “Principles of Neurodynamics” (1962), Mark I Perceptron hardware (1958)

- Education: B.A. from Cornell University (1950), Ph.D. in Psychology from Cornell University (1956)

- Affiliations: Cornell Aeronautical Laboratory, Cornell University

- Fields: Neural networks, pattern recognition, computational neuroscience, machine learning

- Posthumous recognition: IEEE Frank Rosenblatt Award (established 2004), acknowledged as a founding father of deep learning by historians of AI

FAQ

What exactly is a perceptron and why was it so important?

A perceptron is a mathematical model of a single artificial neuron, proposed by Frank Rosenblatt in 1958. It takes numerical inputs, multiplies each by a learnable weight, sums the results, and outputs 1 or 0 based on whether the sum exceeds a threshold. Its importance lies not in its simplicity but in its learning algorithm: the perceptron automatically adjusts its weights based on errors, converging on correct classifications without being explicitly programmed. This was the first formally proven machine learning algorithm, and its core principle — adjusting parameters to minimize errors — is the foundation of all modern neural networks, including the deep learning systems that power image recognition, language models, and autonomous vehicles today.

Why did neural network research stall after Rosenblatt’s perceptron?

In 1969, Marvin Minsky and Seymour Papert published “Perceptrons,” a book that rigorously proved that single-layer perceptrons cannot solve problems that are not linearly separable — most famously, the XOR (exclusive or) function. The book was widely interpreted as proving that neural networks in general were a dead end, which was not what Minsky and Papert actually claimed. Combined with Rosenblatt’s death in 1971, this led to a dramatic reduction in funding and interest in neural network research throughout the 1970s. The field revived in the 1980s when backpropagation was shown to effectively train multilayer networks, overcoming the exact limitation Minsky and Papert had identified.
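The XOR limitation is easy to observe directly. The sketch below trains one threshold unit with the perceptron rule on AND and on XOR. The function name `train_single_unit`, the zero initial weights, and the learning rate of 1 are choices made for this example. The comment block gives the short algebraic reason why no weights can work for XOR.

```python
import numpy as np

# Why XOR has no solution for a unit that outputs 1 when w1*x1 + w2*x2 + b > 0:
#   (0,0) -> 0 requires b <= 0
#   (0,1) -> 1 requires w2 + b > 0
#   (1,0) -> 1 requires w1 + b > 0
#   (1,1) -> 0 requires w1 + w2 + b <= 0
# Adding the two middle lines gives w1 + w2 + 2b > 0, so w1 + w2 + b > -b >= 0,
# which contradicts the last line.

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y_and = np.array([0, 0, 0, 1])
y_xor = np.array([0, 1, 1, 0])


def train_single_unit(X, y, epochs=1000, lr=1.0):
    """Perceptron rule on one threshold unit; returns (weights, bias, converged)."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x_i, target in zip(X, y):
            prediction = 1 if x_i @ w + b > 0 else 0
            if prediction != target:
                w += lr * (target - prediction) * x_i
                b += lr * (target - prediction)
                errors += 1
        if errors == 0:  # a full pass with no mistakes
            return w, b, True
    return w, b, False


_, _, and_converged = train_single_unit(X, y_and)
_, _, xor_converged = train_single_unit(X, y_xor)
print("AND converged:", and_converged)
print("XOR converged:", xor_converged)
```

Raising `epochs` does not help on XOR: an error-free pass would mean a separating line exists, and the inequalities above rule that out.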

How does Rosenblatt’s perceptron relate to modern deep learning?

Modern deep learning is the direct descendant of Rosenblatt’s perceptron. A deep neural network is, structurally, many layers of perceptrons connected together. Each neuron in a modern network performs the same basic operation Rosenblatt described: it receives inputs, applies weights, sums them, and passes the result through an activation function. The training process still follows Rosenblatt’s fundamental insight — adjust weights based on the difference between predicted and actual outputs. The key advances since Rosenblatt have been the backpropagation algorithm (which extends his learning rule to multilayer networks), better activation functions (replacing his hard threshold with smooth, differentiable functions), vastly larger datasets, and enormously more powerful hardware. But the conceptual DNA of every modern neural network traces directly back to Rosenblatt’s 1958 perceptron.
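The point about differentiable activation functions can be checked numerically. The sketch below is a minimal illustration with arbitrarily chosen sample points: it estimates the slope of a hard threshold and of a sigmoid by finite differences. The helper names `step`, `sigmoid`, and `numerical_slope` are introduced only for this example.

```python
import numpy as np


def step(z):
    """Hard threshold of the kind used in the original perceptron."""
    return (z > 0).astype(float)


def sigmoid(z):
    """Smooth replacement commonly used with backpropagation."""
    return 1.0 / (1.0 + np.exp(-z))


def numerical_slope(f, z, h=1e-4):
    """Central finite-difference estimate of df/dz."""
    return (f(z + h) - f(z - h)) / (2 * h)


z = np.array([-2.0, -0.5, 0.5, 2.0])
step_slope = numerical_slope(step, z)
sigmoid_slope = numerical_slope(sigmoid, z)

print("step slope:   ", step_slope)
print("sigmoid slope:", np.round(sigmoid_slope, 4))
```

Away from zero the step function is flat, so it gives gradient-based training no signal about which direction to move a hidden-layer weight. The sigmoid has a nonzero slope everywhere, which is what lets an error at the output be passed backward through earlier layers.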

What was the Mark I Perceptron machine?

The Mark I Perceptron was a physical hardware implementation of Rosenblatt’s perceptron algorithm, built at the Cornell Aeronautical Laboratory in 1958 with funding from the U.S. Office of Naval Research. It used a 20×20 grid of 400 cadmium sulfide photocells as its visual input, connected to 512 association units and 8 output units. The weights were implemented as physical potentiometers adjusted by electric motors controlled by the learning algorithm. The machine occupied an entire room and weighed several tons. It was the first hardware device in history that could learn to classify visual patterns from examples, successfully distinguishing between simple geometric shapes like triangles and squares after training. While primitive by modern standards, it proved that learning machines were physically realizable, not just theoretical constructs.