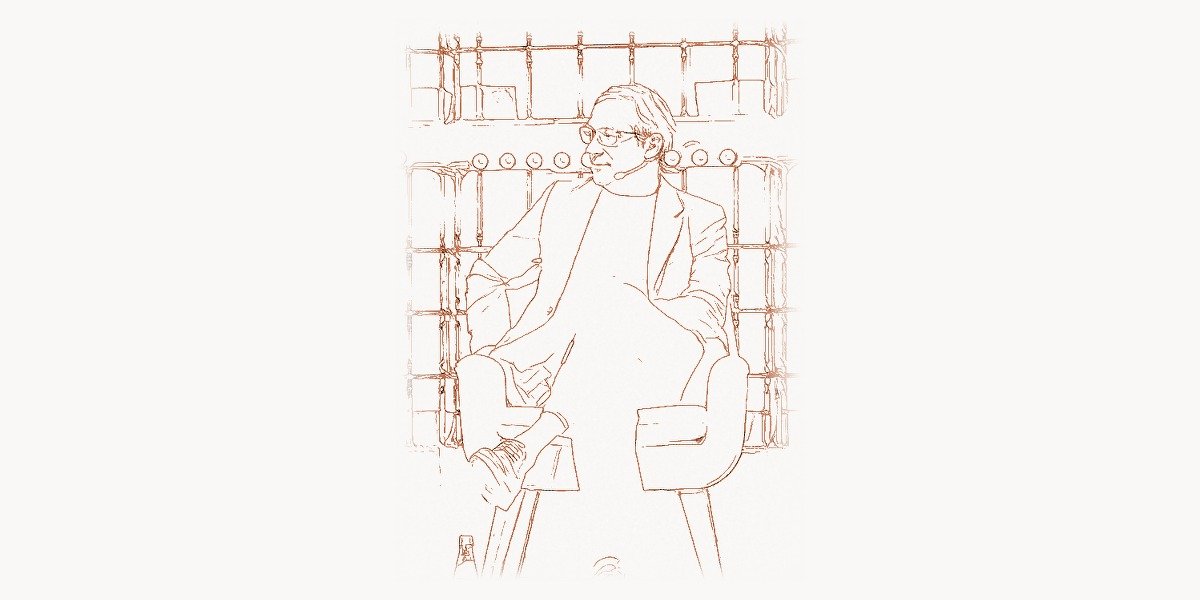

In the frenzied gold rush of modern artificial intelligence, where billion-dollar language models dominate headlines and venture capital flows like water, one voice has consistently cut through the hype with surgical precision. Gary Marcus — cognitive scientist, entrepreneur, bestselling author, and relentless AI skeptic — has spent decades arguing that the emperor of deep learning, if not naked, is at least missing some crucial garments. His is not the complaint of a technophobe or a Luddite, but the rigorously reasoned critique of a scientist who has spent his entire career studying how minds actually work, both biological and artificial. In an era when questioning the dominant paradigm can feel like heresy, Marcus has made a career of doing exactly that — and being proven right more often than his detractors care to admit.

Early Life and Education

Gary Fred Marcus was born on February 8, 1970, in Baltimore, Maryland. Even as a child, he displayed an insatiable curiosity about how things work — not just mechanical things, but the machinery of thought itself. Growing up in an intellectually stimulating household, he developed an early fascination with language, logic, and the puzzle of human cognition. How do children learn language so effortlessly? Why can a toddler generalize from a handful of examples while sophisticated computer programs struggle with millions? These questions would shape his entire intellectual trajectory.

Marcus pursued his undergraduate studies at Hampshire College in Amherst, Massachusetts, a liberal arts institution known for its interdisciplinary approach and independent scholarship. The college’s structure, built around self-directed learning and cross-disciplinary thinking, proved ideal for a young mind that refused to stay within the boundaries of any single discipline. After graduating, he set his sights on one of the most prestigious cognitive science programs in the world.

For his doctoral work, Marcus went to the Massachusetts Institute of Technology, where he studied under the legendary linguist and cognitive scientist Steven Pinker. Working in Pinker’s lab was transformative. Marcus immersed himself in the study of language acquisition, connectionism, and the ongoing debates about whether neural networks could truly account for the richness and systematicity of human language. His 1993 PhD dissertation tackled one of the central questions in cognitive science head-on: can connectionist models (the neural networks of that era) explain how children learn the rules of language? His answer, supported by rigorous experimentation, was a qualified but firm “no,” at least not in their current form. This dissertation laid the groundwork for much of his subsequent career.

Career and the Critique of Pure Deep Learning

After completing his PhD, Marcus joined the faculty of New York University’s Department of Psychology, where he would eventually become a full professor and director of the NYU Center for Language and Music. His academic work continued to explore the intersection of language, learning, and computation, but it was his willingness to publicly challenge the dominant trends in AI research that catapulted him into broader prominence.

Marcus published his first major book, The Algebraic Mind, in 2001, arguing that the human brain uses rule-based, algebraic operations that pure connectionist models cannot replicate. The book was a shot across the bow of the neural network community, which at the time was in a relatively quiet period before the deep learning revolution. When that revolution arrived in the 2010s, fueled by backpropagation at scale, massive datasets, and GPU computing, Marcus found himself once again pushing back against what he saw as dangerous overconfidence in a single approach.

Technical Innovation: The Case for Hybrid AI

Marcus’s most significant intellectual contribution has been his persistent, technically grounded argument that deep learning alone is insufficient for achieving robust, general artificial intelligence. While researchers like Andrej Karpathy were pushing the boundaries of what neural networks could achieve in computer vision and David Silver was demonstrating superhuman performance in game-playing, Marcus was asking the harder question: what can’t these systems do?

His critique centered on several key technical limitations of pure deep learning approaches. Neural networks, he argued, struggle with systematic generalization — the ability to apply learned rules to novel situations in a predictable, structured way. A child who learns what “breaking” means can immediately understand “breaking a glass,” “breaking a promise,” or “breaking a record.” Deep learning systems, trained on statistical correlations, often fail at precisely these kinds of systematic extensions.

To illustrate the gap between pattern matching and genuine understanding, consider how a symbolic reasoning system approaches logical inference with explicit facts and rules:

    # Symbolic AI approach to reasoning: explicit rules and logic

    class SymbolicReasoner:
        def __init__(self):
            self.knowledge_base = {}
            self.rules = []

        def add_fact(self, entity, prop, value):
            """Store structured knowledge explicitly."""
            if entity not in self.knowledge_base:
                self.knowledge_base[entity] = {}
            self.knowledge_base[entity][prop] = value

        def add_rule(self, condition_fn, conclusion_fn):
            """Add an inference rule that applies to any matching entity."""
            self.rules.append((condition_fn, conclusion_fn))

        def infer(self, entity):
            """Apply rules systematically; works on novel entities."""
            results = []
            for condition, conclusion in self.rules:
                if condition(self.knowledge_base.get(entity, {})):
                    results.append(conclusion(entity))
            return results

    # The rule below applies to every entity whose facts match it
    reasoner = SymbolicReasoner()
    reasoner.add_fact("Socrates", "type", "human")
    reasoner.add_fact("Ada", "type", "human")

    # Rule: All humans are mortal
    reasoner.add_rule(
        lambda props: props.get("type") == "human",
        lambda name: f"{name} is mortal"
    )

    # Works immediately for ANY new human, no retraining needed
    reasoner.add_fact("NewPerson", "type", "human")
    print(reasoner.infer("NewPerson"))  # ['NewPerson is mortal']

Marcus argued that this kind of systematic, compositional reasoning — where knowledge can be recombined in novel ways following clear rules — is precisely what deep learning struggles with. While neural networks excel at pattern recognition, they lack the structural scaffolding needed for reliable logical inference. His proposed solution was not to abandon neural networks but to build hybrid architectures that combine the pattern-recognition strengths of deep learning with the systematic reasoning capabilities of symbolic AI.
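The learned side of that contrast can be sketched with a toy experiment, loosely in the spirit of the identity-function argument Marcus makes in The Algebraic Mind: train a model to copy its input using only even numbers, then test it on an odd one. Everything specific here is an assumption made for illustration, not something taken from his work: the 4-bit encoding, the single linear layer with zero-initialized weights, the delta-rule training loop, and the helper names.

```python
# Toy contrast: a learned mapping vs. an explicit rule on the identity task.
# Assumptions (illustrative only): 4-bit inputs, one linear layer with
# zero-initialized weights, trained with the delta rule.

def train_linear_identity(examples, epochs=300, lr=0.1):
    """Fit one linear output unit per bit so that output == input."""
    n = len(examples[0])
    weights = [[0.0] * n for _ in range(n)]
    for _ in range(epochs):
        for x in examples:
            for j in range(n):
                prediction = sum(w * xi for w, xi in zip(weights[j], x))
                error = x[j] - prediction  # the target is the input itself
                for i in range(n):
                    weights[j][i] += lr * error * x[i]
    return weights

def predict(weights, x):
    """Threshold each linear output at 0.5 to obtain a bit."""
    return [1 if sum(w * xi for w, xi in zip(row, x)) > 0.5 else 0
            for row in weights]

def identity_rule(x):
    """The symbolic alternative: 'output equals input', for any input."""
    return list(x)

def to_bits(number, width=4):
    """Most-significant-bit-first binary encoding."""
    return [(number >> shift) & 1 for shift in range(width - 1, -1, -1)]

# Train only on even numbers, so the last bit is always 0 during training.
training_set = [to_bits(n) for n in range(0, 16, 2)]
weights = train_linear_identity(training_set)

seen = to_bits(6)    # even: inside the training distribution
unseen = to_bits(5)  # odd: the last bit was never 1 during training

print(predict(weights, seen) == seen)    # True
print(predict(weights, unseen), unseen)  # [0, 1, 0, 0] [0, 1, 0, 1]
print(identity_rule(unseen) == unseen)   # True
```

The trained model copies every even number correctly but drops the final bit of 5, because nothing in its training data ever required that output unit to be 1. The explicit rule has no such blind spot. A different architecture or initialization could behave differently, so this is an illustration of the argument rather than evidence for it; it shows the kind of gap the hybrid proposal is meant to close.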

This hybrid approach can be conceptualized as a layered architecture where different cognitive tasks are handled by the most appropriate computational mechanism:

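    # Conceptual hybrid AI architecture: Marcus's vision
    # The three component classes below are hypothetical placeholders,
    # stubbed out only so that the sketch runs end to end.

    class NeuralPerceptionModule:
        def extract(self, input_data):
            # Placeholder for a trained network: split text into tokens
            return input_data.split()

    class NeurosymbolicBridge:
        def neurals_to_symbols(self, features):
            # Placeholder: read an (agent, relation, patient) triple
            agent, relation, patient = features
            return {"agent": agent, "relation": relation, "patient": patient}

        def symbols_to_output(self, conclusions):
            return "; ".join(conclusions)

    class SymbolicReasoningEngine:
        def reason(self, symbolic_repr):
            # Placeholder rule: "X broke Y" implies "Y is broken"
            if symbolic_repr["relation"] == "broke":
                return [f"{symbolic_repr['patient']} is broken"]
            return []

    class HybridAISystem:
        """
        Combines neural perception with symbolic reasoning,
        reflecting Marcus's argument for neurosymbolic AI.
        """

        def __init__(self):
            self.neural_layer = NeuralPerceptionModule()
            self.symbolic_layer = SymbolicReasoningEngine()
            self.integration_layer = NeurosymbolicBridge()

        def process(self, input_data):
            # Step 1: Neural networks handle perception
            # (image recognition, speech processing, pattern detection)
            perceptual_features = self.neural_layer.extract(input_data)

            # Step 2: Bridge converts subsymbolic representations
            # into structured symbolic form
            symbolic_repr = self.integration_layer.neurals_to_symbols(
                perceptual_features
            )

            # Step 3: Symbolic engine handles reasoning, planning,
            # causal inference, and compositional generalization
            reasoning_output = self.symbolic_layer.reason(symbolic_repr)

            # Step 4: Results can flow back to neural layer
            # for natural language generation or action selection
            return self.integration_layer.symbols_to_output(
                reasoning_output
            )

    # Key advantage: systematic generalization
    # If the system learns "X broke Y" for any X and Y,
    # it can handle novel combinations WITHOUT retraining
    print(HybridAISystem().process("Ada broke vase"))  # vase is broken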

Why It Mattered

Marcus’s critique mattered because it was not the hand-waving of an outsider but the detailed technical analysis of someone deeply versed in both cognitive science and machine learning. When he published his landmark 2018 paper “Deep Learning: A Critical Appraisal” on arXiv, it sent shockwaves through the AI community. The paper systematically catalogued ten challenges facing deep learning, from data hunger to the inability to handle hierarchical structure, and argued that progress toward artificial general intelligence would require fundamentally new approaches.

The timing was crucial. In 2018, AI hype was reaching fever pitch. Billions of dollars were pouring into deep learning startups, and prominent researchers were making increasingly bold claims about the imminence of human-level AI. Marcus’s paper served as a necessary corrective: a rigorous, peer-level rebuttal that forced the field to confront uncomfortable limitations. Many of the problems he identified (hallucination, brittleness, lack of common-sense reasoning) have since become widely acknowledged as central challenges, particularly with the rise of large language models. Researchers at Anthropic and other AI labs have since devoted significant resources to addressing exactly the issues Marcus flagged years earlier.

Other Major Contributions

Beyond his role as AI’s most prominent constructive critic, Marcus has made substantial contributions across multiple domains. In 2001, he founded the NYU Center for Child Language, later renamed the Center for Language and Music, which conducted groundbreaking research into how infants acquire language and what this process reveals about the architecture of the human mind. His experiments with infants — testing whether babies can learn abstract rule-like patterns from sequences of sounds — produced some of the most cited results in developmental cognitive science.

In 2014, Marcus co-founded Geometric Intelligence, a machine learning startup built around the idea that AI systems should be able to learn more from less data, just as human children do. The company attracted top talent and significant attention for its approach to building AI systems that could generalize from small numbers of examples. In late 2016, Uber acquired Geometric Intelligence, and Marcus briefly served as director of Uber’s AI lab before returning to his academic and public intellectual pursuits. The acquisition validated his core thesis: industry recognized that pure big-data approaches had limits, and more sample-efficient learning methods were commercially valuable.

Marcus is also a prolific author of popular science books. The Birth of the Mind (2004) explored how genes build brains and what genetics can tell us about cognition. Kluge: The Haphazard Construction of the Human Mind (2008) argued that evolution built our brains as kludges: imperfect solutions that work well enough but are far from optimally designed. Guitar Zero (2012) was a fascinating personal experiment in which the then-40-year-old Marcus attempted to learn guitar, using his own experience to explore the science of learning at any age. Rebooting AI (2019), co-authored with Ernest Davis, laid out a comprehensive case for why current AI systems are far less intelligent than we think and what it would take to build truly trustworthy AI.

His public commentary has also been instrumental in shaping discourse around AI safety and regulation. Marcus has testified before the U.S. Senate on AI risks, contributed to major policy discussions, and consistently advocated for a more cautious, scientifically grounded approach to AI development. His Substack newsletter and social media presence have made him one of the most followed voices in AI commentary, reaching audiences far beyond the academic world.

Philosophy and Approach

Marcus’s intellectual philosophy is grounded in a deep commitment to scientific rigor and honest assessment, even when it means being unpopular. He draws heavily on the traditions of cognitive science — particularly the work of Noam Chomsky, Jerry Fodor, and his mentor Steven Pinker — while maintaining an openness to computational approaches that would make a strict nativist uncomfortable. His thinking bridges what are often seen as incompatible traditions: the rationalist emphasis on innate structure and the empiricist focus on learning from data.

At the heart of his worldview is the conviction that intelligence — whether biological or artificial — requires more than pattern matching. True understanding, he argues, requires the ability to build and manipulate internal models of the world, to reason about cause and effect, and to combine known concepts in novel ways. This places him in intellectual kinship with pioneers like John von Neumann, who understood that computation requires both data and structure, and Edsger Dijkstra, who championed disciplined, structured approaches over ad-hoc solutions.

Key Principles

- Hybrid architecture over monoculture: No single approach to AI — whether deep learning, symbolic reasoning, or evolutionary algorithms — is sufficient on its own. Robust intelligence requires integrating multiple computational paradigms, much as the brain itself uses different mechanisms for perception, memory, reasoning, and motor control.

- Sample efficiency matters: Systems that require millions of examples to learn what a child grasps from a handful are not truly intelligent. Any credible path to general AI must account for the extraordinary data efficiency of human learning.

- Generalization is the gold standard: The true test of intelligence is not performance on training data or even benchmark datasets, but the ability to generalize systematically to novel situations — to handle what you have never seen before based on what you know.

- Innate structure enables learning: Far from being a blank slate, the human brain comes equipped with powerful inductive biases — built-in structures that constrain and guide learning. AI systems, too, need appropriate architectural priors to learn effectively.

- Honesty about limitations builds better science: Overselling AI capabilities is not just intellectually dishonest but actively harmful. It misallocates resources, creates unrealistic expectations, and can lead to deploying unreliable systems in high-stakes domains.

- Common sense is the hard problem: While AI has made impressive progress on narrow tasks, endowing machines with common-sense knowledge and reasoning remains an unsolved challenge that may require fundamentally new approaches.

- Interdisciplinary thinking is essential: The best AI research draws on insights from cognitive science, neuroscience, linguistics, philosophy, and developmental psychology — not just engineering and statistics.

Legacy and Impact

Gary Marcus’s legacy is unusual in the history of technology: he will be remembered not primarily for what he built, but for what he prevented others from ignoring. In a field prone to cycles of hype and disillusionment, his consistent, technically informed skepticism has served as a crucial corrective mechanism. The problems he identified in his 2001 book have resurfaced with new urgency in the era of large language models; the limitations he catalogued in 2018 are now the research agenda of some of the world’s leading AI labs.

His influence on the neurosymbolic AI movement has been substantial. Researchers at MIT, IBM, DeepMind, and numerous universities are now actively pursuing hybrid approaches that combine neural networks with symbolic reasoning — exactly the architecture Marcus has advocated for over two decades. The fact that even the most ardent deep learning enthusiasts now acknowledge the need for some form of structured reasoning, planning, and world modeling is a testament to the power of his arguments.

For teams building modern software products, Marcus’s emphasis on reliability and honest capability assessment resonates strongly. The principles he advocates (rigorous testing, honest assessment of limitations, and hybrid approaches that combine the best available tools) apply directly to practical software development.

Marcus’s work also connects to a broader intellectual tradition of scientists who advanced their fields by pushing back against consensus. Like Frank Rosenblatt, who pioneered the perceptron only to see it harshly dismissed, Marcus has experienced both the vindication and the frustration of being ahead of the curve. And like Arthur Samuel, who demonstrated early machine learning decades before the field matured, Marcus has always kept his eyes on the long game rather than chasing short-term benchmarks.

Perhaps most importantly, Marcus has modeled what it means to be a responsible public intellectual in the age of AI. He has shown that it is possible to be deeply knowledgeable about a technology, genuinely excited about its potential, and simultaneously honest about its limitations. In a world where AI discourse is often polarized between breathless hype and existential doom, Marcus has carved out a vital middle ground: optimistic about the destination, skeptical about the current map, and relentlessly insistent on scientific honesty along the way.

Key Facts

- Full name: Gary Fred Marcus

- Born: February 8, 1970, Baltimore, Maryland, USA

- Education: BA from Hampshire College; PhD in Brain and Cognitive Sciences from MIT (1993, advisor: Steven Pinker)

- Academic position: Professor of Psychology and Neural Science at New York University

- Founded: NYU Center for Language and Music; Geometric Intelligence (acquired by Uber, 2016)

- Notable books: The Algebraic Mind (2001), The Birth of the Mind (2004), Kluge (2008), Guitar Zero (2012), Rebooting AI (2019, with Ernest Davis)

- Key paper: “Deep Learning: A Critical Appraisal” (2018) — one of the most discussed AI papers of its decade

- Research focus: Cognitive science, language acquisition, neurosymbolic AI, AI safety and policy

- Known for: Rigorous technical critique of pure deep learning approaches; advocacy for hybrid AI architectures

- Policy work: Testified before the U.S. Senate on AI risks and regulation

FAQ

Why does Gary Marcus criticize deep learning if it has achieved so many impressive results?

Marcus does not deny that deep learning has produced remarkable achievements in areas like image recognition, game-playing, and natural language processing. His critique is more nuanced: he argues that these successes, while genuine, mask fundamental limitations that become apparent when systems are deployed in the real world. Deep learning models can be brittle, prone to hallucination, and unable to reason reliably about cause and effect. Marcus’s position is that acknowledging these limitations is essential for making genuine progress — not that deep learning is worthless, but that it is insufficient on its own for building truly robust, general-purpose AI systems.

What is neurosymbolic AI and why does Marcus advocate for it?

Neurosymbolic AI refers to hybrid systems that combine the pattern-recognition strengths of neural networks (deep learning) with the structured reasoning capabilities of symbolic AI (logic, rules, knowledge graphs). Marcus advocates for this approach because he believes it addresses the fundamental weaknesses of each paradigm in isolation. Neural networks are excellent at learning from raw data but poor at systematic reasoning; symbolic systems are excellent at logical inference but struggle with messy, real-world perception. By integrating both, neurosymbolic systems aim to achieve the robustness, generalizability, and data efficiency that neither approach can deliver alone. This idea has gained significant traction, with major research labs now pursuing neurosymbolic architectures as a path toward more capable and reliable AI.

How did Geometric Intelligence differ from other AI startups?

While most AI startups in the 2014-2016 era were focused on scaling deep learning with more data and compute, Geometric Intelligence took a fundamentally different approach. Founded on Marcus’s cognitive science insights, the company focused on building machine learning systems that could learn efficiently from small amounts of data — much as human children do. Rather than throwing billions of data points at a neural network, Geometric Intelligence explored algorithms inspired by how biological minds extract abstract structure from limited experience. Uber’s acquisition of the company in 2016 validated this approach and brought Marcus’s ideas about sample-efficient learning into one of the world’s largest technology companies.

What is Marcus’s view on AI safety and existential risk?

Marcus occupies a distinctive position in the AI safety landscape. He takes AI risks seriously but is often skeptical of both extreme optimism and extreme pessimism. He has argued that the most pressing near-term risks from AI are not existential scenarios involving superintelligent machines but rather the widespread deployment of unreliable systems that hallucinate, discriminate, and fail in unpredictable ways. He has testified before the U.S. Senate on these issues and has consistently called for better regulation, more rigorous testing standards, and greater honesty from AI companies about what their systems can and cannot do. His perspective is that by building more robust, trustworthy AI systems — through hybrid architectures and better engineering practices — we can mitigate both near-term harms and longer-term risks simultaneously.