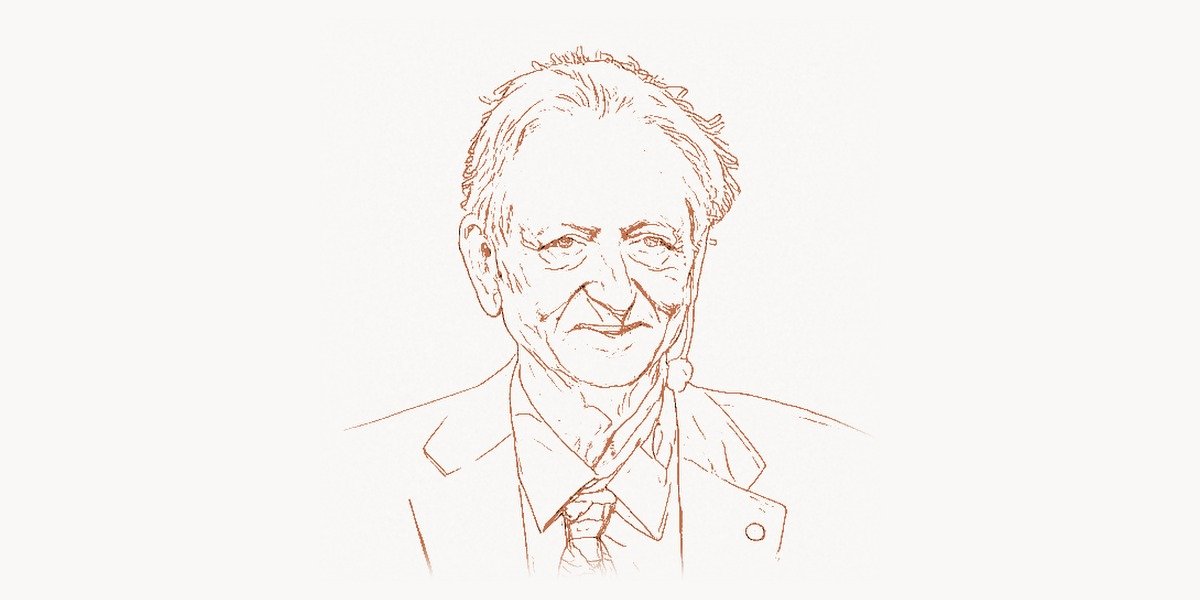

In December 2024, Geoffrey Hinton walked onto a stage in Stockholm to accept the Nobel Prize in Physics. He was 77 years old. The prize was not awarded for discovering a new particle or proving a cosmological theorem. It was awarded for work on artificial neural networks and machine learning, work that Hinton had pursued for over four decades while much of the scientific establishment considered it a dead end. For long stretches between the late 1960s and the mid-2000s, the dominant view in computer science was that neural networks were a flawed approach to artificial intelligence: too slow, too unreliable, too theoretically suspect. Funding dried up. Graduate students were warned away from the field. Conferences rejected neural network papers. Through all of it, Hinton kept working. He helped establish backpropagation as the algorithm that made multi-layer networks trainable. He co-invented Boltzmann machines, which connected neural networks to statistical physics. He co-developed dropout, a regularization technique that became a staple of deep learning. And when the computational hardware finally caught up with his ideas, when GPUs made it possible to train networks with millions of parameters on millions of data points, the revolution he had spent his career preparing for arrived almost overnight. Today, most major AI systems, from the large language models behind ChatGPT and its competitors to image generators, autonomous driving systems, and protein structure predictors, build on deep learning foundations that Hinton helped lay.

Early Life and Education

Geoffrey Everest Hinton was born on December 6, 1947, in Wimbledon, London, into a family with extraordinary intellectual lineage. His great-great-grandfather was George Boole, the mathematician who invented Boolean algebra, the logical system that underlies digital computing. His father, Howard Everest Hinton, was an entomologist at the University of Bristol. The family name itself carried weight: the Everest in his name comes from a relative, the surveyor George Everest, after whom Mount Everest is named (Boole's wife, Mary Everest Boole, was George Everest's niece). Growing up in this environment instilled a deep intellectual curiosity, though Hinton would later note that the family tradition also created pressure to produce original work of lasting significance.

Hinton studied experimental psychology at King’s College, Cambridge, graduating with a Bachelor of Arts in 1970. His interest in how the brain processes information drew him toward the intersection of psychology and computation. After Cambridge, he briefly explored different directions — including carpentry — before committing to graduate studies in artificial intelligence at the University of Edinburgh, where he earned his Ph.D. in 1978. His doctoral work, supervised by Christopher Longuet-Higgins, focused on neural network models of associative memory. Edinburgh in the 1970s was a center of AI research, but the prevailing approach was symbolic AI — representing knowledge as explicit logical rules and symbols. Hinton was drawn instead to the connectionist approach: building intelligence from networks of simple, interconnected processing units that learned from data rather than following hand-coded rules.

This was a contrarian position. The 1969 book “Perceptrons” by Marvin Minsky and Seymour Papert had demonstrated fundamental limitations of single-layer neural networks, and its influence had effectively frozen funding and academic interest in the approach. Hinton believed that multi-layer networks could overcome these limitations, but proving it would require solving the credit assignment problem: how do you determine which connections in a deep network should be adjusted when the output is wrong? This question would drive the next phase of his career.

The Deep Learning Breakthrough

Technical Innovation

The core technical challenge that Hinton addressed was training multi-layer neural networks. A single-layer perceptron can only learn linearly separable functions — a severe limitation that Minsky and Papert had rigorously documented. Everyone agreed that multi-layer networks were theoretically more powerful. The problem was that nobody had an efficient, general method for training them. You could calculate the error at the output layer easily enough, but how do you propagate that error signal backward through dozens or hundreds of intermediate layers to adjust the weights of early layers appropriately?

The answer was backpropagation. The mathematical idea of computing gradients through composite functions using the chain rule was not entirely new — similar ideas had appeared in control theory and were independently developed by several researchers. But it was the 1986 paper by David Rumelhart, Geoffrey Hinton, and Ronald Williams, “Learning representations by back-propagating errors,” published in Nature, that demonstrated backpropagation as a practical and powerful algorithm for training multi-layer neural networks. The paper showed that networks trained with backpropagation could learn useful internal representations — hidden features that were not explicitly programmed but emerged from the training process itself.
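In symbols, the backward pass can be written compactly. The notation here is an assumption chosen for illustration (row-vector activations $a^{(l)}$, weights $W^{(l)}$, biases $b^{(l)}$, a sigmoid activation $\sigma$ with $\sigma'(z) = \sigma(z)(1 - \sigma(z))$, a batch of $m$ examples, and a squared-error loss $E$); the 1986 paper uses its own notation and is not tied to these choices.

```latex
\begin{aligned}
z^{(l)} &= a^{(l-1)} W^{(l)} + b^{(l)}, \qquad a^{(l)} = \sigma\!\left(z^{(l)}\right), \qquad a^{(0)} = X \\
E &= \frac{1}{2m} \sum_{n=1}^{m} \left\lVert a^{(L)}_n - y_n \right\rVert^2 \\
\delta^{(L)} &= \left(a^{(L)} - y\right) \odot \sigma'\!\left(z^{(L)}\right) \\
\delta^{(l)} &= \left(\delta^{(l+1)}\, W^{(l+1)\top}\right) \odot \sigma'\!\left(z^{(l)}\right), \qquad l = L-1, \dots, 1 \\
\frac{\partial E}{\partial W^{(l)}} &= \frac{1}{m}\, a^{(l-1)\top} \delta^{(l)}, \qquad
\frac{\partial E}{\partial b^{(l)}} = \frac{1}{m} \sum_{n=1}^{m} \delta^{(l)}_n
\end{aligned}
```

The second-to-last line is the credit assignment step: each layer's error signal is the next layer's signal, sent back through the same weights used in the forward pass and scaled by the local slope of the activation.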

```python
import numpy as np


class NeuralNetwork:
    """
    A simple feedforward neural network demonstrating
    the backpropagation algorithm that Hinton helped popularize.
    Forward pass computes predictions; backward pass computes
    gradients using the chain rule and adjusts weights.
    """

    def __init__(self, layer_sizes):
        self.weights = []
        self.biases = []
        for i in range(len(layer_sizes) - 1):
            # He-style scaling, sqrt(2 / fan_in), to keep initial signals well scaled
            scale = np.sqrt(2.0 / layer_sizes[i])
            w = np.random.randn(layer_sizes[i], layer_sizes[i + 1]) * scale
            b = np.zeros((1, layer_sizes[i + 1]))
            self.weights.append(w)
            self.biases.append(b)

    def sigmoid(self, z):
        # Clip the input to avoid overflow in exp()
        return 1.0 / (1.0 + np.exp(-np.clip(z, -500, 500)))

    def sigmoid_derivative(self, a):
        # Derivative expressed in terms of the activation a = sigmoid(z)
        return a * (1 - a)

    def forward(self, X):
        """Forward pass: propagate input through all layers."""
        self.activations = [X]
        current = X
        for w, b in zip(self.weights, self.biases):
            z = current @ w + b
            current = self.sigmoid(z)
            self.activations.append(current)
        return current

    def backward(self, y, learning_rate=0.1):
        """
        Backward pass: the key insight from Rumelhart, Hinton, and Williams (1986).
        Error gradients flow backward through the network using
        the chain rule, allowing every weight to be updated
        proportionally to its contribution to the output error.
        """
        m = y.shape[0]
        # Output layer error (squared-error loss with a sigmoid output)
        delta = (self.activations[-1] - y) * \
            self.sigmoid_derivative(self.activations[-1])
        # Propagate error backward through each layer
        for i in range(len(self.weights) - 1, -1, -1):
            grad_w = self.activations[i].T @ delta / m
            grad_b = np.sum(delta, axis=0, keepdims=True) / m
            if i > 0:
                # Use the weights from before this step's update
                delta = (delta @ self.weights[i].T) * \
                    self.sigmoid_derivative(self.activations[i])
            # Update weights with plain gradient descent
            self.weights[i] -= learning_rate * grad_w
            self.biases[i] -= learning_rate * grad_b


# Example: learning XOR, which a single-layer perceptron cannot represent
nn = NeuralNetwork([2, 4, 1])
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]])
y = np.array([[0], [1], [1], [0]])

for epoch in range(10000):
    nn.forward(X)
    nn.backward(y, learning_rate=0.5)

predictions = nn.forward(X)
print("XOR predictions:", predictions.round(2).flatten())
# A successful run prints values close to [0, 1, 1, 0]; exact numbers
# depend on the random initialization, and an unlucky start can get stuck.
```

The backpropagation paper was a landmark, but it did not immediately trigger the deep learning revolution. Networks of the era were limited by computational resources. Training a network with more than two or three hidden layers was impractical on the hardware available in the 1980s and 1990s. Gradients tended to vanish or explode as they propagated through many layers, making very deep networks effectively untrainable. The AI community’s enthusiasm for neural networks cooled again in the 1990s as support vector machines and other kernel methods achieved competitive performance with better theoretical guarantees. This second “AI winter” for neural networks lasted roughly from the mid-1990s to the late 2000s.
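The vanishing-gradient effect described above can be seen in a few lines of NumPy. This is an illustrative sketch only: the depth, width, weight scale, and the helper name `gradient_norms_by_layer` are assumptions made for the demonstration, not taken from any of the papers discussed. It pushes a random input through a stack of sigmoid layers, backpropagates a unit-length error signal, and records how large that signal still is at each layer.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def gradient_norms_by_layer(depth=20, width=32, seed=0):
    """Return the norm of the backpropagated error signal at each layer,
    ordered from the first (earliest) layer to the last."""
    rng = np.random.default_rng(seed)
    weights = [rng.normal(0.0, 1.0 / np.sqrt(width), size=(width, width))
               for _ in range(depth)]

    # Forward pass, keeping every layer's activations.
    a = rng.normal(size=(1, width))
    activations = []
    for w in weights:
        a = sigmoid(a @ w)
        activations.append(a)

    # Backward pass: start from a unit-norm error signal at the output.
    delta = np.ones((1, width)) / np.sqrt(width)
    norms = []
    for w, a in zip(reversed(weights), reversed(activations)):
        delta = delta * a * (1.0 - a)  # sigmoid derivative, never above 0.25
        norms.append(float(np.linalg.norm(delta)))
        delta = delta @ w.T            # hand the signal to the layer below
    return norms[::-1]


norms = gradient_norms_by_layer()
print("first layer: %.3e, last layer: %.3e" % (norms[0], norms[-1]))
```

Because every layer multiplies the signal by a sigmoid slope of at most 0.25, the value reaching the first layer is many orders of magnitude smaller than at the last one, so the earliest weights barely move under gradient descent.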

Why It Mattered

Hinton’s persistence through this period was critical. While other researchers moved to more fashionable approaches, he continued to refine neural network architectures and training methods. In 2006, he and his collaborators published a breakthrough paper on deep belief networks, showing that deep networks could be trained effectively by pre-training each layer as a restricted Boltzmann machine before fine-tuning the entire network with backpropagation. This “greedy layer-wise pre-training” gave the weights a good starting point, which offered a practical way around the vanishing gradient problem and demonstrated that deep networks could outperform shallow ones on complex tasks.

The real inflection point came in 2012. Hinton’s students Alex Krizhevsky and Ilya Sutskever (who would later co-found OpenAI) developed AlexNet with him, a deep convolutional neural network that won the ImageNet Large Scale Visual Recognition Challenge by a staggering margin. AlexNet’s top-5 error rate was 15.3%, compared to 26.2% for the second-place entry, a gap so large that it shocked the computer vision community. The key ingredients were a deep architecture (eight learned layers), GPU-accelerated training, ReLU activations, and dropout regularization. This single result convinced the broader AI community that deep learning was not merely viable but superior to existing approaches, and it triggered the revolution that continues today.

The impact extended far beyond image recognition. The techniques that Hinton and his students had developed — backpropagation through deep networks, GPU training, dropout, and various architectural innovations — turned out to be applicable to virtually every domain in machine learning. Natural language processing, speech recognition, game playing, drug discovery, protein structure prediction, and autonomous driving all underwent rapid transformation as deep learning methods were applied. The modern AI industry, which has attracted hundreds of billions of dollars in investment and is reshaping every sector of the economy, is built directly on foundations that Hinton established.

Other Major Contributions

Beyond backpropagation and deep belief networks, Hinton made several other contributions that have had lasting impact on machine learning and AI.

Boltzmann Machines. In the early 1980s, working with Terry Sejnowski, Hinton developed Boltzmann machines — stochastic neural networks grounded in statistical mechanics. A Boltzmann machine is a network of symmetrically connected units that learn by adjusting their weights to model the probability distribution of the training data. The key insight was to apply concepts from statistical physics (energy functions, thermal equilibrium, simulated annealing) to neural computation. While the original Boltzmann machine was too slow for practical use, the restricted Boltzmann machine (RBM) — a simplified version with a bipartite structure — became the foundation for the deep belief network pre-training method that revived interest in deep learning in 2006. RBMs also found applications in collaborative filtering (Netflix recommendations), topic modeling, and dimensionality reduction.
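To make the restricted Boltzmann machine more concrete, here is a minimal sketch of a binary RBM. The text above does not name a training rule; this example uses one-step contrastive divergence (CD-1), an approximate rule commonly used for RBMs, and that choice is an assumption of the sketch. The class name `TinyRBM`, the toy data, and all hyperparameters are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class TinyRBM:
    """Binary RBM: visible and hidden units form a bipartite graph."""

    def __init__(self, n_visible, n_hidden, seed=0):
        self.rng = np.random.default_rng(seed)
        self.W = self.rng.normal(0.0, 0.1, size=(n_visible, n_hidden))
        self.b_visible = np.zeros(n_visible)
        self.b_hidden = np.zeros(n_hidden)

    def hidden_probs(self, v):
        return sigmoid(v @ self.W + self.b_hidden)

    def visible_probs(self, h):
        return sigmoid(h @ self.W.T + self.b_visible)

    def cd1_step(self, v0, learning_rate=0.1):
        """One CD-1 update; returns the mean squared reconstruction error."""
        # Positive phase: hidden units driven by the data.
        h0_prob = self.hidden_probs(v0)
        h0 = (self.rng.random(h0_prob.shape) < h0_prob).astype(float)
        # Negative phase: one Gibbs step gives a "reconstruction".
        v1_prob = self.visible_probs(h0)
        h1_prob = self.hidden_probs(v1_prob)
        # Move toward data statistics, away from reconstruction statistics.
        n = v0.shape[0]
        self.W += learning_rate * (v0.T @ h0_prob - v1_prob.T @ h1_prob) / n
        self.b_visible += learning_rate * (v0 - v1_prob).mean(axis=0)
        self.b_hidden += learning_rate * (h0_prob - h1_prob).mean(axis=0)
        return float(np.mean((v0 - v1_prob) ** 2))


# Toy data: two binary patterns, each repeated ten times.
data = np.array([[1, 1, 1, 0, 0, 0],
                 [0, 0, 0, 1, 1, 1]] * 10, dtype=float)

rbm = TinyRBM(n_visible=6, n_hidden=2)
errors = [rbm.cd1_step(data) for _ in range(500)]
print("reconstruction error: first %.3f, last %.3f" % (errors[0], errors[-1]))
```

The bipartite structure is what makes this cheap: with no connections inside a layer, all hidden units can be updated in one matrix product given the visible units, and vice versa.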

Dropout. In 2012, Hinton and his students (Nitish Srivastava, Alex Krizhevsky, Ilya Sutskever, and Ruslan Salakhutdinov) introduced dropout, a simple but remarkably effective regularization technique for neural networks. During training, dropout randomly sets a fraction of the neuron activations in each layer to zero. This forces the network to learn redundant representations: no single neuron can become overly specialized, because it might be “dropped out” at any training step. The result is a network that generalizes much better to new data. Dropout is now a standard tool in deep learning, and its underlying principle, that injecting noise during training improves generalization, has inspired numerous other regularization methods.

Capsule Networks. In 2017, Hinton and his collaborators Sara Sabour and Nicholas Frosst proposed capsule networks with dynamic routing, an architecture designed to address limitations of convolutional neural networks (CNNs). Standard CNNs are good at detecting whether a feature exists but poor at understanding spatial relationships between features. A capsule network uses groups of neurons (“capsules”) that encode both the presence of a feature and its properties (position, orientation, scale). Capsule networks use a “routing by agreement” mechanism in which lower-level capsules send more of their output to the higher-level capsules whose output agrees with their predictions. While capsule networks have not achieved the widespread adoption of CNNs, they represent an important direction of research in building AI systems that understand spatial structure more like humans do.
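The routing-by-agreement idea can be sketched in NumPy. This is a simplified illustration under stated assumptions: the function names, the tensor layout of `u_hat`, the iteration count, and the toy predictions are all hypothetical, and a real capsule network would learn the predictions rather than hard-code them.

```python
import numpy as np


def squash(s, eps=1e-9):
    """Shrink each vector so its length lies in [0, 1), keeping its direction."""
    sq_norm = np.sum(s ** 2, axis=-1, keepdims=True)
    return (sq_norm / (1.0 + sq_norm)) * s / np.sqrt(sq_norm + eps)


def route_by_agreement(u_hat, n_iterations=3):
    """u_hat has shape (n_lower, n_upper, dim): each lower capsule's
    prediction of each upper capsule's output vector."""
    n_lower, n_upper, _ = u_hat.shape
    logits = np.zeros((n_lower, n_upper))
    for _ in range(n_iterations):
        # Each lower capsule splits its output across the upper capsules.
        exp = np.exp(logits - logits.max(axis=1, keepdims=True))
        coupling = exp / exp.sum(axis=1, keepdims=True)
        # Upper capsule output: squashed, coupling-weighted sum of predictions.
        v = squash(np.einsum("ij,ijd->jd", coupling, u_hat))
        # Agreement (dot product) raises the logit for that route.
        logits = logits + np.einsum("ijd,jd->ij", u_hat, v)
    return v, coupling


# Four lower capsules, two upper capsules, 3-dimensional outputs.
u_hat = np.zeros((4, 2, 3))
u_hat[:, 0, :] = [1.0, 0.0, 0.0]            # all agree about upper capsule 0
u_hat[:, 1, :] = [[1.0, 0.0, 0.0],          # conflicting predictions
                  [-1.0, 0.0, 0.0],         # about upper capsule 1
                  [0.0, 1.0, 0.0],
                  [0.0, -1.0, 0.0]]

v, coupling = route_by_agreement(u_hat)
print("coupling to upper capsule 0:", coupling[:, 0].round(2))
print("output lengths:", np.linalg.norm(v, axis=1).round(2))
```

Lower capsules whose predictions line up reinforce each other, so the routing weights shift toward upper capsule 0, while the conflicting predictions for upper capsule 1 cancel out.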

Distributed Representations. Hinton’s early work on distributed representations — the idea that concepts should be represented not by single dedicated units but by patterns of activity across many units — was foundational for modern natural language processing. This principle directly inspired word embeddings (Word2Vec, GloVe) and, ultimately, the transformer architecture that powers today’s large language models. Every time a Python developer uses a library like PyTorch or TensorFlow to build a neural network, they are working with distributed representations that trace back to Hinton’s theoretical insights from the 1980s.
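A small sketch can illustrate the difference between a local code and a distributed one. The feature values here are hand-picked and hypothetical, chosen only to make the point; in a trained network such features are learned from data and are rarely this interpretable.

```python
import numpy as np


def cosine_similarity(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


# Local ("one-hot") code: one dedicated unit per concept.
local = {
    "cat": np.array([1.0, 0.0, 0.0]),
    "dog": np.array([0.0, 1.0, 0.0]),
    "car": np.array([0.0, 0.0, 1.0]),
}

# Distributed code: each concept is a pattern over shared units.
# Hypothetical features: [animate, furry, has_wheels, domestic].
distributed = {
    "cat": np.array([0.9, 0.8, 0.0, 0.7]),
    "dog": np.array([0.9, 0.7, 0.0, 0.9]),
    "car": np.array([0.0, 0.0, 1.0, 0.3]),
}

for name, code in (("local", local), ("distributed", distributed)):
    print(name,
          "cat~dog: %.2f" % cosine_similarity(code["cat"], code["dog"]),
          "cat~car: %.2f" % cosine_similarity(code["cat"], code["car"]))
```

With the one-hot code every pair of concepts is equally unrelated, while the distributed code places cat and dog close together and car far away, which is the property that lets learned embeddings generalize between similar concepts.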

Knowledge Distillation. Hinton also introduced the concept of knowledge distillation: training a smaller, faster “student” network to mimic the behavior of a larger, more accurate “teacher” network. This technique has become essential for deploying deep learning models in resource-constrained environments such as smartphones, embedded devices, and edge computing applications. It is a key enabler of the practical deployment of AI in products used by billions of people.
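One common way to write a distillation objective is sketched here. The text above does not spell out a loss, so the details are assumptions of this example: a softmax softened by a temperature, a cross-entropy term against the teacher's softened outputs, a standard cross-entropy term against the true labels, and a mixing weight `alpha`. The function names and the toy logits are hypothetical.

```python
import numpy as np


def softmax(logits, temperature=1.0):
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def distillation_loss(student_logits, teacher_logits, labels,
                      temperature=4.0, alpha=0.5):
    """Weighted sum of a soft-target term and a hard-label term."""
    # Soft targets: the teacher's softened class probabilities.
    soft_targets = softmax(teacher_logits, temperature)
    soft_preds = softmax(student_logits, temperature)
    soft_loss = -np.sum(soft_targets * np.log(soft_preds + 1e-12), axis=-1).mean()
    # Hard targets: ordinary cross-entropy with the true labels.
    hard_preds = softmax(student_logits)
    hard_loss = -np.log(hard_preds[np.arange(len(labels)), labels] + 1e-12).mean()
    # The temperature**2 factor keeps the two terms on a comparable scale.
    return float(alpha * temperature ** 2 * soft_loss + (1.0 - alpha) * hard_loss)


teacher_logits = np.array([[8.0, 2.0, 0.0]])
labels = np.array([0])

loss_good = distillation_loss(np.array([[7.0, 2.0, 0.5]]), teacher_logits, labels)
loss_bad = distillation_loss(np.array([[0.0, 2.0, 7.0]]), teacher_logits, labels)
print("student close to teacher: %.3f" % loss_good)
print("student far from teacher: %.3f" % loss_bad)
```

The softened teacher distribution carries information about which wrong classes the teacher considers plausible, which gives the student a richer training signal than the labels alone.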

Philosophy and Approach

Key Principles

Hinton’s intellectual approach was shaped by a deep commitment to understanding intelligence through the lens of neural computation. While the dominant paradigm in AI from the 1970s through the 1990s was symbolic AI — the idea that intelligence arises from manipulating explicit symbols according to formal rules — Hinton remained convinced that intelligence emerges from the collective behavior of simple, interconnected processing units that learn from experience. This connectionist philosophy, inherited from early neural network researchers like Frank Rosenblatt and Donald Hebb, was deeply unfashionable during much of Hinton’s career, but he pursued it with a stubbornness that ultimately proved vindicated.

His approach was fundamentally probabilistic. Rather than designing systems that apply deterministic rules, Hinton built models that learn probability distributions over data. This Bayesian sensibility — treating learning as inference about the structure of the world given observed evidence — connects his work to deep principles in statistics and information theory. The Boltzmann machine, with its roots in statistical mechanics, exemplifies this: it models data as the equilibrium distribution of a physical system, turning learning into an energy minimization problem.

Hinton also embodied a particular kind of scientific courage. He repeatedly pursued ideas that the mainstream considered discredited or impractical. When asked why he persisted with neural networks during the long years when they were out of favor, he has said that he believed the brain must be doing something like what neural networks do, and if the brain could make it work, then there had to be a way to make it work in computers too. This combination of biological intuition and engineering persistence is characteristic of his career.

In recent years, Hinton has become one of the most prominent voices warning about the potential dangers of advanced AI. After leaving Google in 2023, he spoke publicly about his concerns that AI systems could become more intelligent than humans and that society was not adequately prepared for the risks this would pose. This willingness to critique the technology he helped create — to prioritize truth over institutional loyalty — reflects the same intellectual independence that characterized his earlier career. Just as Alan Turing grappled with the philosophical implications of thinking machines in the 1950s, Hinton has taken up the mantle of examining what increasingly capable AI means for humanity’s future.

Legacy and Impact

Geoffrey Hinton’s influence on modern technology is difficult to overstate. The deep learning revolution that he spent four decades preparing for has transformed artificial intelligence from an academic curiosity into the most consequential technology of the 21st century. The practical applications are everywhere: voice assistants that understand natural language, medical imaging systems that detect cancers with superhuman accuracy, translation services that operate in real time, recommendation algorithms that drive the engagement of billions of users, and autonomous vehicles that navigate complex environments.

His academic lineage is equally remarkable. Hinton’s former students and postdocs are among the most influential figures in modern AI. Yann LeCun, who worked closely with Hinton in the 1980s, is now Chief AI Scientist at Meta and a co-recipient of the 2018 Turing Award. Ilya Sutskever co-founded OpenAI and served as its Chief Scientist. Alex Krizhevsky’s AlexNet result launched the deep learning era in computer vision. Ruslan Salakhutdinov became the Director of AI Research at Apple. The intellectual lineage flowing from Hinton’s research group has shaped the leadership of virtually every major AI lab in the world.

Hinton received the Turing Award in 2018, shared with Yann LeCun and Yoshua Bengio, for conceptual and engineering breakthroughs that have made deep neural networks a critical component of computing. The 2024 Nobel Prize in Physics, shared with John Hopfield, recognized their foundational contributions to machine learning with artificial neural networks. He is a Fellow of the Royal Society, a Fellow of the Association for the Advancement of Artificial Intelligence, and has received numerous other honors including the BBVA Foundation Frontiers of Knowledge Award and the IEEE James Clerk Maxwell Medal.

The frameworks used by millions of developers today, Python libraries such as TensorFlow (developed at Google Brain, where Hinton worked for a decade), PyTorch, and JAX, implement the core algorithms and architectural principles that Hinton developed or inspired. When a developer opens a code editor and imports a deep learning library, they are working with tools whose theoretical foundations trace back to his work. The programming languages used to build these systems, from Python to lower-level languages like C and C++ that power the underlying compute infrastructure, serve as vehicles for the neural network computations he helped pioneer.

Perhaps most importantly, Hinton demonstrated that scientific persistence in the face of mainstream skepticism can yield transformative results. For decades, he worked in an area that most of his peers considered a dead end. The fact that he was ultimately proven right is both a personal triumph and a lesson about the nature of scientific progress. As Grace Hopper is often quoted as saying, the most dangerous phrase in the language is “we’ve always done it this way.” Hinton’s career is proof that questioning consensus, when grounded in genuine insight, can change the world.

```python
"""
Dropout regularization, one of Hinton's most practical contributions.
During training, randomly zero out neuron activations to prevent
co-adaptation and improve generalization.
"""
import numpy as np


class DropoutLayer:
    """
    Hinton et al. (2012) showed that randomly dropping neurons
    during training acts as an efficient ensemble method,
    dramatically reducing overfitting in deep networks.
    """

    def __init__(self, dropout_rate=0.5):
        self.dropout_rate = dropout_rate
        self.mask = None

    def forward(self, x, training=True):
        if training:
            # Generate binary mask: each neuron has probability
            # (1 - dropout_rate) of being kept active
            self.mask = (np.random.rand(*x.shape) > self.dropout_rate)
            # Scale by 1/(1-p) so expected values remain the same.
            # This is "inverted dropout": no scaling needed at test time
            return x * self.mask / (1 - self.dropout_rate)
        else:
            # At inference time, use all neurons (no dropout)
            return x

    def backward(self, grad_output):
        # Gradient flows only through neurons that were kept active.
        # Assumes forward(..., training=True) was called first so the mask exists.
        return grad_output * self.mask / (1 - self.dropout_rate)


# Demonstration: the effect of dropout on a single activation vector
np.random.seed(42)
activations = np.random.randn(1, 10)
dropout = DropoutLayer(dropout_rate=0.5)

print("Original activations:", activations.round(3))
print("After dropout (train):", dropout.forward(activations, training=True).round(3))
print("After dropout (test): ", dropout.forward(activations, training=False).round(3))
# During training: roughly half the neurons are zeroed, the rest are scaled up
# During testing: all neurons active, no scaling needed
```

Key Facts

- Born: December 6, 1947, Wimbledon, London, England

- Known for: Backpropagation for neural networks, Boltzmann machines, deep belief networks, dropout regularization, capsule networks, knowledge distillation

- Key projects: Backpropagation paper (1986), Deep belief networks (2006), AlexNet (2012, with students), Dropout (2012), Capsule networks (2017)

- Awards: Turing Award (2018, shared with LeCun and Bengio), Nobel Prize in Physics (2024, shared with Hopfield), Fellow of the Royal Society, BBVA Frontiers of Knowledge Award

- Education: B.A. in Experimental Psychology from King’s College, Cambridge (1970), Ph.D. in Artificial Intelligence from University of Edinburgh (1978)

- Career: Carnegie Mellon University (1982-1987), University of Toronto (1987-2023), Google Brain (2013-2023)

- Notable students and postdocs: Yann LeCun (postdoc), Ilya Sutskever, Alex Krizhevsky, Ruslan Salakhutdinov, Radford Neal

- Family: Great-great-grandson of George Boole (inventor of Boolean algebra)

Frequently Asked Questions

Who is Geoffrey Hinton and why is he called the Godfather of Deep Learning?

Geoffrey Hinton is a British-Canadian computer scientist and cognitive psychologist who is widely regarded as one of the most important figures in the development of deep learning. He is called the “Godfather of Deep Learning” because he spent over four decades developing the theoretical foundations and practical algorithms that made modern deep neural networks possible. His key contributions include popularizing the backpropagation algorithm for training multi-layer neural networks (1986), co-inventing Boltzmann machines, developing deep belief networks that made very deep networks practical to train (2006), and co-inventing dropout regularization (2012). His students and postdocs, including Yann LeCun (Meta’s Chief AI Scientist) and Ilya Sutskever (co-founder of OpenAI), have gone on to lead some of the most influential AI laboratories in the world. He received the Turing Award in 2018 and the Nobel Prize in Physics in 2024.

What is backpropagation and why was it important for AI?

Backpropagation (short for “backward propagation of errors”) is the algorithm used to train multi-layer neural networks. It works by computing the gradient of the loss function with respect to each weight in the network, using the chain rule of calculus to propagate error signals backward from the output layer through all hidden layers to the input. The 1986 Nature paper by Rumelhart, Hinton, and Williams demonstrated that backpropagation could train networks to learn useful internal representations — features that were not explicitly programmed but emerged from the training process. This was crucial because it solved the “credit assignment problem” (determining which weights are responsible for errors in deep networks) and showed that multi-layer networks could learn complex, non-linear functions that single-layer perceptrons could not. Backpropagation remains the fundamental training algorithm for virtually all deep learning systems today, from large language models to image recognition systems to autonomous driving software.

Why did Geoffrey Hinton leave Google and what are his concerns about AI?

Geoffrey Hinton resigned from his position at Google in May 2023 to speak freely about the potential risks of advanced artificial intelligence. After spending a decade at Google Brain, he concluded that AI systems were advancing faster than he had anticipated and that the risks warranted public attention. His primary concerns include the possibility that AI systems could become more intelligent than humans within the foreseeable future, that such systems might be difficult to control or align with human values, the potential for AI to be used for large-scale manipulation and disinformation, and the economic disruption from widespread automation. Hinton has emphasized that he does not regret his life’s work on neural networks but believes that society needs to take the risks of advanced AI seriously and develop safeguards before the technology becomes uncontrollable. His willingness to critique the industry he helped build has given significant weight to the AI safety movement.