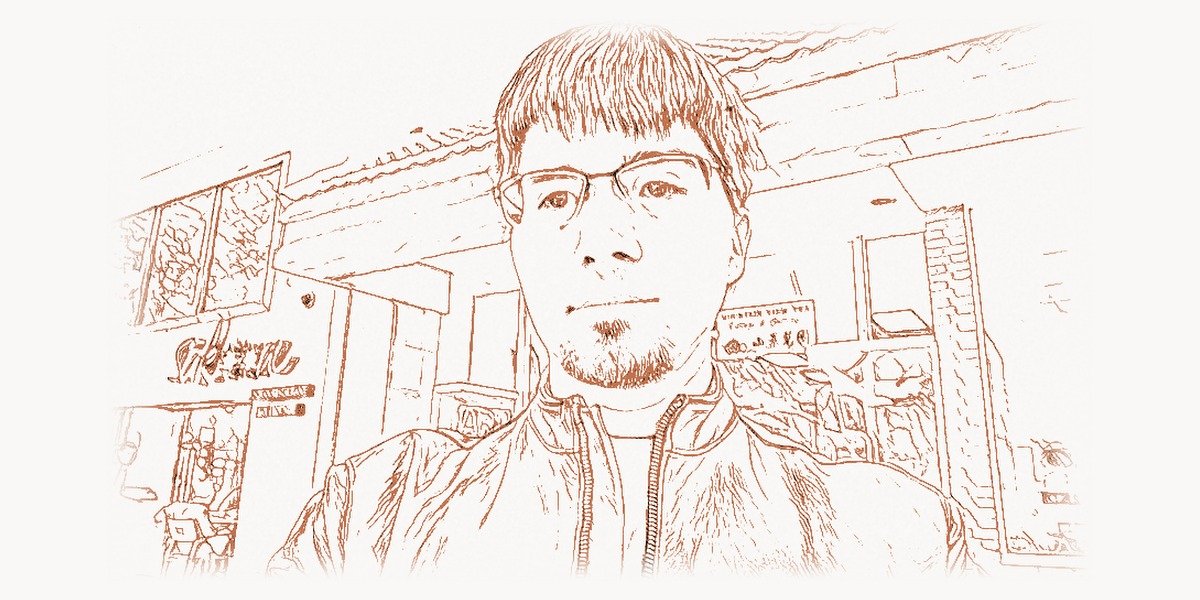

The idea came to him at a bar. In June 2014, Ian Goodfellow — then a 28-year-old Ph.D. student at the Université de Montréal working under Yoshua Bengio — was drinking with fellow researchers after a colleague’s going-away party. The conversation turned to a persistent frustration in generative modeling: how do you teach a neural network to produce realistic images from scratch? Existing methods were slow, produced blurry results, and required painstaking hand-engineering of probability distributions. Goodfellow’s colleagues had been trying to make a statistical approach work, layering complex mathematical machinery to approximate the intractable distributions that describe natural images. Goodfellow proposed something different, something almost absurdly elegant: what if you trained two neural networks against each other — one to generate fake data and one to detect fakes — and let their adversarial competition drive both toward perfection? His friends were skeptical. He went home, coded it up that same night, and it worked on the first try. The generative adversarial network — the GAN — was born, and with it an entirely new paradigm for machine learning that would reshape computer vision, art, medicine, security, and our very understanding of what artificial intelligence can create.

Early Life and Education

Ian Jacob Goodfellow was born on March 20, 1986, in the United States. He grew up in a household that valued education and intellectual curiosity, though his path to becoming one of the most influential figures in modern AI was far from predetermined. As a student, Goodfellow showed an early aptitude for mathematics and computer science — the kind of combined analytical and engineering mindset that would later allow him to bridge the gap between theoretical machine learning and practical implementation with unusual fluency.

Goodfellow completed his undergraduate and master’s degrees at Stanford University, where he studied computer science. Stanford’s proximity to Silicon Valley and its deep bench of machine learning researchers provided an environment where theoretical ambition and practical engineering culture coexisted naturally. During his time at Stanford, Goodfellow was exposed to the full breadth of machine learning — from kernel methods and graphical models to the early resurgence of neural networks that would soon transform the field. He worked with Andrew Ng, one of the most prominent advocates for deep learning’s practical applications and a co-founder of the Google Brain project. This early exposure to both the theoretical foundations and the large-scale engineering challenges of machine learning shaped Goodfellow’s distinctive approach: always grounded in rigorous mathematics, but always aimed at building systems that actually work on real data.

For his doctoral studies, Goodfellow moved to the Université de Montréal to work with Yoshua Bengio at Mila (then called the Montreal Institute for Learning Algorithms). This was not a random choice. By 2010, Bengio’s lab had become one of the three most important centers for deep learning research in the world, alongside Geoffrey Hinton’s group in Toronto and Yann LeCun’s team at NYU. Bengio’s emphasis on understanding generative models, representation learning, and the theoretical underpinnings of deep networks created exactly the intellectual ecosystem in which the GAN idea could germinate. Montreal in the early 2010s was a place where researchers argued about the right mathematical frameworks for describing what neural networks learn, where unsupervised learning was taken seriously as a research direction when much of the field chased supervised benchmarks, and where wild ideas were given room to breathe. Goodfellow thrived in this environment.

The GAN Breakthrough

Technical Innovation

The core idea behind generative adversarial networks is deceptively simple, which is part of what makes it so powerful. Goodfellow proposed a framework in which two neural networks are trained simultaneously in a minimax game. The first network — the generator — takes random noise as input and attempts to produce data samples (such as images) that are indistinguishable from real data. The second network — the discriminator — receives both real data samples and the generator’s fakes, and attempts to correctly classify which is which. The generator is trained to fool the discriminator, and the discriminator is trained to catch the generator. As training progresses, both networks improve: the generator produces increasingly realistic outputs, and the discriminator becomes increasingly discerning. At equilibrium, the generator produces samples that are statistically indistinguishable from the real training data, and the discriminator is reduced to random guessing.

Mathematically, the GAN objective is expressed as a minimax optimization over a value function. The discriminator tries to maximize the probability of correctly labeling real and generated samples, while the generator tries to minimize the discriminator’s ability to distinguish fakes from reality. Goodfellow showed that, when the discriminator is optimal for the current generator, this adversarial process is equivalent to minimizing the Jensen-Shannon divergence between the real data distribution and the generator’s output distribution. That result gave the framework its theoretical grounding and connected it to well-understood concepts in information theory.
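Written out, the game looks as follows. The symbols are assumptions introduced here, since the text above names none: $p_{\text{data}}$ is the real data distribution, $p_z$ the noise prior, $p_g$ the distribution of generated samples, $D(x)$ the discriminator's estimated probability that $x$ is real, and $G(z)$ the generator's output for noise $z$.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

For a fixed generator, the discriminator that maximizes $V$ is

$$
D^*_G(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}
$$

and substituting it back into the value function gives

$$
V(D^*_G, G) = -\log 4 + 2\,\mathrm{JSD}\big(p_{\text{data}} \,\|\, p_g\big)
$$

Because the Jensen-Shannon divergence is non-negative and zero only when the two distributions coincide, the minimum value $-\log 4$ is reached exactly when $p_g = p_{\text{data}}$. At that point $D^*_G(x) = 1/2$ everywhere, which is the "random guessing" equilibrium described above.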

```python
import numpy as np


class SimpleGAN:
    """
    Minimal GAN skeleton illustrating Goodfellow's core idea:
    two networks locked in adversarial competition.

    The generator learns to produce data that fools the discriminator.
    The discriminator learns to distinguish real data from fakes.
    At convergence, generated samples match the real data distribution.

    This sketch runs the forward passes and evaluates both losses only;
    the backpropagation updates are omitted for brevity.
    """

    def __init__(self, data_dim=1, hidden_dim=16, lr=0.01):
        # Learning rate a full implementation would use; unused in this sketch
        self.lr = lr

        # Generator: maps random noise to data space
        self.g_w1 = np.random.randn(1, hidden_dim) * 0.1
        self.g_b1 = np.zeros(hidden_dim)
        self.g_w2 = np.random.randn(hidden_dim, data_dim) * 0.1
        self.g_b2 = np.zeros(data_dim)

        # Discriminator: maps data to probability of being real
        self.d_w1 = np.random.randn(data_dim, hidden_dim) * 0.1
        self.d_b1 = np.zeros(hidden_dim)
        self.d_w2 = np.random.randn(hidden_dim, 1) * 0.1
        self.d_b2 = np.zeros(1)

    def sigmoid(self, x):
        # Clip the argument so np.exp cannot overflow
        return 1.0 / (1.0 + np.exp(-np.clip(x, -500, 500)))

    def relu(self, x):
        return np.maximum(0, x)

    def generate(self, noise):
        """Generator forward pass: noise → fake data."""
        h = self.relu(noise @ self.g_w1 + self.g_b1)
        return h @ self.g_w2 + self.g_b2

    def discriminate(self, x):
        """Discriminator forward pass: data → P(real)."""
        h = self.relu(x @ self.d_w1 + self.d_b1)
        return self.sigmoid(h @ self.d_w2 + self.d_b2)

    def train_step(self, real_data, batch_size=32):
        """
        Loss evaluation for one step of the adversarial game:
        1. Score the discriminator on separating real from fake
        2. Score the generator on fooling the discriminator

        This minimax dynamic is Goodfellow's key insight:
        competition between networks drives both toward optimality.
        A full training step would follow each loss with a gradient
        update of the corresponding network; that part is not shown.
        """
        # Sample noise and generate fake data
        noise = np.random.randn(batch_size, 1)
        fake_data = self.generate(noise)

        # Discriminator scores
        d_real = self.discriminate(real_data)
        d_fake = self.discriminate(fake_data)

        # Discriminator loss: maximize log(D(x)) + log(1 - D(G(z)))
        d_loss = -np.mean(np.log(d_real + 1e-8) + np.log(1 - d_fake + 1e-8))

        # Generator loss: minimize log(1 - D(G(z)))
        # In practice, maximize log(D(G(z))) for stronger gradients
        g_loss = -np.mean(np.log(d_fake + 1e-8))

        return d_loss, g_loss, np.mean(fake_data)


# Conceptual training loop (losses only; no weights are updated here)
gan = SimpleGAN()
target_mean = 4.0  # Real data: samples from N(4, 0.5)

for step in range(1000):
    real_samples = np.random.randn(32, 1) * 0.5 + target_mean
    d_loss, g_loss, gen_mean = gan.train_step(real_samples)

# As written, gen_mean stays near its initial value because nothing is updated.
# With gradient updates added, the generator would be pushed toward the
# real distribution through adversarial play.
```

What made this framework revolutionary was not just its elegance but its generality. Previous generative models (variational autoencoders, restricted Boltzmann machines, autoregressive models) each required specific architectural constraints or tractable likelihood functions. GANs required neither. The generator could be any differentiable function, the discriminator could be any binary classifier, and the training procedure was just standard backpropagation applied to both networks alternately. This meant that GANs could, in principle, generate any type of data: images, audio, text, molecular structures, 3D models, medical scans. The framework imposed almost no constraints on the data modality or the network architecture, which gave it extraordinary flexibility.
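The alternating procedure mentioned above can be made concrete with a deliberately tiny example. The sketch below is a toy under stated assumptions, not the paper's implementation: the generator has a single learnable offset `b` (so `G(z) = 0.5 * z + b`), the discriminator is a one-feature logistic regression `D(x) = sigmoid(w * x + c)`, the gradients are derived by hand, and the function names, learning rate, and step count are all hypothetical choices made for illustration. It uses only the standard library.

```python
import math
import random


def stable_sigmoid(t):
    """Logistic function written to avoid overflow for large |t|."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=300, batch=64, lr=0.05, target_mean=4.0, seed=0):
    """Alternate one discriminator step and one generator step per iteration."""
    rng = random.Random(seed)
    b = 0.0          # generator parameter: G(z) = 0.5 * z + b
    w, c = 0.0, 0.0  # discriminator parameters: D(x) = sigmoid(w * x + c)
    history = []     # value of b after each iteration

    for _ in range(steps):
        real = [rng.gauss(target_mean, 0.5) for _ in range(batch)]
        fake = [0.5 * rng.gauss(0.0, 1.0) + b for _ in range(batch)]

        # Discriminator step: gradient descent on
        # -[log D(real) + log(1 - D(fake))], averaged over the batch.
        grad_w = grad_c = 0.0
        for x in real:
            err = stable_sigmoid(w * x + c) - 1.0  # d(-log D)/d(logit)
            grad_w += err * x
            grad_c += err
        for x in fake:
            err = stable_sigmoid(w * x + c)        # d(-log(1 - D))/d(logit)
            grad_w += err * x
            grad_c += err
        w -= lr * grad_w / batch
        c -= lr * grad_c / batch

        # Generator step: gradient descent on the non-saturating loss
        # -log D(G(z)); since dG/db = 1, the gradient is (D - 1) * w.
        grad_b = sum((stable_sigmoid(w * x + c) - 1.0) * w for x in fake) / batch
        b -= lr * grad_b

        history.append(b)

    return b, w, c, history


final_b, final_w, final_c, history = train_toy_gan()
print(f"offset after first step: {history[0]:.4f}")
print(f"offset after last step:  {final_b:.4f} (target mean 4.0)")
```

In the first iterations the discriminator learns that larger values look more "real", and the generator's offset moves upward in response. Whether the offset then settles near the target is not guaranteed: small unregularized GANs like this one are known to overshoot and oscillate, which is one reason practical GAN training is considered delicate.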

The original GAN paper, published at the Conference on Neural Information Processing Systems (NeurIPS) in 2014, was co-authored with Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. The paper’s clarity and the simplicity of its core idea made it immediately accessible, while its theoretical analysis — proving convergence under ideal conditions — gave it intellectual weight. It became one of the most cited machine learning papers of the decade.
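The Jensen-Shannon result mentioned earlier can be spot-checked numerically on small discrete distributions. The sketch below assumes the standard closed form for the optimal discriminator, `D*(x) = p_data(x) / (p_data(x) + p_g(x))`, and compares the value function at that optimum with `-log 4 + 2 * JSD`. The two example distributions and all function names are made up for illustration.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) in nats; terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence: average KL to the midpoint distribution."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


def value_at_optimal_discriminator(p_data, p_g):
    """E_data[log D*(x)] + E_g[log(1 - D*(x))] for discrete distributions."""
    total = 0.0
    for pd, pg in zip(p_data, p_g):
        if pd + pg == 0:
            continue  # outcome has no mass under either distribution
        d_star = pd / (pd + pg)
        if pd > 0:
            total += pd * math.log(d_star)
        if pg > 0:
            total += pg * math.log(1.0 - d_star)
    return total


# Hypothetical distributions over four outcomes
p_data = [0.1, 0.4, 0.4, 0.1]
p_g = [0.4, 0.3, 0.2, 0.1]

lhs = value_at_optimal_discriminator(p_data, p_g)
rhs = -math.log(4) + 2 * js_divergence(p_data, p_g)
print(f"V(D*, G)         = {lhs:.6f}")
print(f"-log 4 + 2 * JSD = {rhs:.6f}")

# When the generator matches the data exactly, the value drops to -log 4
matched = value_at_optimal_discriminator(p_data, p_data)
print(f"matched generator: {matched:.6f} vs -log 4 = {-math.log(4):.6f}")
```

The two printed quantities agree up to floating-point error, and the matched case gives the lowest value the generator can reach against an optimal discriminator.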

Why It Mattered

Before GANs, generating realistic images with neural networks was essentially impossible. The best generative models produced blurry, low-resolution outputs that were immediately recognizable as machine-generated. GANs changed this almost overnight. Within a few years of the original 2014 paper, researchers had developed GAN variants (DCGAN, Progressive GAN, StyleGAN) capable of generating photorealistic faces, landscapes, and objects at high resolution. By 2018, GAN-generated faces were indistinguishable from photographs to the human eye. By 2020, NVIDIA’s StyleGAN2 could produce images of people who had never existed with such fidelity that the technology raised profound questions about the nature of visual evidence itself.

The practical applications multiplied rapidly. In medicine, GANs were used to augment small training datasets for rare disease detection, generating synthetic medical images that helped classifiers learn without requiring thousands of real patient scans. In art and design, GANs enabled entirely new creative workflows: style transfer, image-to-image translation (turning sketches into photorealistic renderings), and even the generation of novel artworks that sold at auction houses. In science, GANs were applied to drug discovery (generating candidate molecular structures), physics simulations (accelerating computationally expensive simulations by orders of magnitude), and climate modeling.

But GANs also created an entirely new category of problems. The same technology that could generate photorealistic faces could also create deepfakes: convincing fake videos of real people saying or doing things they never did. The implications for politics, journalism, personal privacy, and trust in visual media were enormous and immediate. Goodfellow himself was acutely aware of this dual-use problem from the beginning and spent significant effort on adversarial robustness, a closely related line of work on making machine learning models resistant to deliberately crafted inputs.

Other Major Contributions

Adversarial Examples and Robustness

Goodfellow’s second most influential contribution to the field — and in some ways the most consequential for AI safety — was his work on adversarial examples. In 2014, the same year he published the GAN paper, Goodfellow co-authored research demonstrating that state-of-the-art neural networks could be fooled by imperceptible perturbations to their inputs. A carefully crafted noise pattern, invisible to the human eye, could cause an image classifier to confidently misidentify a panda as a gibbon, a stop sign as a speed limit sign, or a benign mole as a malignant tumor. These adversarial examples were not obscure edge cases — they revealed a fundamental fragility in how deep neural networks process information.

Goodfellow developed the Fast Gradient Sign Method (FGSM), a simple and efficient algorithm for generating adversarial examples by computing the gradient of the loss function with respect to the input and perturbing each pixel in the direction that maximizes the loss. FGSM was important not because it was the most powerful attack — more sophisticated methods were developed later — but because its simplicity demonstrated that adversarial vulnerability was not a bug in specific architectures but a pervasive property of high-dimensional classifiers trained with standard methods.
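In symbols, the method is a single step. The notation here is an assumption chosen for illustration: $x$ is the original input, $y$ its true label, $\theta$ the model parameters, $J(\theta, x, y)$ the training loss, and $\epsilon$ the perturbation budget.

$$
x_{\text{adv}} = x + \epsilon \cdot \operatorname{sign}\big(\nabla_x J(\theta, x, y)\big)
$$

Every coordinate of the input moves by at most $\epsilon$, so the perturbation satisfies $\|x_{\text{adv}} - x\|_\infty \le \epsilon$ no matter how many dimensions the input has.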

```python
import numpy as np


def fgsm_attack(model_gradient_fn, input_data, true_label, epsilon=0.01):
    """
    Fast Gradient Sign Method (FGSM), Goodfellow et al., 2014.

    Generates an adversarial example by perturbing the input
    in the direction that maximizes the classification loss.
    The perturbation is small (bounded by epsilon per pixel)
    but can cause confident misclassification in deep networks.

    This revealed a fundamental vulnerability: neural networks
    are sensitive to directions in input space that humans ignore.

    Parameters:
        model_gradient_fn: returns dLoss/dInput for the given input and label
        input_data: original correctly-classified input (e.g., an image)
        true_label: the correct class label
        epsilon: maximum perturbation magnitude (small = imperceptible)

    Returns:
        adversarial_input: perturbed input intended to fool the classifier
    """
    # Compute gradient of loss w.r.t. input pixels
    gradient = model_gradient_fn(input_data, true_label)

    # Perturb each pixel in the direction that increases loss
    # sign() ensures uniform perturbation magnitude across all dimensions
    perturbation = epsilon * np.sign(gradient)

    # Create adversarial example
    adversarial_input = input_data + perturbation

    # Clip to valid pixel range [0, 1]
    adversarial_input = np.clip(adversarial_input, 0.0, 1.0)

    return adversarial_input


# Example from the original FGSM paper: a perturbation of epsilon=0.007
# is invisible to humans but flips the classifier's prediction
# from "panda" (57.7% confidence) to "gibbon" (99.3% confidence).
#
# This finding had profound implications for deploying neural networks
# in safety-critical systems: autonomous vehicles, medical diagnosis,
# security screening, anywhere misclassification has real consequences.
```

This line of research had immediate practical implications. If neural networks deployed in autonomous vehicles, medical imaging, or security systems could be trivially fooled by imperceptible modifications to their inputs, the entire premise of deploying deep learning in safety-critical applications was called into question. Goodfellow’s work on adversarial examples helped establish adversarial machine learning for deep networks as a field in its own right, a now-enormous research area focused on understanding, generating, and defending against adversarial attacks. He also contributed to adversarial training, a defense method where the model is explicitly trained on adversarial examples to improve its robustness. Notably, that technique mirrors the adversarial dynamic at the heart of GANs.
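A small worked case helps show why such tiny per-pixel changes matter in high dimensions. The sketch below is a hypothetical setup, not taken from any paper's code: a 100-feature logistic regression classifier with made-up weights of magnitude 0.1, attacked with the sign-of-gradient step described above. Each feature moves by only 0.05, but the shifts all push the logit the same way, so the logit changes by `epsilon * sum(|w|)`, which is enough to flip the prediction.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def logit(weights, bias, x):
    """Linear score w . x + b; positive means class 1."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def loss_gradient_wrt_input(weights, bias, x, label):
    """Gradient of the cross-entropy loss w.r.t. x for logistic regression."""
    p = sigmoid(logit(weights, bias, x))
    return [(p - label) * w for w in weights]


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(weights, bias, x, label, epsilon):
    """One sign-of-gradient step, clipped to the valid range [0, 1]."""
    grad = loss_gradient_wrt_input(weights, bias, x, label)
    return [min(1.0, max(0.0, xi + epsilon * sign(g))) for xi, g in zip(x, grad)]


# Hypothetical classifier: 100 features, weights alternate +0.1 / -0.1
n = 100
weights = [0.1 if i % 2 == 0 else -0.1 for i in range(n)]
bias = 0.0

# Hypothetical input with true label 1; its logit is about 0.3
x = [0.53 if i % 2 == 0 else 0.47 for i in range(n)]

epsilon = 0.05
x_adv = fgsm(weights, bias, x, label=1, epsilon=epsilon)

# The logit shifts by epsilon * sum(|w|) = 0.05 * 10 = 0.5
clean_logit = logit(weights, bias, x)
adv_logit = logit(weights, bias, x_adv)
max_change = max(abs(a - b) for a, b in zip(x_adv, x))

print(f"clean logit: {clean_logit:.3f} -> predicted class {int(clean_logit > 0)}")
print(f"adversarial logit: {adv_logit:.3f} -> predicted class {int(adv_logit > 0)}")
print(f"largest per-feature change: {max_change:.3f}")
```

With ten times as many features and the same weights, the same `epsilon` would shift the logit ten times as far, which is the intuition behind calling adversarial vulnerability a property of high-dimensional classifiers rather than of any one architecture.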

The Deep Learning Textbook

In 2016, Goodfellow, along with Yoshua Bengio and Aaron Courville, published Deep Learning — a comprehensive textbook that became the standard reference for the field. The book was remarkable for several reasons. It covered the full stack of deep learning, from the mathematical prerequisites (linear algebra, probability, information theory, numerical optimization) through the core architectures (feedforward networks, convolutional networks, recurrent networks, autoencoders) to the research frontier (generative models, structured probabilistic models, Monte Carlo methods). Its treatment was simultaneously rigorous — accessible to graduate students in mathematics and physics — and practical, with enough implementation detail to serve as a guide for engineers building real systems.

The Deep Learning textbook filled a critical gap. In 2016, the field was growing so rapidly that most learning resources were scattered across research papers, blog posts, and online courses of varying quality. Goodfellow, Bengio, and Courville provided a unified, authoritative treatment that gave the field intellectual coherence at exactly the moment it needed it. The book was made freely available online, which dramatically expanded its reach. It has been cited tens of thousands of times and remains one of the most widely used resources for anyone, from undergraduate students to experienced researchers pivoting into deep learning, seeking to understand the mathematical and conceptual foundations of modern AI.

Philosophy and Approach

Key Principles

Goodfellow’s research philosophy is characterized by several distinctive commitments that explain both the nature of his contributions and his unusually broad impact across multiple subfields of machine learning.

Simplicity as a design principle. The GAN framework is breathtaking in its simplicity. Two networks, one loss function, standard backpropagation. No complex inference procedures, no tractability constraints on the generative model, no hand-crafted features. Goodfellow consistently sought the simplest possible formulation of a problem, not because he was unaware of complexity but because he understood that simple ideas scale, generalize, and inspire further innovation in ways that complex ones rarely do. The same principle guided his work on adversarial examples: FGSM is a one-step, closed-form attack that anyone can implement in a few lines of code, yet it exposed a vulnerability that an entire research community had missed. This preference for elegance over machinery is a hallmark of the most consequential contributions in computer science.

Adversarial thinking as a methodology. Both of Goodfellow’s most famous contributions — GANs and adversarial examples — are rooted in the same intellectual framework: progress through opposition. In GANs, the generator improves because it must fool a discriminator that is simultaneously improving. In adversarial machine learning, models become more robust by being exposed to the very attacks that exploit their weaknesses. This adversarial framing is not just a technical trick — it reflects a deeper belief that the most robust systems are those that have been stress-tested against intelligent adversaries, not just evaluated on curated benchmarks. It is an engineering philosophy with deep roots in game theory, evolutionary biology, and cryptography.

Responsibility for dual-use technologies. Goodfellow’s career trajectory after inventing GANs is revealing. Rather than focusing exclusively on generating ever-more-impressive images, he invested heavily in understanding and mitigating the risks created by generative AI. His work on adversarial robustness, his public commentary on deepfakes, and his research on machine learning security all reflect a conviction that researchers bear responsibility for the downstream consequences of their inventions. This stance became increasingly important as GAN-generated deepfakes emerged as a real threat to information integrity — a problem that Goodfellow had anticipated years before it entered public consciousness.

The value of moving between academia and industry. Unlike researchers who plant themselves firmly in one world, Goodfellow deliberately moved between academic and industrial settings: from Bengio’s lab at Mila to Google Brain, then to OpenAI, back to Google Brain, on to Apple’s machine learning division, and later to DeepMind. Each move was motivated by a different set of research priorities and opportunities. At Google Brain, he had access to computational resources and engineering infrastructure that no university lab could match. At OpenAI, he worked on safety and policy questions in an environment explicitly organized around long-term AI risk. At Apple, he brought his expertise to bear on privacy-preserving machine learning and on-device AI. This itinerant approach gave Goodfellow an unusually broad perspective on both the capabilities and the limitations of modern AI systems, and it allowed his ideas to propagate across multiple organizations and research cultures.

Legacy and Impact

Ian Goodfellow’s impact on artificial intelligence is both deep and broad. The GAN framework he invented at age 28 did not merely introduce a new algorithm — it created an entirely new paradigm for generative modeling that spawned thousands of research papers, dozens of practical applications, and fundamental questions about the nature of artificial creativity. Within a decade of the original 2014 paper, GAN-derived techniques were being used to generate photorealistic images, create synthetic training data for medical AI systems, accelerate scientific simulations, design new materials and molecules, and produce the deepfakes that have reshaped public discourse about trust and visual evidence.

His work on adversarial examples was equally foundational. By demonstrating that state-of-the-art neural networks could be fooled by imperceptible input perturbations, Goodfellow forced the entire field to confront the gap between benchmark performance and real-world robustness. The adversarial machine learning research community that grew from his early work now comprises thousands of researchers working on attack methods, defense mechanisms, certified robustness guarantees, and the theoretical foundations of neural network vulnerability. This work has direct implications for the safety of autonomous vehicles, medical AI systems, facial recognition, and every other domain where deep learning is deployed in high-stakes settings.

The Deep Learning textbook he co-authored with Bengio and Courville became the field’s standard reference — a role it has maintained for a decade, through a period of unprecedented growth and change in the discipline. For an entire generation of machine learning researchers and practitioners, Goodfellow, Bengio, and Courville’s book was their entry point into the mathematical foundations of deep learning. Its influence on the field’s intellectual culture — its emphasis on mathematical rigor, conceptual clarity, and the unity of theory and practice — is difficult to overstate.

Goodfellow’s career also illustrates a broader truth about the current state of AI research: the most consequential contributions often come from individuals who combine deep theoretical understanding with practical engineering skills and who are willing to work across institutional boundaries. His ability to move between the deep learning theory of Bengio’s lab, the engineering culture of Google Brain, the safety-focused environment of OpenAI, and the product-oriented world of Apple gave his ideas a reach and an influence that purely academic work rarely achieves.

At its core, Goodfellow’s legacy is about the productive tension between creation and critique. GANs embody this duality — a generator creating and a discriminator critiquing, each pushing the other toward excellence. Adversarial examples embody the same principle — attacks that expose weaknesses, driving the development of more robust defenses. This adversarial philosophy, applied recursively and rigorously across the full stack of machine learning, is Goodfellow’s most enduring contribution to the field. It is a framework for thinking about AI that will remain relevant long after any specific architecture or algorithm has been superseded by the next generation of innovation.

Key Facts

- Full name: Ian Jacob Goodfellow

- Born: 1986, United States

- Education: B.S. and M.S. in Computer Science, Stanford University; Ph.D. in Machine Learning, Université de Montréal (advisor: Yoshua Bengio)

- Known for: Inventing Generative Adversarial Networks (GANs, 2014), Fast Gradient Sign Method (FGSM) for adversarial examples, co-authoring the Deep Learning textbook

- Career: Google Brain, OpenAI, Google Brain (second stint), Apple ML/AI, DeepMind

- GAN paper citations: Over 70,000 — one of the most cited papers in machine learning history

- Textbook: Deep Learning (2016, with Bengio and Courville) — the standard reference for the field

- Awards: MIT Technology Review 35 Innovators Under 35 (2017), GAN paper named among the top 10 most influential ML papers of the 2010s

- Key innovation: Demonstrated that adversarial competition between neural networks can drive generative quality beyond what any single-network approach achieves

Frequently Asked Questions

What are Generative Adversarial Networks (GANs) and why were they such a big deal?

GANs are a framework in which two neural networks — a generator and a discriminator — are trained against each other. The generator creates fake data (such as images), and the discriminator tries to tell the fakes from real data. Through this adversarial competition, the generator learns to produce increasingly realistic outputs. Before GANs, neural networks could not generate photorealistic images. Within a few years of Goodfellow’s 2014 paper, GAN variants were producing faces, landscapes, and artworks indistinguishable from real photographs. The framework’s simplicity, generality, and extraordinary results made it one of the most influential ideas in the history of machine learning, spawning applications in art, medicine, science, data augmentation, and far beyond.

How did Ian Goodfellow’s work on adversarial examples change AI safety?

Goodfellow demonstrated that deep neural networks can be fooled by tiny, imperceptible changes to their inputs — for example, adding a barely visible noise pattern to an image of a panda causes a classifier to confidently identify it as a gibbon. This discovery was alarming because it revealed that the neural networks being deployed in self-driving cars, medical diagnosis systems, and security applications were fundamentally brittle in ways that benchmark testing did not reveal. Goodfellow’s Fast Gradient Sign Method (FGSM) made adversarial attacks easy to study and replicate, which catalyzed an entire new field — adversarial machine learning — focused on making AI systems robust against intentional manipulation. His work forced the community to reckon with the difference between a model that performs well on test sets and a model that is genuinely reliable in adversarial environments.

What is the connection between GANs and deepfakes?

Deepfakes are synthetic media — typically videos or images — in which a person’s likeness is convincingly replaced or manipulated using AI. The technology behind most deepfakes descends directly from Goodfellow’s GAN framework. GAN variants like StyleGAN can generate photorealistic faces of people who do not exist, and face-swapping systems use GAN-based architectures to replace one person’s face with another’s in video footage. Goodfellow recognized the dual-use potential of GANs early on and invested significant research effort in adversarial robustness and detection methods. The deepfake problem remains one of the most pressing challenges at the intersection of AI and society, and it underscores the broader lesson of Goodfellow’s career: powerful generative technology requires equally powerful safeguards, and the creators of such technology bear a responsibility to help develop those safeguards.