In 2012, a neural network called AlexNet won the ImageNet Large Scale Visual Recognition Challenge by a margin so large that it forced an entire field to change direction almost overnight. Trained on 1.2 million images spanning 1,000 categories, the network achieved a top-5 error rate of 15.3%, nearly eleven percentage points better than the second-place entry, which used hand-engineered features. The paper describing AlexNet, “ImageNet Classification with Deep Convolutional Neural Networks,” has been cited over 120,000 times. Its three authors were Alex Krizhevsky, Geoffrey Hinton, and a 26-year-old Ph.D. student named Ilya Sutskever. That moment, when deep learning went from a fringe idea championed by a handful of researchers to the dominant paradigm in artificial intelligence, is inseparable from Sutskever’s contributions. In the years that followed, Sutskever would co-found OpenAI, serve as its chief scientist for more than eight years, lead research behind the GPT series, and ultimately leave to start a company focused on what he considers the most important problem of the century: ensuring that superintelligent AI is safe. His career traces the arc of modern AI itself, from academic backwater to the most consequential technology of our time.

Early Life and Education

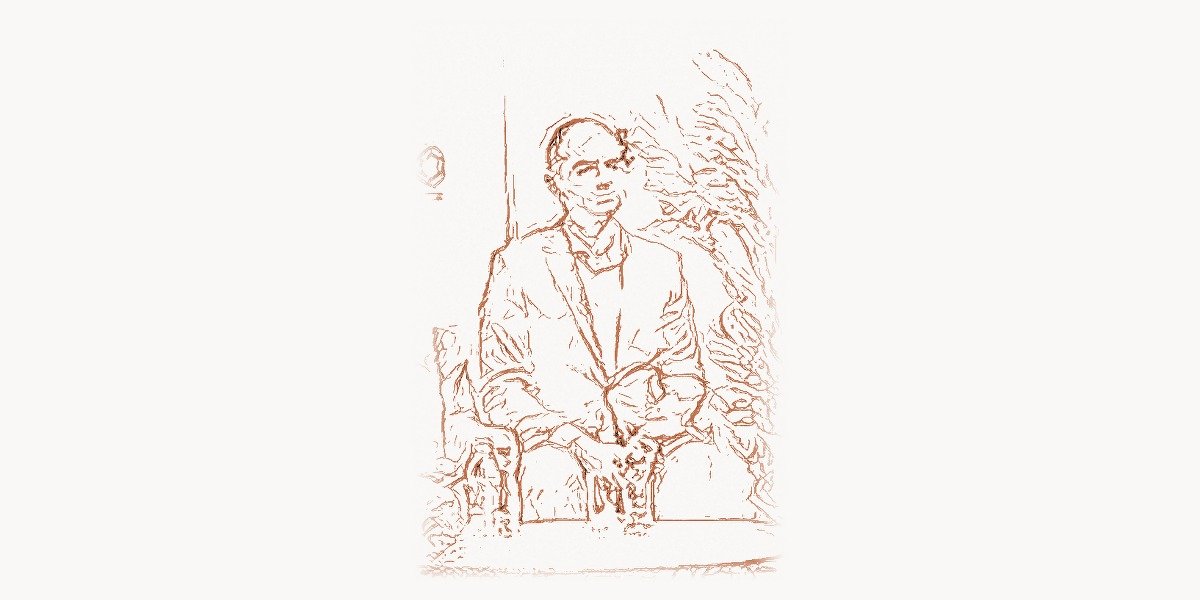

Ilya Sutskever was born in 1986 in Nizhny Novgorod (then Gorky), Russia. His family emigrated to Israel when he was young, and he grew up in Jerusalem. From an early age, Sutskever showed an intense curiosity about how things work at a fundamental level. He was drawn to mathematics and computer science, subjects where the answers are precise and verifiable. At the Open University of Israel, he began studying computer science and mathematics, immersing himself in the theoretical foundations that would later inform his approach to machine learning.

Sutskever’s trajectory changed decisively when he moved to Canada to pursue graduate studies at the University of Toronto. There, he joined the lab of Geoffrey Hinton — one of a small group of researchers who had spent decades working on neural networks when almost everyone else in AI had abandoned them. In the early 2000s, neural networks were widely regarded as a dead end. The dominant approaches to AI were based on support vector machines, random forests, and carefully hand-crafted feature engineering. Hinton, along with Yann LeCun and Yoshua Bengio, had kept the flame of connectionism alive through what would later be called the “AI winter” for deep learning. Sutskever became one of Hinton’s most important students — a researcher with both the mathematical sophistication to understand why deep networks should work and the engineering instinct to make them work in practice.

During his Ph.D., Sutskever developed a deep understanding of optimization, the core mathematical process by which neural networks learn. His thesis work explored training recurrent neural networks and understanding the dynamics of gradient descent in high-dimensional spaces. He studied the pathologies that make training deep networks difficult — vanishing gradients, saddle points, the geometry of loss landscapes — and developed practical techniques for overcoming them. This combination of theoretical depth and practical engineering would become Sutskever’s signature as a researcher.

The AlexNet Breakthrough

Technical Innovation

The AlexNet paper, published at NeurIPS 2012 (then called NIPS), was a collaboration between Sutskever, Alex Krizhevsky, and Geoffrey Hinton. The network itself was a deep convolutional neural network (CNN) with eight learned layers, five convolutional and three fully connected, containing approximately 60 million parameters. By today’s standards, this is tiny; GPT-4 is rumored to have over a trillion parameters. But in 2012, it was one of the largest convolutional networks ever trained, and the combination of techniques used to train it proved revolutionary.
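The figure of roughly 60 million parameters can be reproduced with simple arithmetic. The sketch below is a back-of-the-envelope count, assuming the layer shapes reported in the AlexNet paper (including the convolutions that were split across two GPUs) and a 6 × 6 × 256 feature map feeding the first fully connected layer. The helper names are illustrative and do not come from any library.

```python
# Back-of-the-envelope parameter count for AlexNet.
# Layer shapes follow the 2012 paper; helper names are illustrative.

def conv_params(kernel, in_channels, out_channels):
    """Weights plus one bias per output channel."""
    return kernel * kernel * in_channels * out_channels + out_channels

def fc_params(in_features, out_features):
    """Weights plus one bias per output unit."""
    return in_features * out_features + out_features

# Layers 2, 4 and 5 were split across two GPUs, so each kernel
# only sees half of the previous layer's channels.
layers = [
    ("conv1", conv_params(11, 3, 96)),
    ("conv2", conv_params(5, 48, 256)),
    ("conv3", conv_params(3, 256, 384)),
    ("conv4", conv_params(3, 192, 384)),
    ("conv5", conv_params(3, 192, 256)),
    ("fc6", fc_params(6 * 6 * 256, 4096)),
    ("fc7", fc_params(4096, 4096)),
    ("fc8", fc_params(4096, 1000)),
]

total = sum(count for _, count in layers)
for name, count in layers:
    print(f"{name:>5}: {count:>12,d}")
print(f"total: {total:>12,d}")
```

Under these assumptions the total comes to about 61 million, and the first fully connected layer alone accounts for more than half of it. The convolutional layers, which do most of the feature learning, are comparatively cheap in parameters.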

The key innovations included the use of ReLU (Rectified Linear Unit) activation functions instead of the traditional sigmoid or tanh. Because ReLU does not saturate for positive inputs, it mitigates the vanishing gradient problem and dramatically accelerated training. The network used dropout regularization (randomly zeroing out neurons during training to prevent overfitting), a technique Hinton had recently developed. It employed data augmentation to artificially expand the training set. And critically, it was trained on GPUs, specifically two NVIDIA GTX 580 graphics cards with 3GB of memory each, making it one of the first demonstrations that GPU computing could transform deep learning.
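Data augmentation is the easiest of these ideas to show in a few lines. The following is a minimal sketch, assuming the random 224 × 224 crops and horizontal reflections of 256 × 256 images described in the AlexNet paper. The function name `random_crop_and_flip` is hypothetical, and the paper's additional color-based augmentation is omitted.

```python
import numpy as np

def random_crop_and_flip(image, crop_size=224, rng=None):
    """Return a random crop_size x crop_size patch, mirrored half the time.

    `image` is an (H, W, C) array with H and W at least crop_size.
    """
    rng = np.random.default_rng() if rng is None else rng
    height, width = image.shape[:2]
    top = rng.integers(0, height - crop_size + 1)
    left = rng.integers(0, width - crop_size + 1)
    patch = image[top:top + crop_size, left:left + crop_size]
    if rng.random() < 0.5:
        patch = patch[:, ::-1]  # horizontal reflection
    return patch

# A random array stands in for a real 256 x 256 RGB training image.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)
patches = [random_crop_and_flip(image, rng=rng) for _ in range(4)]
print([p.shape for p in patches])
```

Each call yields a slightly different view of the same image, so the network rarely sees exactly the same input twice even though the underlying dataset is fixed.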

Sutskever’s primary contribution was the training methodology. He developed and tuned the optimization procedure — the specific learning rate schedule, momentum parameters, weight decay, and initialization scheme that made it possible to train such a deep network to convergence. This may sound like mere engineering, but in deep learning, the optimization procedure is everything. A network architecture is just a hypothesis; the optimization procedure is what turns it into knowledge. Sutskever had an exceptional intuition for the dynamics of stochastic gradient descent, understanding how learning rates, batch sizes, and momentum interact in high-dimensional loss landscapes.

    import numpy as np

    # Simplified demonstration of key AlexNet training innovations
    # that Sutskever helped develop

    # ReLU activation: simple but transformative.
    # Unlike sigmoid, gradients don't vanish for positive inputs.
    def relu(x):
        return np.maximum(0, x)

    def relu_gradient(x):
        return (x > 0).astype(float)

    # Sigmoid derivative, used below for comparison with ReLU
    def sigmoid_gradient(x):
        s = 1.0 / (1.0 + np.exp(-x))
        return s * (1.0 - s)

    # Dropout: Hinton's regularization technique used in AlexNet.
    # Randomly zeros neurons during training to prevent co-adaptation.
    def dropout(activations, keep_prob=0.5, training=True):
        if not training:
            # Inverted dropout already rescales during training,
            # so inference uses the activations unchanged.
            return activations
        mask = np.random.binomial(1, keep_prob, size=activations.shape)
        return activations * mask / keep_prob  # inverted dropout scaling

    # SGD with momentum: the optimizer Sutskever fine-tuned for AlexNet.
    # His later work on Nesterov momentum improved this further.
    class SGDMomentum:
        def __init__(self, lr=0.01, momentum=0.9, weight_decay=0.0005):
            self.lr = lr
            self.momentum = momentum
            self.weight_decay = weight_decay
            self.velocity = {}

        def update(self, params, grads):
            for name in params:
                if name not in self.velocity:
                    self.velocity[name] = np.zeros_like(params[name])
                # Weight decay (L2 penalty folded into the gradient).
                # A new array is built so the caller's grads stay untouched.
                grad = grads[name] + self.weight_decay * params[name]
                # Momentum update: accumulates past gradients
                self.velocity[name] = (self.momentum * self.velocity[name]
                                       - self.lr * grad)
                params[name] += self.velocity[name]

    # Learning rate schedule similar to AlexNet training.
    # Sutskever manually tuned when to reduce the learning rate.
    def alexnet_lr_schedule(epoch, initial_lr=0.01):
        """Step schedule: divide the LR by 10 at fixed epochs.

        The original reductions were made by hand when validation error
        plateaued; the epoch boundaries here are illustrative stand-ins.
        """
        if epoch < 30:
            return initial_lr
        elif epoch < 60:
            return initial_lr * 0.1
        elif epoch < 80:
            return initial_lr * 0.01
        else:
            return initial_lr * 0.001

    # Demonstration: training dynamics comparison
    print("AlexNet training innovations that changed deep learning:")
    print(f"  ReLU gradient at x=5.0: {relu_gradient(np.array([5.0]))[0]}")
    print(f"  Sigmoid gradient at x=5.0: {sigmoid_gradient(5.0):.4f}")  # sigmoid saturates
    print("  → ReLU preserves gradients, enabling deeper networks")

Why It Mattered

Before AlexNet, the computer vision community was dominated by hand-engineered feature extraction methods like SIFT, HOG, and bag-of-visual-words. Researchers spent years designing specific algorithms to detect edges, textures, and shapes, then fed these features into traditional classifiers. AlexNet showed that a neural network could learn its own features directly from raw pixels — and that the learned features were dramatically better than anything humans had designed.

The impact was immediate and seismic. Within two years, virtually every competitive entry in ImageNet used deep convolutional networks. Over the following years, deep learning overtook traditional methods in one area of AI after another: speech recognition, natural language processing, game playing, robotics, drug discovery, and protein structure prediction. The field had been searching for the right approach for more than sixty years, ever since Alan Turing posed the question of machine intelligence in 1950. AlexNet provided the answer, or at least a spectacularly productive answer, and Sutskever was one of three people who delivered it.

The Sequence-to-Sequence Revolution

Technical Innovation

In 2014, Sutskever (by then at Google Brain) published "Sequence to Sequence Learning with Neural Networks" with Oriol Vinyals and Quoc Le. This paper helped establish the encoder-decoder architecture for mapping one variable-length sequence to another, the approach behind neural machine translation, text summarization, and conversational AI, and a direct precursor of the transformer-based language models that power modern AI.

The idea was elegantly simple: use one recurrent neural network (the encoder) to read an input sequence and compress it into a fixed-dimensional vector representation, then use a second recurrent neural network (the decoder) to generate an output sequence from that vector. The paper demonstrated this on English-to-French translation, achieving results competitive with the best phrase-based statistical machine translation systems despite using no linguistic knowledge whatsoever — no grammar rules, no parse trees, no word alignment models. The network learned to translate purely from examples.

    import numpy as np

    # Conceptual demonstration of Sutskever's Seq2Seq architecture:
    # the encoder-decoder paradigm that preceded the Transformer

    class Seq2SeqConcept:
        """
        Sutskever et al. (2014): Sequence to Sequence Learning
        with Neural Networks.

        Key insight: compress an entire input sequence into a
        fixed-size vector, then decode it into an output sequence.
        This decouples input and output lengths.
        """

        def __init__(self, hidden_size=256, vocab_size=10000):
            self.hidden_size = hidden_size
            self.vocab_size = vocab_size

        def encode(self, input_sequence):
            """
            Process input tokens one by one through an LSTM.
            The final hidden state captures the 'meaning' of
            the entire input sequence.

            Sutskever's key trick: REVERSE the input sequence.
            "the cat sat" → "sat cat the"
            This places the first input words closer to the first
            output words, improving gradient flow.
            """
            reversed_input = input_sequence[::-1]  # Sutskever's reversal trick
            hidden_state = np.zeros(self.hidden_size)
            for token in reversed_input:
                # LSTM cell processes each token, updating hidden state
                # hidden_state = lstm_cell(token_embedding, hidden_state)
                hidden_state = self._simulate_lstm_step(token, hidden_state)
            # This single vector must capture everything about the input
            context_vector = hidden_state
            return context_vector

        def decode(self, context_vector, max_length=50):
            """
            Generate output tokens one at a time.
            Each step takes the previous output and hidden state
            as input, producing the next token.
            """
            hidden_state = context_vector
            outputs = []
            current_token = "<SOS>"  # start-of-sequence marker
            for _ in range(max_length):
                hidden_state = self._simulate_lstm_step(
                    current_token, hidden_state
                )
                next_token = self._predict_token(hidden_state)
                if next_token == "<EOS>":  # end-of-sequence marker
                    break
                outputs.append(next_token)
                current_token = next_token  # autoregressive generation
            return outputs

        def _simulate_lstm_step(self, token, hidden):
            """Placeholder for LSTM computation (ignores the token)."""
            return np.tanh(hidden * 0.9 + np.random.randn(self.hidden_size) * 0.1)

        def _predict_token(self, hidden):
            """Placeholder for output prediction; always ends the sequence."""
            return "<EOS>"

    # The Seq2Seq paradigm opened the door to:
    # - Neural machine translation (Google Translate, 2016)
    # - Abstractive text summarization
    # - Conversational agents and chatbots
    # - Code generation (translating intent → code)
    # - The attention mechanism → the Transformer → GPT → modern LLMs
    print("Seq2Seq (2014) → Attention (2015) → Transformer (2017) → GPT (2018-)")
    print("Sutskever's encoder-decoder paradigm started this chain.")

A particularly clever detail was Sutskever's discovery that reversing the input sequence significantly improved translation quality. By feeding "the cat sat on the mat" as "mat the on sat cat the," the first words of the input were placed closer in time to the first words of the output, giving the gradient a shorter path and making optimization easier. This kind of insight, a simple trick that makes a hard optimization problem tractable, was characteristic of Sutskever's approach.
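The effect of the reversal can be made concrete by counting time steps. The sketch below is a toy model, assuming a one-to-one word alignment in which the network reads all source words first and then emits one target word per step. The function name `alignment_lags` is illustrative, and real translation pairs are not aligned this neatly.

```python
def alignment_lags(n, reverse_source):
    """Time steps between source word i and target word i.

    Toy assumption: n source words are read first, then n target
    words are produced, one token per time step.
    """
    lags = []
    for i in range(n):
        source_pos = (n - 1 - i) if reverse_source else i
        target_pos = n + i
        lags.append(target_pos - source_pos)
    return lags

n = 6  # "the cat sat on the mat"
forward = alignment_lags(n, reverse_source=False)
reversed_ = alignment_lags(n, reverse_source=True)

print("forward :", forward, "mean", sum(forward) / n)
print("reversed:", reversed_, "mean", sum(reversed_) / n)
```

In this toy setting the average lag is the same in both orders, but reversal shrinks the lag for the earliest words from six steps to one. That matches the intuition in the paragraph above: the start of the output gets a short path back to the words it depends on, which gives optimization an easier foothold.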

Sequence-to-sequence learning laid the conceptual foundation for the attention mechanism (Bahdanau et al., 2015), which extended the encoder-decoder framework by allowing the decoder to look back at all encoder states rather than relying on a single compressed vector. This in turn led directly to the Transformer architecture (Vaswani et al., 2017), which replaced recurrence entirely with self-attention. The Transformer is the backbone of GPT, BERT, and every major language model today. The lineage from Sutskever's seq2seq paper to ChatGPT is direct and traceable.
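The step from a single compressed vector to attention can be sketched in a few lines. The example below is a simplified illustration, not the formulation from either paper: it scores encoder states with a plain dot product for brevity, whereas Bahdanau et al. used a small learned network, and the array sizes and the function name `attend` are arbitrary choices.

```python
import numpy as np

def softmax(scores):
    """Numerically stable softmax over the last axis."""
    shifted = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def attend(decoder_state, encoder_states):
    """Weight every encoder state by its relevance to the decoder state.

    Dot-product scoring is a simplification chosen for this sketch.
    """
    scores = encoder_states @ decoder_state   # shape: (source_len,)
    weights = softmax(scores)                 # non-negative, sums to 1
    context = weights @ encoder_states        # shape: (hidden_size,)
    return context, weights

rng = np.random.default_rng(0)
encoder_states = rng.normal(size=(6, 8))  # 6 source tokens, hidden size 8
decoder_state = rng.normal(size=8)

context, weights = attend(decoder_state, encoder_states)
print("attention weights:", np.round(weights, 3))
print("context shape:", context.shape)
```

Instead of one fixed vector for the whole sentence, the decoder receives a fresh weighted mix of all encoder states at every step. Self-attention in the Transformer applies the same weighting idea within a single sequence.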

Other Major Contributions

After AlexNet and his time at Google Brain, Sutskever became a co-founder and the chief scientist of OpenAI in 2015, alongside Sam Altman, Greg Brockman, and others. At OpenAI, Sutskever championed a thesis that was controversial even within the AI community: that scaling up neural networks — making them larger, training them on more data, for longer — would yield qualitative improvements in capability that could not be achieved through architectural innovation alone.

This scaling hypothesis drove OpenAI's research agenda. Under Sutskever's scientific leadership, the lab produced the GPT series of language models: GPT-1 (2018, 117 million parameters), GPT-2 (2019, 1.5 billion parameters), GPT-3 (2020, 175 billion parameters), and GPT-4 (2023, rumored to be a mixture-of-experts model with over a trillion parameters). Each generation demonstrated emergent capabilities — abilities that appeared only at sufficient scale, such as few-shot learning, chain-of-thought reasoning, and code generation — that validated Sutskever's conviction that scale was the key variable.

Sutskever was also instrumental in OpenAI's work on reinforcement learning from human feedback (RLHF), the technique that transformed GPT from a raw language model into ChatGPT, a system that could follow instructions, refuse harmful requests, and engage in coherent multi-turn conversations. The combination of large-scale pretraining and RLHF alignment is the technical recipe behind the AI systems that have captivated the world since late 2022.
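One ingredient of RLHF, as described in published work on the technique, is a reward model trained on human comparisons between pairs of responses. The sketch below illustrates a pairwise preference loss of that kind. It is an assumption-laden illustration rather than OpenAI's implementation: the function name `preference_loss` and the reward values are made up for the example.

```python
import numpy as np

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: mean of -log sigmoid(r_chosen - r_rejected)."""
    margin = np.asarray(reward_chosen) - np.asarray(reward_rejected)
    # log(1 + exp(-margin)), computed stably with logaddexp
    return float(np.mean(np.logaddexp(0.0, -margin)))

# Hypothetical reward scores for two response pairs
good = preference_loss([2.0, 1.5], [0.0, -0.5])
bad = preference_loss([0.0, -0.5], [2.0, 1.5])
print(f"loss when the ranking agrees with humans: {good:.3f}")
print(f"loss when the ranking disagrees: {bad:.3f}")
```

The loss is small when the reward model scores the human-preferred response higher and large when it does not. A reward model trained this way can then provide the signal that a reinforcement learning step uses to adjust the language model.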

In late 2023, Sutskever was involved in the OpenAI board's brief removal of Sam Altman as CEO, an event that revealed deep tensions within the company about the pace and safety of AI development. Sutskever reportedly believed that the company was moving too fast and not paying sufficient attention to safety. After Altman's reinstatement, Sutskever's position at OpenAI became untenable. He left the company in May 2024 and, in June 2024, co-founded Safe Superintelligence Inc. (SSI) with Daniel Gross and Daniel Levy. SSI's mission, as stated by Sutskever, is singular: to build safe superintelligent AI. The company explicitly has no products, no revenue pressure, and no short-term commercial goals. It exists solely to solve what Sutskever considers the most important technical problem in history.

Philosophy and Approach

Key Principles

Sutskever's intellectual framework rests on several core beliefs that have guided his research for over fifteen years. The first and most fundamental is the primacy of scale. While many AI researchers focus on designing clever architectures, novel loss functions, or efficient training procedures, Sutskever has consistently argued that the most important variable is simply making networks bigger and training them on more data. His famous statement that "bigger models are smarter models" captures this view. The evidence has largely vindicated him: the progression from GPT-1 to GPT-4 demonstrates that scale produces qualitative, not just quantitative, improvements in capability.

The second principle is data as the bottleneck. Sutskever has argued that high-quality training data is the most scarce and valuable resource in AI development, more important than compute and more important than architectural innovations. This view has become mainstream; the race to acquire, curate, and synthesize training data is now one of the central dynamics of the AI industry.

The third principle, which has come to dominate his recent thinking, is that superintelligent AI is coming and that its alignment with human values is not guaranteed. Sutskever has spoken about this with increasing urgency. He has described superintelligence as an event comparable to the invention of agriculture or the industrial revolution — a phase transition in human civilization. But unlike those earlier transitions, superintelligence could be catastrophically dangerous if it pursues goals misaligned with human welfare. This is not a distant hypothetical for Sutskever; he believes it could happen within years, not decades.

His approach to safety is characteristically technical rather than political. Rather than advocating for regulation or moratoriums, Sutskever's SSI is attempting to solve the alignment problem through research — to develop mathematical and engineering techniques that provably ensure a superintelligent system behaves as intended. Whether this is achievable is one of the open questions in AI, but Sutskever's track record of solving problems others considered impossible lends his effort credibility.

A fourth principle, less discussed but evident throughout his career, is Sutskever's belief in the importance of intuition in research. In interviews, he has described the process of training neural networks as requiring a kind of aesthetic sense — an ability to look at a loss curve, a gradient distribution, or a set of model outputs and know whether the training is on the right track. This intuition, developed over thousands of experiments across two decades, is what allowed him to make the optimization decisions that turned AlexNet from a theoretical possibility into a working system, and to guide OpenAI's scaling research in productive directions when the space of possible experiments was vast.

Legacy and Impact

Ilya Sutskever's contributions place him among the most consequential figures in the history of artificial intelligence. His work spans the three defining moments of the modern AI era: the deep learning revolution (AlexNet, 2012), the rise of sequence models (Seq2Seq, 2014), and the scaling paradigm that produced large language models (GPT series, 2018–2023). Very few researchers have been central to even one paradigm shift; Sutskever has been central to three.

His influence extends beyond his own papers. As chief scientist of OpenAI, he shaped the research direction of the most influential AI lab in the world during the most consequential period in AI history. The decision to pursue scale — to invest billions in compute and data to train ever-larger models — was scientifically risky and commercially uncertain when it was made. It turned out to be correct, and the result was ChatGPT, which brought AI from a specialist concern to a mainstream technology used by hundreds of millions of people.

The philosophical dimension of Sutskever's legacy is equally important. His transition from building the most powerful AI systems on the planet to founding a company dedicated to ensuring AI safety reflects an intellectual honesty that is rare in any field. He saw the potential dangers of the technology he helped create, and rather than ignoring them or leaving the problem to others, he restructured his career around solving them. Whether SSI succeeds or fails, Sutskever's decision to prioritize safety will influence how future AI researchers think about their responsibilities.

As a student of Geoffrey Hinton — who himself has become increasingly vocal about AI risks — Sutskever represents a lineage of researchers who built the technology and then sounded the alarm about it. This is not hypocrisy; it is the kind of informed concern that only comes from deep technical understanding. Sutskever knows exactly how powerful these systems are becoming because he is one of the people who made them powerful. His warnings about superintelligence carry weight precisely because he has spent twenty years on the frontier of AI capability research.

For developers and engineers working with AI systems today — whether building applications with modern development tools or integrating large language models into production systems — understanding Sutskever's contributions provides essential context. The models you fine-tune, the APIs you call, the embeddings you compute all trace their conceptual lineage through his work. AlexNet showed that deep learning works. Seq2Seq showed that neural networks can handle language. The GPT series showed that scale unlocks intelligence. And SSI represents the next question: how do we keep it safe? Ilya Sutskever has been at the center of each of these questions, and the answers he helped provide have shaped the world we now inhabit.

Key Facts

- Born: 1986, Nizhny Novgorod (Gorky), Russia

- Nationality: Israeli-Canadian

- Known for: Co-creating AlexNet, sequence-to-sequence learning, co-founding OpenAI, chief scientist of OpenAI (2015–2024), co-founding Safe Superintelligence Inc.

- Key papers: "ImageNet Classification with Deep Convolutional Neural Networks" (2012, 120,000+ citations), "Sequence to Sequence Learning with Neural Networks" (2014, 23,000+ citations)

- Education: University of Toronto, Ph.D. under Geoffrey Hinton

- Career: Google Brain (2013–2015), OpenAI co-founder and chief scientist (2015–2024), Safe Superintelligence Inc. co-founder (2024–present)

- Awards: NeurIPS Test of Time Award (2022, for the AlexNet paper), named one of MIT Technology Review's 35 Innovators Under 35

- Mentors: Geoffrey Hinton (Ph.D. advisor)

Frequently Asked Questions

Who is Ilya Sutskever and why is he important in AI?

Ilya Sutskever is a computer scientist who has been at the center of three major breakthroughs in artificial intelligence. He co-created AlexNet (2012), the deep convolutional neural network that launched the modern deep learning revolution by winning the ImageNet competition by an unprecedented margin. He co-authored the sequence-to-sequence learning paper (2014), which established the encoder-decoder paradigm that led to neural machine translation and ultimately to the Transformer architecture behind today's large language models. As co-founder and chief scientist of OpenAI (2015–2024), he led the research that produced the GPT series of language models, including the technology behind ChatGPT. In 2024, he left OpenAI to co-found Safe Superintelligence Inc., focused on building safe superintelligent AI.

What is AlexNet and what was Sutskever's role in it?

AlexNet is a deep convolutional neural network that won the 2012 ImageNet Large Scale Visual Recognition Challenge with a top-5 error rate of 15.3%, nearly eleven percentage points better than the second-place entry. It was authored by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton. Sutskever's primary contribution was developing the training methodology — the specific optimization procedure, learning rate schedule, momentum parameters, and regularization techniques that made it possible to train such a large network successfully. The paper has been cited over 120,000 times and is considered the starting point of the modern deep learning era. It demonstrated that deep neural networks trained on GPUs could dramatically outperform hand-engineered approaches in computer vision.

What is Safe Superintelligence Inc. and why did Sutskever leave OpenAI?

Safe Superintelligence Inc. (SSI) is a company co-founded by Ilya Sutskever, Daniel Gross, and Daniel Levy in June 2024. Its sole mission is to build superintelligent AI that is provably safe and aligned with human values. Unlike most AI companies, SSI has no products, no revenue targets, and no short-term commercial goals — it exists purely to solve the technical problem of AI alignment at the superintelligence level. Sutskever left OpenAI after a period of tension regarding the pace and safety of AI development. He had been involved in the November 2023 board action that briefly removed Sam Altman as CEO, reportedly motivated by safety concerns. After Altman's reinstatement and the reconstitution of the board, Sutskever departed to pursue safety research without the commercial pressures inherent in a company racing to ship products.