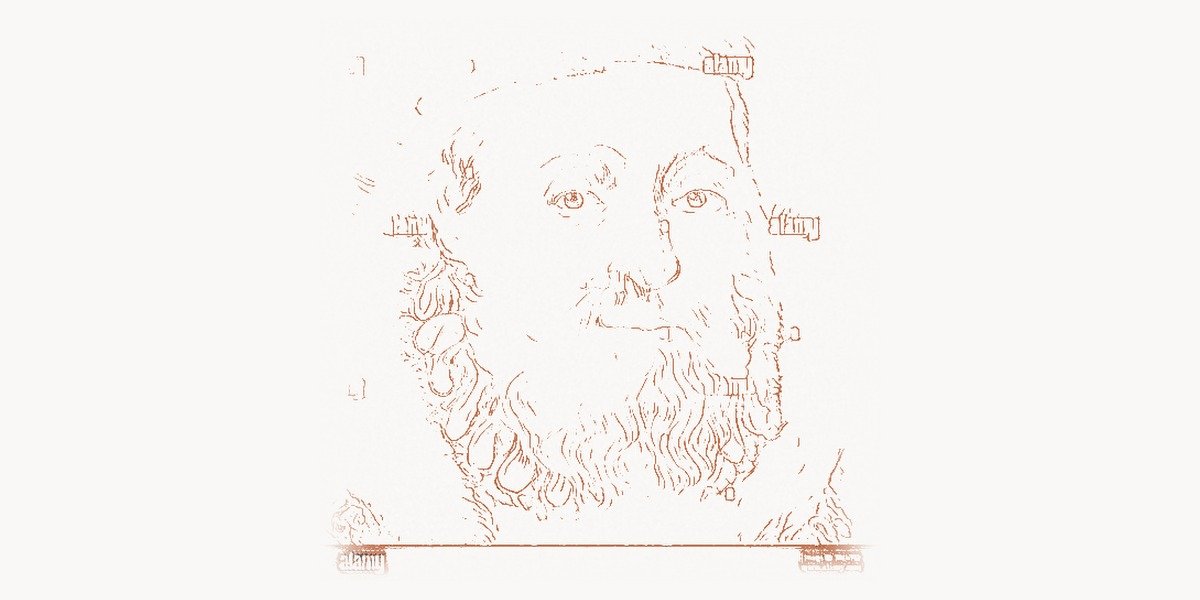

In the vast empire of Amazon Web Services, where millions of servers hum across dozens of regions worldwide, one engineer’s fingerprints are on virtually every physical decision that makes it all possible. James Hamilton — a Distinguished Engineer and VP at Amazon, former Microsoft and IBM veteran — is the person most responsible for turning data centers from glorified server closets into precision-engineered machines that power the modern internet. While most software engineers debate frameworks and languages, Hamilton has spent decades obsessing over power distribution, cooling efficiency, server board design, and network topology at a scale that few people on Earth can truly comprehend. His work has saved billions of dollars, reduced carbon footprints, and fundamentally reshaped how the industry thinks about infrastructure at scale.

Early Life and Education

James Hamilton grew up with a deep curiosity about how things work — both mechanical and electronic systems. He earned his Bachelor of Science in Electrical Engineering, followed by graduate studies that deepened his understanding of computer architecture and systems design. His academic background gave him a rare combination: the ability to think about problems from the hardware level all the way up through distributed software systems.

What set Hamilton apart from the start was his refusal to treat hardware and software as separate disciplines. Even in his early career, he recognized that the biggest performance gains and cost reductions come from co-designing systems — understanding how the physical infrastructure interacts with the software running on it. This holistic mindset would become the defining characteristic of his career and, eventually, a core principle at AWS.

Career and Technical Contributions

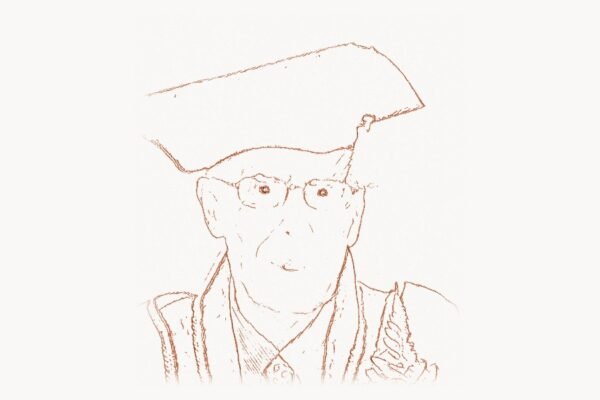

Hamilton’s career arc reads like a tour through the most important infrastructure organizations in computing history. He held senior engineering positions at IBM, where he worked on database systems, and at Microsoft, where he contributed to SQL Server and the early cloud infrastructure efforts. But it was his move to Amazon in 2008 that gave him the canvas to truly revolutionize data center design at planetary scale.

At IBM, Hamilton developed a deep understanding of transactional systems and reliability engineering, working in a database tradition built on the relational model that Edgar F. Codd had formalized decades earlier. At Microsoft, he worked alongside teams building the enterprise database systems that powered a generation of business applications. His paper “On Designing and Deploying Internet-Scale Services,” published in 2007, became a landmark document that codified best practices for building reliable, large-scale distributed systems. It remains widely cited in the field of cloud operations.

Technical Innovation

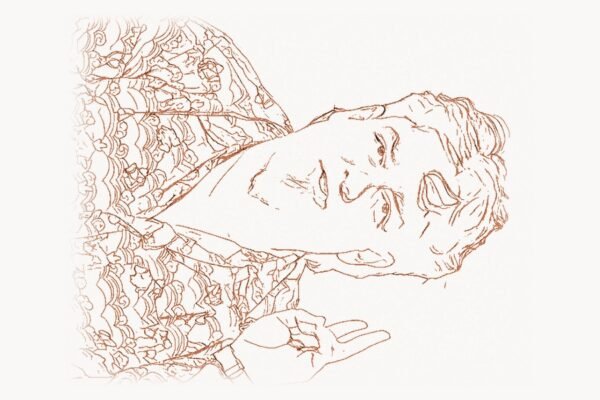

Hamilton’s greatest technical innovations revolve around what he calls “cost per useful operation” — the idea that every engineering decision in a data center should be measured by how efficiently it converts electricity into useful computation. This principle led him to rethink virtually every component of the data center stack.

One of his most significant contributions was the custom server design philosophy at AWS. Rather than purchasing off-the-shelf servers from Dell or HP, Hamilton championed the approach of designing custom hardware optimized specifically for cloud workloads. AWS began stripping away unnecessary components — removing GPU slots from compute-focused machines, eliminating redundant power supplies in favor of facility-level redundancy, and designing custom motherboards with exactly the components needed and nothing more. This approach eventually led to the development of the AWS Nitro System and later the Graviton ARM-based processors, which fundamentally changed the economics of cloud computing.

Hamilton also revolutionized data center power distribution. Traditional data centers used multiple layers of power conversion — from utility power to UPS systems, through power distribution units, down to individual server power supplies — each conversion losing 5-15% of energy as waste heat. Hamilton’s designs minimized these conversion stages, pioneering the use of higher-voltage DC distribution within data centers. The following simplified configuration illustrates the kind of power management approach that Hamilton advocated for modern hyperscale facilities:

```yaml
# Simplified data center power monitoring configuration
# Inspired by Hamilton's principles of measuring cost per useful operation
facility:
  name: "us-east-1-az3"
  design_pue_target: 1.08
  power_distribution:
    utility_voltage: 480V_AC
    distribution_voltage: 400V_DC
    conversion_stages: 2  # Minimize conversion losses
    redundancy_model: "facility-level"  # Not per-server
  monitoring:
    metrics:
      - name: power_usage_effectiveness
        formula: "total_facility_power / it_equipment_power"
        alert_threshold: 1.12
      - name: server_utilization
        target_range: [0.40, 0.65]
        alert_below: 0.25
      - name: cost_per_useful_operation
        components: ["power", "cooling", "amortization", "network"]
  cooling:
    primary: "outside_air_economization"
    secondary: "evaporative_cooling"
    mechanical_cooling: false  # Eliminated through site selection
    max_inlet_temperature: 80F  # ASHRAE A2 expanded envelope
```

His approach to cooling was equally radical. Hamilton was among the first to argue that data centers should be located where nature provides free cooling: regions with temperate climates where outside air can be used directly, eliminating the need for energy-hungry mechanical chillers. He also pushed for wider temperature operating ranges, challenging the industry’s conservative approach that kept server rooms at unnecessarily cold temperatures. By demonstrating that modern servers operate reliably at higher temperatures, he eliminated enormous amounts of wasted cooling energy. These infrastructure innovations echo the same first-principles thinking that Jeff Bezos applied to Amazon’s business model: questioning every assumption and optimizing from the ground up.
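The conversion-loss argument made earlier can be checked with a little arithmetic. The sketch below multiplies the efficiency of each stage in a power chain; the stage names and the 10% per-stage loss are illustrative assumptions picked from the middle of the 5-15% range quoted above, not measurements from any AWS facility.

```python
from math import prod


def delivered_fraction(stage_losses):
    """Return the fraction of input power that survives a chain of conversions."""
    return prod(1.0 - loss for loss in stage_losses)


# Hypothetical chains: the per-stage loss of 10% is an assumed midpoint.
legacy_chain = {"ups": 0.10, "pdu": 0.10, "server_psu": 0.10}
reduced_chain = {"rectifier": 0.10, "server_regulator": 0.10}

legacy = delivered_fraction(legacy_chain.values())
reduced = delivered_fraction(reduced_chain.values())

print(f"three-stage chain delivers {legacy:.1%} of input power")
print(f"two-stage chain delivers   {reduced:.1%} of input power")
```

Because losses compound multiplicatively, removing a single stage recovers more power than its individual loss figure might suggest once the whole chain is considered.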

Network design was another area where Hamilton made transformative contributions. He championed the move away from expensive, proprietary networking equipment toward commodity switches running custom software — an approach that saved AWS hundreds of millions of dollars while actually improving reliability. His team designed custom network protocols and topologies optimized for the specific traffic patterns of cloud workloads, much like how Jeff Dean approached infrastructure challenges at Google by building custom solutions for their specific scale requirements.

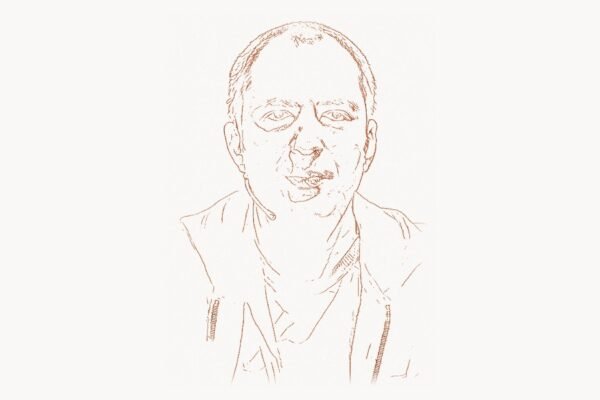

Why It Mattered

The cumulative impact of Hamilton’s innovations is staggering. When AWS reports a Power Usage Effectiveness (PUE) approaching 1.1, it means that for every watt consumed by actual computation, only 0.1 watts go to overhead: cooling, power conversion, lighting, and other facility costs. The industry average when Hamilton started was closer to 2.0, meaning roughly half of all facility electricity went to overhead rather than computation. At AWS’s scale of operation, this efficiency improvement translates to billions of dollars in savings annually and millions of tons of avoided carbon emissions.
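The relationship between PUE and overhead is simple enough to verify directly. The following minimal sketch uses the standard definition of PUE (total facility power divided by IT equipment power); the function names are hypothetical and exist only for this illustration.

```python
def overhead_watts_per_it_watt(pue):
    """Overhead power spent for each watt delivered to IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return pue - 1.0


def overhead_share_of_total(pue):
    """Share of total facility power that never reaches IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


for pue in (2.0, 1.1):
    print(
        f"PUE {pue}: {overhead_watts_per_it_watt(pue):.2f} W overhead per IT watt, "
        f"{overhead_share_of_total(pue):.1%} of total facility power"
    )
```

At a PUE of 2.0 the overhead share is exactly one half, while at 1.1 it falls to about 9%, which matches the "under 10%" figure often quoted for efficient facilities.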

But the impact goes far beyond AWS itself. Hamilton’s public writings, conference talks, and papers democratized knowledge that was previously locked inside proprietary operations. Startups building their first data centers could learn from the same principles that power the world’s largest cloud provider. His blog, “Perspectives,” became essential reading for anyone working in infrastructure, covering everything from submarine cable design to diesel generator reliability to the economics of renewable energy. This open sharing of knowledge through technical writing mirrors the philosophy championed by engineers like Werner Vogels, whose own writings on distributed systems architecture helped define an entire generation of cloud-native development practices.

Hamilton’s work also made cloud computing economically viable for an entirely new class of applications. By relentlessly driving down the cost per computation, he enabled companies to run workloads in the cloud that would have been prohibitively expensive just a few years earlier. Machine learning training, genomic analysis, climate modeling — these computationally intensive tasks became accessible because the underlying infrastructure was engineered to extreme efficiency.

Other Notable Contributions

Beyond his direct data center work, Hamilton has made significant contributions to the broader technology community through his writing and speaking. His 2007 paper “On Designing and Deploying Internet-Scale Services” laid out 100+ best practices for running reliable services at scale. Many of these recommendations — such as designing for failure, automating everything, and measuring everything — have become standard practice in the DevOps and Site Reliability Engineering movements.

Hamilton has been a vocal advocate for renewable energy in data centers. He has written extensively about the economics and engineering challenges of powering data centers with wind, solar, and hydroelectric energy. His analysis of power purchase agreements (PPAs) and the grid-level implications of renewable energy adoption has informed both industry strategy and public policy discussions.

The following example shows the kind of automated health-check framework that embodies Hamilton’s operational philosophy of designing systems that expect and handle failure gracefully:

```python
# Server fleet health monitoring: Hamilton's "design for failure" principle
# Automated detection and remediation of common data center issues


class FleetHealthMonitor:
    """
    Implements Hamilton's principle: assume everything fails,
    design systems that detect and recover automatically.
    """

    FAILURE_DOMAINS = [
        "power_supply", "network_link", "storage_device",
        "cooling_fan", "memory_dimm", "cpu_thermal",
    ]

    def __init__(self, availability_zone, redundancy_factor=3):
        self.az = availability_zone
        self.redundancy = redundancy_factor
        self.server_pool = {}
        self.quarantine_pool = set()

    def evaluate_server_health(self, server_id, telemetry):
        """Score server health from 0.0 (failed) to 1.0 (healthy)."""
        scores = []
        for domain in self.FAILURE_DOMAINS:
            metric = telemetry.get(domain, {})
            score = self._domain_score(domain, metric)
            scores.append(score)
        composite = sum(scores) / len(scores)

        # Hamilton's rule: don't wait for failure, predict it
        if composite < 0.6:
            self._quarantine_server(server_id, composite)
        elif composite < 0.8:
            self._schedule_maintenance(server_id, composite)
        return composite

    def _quarantine_server(self, server_id, health_score):
        """Remove server from rotation before it impacts customers."""
        self.quarantine_pool.add(server_id)
        self._drain_workloads(server_id)
        self._notify_ops(
            severity="warning",
            message=f"Server {server_id} quarantined (score: {health_score:.2f})",
        )

    def calculate_fleet_pue(self, facility_metrics):
        """Track Power Usage Effectiveness per Hamilton's methodology."""
        total_power = facility_metrics["total_facility_kw"]
        it_power = facility_metrics["it_equipment_kw"]
        if it_power <= 0:
            raise ValueError("it_equipment_kw must be positive")
        pue = total_power / it_power
        if pue > 1.12:
            self._alert_facilities_team(
                f"PUE {pue:.3f} exceeds target in {self.az}"
            )
        return pue

    # The helpers below are placeholder stubs so the sketch runs end to end;
    # a real deployment would replace them with site-specific integrations.
    def _domain_score(self, domain, metric):
        """Read a precomputed 0.0-1.0 score; missing telemetry is assumed healthy."""
        return float(metric.get("score", 1.0))

    def _schedule_maintenance(self, server_id, health_score):
        """Flag a degraded server for maintenance without removing it."""
        self._notify_ops(
            severity="info",
            message=f"Server {server_id} needs maintenance (score: {health_score:.2f})",
        )

    def _drain_workloads(self, server_id):
        """Drop the server from the active pool (stand-in for workload migration)."""
        self.server_pool.pop(server_id, None)

    def _notify_ops(self, severity, message):
        print(f"[{severity}] {message}")

    def _alert_facilities_team(self, message):
        self._notify_ops(severity="warning", message=message)
```

He has also contributed to the discourse on submarine network cables, writing detailed analyses of how undersea fiber optic networks are designed, deployed, and maintained, a topic critical to global cloud infrastructure but poorly understood by most technologists. His analyses of the economic and engineering tradeoffs involved in submarine cable routing have become reference material for anyone working in global network architecture.

Hamilton is also known for his work on database reliability and transaction processing during his IBM and Microsoft years. His contributions to SQL Server’s storage engine and recovery mechanisms helped make it one of the most reliable database platforms of its era — work that built on the foundational transaction processing research of pioneers like Jim Gray.

Philosophy and Key Principles

Hamilton’s engineering philosophy can be distilled into several core principles that have influenced an entire generation of infrastructure engineers.

Measure everything, optimize relentlessly. Hamilton believes that you cannot improve what you do not measure. He advocates for comprehensive instrumentation of every aspect of data center operations — from power consumption at the rack level to the failure rates of individual hard drive models to the thermal performance of different server configurations. This data-driven approach allows engineers to make informed decisions rather than relying on vendor claims or industry folklore.

Design for the total cost of ownership. Hamilton consistently argues that purchase price is a misleading metric. A cheaper server that consumes 20% more power will cost far more over its operational lifetime than a more expensive but efficient alternative. This TCO mindset extends to everything — networking equipment, cooling systems, building design, and even site selection. The location of a data center affects land costs, power costs, cooling costs, network connectivity costs, and tax implications, and Hamilton insists on modeling all of these factors together.
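A rough total-cost comparison shows why purchase price alone can mislead. The sketch below is a deliberately simplified model: the prices, power draws, electricity rate, PUE, and service life are all hypothetical inputs chosen for illustration, and a fuller model would add cooling capital, networking, labor, and site costs.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class ServerOption:
    name: str
    purchase_price: float   # USD, hypothetical
    avg_power_watts: float  # average draw at the wall, hypothetical


def lifetime_cost(server, years, usd_per_kwh, pue):
    """Purchase price plus energy cost, with facility overhead folded in via PUE."""
    kwh = server.avg_power_watts / 1000 * HOURS_PER_YEAR * years * pue
    return server.purchase_price + kwh * usd_per_kwh


# The cheaper server draws 20% more power than the efficient one.
cheap = ServerOption("cheaper", purchase_price=3000.0, avg_power_watts=480.0)
efficient = ServerOption("efficient", purchase_price=3200.0, avg_power_watts=400.0)

# Assumed operating conditions: 5-year life, $0.10 per kWh, PUE of 1.5.
cheap_cost = lifetime_cost(cheap, years=5, usd_per_kwh=0.10, pue=1.5)
efficient_cost = lifetime_cost(efficient, years=5, usd_per_kwh=0.10, pue=1.5)

print(f"{cheap.name}: ${cheap_cost:,.2f} over 5 years")
print(f"{efficient.name}: ${efficient_cost:,.2f} over 5 years")
```

Under these assumed inputs the server with the higher sticker price ends up cheaper over its lifetime; how large the gap is depends heavily on the electricity rate, the PUE, and the service life chosen.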

Assume everything fails. Perhaps his most influential principle is the insistence on designing systems that expect failure at every level. Power supplies fail, hard drives fail, network switches fail, entire racks fail, and even entire data centers can go offline. Rather than trying to prevent every possible failure (an impossibly expensive approach), Hamilton advocates for building systems that tolerate failure gracefully — automatically detecting problems, routing around them, and recovering without human intervention. This philosophy directly shaped AWS’s multi-availability-zone architecture, which has become the gold standard for cloud reliability.
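One way to picture "routing around" a failure is a caller that tries replicas in different failure domains until one answers. This is a minimal sketch of that idea, not a description of how AWS implements it; the zone names, the `ReplicaUnavailable` exception, and the handler functions are all hypothetical.

```python
class ReplicaUnavailable(Exception):
    """Raised by a replica that cannot serve the request."""


def call_with_failover(replicas, request):
    """Try each (name, handler) pair in order and return the first success.

    Raises RuntimeError only when every replica has failed.
    """
    errors = []
    for name, handler in replicas:
        try:
            return name, handler(request)
        except ReplicaUnavailable as exc:
            # Record the failure and move on instead of surfacing it.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all replicas failed: " + "; ".join(errors))


def healthy(request):
    return f"ok:{request}"


def broken(request):
    raise ReplicaUnavailable("power loss")


# Hypothetical deployment: one replica per zone, the first one is down.
replicas = [("az-a", broken), ("az-b", healthy), ("az-c", healthy)]
served_by, response = call_with_failover(replicas, "GET /status")
print(f"served by {served_by}: {response}")
```

The caller never sees the failure in the first zone; it only sees an error when every independent failure domain is down at once, which is the case redundancy is meant to make rare.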

Simplify aggressively. Hamilton argues that complexity is the enemy of reliability and efficiency. Every unnecessary component, every extra layer of abstraction, and every redundant conversion step adds cost, increases failure probability, and reduces performance. He champions designs that achieve their goals with the minimum number of components — a philosophy that resonates with the approach taken by engineers like Mitchell Hashimoto, who built Terraform and Vagrant around the principle that infrastructure management should be simple, declarative, and reproducible.

Share knowledge openly. Despite working for one of the most competitive companies in technology, Hamilton has consistently shared his insights publicly through his blog, conference presentations, and papers. He believes that raising the bar for the entire industry ultimately benefits everyone, including AWS. This openness has made him one of the most respected voices in infrastructure engineering and has helped establish best practices that are now widely adopted.

Legacy and Impact

James Hamilton’s legacy is literally built into the physical infrastructure of the modern internet. Every time you stream a video, train a machine learning model, or deploy a containerized application on AWS, you are benefiting from design decisions that Hamilton made or influenced. His work has set the standard for how hyperscale data centers are built and operated, and his principles have been adopted by every major cloud provider.

The custom silicon movement that Hamilton helped pioneer at AWS — starting with the Nitro System and continuing through the Graviton processor family — has fundamentally disrupted the server market. By demonstrating that cloud providers can design their own hardware more efficiently than general-purpose server vendors, Hamilton opened the door for a wave of custom chip development across the industry. Google followed with its TPU processors, and Microsoft with its own custom designs, but AWS under Hamilton’s technical leadership was the trailblazer.

Hamilton’s influence extends beyond any single company. His public advocacy for energy efficiency has helped push the entire data center industry toward sustainability. The tools and frameworks that modern infrastructure teams use to manage their operations all operate within an ecosystem that Hamilton helped shape through his relentless focus on measurability and automation. His writings on renewable energy, PUE optimization, and efficient cooling have been cited in academic research, industry standards, and even government policy documents.

For infrastructure engineers and SREs (Site Reliability Engineers), Hamilton represents an ideal: the engineer who understands systems from the physics of power delivery all the way up to the distributed algorithms that keep services running. He demonstrates that the most impactful engineering often happens not in the latest JavaScript framework or mobile app, but in the invisible infrastructure that makes everything else possible. His approach to building and managing complex technical organizations has influenced how engineering teams structure their work across the industry.

His “On Designing and Deploying Internet-Scale Services” paper, now nearly two decades old, remains required reading at many technology companies. The principles it outlines — design for failure, automate everything, measure everything, keep it simple — have become so widely accepted that they feel obvious today. But when Hamilton articulated them, they were radical departures from the prevailing wisdom of enterprise IT, where expensive hardware, manual operations, and over-provisioning were the norm. In the same way that Linus Torvalds transformed software development through Linux and Git, Hamilton transformed the physical infrastructure layer that all software ultimately depends on.

Key Facts

| Detail | Information |
|---|---|
| Full Name | James Hamilton |
| Role at Amazon | Vice President and Distinguished Engineer, AWS |
| Previous Companies | IBM, Microsoft |
| Key Paper | “On Designing and Deploying Internet-Scale Services” (2007) |
| Primary Focus | Data center design, power efficiency, custom hardware |
| Blog | Perspectives (mvdirona.com) |
| Known For | AWS custom server design, PUE optimization, Nitro System advocacy |
| Core Principle | Cost per useful operation |
| Industry Impact | Pioneered hyperscale data center efficiency standards |
| Engineering Philosophy | Co-design hardware and software for total system optimization |

Frequently Asked Questions

What is James Hamilton best known for in the tech industry?

James Hamilton is best known for revolutionizing data center design and operations at Amazon Web Services. As VP and Distinguished Engineer, he led the effort to design custom servers, optimize power distribution, and implement energy-efficient cooling systems at a scale previously unimaginable. His work drove AWS’s Power Usage Effectiveness (PUE) to near-theoretical-minimum levels and saved billions of dollars in operational costs. He is also widely recognized for his influential 2007 paper “On Designing and Deploying Internet-Scale Services,” which codified the operational best practices that modern cloud infrastructure is built upon.

How did James Hamilton change data center cooling and power efficiency?

Hamilton challenged fundamental assumptions about how data centers should be cooled and powered. He advocated for locating data centers in temperate climates where free outside air cooling could replace energy-hungry mechanical chillers. He pushed for wider server operating temperature ranges based on actual hardware reliability data rather than conservative industry defaults. On the power side, he minimized the number of electrical conversion stages between the utility grid and the server, pioneering high-voltage DC distribution that eliminated wasteful AC-to-DC conversions. These combined changes reduced total facility overhead from roughly 50% of power consumption to under 10% at AWS facilities.

What is the significance of Hamilton’s “cost per useful operation” metric?

The “cost per useful operation” metric represents Hamilton’s holistic approach to data center economics. Rather than optimizing individual components — buying the cheapest servers or the most efficient cooling units — he insists on measuring the total cost of delivering one unit of useful computation to a customer. This includes server purchase price, power consumption, cooling overhead, network costs, operational labor, facility amortization, and even the cost of failures and downtime. By focusing on this end-to-end metric, Hamilton’s teams could make counterintuitive decisions — such as spending more on servers to save on power, or investing in custom hardware to reduce operational complexity — that optimized the total system rather than any individual component.
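The metric described above can be sketched as a single division: all costs in a period over the operations that actually delivered value. The cost categories follow the list in the answer above, but every dollar figure and operation count below is a made-up placeholder, and the function name is hypothetical.

```python
def cost_per_useful_operation(period_costs, total_operations, failed_operations):
    """Divide total period cost by the operations that completed usefully."""
    useful = total_operations - failed_operations
    if useful <= 0:
        raise ValueError("no useful operations in this period")
    return sum(period_costs.values()) / useful


# Hypothetical monthly figures in USD.
monthly_costs = {
    "server_amortization": 50_000,
    "power": 20_000,
    "cooling": 4_000,
    "network": 8_000,
    "operations_labor": 10_000,
    "facility_amortization": 8_000,
}

unit_cost = cost_per_useful_operation(
    monthly_costs,
    total_operations=1_000_000_000,
    failed_operations=20_000_000,  # work lost to failures and retries
)
print(f"cost per useful operation: ${unit_cost:.8f}")
```

Counting failed work in the denominator is what makes the metric end-to-end: a change that lowers the power bill but raises the failure rate can still make the unit cost worse.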

Why is Hamilton’s 2007 paper still relevant today?

Hamilton’s “On Designing and Deploying Internet-Scale Services” has endured because it addresses fundamental principles rather than specific technologies. Its recommendations — such as designing every operation to be idempotent, instrumenting everything, practicing failure recovery regularly, and automating all routine operations — transcend any particular technology stack. The paper was written when cloud computing was in its infancy, yet its principles anticipated the challenges that DevOps teams, SREs, and platform engineers face today. It serves as a bridge between the theoretical foundations of distributed systems and the practical realities of operating services at scale, making it as relevant to an engineer deploying Kubernetes clusters today as it was to someone managing a handful of servers in 2007.
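Idempotency is the most code-shaped of those recommendations: an operation is idempotent when repeating it has the same effect as running it once, so a retry after a timeout is always safe. The sketch below illustrates one common way to get that property, a caller-supplied request ID; the `ProvisioningService` class and its fields are hypothetical and are not taken from the paper.

```python
class ProvisioningService:
    """Toy service whose provision() call is safe to retry."""

    def __init__(self):
        self.servers = []        # side effects we must not duplicate
        self._completed = {}     # request_id -> result of the first attempt

    def provision(self, request_id, hostname):
        """Provision a host once per request_id, however often it is called."""
        if request_id in self._completed:
            # A retry: return the original result without repeating the work.
            return self._completed[request_id]
        self.servers.append(hostname)
        result = {"hostname": hostname, "slot": len(self.servers) - 1}
        self._completed[request_id] = result
        return result


service = ProvisioningService()
first = service.provision("req-001", "web-01")
retry = service.provision("req-001", "web-01")  # e.g. client retried after a timeout

print(f"servers provisioned: {len(service.servers)}")
print(f"retry returned the same result: {first == retry}")
```

With this property in place, automation can retry blindly after any ambiguous failure, which is what makes hands-off recovery practical.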