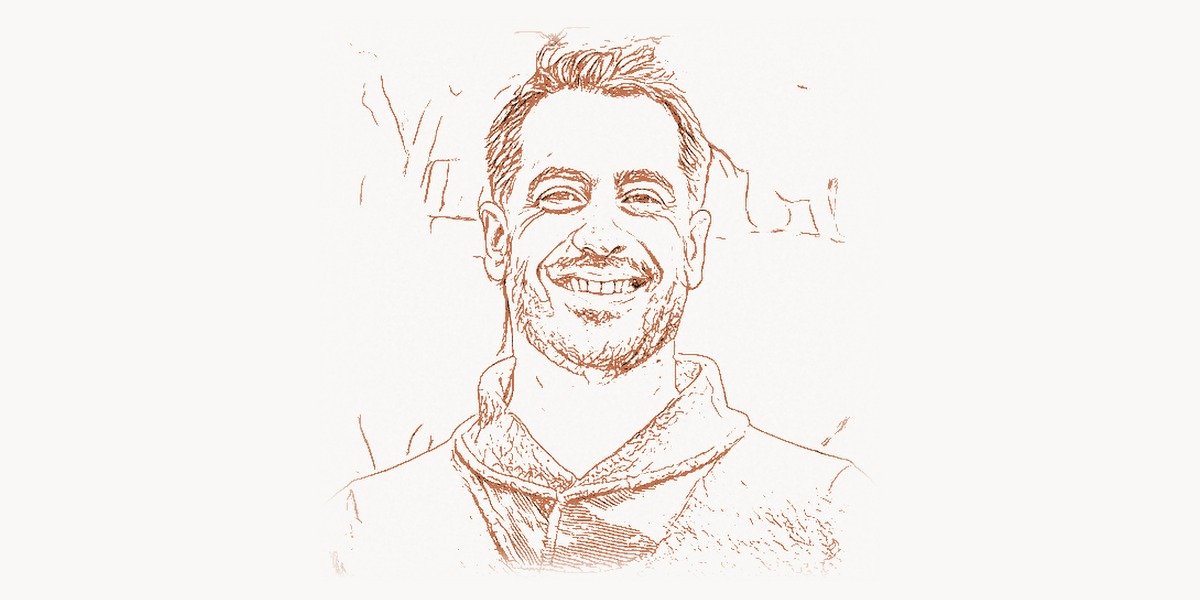

In 2008, a 25-year-old mathematician at Facebook looked at the mountains of user data piling up on the company’s servers and realized something that would reshape the entire technology industry: the tools to analyze massive datasets simply did not exist yet. Jeff Hammerbacher didn’t just complain about the problem; he built a team to solve it. As the leader of the Facebook data team that created Apache Hive, one of the people credited with coining the term “data scientist,” and a co-founder of Cloudera, Hammerbacher helped lay the intellectual and technical foundations for the big data revolution that now drives everything from medical research to artificial intelligence.

While most engineers were content to work within existing paradigms, Hammerbacher saw that the exponential growth of data demanded entirely new ways of thinking about computation, storage, and analysis. His work didn’t just create new software — it created an entirely new profession and a multi-billion-dollar industry around it.

Early Life and Education

Jeffrey Adam Hammerbacher was born on March 11, 1983, in Michigan, United States. From an early age, he showed exceptional aptitude for mathematics and abstract reasoning. Growing up in a household that valued education, Hammerbacher was drawn to the intersection of quantitative analysis and practical problem-solving.

Hammerbacher attended Harvard University, where he studied mathematics. At Harvard, he gained exposure to both pure mathematical theory and applied statistics, a combination that would prove invaluable in his later career. He graduated in 2005 with a degree in mathematics, equipped with a rigorous analytical toolkit but uncertain about which direction to take his talents.

After Harvard, Hammerbacher briefly worked on Wall Street at Bear Stearns as a quantitative analyst. The experience introduced him to large-scale data processing in financial markets, but he quickly grew disillusioned with the finance industry’s culture. A similar frustration, this time with consumer technology, later surfaced in his famous remark that “the best minds of my generation are thinking about how to make people click ads,” and it would drive him toward more meaningful applications of data science. The brief stint in finance nonetheless gave him critical experience in handling large datasets and building analytical models under pressure, skills that Jeff Dean and other pioneers at Google were simultaneously applying to web-scale problems.

Career and the Creation of Apache Hive

In 2006, Hammerbacher joined Facebook as one of the company’s earliest data team members. At that time, Facebook was growing at a staggering pace, from millions of users toward its first hundred million, and the volume of data generated by user interactions was overwhelming the company’s existing infrastructure. There was no established playbook for analyzing data at this scale, and Hammerbacher was tasked with figuring it out from scratch.

His first major contribution was building Facebook’s data team and establishing the role he would help name “data scientist.” Until then, people who worked with data at technology companies were called statisticians, analysts, or data engineers. Hammerbacher recognized that the emerging role required a blend of skills (statistics, programming, domain expertise, and communication) that didn’t fit neatly into any existing job category. The term “data scientist” captured this multidisciplinary nature and quickly spread across Silicon Valley and beyond.

Technical Innovation: Apache Hive

The most significant technical achievement from Hammerbacher’s time at Facebook was the creation of Apache Hive, a data warehouse infrastructure built on top of Hadoop. At the time, Doug Cutting’s Hadoop framework provided the underlying distributed file system (HDFS) and MapReduce processing engine, but interacting with it required writing complex Java programs. This was a massive barrier: the analysts and data scientists who needed to query the data couldn’t write Java MapReduce jobs.

Hive solved this problem by introducing HiveQL, a SQL-like query language that automatically translated declarative queries into MapReduce jobs. This was a paradigm shift. Instead of writing dozens of lines of Java boilerplate to perform a simple aggregation, an analyst could write:

```sql
-- Example HiveQL query: Analyzing daily active users by region
-- This would automatically compile into MapReduce jobs on the Hadoop cluster
SELECT
    region,
    COUNT(DISTINCT user_id) AS daily_active_users,
    AVG(session_duration) AS avg_session_minutes,
    SUM(CASE WHEN event_type = 'share' THEN 1 ELSE 0 END) AS total_shares
FROM user_activity_logs
WHERE activity_date = '2008-06-15'
    AND platform IN ('web', 'mobile')
GROUP BY region
HAVING COUNT(DISTINCT user_id) > 1000
ORDER BY daily_active_users DESC;
```

Under the hood, Hive’s query compiler performed several sophisticated transformations. It parsed the HiveQL into an abstract syntax tree, optimized the query plan with rule-based optimizations (a cost-based optimizer arrived in later releases), and generated a directed acyclic graph (DAG) of MapReduce stages. The metastore component maintained schema information about tables stored in HDFS, enabling a relational abstraction over what was fundamentally a distributed file system. This architecture allowed Hive to handle petabytes of data while presenting a familiar SQL interface.
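To make the translation concrete, here is a minimal Python sketch of how a grouped aggregation such as `SELECT region, COUNT(DISTINCT user_id), SUM(...) ... GROUP BY region` can be phrased as a map phase, a shuffle, and a reduce phase. It is a single-process illustration of the MapReduce pattern, not the plan Hive actually generates; the function names (`map_phase`, `shuffle`, `reduce_phase`, `run_query`) and the sample rows are assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical sample rows standing in for the user_activity_logs table.
ROWS = [
    {"region": "EU", "user_id": 1, "event_type": "share"},
    {"region": "EU", "user_id": 1, "event_type": "view"},
    {"region": "EU", "user_id": 2, "event_type": "share"},
    {"region": "US", "user_id": 3, "event_type": "view"},
]


def map_phase(rows):
    """Emit (key, value) pairs; the GROUP BY column becomes the key."""
    for row in rows:
        is_share = 1 if row["event_type"] == "share" else 0
        yield row["region"], (row["user_id"], is_share)


def shuffle(pairs):
    """Collect all values for each key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Compute COUNT(DISTINCT user_id) and the share total for each region."""
    result = {}
    for region, values in grouped.items():
        distinct_users = {user_id for user_id, _ in values}
        total_shares = sum(is_share for _, is_share in values)
        result[region] = {
            "daily_active_users": len(distinct_users),
            "total_shares": total_shares,
        }
    return result


def run_query(rows):
    """Chain the three phases into one end-to-end 'query'."""
    return reduce_phase(shuffle(map_phase(rows)))


if __name__ == "__main__":
    for region, stats in sorted(run_query(ROWS).items()):
        print(region, stats)
```

On a real cluster the map and reduce functions run on many machines and the shuffle moves data across the network; the value of a SQL layer is that the analyst never has to write any of these three functions by hand.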

Hive also popularized the concept of schema-on-read (as opposed to traditional schema-on-write in relational databases), which aligned well with the reality of big data workloads where data structures evolved rapidly. The SerDe (Serializer/Deserializer) framework allowed Hive to read data in a wide range of formats (JSON, CSV, Avro, and Parquet among them), making it extraordinarily flexible.
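The schema-on-read idea can be illustrated with a small Python sketch: raw records are stored untouched, and a table definition plus a pluggable deserializer gives them structure only when they are read. This is an analogy for how a metastore entry and a SerDe cooperate, not Hive's actual Java SerDe interface; `TableDef`, `csv_deserializer`, `json_deserializer`, and `read_table` are hypothetical names chosen for this example.

```python
import csv
import json
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Iterator, List


@dataclass
class TableDef:
    """Hypothetical metastore entry: column name -> type, plus a deserializer."""
    columns: Dict[str, type]
    deserialize: Callable[[str, List[str]], Dict[str, object]]


def csv_deserializer(line: str, column_names: List[str]) -> Dict[str, object]:
    """Split one CSV line and pair the values with column names by position."""
    values = next(csv.reader([line]))
    return dict(zip(column_names, values))


def json_deserializer(line: str, column_names: List[str]) -> Dict[str, object]:
    """Parse one JSON object and keep only the columns the table declares."""
    record = json.loads(line)
    return {name: record.get(name) for name in column_names}


def read_table(raw_lines: Iterable[str], table: TableDef) -> Iterator[Dict[str, object]]:
    """Apply the schema at read time; the stored lines are never rewritten."""
    names = list(table.columns)
    for line in raw_lines:
        fields = table.deserialize(line, names)
        yield {name: cast(fields[name]) for name, cast in table.columns.items()}


# Two table definitions over differently formatted raw data.
csv_table = TableDef({"user_id": int, "region": str}, csv_deserializer)
json_table = TableDef({"user_id": int, "region": str}, json_deserializer)

# A narrower definition over the same JSON lines: no data migration needed.
regions_only = TableDef({"region": str}, json_deserializer)

if __name__ == "__main__":
    json_lines = ['{"user_id": 1, "region": "EU"}']
    print(list(read_table(["1,EU", "2,US"], csv_table)))
    print(list(read_table(json_lines, json_table)))
    print(list(read_table(json_lines, regions_only)))
```

The design point is that changing the table definition changes how existing files are interpreted without touching the files themselves, which is what makes the approach tolerant of rapidly evolving data.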

Why It Mattered

Before Hive, the barrier to entry for big data analysis was prohibitively high. Only engineers who could write Java MapReduce programs could extract insights from Hadoop clusters. This created a bottleneck: data-driven decisions depended on a small number of specialized engineers, and the analysts who understood the business questions couldn’t directly access the data.

Hive democratized big data analysis. By providing a SQL-like interface, it opened Hadoop to the hundreds of thousands of SQL-proficient analysts and data professionals worldwide. Companies that had invested in Hadoop infrastructure could now actually use it for ad-hoc analysis, reporting, and business intelligence. This was similar to what Matei Zaharia would later achieve with Apache Spark — making distributed computing accessible to a broader audience — but Hive got there first and established the foundational patterns.

The impact on the industry was massive. Hive became one of the most widely adopted components in the Hadoop ecosystem, used by companies ranging from Facebook and Netflix to financial institutions and government agencies. It spawned an entire category of “SQL-on-Hadoop” solutions and influenced the design of later systems like Presto, Impala, and Spark SQL. Modern cloud data warehouses like Google BigQuery, Amazon Redshift, and Snowflake have different technical origins, but they rest on the same premise that Hive helped popularize: bringing SQL to distributed data processing at scale.

Other Contributions

In 2008, Hammerbacher left Facebook to co-found Cloudera alongside Amr Awadallah, Christophe Bisciglia, and Mike Olson. Cloudera’s mission was to commercialize the Hadoop ecosystem, making enterprise-grade big data tools available to organizations that couldn’t build their own infrastructure from scratch. Cloudera became the first company to offer commercial support, training, and an integrated distribution of Hadoop and its related projects, including Hive, HBase, and Pig.

Cloudera grew rapidly and played a central role in establishing the big data industry. The company raised over a billion dollars in funding and went public in 2017. Hammerbacher served as Chief Scientist, guiding the technical direction and ensuring that the open-source roots of the technology were maintained even as the company pursued commercial success. This approach paralleled what Jay Kreps would later achieve with Confluent and Apache Kafka: building a sustainable business around open-source data infrastructure.

Beyond Cloudera, Hammerbacher made a pivotal career turn toward science. Driven by his conviction that data science should serve humanity’s most pressing problems rather than optimizing ad clicks, he moved into computational genomics and medical research. He joined the Icahn School of Medicine at Mount Sinai as an assistant professor, where he applied big data and machine learning techniques to genomics, cancer research, and precision medicine.

At Mount Sinai, Hammerbacher co-founded the Hammerbacher Lab, which focused on applying computational methods to understand diseases at the molecular level. His work contributed to the development of open-source bioinformatics tools and pipelines for analyzing genomic data at scale, bringing the same democratization philosophy he had championed in the tech industry to biomedical research. This reflected a broader trend in data-driven science that researchers like Fei-Fei Li were simultaneously advancing in the field of computer vision and AI.

Hammerbacher also became involved with Related Sciences, a venture focused on using computational approaches to drug discovery. The initiative aimed to accelerate the identification of promising therapeutic targets by analyzing vast amounts of biomedical literature and experimental data using techniques drawn from machine learning and natural language processing.

Philosophy and Approach

Jeff Hammerbacher’s philosophical outlook distinguishes him from many Silicon Valley figures. While his peers were focused on scaling consumer internet platforms, Hammerbacher developed a deeply held belief that technology’s highest purpose was solving fundamental scientific and humanitarian problems. His departure from Facebook and the ad-tech world wasn’t just a career change — it was a statement about values.

His approach to technology combines several distinctive principles that informed both his engineering work and his later scientific career.

Key Principles

- Democratization of tools. Hammerbacher consistently believed that powerful technology should be accessible to the widest possible audience. Hive was built specifically to lower the barrier to big data analysis, and his later work in genomics followed the same principle — creating open-source tools so that researchers anywhere could participate in cutting-edge science.

- Open source as the default. Both Hive and much of his genomics work were released as open-source software. Hammerbacher saw proprietary lock-in as fundamentally opposed to scientific progress and technological innovation. He believed that shared infrastructure accelerated the entire ecosystem.

- Data as a means, not an end. While the tech industry often treated data collection as inherently valuable, Hammerbacher argued that data only mattered insofar as it enabled better decisions and deeper understanding. This view led him away from the attention-economy model of consumer tech toward scientific applications where data served concrete humanitarian goals.

- Interdisciplinary thinking. Hammerbacher’s career arc — from mathematics to finance to social media to genomics — reflected a conviction that the most important problems exist at the intersection of disciplines. He actively bridged the worlds of computer science, statistics, biology, and medicine.

- Ethical responsibility of technologists. His famous critique of the tech industry’s talent allocation was more than a quip. Hammerbacher genuinely believed that engineers and data scientists had a moral obligation to consider the social impact of their work, a perspective that anticipated the broader tech ethics debates that emerged in the following decade.

These principles can be made concrete by recording a project’s ethical reasoning alongside its technical decisions. A simple manifest format, shown here as an illustrative example rather than a published standard, might look like this:

```yaml
# Project philosophy manifest (inspired by Hammerbacher's principles)
# Used in data science teams to document ethical and technical foundations
project:
  name: "genomics-pipeline-v3"
  purpose: "Accelerate rare disease diagnosis through whole-genome analysis"
  ethical_review: "approved"
  date: "2024-09-15"

principles:
  accessibility:
    open_source: true
    license: "Apache-2.0"
    documentation: "comprehensive"
    minimum_skill_level: "intermediate bioinformatics"
  data_governance:
    patient_consent: "required"
    anonymization: "k-anonymity >= 5"
    retention_policy: "36 months post-study"
    sharing_policy: "federated; raw data never leaves institution"
  reproducibility:
    containerized: true
    runtime: "docker"
    dependency_pinning: "exact versions"
    random_seed_tracking: true

impact_assessment:
  primary_beneficiary: "patients with undiagnosed rare diseases"
  secondary_beneficiary: "clinical researchers"
  potential_misuse_vectors:
    - "re-identification of anonymized patients"
    - "insurance discrimination based on genetic markers"
  mitigation: "differential privacy layer on all outputs"
```

Legacy and Lasting Influence

Jeff Hammerbacher’s legacy operates on multiple levels. Most visibly, Apache Hive remains a foundational technology in the data engineering ecosystem. Although newer tools like Apache Spark and cloud-native data warehouses have supplanted Hive for many use cases, the conceptual model Hive established — SQL as a universal interface to distributed data — is now the default paradigm. Every major cloud provider offers a SQL-based big data query service, and all of them owe an intellectual debt to Hive’s original design.

The term “data scientist” has become one of the most sought-after job titles in the technology industry. What began as Hammerbacher’s attempt to describe a new kind of interdisciplinary role at Facebook has grown into a profession practiced by hundreds of thousands of people worldwide. The field has evolved to include specializations in machine learning, data engineering, analytics engineering, and MLOps, but the original vision of combining statistics, programming, and domain expertise remains at its core.

Cloudera’s role in commercializing Hadoop established the template for how open-source big data companies operate. The model of providing enterprise support, managed distributions, and value-added tools around open-source cores was adopted by virtually every subsequent data infrastructure startup, from Databricks (building on Matei Zaharia’s Spark) to Confluent (building on Jay Kreps’ Kafka).

Perhaps most importantly, Hammerbacher’s pivot to biomedical research represented a template for how technologists can redirect their skills toward society’s hardest problems. His genomics work demonstrated that the tools and techniques developed for internet-scale data processing could be applied to understanding human biology, discovering new drugs, and ultimately saving lives. This cross-pollination between tech and science, which Andrew Ng also championed through his educational initiatives, has become one of the most important trends in modern research.

Hammerbacher’s influence extends beyond any single technology. He helped define an era in which data moved from being a byproduct of business operations to the central strategic asset of the technology industry — and then challenged that same industry to use its power responsibly.

Key Facts

- Full name: Jeffrey Adam Hammerbacher

- Born: March 11, 1983, Michigan, United States

- Education: B.A. in Mathematics, Harvard University (2005)

- Known for: Co-creating Apache Hive, coining the term “data scientist,” co-founding Cloudera

- Career path: Bear Stearns → Facebook (Data Team Lead) → Cloudera (Co-founder, Chief Scientist) → Mount Sinai (Assistant Professor) → Related Sciences

- Apache Hive: Open-source data warehouse on Hadoop, introduced HiveQL (SQL-like query language for MapReduce), now an Apache Top-Level Project

- Cloudera: Co-founded in 2008; first company to commercialize Hadoop; IPO in 2017; merged with Hortonworks in 2019

- Famous quote: “The best minds of my generation are thinking about how to make people click ads. That sucks.”

- Research focus: Computational genomics, precision medicine, bioinformatics, drug discovery

- Programming languages used: Java, Python, SQL, R

Frequently Asked Questions

What is Apache Hive and why did Jeff Hammerbacher create it?

Apache Hive is a data warehouse infrastructure built on top of Apache Hadoop that allows users to query large datasets using HiveQL, a SQL-like language. Hammerbacher and his team at Facebook created it because the existing Hadoop ecosystem required engineers to write complex Java MapReduce programs to analyze data. This created a bottleneck — business analysts and data scientists who understood what questions to ask couldn’t directly access the data. Hive solved this by translating familiar SQL queries into MapReduce jobs automatically, opening big data analysis to a much wider audience.

Did Jeff Hammerbacher really invent the term “data scientist”?

Hammerbacher is credited, alongside DJ Patil (who held a similar role at LinkedIn), with popularizing the term “data scientist” around 2008. While the individual words had been used before in various contexts, Hammerbacher and Patil defined it as a specific professional role combining statistics, programming, domain expertise, and communication skills. The term stuck because it captured a genuinely new kind of work that was emerging at companies like Facebook, Google, and LinkedIn — work that didn’t fit neatly into existing categories like “statistician” or “data analyst.”

Why did Hammerbacher leave Facebook and the tech industry?

Hammerbacher grew increasingly frustrated with how the technology industry deployed its talent and resources. His famous remark about the best minds working on making people click ads reflected a genuine philosophical disagreement with the attention-economy model driving Silicon Valley. After co-founding and building Cloudera, he transitioned to biomedical research at Mount Sinai’s Icahn School of Medicine, where he applied data science and machine learning to genomics and cancer research. He believed that the same computational tools reshaping the tech industry could have a far greater impact when applied to healthcare and scientific discovery.

How does Apache Hive compare to modern tools like Apache Spark?

Apache Hive was designed primarily for batch processing of large datasets and originally relied on MapReduce as its execution engine, which made it powerful but relatively slow for interactive queries. Apache Spark, created later by Matei Zaharia, introduced in-memory processing that dramatically improved performance for iterative and interactive workloads. Hive has evolved significantly since its early days: it gained support for the Tez and Spark execution engines and added LLAP (Live Long and Process) for interactive queries. While Spark has become the more popular choice for many big data workloads, Hive remains widely used for large-scale data warehousing and ETL pipelines, and its HiveQL dialect influenced Spark SQL’s own design.