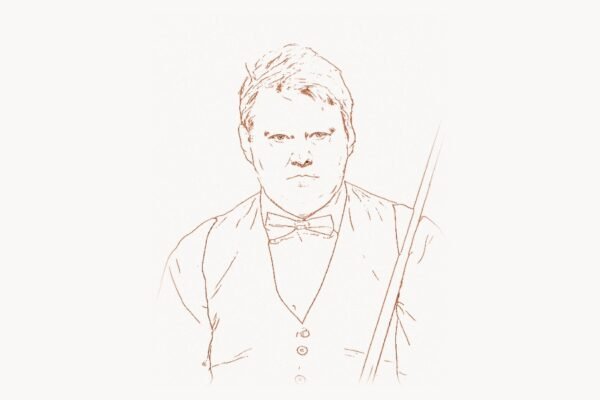

Every computer science student who has ever taken a compilers course knows the dragon. It appears on the cover of Compilers: Principles, Techniques, and Tools, breathing fire at a knight armed with the weapons of syntax-directed translation and parsing theory. That textbook — universally known as the “Dragon Book” — has shaped how compilers are taught and built since 1986. But while the Dragon Book made Jeffrey Ullman famous among generations of students, it represents only one facet of a career that fundamentally altered both compiler science and database theory. Ullman’s theoretical work on formal languages, his co-creation of foundational algorithms textbooks with Alfred Aho and John Hopcroft, and his pioneering research into database query optimization and datalog have left permanent marks on how we process programming languages and how we store, query, and reason about data. When the Association for Computing Machinery awarded Ullman and Aho the 2020 Turing Award for “fundamental algorithms and theory underlying programming language implementation,” it recognized a body of work spanning five decades — one that bridges the gap between abstract mathematical theory and the practical systems that power modern computing.

Early Life and Academic Formation

Jeffrey David Ullman was born on November 22, 1942, in New York City. He grew up in an era when computing was transitioning from room-sized vacuum tube machines to the first transistor-based systems, and when computer science itself was only beginning to emerge as a discipline distinct from mathematics and electrical engineering. Ullman showed strong mathematical aptitude early, pursuing his undergraduate education at Columbia University, where he earned a Bachelor of Science in engineering in 1963.

For graduate work, Ullman moved to Princeton University, then one of the premier centers for theoretical computer science in the United States. He studied under Arthur Bernstein and completed his Ph.D. in electrical engineering in 1966, with a dissertation on synchronization error-correcting codes. His research soon turned to automata theory and formal languages: the mathematical structures that describe what computers can and cannot compute, and the grammars that define the syntax of programming languages. This grounding in formal language theory would prove essential, because it connected directly to both compiler construction (where formal grammars define programming language syntax) and database theory (where formal logic defines query languages).

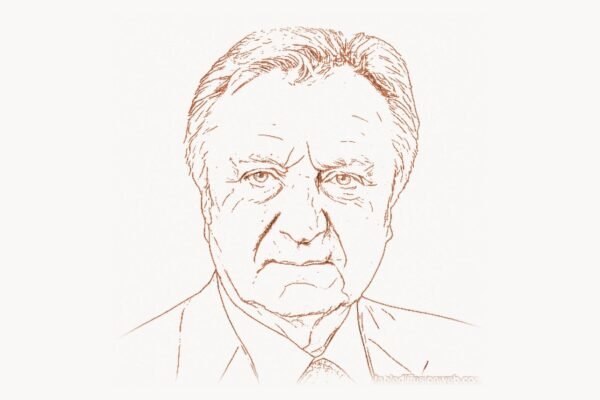

After completing his doctorate, Ullman spent three years (1966 to 1969) at Bell Labs, the legendary research institution where Alfred Aho was building his career and where Unix and the C language would soon be developed. In this period he began the collaborations with Aho and John Hopcroft that would produce some of the most influential textbooks in computer science. He returned to Princeton University as a faculty member in 1969 before joining Stanford University in 1979, where he would spend the most productive decades of his career and become one of the defining figures of the Stanford Computer Science Department.

The Dragon Book: Defining Compiler Education

The story of the Dragon Book begins not in 1986 but in 1977, when Aho and Ullman published Principles of Compiler Design — sometimes called the “Green Dragon Book” for its cover illustration. That first edition established the structure and approach that would make the series legendary: a systematic treatment of every phase of compilation, from lexical analysis through code generation and optimization, grounded in formal language theory but always oriented toward practical implementation.

The 1986 edition — Compilers: Principles, Techniques, and Tools, co-authored with Ravi Sethi — expanded and refined the material substantially. Known as the “Red Dragon Book,” it became the standard textbook for compiler courses at universities worldwide. The book covers the complete compilation pipeline: lexical analysis using finite automata, parsing using context-free grammars (including LL and LR parsing techniques), semantic analysis through attribute grammars and type checking, intermediate code generation, code optimization using data-flow analysis, and final target code generation.

What distinguished the Dragon Book from other compiler textbooks was its rigorous yet accessible treatment of the theoretical foundations. Ullman’s deep expertise in formal language theory ensured that concepts like context-free grammars, pushdown automata, and fixed-point computations were presented with mathematical precision. But the book never let theory become an end in itself — every formal concept was motivated by a concrete compiler design problem. Consider the treatment of LR parsing, which forms one of the book’s most important contributions to compiler education:

```
// LR parsing: the shift-reduce algorithm
// From Dragon Book principles: bottom-up parsing
// builds the parse tree from leaves to root

// Example grammar for arithmetic expressions
// (numbered as the table's reduce actions refer to them):
//   (1) E → E + T     (2) E → T
//   (3) T → T * F     (4) T → F
//   (5) F → ( E )     (6) F → id

// SLR parsing table for this grammar
// (sN = shift and go to state N, rN = reduce by production N, acc = accept):
// ┌───────┬───────────────────────────────┬─────────────┐
// │ State │            ACTION             │    GOTO     │
// │       │ id   +    *    (    )    $    │ E   T   F   │
// ├───────┼───────────────────────────────┼─────────────┤
// │   0   │ s5             s4             │ 1   2   3   │
// │   1   │      s6                  acc  │             │
// │   2   │      r2   s7        r2   r2   │             │
// │   3   │      r4   r4        r4   r4   │             │
// │   4   │ s5             s4             │ 8   2   3   │
// │   5   │      r6   r6        r6   r6   │             │
// │   6   │ s5             s4             │     9   3   │
// │   7   │ s5             s4             │         10  │
// │   8   │      s6             s11       │             │
// │   9   │      r1   s7        r1   r1   │             │
// │  10   │      r3   r3        r3   r3   │             │
// │  11   │      r5   r5        r5   r5   │             │
// └───────┴───────────────────────────────┴─────────────┘
//
// Parsing "id + id * id":
//   Stack                   Input      Action
//   0                       id+id*id$  shift 5
//   0 id 5                  +id*id$    reduce F→id
//   0 F 3                   +id*id$    reduce T→F
//   0 T 2                   +id*id$    reduce E→T
//   0 E 1                   +id*id$    shift 6
//   0 E 1 + 6               id*id$     shift 5
//   0 E 1 + 6 id 5          *id$       reduce F→id
//   0 E 1 + 6 F 3           *id$       reduce T→F
//   0 E 1 + 6 T 9           *id$       shift 7
//   0 E 1 + 6 T 9 * 7       id$        shift 5
//   0 E 1 + 6 T 9 * 7 id 5  $          reduce F→id
//   0 E 1 + 6 T 9 * 7 F 10  $          reduce T→T*F
//   0 E 1 + 6 T 9           $          reduce E→E+T
//   0 E 1                   $          accept
```

This kind of step-by-step trace, connecting abstract parsing theory to concrete mechanical execution, exemplifies the Dragon Book’s pedagogical power. Students could see exactly how the mathematical formalism of LR parsing translated into a working algorithm. The book equipped generations of computer scientists not just to use compilers but to understand, modify, and build them, a skill that extends far beyond traditional compilers into language servers, linters, domain-specific languages, and query processors.
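To make the mechanical nature of the trace concrete, here is a minimal Python sketch of a table-driven shift-reduce driver for the same expression grammar. It is an illustration under stated assumptions rather than code from the book: the names `PRODUCTIONS`, `ACTION`, `GOTO`, `_row`, and `parse` are invented for this example, the stack holds only state numbers (the grammar symbols shown in the trace are implied by the states), and no parse tree is built.

```python
# Productions numbered as in the parsing table: number -> (left-hand side, length of right-hand side).
PRODUCTIONS = {
    1: ("E", 3),  # E -> E + T
    2: ("E", 1),  # E -> T
    3: ("T", 3),  # T -> T * F
    4: ("T", 1),  # T -> F
    5: ("F", 3),  # F -> ( E )
    6: ("F", 1),  # F -> id
}


def _row(spec):
    """Hypothetical helper: expand 'id:s5 (:s4' into {'id': 's5', '(': 's4'}."""
    return dict(item.split(":", 1) for item in spec.split())


# ACTION[state][terminal] is 'sN' (shift), 'rN' (reduce), or 'acc'.
ACTION = {
    0: _row("id:s5 (:s4"),
    1: _row("+:s6 $:acc"),
    2: _row("+:r2 *:s7 ):r2 $:r2"),
    3: _row("+:r4 *:r4 ):r4 $:r4"),
    4: _row("id:s5 (:s4"),
    5: _row("+:r6 *:r6 ):r6 $:r6"),
    6: _row("id:s5 (:s4"),
    7: _row("id:s5 (:s4"),
    8: _row("+:s6 ):s11"),
    9: _row("+:r1 *:s7 ):r1 $:r1"),
    10: _row("+:r3 *:r3 ):r3 $:r3"),
    11: _row("+:r5 *:r5 ):r5 $:r5"),
}

# GOTO[state][nonterminal] is the state entered after a reduction.
GOTO = {
    0: {"E": 1, "T": 2, "F": 3},
    4: {"E": 8, "T": 2, "F": 3},
    6: {"T": 9, "F": 3},
    7: {"F": 10},
}


def parse(tokens):
    """Return the list of actions taken, or raise SyntaxError on bad input."""
    stack = [0]                      # stack of states; state 0 is the start state
    tokens = list(tokens) + ["$"]    # append the end-of-input marker
    pos = 0
    trace = []
    while True:
        state, lookahead = stack[-1], tokens[pos]
        action = ACTION[state].get(lookahead)
        if action is None:
            raise SyntaxError(f"unexpected {lookahead!r} in state {state}")
        if action == "acc":
            trace.append("accept")
            return trace
        kind, number = action[0], int(action[1:])
        if kind == "s":
            stack.append(number)     # shift: push the new state, consume the token
            pos += 1
            trace.append(f"shift {number}")
        else:
            lhs, rhs_len = PRODUCTIONS[number]
            del stack[-rhs_len:]     # reduce: pop one state per right-hand-side symbol
            stack.append(GOTO[stack[-1]][lhs])
            trace.append(f"reduce {number}")


for step in parse(["id", "+", "id", "*", "id"]):
    print(step)
```

Running it on `id + id * id` prints the same fourteen actions as the hand trace, with reductions reported by production number. Keeping the grammar knowledge entirely in the tables is the design point: the driver loop would stay the same for any grammar whose tables a parser generator can produce.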

A second edition appeared in 2006, adding Monica Lam as a co-author and expanding coverage of modern optimization techniques, just-in-time compilation, and the challenges posed by contemporary programming languages. Known as the “Purple Dragon Book,” this edition demonstrated that the Dragon Book’s framework remained relevant even as compilation targets shifted from simple register machines to complex multi-core processors, virtual machines, and managed runtimes.

Foundational Textbooks: Algorithms, Automata, and Data Structures

The Dragon Book was far from Ullman’s only influential textbook. In 1974, he co-authored The Design and Analysis of Computer Algorithms with Aho and Hopcroft, one of the first comprehensive treatments of algorithms as a unified subject. This book covered graph algorithms, sorting and searching, pattern matching, matrix multiplication, the fast Fourier transform, and the theory of NP-completeness. It helped establish algorithms as a core pillar of computer science education, complementing Donald Knuth’s monumental The Art of Computer Programming with a more textbook-oriented approach suitable for classroom use.

Even earlier, in 1969, Ullman and Hopcroft published Formal Languages and Their Relation to Automata, which was revised and expanded into the widely used Introduction to Automata Theory, Languages, and Computation (1979, with further editions in 2001 and 2006). Known informally as the “Cinderella Book” for its cover art, this textbook became the standard reference for automata theory, formal languages, and computability — subjects that form the mathematical backbone of computer science. The book’s treatment of regular expressions, context-free grammars, Turing machines, decidability, and computational complexity gave students the theoretical tools needed to understand not just compilers but the fundamental limits of computation itself. It connected directly to the work of pioneers like Alan Turing, whose theoretical framework for computation underpins everything in the field.

Ullman also co-authored Data Structures and Algorithms (1983) with Aho and Hopcroft, aimed at undergraduates, and Foundations of Computer Science (1992) with Aho, covering the mathematical prerequisites for the discipline. Together, these textbooks formed a coherent curriculum spanning from introductory data structures through advanced algorithms, automata theory, and compiler construction. Professors at universities worldwide could construct an entire computer science program using Ullman’s textbooks as the backbone. Few individuals have had such a comprehensive influence on how an entire discipline is taught.

Database Theory and Query Optimization

While Ullman’s compiler work earned him widespread recognition among students, his contributions to database theory were equally profound and arguably more impactful on the software industry. Beginning in the late 1970s, Ullman turned his attention to the theoretical foundations of relational databases, the model that Edgar F. Codd had proposed and that systems like System R and INGRES (whose successor project, Postgres, led by Michael Stonebraker, evolved into PostgreSQL) were bringing to practical reality.

Ullman’s two-volume work, Principles of Database and Knowledge-Base Systems (1988-1989), became a defining text in database theory. Where other database textbooks focused on the practical use of SQL and relational systems, Ullman’s work addressed the deeper questions: How can database queries be optimized? What is the expressive power of different query languages? How do dependencies between data attributes affect database design? What is the relationship between database queries and logical inference?

His work on query optimization was particularly influential. Ullman and his students developed and formalized techniques for transforming database queries into more efficient forms: reordering joins, pushing selections down through query plans, and identifying equivalent but faster execution strategies. These ideas are now standard components of commercial and open-source relational database engines. When a modern database system like PostgreSQL executes a complex SQL query, its optimizer applies techniques that Ullman helped develop and formalize.

```sql
-- Query optimization principles influenced by Ullman's work:
-- algebraic equivalences for relational queries

-- Original query: unoptimized nested subquery.
-- A naive execution would scan the entire orders table
-- for each customer, resulting in O(n*m) complexity.
SELECT c.name, c.email
FROM customers c
WHERE c.id IN (
    SELECT o.customer_id
    FROM orders o
    WHERE o.total > 1000
      AND o.order_date >= '2025-01-01'
);

-- Algebraic optimization: rewrite as a join.
-- The query optimizer pushes predicates down and
-- converts subqueries to semi-joins, a technique
-- formalized in Ullman's database theory work.
-- Note: DISTINCT removes the duplicates produced when a
-- customer has several qualifying orders; the hand-written
-- join matches the IN form only if (name, email) is unique
-- per customer. A true semi-join has no such caveat.
SELECT DISTINCT c.name, c.email
FROM customers c
INNER JOIN orders o ON c.id = o.customer_id
WHERE o.total > 1000
  AND o.order_date >= '2025-01-01';

-- The optimizer's internal transformation (conceptual):
--
-- Step 1: Push selection σ(total>1000 ∧ date≥2025-01-01)
--         down to the orders relation BEFORE the join
--
-- Step 2: Convert IN-subquery to semi-join (⋉)
--         customers ⋉ σ(orders)
--
-- Step 3: Choose join algorithm based on statistics:
--         - Hash join if orders result is large
--         - Index nested-loop if index on customer_id
--         - Merge join if both inputs are sorted
--
-- Step 4: Eliminate redundant projections
--
-- Result: From O(|customers| × |orders|)
--         to roughly O(|customers| + |orders|) with a hash
--         semi-join (less if an index narrows the orders scan)

-- This algebraic approach to optimization (treating
-- queries as expressions in relational algebra and
-- applying equivalence-preserving transformations)
-- is a direct legacy of Ullman's theoretical framework.
```

These optimization principles remain at the heart of modern relational database systems. The algebraic approach to query transformation, which treats SQL queries as expressions in relational algebra and applies equivalence-preserving rewrite rules, was formalized in large part through Ullman’s theoretical work. Database engineers working on query planners today, whether for traditional relational systems or modern distributed databases, operate within a framework that Ullman helped establish.
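The effect of pushing the selection down and evaluating a semi-join can be imitated outside a database. The following is a small Python sketch, not the internals of any real optimizer: the sample rows and the function names `qualifies`, `naive_plan`, and `semi_join_plan` are assumptions made for illustration. It contrasts a plan that re-scans every order for every customer with one that filters orders once and probes a hash set.

```python
from datetime import date

# Hypothetical sample data standing in for the customers and orders tables.
customers = [
    {"id": 1, "name": "Ada", "email": "ada@example.com"},
    {"id": 2, "name": "Grace", "email": "grace@example.com"},
    {"id": 3, "name": "Edgar", "email": "edgar@example.com"},
]
orders = [
    {"customer_id": 1, "total": 1500, "order_date": date(2025, 2, 1)},
    {"customer_id": 1, "total": 2500, "order_date": date(2025, 3, 9)},
    {"customer_id": 2, "total": 400, "order_date": date(2025, 1, 15)},
    {"customer_id": 3, "total": 9000, "order_date": date(2024, 12, 31)},
]


def qualifies(order):
    """The selection predicate: total > 1000 AND order_date >= 2025-01-01."""
    return order["total"] > 1000 and order["order_date"] >= date(2025, 1, 1)


def naive_plan(customers, orders):
    """Scan the orders for each customer: O(|customers| * |orders|) in the worst case."""
    result = []
    for c in customers:
        if any(o["customer_id"] == c["id"] and qualifies(o) for o in orders):
            result.append((c["name"], c["email"]))
    return result


def semi_join_plan(customers, orders):
    """Apply the selection to orders once, then probe a hash set of customer ids."""
    qualifying_ids = {o["customer_id"] for o in orders if qualifies(o)}
    # Semi-join: each customer appears at most once, so no DISTINCT step is needed.
    return [(c["name"], c["email"]) for c in customers if c["id"] in qualifying_ids]


print(naive_plan(customers, orders))
print(semi_join_plan(customers, orders))
```

Both plans return the same rows, which is the point of an equivalence-preserving rewrite; only the amount of work differs. The customer with two qualifying orders still appears once in the semi-join result.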

Datalog and Logic Programming for Databases

One of Ullman’s most forward-looking contributions was his work on Datalog — a declarative query language based on logic programming. While SQL is the practical standard for database queries, Datalog offers a cleaner theoretical foundation, particularly for recursive queries (queries that need to follow chains of relationships, like finding all ancestors of a person or all nodes reachable from a starting point in a graph).

Ullman championed Datalog as both a research tool and a practical query language, developing efficient evaluation algorithms and demonstrating its applications in program analysis, network routing, security policy specification, and knowledge representation. His work showed that the techniques of logic programming (unification, resolution, and fixed-point computation) could be applied to database queries to handle problems that classical relational algebra cannot express at all, such as the transitive closure of a relation.
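As an illustration of fixed-point evaluation, here is a minimal Python sketch of semi-naive evaluation for the classic two-rule ancestor program. It hard-codes those two rules rather than implementing a general Datalog engine, and the function name `transitive_closure` and the sample facts are assumptions made for this example.

```python
def transitive_closure(parent):
    """Semi-naive evaluation of the Datalog program

        ancestor(X, Y) :- parent(X, Y).
        ancestor(X, Z) :- parent(X, Y), ancestor(Y, Z).

    `parent` is a set of (x, y) pairs; the result is the set of ancestor facts.
    """
    ancestor = set(parent)   # facts from the non-recursive rule
    delta = set(parent)      # facts that were new in the previous round
    while delta:
        # Join parent only with the newly derived facts, not with all of ancestor.
        derived = {(x, z) for (x, y) in parent for (y2, z) in delta if y == y2}
        delta = derived - ancestor   # keep only facts not seen before
        ancestor |= delta
    return ancestor                  # fixed point: a round produced nothing new


family = {("ann", "bob"), ("bob", "cat"), ("cat", "dan")}
for fact in sorted(transitive_closure(family)):
    print(fact)
```

The loop stops when a round derives no new facts, which is the fixed point. Joining only against `delta` is what separates semi-naive from naive evaluation; a production engine would also index `delta` by its first column instead of using a nested scan.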

This work proved prescient. Datalog has experienced a renaissance in the 2010s and 2020s, finding applications in program analysis tools (such as Soufflé, used for points-to analysis in large codebases), network configuration verification, access control systems, and knowledge graphs. The idea that database queries and logical inference are fundamentally the same activity — an insight that Ullman’s work helped establish — has become increasingly important as systems need to reason about complex, interconnected data. Researchers like Christos Papadimitriou, who explored the computational complexity of database queries, built upon the theoretical framework that Ullman helped create.

Stanford, Students, and Industry Impact

Ullman’s move to Stanford University in 1979 placed him at the epicenter of Silicon Valley’s technology ecosystem, and he leveraged that position to create enormous impact through his students. As a doctoral advisor, Ullman supervised or co-supervised an extraordinary roster of students who went on to shape the technology industry. Among his advisees was Sergey Brin, co-founder of Google, who worked with Ullman before teaming up with Larry Page on the search engine that would transform the internet. Ullman also advised or taught students who became leaders in database systems, programming languages, and data mining.

At Stanford, Ullman became deeply involved in the theory and practice of data mining — the extraction of patterns and knowledge from large datasets. His textbook Mining of Massive Datasets, co-authored with Jure Leskovec and Anand Rajaraman, addressed the algorithms and techniques needed to process the enormous volumes of data generated by the web, social networks, and commercial systems. The book covered locality-sensitive hashing, recommendation systems, link analysis (including the PageRank algorithm that powered Google), frequent itemset mining, and stream processing. Made freely available online, it became one of the most widely used resources for students and practitioners working with big data.
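For readers who want a feel for the link analysis mentioned above, here is a short Python sketch of PageRank computed by power iteration. It is a textbook-style simplification and not Google's implementation or code from the book: the function name `pagerank`, the damping factor of 0.85, the fixed iteration count, and the three-page example graph are all assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank.

    `links` maps each page to the list of pages it links to.
    Returns a dict of page -> rank; the ranks sum to 1.
    """
    nodes = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}          # start from the uniform distribution
    for _ in range(iterations):
        # Every page receives the "random jump" share first.
        new_rank = {v: (1.0 - damping) / n for v in nodes}
        for v in nodes:
            targets = links.get(v, [])
            if targets:
                share = damping * rank[v] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # Dead end: spread this page's rank evenly over all pages.
                share = damping * rank[v] / n
                for t in nodes:
                    new_rank[t] += share
        rank = new_rank
    return rank


web = {"a": ["b", "c"], "b": ["c"], "c": ["a"]}
for page, score in sorted(pagerank(web).items()):
    print(page, round(score, 3))
```

Page `c` ends up ranked above `b` because it receives links from both other pages. At web scale the same iteration is run over a sparse matrix distributed across many machines, which is the kind of engineering concern the book addresses.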

Ullman’s connection to the founding of Google is a particularly striking example of how academic research in theoretical computer science can lead to transformative commercial innovation. The techniques of information retrieval, graph analysis, and efficient data processing that Brin and Page used to build Google were deeply connected to the theoretical frameworks that Ullman and his colleagues had developed. The relational database theory, query optimization, and data mining techniques that Ullman had spent decades developing were precisely the intellectual foundations needed for the era of web-scale data processing.

The Turing Award and Legacy

In 2020, the Association for Computing Machinery awarded the A.M. Turing Award — often called the Nobel Prize of computing — jointly to Jeffrey Ullman and Alfred Aho. The citation recognized their “fundamental algorithms and theory underlying programming language implementation.” The award acknowledged not just the Dragon Book or any single contribution, but the cumulative impact of decades of work on formal languages, automata theory, compiler design, and the textbooks that communicated these ideas to the world.

The Turing Award committee specifically noted the breadth of Aho and Ullman’s influence: their work spans theoretical foundations, practical algorithms, tool building, and education. Few researchers have made significant contributions in all four areas. Ullman’s ability to move between deep theory (formal languages, computational complexity, relational database theory) and practical impact (compiler design, query optimization, data mining) exemplifies the kind of career that advances an entire field rather than just a single subarea.

Ullman’s legacy is woven into the fabric of modern computing in ways that most programmers never see directly but depend on constantly. Every time a compiler transforms source code into machine instructions, it uses techniques that Ullman helped develop and codify. Every time a database optimizer rewrites a query for faster execution, it applies algebraic transformations that Ullman’s theoretical work formalized. Every time a student opens a textbook on automata theory, algorithms, or compilers, they are likely reading material shaped by Ullman’s pedagogical vision. And every time a web search engine processes a query against billions of documents, it relies on information retrieval and data mining techniques that Ullman helped pioneer.

Philosophy and Intellectual Approach

Ullman’s career embodies a particular philosophy about the relationship between theory and practice in computer science. He has consistently argued that deep theoretical understanding is not opposed to practical engineering — it is the foundation of it. His compiler work shows this: the formal theory of context-free grammars and automata is not merely an academic exercise but the direct basis for the parsing algorithms that every compiler uses. His database work shows it equally: relational algebra and dependency theory are not abstractions divorced from reality but the formal tools that enable query optimization in real systems.

This perspective connects Ullman to a tradition in computer science that includes figures like Edsger Dijkstra, who insisted on mathematical rigor in programming, and Donald Knuth, who combined theoretical analysis with practical tool building. Like these predecessors, Ullman demonstrated that the most lasting practical contributions often emerge from the deepest theoretical insights. The Aho-Corasick algorithm, LR parsing, query optimization through algebraic rewriting — all are cases where theory produced solutions that no amount of ad hoc engineering could have achieved.

Ullman has also been a vocal advocate for the importance of computer science education. His commitment to writing textbooks — a task that many researchers consider less prestigious than publishing papers — reflects a belief that knowledge has value only when it can be transmitted effectively. The clarity and rigor of his textbooks have set a standard for technical writing in computer science, and his decision to make Mining of Massive Datasets freely available online extended his educational reach to students worldwide who might not have access to expensive textbooks.

Impact on Modern Development

The techniques and principles that Ullman helped develop continue to evolve in modern computing. Just-in-time compilation, which powers languages like JavaScript and Java, extends the compilation techniques formalized in the Dragon Book into runtime environments. Modern database systems — from PostgreSQL to distributed SQL engines like CockroachDB and cloud-native databases — rely on query optimization frameworks that descend directly from Ullman’s theoretical work. Static analysis tools that detect bugs in code before it runs use data-flow analysis techniques that the Dragon Book popularized. And the resurgence of Datalog in program analysis and network verification demonstrates that Ullman’s work on logic-based query languages was decades ahead of its time.
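The data-flow analysis mentioned above also rests on fixed-point iteration. As a rough sketch, the Python below computes live variables over a tiny control-flow graph by iterating the standard liveness equations until nothing changes. The block names, the `use`/`def` sets, and the function name `liveness` are hypothetical, and a real compiler would use a worklist and bit vectors rather than this simple round-robin loop.

```python
def liveness(blocks, successors):
    """Live-variable analysis by round-robin iteration.

    blocks:     name -> (use, defs), the variables read before being written
                in the block and the variables written in the block
    successors: name -> list of successor block names

    Iterates   out[B] = union of in[S] over successors S of B
               in[B]  = use[B] | (out[B] - defs[B])
    until no set changes.
    """
    live_in = {b: set() for b in blocks}
    live_out = {b: set() for b in blocks}
    changed = True
    while changed:
        changed = False
        for b, (use, defs) in blocks.items():
            out = set().union(*(live_in[s] for s in successors.get(b, [])))
            new_in = use | (out - defs)
            if out != live_out[b] or new_in != live_in[b]:
                live_out[b], live_in[b] = out, new_in
                changed = True
    return live_in, live_out


# Hypothetical control-flow graph for:
#   B1: x = 1; y = 2
#   B2: while x < 10:
#   B3:     x = x + y
#   B4: return x
blocks = {
    "B1": (set(), {"x", "y"}),
    "B2": ({"x"}, set()),
    "B3": ({"x", "y"}, {"x"}),
    "B4": ({"x"}, set()),
}
successors = {"B1": ["B2"], "B2": ["B3", "B4"], "B3": ["B2"], "B4": []}

live_in, live_out = liveness(blocks, successors)
for name in blocks:
    print(name, sorted(live_in[name]), sorted(live_out[name]))
```

In this example `y` is live throughout the loop even though block `B2` never mentions it, because `B3` reads it on a later path. The sets only grow and are bounded by the number of variables, so the iteration is guaranteed to terminate.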

The impact extends beyond individual technologies to the way computer scientists think about problems. Ullman’s textbooks taught generations of researchers and engineers to think formally — to define problems precisely, to prove that solutions are correct, to analyze their efficiency mathematically, and to seek general frameworks rather than ad hoc solutions. This intellectual discipline, applied across compiler construction, database theory, and algorithms, is perhaps Ullman’s most enduring contribution. It shaped not just specific tools and systems but the mindset of the people who build them.

For database engineers optimizing query plans, for compiler writers implementing new language features, for researchers exploring the boundaries of what can be efficiently computed — Ullman’s work provides both the foundational techniques and the intellectual framework. His career demonstrates that the most powerful form of practical engineering is one grounded in rigorous theory, communicated through clear education, and validated through decades of real-world impact. The dragon on the cover of his most famous book may be fictional, but the tools he forged to slay it are very real — and they continue to power the systems that underlie modern computing.

Frequently Asked Questions

What is the Dragon Book and why is it important?

The “Dragon Book” is the informal name for Compilers: Principles, Techniques, and Tools, co-authored by Jeffrey Ullman, Alfred Aho, and Ravi Sethi (with Monica Lam joining for the second edition). Named for the dragon illustration on its cover, it has been the standard university textbook on compiler construction since 1986. The book systematically covers every phase of compilation — lexical analysis, parsing, semantic analysis, optimization, and code generation — with rigorous theoretical foundations and practical algorithms. It has been used in compiler courses at hundreds of universities worldwide and has influenced the design of virtually every modern compiler, interpreter, and language processing tool.

What are Jeffrey Ullman’s main contributions to database theory?

Ullman made foundational contributions to relational database theory, particularly in query optimization, dependency theory, and the Datalog query language. His work on algebraic query optimization — transforming database queries into equivalent but more efficient forms using relational algebra rewrite rules — became a core component of every database engine. His two-volume Principles of Database and Knowledge-Base Systems was a landmark text that formalized the theoretical underpinnings of relational databases. His championship of Datalog demonstrated that logic programming could serve as a powerful query language for recursive and complex data relationships, an insight that has proven increasingly important in modern program analysis and knowledge graph systems.

How did Jeffrey Ullman influence the founding of Google?

Sergey Brin, co-founder of Google, was a graduate student at Stanford University where Ullman was a professor. Brin worked with Ullman before collaborating with Larry Page on the PageRank algorithm and the Google search engine. While Ullman did not directly create Google’s technology, his research environment at Stanford — focused on data mining, information retrieval, and large-scale data processing — provided the intellectual ecosystem in which Google was conceived. Ullman’s textbook Mining of Massive Datasets, which covers algorithms for web-scale data processing including link analysis and recommendation systems, reflects the same research tradition that produced Google.

What is Datalog and why does it matter today?

Datalog is a declarative query language based on logic programming that Ullman championed for database applications. Unlike SQL, Datalog handles recursive queries naturally — it can express computations like finding all reachable nodes in a graph or all ancestors in a hierarchy without the awkward syntax of SQL recursive common table expressions. After decades as primarily an academic language, Datalog has experienced a practical renaissance. Modern tools like Soufflé use Datalog for program analysis (detecting bugs and security vulnerabilities in large codebases), network configuration verification, and access control policy specification. Its clean theoretical foundation makes it particularly well-suited for applications where correctness guarantees are essential.

Why did Jeffrey Ullman receive the Turing Award?

In 2020, the ACM awarded the Turing Award jointly to Jeffrey Ullman and Alfred Aho for “fundamental algorithms and theory underlying programming language implementation.” The award recognized their combined contributions to formal language theory, compiler design (particularly through the Dragon Book), algorithms textbooks, and the theoretical foundations that enable programming languages to be implemented efficiently. The Turing Award committee noted the extraordinary breadth of their impact — spanning theoretical foundations, practical algorithms, software tools, and education — acknowledging that their textbooks and research shaped how an entire discipline thinks about language processing and computation.