In the spring of 2023, Jensen Huang stood on stage at the GTC conference and declared that the world was experiencing an “iPhone moment” for artificial intelligence. Behind him, projected in green and black, were the specifications of a new generation of GPUs that would power the large language models reshaping every industry on the planet. The stock market agreed: within eighteen months, NVIDIA’s market capitalization would surpass three trillion dollars, briefly making it the most valuable company on Earth. But to understand how a graphics card manufacturer from Santa Clara became the engine of the AI revolution, you have to go back thirty years, to a moment when three engineers in a Denny’s restaurant decided that the future of computing was parallel.

Jensen Huang was thirty years old. He had an electrical engineering degree, a chip design background, and a conviction that the human desire for visual realism in computing would drive demand for a new kind of processor, one that could perform thousands of simple calculations simultaneously rather than a few complex ones sequentially. That conviction would prove to be one of the most consequential technical bets in the history of the semiconductor industry. The company he co-founded, NVIDIA, did not merely dominate the GPU market. It created the GPU market. And when deep learning researchers discovered that the same parallel architecture that rendered video game graphics could train neural networks orders of magnitude faster than conventional CPUs, NVIDIA found itself at the center of a technological transformation that even Huang himself had not fully anticipated.

Today, NVIDIA’s chips power the training runs of most frontier AI models, including the systems behind OpenAI’s GPT series and Meta’s LLaMA. The deep learning revolution that Geoffrey Hinton spent decades preparing for arrived on hardware that Jensen Huang built.

Early Life and Education

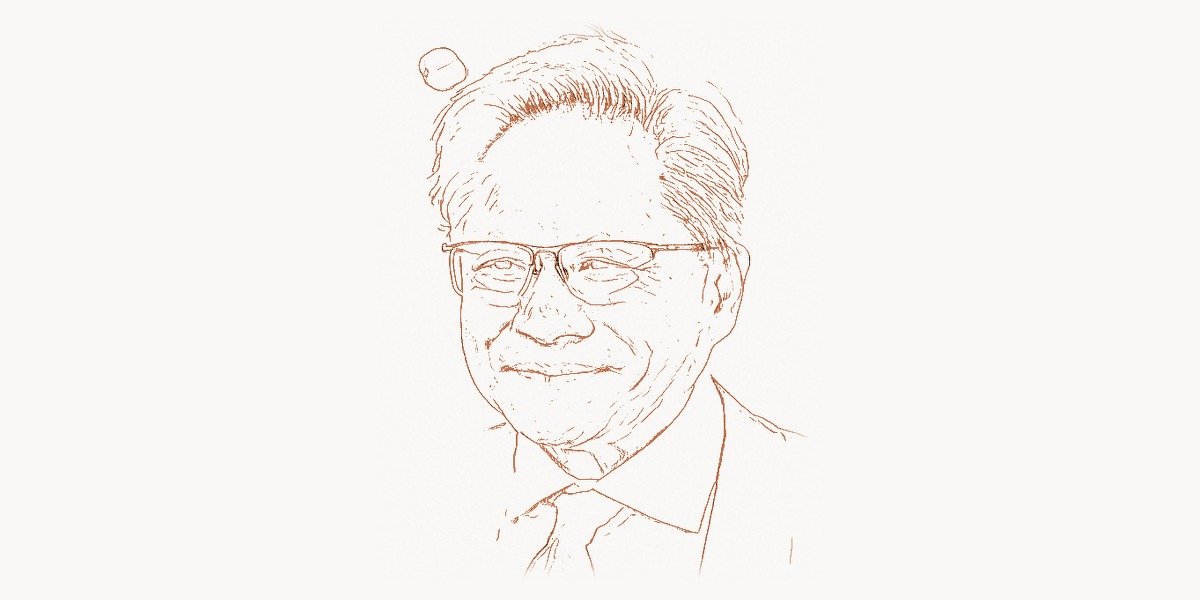

Jen-Hsun Huang was born on February 17, 1963, in Tainan, Taiwan. His father was a chemical engineer and his mother a schoolteacher. The family moved to Thailand when Huang was five, seeking better economic opportunities. At age nine, he and his older brother were sent to the United States to live with relatives in Tacoma, Washington. The plan was for the boys to attend a preparatory school, but a miscommunication landed them at Oneida Baptist Institute in rural Kentucky — a reform school where students were assigned manual labor, including cleaning horse stalls and tending tobacco fields. Huang was the youngest student and one of very few Asian children. He later recalled that the experience, while difficult, taught him resilience and self-reliance at a formative age.

His parents eventually joined them in the United States, and the family settled in Oregon. Huang attended Aloha High School near Portland, where he excelled academically and became a nationally ranked table tennis player. He enrolled at Oregon State University, earning a bachelor’s degree in electrical engineering in 1984. At Oregon State, he met Lori Mills, who would later become his wife; she was his lab partner in an engineering class. After graduation, Huang joined Advanced Micro Devices (AMD) as a chip designer. He then moved to LSI Logic, a semiconductor company where he worked on microprocessor design. Recognizing that he needed a stronger theoretical foundation, he enrolled at Stanford University, earning a master’s degree in electrical engineering in 1992. At Stanford, he was exposed to the emerging field of computer graphics and began to see the commercial potential of hardware acceleration for visual computing, an insight that would shape the next three decades of his career.

The GPU Computing Breakthrough

In April 1993, Jensen Huang co-founded NVIDIA with Chris Malachowsky and Curtis Priem, both experienced chip architects. The company’s name derived from “invidia,” the Latin word for envy. Its mission was audacious: to build dedicated hardware for 3D graphics acceleration at a time when the market for such chips was unproven. Personal computers in 1993 rendered graphics almost entirely on the CPU, and the conventional wisdom held that general-purpose processors would always be fast enough for visual computing. Huang bet otherwise. He believed that real-time 3D graphics would become essential: first for gaming, then for professional visualization, and eventually for applications no one had yet imagined.

Technical Innovation

NVIDIA’s early years were marked by intense competition and near-fatal missteps. The company’s first product, the NV1, shipped in 1995 with an unconventional quadratic texture mapping approach instead of the industry-standard triangle-based rendering. It was technically ambitious but commercially disastrous — game developers had committed to triangles, and the NV1 was incompatible with the emerging Microsoft Direct3D standard. NVIDIA nearly went bankrupt. Huang laid off more than half the company and pivoted aggressively. The result was the RIVA 128, released in 1997, which was fully compatible with Direct3D and delivered performance that undercut established competitors like 3dfx. It sold a million units in four months.

But the product that truly defined NVIDIA’s trajectory was the GeForce 256, released in 1999. NVIDIA marketed it as the world’s first “Graphics Processing Unit,” coining the term GPU. The GeForce 256 moved the entire transform-and-lighting pipeline onto the graphics card, freeing the CPU from geometry calculations and establishing the architectural principle that would define GPU computing for the next quarter century: massive parallelism. While a CPU of that era had a single core executing instructions sequentially, with deep pipelines optimized for low latency on individual tasks, the GeForce 256 ran four pixel pipelines side by side, trading single-task latency for throughput across many simultaneous operations. Each individual unit was simpler and slower than a CPU core, but working in concert, they could process vertices and pixels at rates no CPU could match.

This architectural insight — that many problems in computing are inherently parallel and benefit more from throughput than from single-thread performance — was not unique to NVIDIA. But NVIDIA executed on it with a relentlessness that left competitors behind. The company adopted an aggressive two-year product cycle (later compressed to roughly annual), invested heavily in driver software and developer relations, and maintained a vertical integration strategy that controlled the full stack from silicon design to the software tools developers used to program the hardware. By the mid-2000s, NVIDIA’s GPUs contained hundreds of parallel cores, and researchers began to notice that the same hardware that rendered explosions in video games could accelerate scientific simulations, cryptographic computations, and — most consequentially — the matrix multiplications at the heart of neural network training.

Why It Mattered

GPU computing arrived just as the traditional route to faster computers was closing. For decades, improvements in computing performance had followed Moore’s Law, the observation that transistor density doubled roughly every two years, delivering steady gains in single-thread CPU performance. By the mid-2000s, this trajectory was slowing. CPUs hit power and thermal walls that limited clock frequency increases. The industry’s response was multi-core CPUs (two, four, eight cores), but most software could not efficiently use more than a few parallel threads. GPUs offered a radically different approach: thousands of simple cores executing the same instruction on different data simultaneously, a model known as Single Instruction, Multiple Data (SIMD); NVIDIA calls its thread-oriented variant SIMT, for Single Instruction, Multiple Threads. For workloads that fit this model, and neural network training fit it almost perfectly, GPUs delivered speedups of 10x to 100x over CPUs.
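The limited payoff from a handful of extra CPU cores can be made concrete with Amdahl's law, which the article does not name but which is the usual way to reason about this trade-off. The sketch below is illustrative only: the function name `amdahl_speedup` and the two parallel fractions are assumptions chosen for the example, not measurements of any real program.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of a program can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part always takes its full time; only the parallel part shrinks.
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Hypothetical workloads: ordinary desktop software vs. a matrix-heavy job.
    workloads = [("half parallel", 0.50), ("99.9% parallel", 0.999)]
    for label, fraction in workloads:
        for workers in (8, 1024):
            bound = amdahl_speedup(fraction, workers)
            print(f"{label:>15}, {workers:>4} workers: at most {bound:.1f}x")
```

Under these assumed fractions, the half-parallel program never reaches a 2x speedup no matter how many workers it gets, while the almost fully parallel one keeps scaling into the hundreds. That asymmetry is the reason a thousand simple cores help matrix arithmetic far more than they help typical desktop code.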

When Yann LeCun’s convolutional neural networks and Hinton’s deep learning architectures began achieving breakthrough results in the early 2010s, it was NVIDIA’s GPUs that made the training computationally feasible. The ImageNet competition of 2012, where Alex Krizhevsky’s AlexNet (trained on two NVIDIA GTX 580 GPUs) crushed the competition by a historic margin, was the moment the AI research community realized that GPU computing was not just useful but essential. Without the parallel processing power that NVIDIA had spent two decades developing for gamers, the deep learning revolution would have been delayed by years, possibly a decade. The training runs that took days on GPUs would have taken months on CPUs — long enough to make iterative research effectively impossible.

The following Python example demonstrates the core principle behind GPU-accelerated deep learning — massive parallel matrix operations that map naturally onto GPU architecture. Modern frameworks like PyTorch abstract the CUDA layer, but the underlying computation remains the same parallel matrix multiplication that NVIDIA’s hardware was designed to accelerate:

    import time

    import torch


    def benchmark_matrix_ops(size=4096):
        """
        Demonstrate why GPU parallelism transformed deep learning.

        Matrix multiplication is the core operation in neural network
        training: every forward pass and gradient computation reduces
        to multiplying large matrices. GPUs typically perform this
        operation far faster than CPUs.
        """
        # Create random matrices simulating neural network weight layers
        A_cpu = torch.randn(size, size)
        B_cpu = torch.randn(size, size)

        # CPU matrix multiply: a few cores, limited parallelism
        start = time.perf_counter()
        C_cpu = torch.mm(A_cpu, B_cpu)
        cpu_time = time.perf_counter() - start

        print(f"Matrix size: {size}x{size}")
        print(f"CPU time: {cpu_time:.4f}s")

        # GPU matrix multiply: thousands of CUDA cores in parallel
        if not torch.cuda.is_available():
            print("No CUDA device found; skipping the GPU measurement.")
            return

        A_gpu = A_cpu.cuda()  # Transfer to NVIDIA GPU memory
        B_gpu = B_cpu.cuda()
        torch.mm(A_gpu, B_gpu)  # Warm-up so one-time CUDA setup is not timed
        torch.cuda.synchronize()

        start = time.perf_counter()
        C_gpu = torch.mm(A_gpu, B_gpu)  # Uses cuBLAS under the hood
        torch.cuda.synchronize()  # Kernels run asynchronously; wait before timing
        gpu_time = time.perf_counter() - start

        speedup = cpu_time / gpu_time
        print(f"GPU time: {gpu_time:.4f}s")
        print(f"GPU speedup: {speedup:.1f}x")
        # Speedups of roughly 20-100x are common for large matrices,
        # though the exact figure depends on the CPU and GPU involved.
        # Compounded over weeks of training, that gap is why large
        # models are impractical to train on CPUs alone.


    benchmark_matrix_ops()

Other Major Contributions

Jensen Huang’s strategic vision extended far beyond building faster graphics cards. He recognized early that hardware without software is a paperweight, and that NVIDIA’s long-term dominance depended on creating an ecosystem that locked developers into its platform. This insight produced several transformative initiatives.

CUDA (Compute Unified Device Architecture). Released in 2006, CUDA was arguably NVIDIA’s most consequential strategic decision after the GPU itself. Before CUDA, programming a GPU for general-purpose computing required developers to disguise their computations as graphics operations — literally pretending that matrix multiplications were texture rendering tasks. This was awkward, error-prone, and inaccessible to most scientists and engineers. CUDA provided a C-like programming language and runtime that allowed developers to write parallel code for NVIDIA GPUs directly, without any graphics pretense. The following example illustrates the fundamental CUDA programming model — launching thousands of parallel threads to perform element-wise computation on arrays:

    #include <cuda_runtime.h>

    // CUDA kernel: each thread processes one element in parallel.
    // This pattern (mapping one thread to one data element) is
    // the foundation of GPU-accelerated deep learning operations.
    __global__ void vectorAdd(const float *A, const float *B, float *C, int N) {
        // Calculate unique global thread index
        int idx = blockIdx.x * blockDim.x + threadIdx.x;
        if (idx < N) {  // Guard: the last block may hold surplus threads
            C[idx] = A[idx] + B[idx];
        }
    }

    // Matrix multiply kernel: the core operation in neural networks.
    // Each thread computes one element of the M x N output matrix.
    __global__ void matMul(const float *A, const float *B, float *C,
                           int M, int K, int N) {
        int row = blockIdx.y * blockDim.y + threadIdx.y;
        int col = blockIdx.x * blockDim.x + threadIdx.x;
        if (row < M && col < N) {
            float sum = 0.0f;
            for (int i = 0; i < K; i++) {
                sum += A[row * K + i] * B[i * N + col];
            }
            C[row * N + col] = sum;
        }
    }

    // Host code: launch ~1 million parallel threads
    int main() {
        int N = 1 << 20;  // ~1 million elements
        size_t bytes = N * sizeof(float);

        // Allocate device buffers and zero the inputs.
        // ... copying real input data from the host is omitted ...
        float *d_A, *d_B, *d_C;
        cudaMalloc(&d_A, bytes);
        cudaMalloc(&d_B, bytes);
        cudaMalloc(&d_C, bytes);
        cudaMemset(d_A, 0, bytes);
        cudaMemset(d_B, 0, bytes);

        // Launch kernel with 256 threads per block
        int threadsPerBlock = 256;
        int blocksPerGrid = (N + threadsPerBlock - 1) / threadsPerBlock;
        vectorAdd<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, N);

        // For matrix multiply: 2D grid of thread blocks. The dimensions are
        // illustrative; a 1024 x 1024 matrix fills the same 2^20-element buffers.
        int M = 1024, K = 1024, cols = 1024;
        dim3 block(16, 16);
        dim3 grid((cols + 15) / 16, (M + 15) / 16);
        matMul<<<grid, block>>>(d_A, d_B, d_C, M, K, cols);

        cudaDeviceSynchronize();  // Wait for both kernels to finish
        cudaFree(d_A);
        cudaFree(d_B);
        cudaFree(d_C);
        return 0;
    }

CUDA’s importance was strategic as much as technical. By giving researchers a powerful and relatively easy-to-use tool for GPU programming, NVIDIA ensured that the growing body of scientific and machine learning code was written specifically for its hardware. When deep learning exploded in popularity, every major framework (TensorFlow, PyTorch, Caffe) was built on CUDA. Switching to a competing GPU meant rewriting the entire software stack, a cost that most researchers and companies were unwilling to bear. This software moat proved far more durable than any hardware advantage.
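The index arithmetic in the CUDA listing can be checked without a GPU. The following is a minimal Python sketch that walks the same grid of blocks and threads sequentially; the function name `launch_vector_add` and the returned bookkeeping values are hypothetical, introduced only for illustration. On real hardware the simulated threads would run concurrently rather than in a loop.

```python
def launch_vector_add(a, b, threads_per_block=256):
    """Emulate the vectorAdd launch: one simulated thread per array element."""
    n = len(a)
    if len(b) != n:
        raise ValueError("inputs must have the same length")
    # Ceiling division, as in the host code: enough blocks to cover all n elements.
    blocks_per_grid = (n + threads_per_block - 1) // threads_per_block
    c = [0.0] * n
    idle_threads = 0
    for block_idx in range(blocks_per_grid):
        for thread_idx in range(threads_per_block):
            # Same formula as blockIdx.x * blockDim.x + threadIdx.x
            idx = block_idx * threads_per_block + thread_idx
            if idx < n:  # same bounds guard as the kernel
                c[idx] = a[idx] + b[idx]
            else:
                idle_threads += 1  # surplus threads in the last block do nothing
    return c, blocks_per_grid, idle_threads


if __name__ == "__main__":
    a = [float(i) for i in range(1000)]
    b = [1.0] * 1000
    c, blocks, idle = launch_vector_add(a, b)
    print(f"blocks launched: {blocks}, idle threads: {idle}")
```

With 1,000 elements and 256 threads per block, the launch rounds up to four blocks, so 24 of the 1,024 threads fall past the end of the array. The `if (idx < N)` guard in the kernel exists to keep those threads from writing out of bounds.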

RTX and Real-Time Ray Tracing. In 2018, NVIDIA introduced the RTX series of GPUs with dedicated ray tracing cores (RT cores) and AI-powered denoising through tensor cores. Ray tracing, which simulates the physical behavior of light to generate photorealistic images, had been a gold standard in offline rendering for decades, used in films and visual effects. But real-time ray tracing in interactive applications was considered computationally infeasible. NVIDIA’s approach combined specialized hardware with AI: the RT cores handled ray-scene intersection calculations, while tensor cores ran neural networks that denoised the partially rendered image, producing convincing results from far fewer rays than a brute-force approach would require. A companion technique, DLSS (Deep Learning Super Sampling), used the same tensor cores to upscale frames rendered at a lower resolution, recovering much of the performance that ray tracing cost. Together they were an elegant demonstration of AI enhancing traditional computing tasks.
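To give a sense of what a single ray-scene intersection calculation involves, here is a minimal sketch of a ray-sphere test in plain Python. It is a simplification chosen for readable math: production ray tracers test rays against bounding volumes and triangles rather than spheres, and the function name `ray_sphere_hit` is an assumption for this example.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the smallest t >= 0 where origin + t * direction meets the sphere.

    Returns None when the ray misses. If direction has unit length,
    t is the distance from the origin to the hit point.
    """
    # Vector from the sphere center to the ray origin.
    oc = [o - c for o, c in zip(origin, center)]
    # Substituting the ray into |p - center|^2 = radius^2 gives a quadratic in t.
    a = sum(d * d for d in direction)
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    discriminant = b * b - 4.0 * a * c
    if a == 0.0 or discriminant < 0.0:
        return None  # degenerate ray, or the ray's line never touches the sphere
    root = math.sqrt(discriminant)
    for t in ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a)):
        if t >= 0.0:
            return t  # nearest intersection in front of the origin
    return None  # the sphere lies entirely behind the ray


if __name__ == "__main__":
    # A ray fired along +z toward a unit sphere centered five units away.
    print(ray_sphere_hit((0, 0, 0), (0, 0, 1), (0, 0, 5), 1.0))  # prints 4.0
```

A rendered frame needs tests like this for millions of rays against complex geometry, which is why moving the work into dedicated hardware made the difference between offline and interactive rendering.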

AI Data Center Infrastructure. Recognizing that AI training was moving from individual GPUs to massive clusters, Huang pivoted NVIDIA’s data center business from an afterthought to its primary revenue driver. The company developed purpose-built AI training systems, the DGX line, that packaged multiple GPUs with high-bandwidth interconnects (NVLink and NVSwitch) into integrated units designed for large-scale deep learning. The A100 GPU (2020) and its successor the H100 (2022) became the standard hardware for training frontier AI models. NVIDIA also developed networking technology (through its acquisition of Mellanox) and software (NCCL for multi-GPU communication, Triton Inference Server for model serving) that supported distributed training and deployment across thousands of GPUs. By 2024, NVIDIA hardware was the default choice for building frontier AI models, and the company controlled an estimated 80-95% of the AI training chip market.
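The communication step that multi-GPU training depends on can be sketched in a few lines. The example below shows only the meaning of an averaging all-reduce in data-parallel training, using plain Python lists in place of GPU buffers. It is not how NCCL implements the operation, and the names `all_reduce_mean` and `sgd_step` are hypothetical.

```python
def all_reduce_mean(per_worker_grads):
    """Average gradients element-wise and hand every worker the same result."""
    if not per_worker_grads:
        raise ValueError("need at least one worker")
    length = len(per_worker_grads[0])
    if any(len(grad) != length for grad in per_worker_grads):
        raise ValueError("all gradient vectors must have the same length")
    workers = len(per_worker_grads)
    mean = [sum(column) / workers for column in zip(*per_worker_grads)]
    # Each worker receives its own copy of the averaged gradient.
    return [list(mean) for _ in range(workers)]


def sgd_step(weights, grad, lr=0.1):
    """Apply one plain gradient-descent update."""
    return [w - lr * g for w, g in zip(weights, grad)]


if __name__ == "__main__":
    # Three simulated workers, each with a gradient from its own data shard.
    local_grads = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    synced = all_reduce_mean(local_grads)
    replicas = [sgd_step([1.0, 1.0], grad) for grad in synced]
    print(synced[0])
    print(all(replica == replicas[0] for replica in replicas))
```

Because every worker applies the same averaged gradient, the model replicas stay identical after each step. The cost of that exchange grows with model size and GPU count, which is where fast interconnects and a tuned communication library earn their place.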

Omniverse. Launched in 2020, NVIDIA Omniverse is a real-time collaboration and simulation platform built on USD (Universal Scene Description, originally developed by Pixar). It allows engineers, designers, and researchers to build physically accurate digital twins of real-world environments — factories, cities, autonomous vehicle scenarios — and simulate them using GPU-accelerated physics. Omniverse represents Huang’s vision of a future where AI systems are trained and tested in simulated worlds before being deployed in the physical one. Companies like BMW, Siemens, and Amazon have used Omniverse to simulate factory layouts, robotic workflows, and warehouse logistics.

Philosophy and Approach

Jensen Huang’s leadership style and business philosophy are distinctive in an industry dominated by understated engineers. He is known for wearing a black leather jacket at every public appearance — it has become his signature, as recognizable in tech circles as Steve Jobs’s turtleneck. But beneath the showmanship is a management philosophy shaped by decades of near-death corporate experiences and relentless competition.

Key Principles

Intellectual honesty and “speed of light” thinking. Huang frequently pushes his engineering teams to calculate the theoretical maximum performance of a system (the “speed of light”) and then measure how close their implementation comes. This approach eliminates hand-waving and forces rigorous analysis of where performance is being lost. He has described NVIDIA’s culture as one where “the difference between a good company and a great company is the last 10% of performance.”
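Applied to the matrix multiplication benchmark earlier in this article, a “speed of light” check might look like the sketch below. It uses the conventional count of 2·m·k·n floating-point operations for a matrix product. The peak throughput and the measured time are hypothetical placeholders, not figures for any real GPU, and the function names are assumptions for this example.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """Conventional FLOP count for an (m x k) @ (k x n) product.

    Each of the m * n outputs needs k multiplies and about k adds.
    """
    return 2 * m * k * n


def fraction_of_peak(m, k, n, seconds, peak_flops_per_second):
    """How close a measured run came to the hardware's theoretical maximum."""
    if seconds <= 0 or peak_flops_per_second <= 0:
        raise ValueError("seconds and peak_flops_per_second must be positive")
    achieved = matmul_flops(m, k, n) / seconds
    return achieved / peak_flops_per_second


if __name__ == "__main__":
    size = 4096
    measured_seconds = 0.05   # hypothetical timing from a benchmark run
    assumed_peak = 10e12      # hypothetical peak: 10 TFLOP/s
    ratio = fraction_of_peak(size, size, size, measured_seconds, assumed_peak)
    print(f"Work: {matmul_flops(size, size, size):,} FLOPs")
    print(f"Achieved {ratio:.1%} of the assumed peak")
```

The value of the exercise is the gap it exposes. A run at about a quarter of peak, as in this made-up case, prompts the question of where the rest went: memory bandwidth, launch overhead, or a poorly shaped workload.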

A flat organizational structure. NVIDIA has an unusually flat management hierarchy for a company of its size. Huang reportedly has around 60 direct reports — far more than the typical CEO span of 7-10. He does not use a conventional one-on-one meeting structure; instead, he relies on a system of “Top 5” emails where employees send him their five most important priorities, challenges, or observations. This creates a direct information channel that bypasses middle management and gives Huang an unusually granular view of operations across the company.

Betting on the long term. NVIDIA’s investment in CUDA in 2006 is the canonical example. At the time, GPU computing was a niche academic interest with no clear commercial payoff. The CUDA team was expensive to maintain, and the initiative generated no meaningful revenue for years. Huang committed to it anyway, reasoning that if general-purpose GPU computing succeeded, the early mover advantage would be insurmountable. The same long-term thinking drove NVIDIA’s early and aggressive investment in AI-specific hardware features — tensor cores, transformer engines, high-bandwidth memory — years before the market fully materialized.

Embracing near-failure. Huang has spoken openly about how close NVIDIA came to bankruptcy multiple times — the NV1 failure, the FX 5800 debacle, the mobile chip struggles. He views these experiences not as failures to be hidden but as essential lessons that shaped the company’s culture of urgency and adaptability. He has said that he tells new employees that NVIDIA is always “thirty days away from going out of business,” a deliberate exaggeration meant to maintain the intensity and focus of a startup even at massive scale.

Legacy and Impact

Jensen Huang’s impact on computing is measured not in any single product but in the fundamental shift he helped engineer in how computation is performed. Before NVIDIA, the computing industry’s default assumption was that the CPU was the universal processor and that all performance improvements would come from making CPUs faster. After NVIDIA — and specifically after CUDA made GPU computing accessible — the industry recognized that specialized parallel processors could deliver orders-of-magnitude improvements for specific workloads. This insight extended beyond GPUs: it laid the intellectual groundwork for the current era of domain-specific accelerators, including Google’s TPUs, Apple’s Neural Engine, and the proliferation of AI-specific chips from startups and established semiconductor companies alike.

The deep learning revolution is the most visible consequence. The neural network architectures developed by researchers like Hinton, LeCun, and Yoshua Bengio were theoretical possibilities for decades. What turned them into practical technologies was the availability of massive parallel computing power at reasonable cost, and that power came primarily from NVIDIA GPUs. The large majority of frontier AI models trained between 2012 and 2025, spanning versions of GPT, image generators, and autonomous driving perception systems, were trained on NVIDIA hardware, with systems trained on Google’s TPUs among the notable exceptions. This is an extraordinary concentration of technological influence in a single company and, to a remarkable degree, in a single individual’s strategic vision.

Huang has received numerous honors for his contributions. He was named one of Time magazine’s 100 most influential people multiple times. He received the IEEE Founder’s Medal in 2024 and the Dr. Morris Chang Exemplary Leadership Award. NVIDIA, under his leadership, has grown from a three-person startup in a Denny’s booth to a company employing over 30,000 people with annual revenues exceeding $60 billion. At the core of this success is a simple architectural principle that Huang grasped before almost anyone else: that the future of computing is parallel, and that the company that controls the parallel computing platform will control the future of technology.

Understanding the modern developer toolchain requires recognizing how GPU computing has reshaped everything from code compilation to real-time debugging and profiling. What Linus Torvalds did for open-source software — creating the foundational infrastructure that an entire ecosystem depends on — Jensen Huang did for parallel computing hardware. The comparison is not exact, but the structural parallel is instructive: both men built platforms that became so deeply embedded in the technology stack that alternatives, while theoretically possible, face insurmountable switching costs.

Key Facts

- Full name: Jen-Hsun “Jensen” Huang

- Born: February 17, 1963, in Tainan, Taiwan

- Education: B.S. in Electrical Engineering, Oregon State University (1984); M.S. in Electrical Engineering, Stanford University (1992)

- Co-founded NVIDIA: April 1993, with Chris Malachowsky and Curtis Priem

- Key innovations: Coined the term “GPU” (1999), launched CUDA (2006), introduced RTX real-time ray tracing (2018), built the DGX AI supercomputer line

- Market impact: NVIDIA’s market capitalization exceeded $3 trillion in 2024, making it briefly the world’s most valuable company

- Awards: IEEE Founder’s Medal (2024), Time 100 Most Influential People (multiple years), Dr. Morris Chang Exemplary Leadership Award

- Personal: Known for his signature black leather jacket; married to Lori Huang (née Mills), his college lab partner; avid table tennis player in his youth

- Leadership style: ~60 direct reports, “Top 5” email system, flat organizational hierarchy

- AI market dominance: NVIDIA holds an estimated 80-95% share of the AI training chip market as of 2024

FAQ

Why is Jensen Huang considered a pioneer of GPU computing?

Jensen Huang co-founded NVIDIA in 1993 and led the development of the Graphics Processing Unit as a distinct category of processor. NVIDIA coined the term “GPU” in 1999 with the GeForce 256 and subsequently developed CUDA in 2006, which transformed the GPU from a graphics-only device into a general-purpose parallel computing platform. This platform became the essential hardware foundation for deep learning and AI research. Without NVIDIA’s GPUs and the CUDA software ecosystem, the training of large neural networks — which requires massive parallel matrix operations — would have been impractical with the CPU technology available at the time. Huang’s strategic decisions to invest in parallel computing hardware and developer tools years before the market demanded them positioned NVIDIA as the indispensable infrastructure provider for the AI era.

How did NVIDIA’s GPUs enable the deep learning revolution?

Deep learning algorithms — particularly the backpropagation-trained neural networks developed by researchers like Geoffrey Hinton and Yann LeCun — require enormous amounts of computation. Training a neural network involves performing billions of matrix multiplications, an operation that is inherently parallel: each element of the output matrix can be computed independently. CPUs, designed for sequential processing with a few powerful cores, were poorly suited to this workload. GPUs, with thousands of simpler cores designed for parallel throughput, could perform these matrix operations 10-100x faster. The breakthrough moment was AlexNet in 2012, which used two NVIDIA GPUs to train a deep convolutional neural network that dramatically outperformed all previous approaches on the ImageNet benchmark. After that result, GPU-accelerated training became the standard approach, and NVIDIA’s CUDA ecosystem ensured that its hardware remained the platform of choice for AI researchers worldwide.
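The claim that each output element can be computed independently is easy to verify in miniature. The sketch below fills in the cells of a matrix product in a shuffled order and gets the same answer every time. It is a plain-Python illustration with a hypothetical function name, `matmul_any_order`, not a model of how a GPU schedules its work.

```python
import random


def matmul_any_order(a, b, seed=0):
    """Multiply two matrices, computing the output cells in a shuffled order."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a) or any(len(row) != n for row in b):
        raise ValueError("matrix shapes do not line up")
    cells = [(r, c) for r in range(m) for c in range(n)]
    random.Random(seed).shuffle(cells)  # arbitrary order, like unordered threads
    out = [[0] * n for _ in range(m)]
    for r, c in cells:
        # Each cell reads one row of a and one column of b, and nothing else.
        out[r][c] = sum(a[r][i] * b[i][c] for i in range(k))
    return out


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    b = [[5, 6], [7, 8]]
    results = [matmul_any_order(a, b, seed) for seed in range(5)]
    print(results[0])
    print(all(result == results[0] for result in results))
```

Since no cell depends on any other, the work can be handed to as many processors as there are cells. That is the property a GPU exploits when it assigns one thread to each output element.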

What is CUDA and why is it important for AI development?

CUDA (Compute Unified Device Architecture) is a parallel computing platform and programming model developed by NVIDIA, first released in 2006. It allows software developers to use NVIDIA GPUs for general-purpose computation, not just graphics rendering, by providing a C/C++-like programming interface and a comprehensive set of libraries, tools, and frameworks. CUDA’s importance for AI stems from two factors. First, it made GPU programming accessible to machine learning researchers who were not graphics programming specialists, dramatically lowering the barrier to GPU-accelerated experimentation. Second, it created a powerful software ecosystem lock-in: the GPU backends of the major deep learning frameworks (PyTorch, TensorFlow, JAX) were built first on CUDA libraries like cuBLAS, cuDNN, and NCCL. As a result, the vast majority of existing AI code is written and tuned for NVIDIA hardware, and moving it elsewhere takes real engineering effort. Competing GPU manufacturers like AMD and Intel have struggled to replicate this ecosystem, giving NVIDIA a durable competitive advantage that extends far beyond raw hardware performance.