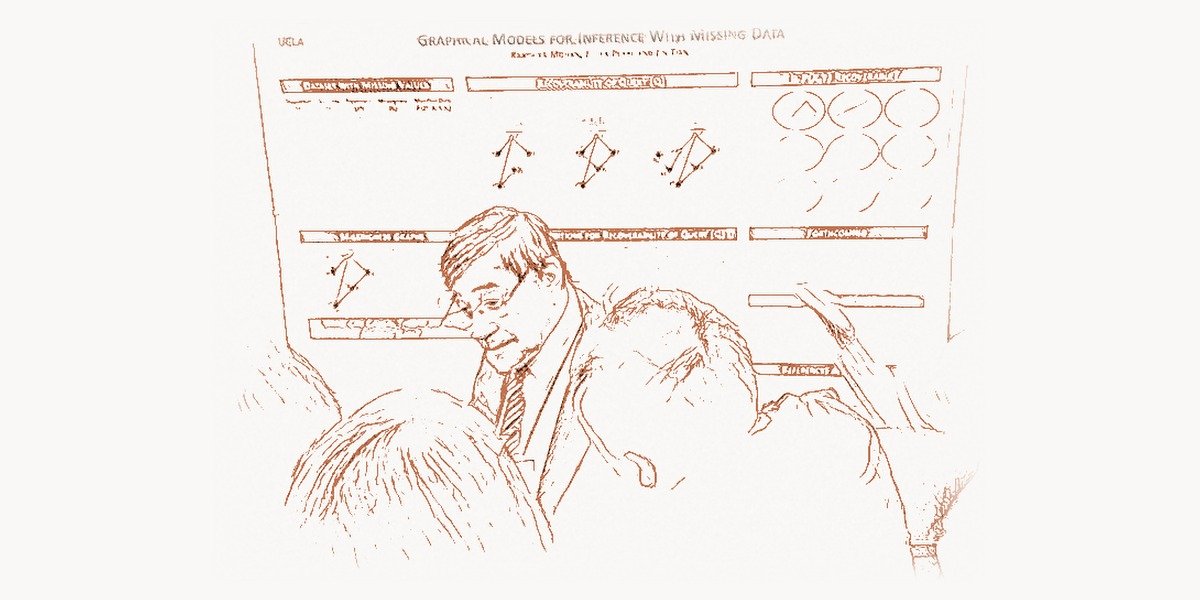

In 2011, Judea Pearl stood before an audience at the Association for Computing Machinery’s annual awards banquet to accept the A.M. Turing Award — the highest honor in computer science. The citation praised his “fundamental contributions to artificial intelligence through the development of a calculus for probabilistic and causal reasoning.” It was a recognition decades in the making. Pearl had spent the better part of thirty years building mathematical frameworks that allowed machines — and scientists — to reason about cause and effect with the same rigor that mathematics had long brought to logic and probability. His Bayesian networks, introduced in the 1980s, gave artificial intelligence a principled way to handle uncertainty. His do-calculus, developed through the 1990s and 2000s, gave science itself a formal language for distinguishing causation from correlation. These were not incremental improvements to existing methods. They were foundational shifts in how we think about thinking. Before Pearl, the fields of statistics and machine learning largely operated in a world of correlations — patterns in data that could predict but never explain. After Pearl, researchers had rigorous tools for asking “why” — for moving from observation to intervention to counterfactual reasoning. The impact has been felt across artificial intelligence, epidemiology, economics, social science, robotics, and philosophy. Every time a researcher writes a causal diagram to clarify the assumptions behind a study, every time an AI system reasons about the consequences of an action rather than merely pattern-matching on historical data, they are working with intellectual infrastructure that Judea Pearl built.

Early Life and Education

Judea Pearl was born on September 4, 1936, in Tel Aviv, in what was then the British Mandate of Palestine. He grew up in a family that valued education and intellectual inquiry. His early years were shaped by the turbulence of the region — the struggle for Israeli independence, the founding of the state of Israel in 1948, and the formative experience of growing up in a young nation building its institutions from scratch. Pearl attended the Technion — Israel Institute of Technology — in Haifa, where he earned a Bachelor of Science in electrical engineering in 1960. The Technion was already establishing itself as one of the foremost technical universities in the Middle East, and the training Pearl received there grounded him in the mathematical rigor and engineering pragmatism that would characterize his later work.

After completing his undergraduate degree, Pearl moved to the United States to pursue graduate studies. He earned a master's degree in electrical engineering from the Newark College of Engineering (now the New Jersey Institute of Technology) in 1961, followed by a master's degree in physics from Rutgers University. He received his Ph.D. in electrical engineering from the Polytechnic Institute of Brooklyn (now NYU Tandon School of Engineering) in 1965. His doctoral research dealt with superconductive memory devices, work rooted in physics and applied mathematics, and that quantitative training would prove essential when he later turned his attention to artificial intelligence.

In 1970, Pearl joined the faculty of the University of California, Los Angeles, in the Computer Science Department. UCLA would become his intellectual home for the rest of his career, and the setting for the work that would transform multiple fields. When Pearl arrived, the computer science department was still young — the discipline itself was barely two decades old — and the freedom to pursue unconventional research directions was considerable. Pearl initially worked on search algorithms, heuristic methods, and the efficiency of problem-solving strategies. But by the early 1980s, his attention was turning to a problem that he considered more fundamental: how should intelligent systems reason under uncertainty?

The Bayesian Networks Breakthrough

Technical Innovation

The problem Pearl confronted in the early 1980s was both practical and philosophical. Artificial intelligence systems of the era were built on logical rules — deterministic if-then statements that encoded expert knowledge about a domain. These expert systems worked well in narrow, well-defined situations, but they failed catastrophically when confronted with the kind of uncertain, incomplete, and noisy information that characterizes the real world. A medical diagnosis system might know that “if the patient has symptoms A, B, and C, then the disease is X,” but real patients rarely present with exactly the expected combination of symptoms. Information is missing. Tests are imperfect. Multiple diseases can produce similar symptoms. The deterministic logic of expert systems had no principled way to handle this uncertainty.

Several approaches had been proposed. Fuzzy logic attempted to handle uncertainty by allowing truth values between 0 and 1. Certainty factors, used in the influential MYCIN system, assigned confidence levels to rules and combined them using ad hoc formulas. Dempster-Shafer theory offered an alternative mathematical framework. But Pearl found all of these approaches unsatisfying. They lacked the coherence and mathematical rigor of probability theory — the branch of mathematics that had been developed over centuries specifically to reason about uncertainty. The question was not whether probability theory was the right framework; Pearl believed it was. The question was how to make probabilistic reasoning computationally tractable for complex, real-world problems involving hundreds or thousands of interrelated variables.

The answer was Bayesian networks. In his 1988 book “Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference,” Pearl introduced a framework that combined graph theory with probability theory to create a compact, intuitive, and computationally efficient representation of uncertain knowledge. A Bayesian network is a directed acyclic graph (DAG) in which nodes represent random variables and directed edges represent probabilistic dependencies between them. Each node is associated with a conditional probability table that quantifies the relationship between the node and its parents in the graph. The key insight was that the graph structure encodes conditional independence relationships: each variable is conditionally independent of its non-descendants given its parents, so two variables with no edge between them can be made independent by conditioning on a suitable set of other variables. This dramatically reduces the number of parameters needed to specify a joint probability distribution and makes inference computationally feasible.

```python
"""
Bayesian network for medical diagnosis, illustrating Pearl's framework.

A Bayesian network encodes probabilistic relationships as a directed
acyclic graph (DAG), enabling tractable inference under uncertainty.
"""

import random

# Define the structure: a simple diagnostic Bayesian network
# Nodes: Pollution, Smoker, Cancer, XRay, Breathing
# This DAG encodes: Pollution and Smoking influence Cancer,
# Cancer influences both XRay results and Breathing difficulty


class BayesianNetwork:
    """
    Simplified Bayesian network demonstrating Pearl's key insight:
    conditional independence encoded in graph structure makes
    probabilistic inference tractable over many variables.
    """

    def __init__(self):
        # Conditional Probability Tables (CPTs)
        # P(Pollution = High)
        self.p_pollution = 0.1
        # P(Smoker = True)
        self.p_smoker = 0.3
        # P(Cancer | Pollution, Smoker); keys are (pollution, smoker)
        self.p_cancer = {
            (True, True): 0.05,
            (True, False): 0.02,
            (False, True): 0.03,
            (False, False): 0.001,
        }
        # P(XRay = Positive | Cancer)
        self.p_xray = {True: 0.90, False: 0.20}
        # P(Breathing difficulty | Cancer)
        self.p_breathing = {True: 0.65, False: 0.30}

    def sample(self):
        """
        Forward sampling: generate a single observation from the
        joint distribution by sampling each node given its parents.
        This ancestral sampling follows the topological order of
        the DAG, a direct consequence of Pearl's factorization.
        """
        pollution = random.random() < self.p_pollution
        smoker = random.random() < self.p_smoker
        cancer = random.random() < self.p_cancer[(pollution, smoker)]
        xray_pos = random.random() < self.p_xray[cancer]
        breathing = random.random() < self.p_breathing[cancer]
        return {
            'pollution': pollution,
            'smoker': smoker,
            'cancer': cancer,
            'xray_positive': xray_pos,
            'breathing_difficulty': breathing,
        }

    def estimate_probability(self, query, evidence, n_samples=100000):
        """
        Approximate inference via rejection sampling (used here for
        simplicity). Pearl's message-passing algorithms (belief
        propagation) provide exact inference on trees and efficient
        approximate inference on general graphs.
        """
        consistent = 0
        query_true = 0
        for _ in range(n_samples):
            s = self.sample()
            # Keep only samples that match all of the evidence
            if all(s[var] == val for var, val in evidence.items()):
                consistent += 1
                if s[query]:
                    query_true += 1
        if consistent == 0:
            return None  # no sample matched the evidence
        return query_true / consistent


bn = BayesianNetwork()

# Query: P(Cancer | XRay=Positive, Breathing=True)
# What is the probability of cancer given both symptoms?
prob = bn.estimate_probability(
    query='cancer',
    evidence={'xray_positive': True, 'breathing_difficulty': True}
)
print(f"P(Cancer | XRay+, Breathing+) ≈ {prob:.4f}")

# Query: P(Cancer | XRay=Positive)
# With only one symptom observed
prob2 = bn.estimate_probability(
    query='cancer',
    evidence={'xray_positive': True}
)
print(f"P(Cancer | XRay+) ≈ {prob2:.4f}")

# The network correctly updates beliefs as new evidence arrives
```

Why It Mattered

The impact of Bayesian networks on artificial intelligence was immediate and far-reaching. Before Pearl's work, AI systems that needed to reason under uncertainty were forced to choose between mathematical rigor (full joint probability distributions, which were computationally intractable for more than a handful of variables) and practical tractability (ad hoc methods like certainty factors, which lacked theoretical foundations). Bayesian networks offered both: a mathematically principled framework grounded in probability theory and a computational architecture that made inference feasible for real-world problems.
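To make the tractability argument concrete, the following sketch counts free parameters for the five binary variables of the diagnostic network shown earlier: an unrestricted joint table versus the factored form P(Pollution) P(Smoker) P(Cancer | Pollution, Smoker) P(XRay | Cancer) P(Breathing | Cancer). The `parents` dictionary and the helper names are illustrative assumptions, and the 30-variable comparison assumes, hypothetically, that no node has more than three parents.

```python
# Parent sets of the five-node diagnostic network (all variables binary).
parents = {
    "pollution": [],
    "smoker": [],
    "cancer": ["pollution", "smoker"],
    "xray_positive": ["cancer"],
    "breathing_difficulty": ["cancer"],
}


def full_joint_params(n_vars):
    """Free parameters in an unrestricted joint table over n binary variables."""
    return 2 ** n_vars - 1


def network_params(parent_map):
    """One free parameter per parent configuration of each binary node."""
    return sum(2 ** len(pa) for pa in parent_map.values())


joint = full_joint_params(len(parents))   # 2**5 - 1
factored = network_params(parents)        # 1 + 1 + 4 + 2 + 2

print(f"Full joint table: {joint} parameters")
print(f"Bayesian network: {factored} parameters")

# Hypothetical larger case: 30 binary variables, at most 3 parents each.
print(f"30 variables, full joint: {full_joint_params(30):,} parameters")
print(f"30 variables, <= 3 parents each: at most {30 * 2 ** 3} parameters")
```

The gap is modest for five variables but grows exponentially with the number of variables, while the factored count grows only linearly as long as the number of parents per node stays bounded.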

Pearl also developed efficient algorithms for inference in Bayesian networks. His message-passing algorithm, known as belief propagation, allowed exact probabilistic inference on tree-structured networks in time linear in the number of nodes. For more complex networks with loops, he and his students developed approximate inference methods, including loopy belief propagation, that worked well in practice even when exact inference was intractable. These algorithms became standard tools in machine learning and were later applied to problems far beyond their original scope — including error-correcting codes in telecommunications, computer vision, and natural language processing. The ideas behind Bayesian networks influenced an entire generation of AI researchers, including those working on the deep learning systems that dominate today's AI landscape.
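The message-passing idea can be sketched on the five-node diagnostic network from the earlier listing, which is a polytree. In this minimal illustration the Cancer node combines a "pi" message (causal support from its parents) with "lambda" messages (diagnostic support from observed children) and normalizes the product. The CPT values are copied from the article's example; the function names are assumptions made for this sketch, and it handles only this one node rather than implementing general belief propagation.

```python
# CPTs copied from the article's diagnostic network.
P_POLLUTION = 0.1
P_SMOKER = 0.3
P_CANCER = {(True, True): 0.05, (True, False): 0.02,
            (False, True): 0.03, (False, False): 0.001}
P_XRAY = {True: 0.90, False: 0.20}       # P(XRay+ | Cancer)
P_BREATHING = {True: 0.65, False: 0.30}  # P(Breathing+ | Cancer)


def bernoulli(p, value):
    """P(V = value) for a binary variable with P(V = True) = p."""
    return p if value else 1.0 - p


def pi_message():
    """Causal support for Cancer: sum the parents out of the CPT."""
    pi = {True: 0.0, False: 0.0}
    for pol in (True, False):
        for smk in (True, False):
            weight = bernoulli(P_POLLUTION, pol) * bernoulli(P_SMOKER, smk)
            for c in (True, False):
                pi[c] += weight * bernoulli(P_CANCER[(pol, smk)], c)
    return pi


def lambda_message(cpt, observed):
    """Diagnostic support from one observed child: P(child = observed | Cancer)."""
    return {c: bernoulli(cpt[c], observed) for c in (True, False)}


def cancer_belief(xray=None, breathing=None):
    """Combine pi and lambda messages at the Cancer node, then normalize."""
    belief = pi_message()
    for cpt, obs in ((P_XRAY, xray), (P_BREATHING, breathing)):
        if obs is not None:  # an unobserved child sends a uniform message
            lam = lambda_message(cpt, obs)
            belief = {c: belief[c] * lam[c] for c in belief}
    total = sum(belief.values())
    return {c: v / total for c, v in belief.items()}


print(f"P(Cancer) = {cancer_belief()[True]:.4f}")
print(f"P(Cancer | XRay+) = {cancer_belief(xray=True)[True]:.4f}")
print(f"P(Cancer | XRay+, Breathing+) = "
      f"{cancer_belief(xray=True, breathing=True)[True]:.4f}")
```

Because the computation is exact, its results should agree with the rejection-sampling estimates from the earlier listing up to Monte Carlo error, while touching each CPT only once.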

Medical diagnosis systems, speech recognition engines, spam filters, and financial risk models all adopted Bayesian network architectures. Microsoft's early work on decision-support systems used Bayesian networks. NASA used them for fault diagnosis in spacecraft systems. The influence extended into biology (modeling gene regulatory networks), ecology (modeling species interactions), forensic science (evaluating DNA evidence), and dozens of other fields. Any domain where reasoning under uncertainty was essential — which is to say, nearly every domain — found Bayesian networks useful. The framework Pearl created was not just a tool for AI; it was a tool for clear thinking about uncertain knowledge, and as such its applications were essentially unlimited.

Other Major Contributions

While Bayesian networks alone would have secured Pearl's place in the history of computer science, his most profound contribution came in the years after the 1988 book, when he turned his attention from probabilistic reasoning to an even more fundamental problem: causal reasoning.

The Do-Calculus and Causal Inference. The central question that drove Pearl from the 1990s onward was deceptively simple: how do we distinguish causation from correlation? Statistics, as it had been practiced for over a century, was fundamentally about associations — about patterns in observed data. Statisticians could say that smoking was associated with lung cancer, but the formal mathematical language of statistics had no way to express the claim that smoking causes lung cancer. The standard mantra — "correlation does not imply causation" — was true, but it was also a confession of impotence. Statistics could warn you not to confuse the two, but it could not tell you how to determine which was which.

Pearl developed a formal mathematical framework for causal reasoning that filled this gap. His key innovation was the do-operator, written as do(X = x), which represents an intervention — actively setting a variable to a particular value, as distinct from merely observing that it takes that value. The probability P(Y | do(X = x)) represents the causal effect of X on Y: what would happen to Y if we intervened to set X to x, regardless of the confounding factors that might create a spurious association between X and Y in observational data. Pearl's do-calculus provided a complete set of rules for determining when causal effects can be estimated from observational data, given a causal graph that encodes the assumed causal structure of the domain.
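The difference between seeing and doing can be illustrated with a small simulation. The sketch below assumes a hypothetical system in which a hidden factor Z influences both X and Y, while X has no effect on Y at all; the probabilities and function names are invented for illustration. Passing `do_x` forces X to a value, which mimics the do-operator by cutting the arrow from Z into X.

```python
import random


def simulate(n, do_x=None, seed=0):
    """
    Draw n samples from a hypothetical confounded system:
        Z -> X, Z -> Y, and no arrow from X to Y.
    If do_x is given, X is forced to that value (the do-operator),
    which cuts the Z -> X arrow; otherwise X follows Z as usual.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        z = rng.random() < 0.5
        if do_x is None:
            x = rng.random() < (0.8 if z else 0.2)
        else:
            x = do_x
        y = rng.random() < (0.6 if z else 0.1)  # Y ignores X entirely
        samples.append((x, y))
    return samples


def p_y_given_x(samples, x_value):
    """Fraction of samples with Y true among those with X == x_value."""
    ys = [y for x, y in samples if x == x_value]
    return sum(ys) / len(ys)


n = 100_000

# Seeing: condition on the value X happened to take.
observed = simulate(n)
seeing_gap = p_y_given_x(observed, True) - p_y_given_x(observed, False)

# Doing: force X in two separate simulated experiments.
doing_gap = (p_y_given_x(simulate(n, do_x=True, seed=1), True)
             - p_y_given_x(simulate(n, do_x=False, seed=2), False))

print(f"P(Y | X=1) - P(Y | X=0)         = {seeing_gap:+.3f}")
print(f"P(Y | do(X=1)) - P(Y | do(X=0)) = {doing_gap:+.3f}")
```

Under these assumed probabilities the observational contrast is large even though X does nothing to Y, whereas the interventional contrast stays close to zero.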

This was a revolution in scientific methodology. For the first time, researchers had a rigorous mathematical framework for reasoning about cause and effect, analyzing confounders, and determining whether causal conclusions could be drawn from non-experimental data. The implications were enormous for fields like epidemiology, economics, and social science, where randomized controlled experiments are often impossible or unethical. Pearl's framework gave researchers the tools to analyze observational data with causal rigor: to determine, for example, whether a particular medical treatment actually helps patients or merely appears to because of selection bias.

The Ladder of Causation. Pearl articulated a hierarchy of causal reasoning that he called the "Ladder of Causation," which distinguishes three levels of cognitive ability. The first rung is association — seeing patterns in data. ("Patients who take this drug tend to recover.") The second rung is intervention — predicting the effects of actions. ("If I give this drug to a new patient, will they recover?") The third rung is counterfactuals — imagining what would have happened under different circumstances. ("Would the patient have recovered if they had not taken the drug?") Pearl argued that most of machine learning, including deep learning, operates at the first rung — finding associations in data — and that achieving truly intelligent AI requires the ability to reason at all three levels. This framework has become a touchstone in debates about the limitations of current AI systems and the path toward more capable artificial intelligence.

"The Book of Why." In 2018, Pearl co-authored (with science journalist Dana Mackenzie) "The Book of Why: The New Science of Cause and Effect," which presented his ideas about causation to a general audience. The book traced the history of statistics' uneasy relationship with causation, explained Pearl's causal framework in accessible terms, and argued that the "causal revolution" was as significant for science as the development of probability theory or the invention of the randomized controlled trial. The book became a bestseller and brought Pearl's ideas to a vastly wider audience, influencing not just AI researchers but also practitioners in medicine, public policy, education, and the social sciences. It is one of those rare works that managed to communicate deep mathematical ideas to non-specialists without sacrificing intellectual honesty.

Structural Causal Models. Pearl formalized the concept of structural causal models (SCMs), which combine causal graphs with structural equations to provide a complete specification of a causal system. An SCM defines each variable as a function of its direct causes and an exogenous noise term, and the resulting framework supports all three levels of the Ladder of Causation: observational reasoning, interventional reasoning, and counterfactual reasoning. SCMs have become the standard formal framework for causal inference in computer science and are increasingly adopted in econometrics, epidemiology, and the social sciences. They also offer a common language in which established methods such as instrumental variables, difference-in-differences, and regression discontinuity designs can be expressed and their assumptions made explicit, connecting Pearl's work to the practical methodology used by researchers across many fields.
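The third rung, counterfactual reasoning, is usually computed in an SCM in three steps: abduction, action, and prediction. The sketch below walks through those steps for a deliberately tiny, hypothetical linear model; the structural equation Y := 2X + U_y and the observed values are assumptions chosen only to keep the arithmetic visible.

```python
# Hypothetical linear SCM (coefficients are illustrative assumptions):
#   X := U_x
#   Y := 2 * X + U_y
SLOPE = 2.0


def f_y(x, u_y):
    """Structural equation for Y."""
    return SLOPE * x + u_y


def counterfactual_y(x_obs, y_obs, x_new):
    """
    Three-step counterfactual computation:
      1. Abduction: recover the noise term consistent with the observation.
      2. Action: replace the equation for X with X := x_new.
      3. Prediction: recompute Y with the recovered noise held fixed.
    """
    u_y = y_obs - SLOPE * x_obs   # abduction
    x = x_new                     # action
    return f_y(x, u_y)            # prediction


# Observed unit: X = 1 and Y = 3, so its noise term is U_y = 1.
y_cf = counterfactual_y(x_obs=1.0, y_obs=3.0, x_new=0.0)
print(f"Observed Y = 3.0; had X been 0, Y would have been {y_cf}")
```

The counterfactual differs from an interventional prediction because the noise term is specific to the observed unit: the question is about this individual, not about the population average.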

Philosophy and Approach

Key Principles

Pearl's intellectual approach was characterized by a willingness to challenge deeply entrenched assumptions in both artificial intelligence and statistics. In AI, he challenged the dominance of logical reasoning by insisting that intelligent systems must handle uncertainty probabilistically. In statistics, he challenged the discipline's long-standing refusal to engage formally with causation by developing the mathematical tools to do so. In both cases, he was pushing against established orthodoxies — and in both cases, the orthodoxies eventually gave way.

His work was driven by a conviction that the right mathematical formalism can dissolve problems that seem intractable when stated informally. The confusion surrounding causation in statistics was, in Pearl's view, not a deep philosophical mystery but a consequence of lacking the right formal language. Once you had the do-operator and the do-calculus, questions that had been debated inconclusively for decades could be answered with mathematical precision. This faith in formalism — in the power of the right notation and the right axioms to clarify thinking — connects Pearl to the tradition of mathematical logic that runs from Alan Turing and John von Neumann through Claude Shannon and into modern computer science.

Pearl was also deeply influenced by his training in engineering. Unlike many theorists, he consistently insisted that his mathematical frameworks be useful in practice and that they lead to algorithms that can be implemented and applied to real problems. The Bayesian network was not just a mathematical abstraction; it came with efficient inference algorithms, software implementations, and clear guidelines for application. The do-calculus was not just a theoretical construct; it came with practical criteria for determining when causal effects are identifiable from data and algorithms for computing them. This engineering sensibility, the insistence that theory must serve practice, is one of the reasons Pearl's work has been so influential across disciplines.

In his philosophical writings, Pearl has argued that causal reasoning is not just a useful tool but a defining feature of human intelligence. Humans do not merely detect patterns; they build causal models of the world and use them to predict, intervene, and imagine alternatives. A child who understands that pushing a glass off the table will cause it to break is reasoning causally — predicting the consequences of an intervention based on a mental model of physical causation. Pearl believes that achieving human-level AI will require machines that can reason causally at all three levels of his Ladder of Causation, and that the current generation of deep learning systems, powerful as they are at pattern recognition, remain fundamentally limited because they operate primarily at the associational level. This perspective has made him an important voice in debates about the future direction of AI research, complementing the insights of John McCarthy, who envisioned AI systems capable of genuine reasoning about the world.

Legacy and Impact

Judea Pearl's contributions have reshaped multiple fields in ways that continue to deepen. In artificial intelligence, Bayesian networks remain one of the foundational frameworks for probabilistic reasoning, used in applications ranging from medical diagnosis to autonomous navigation. His work on causal inference has influenced the design of modern AI systems that must reason about interventions — from treatment recommendation engines in healthcare to policy evaluation systems in economics. The growing recognition that AI systems need to move beyond pattern recognition toward genuine causal understanding is, in large part, a consequence of Pearl's articulation of the Ladder of Causation and his demonstration that the mathematical tools for causal reasoning exist.

In statistics and the empirical sciences, Pearl's causal revolution has been transformative. The directed acyclic graph (DAG) has become a standard tool for clarifying assumptions in observational studies. Epidemiologists use causal diagrams to identify confounders and design analyses that yield valid causal conclusions. Economists have connected Pearl's framework to their own methods of causal identification. Social scientists, who for decades struggled with the limitations of regression analysis for causal questions, now have formal tools for determining when causal claims are justified and when they are not. The do-calculus and the associated theory of identifiability have provided a unified mathematical foundation for causal inference that transcends disciplinary boundaries.

Pearl's personal legacy extends beyond his scientific contributions. The murder of his son, journalist Daniel Pearl, by terrorists in Pakistan in 2002 was a tragedy that shook the world. In response, Judea and his wife Ruth founded the Daniel Pearl Foundation, dedicated to promoting cross-cultural understanding, journalism, and the values Daniel embodied. Judea Pearl has spoken and written eloquently about dialogue, tolerance, and the importance of human connection across cultural and ideological divides. The foundation's work — including the Daniel Pearl World Music Days, celebrated annually in dozens of countries — stands as a testament to the belief that even in the face of unspeakable loss, it is possible to respond with constructive action and enduring hope.

At UCLA, Pearl has mentored generations of students who have gone on to become leaders in AI, statistics, and causal inference research. His Cognitive Systems Laboratory has produced foundational work on Bayesian networks, causal models, and the philosophy of causation. Researchers like Elias Bareinboim, Jin Tian, and Ilya Shpitser have extended Pearl's framework in important new directions, including transportability of causal knowledge across domains, causal inference with missing data, and mediation analysis.

Pearl's influence on how developers build intelligent systems is also evident in the tools and libraries available today. Python libraries like pgmpy, DoWhy, and CausalNex implement Pearl's theoretical frameworks and make causal inference accessible to practitioners. These tools, combined with modern development environments, allow data scientists to construct causal models, test assumptions, and estimate causal effects with a rigor that was impossible before Pearl's work. The integration of causal reasoning into machine learning pipelines represents one of the most promising frontiers in AI research — a frontier that Pearl's work made conceivable.

The Turing Award citation called Pearl's work "fundamental contributions to artificial intelligence." This is accurate as far as it goes, but it understates the scope of his impact. Pearl did not merely contribute to AI; he created new mathematical foundations for reasoning about uncertainty and causation that have transformed how science itself is conducted. In an era when data-driven decision-making shapes everything from medical treatment to public policy to the algorithms that curate our digital lives, the ability to distinguish causation from correlation, and to ask not just "what happened?" but "why?", is perhaps the most important intellectual capability a society can possess. Judea Pearl gave us the formal tools to exercise that capability with mathematical precision.

```python
"""
Pearl's do-calculus: the conceptual difference between
observing and intervening, the heart of causal inference.
"""

# Illustrating the key distinction Pearl formalized:
#   P(Y | X)     : observational probability (seeing)
#   P(Y | do(X)) : interventional probability (doing)

# Classic example: does carrying a lighter cause lung cancer?
# Observational toy data (confounded by smoking);
# each row is (carries_lighter, smoker, lung_cancer)
observations = [
    (True, True, True),
    (True, True, False),
    (True, True, True),
    (True, False, False),
    (False, False, False),
    (False, False, False),
    (False, True, True),
    (False, False, False),
    (True, True, True),
    (False, False, False),
]


def p_cancer_given(lighter, smoker=None):
    """P(Cancer | Lighter[, Smoker]) estimated by counting matching rows."""
    rows = [d for d in observations
            if d[0] == lighter and (smoker is None or d[1] == smoker)]
    return sum(d[2] for d in rows) / len(rows) if rows else 0.0


def p_cancer_do(lighter):
    """
    Backdoor adjustment for the confounder Z (smoking):
    P(Y | do(X)) = sum over z of P(Y | X, Z=z) * P(Z=z)
    """
    p_smoker = sum(d[1] for d in observations) / len(observations)
    return (p_cancer_given(lighter, True) * p_smoker
            + p_cancer_given(lighter, False) * (1 - p_smoker))


# Naive comparison: condition on what was merely observed
naive_gap = p_cancer_given(True) - p_cancer_given(False)
print(f"P(Cancer | Lighter=True)  = {p_cancer_given(True):.2f}")
print(f"P(Cancer | Lighter=False) = {p_cancer_given(False):.2f}")
print(f"Naive difference          = {naive_gap:+.2f}  (misleading)")

# Adjusted comparison: a causal effect is a contrast between two
# interventions, so both arms are computed and compared
do_gap = p_cancer_do(True) - p_cancer_do(False)
print(f"P(Cancer | do(Lighter=True))  ≈ {p_cancer_do(True):.2f}")
print(f"P(Cancer | do(Lighter=False)) ≈ {p_cancer_do(False):.2f}")
print(f"Adjusted difference           ≈ {do_gap:+.2f}")

print("After adjusting for smoking, the strong positive association vanishes;")
print("the remaining gap is an artifact of the tiny ten-row sample.")
print("\nThis is Pearl's fundamental insight: intervening (do)")
print("is mathematically different from observing (see).")
```

Key Facts

- Born: September 4, 1936, Tel Aviv, British Mandate of Palestine (now Israel)

- Known for: Bayesian networks, do-calculus, causal inference, structural causal models, belief propagation algorithm, Ladder of Causation

- Key works: "Probabilistic Reasoning in Intelligent Systems" (1988), "Causality: Models, Reasoning, and Inference" (2000, 2nd ed. 2009), "The Book of Why" (2018)

- Awards: Turing Award (2011), IJCAI Award for Research Excellence (1999), Benjamin Franklin Medal in Computers and Cognitive Science (2008), Lakatos Award (2001), Rumelhart Prize (2011)

- Education: B.Sc. in Electrical Engineering from the Technion (1960), M.S. in Electrical Engineering from the Newark College of Engineering (1961), M.S. in Physics from Rutgers University, Ph.D. in Electrical Engineering from the Polytechnic Institute of Brooklyn (1965)

- Career: Professor of Computer Science at UCLA (1970–present), Director of the Cognitive Systems Laboratory

- Family: Father of journalist Daniel Pearl (1963–2002); co-founded the Daniel Pearl Foundation with wife Ruth Pearl

- Key students: Elias Bareinboim, Jin Tian, Ilya Shpitser, Thomas Verma, Dan Geiger

Frequently Asked Questions

What are Bayesian networks and why did Judea Pearl create them?

Bayesian networks are a mathematical framework that combines graph theory with probability theory to represent and reason about uncertain knowledge. Pearl developed them in the 1980s to solve a fundamental problem in artificial intelligence: existing AI systems used deterministic logic and could not handle the uncertainty inherent in real-world reasoning. A Bayesian network is a directed acyclic graph where nodes represent variables and edges represent probabilistic dependencies. Each node has a conditional probability table specifying its relationship to its parent nodes. The key innovation was that the graph structure encodes conditional independence relationships, which dramatically reduces the computational complexity of probabilistic inference. Pearl also developed efficient algorithms, including belief propagation, for computing probabilities in these networks. Bayesian networks became foundational in AI, machine learning, medical diagnosis, speech recognition, and dozens of other fields where reasoning under uncertainty is essential.

What is the do-calculus and why is it important for science?

The do-calculus is a set of mathematical rules developed by Pearl for computing the effects of interventions from observational data. At its core is the do-operator, written as do(X = x), which distinguishes between observing a variable (seeing that X happens to equal x) and intervening to set it (forcing X to equal x). This distinction is crucial because observational correlations can be misleading due to confounders — hidden common causes that create spurious associations. Pearl's do-calculus provides formal rules for determining when causal effects can be identified from non-experimental data and how to compute them. This was revolutionary for fields like epidemiology, economics, and social science, where randomized experiments are often impossible or unethical. The do-calculus gave researchers rigorous mathematical tools for drawing causal conclusions from observational studies, transforming how empirical science is conducted.

How has Judea Pearl's work influenced modern artificial intelligence?

Pearl's influence on AI operates at two levels. At the practical level, Bayesian networks and their descendants are used in countless AI applications — from medical diagnosis and fraud detection to natural language processing and robotics. The message-passing algorithms Pearl developed for inference in graphical models inspired techniques used in error-correcting codes, computer vision, and modern probabilistic programming languages. At the conceptual level, Pearl's Ladder of Causation has framed one of the most important ongoing debates in AI: whether current deep learning systems, which primarily operate at the level of pattern recognition (association), can achieve genuine intelligence without the ability to reason causally about interventions and counterfactuals. Many leading AI researchers now argue that integrating causal reasoning into machine learning is essential for building systems that can generalize robustly, explain their decisions, and operate safely in novel environments — a research direction that flows directly from Pearl's foundational work.