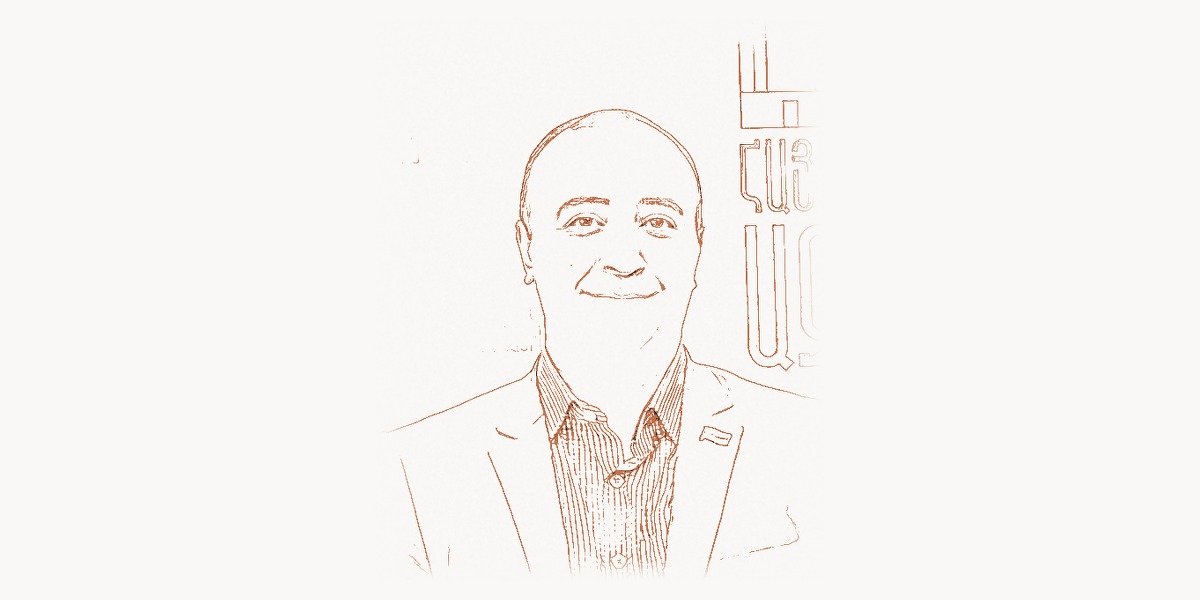

In 2014, a then little-known researcher from Armenia co-authored a paper, later presented at the International Conference on Learning Representations (ICLR 2015), that would alter the trajectory of deep learning. Karen Simonyan, working alongside Andrew Zisserman at the University of Oxford, demonstrated that simply stacking more convolutional layers with tiny 3×3 filters could outperform the shallower, large-filter architectures that then dominated computer vision. The resulting model, VGGNet, didn’t just place at the top of competitions; it changed how neural networks were designed. Within months, deep learning practitioners were studying VGGNet’s deceptively simple blueprint, and its influence on modern AI architectures remains visible to this day.

Early Life and Education

Karen Simonyan was born in Yerevan, Armenia, during the final years of the Soviet Union. Growing up in a country with a strong mathematical tradition, Simonyan showed exceptional aptitude for analytical thinking from an early age. Armenia, despite its modest size, has historically produced world-class mathematicians and scientists, and Simonyan’s upbringing in this intellectually rich environment shaped his future career path.

Simonyan pursued his undergraduate studies in applied mathematics and informatics at Yerevan State University, one of the oldest and most prestigious institutions in the Caucasus region. The rigorous mathematical foundation he received there — spanning linear algebra, probability theory, and optimization — would later prove essential when he turned his attention to the mathematical underpinnings of deep neural networks.

After completing his undergraduate degree, Simonyan moved to the United Kingdom to pursue graduate studies. He enrolled at the University of Oxford, joining the Visual Geometry Group (VGG) — the very lab whose name would become synonymous with his most famous work. Under the supervision of Professor Andrew Zisserman, a leading figure in computer vision, Simonyan began his doctoral research. His PhD thesis focused on deep learning methods for recognizing faces and objects in images and videos, laying the groundwork for the breakthroughs that would follow. Oxford’s environment, combining theoretical rigor with practical experimentation, proved to be the ideal setting for Simonyan’s transition from traditional computer vision techniques to deep learning approaches.

Career and the VGGNet Revolution

When Simonyan began his research career, the deep learning revolution had just started. Yoshua Bengio and other pioneers had spent decades arguing that deep neural networks could achieve remarkable results if properly trained. AlexNet, the 2012 ImageNet competition winner designed by Alex Krizhevsky under Geoffrey Hinton’s guidance, had proven that convolutional neural networks (CNNs) could crush traditional computer vision approaches. But a critical question remained: what was the optimal architecture for these networks?

The leading networks of the time used large filters in their first layers (11×11 in AlexNet, 7×7 in its 2013 successor ZFNet), on the assumption that capturing wide spatial context early was the key to better performance. Simonyan and Zisserman took the opposite approach. Their insight was elegantly simple: replace large filters with stacks of small 3×3 convolutional layers. This single architectural decision became the defining innovation of VGGNet.

Technical Innovation

The VGGNet architecture, formally described in the paper “Very Deep Convolutional Networks for Large-Scale Image Recognition,” introduced several principles that became standard in deep learning. The core idea was that two stacked 3×3 convolutional layers have the same effective receptive field as a single 5×5 layer, while three stacked 3×3 layers match a 7×7 layer. But the stacked approach uses fewer parameters and introduces more non-linear activations (ReLU functions between layers), making the network both more expressive and more efficient.
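The receptive-field and parameter claims above can be checked with a little arithmetic. The following is a minimal sketch in plain Python, not code from the paper. The helper names `stacked_receptive_field` and `conv_weight_count` are hypothetical, and the sketch assumes stride-1 convolutions with the same number of channels `C` entering and leaving every layer, with biases ignored.

```python
def stacked_receptive_field(num_layers, kernel_size=3):
    """Receptive field of `num_layers` stacked stride-1 convolutions (no pooling).

    Each extra layer widens the field by (kernel_size - 1) pixels.
    """
    return 1 + num_layers * (kernel_size - 1)


def conv_weight_count(kernel_size, channels, num_layers=1):
    """Weights (biases ignored) in `num_layers` convolutions mapping channels -> channels."""
    return num_layers * kernel_size * kernel_size * channels * channels


C = 256  # assumed channel count, chosen only for illustration

for num_small, big_kernel in [(2, 5), (3, 7)]:
    field = stacked_receptive_field(num_small)
    small = conv_weight_count(3, C, num_layers=num_small)
    large = conv_weight_count(big_kernel, C)
    print(
        f"{num_small} x (3x3): receptive field {field}x{field}, {small:,} weights | "
        f"1 x ({big_kernel}x{big_kernel}): {large:,} weights"
    )
```

Under these assumptions, three 3×3 layers need 27·C² weights against 49·C² for a single 7×7 layer, roughly 45% fewer, while passing the signal through three non-linearities instead of one.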

The VGG-16 and VGG-19 variants (with 16 and 19 weight layers respectively) achieved second place in the classification track and first place in the localization track at the 2014 ImageNet Large Scale Visual Recognition Challenge (ILSVRC). While GoogLeNet technically won the classification task, VGGNet’s simplicity made it far more influential in practice — researchers could easily understand, modify, and extend it.

Here is a simplified representation of the VGG-16 architecture that illustrates the elegant stacking pattern Simonyan designed:

```python
import torch.nn as nn


class VGG16(nn.Module):
    def __init__(self, num_classes=1000):
        super(VGG16, self).__init__()
        self.features = nn.Sequential(
            # Block 1: 2x conv3-64
            nn.Conv2d(3, 64, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 2: 2x conv3-128
            nn.Conv2d(64, 128, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(128, 128, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 3: 3x conv3-256
            nn.Conv2d(128, 256, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 4: 3x conv3-512
            nn.Conv2d(256, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 5: 3x conv3-512
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
        )
        self.classifier = nn.Sequential(
            nn.Linear(512 * 7 * 7, 4096),
            nn.ReLU(inplace=True),
            nn.Dropout(),
            nn.Linear(4096, 4096),
            nn.ReLU(inplace=True),
            nn.Dropout(),
            nn.Linear(4096, num_classes),
        )

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size(0), -1)
        x = self.classifier(x)
        return x
```

What makes this code remarkable is its regularity. Each convolutional block follows the same pattern: two or three 3×3 convolutions, each followed by a ReLU activation, then a 2×2 max-pool. The number of filters doubles after each of the first three pooling operations (64 → 128 → 256 → 512) and then stays at 512 for the final block, creating a natural hierarchy of feature extraction. This systematic approach stood in stark contrast to the ad-hoc architectures common at the time and still shapes how practitioners approach deep learning model design today.
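To see where the `512 * 7 * 7` input size of the classifier comes from, the block pattern can be traced by hand. The sketch below is plain Python and only does the bookkeeping. `VGG16_BLOCKS`, `trace_feature_shapes`, and `count_weight_layers` are hypothetical names introduced for illustration, and it assumes the 224×224 RGB input that the listing above implicitly expects.

```python
# (number of 3x3 conv layers, output channels) for each block in the listing above
VGG16_BLOCKS = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]


def trace_feature_shapes(input_size=224, blocks=VGG16_BLOCKS):
    """Return the (channels, height, width) of the feature map after each block."""
    shapes = []
    size = input_size
    for _num_convs, out_channels in blocks:
        # 3x3 convolutions with padding=1 and stride=1 keep height and width unchanged;
        # only the 2x2 max-pool with stride 2 at the end of the block halves them.
        size //= 2
        shapes.append((out_channels, size, size))
    return shapes


def count_weight_layers(blocks=VGG16_BLOCKS, num_fc_layers=3):
    """Convolutional plus fully connected layers: the '16' in VGG-16."""
    return sum(num_convs for num_convs, _ in blocks) + num_fc_layers


for index, (channels, height, width) in enumerate(trace_feature_shapes(), start=1):
    print(f"after block {index}: {channels} x {height} x {width}")

final_channels, final_h, final_w = trace_feature_shapes()[-1]
print("flattened features:", final_channels * final_h * final_w)
print("weight layers:", count_weight_layers())
```

Five halvings take 224 down to 7, so the last feature map is 512 × 7 × 7 = 25,088 values per image. Feeding a different input resolution into the listing above would break the first `nn.Linear` layer unless the feature map is resized first.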

Why It Mattered

VGGNet’s impact extended far beyond its ImageNet performance for several interconnected reasons. First, it demonstrated that network depth — not filter complexity — was the critical factor in achieving high accuracy. This principle fundamentally shifted how the entire deep learning community thought about architecture design and directly paved the way for even deeper networks like ResNet.

Second, VGGNet became the de facto backbone for transfer learning. Because the model was both powerful and architecturally transparent, researchers across every domain — from medical imaging to satellite analysis to autonomous driving — adopted pre-trained VGG features as starting points for their own models. Before the era of massive foundation models, loading pre-trained VGG weights was often the first step in any computer vision project. The idea that a model trained on ImageNet could serve as a universal feature extractor was transformative, and VGGNet was the model that made this concept practical.

Third, Simonyan and Zisserman released the pre-trained weights publicly. At a time when trained models were not routinely shared, this openness accelerated the adoption of deep learning across academia and industry alike. Much like how Andrej Karpathy later championed accessible AI education, VGGNet’s open availability democratized access to state-of-the-art computer vision. Its pre-trained features became a building block for countless subsequent innovations, including work on generative models.

Other Major Contributions

While VGGNet is Simonyan’s most widely cited work, his contributions to deep learning span several other areas. During his doctoral work, Simonyan also explored face recognition, co-developing face verification methods at Oxford that contributed to the shift toward learned representations in biometric recognition.

Simonyan also made pioneering contributions to video understanding. His two-stream architecture for action recognition — which combined a spatial stream (processing individual video frames) with a temporal stream (processing optical flow fields) — became a foundational approach for understanding human actions in video. This dual-pathway design influenced an entire generation of video analysis research and found practical applications in surveillance, sports analytics, and content moderation.

The concept behind the two-stream architecture can be understood through this simplified implementation:

```python
import torch
import torch.nn as nn


class TwoStreamNetwork(nn.Module):
    """
    Karen Simonyan's two-stream architecture concept
    for video action recognition.

    Spatial stream: appearance from single RGB frame
    Temporal stream: motion from stacked optical flow
    """

    def __init__(self, num_classes=101):
        super(TwoStreamNetwork, self).__init__()
        # Spatial stream processes single RGB frames
        self.spatial_stream = nn.Sequential(
            nn.Conv2d(3, 96, kernel_size=7, stride=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(3, stride=2),
            nn.Conv2d(96, 256, kernel_size=5, stride=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(3, stride=2),
        )
        # Temporal stream processes stacked optical flow
        # 20 channels = 10 frames x 2 (horizontal + vertical flow)
        self.temporal_stream = nn.Sequential(
            nn.Conv2d(20, 96, kernel_size=7, stride=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(3, stride=2),
            nn.Conv2d(96, 256, kernel_size=5, stride=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(3, stride=2),
        )
        self.fusion = nn.Linear(512, num_classes)

    def forward(self, rgb_frame, optical_flow):
        spatial_feats = self.spatial_stream(rgb_frame)
        temporal_feats = self.temporal_stream(optical_flow)
        spatial_feats = spatial_feats.mean(dim=[2, 3])
        temporal_feats = temporal_feats.mean(dim=[2, 3])
        combined = torch.cat([spatial_feats, temporal_feats], dim=1)
        return self.fusion(combined)
```
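The listing above fuses the two streams by concatenating pooled features, which is a simplification. The original two-stream paper combined the streams late, at the level of class scores, and averaging the softmax scores was one of the fusion methods it reported. The sketch below illustrates that averaging in plain Python. The function names and the example logits are hypothetical, chosen only to show a case where the motion stream changes the decision.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of class scores."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def flow_input_channels(num_flow_frames):
    """Each optical flow field contributes a horizontal and a vertical channel."""
    return 2 * num_flow_frames


def late_fusion_by_averaging(spatial_logits, temporal_logits):
    """Average the per-class softmax scores of the two streams."""
    spatial_probs = softmax(spatial_logits)
    temporal_probs = softmax(temporal_logits)
    return [(s + t) / 2 for s, t in zip(spatial_probs, temporal_probs)]


# Hypothetical scores for three action classes (illustrative values only)
spatial_logits = [2.0, 1.0, 0.1]   # appearance alone favours class 0
temporal_logits = [0.5, 2.5, 0.2]  # motion alone favours class 1

fused = late_fusion_by_averaging(spatial_logits, temporal_logits)
predicted_class = max(range(len(fused)), key=lambda index: fused[index])

print("temporal stream input channels:", flow_input_channels(10))
print("fused class scores:", [round(score, 3) for score in fused])
print("predicted class:", predicted_class)
```

In this made-up example the appearance stream alone would pick class 0, but the temporal stream is confident enough about class 1 to change the fused prediction, which is the complementary behaviour the architecture is built around.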

After completing his doctorate at Oxford, Simonyan joined DeepMind in 2014, the London-based AI research lab founded by Demis Hassabis, Shane Legg, and Mustafa Suleyman. There he broadened his focus from computer vision to generative modeling, reinforcement learning, and large-scale language and multimodal models, contributing to research on neural network scalability, model architecture, and the development of advanced AI systems.

At DeepMind, Simonyan co-authored a wide range of research, including WaveNet, AlphaGo Zero, BigGAN, and the Flamingo visual language model. His experience in designing efficient visual architectures informed the multimodal direction of this work, bridging the gap between vision and language understanding. The Transformer architecture, introduced by Ashish Vaswani and his co-authors, and Simonyan’s own deep vision expertise converged in this new generation of multimodal AI. He left DeepMind in 2022 to co-found Inflection AI and moved to Microsoft AI in 2024.

Philosophy and Approach

Karen Simonyan’s research philosophy stands out for its emphasis on simplicity, reproducibility, and principled experimentation. In a field often driven by complexity and novelty for its own sake, Simonyan has consistently demonstrated that elegant, straightforward solutions can outperform elaborate designs. This philosophy is evident in everything from VGGNet’s uniform architecture to his systematic approach to ablation studies.

His working style reflects a deep connection between theoretical understanding and practical validation. Rather than proposing architectures based on intuition alone, Simonyan tests each design choice through controlled experiments, isolating variables to understand their individual contributions. This rigor has made his work not only impactful but trustworthy: other researchers can build upon his findings with confidence.

Key Principles

- Depth over width: Simonyan and Zisserman showed that adding more layers with small filters is more effective than using fewer layers with large filters. This principle, that depth drives representational power, became a cornerstone of modern deep learning and influenced later architectures such as ResNet, DenseNet, and EfficientNet.

- Architectural uniformity: Rather than mixing different filter sizes and complex branching paths, VGGNet used identical 3×3 convolutions throughout. This uniformity made the network easier to analyze, debug, optimize, and port to new hardware — a lesson that resonates with software engineering principles championed by figures like Brian Kernighan in the programming world.

- Open science and reproducibility: By releasing pre-trained weights and providing detailed architectural specifications, Simonyan enabled the global research community to build on his work. This commitment to openness accelerated the pace of deep learning innovation worldwide.

- Systematic ablation: Every design choice in VGGNet was validated through careful ablation studies — removing or modifying one component at a time to measure its impact. This methodology set a standard for how deep learning research should be conducted and reported.

- Transfer learning as first-class design goal: Simonyan designed VGGNet not just for ImageNet classification, but with the understanding that learned features should transfer to new tasks. This forward-thinking approach helped establish transfer learning as a fundamental technique in modern AI.

- Complementary information streams: His two-stream architecture for video demonstrated that combining different representations of the same data (spatial appearance and temporal motion) yields stronger results than either alone — a principle that now underpins many multimodal AI systems.

Legacy and Impact

Karen Simonyan’s influence on the field of deep learning is difficult to overstate. The original VGGNet paper has been cited over 100,000 times, making it one of the most cited papers in the history of computer science. More importantly, the principles it established continue to guide how researchers and engineers design neural networks today.

VGGNet’s impact rippled through the entire deep learning ecosystem. The ResNet architecture, which won ILSVRC 2015 with an astonishing 152 layers, was built directly on the insights that Simonyan pioneered — particularly the finding that deeper networks, when properly constructed, yield better results. Without VGGNet’s proof that depth matters, the confidence to push to hundreds of layers might not have materialized so quickly.

In the domain of transfer learning, VGGNet served as a standard feature extractor for years. Medical imaging researchers used VGG features in models for analyzing X-rays and MRIs. Remote sensing scientists applied them to satellite imagery. Neural style transfer, introduced by Gatys and colleagues, relied on VGG features to separate an image’s content from its style. The model’s versatility as a feature backbone helped drive an explosion of applied deep learning across scientific and industrial domains.

Simonyan’s two-stream architecture similarly defined the field of video understanding for half a decade. Before Transformer-based video models became common, virtually every competitive action recognition system incorporated some variant of Simonyan’s dual-pathway design. His insight that motion and appearance require separate processing streams anticipated many of the multimodal architectures that power today’s AI systems.

At DeepMind, Simonyan’s contributions extended into the era of foundation models. His work on large-scale systems reflects a consistent thread throughout his career: understanding how to scale neural architectures effectively while maintaining principled design. From the 16 layers of VGG-16 to the billions of parameters in modern language models, the same questions of depth, width, and efficient computation that Simonyan explored continue to drive progress. The pioneering work of researchers like Jakob Uszkoreit, who co-created the Transformer, combined with vision expertise from researchers like Simonyan, has led to the multimodal AI systems we see today.

Beyond his technical contributions, Simonyan represents a broader story about the global nature of AI research. An Armenian-born scientist who found his intellectual home at Oxford and then DeepMind, his career exemplifies how talent and ideas flow across borders to drive technological progress. In a field where collaboration and openness are essential, Simonyan’s willingness to share his work freely has created lasting value for the entire research community.

Key Facts

- Full name: Karen Simonyan

- Birthplace: Yerevan, Armenia

- Education: BSc from Yerevan State University; PhD from the University of Oxford (Visual Geometry Group)

- Best known for: Co-creating VGGNet (VGG-16 and VGG-19) deep convolutional neural network architectures

- Key paper: “Very Deep Convolutional Networks for Large-Scale Image Recognition” (2014), with over 100,000 citations

- Other notable work: Two-stream convolutional networks for action recognition in video; DeepMind research including WaveNet, AlphaGo Zero, BigGAN, and Flamingo

- PhD supervisor: Andrew Zisserman, a pioneer in computer vision

- Affiliations: DeepMind (2014 to 2022), Inflection AI (co-founder, 2022), Microsoft AI (from 2024)

- Core innovation: Proving that stacking small 3×3 convolutional filters with increased depth outperforms larger, shallower architectures

- Impact on transfer learning: VGGNet pre-trained weights were among the most widely used feature extractors in deep learning research before the Transformer era

FAQ

What is VGGNet and why was it so important?

VGGNet is a family of deep convolutional neural network architectures developed by Karen Simonyan and Andrew Zisserman at the University of Oxford in 2014. The two most famous variants are VGG-16 (16 weight layers) and VGG-19 (19 weight layers). VGGNet’s importance lies in its demonstration that network depth — achieved through simple, uniform stacking of 3×3 convolutional layers — is the most critical factor for high accuracy in image recognition. Its clean, repeatable architecture made it extremely popular as a feature extractor for transfer learning, and it influenced virtually every subsequent deep learning architecture including ResNet, Inception, and modern vision Transformers.

How does VGGNet compare to modern deep learning architectures?

While VGGNet is no longer state-of-the-art in terms of raw accuracy or efficiency, its foundational principles remain deeply embedded in modern architectures. VGG-16 has approximately 138 million parameters (VGG-19 has about 144 million), most of them in the three fully connected layers; that was large by 2014 standards but is modest compared to today’s billion-parameter models. Later architectures such as ResNet and EfficientNet achieve higher accuracy with far fewer parameters thanks to techniques like skip connections, global average pooling in place of large fully connected layers, and neural architecture search, while Vision Transformers replace convolutions with attention. However, the core insight that Simonyan and Zisserman established, that depth and small filters are preferable to shallow networks with large filters, continues to be a guiding principle in architecture design.
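The 138 million figure can be reproduced by counting weights and biases layer by layer. The sketch below does this in plain Python for the VGG-16 layout described earlier in this article. The configuration lists and function names are hypothetical helpers, and the count assumes a 224×224 input and 1,000 output classes.

```python
# Output channels of each 3x3 convolution; "M" marks a 2x2 max-pool (no parameters)
VGG16_CONV_CONFIG = [
    64, 64, "M",
    128, 128, "M",
    256, 256, 256, "M",
    512, 512, 512, "M",
    512, 512, 512, "M",
]

# (inputs, outputs) of the three fully connected layers
VGG16_FC_CONFIG = [(512 * 7 * 7, 4096), (4096, 4096), (4096, 1000)]


def count_conv_params(config, in_channels=3, kernel_size=3):
    """Weights plus biases of every convolution in the configuration."""
    total = 0
    for entry in config:
        if entry == "M":
            continue  # pooling layers have nothing to learn
        total += kernel_size * kernel_size * in_channels * entry + entry
        in_channels = entry
    return total


def count_fc_params(layers):
    """Weights plus biases of every fully connected layer."""
    return sum(inputs * outputs + outputs for inputs, outputs in layers)


conv_params = count_conv_params(VGG16_CONV_CONFIG)
fc_params = count_fc_params(VGG16_FC_CONFIG)
total_params = conv_params + fc_params

print(f"convolutional layers:   {conv_params:,}")
print(f"fully connected layers: {fc_params:,}")
print(f"total:                  {total_params:,}")
print(f"share in FC layers:     {fc_params / total_params:.0%}")
```

The breakdown shows that the thirteen convolutional layers account for under 15 million parameters, while the first fully connected layer alone holds over 100 million. That imbalance is one reason later architectures dropped large fully connected heads.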

What is Karen Simonyan’s two-stream architecture for video?

The two-stream architecture, published by Simonyan and Zisserman in 2014, was a breakthrough approach to recognizing human actions in video. It processes video through two parallel convolutional networks: a spatial stream that analyzes individual RGB frames to capture appearance information (what objects look like), and a temporal stream that processes stacked optical flow fields to capture motion information (how objects move). The outputs of both streams are then combined to produce the final action classification. This approach was groundbreaking because it showed that motion and appearance provide complementary information that is best processed separately before fusion, a principle that influenced the design of many subsequent multimodal systems.

What has Karen Simonyan contributed to research at DeepMind?

After joining DeepMind following his doctorate at Oxford, Simonyan moved from pure computer vision to broader AI research, including generative modeling, reinforcement learning, and large-scale language and multimodal models. He became a senior researcher contributing to the architecture and training of DeepMind’s AI systems, with co-authored work including WaveNet, AlphaGo Zero, BigGAN, and Flamingo. His expertise in visual representation learning proved particularly valuable as AI moved toward multimodal systems that must understand both images and text. Throughout, he continued to apply the same methodical experimentation and architectural clarity that made VGGNet so influential.