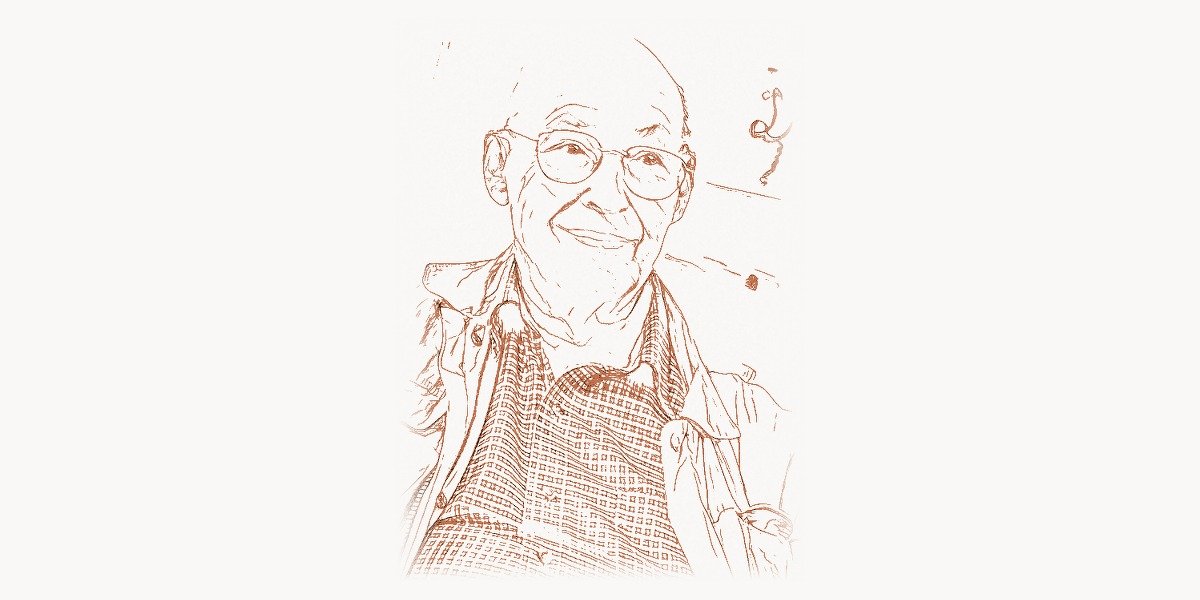

In the summer of 1956, a 28-year-old mathematician arrived at a workshop at Dartmouth College in Hanover, New Hampshire, and helped launch one of the most ambitious scientific projects in history: the attempt to build machines that think. The workshop, organized by John McCarthy, Claude Shannon, Nathaniel Rochester, and Marvin Minsky, lasted about two months and produced no breakthroughs. But it gave the field its name, artificial intelligence, and it established the intellectual agenda that would occupy Minsky for the next sixty years. He devoted his entire career to a single question: what is the mind, and how can we build one? He co-founded the MIT Artificial Intelligence Laboratory, invented the confocal scanning microscope, co-authored a book that is widely blamed for stalling neural network research for more than a decade, wrote a philosophical masterwork about how human cognition works, co-developed one of the first head-mounted graphical displays, built robotic hands and visual systems, won the Turing Award in 1969, and came to be widely regarded as one of the fathers of artificial intelligence. His work laid intellectual foundations for everything from expert systems to modern deep learning, and his ideas about how to decompose intelligence into simpler mechanisms remain strikingly relevant in the age of large language models.

Early Life and Education

Marvin Lee Minsky was born on August 9, 1927, in New York City. His father, Henry Minsky, was an eye surgeon; his mother, Fannie, was an activist. The family was Jewish and intellectually oriented — dinner conversations ranged across science, philosophy, and the arts. Minsky attended the Ethical Culture Fieldston School in the Bronx, a progressive institution that emphasized critical thinking over rote learning, and later the Bronx High School of Science, which has produced more Nobel laureates than most countries.

After serving in the U.S. Navy from 1944 to 1945, Minsky enrolled at Harvard University, where he studied mathematics, physics, and music. He was an accomplished pianist who seriously considered a career in music — a detail that mattered, because his lifelong interest in how the brain processes music informed his later theories of cognition. At Harvard, he encountered the emerging ideas about computation and logic that Alan Turing and John von Neumann had developed in the previous decade. Turing’s 1950 paper “Computing Machinery and Intelligence” — which posed the question “Can machines think?” — struck Minsky with the force of revelation. Here was a question that combined his interests in mathematics, psychology, philosophy, and engineering into a single grand problem.

Minsky received his BA in mathematics from Harvard in 1950 and immediately moved to Princeton University for his PhD, also in mathematics. At Princeton, he worked with Albert W. Tucker (known for his contributions to game theory and optimization) and encountered the intellectual ferment around cybernetics, information theory, and neural modeling. His doctoral dissertation, completed in 1954, was titled “Theory of Neural-Analog Reinforcement Systems and Its Application to the Brain-Model Problem.” In it, Minsky presented a mathematical analysis of how networks of neuron-like elements could learn — work that foreshadowed the reinforcement learning research that would become central to AI sixty years later.

But even before his PhD, Minsky had already built something extraordinary. In 1951, as a graduate student, he and fellow graduate student Dean Edmonds constructed the SNARC (Stochastic Neural Analog Reinforcement Calculator), a machine made of 40 artificial neurons built from vacuum tubes, motors, and clutches. The SNARC simulated a rat learning its way through a maze by trial and error, adjusting the strengths of its connections based on success and failure. It is widely regarded as the first hardware neural network learning machine, predating the perceptron by several years and demonstrating that machines could learn from experience, the fundamental premise of all modern machine learning.
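The trial-and-error idea can be sketched in a few lines of Python. This is not a model of the actual SNARC hardware; it is a minimal illustration of reinforcement by success, assuming a hypothetical three-junction maze (`CORRECT_PATH`) and made-up names such as `run_trial` and `train`. Each junction keeps a connection strength per turn, turns are chosen with probability proportional to strength, and only the choices made on a successful run are strengthened.

```python
import random

# Hypothetical maze: one correct turn at each of three junctions.
CORRECT_PATH = ["left", "right", "right"]

def run_trial(strengths, rng):
    """Walk the maze once, choosing each turn in proportion to its strength."""
    choices = []
    for junction, options in enumerate(strengths):
        turns = list(options)
        turn = rng.choices(turns, weights=[options[t] for t in turns])[0]
        choices.append(turn)
        if turn != CORRECT_PATH[junction]:
            return choices, False  # wrong turn: dead end, trial fails
    return choices, True  # every turn was correct: goal reached

def train(trials=500, reward=1.0, seed=0):
    """Strengthen the connections used on successful trials only."""
    rng = random.Random(seed)
    strengths = [{"left": 1.0, "right": 1.0} for _ in CORRECT_PATH]
    successes = 0
    for _ in range(trials):
        choices, success = run_trial(strengths, rng)
        if success:
            successes += 1
            for junction, turn in enumerate(choices):
                strengths[junction][turn] += reward
    return strengths, successes

strengths, successes = train()
print(f"successful trials: {successes}")
for junction, options in enumerate(strengths):
    print(f"junction {junction}: {options}")
```

Because a run only succeeds when every turn is correct, the reward always lands on the correct connections, and successful runs become more likely as training proceeds.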

The AI Lab Breakthrough

Technical Innovation

In 1958, Minsky joined the faculty of MIT, and in 1959 he and John McCarthy co-founded the MIT Artificial Intelligence Project — later renamed the MIT AI Laboratory, and today part of MIT CSAIL (Computer Science and Artificial Intelligence Laboratory). This laboratory became the most important AI research institution in the world and remained so for decades. Under Minsky’s intellectual leadership, the AI Lab attracted some of the brightest minds in computer science and produced foundational work in robotics, computer vision, natural language processing, knowledge representation, and computational learning theory.

Minsky’s approach to AI was radically broad. He did not believe that intelligence could be reduced to a single mechanism — neither pure logic, nor statistical learning, nor any one algorithm. Instead, he argued that intelligence required many different kinds of processes working together: reasoning, analogy, pattern recognition, planning, learning from examples, learning from mistakes, common sense, and emotional evaluation. This “society of agents” approach stood in contrast to the more narrowly focused programs of other AI researchers, and it anticipated the modern understanding that intelligence is a complex, multi-faceted phenomenon.

The AI Lab under Minsky’s guidance produced a stream of innovations. Students and researchers built some of the first computer programs that could see (using camera inputs to recognize objects), manipulate physical objects (using robotic arms), understand simple English sentences, prove mathematical theorems, play games, and solve puzzles. The Lab developed early versions of time-sharing operating systems (allowing multiple users to share a computer simultaneously), which were essential for interactive AI research. The culture of the Lab — open, collaborative, working through the night, pushing the boundaries of what machines could do — became the template for hacker culture and the open-source movement.

One of Minsky’s key technical contributions was in the field of knowledge representation — the question of how to structure information so that a machine can reason about the world. In 1974, he published “A Framework for Representing Knowledge,” which introduced the concept of frames. A frame is a data structure for representing a stereotyped situation — for example, entering a restaurant. The restaurant frame would contain default assumptions (there are tables, a menu, a waiter) and slots for specific details (the name of the restaurant, the items on the menu). When you enter a new restaurant, your mind activates the restaurant frame and fills in the slots with observed details, while using the defaults for anything you haven’t observed. This theory of frames became enormously influential in AI (leading to frame-based expert systems), in cognitive psychology (where it paralleled Schank and Abelson’s script theory), and in object-oriented programming (where objects with default properties and inheritance hierarchies echo Minsky’s frame structures).

Why It Mattered

Minsky’s MIT AI Lab was not just a research laboratory — it was a crucible that forged the field of artificial intelligence itself. The lab trained generations of researchers who went on to lead AI departments at universities around the world, found AI companies, and push the boundaries of what machines could do. The problems Minsky identified in the 1960s — how to give machines common sense, how to represent knowledge, how to learn from sparse data, how to integrate different kinds of reasoning — remain the central challenges of AI in 2025.

The frames concept, in particular, anticipated a pattern that recurs throughout modern AI. When a large language model generates text about a restaurant, it is effectively activating a “restaurant frame” — a cluster of associated concepts, default assumptions, and typical sequences — that it learned from training data. The concept of frames also directly influenced the development of object-oriented programming paradigms and semantic web technologies. Today, tools like Taskee use structured data representations that owe a philosophical debt to Minsky’s insight that knowledge must be organized into coherent frames with default values and inheritance relationships.

Other Contributions

Minsky’s intellectual range was extraordinary, even by the standards of mid-twentieth-century polymaths. Beyond the AI Lab and frames, he made significant contributions in at least four distinct areas.

The Perceptrons book. In 1969, Minsky and Seymour Papert published Perceptrons: An Introduction to Computational Geometry, a mathematical analysis of the capabilities and limitations of single-layer neural networks (perceptrons). The book rigorously proved that single-layer perceptrons could not compute certain functions — most famously, the XOR (exclusive or) function. This result was widely interpreted as a devastating critique of neural network research in general, and it contributed to a dramatic decline in funding and interest in neural networks that lasted from approximately 1970 to the mid-1980s — a period known as the first “AI winter” for connectionism. Whether Minsky and Papert intended this effect is debated: they later said that they had only analyzed single-layer networks and had explicitly noted that multi-layer networks could overcome the limitations. But the damage was done. It was not until the work of Geoffrey Hinton, David Rumelhart, and others on backpropagation in the 1980s that neural networks recovered. The Perceptrons episode remains one of the most consequential and controversial chapters in AI history.
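The XOR limitation is easy to reproduce. The sketch below is an illustration rather than code from the book: it trains a single threshold unit with the classic perceptron learning rule on AND, which is linearly separable, and on XOR, which is not. The function names, learning rate, and epoch count are illustrative choices.

```python
def predict(weights, bias, x):
    """Threshold unit: output 1 if the weighted sum exceeds zero."""
    total = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=100, lr=1.0):
    """Perceptron learning rule over a list of (inputs, target) pairs."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

def accuracy(samples, weights, bias):
    """Fraction of samples the trained unit classifies correctly."""
    hits = sum(predict(weights, bias, x) == t for x, t in samples)
    return hits / len(samples)

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND = [(x, x[0] & x[1]) for x in INPUTS]
XOR = [(x, x[0] ^ x[1]) for x in INPUTS]

and_acc = accuracy(AND, *train_perceptron(AND))
xor_acc = accuracy(XOR, *train_perceptron(XOR))
print(f"AND accuracy: {and_acc:.2f}")
print(f"XOR accuracy: {xor_acc:.2f}")
```

The unit learns AND perfectly, but no setting of two weights and a bias can classify more than three of the four XOR cases, because no single straight line separates the two classes. Adding a hidden layer removes the limitation, which is the point Minsky and Papert said they had acknowledged.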

The Society of Mind. In 1986, Minsky published The Society of Mind, a remarkable book that proposed a theory of how human intelligence works. The central idea is that the mind is not a single unified entity but a society of many small, relatively simple agents — each specialized for a particular task — that interact, compete, and cooperate to produce the complex behavior we call thinking. An agent for recognizing faces, an agent for grasping objects, an agent for feeling hungry, an agent for planning tomorrow’s schedule — hundreds or thousands of such agents, organized in hierarchies and networks, produce the emergent phenomenon of consciousness. This theory anticipated modern multi-agent AI architectures and resonates strongly with how contemporary digital agencies like Toimi structure complex projects by decomposing them into specialized teams that collaborate toward a unified goal.

The confocal scanning microscope. In 1955, before the Dartmouth workshop and before the AI Lab, Minsky built the first confocal scanning microscope; he filed for a patent in 1957. This device uses a point light source and a pinhole detector to image a single point within a specimen, then scans across the specimen to build up a complete image. By rejecting out-of-focus light, it produces dramatically sharper images than conventional microscopes and can optically section thick specimens to create three-dimensional reconstructions. The confocal microscope became an indispensable tool in biology and medicine, used for everything from imaging neurons in the brain to diagnosing diseases. The patent was granted in 1961 (U.S. Patent 3,013,467), but Minsky let it lapse without commercial exploitation, a decision that may have cost him a fortune but gave the world free access to the technology.

The head-mounted display. In 1963, Minsky and his colleague Charles Harrison co-developed one of the first head-mounted graphical displays — a device that projected computer-generated images directly in front of the user’s eyes. While Ivan Sutherland is more widely credited with the first VR head-mounted display (1968), Minsky’s earlier work was part of the intellectual lineage that led to modern virtual and augmented reality systems.

Philosophy and Engineering Approach

Key Principles

Minsky’s approach to AI was distinguished by several core principles that set him apart from many of his contemporaries and remain influential today.

Intelligence requires many mechanisms, not one. Minsky consistently argued against the idea that a single algorithm or formalism could capture intelligence. He criticized both the pure-logic camp (who believed that intelligence was just theorem-proving) and the pure-learning camp (who believed that a sufficiently powerful learning algorithm could acquire all necessary knowledge from data). In his view, intelligence required a toolbox of different mechanisms — logical reasoning, analogical reasoning, common-sense rules, emotional heuristics, perceptual routines, motor programs — loosely coordinated rather than centrally controlled. This principle is reflected in modern AI architectures that combine neural networks with retrieval systems, planners, tool use, and chain-of-thought reasoning.

Common sense is the hard problem. While most AI researchers focused on impressive but narrow feats — playing chess, proving theorems, recognizing speech — Minsky insisted that the real challenge was giving machines common sense: the vast background knowledge that humans use effortlessly but cannot easily articulate. You know that water flows downhill, that people get upset when you insult them, that a cup placed on the edge of a table might fall, that a cat that goes behind a couch still exists. This kind of knowledge is not in any textbook, and Minsky argued that without it, AI systems would remain brittle and narrow. The challenge of common-sense reasoning remains unsolved in AI, though large language models have made surprising progress by absorbing vast amounts of text that implicitly encodes common-sense knowledge.

Emotions are not the opposite of reason — they are a type of thinking. In his later work, particularly The Emotion Machine (2006), Minsky argued that emotions are not irrational intrusions into rational thought but rather mechanisms for switching between different modes of thinking. Anger focuses attention and increases energy. Fear triggers avoidance and caution. Love creates long-term commitments that override short-term calculations. In Minsky’s framework, an emotionless machine would not be more rational — it would be less intelligent, because it would lack the meta-cognitive mechanisms that allow humans to adaptively switch strategies. This perspective anticipated modern work on AI alignment and AI safety, where researchers grapple with how to give AI systems appropriate goals and values.

Minsky was also a brilliant programmer and tinkerer. He built things (robots, circuits, musical instruments, theoretical frameworks) with a craftsman’s delight in making things work. The following Python code illustrates a simplified version of his “Society of Mind” concept, where multiple simple agents collaborate to make a decision:

```python
"""
A simplified Society of Mind model.
Multiple specialist agents vote on a decision,
each contributing a different kind of reasoning.
"""


class Agent:
    def __init__(self, name, expertise):
        self.name = name
        self.expertise = expertise

    def evaluate(self, situation):
        raise NotImplementedError


class LogicAgent(Agent):
    """Reasons about formal structure and consistency."""

    def evaluate(self, situation):
        score = 0
        if situation.get("consistent", False):
            score += 3
        if situation.get("has_evidence", False):
            score += 2
        return score


class EmotionAgent(Agent):
    """Evaluates based on past emotional associations."""

    def evaluate(self, situation):
        score = 0
        if situation.get("feels_familiar", False):
            score += 2
        if situation.get("causes_anxiety", False):
            score -= 3
        return score


class AnalogyAgent(Agent):
    """Checks for similarity to previously solved problems."""

    def evaluate(self, situation):
        score = 0
        if situation.get("similar_to_past_success", False):
            score += 4
        if situation.get("similar_to_past_failure", False):
            score -= 2
        return score


class SocietyOfMind:
    """
    A collection of agents that collectively
    evaluate situations through diverse reasoning.
    """

    def __init__(self):
        self.agents = []

    def add_agent(self, agent):
        self.agents.append(agent)

    def deliberate(self, situation):
        votes = {}
        for agent in self.agents:
            votes[agent.name] = agent.evaluate(situation)
        total = sum(votes.values())
        return {
            "agent_votes": votes,
            "collective_score": total,
            "decision": "proceed" if total > 0 else "reconsider"
        }


# Create a society of mind
society = SocietyOfMind()
society.add_agent(LogicAgent("Logic", "formal reasoning"))
society.add_agent(EmotionAgent("Emotion", "affective evaluation"))
society.add_agent(AnalogyAgent("Analogy", "pattern matching"))

# Evaluate a situation
situation = {
    "consistent": True,
    "has_evidence": True,
    "feels_familiar": True,
    "causes_anxiety": False,
    "similar_to_past_success": True,
    "similar_to_past_failure": False
}

result = society.deliberate(situation)
print(f"Decision: {result['decision']}")
print(f"Collective score: {result['collective_score']}")
for agent, score in result["agent_votes"].items():
    print(f"  {agent}: {score}")

# Output:
# Decision: proceed
# Collective score: 11
#   Logic: 5
#   Emotion: 2
#   Analogy: 4
```

This pattern, in which multiple specialized modules contribute to a collective decision, appears throughout modern AI, from mixture-of-experts architectures (used in large language models) to ensemble methods in machine learning to multi-agent systems that decompose complex tasks into subtasks handled by specialist agents.

A second code example demonstrates Minsky’s frames concept, in which knowledge is organized into structured templates with default values and inheritance:

```python
"""
Minsky's Frames: structured knowledge representation
with defaults, inheritance, and slot-filling.
"""


class Frame:
    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.slots = {}

    def set_slot(self, slot_name, value, is_default=False):
        self.slots[slot_name] = {
            "value": value,
            "is_default": is_default
        }

    def get_slot(self, slot_name):
        # Check this frame first
        if slot_name in self.slots:
            return self.slots[slot_name]["value"]
        # Inherit from parent frame
        if self.parent:
            return self.parent.get_slot(slot_name)
        return None

    def fill_from_observation(self, observations):
        """Update slots with observed data,
        overriding defaults."""
        for key, value in observations.items():
            self.set_slot(key, value, is_default=False)

    def describe(self):
        all_slots = {}
        if self.parent:
            # Gather inherited slots; nearer ancestors take precedence
            frame = self.parent
            while frame:
                for k, v in frame.slots.items():
                    if k not in all_slots:
                        all_slots[k] = (v["value"], frame.name)
                frame = frame.parent
        # Override with own slots
        for k, v in self.slots.items():
            all_slots[k] = (v["value"], self.name)
        return all_slots


# Build a frame hierarchy for "Restaurant"
building = Frame("Building")
building.set_slot("has_walls", True, is_default=True)
building.set_slot("has_roof", True, is_default=True)

restaurant = Frame("Restaurant", parent=building)
restaurant.set_slot("has_menu", True, is_default=True)
restaurant.set_slot("has_tables", True, is_default=True)
restaurant.set_slot("serves_food", True, is_default=True)
restaurant.set_slot("typical_activity", "dining", is_default=True)

# A specific restaurant fills in observed details
cafe = Frame("Cafe Minsky", parent=restaurant)
cafe.fill_from_observation({
    "location": "Cambridge, MA",
    "cuisine": "Mediterranean",
    "price_range": "moderate"
})

# Querying inherits defaults from parents
print(cafe.get_slot("has_menu"))          # True (from Restaurant)
print(cafe.get_slot("has_walls"))         # True (from Building)
print(cafe.get_slot("cuisine"))           # Mediterranean (observed)
print(cafe.get_slot("typical_activity"))  # dining (default)
```

Legacy and Modern Relevance

Marvin Minsky died on January 24, 2016, at the age of 88, in Boston, Massachusetts. He left behind a body of work that shaped not just artificial intelligence but computer science, cognitive psychology, philosophy of mind, robotics, and optical engineering.

His legacy is complex. On one hand, Minsky was among the most influential AI researchers of the twentieth century. He co-founded the field, built its most important laboratory, trained many of its leading practitioners, and produced theoretical work (frames, the society of mind, the analysis of common-sense reasoning) that remains foundational. The Turing Award he received in 1969 recognized his contributions to AI when the field was barely a decade old. On the other hand, his role in the Perceptrons controversy cast a long shadow. By demonstrating the limitations of single-layer neural networks and contributing (intentionally or not) to the decline of neural network research for over a decade, Minsky may have delayed the deep learning revolution that Geoffrey Hinton, Yann LeCun, and Yoshua Bengio would eventually lead. Whether this delay was a net negative, or whether the field needed to develop other approaches (symbolic AI, expert systems, knowledge representation) before it was ready for neural networks, is still debated.

In 2025, Minsky’s ideas resonate in ways he might have predicted. The society of mind concept, intelligence as an emergent property of many interacting agents, maps onto modern AI architectures in multiple ways. Mixture-of-experts models, where a gate routes each input to a few specialized neural network modules, are a loose computational analog of Minsky’s agent society. Multi-agent AI systems, where multiple language model instances collaborate on complex tasks (one agent plans, another writes code, another reviews), echo his principle that intelligence requires diverse, specialized components rather than a single monolithic reasoner. Even the structure of modern development environments and project management platforms reflects the insight that complex work is best decomposed into specialized roles with clear communication protocols.
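The mixture-of-experts parallel can be made concrete with a toy router. The sketch below is illustrative only: in a real model the gate weights are learned and the experts are neural sub-networks, whereas here the expert names, the hand-picked `GATE_WEIGHTS`, and the `route` function are all assumptions made for the example. It shows the core mechanism, a softmax gate that scores every expert for an input and keeps the top few.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

EXPERT_NAMES = ["logic", "analogy", "perception"]

# Hand-picked gate weights (illustrative): one row of feature weights per expert.
GATE_WEIGHTS = [
    [2.0, 0.0, 0.0],  # logic expert responds to feature 0
    [0.0, 2.0, 0.0],  # analogy expert responds to feature 1
    [0.0, 0.0, 2.0],  # perception expert responds to feature 2
]

def route(features, top_k=1):
    """Return the top_k (expert name, gate probability) pairs for an input."""
    scores = [sum(w * f for w, f in zip(row, features)) for row in GATE_WEIGHTS]
    probs = softmax(scores)
    ranked = sorted(zip(EXPERT_NAMES, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:top_k]

print(route([1.0, 0.1, 0.0]))           # "logic" ranks first
print(route([0.0, 0.2, 1.0], top_k=2))  # "perception" first, then "analogy"
```

Only the selected experts would run on a given input, which is what lets such models grow in capacity without a matching growth in computation per input.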

His emphasis on common-sense reasoning has proven prophetic. Large language models have achieved remarkable fluency in language, but they still struggle with the kind of physical and social common sense that Minsky identified as the core challenge of AI. They can write essays about cups falling off tables but cannot reliably reason about the physics of cups falling off tables. The gap between linguistic competence and genuine understanding is precisely the gap that Minsky spent his career trying to close.

His theory of emotions as cognitive mechanisms — not obstacles to intelligence but essential components of it — has become newly relevant as researchers work on AI alignment and AI safety. If Minsky was right that emotions are evolved strategies for managing attention, goals, and behavior, then building safe AI may require building systems with something functionally equivalent to emotions: mechanisms that override short-term optimization in favor of long-term values, that trigger caution in unfamiliar situations, that maintain commitments even when breaking them would be locally advantageous.

Minsky’s influence extends through his students, who became leaders across computer science: Gerald Sussman (co-creator of Scheme), Patrick Winston (longtime director of the MIT AI Lab), Ray Kurzweil (inventor and futurist), Danny Hillis (founder of Thinking Machines Corporation), and dozens of others. The intellectual culture he created at MIT — ambitious, playful, interdisciplinary, willing to tackle problems that seemed impossibly hard — set the standard for AI research laboratories worldwide.

The field Minsky co-founded in that Dartmouth workshop in 1956 has grown into one of the most powerful technological forces in human history. The machines he dreamed of building — machines that can see, speak, reason, learn, and create — are now becoming real, albeit in ways different from what he imagined. Every neural network trained on GPU clusters, every language model generating text, every robot navigating a warehouse, every recommendation engine suggesting what to watch — all of it traces back to the question Minsky spent his life trying to answer: what is the mind, and how can we build one?

Key Facts

- Born: August 9, 1927, New York City, United States

- Died: January 24, 2016, Boston, Massachusetts, United States

- Known for: Co-founding the MIT AI Lab, frames theory, Society of Mind, confocal scanning microscope, SNARC neural network, Perceptrons book

- Education: BA in Mathematics from Harvard (1950), PhD in Mathematics from Princeton (1954)

- Key works: Perceptrons (1969, with Seymour Papert), The Society of Mind (1986), The Emotion Machine (2006), “A Framework for Representing Knowledge” (1974)

- Awards: ACM Turing Award (1969), Japan Prize (1990), IJCAI Award for Research Excellence (1991), Benjamin Franklin Medal (2001), IEEE Computer Pioneer Award

- Inventions: SNARC (first neural network learning machine, 1951), confocal scanning microscope (1957), head-mounted graphical display (1963)

- Notable students: Gerald Sussman, Patrick Winston, Ray Kurzweil, Danny Hillis, Terry Winograd, Manuel Blum

Frequently Asked Questions

Why is Marvin Minsky called the father of artificial intelligence?

Minsky earned this title because he was one of the co-founders of AI as a formal academic discipline. He co-organized the 1956 Dartmouth workshop that gave the field its name, co-founded the MIT AI Laboratory (the most influential AI research institution in the world for decades), won the Turing Award in 1969 for his AI contributions, and spent over sixty years developing foundational theories about how to build intelligent machines. His work on knowledge representation (frames), cognitive architecture (the society of mind), and the analysis of learning systems shaped the research agenda of the entire field. While John McCarthy coined the term “artificial intelligence” and Alan Turing posed the foundational question of machine intelligence, Minsky did more than anyone else to build AI into an institutional research discipline with laboratories, graduate programs, and a coherent intellectual framework.

What was Minsky’s most controversial contribution to AI?

Minsky’s most controversial work was Perceptrons (1969), co-authored with Seymour Papert. The book mathematically proved that single-layer neural networks (perceptrons) could not solve certain classes of problems, including the XOR function. While the analysis was technically correct and limited to single-layer networks, it was widely interpreted as a general indictment of neural network research. Combined with Minsky’s enormous prestige, the book contributed to a dramatic decline in neural network funding and research that lasted over a decade. The field did not recover until Geoffrey Hinton and others demonstrated the power of multi-layer networks trained with backpropagation in the 1980s. Whether Minsky intended to discourage all neural network research or merely to clarify the limitations of a specific architecture remains debated among AI historians.

How does Minsky’s Society of Mind theory relate to modern AI?

Minsky’s Society of Mind theory (1986) proposed that intelligence emerges from the interaction of many simple, specialized agents rather than from a single unified reasoning system. This idea maps remarkably well onto several modern AI approaches. Mixture-of-experts models — used in state-of-the-art language models — route different inputs to specialized neural network modules, much like Minsky’s agents specialize in different cognitive tasks. Multi-agent AI systems, where multiple AI instances collaborate on complex problems, directly implement the society-of-agents principle. Even the transformer architecture’s attention mechanism, which dynamically weights different parts of the input, echoes Minsky’s insight that intelligence requires flexible, context-dependent allocation of cognitive resources. The theory also anticipated the challenge of AI alignment: if intelligence is a society of competing agents, then ensuring that an AI system behaves well requires coordinating all of those agents toward coherent goals — a problem that remains central to AI safety research.