In 2010, a graduate student at UC Berkeley published results that seemed too good to be true. On iterative machine learning jobs, his new system ran up to ten times faster than Hadoop MapReduce, and it could answer interactive queries over a 39 GB dataset in under a second. The student was Matei Zaharia, and the system was Apache Spark. Four years later, a Spark cluster of 206 machines sorted 100 TB of data in 23 minutes, beating the previous Hadoop record of 72 minutes on 2,100 machines. What made these results so striking was not just the raw speed. It was the underlying idea: by rethinking how distributed computing systems manage memory, you could remove the bottleneck that had limited large-scale data processing frameworks since MapReduce. Zaharia did not build a slightly better version of Hadoop. He built a new computational engine around a different abstraction, Resilient Distributed Datasets, that made iterative algorithms, interactive analytics, and stream processing possible within a single unified framework. Apache Spark went on to become one of the most widely adopted distributed data processing engines ever built. Databricks, the company Zaharia co-founded to commercialize it, grew into one of the most valuable private technology companies in the world, valued at over $60 billion. From a Ph.D. thesis to an industry-defining platform, Zaharia’s trajectory is one of the clearest examples of how a single architectural insight can reshape an entire field.

Early Life and Education

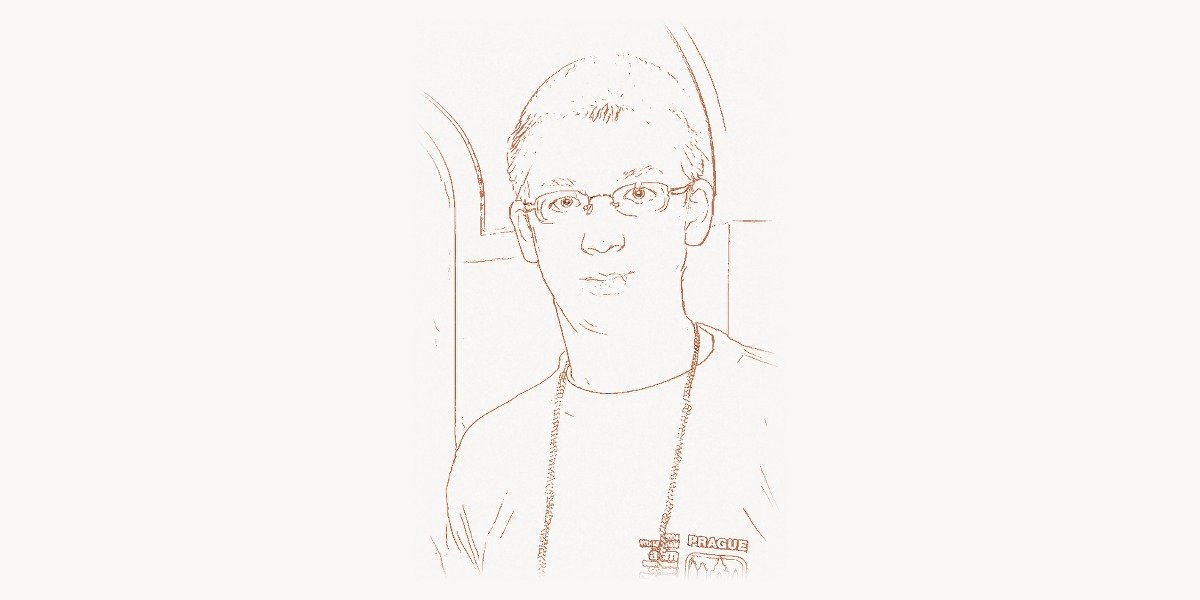

Matei Zaharia was born on March 30, 1986, in Bucharest, Romania. His family emigrated to Canada when he was young, and he grew up in Kitchener-Waterloo, Ontario — a region that had quietly become one of North America’s most productive technology corridors, anchored by the University of Waterloo and a cluster of technology companies including BlackBerry (then Research In Motion). The environment was steeped in engineering culture, and Zaharia showed an early aptitude for mathematics and computer science.

He enrolled at the University of Waterloo, one of Canada’s top institutions for computer science and engineering, graduating with a Bachelor’s degree in 2007. Waterloo’s cooperative education program — which alternates academic terms with paid work placements at technology companies — gave Zaharia early exposure to industrial-scale software engineering. But it was the academic side that drew him most strongly. He wanted to understand not just how to build software, but how to build the systems that software runs on.

In 2007, Zaharia entered the Ph.D. program in computer science at UC Berkeley, advised by Scott Shenker and Ion Stoica. He began in the RAD Lab and continued in its successor, the AMPLab (Algorithms, Machines, and People Laboratory), launched in 2011. The AMPLab was a distinctive research environment: it brought together researchers from systems, machine learning, and networking to work on problems at the intersection of all three. This interdisciplinary setting proved crucial for Zaharia’s work. The big data landscape at the time was dominated by Hadoop, the open-source implementation of Google’s MapReduce framework. Hadoop had democratized large-scale data processing, but it had significant limitations, and Zaharia set out to understand exactly what they were.

The Apache Spark Breakthrough

Technical Innovation

The core problem with MapReduce — and by extension Hadoop — was its rigid execution model. Every MapReduce job reads its input from disk, processes it through a Map phase and a Reduce phase, and writes the output back to disk. If you need to run another computation on that output, you launch a new MapReduce job, which reads the data from disk again. For single-pass computations like word counting or log aggregation, this was fine. But for iterative algorithms — machine learning training, graph processing, optimization routines — the repeated disk I/O was devastating. A machine learning algorithm that needed 20 iterations would read and write the same dataset to disk 20 times, spending the vast majority of its time waiting for hard drives rather than doing computation.
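To make that cost concrete, here is a toy, single-machine sketch in plain Python (no Hadoop or Spark involved) of one iterative algorithm, gradient descent for a one-parameter model y ≈ w·x, run two ways. The disk-based version mimics chained MapReduce jobs by re-reading the full dataset from a file and writing its output back on every iteration; the in-memory version loads the data once. The function names and the JSON file format are illustrative assumptions, and local temp files are far faster than a distributed file system, so only the count of full-dataset reads, not the timing, carries over to the real comparison.

```python
import json
import os
import tempfile


def gradient_step(w, points, lr=0.1):
    """One full pass over the data: a gradient-descent step on mean squared error for y ≈ w * x."""
    grad = sum(2 * (w * x - y) * x for x, y in points) / len(points)
    return w - lr * grad


def run_disk_based(data, iterations):
    """Mimics chained MapReduce jobs: every iteration re-reads the whole dataset
    from disk and writes its output back before the next job starts."""
    full_reads = 0
    with tempfile.TemporaryDirectory() as work_dir:
        input_path = os.path.join(work_dir, "input.json")
        with open(input_path, "w") as f:
            json.dump(data, f)
        w = 0.0
        for i in range(iterations):
            with open(input_path) as f:  # job i: read the full input again
                points = json.load(f)
            full_reads += 1
            w = gradient_step(w, points)
            with open(os.path.join(work_dir, f"model_{i}.json"), "w") as f:
                json.dump(w, f)  # materialize this job's output
    return w, full_reads


def run_in_memory(data, iterations):
    """Spark-style: load once, keep the dataset in memory, reuse it every iteration."""
    points = list(data)  # the only full read
    w = 0.0
    for _ in range(iterations):
        w = gradient_step(w, points)
    return w, 1


def demo(iterations=20):
    data = [[1.0, 2.0], [2.0, 4.1], [3.0, 6.2]]
    return run_disk_based(data, iterations), run_in_memory(data, iterations)
```

Both versions compute the same model; the difference is that the disk-based one pays for a full read and a write on each of the 20 passes, while the in-memory one reads the data once.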

Zaharia’s insight was that the solution required a new abstraction, not just an optimization of the existing one. In 2010, he and his collaborators published a paper introducing Resilient Distributed Datasets (RDDs): read-only, partitioned collections of records that could be kept in memory across a cluster of machines, reused across computations, and automatically rebuilt if a node failed. The key innovation was in the word “resilient.” Existing in-memory storage abstractions, such as distributed shared memory and key-value stores, allowed fine-grained updates to mutable state, so they had to achieve fault tolerance by replicating data or logging updates across machines, which is expensive for data-intensive workloads. RDDs instead recorded the lineage of coarse-grained transformations (such as map, filter, and join) used to create each dataset. If a partition was lost, the system could recompute just that partition by replaying those transformations on its parent data, rather than replicating all data across the network in advance.
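The lineage idea can be sketched in a few dozen lines. The `ToyRDD` class below is hypothetical and is not Spark’s API: each dataset stores only its parent and a per-partition function, and when a simulated node failure drops one partition, only that partition is recomputed by replaying the chain back to the source. Two simplifications to keep in mind: real Spark tracks lineage in the driver and recomputes lost partitions on surviving workers, and it keeps in memory only the RDDs a user chooses to persist, whereas this sketch caches every level.

```python
from typing import Callable, Dict, List, Optional


class ToyRDD:
    """Hypothetical model of a read-only, partitioned dataset that records its lineage.
    Not Spark's API; for illustration only."""

    def __init__(self, parent: Optional["ToyRDD"] = None,
                 fn: Optional[Callable[[list], list]] = None,
                 source: Optional[List[list]] = None):
        self.parent = parent              # upstream dataset (None for a source)
        self.fn = fn                      # transformation applied to each parent partition
        self.source = source              # stable input partitions (source datasets only)
        self.cache: Dict[int, list] = {}  # partition index -> in-memory data
        self.recomputations = 0           # how many times a partition was (re)built

    @staticmethod
    def from_partitions(partitions: List[list]) -> "ToyRDD":
        return ToyRDD(source=[list(p) for p in partitions])

    def num_partitions(self) -> int:
        return len(self.source) if self.source is not None else self.parent.num_partitions()

    # Transformations are lazy: they only record lineage; nothing is computed yet.
    def map(self, f) -> "ToyRDD":
        return ToyRDD(parent=self, fn=lambda part: [f(x) for x in part])

    def filter(self, pred) -> "ToyRDD":
        return ToyRDD(parent=self, fn=lambda part: [x for x in part if pred(x)])

    def compute(self, i: int) -> list:
        if i in self.cache:
            return self.cache[i]
        self.recomputations += 1
        if self.source is not None:
            data = list(self.source[i])             # re-read stable input
        else:
            data = self.fn(self.parent.compute(i))  # replay lineage for partition i only
        self.cache[i] = data
        return data

    def collect(self) -> list:
        return [x for i in range(self.num_partitions()) for x in self.compute(i)]

    def lose_partition(self, i: int) -> None:
        """Simulate a failed node: partition i vanishes from this dataset and its ancestors."""
        node = self
        while node is not None:
            node.cache.pop(i, None)
            node = node.parent


def demo():
    base = ToyRDD.from_partitions([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
    squares = base.map(lambda x: x * x)
    evens = squares.filter(lambda x: x % 2 == 0)
    before = evens.collect()
    evens.lose_partition(1)  # simulate losing the node that held partition 1
    after = evens.collect()  # only partition 1 is rebuilt from lineage
    return before, after, [base.recomputations, squares.recomputations, evens.recomputations]
```

In `demo()`, each of the three datasets builds three partitions on the first `collect()`, and the failure costs exactly one extra recomputation per level, since partitions 0 and 2 are still served from memory.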

This lineage-based fault tolerance was elegant and efficient. It meant that Spark could keep data in memory without the replication overhead that traditional fault-tolerant distributed systems required. For iterative algorithms, the performance improvement was not incremental: the 2012 RDD paper reported speedups of up to 20x over Hadoop on iterative applications, because the data stayed in memory between iterations instead of being written to disk and read back on each pass.

```python
# Apache Spark: iterative machine learning with in-memory persistence.
# This example illustrates why in-memory caching transformed iterative computation:
# in Hadoop MapReduce, each iteration would read from and write to disk;
# in Spark, the cached dataset stays in memory across all iterations.

from pyspark.sql import SparkSession
from pyspark.ml.feature import VectorAssembler, StandardScaler
from pyspark.ml.clustering import KMeans
from pyspark.ml.evaluation import ClusteringEvaluator

# Initialize the Spark session, the unified entry point
spark = (
    SparkSession.builder
    .appName("CustomerSegmentation")
    .config("spark.executor.memory", "8g")
    .getOrCreate()
)

# Load data (placeholder path; reading s3a:// URLs requires the hadoop-aws connector).
# Reading is lazy: nothing is held in memory until .cache() below is materialized.
raw_data = spark.read.csv("s3a://analytics/customers.csv", header=True, inferSchema=True)

feature_cols = ["purchase_frequency", "avg_order_value",
                "days_since_last_purchase", "total_lifetime_spend"]

# Feature engineering: combine columns into one vector, then standardize it
assembler = VectorAssembler(
    inputCols=feature_cols,
    outputCol="features_raw",
    handleInvalid="skip",  # drop rows with null feature values instead of failing
)
scaler = StandardScaler(inputCol="features_raw", outputCol="features",
                        withStd=True, withMean=True)

assembled = assembler.transform(raw_data)
scaler_model = scaler.fit(assembled)
scaled_data = scaler_model.transform(assembled)

# Cache in memory: this is the key Spark advantage.
# K-Means makes up to maxIter passes over this dataset. In Hadoop, each pass
# would mean a disk read and write; in Spark, the cached partitions stay in RAM.
scaled_data.cache()

# Train K-Means clustering, an iterative algorithm that benefits from caching
kmeans = KMeans(k=5, seed=42, maxIter=30, featuresCol="features")
model = kmeans.fit(scaled_data)

# Evaluate clustering quality (silhouette ranges from -1 to 1; higher is better)
predictions = model.transform(scaled_data)
evaluator = ClusteringEvaluator(featuresCol="features")
silhouette = evaluator.evaluate(predictions)
print(f"Silhouette score: {silhouette:.4f}")

# Analyze cluster centers. Values are in standardized units (z-scores),
# not in the original currency or day units.
for i, center in enumerate(model.clusterCenters()):
    print(f"Cluster {i}: frequency={center[0]:.2f}, "
          f"avg_value={center[1]:.2f}, recency={center[2]:.2f}, "
          f"lifetime_spend={center[3]:.2f}")

scaled_data.unpersist()
spark.stop()
```

Spark was also designed from the start to be a unified engine. Where the Hadoop ecosystem relied on a patchwork of specialized tools (MapReduce for batch processing, Apache Storm for streaming, Apache Mahout for machine learning, Hive for SQL queries), Spark provided a single engine that could handle all of these workloads. The Spark ecosystem eventually included Spark SQL for structured data queries, Spark Streaming (later Structured Streaming) for real-time data, MLlib for machine learning, and GraphX for graph computation. This unification was more than a convenience: it reduced the cost of moving data between systems and the operational complexity of maintaining several of them.

Why It Mattered

Apache Spark’s impact on the data engineering landscape was rapid and profound. Open-sourced in 2010 and donated to the Apache Software Foundation in 2013 (it became a top-level Apache project in 2014), Spark soon became one of the most active open-source projects in big data. Within a few years it had largely displaced Hadoop MapReduce as the default engine for new large-scale data processing work. Today, Spark is used by thousands of organizations, from technology giants to financial institutions to healthcare providers, and by some estimates it processes exabytes of data daily.

The reasons for adoption were both technical and practical. Spark was not just faster than Hadoop for iterative workloads; it was also dramatically easier to program. The RDD API (and later the DataFrame and Dataset APIs) offered a much more natural programming model than the rigid Map/Reduce paradigm. A job that took dozens of lines of boilerplate Java in Hadoop, such as the classic word count, could often be written in a few lines of Python or Scala in Spark. This accessibility brought large-scale data processing to a much wider audience: data scientists, analysts, and engineers who were not distributed systems specialists could now work with cluster-scale data.

Spark also bridged the gap between batch and real-time processing. The Apache Kafka project had largely solved reliable real-time data ingestion, but processing that data in real time required a separate streaming engine. Spark Streaming let developers write streaming applications with essentially the same APIs they used for batch processing, and its successor, Structured Streaming, went further by treating a stream as an unbounded table that is continually appended to, so the same query can run over both static and streaming data. This micro-batch approach traded a small amount of latency for large gains in simplicity and reliability.
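The unbounded-table model can be illustrated with a toy incremental word count in plain Python. `MicroBatchWordCount` is a hypothetical stand-in, not Spark’s API: each micro-batch scans only the newly appended rows and folds them into running state, and the result after every batch equals what the batch query `word_counts` would return over all rows received so far. That equivalence is the property Structured Streaming aims to provide, though Spark adds checkpointed state stores, multiple output modes, and fault tolerance that this sketch omits.

```python
from collections import Counter
from typing import Dict, Iterable, List


def word_counts(rows: Iterable[str]) -> Dict[str, int]:
    """The batch query: count words over a complete, static table of text lines."""
    return dict(Counter(word for line in rows for word in line.split()))


class MicroBatchWordCount:
    """Hypothetical toy engine (not Spark's API): treats the stream as an unbounded
    table and incrementally maintains the batch query's result over all rows so far."""

    def __init__(self) -> None:
        self.state: Counter = Counter()  # running aggregate carried between micro-batches
        self.batches_processed = 0

    def process_batch(self, new_rows: List[str]) -> Dict[str, int]:
        # Only the newly appended rows are scanned; earlier rows live on as `state`.
        self.state.update(word for line in new_rows for word in line.split())
        self.batches_processed += 1
        return dict(self.state)  # like "complete" output mode: the full updated result


def demo():
    stream = [["spark streaming", "spark sql"], ["delta lake"], ["spark"]]
    engine = MicroBatchWordCount()
    result: Dict[str, int] = {}
    for batch in stream:  # each inner list arrives as one micro-batch
        result = engine.process_batch(batch)
    batch_result = word_counts(line for batch in stream for line in batch)
    return result, batch_result, engine.batches_processed
```

The design choice worth noticing is that the query logic (splitting lines and counting words) is identical in both paths; only the execution strategy differs, which is what lets one API serve batch and streaming.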

For the broader technology ecosystem, Spark’s success demonstrated that the MapReduce paradigm pioneered by Jeff Dean and Sanjay Ghemawat at Google was not the final word in distributed data processing. It was a starting point, a foundation that could be fundamentally improved by rethinking the relationship between computation and memory. That lesson has influenced many of the distributed computing frameworks designed since.

Beyond Spark: Other Major Contributions

While Apache Spark is Zaharia’s most visible contribution, his work extends across several other dimensions of the data and AI landscape.

Databricks. In 2013, Zaharia co-founded Databricks along with several other AMPLab researchers, including Ali Ghodsi, Ion Stoica, Patrick Wendell, Reynold Xin, Andy Konwinski, and Arsalan Tavakoli-Shiraji. Databricks was founded to build a commercial platform around Spark, but it evolved into something much larger: the Lakehouse platform. The core idea was to merge the best properties of data warehouses (structured data, ACID transactions, schema enforcement) with data lakes (cheap storage, support for unstructured data, open formats). The result was the Delta Lake storage format and the Databricks Unified Analytics Platform, which allowed organizations to run data engineering, data science, machine learning, and business analytics on a single platform. By 2024, Databricks was valued at over $60 billion, making it one of the most valuable enterprise software companies in the world.

Delta Lake. Released as open source in 2019, Delta Lake added ACID transactions, scalable metadata handling, and time travel (data versioning) to Apache Spark and other data lake engines. Before Delta Lake, data lakes were notoriously unreliable: concurrent writes could corrupt data, failed jobs left partial results, and there was no easy way to roll back a bad update. Delta Lake addressed these problems by adding a transaction log layer on top of Parquet files stored in cloud object storage. It became one of the most widely adopted open table formats, used by thousands of organizations.
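A transaction log of this kind can be modeled in plain Python. `ToyTableLog` below is a hypothetical, heavily simplified sketch loosely modeled on Delta Lake’s design, not its actual protocol or API: each commit is a zero-padded, numbered JSON file under `_delta_log/` containing `add` and `remove` actions for data files; a commit succeeds only if no other writer has already claimed that version number; and reading an older version ("time travel") means replaying the log only up to that commit. It assumes a local POSIX-style filesystem where `os.link` fails if the target already exists. Real Delta relies on equivalent put-if-absent guarantees (or a coordination service) on cloud object stores, and it also handles checkpoints, schemas, and conflict detection, all omitted here.

```python
import json
import os
import tempfile


class ToyTableLog:
    """Hypothetical, simplified Delta-style transaction log (not the real protocol).
    The table at version v is the set of files left after replaying commits 0..v."""

    def __init__(self, table_dir: str):
        self.log_dir = os.path.join(table_dir, "_delta_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def _path(self, version: int) -> str:
        return os.path.join(self.log_dir, f"{version:020d}.json")

    def latest_version(self) -> int:
        versions = [int(name.split(".")[0]) for name in os.listdir(self.log_dir)
                    if name.endswith(".json")]
        return max(versions, default=-1)

    def commit(self, actions, expected_version: int) -> int:
        """Atomically publish commit expected_version + 1, or raise FileExistsError
        if another writer already claimed that version (optimistic concurrency)."""
        version = expected_version + 1
        tmp_path = os.path.join(self.log_dir, f".tmp-{version}-{os.getpid()}")
        with open(tmp_path, "w") as f:  # write the full commit privately first
            for action in actions:
                f.write(json.dumps(action) + "\n")
        try:
            os.link(tmp_path, self._path(version))  # put-if-absent: fails if version exists
        finally:
            os.remove(tmp_path)
        return version

    def snapshot(self, version=None) -> set:
        """Time travel: the live data files as of `version` (default: latest)."""
        if version is None:
            version = self.latest_version()
        live = set()
        for v in range(version + 1):
            with open(self._path(v)) as f:
                for line in f:
                    action = json.loads(line)
                    if "add" in action:
                        live.add(action["add"]["path"])
                    elif "remove" in action:
                        live.discard(action["remove"]["path"])
        return live


def demo():
    """Usage sketch: three commits, a conflicting writer, and a time-travel read."""
    with tempfile.TemporaryDirectory() as table_dir:
        log = ToyTableLog(table_dir)
        v0 = log.commit([{"add": {"path": "part-0.parquet"}}], expected_version=-1)
        v1 = log.commit([{"add": {"path": "part-1.parquet"}}], expected_version=v0)
        log.commit([{"remove": {"path": "part-0.parquet"}},
                    {"add": {"path": "part-2.parquet"}}], expected_version=v1)
        try:  # a second writer that also read version 1 now loses the race
            log.commit([{"add": {"path": "stale.parquet"}}], expected_version=v1)
            conflict = False
        except FileExistsError:
            conflict = True
        return log.snapshot(), log.snapshot(version=1), conflict
```

Because the data files themselves are never modified in place, a failed job leaves at most unreferenced files behind, and rolling back is a matter of reading an earlier version of the log. In a real system, the writer that loses the race would re-read the log and retry its commit if its changes do not conflict.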

MLflow. In 2018, Zaharia and the Databricks team released MLflow, an open-source platform for managing the machine learning lifecycle. MLflow addressed a practical problem that many ML teams encountered: experiments were difficult to track, models were hard to reproduce, and deploying trained models to production was a fragmented, manual process. MLflow provided standardized interfaces for experiment tracking, model packaging, model registry, and deployment. It quickly became one of the most popular open-source ML lifecycle management tools, with millions of monthly downloads.

Academic contributions. While co-founding Databricks and serving as its CTO, Zaharia maintained a strong research presence. He joined the Stanford University faculty as an assistant professor of computer science in 2016 and moved to the EECS faculty at UC Berkeley in 2023, continuing to publish influential work on systems for machine learning, data management, and AI. His research contributed to topics including data-centric AI (the idea that improving data quality is often more effective than improving model architectures), foundation model research, and systems for serving large language models efficiently.

Philosophy and Approach

Key Principles

Zaharia’s work is unified by several recurring principles that reflect a distinctive engineering philosophy.

Unification over specialization. The central theme of Zaharia’s career is that a single well-designed abstraction can replace a collection of specialized tools. Spark unified batch, streaming, SQL, and ML workloads. Databricks unified data engineering and data science. MLflow unified experiment tracking and model deployment. This preference for unification is not aesthetic — it is driven by the observation that maintaining multiple specialized systems creates enormous operational overhead and prevents the kind of cross-workload optimization that a unified engine can achieve.

Simplicity as a force multiplier. Zaharia consistently designs systems that make powerful capabilities accessible to a broad audience. The Spark DataFrame API made distributed data processing feel much like working with a local pandas DataFrame. MLflow made experiment tracking as simple as adding a few lines of code. This emphasis on usability is deliberate: Zaharia understands that the impact of a technology is limited not only by its theoretical power but by the number of people who can effectively use it.

Open source as infrastructure. Every major project Zaharia has created — Spark, Delta Lake, MLflow — has been released as open source. This is not altruism; it is strategy. Open source creates ecosystems. It allows a project to benefit from contributions by thousands of developers, ensures that the technology is battle-tested across diverse use cases, and prevents vendor lock-in that would limit adoption. Zaharia’s approach to open source is pragmatic: he releases core infrastructure as open source while building commercial value in the managed platform, enterprise features, and integration layer above it.

Data-centric thinking. In recent years, Zaharia has become a prominent advocate of what is often called “data-centric AI”: the idea that for many real-world machine learning applications, improving the quality, labeling, and curation of training data yields better results than improving the model architecture. This perspective aligns with his broader engineering philosophy: focus on the foundational layer (in this case, data) rather than optimizing at a higher level of abstraction.
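One simple, widely used heuristic for surfacing candidate labeling errors is to flag examples whose label disagrees with most of their nearest neighbors. The sketch below implements that k-nearest-neighbor check in plain Python (it needs Python 3.8+ for `math.dist`); it is an illustrative assumption, not a method attributed to Zaharia or his research group, and the flagged examples are candidates for human review rather than confirmed errors.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple


def flag_suspect_labels(points: Sequence[Tuple[float, ...]], labels: Sequence[str],
                        k: int = 3) -> List[int]:
    """Return indices whose label disagrees with a strict majority of their
    k nearest neighbors (the point itself excluded). Brute force: O(n^2)."""
    suspects = []
    for i, p in enumerate(points):
        neighbors = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )[:k]
        votes = Counter(labels[j] for _, j in neighbors)
        majority, count = votes.most_common(1)[0]
        if majority != labels[i] and count > k // 2:
            suspects.append(i)
    return suspects


def demo():
    points = [(0, 0), (0, 1), (1, 0), (1, 1),          # cluster labeled "a"
              (10, 10), (10, 11), (11, 10), (11, 11),  # cluster labeled "b"
              (0.5, 0.5)]                              # sits inside cluster "a"...
    labels = ["a"] * 4 + ["b"] * 4 + ["b"]             # ...but is labeled "b"
    return flag_suspect_labels(points, labels, k=3)
```

Only the last point is flagged: its three nearest neighbors are all labeled "a", while the nearby "a" points still have a clear "a" majority despite having the mislabeled point as a neighbor.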

Legacy and Impact

Matei Zaharia’s legacy is defined by a rare combination: he both invented a foundational technology and built the company that brought it to market at scale. This dual achievement — academic and commercial — places him in a small group of technologists who have shaped both the theory and practice of modern computing.

Apache Spark fundamentally changed how organizations process data. Before Spark, large-scale data processing was largely the domain of specialized engineers who could write MapReduce jobs and manage Hadoop clusters. After Spark, it became accessible to data scientists and analysts who could express complex computations in Python or SQL. This democratization of big data was as significant as the raw performance improvements. Spark made it practical for a data scientist to prototype an analysis on a laptop with a sample dataset and then scale it to a thousand-node cluster with minimal code changes, a workflow that was far more cumbersome in the Hadoop era.

The Lakehouse architecture, championed by Databricks, represents Zaharia’s latest attempt to unify a fragmented landscape. Just as Spark unified batch and streaming processing, the Lakehouse aims to unify data warehouses and data lakes — eliminating the need for organizations to maintain separate systems for analytical queries and machine learning workloads. If this vision succeeds — and the rapid growth of Databricks suggests it is succeeding — it will represent another fundamental simplification of the data infrastructure stack.

In his academic work, first at Stanford and now at UC Berkeley, Zaharia continues to push the boundaries of what is possible at the intersection of systems and AI. His work on systems for foundation models, data quality for machine learning, and efficient inference for large language models addresses some of the most pressing technical challenges of the current AI era. The lineage-based thinking that led to RDDs, the idea that lost state can be reconstructed by replaying the sequence of transformations that produced it, continues to influence how researchers think about reproducibility, debugging, and fault tolerance in AI systems.

Zaharia’s trajectory from a graduate student at Berkeley to a professor (at Stanford, then Berkeley) and co-founder of a $60 billion company illustrates a pattern that recurs throughout the history of computing: the most transformative technologies often emerge not from incremental improvements to existing systems, but from fundamental rethinking of the underlying abstractions. Linus Torvalds rethought version control with Git. Alan Turing rethought what computation itself meant. Zaharia rethought how distributed systems should manage data in memory, and the result was a technology that changed an industry.

Key Facts About Matei Zaharia

- Born: March 30, 1986, Bucharest, Romania; raised in Kitchener-Waterloo, Ontario, Canada

- Education: B.Sc. in Computer Science, University of Waterloo (2007); Ph.D. in Computer Science, UC Berkeley (2013)

- Major creation: Apache Spark (2010), one of the most widely adopted distributed data processing engines

- Company: Co-founded Databricks in 2013, valued at over $60 billion by 2024

- Other projects: Delta Lake (open-source storage layer), MLflow (ML lifecycle platform)

- Academic role: Assistant Professor of Computer Science, Stanford University (2016–2023); EECS faculty, UC Berkeley (2023–present)

- Key publication: “Resilient Distributed Datasets: A Fault-Tolerant Abstraction for In-Memory Cluster Computing” (NSDI 2012) — one of the most cited systems papers of the 2010s

- Awards: ACM Doctoral Dissertation Award (2014), MIT Technology Review 35 Innovators Under 35

- Philosophy: Unification over specialization; accessible design; data-centric AI

- Impact: Spark is used by thousands of organizations worldwide, by some estimates processing exabytes of data daily

Frequently Asked Questions

What problem did Apache Spark solve that Hadoop could not?

Hadoop MapReduce was designed for single-pass batch processing: reading data from disk, processing it, and writing results back to disk. This worked well for tasks like log analysis or ETL, but it was extremely inefficient for iterative algorithms such as machine learning training, where the same dataset needs to be processed many times. Each iteration in Hadoop required a full round-trip to disk, often making iterative computations an order of magnitude or more slower than an in-memory approach. Spark solved this by introducing Resilient Distributed Datasets (RDDs), which could persist data in memory across iterations while maintaining fault tolerance through lineage tracking. This in-memory computation model made Spark dramatically faster for iterative workloads and enabled use cases, such as interactive data exploration, near-real-time streaming, and machine learning at scale, that were impractical with Hadoop alone.

What is the Lakehouse architecture and why does it matter?

The Lakehouse architecture, developed by Databricks and based on Zaharia’s research, combines the best features of data warehouses (ACID transactions, schema enforcement, fast SQL queries) with the best features of data lakes (cheap object storage, support for unstructured data like images and text, open file formats). Before the Lakehouse, organizations typically maintained two separate systems — a data lake for raw storage and a data warehouse for analytics — with complex ETL pipelines to move data between them. This duplication created data quality problems, increased costs, and slowed down analysis. The Lakehouse eliminates this two-tier architecture by adding a transactional metadata layer (Delta Lake) on top of cloud object storage, enabling warehouse-quality reliability and performance directly on the data lake. This unification reduces infrastructure costs, simplifies data governance, and enables machine learning and business intelligence to operate on the same data without copying it between systems.

How did Zaharia’s academic work influence his commercial success?

Zaharia’s path from academic researcher to successful entrepreneur illustrates the power of university research labs as incubators for transformative technology. Apache Spark originated as his Ph.D. research project at UC Berkeley’s AMPLab, where the combination of academic rigor and real-world focus produced a system that was both theoretically innovative and practically useful. The academic environment allowed Zaharia to take the time to develop the right abstraction (RDDs) rather than rushing to market with an incremental improvement. The open-source release of Spark during his Ph.D. created a community of users and contributors before the company even existed, giving Databricks a pre-existing ecosystem to build on. His parallel academic career, at Stanford and later back at Berkeley, has kept his commercial work connected to current research, a feedback loop that has informed later Databricks projects such as Delta Lake and MLflow.

What is data-centric AI and why does Zaharia advocate for it?

Data-centric AI is an approach to machine learning that prioritizes improving the quality, labeling accuracy, and curation of training data over improving model architectures. Zaharia advocates for this approach based on empirical evidence that for many practical applications — particularly in industry settings where data is messy, incomplete, or inconsistently labeled — cleaning and improving the training data produces larger accuracy gains than switching to a more sophisticated model. This perspective is consistent with Zaharia’s broader philosophy of focusing on foundational infrastructure. Just as Spark improved data processing by rethinking the storage abstraction rather than optimizing algorithms, data-centric AI improves machine learning by rethinking the data pipeline rather than tweaking neural network architectures. Zaharia’s research group at Stanford has published extensively on tools and techniques for systematic data quality improvement, including methods for detecting labeling errors, measuring data quality, and curating training datasets at scale.