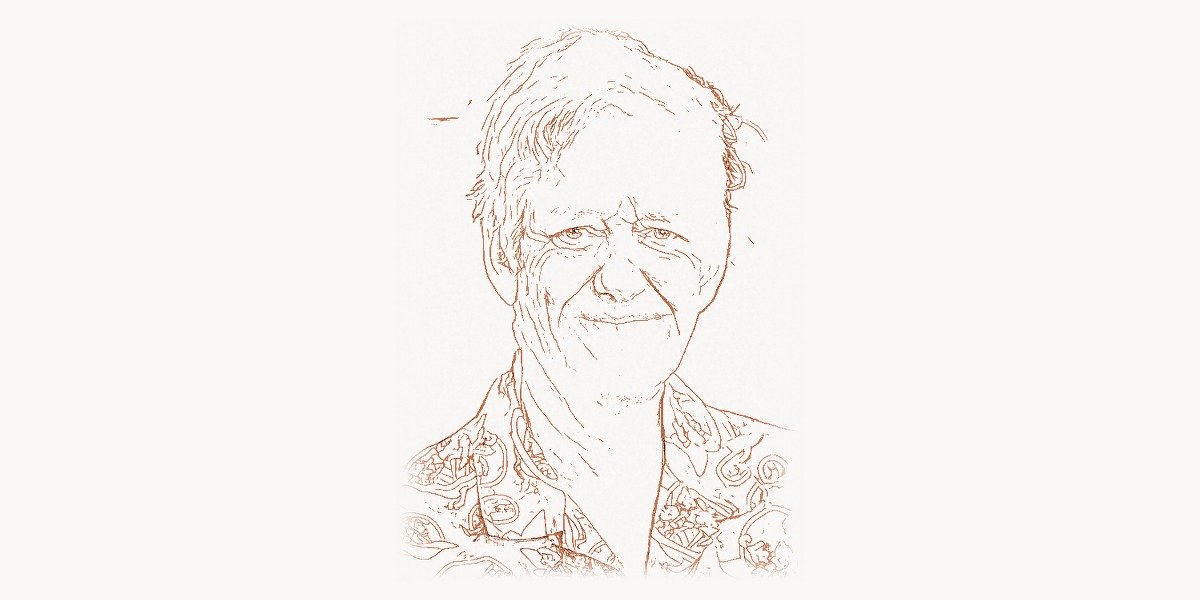

In 2011, Peter Norvig and Sebastian Thrun offered a free online course on artificial intelligence through Stanford University. They expected a few thousand sign-ups. They got 160,000 students from 190 countries. The course catalyzed the massive open online education movement, but for Norvig it was simply the latest chapter in a career built around a single conviction: that the best ideas in artificial intelligence should be accessible to everyone. As co-author of “Artificial Intelligence: A Modern Approach” — the textbook used by over 1,500 universities in 135 countries, with more than 500,000 copies sold — Peter Norvig has shaped how an entire generation of computer scientists thinks about AI. As Director of Research at Google, he oversaw teams working on machine translation, speech recognition, and computer vision at a scale that touched billions of users daily. As a NASA scientist, he helped launch autonomous software that flew aboard a deep space mission. And in his spare time, he wrote a 21-line Python program that became one of the most studied pieces of code in the history of programming education. Few figures in the history of computing have contributed as broadly across research, education, industry, and public understanding of artificial intelligence.

Early Life and Education

Peter Norvig was born on December 14, 1956, in the United States. He grew up as computing was spreading beyond specialized military and corporate settings and beginning to enter academic life more broadly. His early intellectual interests gravitated toward mathematics and logical systems, the kind of structured thinking that would eventually lead him to artificial intelligence.

Norvig earned his Bachelor of Science in applied mathematics from Brown University, where the interdisciplinary environment exposed him to both pure mathematical theory and its practical applications in computing. Brown’s emphasis on connecting disciplines would prove formative: Norvig’s later career would be defined by his ability to bridge theoretical AI research and large-scale practical systems.

He then pursued a Ph.D. in computer science at the University of California, Berkeley, completing his doctorate in 1986. Berkeley in the 1980s was a hotbed of AI research, and Norvig’s work there immersed him in the foundational problems of the field: knowledge representation, natural language understanding, and the challenge of getting machines to reason about the world. His dissertation, “A Unified Theory of Inference for Text Understanding,” examined how a computational system could draw the inferences needed to understand natural-language text, a problem that remains central to AI research today. Berkeley recognized his contributions two decades later with a distinguished alumni award in 2006.

After his doctorate, Norvig held positions at several institutions before joining Sun Microsystems Laboratories, where he worked on intelligent systems. His academic and industry work during this period reinforced a pattern that would define his entire career: he was never content to be purely a theorist or purely an engineer. He wanted to build things that worked while also understanding why they worked — a philosophical stance that would later put him at the center of one of AI’s most important intellectual debates.

The AI Textbook Breakthrough

Technical Innovation

In the early 1990s, the field of artificial intelligence was fractured. Different subcommunities — working on planning, machine learning, natural language processing, robotics, expert systems, and logic — used different formalisms, different terminology, and different evaluation methods. Graduate students entering the field had to piece together knowledge from dozens of specialized textbooks and papers, each covering only a narrow slice of AI. There was no unified framework that presented the entire field as a coherent discipline.

Peter Norvig and Stuart Russell set out to change that. Their textbook, “Artificial Intelligence: A Modern Approach,” first published in 1995, did something that no previous AI textbook had accomplished: it organized the entire field around the concept of intelligent agents. An agent perceives its environment and takes actions to maximize some performance measure. This deceptively simple framework unified search algorithms, logical reasoning, probabilistic inference, machine learning, natural language processing, robotics, and multi-agent systems under a single conceptual umbrella. Suddenly, a student could see how constraint satisfaction, Bayesian networks, and reinforcement learning were not unrelated topics but different aspects of the same fundamental problem.
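To make the agent abstraction concrete, here is a minimal Python sketch of a simple reflex agent in a two-square “vacuum world,” the kind of toy environment the textbook uses to introduce agents. The function names, the `"A"`/`"B"` locations, and the scoring rule (one point per clean square per time step) are illustrative assumptions, not code taken from the book.

```python
# Hypothetical sketch of the agent abstraction: a percept goes in, an action comes out.
# Names, locations, and the performance measure are illustrative assumptions.

def reflex_vacuum_agent(percept):
    """Map a (location, status) percept directly to an action."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


def run(agent, world, location, steps):
    """Run an agent in a two-square world and return its performance score.

    Assumed performance measure: +1 for each clean square at each time step.
    """
    score = 0
    for _ in range(steps):
        action = agent((location, world[location]))  # perceive, then act
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
        score += sum(1 for status in world.values() if status == "Clean")
    return score


if __name__ == "__main__":
    world = {"A": "Dirty", "B": "Dirty"}
    print(run(reflex_vacuum_agent, world, "A", steps=4), world)
```

Swapping in a different `agent` function changes the behavior without touching the environment or the performance measure, which is the separation the agent framework is built around.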

The technical depth was remarkable. The book covered classical AI topics like A* search — an algorithm with deep connections to the work of Edsger Dijkstra on shortest path algorithms — alongside emerging topics in probabilistic reasoning and machine learning. Russell and Norvig presented these subjects with mathematical rigor while maintaining accessibility, providing pseudocode implementations that could be translated into working programs. The book also included extensive historical context, connecting modern AI methods to their intellectual origins in logic, statistics, philosophy, and neuroscience.
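As an illustration of the kind of algorithm the book presents in pseudocode, here is a compact Python sketch of A* search. It assumes a graph given as a dictionary of `(neighbor, step_cost)` lists and a heuristic `h` that never overestimates the remaining cost; the toy graph and heuristic values are made up for the example. With `h` returning 0 everywhere, the same loop reduces to Dijkstra’s shortest-path algorithm.

```python
import heapq


def a_star(graph, start, goal, h):
    """A* search: always expand the frontier node with the lowest f = g + h.

    graph: dict mapping node -> list of (neighbor, step_cost)
    h: heuristic estimate of remaining cost (assumed admissible)
    Returns (path, cost), or (None, float("inf")) if goal is unreachable.
    """
    frontier = [(h(start), 0, start)]  # entries are (f, g, node)
    came_from = {start: None}
    best_g = {start: 0}
    while frontier:
        _, g, node = heapq.heappop(frontier)
        if node == goal:  # goal test on expansion keeps the result optimal
            path = []
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1], g
        if g > best_g[node]:
            continue  # stale entry: a cheaper path to node was found later
        for neighbor, cost in graph.get(node, []):
            new_g = g + cost
            if new_g < best_g.get(neighbor, float("inf")):
                best_g[neighbor] = new_g
                came_from[neighbor] = node
                heapq.heappush(frontier, (new_g + h(neighbor), new_g, neighbor))
    return None, float("inf")


# Toy example (made-up graph and heuristic values).
GRAPH = {
    "S": [("A", 1), ("B", 4)],
    "A": [("B", 2), ("C", 5)],
    "B": [("C", 1)],
    "C": [("G", 3)],
}
H = {"S": 6, "A": 5, "B": 3, "C": 2, "G": 0}

if __name__ == "__main__":
    print(a_star(GRAPH, "S", "G", H.get))
```

Skipping stale heap entries instead of deleting them is a common Python idiom; the textbook’s own pseudocode may organize the frontier differently.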

The fourth edition, published in 2020, expanded significantly to cover deep learning, transfer learning, multiagent systems, probabilistic programming, and crucial topics around fairness, privacy, and safe AI — reflecting how profoundly the field had evolved since the first edition.

Why It Mattered

The impact of “Artificial Intelligence: A Modern Approach” is difficult to overstate. With over 59,000 citations on Google Scholar and adoption at more than 1,500 universities across 135 countries, it became the definitive introduction to artificial intelligence for an entire generation of researchers and engineers. The textbook did not merely describe the field — it helped define it. By choosing which topics to include, how to frame them, and what connections to draw, Russell and Norvig influenced what AI meant to hundreds of thousands of students.

The agent-based framework had practical consequences. Students trained on the textbook entered industry and academia with a shared vocabulary and a shared way of decomposing problems. When a Google engineer, a robotics researcher at MIT, and a natural language processing specialist at a European university discussed AI systems, they could draw on common conceptual foundations laid by this book. In a field notorious for fragmentation and competing paradigms, this shared intellectual infrastructure was transformative. Just as John McCarthy’s foundational work on Lisp and AI established the field’s earliest paradigms, Norvig and Russell’s textbook created a unified language for discussing artificial intelligence in the modern era.

The book also set a standard for how AI should be taught. Rather than presenting algorithms as isolated techniques, it situated them within a framework of rational decision-making. This approach anticipated the rise of machine learning and data-driven AI: when deep learning exploded in the 2010s, students who had learned from AIMA, as the book is widely known, already had the probabilistic and optimization foundations needed to understand it. Through its successive editions, the textbook evolved alongside the field it helped create, a rare feat in academic publishing.

Other Contributions

While the textbook would have been enough to secure Norvig’s place in the history of computer science, his career extended far beyond academic publishing into some of the most ambitious projects in both government and industry.

At NASA’s Ames Research Center, Norvig served as head of the Computational Sciences Division and held the title of NASA’s senior computer scientist. His division developed the Remote Agent experiment, an autonomous planning and scheduling system that flew aboard NASA’s Deep Space 1 spacecraft. This was a landmark achievement: the first time autonomous AI software had controlled a spacecraft in deep space. The system could plan its own activities, monitor its own execution, and diagnose and recover from faults — all without human intervention from Earth. Remote Agent won the 1999 NASA Software of the Year award and was later cited in two presidential addresses at the Association for the Advancement of Artificial Intelligence as one of the most significant achievements in the history of AI. This was not laboratory AI — it was artificial intelligence operating 200 million kilometers from the nearest human operator, making decisions where a single failure could destroy a $150 million spacecraft. For this and other work, Norvig received NASA’s Exceptional Achievement Award in 2001.

In 2001, Norvig joined Google. He first served as Director of Search Quality from 2002 to 2005, where he was responsible for the core web search algorithms that billions of people relied on daily. This was a period of intense innovation at Google, as the company scaled from a promising search engine to the dominant information retrieval system on the planet. Norvig’s team worked on everything from ranking algorithms to spam detection to the infrastructure needed for rapid web indexing. In 2005, he became Director of Research, a role in which he oversaw Google’s efforts in machine translation, speech recognition, computer vision, and other AI applications. During this period, Google researchers such as Jeff Dean pushed the boundaries of what was possible with large-scale distributed computing and machine learning.

Perhaps Norvig’s most beloved individual contribution is his essay “How to Write a Spelling Corrector,” published on his personal website. In just 21 lines of Python, Norvig implemented a working spelling correction algorithm based on probability theory and edit distance. The code, shown here with short docstrings, is compact and elegant:

```python
import re
from collections import Counter

def words(text):
    """Extract all words from a text corpus."""
    return re.findall(r'\w+', text.lower())

# Build a probability model from a large corpus of text (big.txt)
WORDS = Counter(words(open('big.txt').read()))

def P(word, N=sum(WORDS.values())):
    """Probability of a word appearing in the corpus."""
    return WORDS[word] / N

def correction(word):
    """Most probable spelling correction for a word."""
    return max(candidates(word), key=P)

def candidates(word):
    """Generate possible spelling corrections for a word."""
    return (known([word]) or known(edits1(word))
            or known(edits2(word)) or [word])

def known(words):
    """The subset of words that appear in the dictionary."""
    return set(w for w in words if w in WORDS)

def edits1(word):
    """All edits that are one edit distance away from word."""
    letters = 'abcdefghijklmnopqrstuvwxyz'
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [L + R[1:] for L, R in splits if R]
    transposes = [L + R[1] + R[0] + R[2:] for L, R in splits if len(R) > 1]
    replaces = [L + c + R[1:] for L, R in splits if R for c in letters]
    inserts = [L + c + R for L, R in splits for c in letters]
    return set(deletes + transposes + replaces + inserts)

def edits2(word):
    """All edits that are two edits away from word."""
    return (e2 for e1 in edits1(word) for e2 in edits1(e1))
```

This program demonstrates Norvig’s core philosophy in miniature: start with a clear probabilistic model, implement it in the simplest possible code, and evaluate it against real data. The spelling corrector uses a word frequency model built from a large text corpus. When given a word, it looks for candidates in tiers: the word itself if it appears in the corpus, then known words one edit operation away (a deletion, transposition, replacement, or insertion), and only then known words two edits away. Among the candidates in the first non-empty tier, it selects the one with the highest probability of being the intended word. The essay became one of the most widely referenced programming tutorials on the internet, inspiring reimplementations in dozens of languages and teaching countless programmers about probabilistic thinking, algorithmic elegance, and the power of data-driven approaches.
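A quick way to see why the candidate tiers and the `known` filter matter is to count what `edits1` produces. For a word of length n ≥ 1, the split-based construction yields n deletions, n − 1 transpositions, 26n replacements, and 26(n + 1) insertions, or 54n + 25 raw strings before duplicates are removed; `edits2` then applies the same step to every one of those strings. The sketch below reuses the same list comprehensions in `edit_counts`, a hypothetical helper written only for this illustration.

```python
import string


def edit_counts(word):
    """Return (raw, distinct) counts of the strings edits1 would build for `word`.

    Hypothetical helper for illustration; it is not part of the original program.
    """
    letters = string.ascii_lowercase
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [L + R[1:] for L, R in splits if R]                            # n
    transposes = [L + R[1] + R[0] + R[2:] for L, R in splits if len(R) > 1]  # n - 1
    replaces = [L + c + R[1:] for L, R in splits if R for c in letters]      # 26n
    inserts = [L + c + R for L, R in splits for c in letters]                # 26(n + 1)
    raw = len(deletes) + len(transposes) + len(replaces) + len(inserts)
    distinct = len(set(deletes + transposes + replaces + inserts))
    return raw, distinct


if __name__ == "__main__":
    for w in ["a", "somthing"]:
        raw, distinct = edit_counts(w)
        print(w, raw, 54 * len(w) + 25, distinct)
```

The gap between the raw and distinct counts comes from operations that happen to produce the same string (for example, replacing a letter with itself reproduces the original word), which is why `edits1` returns a set.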

Norvig’s impact on online education also deserves special mention. The Stanford AI course he co-taught with Sebastian Thrun in 2011, which attracted 160,000 students, was not just a successful experiment — it was one of the triggering events for the entire MOOC (Massive Open Online Course) movement. Thrun went on to found Udacity, and the demonstrated demand for high-quality online education influenced the creation of Coursera, edX, and dozens of other platforms. Norvig later worked with the Khan Academy and continued to develop educational materials, consistent with his lifelong commitment to making knowledge accessible.

Philosophy and Approach

Norvig’s intellectual contributions extend beyond code and textbooks into fundamental questions about how artificial intelligence should be understood and practiced. His most significant philosophical statement came in 2011 with his essay “On Chomsky and the Two Cultures of Statistical Learning,” a response to Noam Chomsky’s criticisms of statistical approaches to language and cognition.

Chomsky had argued that statistical methods in AI and computational linguistics amount to sophisticated curve-fitting — that systems which produce correct behavior without modeling the underlying generative mechanisms are not doing real science. In Chomsky’s view, a language model that accurately predicts the next word in a sentence without representing the deep grammatical structure of language has achieved engineering success but scientific failure.

Norvig’s response was nuanced and historically grounded. He argued that the relationship between engineering success and scientific understanding is more complex than Chomsky suggested. Statistical language models, Norvig pointed out, are used by hundreds of millions of people daily and have come to dominate the field of computational linguistics, not because researchers abandoned scientific rigor, but because the statistical approach consistently produced better results in practice. Science and engineering develop together, and engineering success provides evidence that something fundamental is being captured correctly.

This debate anticipated one of the central tensions in modern AI: the conflict between interpretability and performance. Today’s large language models, built on the statistical foundations that Norvig defended, achieve remarkable performance on tasks from translation to reasoning — but their internal representations remain difficult to interpret. The philosophical questions that Norvig engaged with remain deeply relevant as AI systems become more powerful and more opaque.

Key Principles

Several core principles emerge from Norvig’s body of work. First, the primacy of data: Norvig consistently argued that large amounts of real-world data, combined with relatively simple algorithms, often outperform sophisticated algorithms trained on small datasets. His 2009 article with Alon Halevy and Fernando Pereira, “The Unreasonable Effectiveness of Data,” made this case systematically and influenced the entire field’s trajectory toward data-centric AI.

Second, simplicity as a design principle. The spelling corrector essay is the purest expression of this: solve the problem in as little code as clarity allows, then evaluate rigorously. This is not simplicity for its own sake but a methodological stance: simple implementations expose the essential structure of a problem and make it possible to reason about what is and is not working. Norvig’s choice of Python, the language Guido van Rossum designed with readability in mind, helped make these demonstrations so accessible.

Third, accessibility. From the textbook to the MOOC to the dozens of essays and Jupyter notebooks on his personal website, Norvig has consistently invested enormous effort in explaining complex ideas clearly. His website, norvig.com, is a repository of educational essays on topics ranging from writing spelling correctors to solving every Sudoku puzzle to analyzing the performance of autocomplete algorithms. Each essay follows the same pattern: state the problem clearly, build a solution incrementally, and evaluate it with real data.

Finally, intellectual honesty. Norvig has always been forthright about the limitations of AI systems and about what is and is not known. In the Chomsky debate, he did not simply dismiss the opposing view; he engaged with it carefully and acknowledged where statistical approaches fall short. This intellectual rigor runs through all his work and has earned him respect across the many subcommunities of AI research.

Legacy

Peter Norvig’s legacy operates on multiple levels simultaneously. At the most concrete level, the textbook continues to train new generations of AI researchers and practitioners. Every year, tens of thousands of students worldwide encounter artificial intelligence for the first time through the agent-based framework that Norvig and Russell designed. The conceptual vocabulary of the textbook — utility functions, search spaces, Bayesian inference, Markov decision processes — has become the shared language of the field.

At the industry level, Norvig’s work at Google helped establish the model that defines modern technology companies: organizations where fundamental research and product development are deeply intertwined, where breakthroughs in machine learning translate directly into improvements in products used by billions of people. The research infrastructure he helped build at Google became a template that other technology companies aspired to replicate.

At NASA, the Remote Agent experiment demonstrated that artificial intelligence could operate autonomously in the most demanding environments imaginable. This work laid conceptual groundwork for the increasingly autonomous systems that now guide Mars rovers, manage satellite constellations, and will eventually help humans explore deeper into the solar system.

At the philosophical level, Norvig’s contributions to the debate about statistical versus symbolic approaches helped the field navigate one of its most important intellectual transitions. His defense of data-driven methods — nuanced, empirical, and respectful of opposing views — provided intellectual cover for an entire generation of researchers who were building the machine learning systems that would eventually transform the world. The approach he advocated — let the data speak, build simple systems, evaluate rigorously — became the default methodology of modern AI research.

His impact on education extends beyond any single course or textbook. Norvig demonstrated that world-class technical education could be delivered at scale, for free, to anyone with an internet connection. The 160,000-student AI course was a proof of concept that changed how universities, governments, and companies think about education. Combined with his personal website’s treasure trove of essays, code, and tutorials, Norvig created a body of freely accessible educational material that rivals many institutional curricula. In the lineage of computing pioneers who believed in the democratization of knowledge — from Alan Turing’s foundational theoretical work to Tim Berners-Lee’s invention of the World Wide Web to Linus Torvalds’ open-source revolution — Peter Norvig stands as one of the most effective advocates for open access to knowledge in the history of computing.

He is a Fellow of the Association for the Advancement of Artificial Intelligence, the Association for Computing Machinery, and the American Academy of Arts and Sciences. He currently serves as a Distinguished Education Fellow at Stanford’s Institute for Human-Centered Artificial Intelligence, where he continues to work on the intersection of AI research and education, the two threads that have defined his extraordinary career.

Key Facts

- Full name: Peter Norvig

- Born: December 14, 1956

- Education: B.S. in Applied Mathematics from Brown University; Ph.D. in Computer Science from UC Berkeley (1986)

- Known for: Co-authoring “Artificial Intelligence: A Modern Approach,” the world’s most widely used AI textbook

- Key roles: Director of Research at Google; Head of Computational Sciences Division at NASA Ames; Distinguished Education Fellow at Stanford HAI

- Notable achievement: Remote Agent — first autonomous AI system to control a spacecraft in deep space (Deep Space 1, 1999)

- Famous essay: “How to Write a Spelling Corrector” — a functional spell checker in 21 lines of Python

- Education impact: Co-taught free Stanford AI course with 160,000 students (2011), catalyzing the MOOC movement

- Awards: NASA Exceptional Achievement Award (2001), AAAI Fellow, ACM Fellow, member of the American Academy of Arts and Sciences

- Textbook stats: Over 500,000 copies sold, 1,500+ universities, 135 countries, 59,000+ citations

Frequently Asked Questions

What makes “Artificial Intelligence: A Modern Approach” the most widely used AI textbook?

The textbook succeeded because of its unifying framework: it organizes the entire field of AI around the concept of intelligent agents — systems that perceive their environment and take actions to maximize a performance measure. Before AIMA, AI education was fragmented across specialized textbooks covering isolated topics like search, logic, or machine learning. Russell and Norvig showed that these were all facets of the same underlying problem. The book combines mathematical rigor with accessibility, provides pseudocode that can be translated into working programs, and includes extensive historical and philosophical context. Its four editions, spanning from 1995 to 2020, have consistently evolved to incorporate new developments like deep learning, probabilistic programming, and AI safety, keeping it relevant as the field has transformed. No other AI textbook has achieved this combination of breadth, depth, and longevity.

What was Peter Norvig’s role at Google?

Norvig joined Google in 2001 and served in two major roles. From 2002 to 2005, he was Director of Search Quality, responsible for the core algorithms that powered Google’s web search, the product that billions of people used daily to find information. This role put him at the center of one of the most technically demanding challenges in computing: ranking the relevance of billions of web pages in fractions of a second. From 2005 onward, he was Director of Research, overseeing Google’s research efforts across machine translation, speech recognition, computer vision, and other AI applications. In this role, he helped build the research infrastructure that kept Google at the forefront of AI development, an organization that grew to include researchers such as Geoffrey Hinton, who joined the company in 2013 after his pioneering work in deep learning.

How does Peter Norvig’s spelling corrector work?

Norvig’s spelling corrector, described in his essay “How to Write a Spelling Corrector,” uses a probabilistic approach. It first builds a frequency model by counting every word in a large text corpus. When given a potentially misspelled word, it generates possible “corrections” that are one or two edit operations away, where an edit is a deletion, replacement, or insertion of a single character, or a transposition of two adjacent characters. It keeps only candidates that appear in the corpus and considers them in tiers: the word itself if it is known, then known words one edit away, and only then known words two edits away. Within the first non-empty tier, it selects the candidate with the highest frequency (probability). The key insight is that most spelling errors are within two edits of the intended word, and word frequency serves as an excellent proxy for what the user probably meant. The entire implementation fits in about 21 lines of Python, demonstrating Norvig’s philosophy that elegant, data-driven solutions often outperform complex rule-based systems.
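The selection rule can also be written compactly. In the notation below, which is ours rather than the essay’s, V is the set of words seen in the corpus, count(c) is how often word c appears, N is the total number of word tokens, and E₁(w) and E₂(w) are the strings reachable from w by one and by two edits. The tiers are tried in order, mirroring the program’s `candidates` and `P` functions.

$$
\operatorname{correction}(w) = \arg\max_{c \in C(w)} P(c), \qquad P(c) = \frac{\operatorname{count}(c)}{N}
$$

$$
C(w) =
\begin{cases}
\{w\} & \text{if } w \in V \\
E_1(w) \cap V & \text{else if } E_1(w) \cap V \neq \varnothing \\
E_2(w) \cap V & \text{else if } E_2(w) \cap V \neq \varnothing \\
\{w\} & \text{otherwise}
\end{cases}
$$

In the last case the only candidate is w itself, so an unrecognized word with no known word nearby is returned unchanged.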

What is Peter Norvig’s position in the debate about statistical versus symbolic AI?

Norvig is a prominent advocate for statistical and data-driven approaches to AI, most clearly articulated in his 2011 essay “On Chomsky and the Two Cultures of Statistical Learning.” In response to Noam Chomsky’s criticism that statistical language models achieve engineering success without scientific understanding, Norvig argued that science and engineering develop together and that the success of statistical methods provides genuine evidence about the structure of the problems being solved. He pointed out that statistical approaches had come to dominate computational linguistics because they consistently produced better results, and that dismissing these results as “mere engineering” ignores how scientific progress actually works. However, Norvig’s position is nuanced — he acknowledges that understanding and explanation are important scientific goals, not just prediction. His philosophical stance influenced how the field understood the shift toward machine learning and eventually deep learning as the dominant paradigm in AI research.