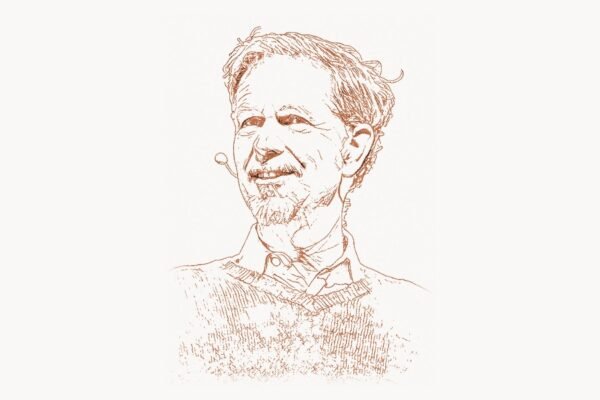

In the world of artificial intelligence, there is a persistent gap between what algorithms can learn in simulation and what robots can actually do in the physical world. Bridging that gap — teaching machines to interact with messy, unpredictable reality — has been one of the hardest challenges in computer science. Pieter Abbeel, a professor at UC Berkeley and co-founder of Covariant, has spent more than two decades attacking this exact problem. His pioneering work in robot learning from demonstration, deep reinforcement learning for robotics, and AI-driven manipulation has fundamentally reshaped how we think about training machines to act in the real world. Where others saw an intractable divide between digital intelligence and physical competence, Abbeel built the bridges that let robots learn by watching, practicing, and adapting.

Early Life and Education

Pieter Abbeel was born in 1977 in Belgium, where he developed an early fascination with mathematics and engineering. He pursued his undergraduate studies at KU Leuven, one of Europe’s leading research universities, earning a degree in electrical engineering. His academic trajectory took a decisive turn when he moved to the United States to pursue graduate work at Stanford University, where he joined the lab of Andrew Ng.

At Stanford, Abbeel found himself at the epicenter of a revolution in machine learning that was just beginning to take shape. Working under Andrew Ng, he was exposed to the idea that robots could learn complex behaviors not through hand-crafted rules but through observation and experience. His doctoral dissertation on apprenticeship learning and inverse reinforcement learning, completed in 2008, laid the conceptual groundwork for much of his later career. The core insight was deceptively simple but technically profound: instead of programming a robot to perform a task step by step, you could have it watch a human expert and learn the underlying reward function that motivated the expert’s behavior.

After completing his PhD, Abbeel joined the faculty at the University of California, Berkeley, where he established the Berkeley Robot Learning Lab. This would become one of the most influential robotics research groups in the world, producing a stream of breakthrough results and training a generation of researchers who now lead labs and companies across the AI landscape.

The Robot Learning from Demonstration Breakthrough

Technical Innovation

Abbeel’s most transformative contribution lies in developing practical, scalable methods for robots to learn from human demonstrations. Before his work, robotics was largely dominated by two paradigms: classical control theory, which required explicit mathematical models of every aspect of a robot’s environment, and early reinforcement learning, which required astronomical amounts of trial-and-error interaction. Both approaches hit walls when confronted with the complexity and variability of real-world tasks.

Abbeel’s approach, rooted in apprenticeship learning and inverse reinforcement learning (IRL), introduced a third path. The key idea behind IRL is that when a human expert performs a task — say, a helicopter pilot executing an aerobatic maneuver — they are implicitly optimizing some internal reward function. Rather than trying to directly copy the expert’s actions, the algorithm infers what objective the expert was trying to achieve, then uses that learned objective to generate its own optimal behavior. This subtle shift from imitation to understanding proved enormously powerful.
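To make “infers the objective” concrete, here is the linear-reward, feature-matching formulation commonly used in apprenticeship learning. It uses the same discounted feature expectations that the `feature_expectations` and `compute_reward` methods in the code example later in this section compute. The assumptions are that the reward is linear in known features φ with unknown weights w of bounded norm, and that μ_E denotes the expert’s feature expectations:

$$
R(s, a) = w^\top \phi(s, a), \qquad \|w\|_2 \le 1
$$

$$
\mu(\pi) = \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^{t}\, \phi(s_t, a_t) \,\middle|\, \pi\right],
\qquad
V_w(\pi) = \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^{t}\, R(s_t, a_t) \,\middle|\, \pi\right] = w^\top \mu(\pi)
$$

$$
\left|V_w(\pi) - V_w(\pi_E)\right| = \left|w^\top\big(\mu(\pi) - \mu_E\big)\right| \le \|w\|_2 \,\|\mu(\pi) - \mu_E\|_2 \le \epsilon
\quad \text{whenever } \|\mu(\pi) - \mu_E\|_2 \le \epsilon
$$

The last line shows why feature matching is enough. By the Cauchy–Schwarz inequality, a policy whose feature expectations are within ε of the expert’s earns a return within ε of the expert’s under every admissible reward, including the unknown true one.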

One of the most dramatic early demonstrations was the autonomous helicopter project, carried out in Andrew Ng’s group at Stanford. Abbeel and his colleagues trained a robotic helicopter to perform aerobatic maneuvers, including tic-tocs, chaos, and inverted hovering, by learning from expert human pilots. The system first observed the pilot’s demonstrations, extracted the implicit reward function, and then used reinforcement learning to find a policy that maximized that reward within the robot’s own dynamics. The result was a helicopter that could execute maneuvers at a level matching, and in some cases exceeding, that of the human pilot.

```python
import numpy as np


class InverseRLAgent:
    """
    Simplified inverse reinforcement learning agent.

    Learns the weights w of a linear reward R(s, a) = w . phi(s, a) from
    expert demonstrations by matching feature expectations. Optimizing a
    policy against the learned reward is a separate step (e.g., an RL or
    planning routine) and is not implemented in this class.
    """

    def __init__(self, state_dim, action_dim, n_features):
        # Small random initial reward weights.
        self.weights = np.random.randn(n_features) * 0.01
        self.state_dim = state_dim
        self.action_dim = action_dim

    def feature_expectations(self, trajectories, feature_fn, gamma=0.99):
        """Compute discounted feature counts averaged over trajectories.

        Each trajectory is a sequence of (state, action) pairs, and
        feature_fn(state, action) must return a length-n_features vector.
        """
        mu = np.zeros(len(self.weights))
        for trajectory in trajectories:
            for t, (state, action) in enumerate(trajectory):
                mu += (gamma ** t) * np.asarray(feature_fn(state, action), dtype=float)
        return mu / len(trajectories)

    def update_reward(self, expert_mu, agent_mu, lr=0.01):
        """
        Gradient step: adjust reward weights so that the expert's
        feature expectations are preferred over the agent's.
        """
        gradient = expert_mu - agent_mu
        self.weights += lr * gradient
        # Renormalize to unit L2 norm so the reward scale stays fixed.
        self.weights /= np.linalg.norm(self.weights) + 1e-8
        return self.weights

    def compute_reward(self, state, action, feature_fn):
        """Evaluate the learned reward for a given state-action pair."""
        features = feature_fn(state, action)
        return np.dot(self.weights, features)
```
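The class above only learns reward weights. The full loop that the helicopter paragraph describes (infer a reward, optimize a policy against it, compare with the expert, repeat) can be sketched end to end on a toy problem. Everything here is hypothetical: a five-state chain, one-hot state features, an expert that always moves right, value iteration standing in for the RL step, and a simplified update that sets w to the normalized gap between expert and learner feature expectations. Abbeel and Ng’s projection method is more careful, since it mixes earlier policies. The sketch uses pure Python, so no NumPy is needed.

```python
import math

# Hypothetical toy problem: a 5-state chain with deterministic moves.
N_STATES = 5
ACTIONS = (-1, +1)  # move left or right
GAMMA = 0.9
HORIZON = 50        # truncation of the discounted sums


def step(state, action):
    """Move along the chain, staying inside [0, N_STATES - 1]."""
    return min(max(state + action, 0), N_STATES - 1)


def features(state):
    """One-hot state features, so the reward is R(s) = w[s]."""
    phi = [0.0] * N_STATES
    phi[state] = 1.0
    return phi


def feature_expectations(policy, start=0):
    """Discounted feature counts of a deterministic policy (list: state -> action)."""
    mu = [0.0] * N_STATES
    s = start
    for t in range(HORIZON):
        for i, f in enumerate(features(s)):
            mu[i] += (GAMMA ** t) * f
        s = step(s, policy[s])
    return mu


def optimal_policy(w, iters=200):
    """Value iteration under R(s) = w . phi(s), then act greedily."""
    values = [0.0] * N_STATES
    for _ in range(iters):
        values = [
            sum(wi * fi for wi, fi in zip(w, features(s)))
            + GAMMA * max(values[step(s, a)] for a in ACTIONS)
            for s in range(N_STATES)
        ]
    return [max(ACTIONS, key=lambda a: values[step(s, a)]) for s in range(N_STATES)]


def gap_to_expert(mu_expert, policy):
    """Euclidean distance between expert and learner feature expectations."""
    diff = [e - m for e, m in zip(mu_expert, feature_expectations(policy))]
    return math.sqrt(sum(d * d for d in diff)), diff


def apprenticeship_learning(expert_policy, max_iters=10, eps=1e-6):
    """Simplified loop: infer w, solve for the best policy under w, repeat."""
    mu_expert = feature_expectations(expert_policy)
    policy = [-1] * N_STATES  # initial learner: always move left
    w = [0.0] * N_STATES
    for _ in range(max_iters):
        gap, diff = gap_to_expert(mu_expert, policy)
        if gap < eps:
            return policy, w, gap
        w = [d / gap for d in diff]  # unit-norm reward favoring expert features
        policy = optimal_policy(w)   # planning step (stands in for RL here)
    return policy, w, gap_to_expert(mu_expert, policy)[0]
```

On this toy chain, `apprenticeship_learning([+1] * N_STATES)` recovers the always-move-right policy after a single reward update. The inferred weights are negative on the start state and positive on the goal state, which is the kind of "why" that pure action copying never represents.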

Why It Mattered

The significance of this work extended far beyond helicopters. By demonstrating that complex robotic behaviors could be acquired through demonstration rather than manual engineering, Abbeel opened a pathway that has since been pursued in many domains, including surgical robotics, warehouse automation, autonomous driving, and household manipulation. The approach addressed one of the most fundamental bottlenecks in robotics: a “programming barrier” in which every new task required a human engineer to spend weeks or months writing specialized control code.

This work also connected naturally to the deep learning revolution that was transforming the rest of AI. As Geoffrey Hinton, Yann LeCun, and Yoshua Bengio were showing that deep neural networks could learn rich representations from raw data in vision and language, Abbeel was among the first to demonstrate that these same architectures could be harnessed for robotic control — connecting perception directly to action in the physical world.

Other Major Contributions

While robot learning from demonstration remains his signature contribution, Abbeel’s research portfolio spans a remarkable breadth of topics, each pushing the frontier of what machines can do in the physical world.

Deep Reinforcement Learning for Robotics. In the mid-2010s, Abbeel’s group at Berkeley produced a series of landmark papers on applying deep reinforcement learning to robotic manipulation and control. Building on the foundational work of David Silver and the DeepMind team on game-playing agents, Abbeel showed that similar principles could be extended to continuous control problems with physical robots. His group’s work on guided policy search, trust region policy optimization (TRPO), and generalized advantage estimation (GAE) provided practical, stable algorithms for continuous control. This was an essential step toward deep RL on real robotic systems, where sample efficiency is critical because every interaction with the physical world takes real time and incurs real costs.
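As one concrete piece of that toolkit, generalized advantage estimation blends one-step temporal-difference errors over many horizons using a parameter λ. Below is a minimal pure-Python sketch. It assumes a single finite trajectory and value estimates supplied by some critic. The function name and argument layout are illustrative and do not come from any particular library.

```python
def generalized_advantage_estimates(rewards, values, gamma=0.99, lam=0.95):
    """Compute GAE(gamma, lambda) advantages for one finite trajectory.

    rewards: r_0 .. r_{T-1}
    values:  V(s_0) .. V(s_T); one more entry than rewards. Pass 0.0 for
             V(s_T) if the episode terminated.
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values must have exactly one more entry than rewards")
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # TD residual: delta_t = r_t + gamma * V(s_{t+1}) - V(s_t)
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Recursive form of the sum: A_t = delta_t + gamma * lam * A_{t+1}
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages
```

With λ = 0 this reduces to the one-step TD error. With λ = 1 it becomes the discounted return (bootstrapped with V(s_T)) minus the baseline V(s_t). Intermediate values trade a little bias for much lower variance.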

Sim-to-Real Transfer. One of Abbeel’s most impactful lines of research tackled the “reality gap”: policies trained in simulation often fail catastrophically when deployed on real hardware. His work with collaborators on domain randomization demonstrated that by training a policy across a wide distribution of simulated environments, with randomized physics, textures, and lighting, the resulting policy could be robust enough to transfer directly to the real world. The technique has since been widely adopted across robotics research and industry.
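The randomization step described above amounts to re-sampling simulator settings before every training episode. Below is a minimal sketch. It assumes a simulator whose physics and rendering parameters can be set per episode. `SimParams`, the parameter names, and the ranges are hypothetical placeholders, not values from Abbeel’s work.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    """Hypothetical simulator parameters re-sampled every episode."""
    friction: float
    object_mass_kg: float
    light_intensity: float
    camera_yaw_deg: float
    texture_id: int


# Illustrative ranges only; real ranges are chosen per robot and task.
RANGES = {
    "friction": (0.5, 1.5),
    "object_mass_kg": (0.05, 2.0),
    "light_intensity": (0.3, 1.7),
    "camera_yaw_deg": (-10.0, 10.0),
}
N_TEXTURES = 500


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one randomized environment configuration."""
    return SimParams(
        friction=rng.uniform(*RANGES["friction"]),
        object_mass_kg=rng.uniform(*RANGES["object_mass_kg"]),
        light_intensity=rng.uniform(*RANGES["light_intensity"]),
        camera_yaw_deg=rng.uniform(*RANGES["camera_yaw_deg"]),
        texture_id=rng.randrange(N_TEXTURES),
    )


def training_episodes(n_episodes: int, seed: int = 0):
    """Yield a fresh randomized configuration for each training episode.

    In a real pipeline each configuration would be applied to the simulator
    before rolling out and updating the policy; that part is omitted here.
    """
    rng = random.Random(seed)
    for _ in range(n_episodes):
        yield sample_sim_params(rng)
```

Each episode sees a different draw, so the policy can only score well on average by relying on cues that hold across the whole range. That property is what lets it tolerate the real world’s unknown parameter values.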

Meta-Learning for Robotics. Abbeel also made foundational contributions to meta-learning, the idea of “learning to learn.” His research on model-agnostic meta-learning (MAML) and related methods showed that a robot could be trained on a distribution of tasks so that it could rapidly adapt to a new, unseen task from only a handful of examples or demonstrations. This work, much of it done in collaboration with his students including Chelsea Finn, has become a cornerstone of modern few-shot learning research.
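MAML’s core update can be written out exactly for a deliberately tiny, hypothetical problem: a one-parameter model ŷ = θx and a family of regression tasks y = ax with different slopes a. This toy only illustrates the meta-gradient and is not the robotics setup from the research. The task family, step sizes (`alpha` for the inner step, `beta` for the outer step), and function names are assumptions. Because the model has a single parameter, the second-order term that MAML differentiates through is just a scalar, so no autodiff library is needed.

```python
import random


def mse_and_grad(theta, xs, ys):
    """Loss mean((theta*x - y)^2) and its derivative with respect to theta."""
    n = len(xs)
    loss = sum((theta * x - y) ** 2 for x, y in zip(xs, ys)) / n
    grad = sum(2 * x * (theta * x - y) for x, y in zip(xs, ys)) / n
    return loss, grad


def sample_task(rng, n=10):
    """Hypothetical task family: y = a * x with a task-specific slope a."""
    a = rng.uniform(1.0, 3.0)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return xs, [a * x for x in xs]

    return draw(), draw()  # (support set for adapting, query set for scoring)


def maml_meta_gradient(theta, task, alpha):
    """Exact MAML meta-gradient for the scalar model y_hat = theta * x.

    Inner step: theta' = theta - alpha * dL_support/dtheta.
    Since d^2 L_support / dtheta^2 = 2 * mean(x^2), the chain rule gives
    dL_query(theta')/dtheta = L_query'(theta') * (1 - alpha * 2 * mean(x^2)).
    """
    (xs_s, ys_s), (xs_q, ys_q) = task
    _, g_support = mse_and_grad(theta, xs_s, ys_s)
    theta_adapted = theta - alpha * g_support
    _, g_query = mse_and_grad(theta_adapted, xs_q, ys_q)
    curvature = 2 * sum(x * x for x in xs_s) / len(xs_s)
    return g_query * (1 - alpha * curvature)


def meta_train(steps=2000, alpha=0.5, beta=0.05, seed=0):
    """Outer loop: SGD on the post-adaptation query loss, one task per step."""
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(steps):
        theta -= beta * maml_meta_gradient(theta, sample_task(rng), alpha)
    return theta
```

In this setup the meta-learned θ should settle near the average slope, the starting point from which one inner gradient step does best on a new task. Dropping the `(1 - alpha * curvature)` factor gives the cheaper first-order approximation (often called FOMAML).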

Covariant. In 2017, Abbeel co-founded Covariant (originally known as Embodied Intelligence) to bring his research into industrial application. The company develops AI-powered robotic systems for warehouse and logistics automation, using deep learning to enable robots to pick and place a vast variety of objects, a task that seems simple for humans but has been notoriously difficult for machines. Covariant’s approach, which learns from both simulation and real-world interaction, represents the most direct commercialization of Abbeel’s academic research.

```python
import torch
import torch.nn as nn


class DomainRandomizedPolicy(nn.Module):
    """
    A policy network trained with domain randomization
    for sim-to-real transfer in robotic manipulation.

    The network itself is an ordinary MLP; the randomization of physics
    and visual parameters happens in the simulator during training.
    """

    def __init__(self, obs_dim, action_dim, hidden=256):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(obs_dim, hidden),
            nn.LayerNorm(hidden),
            nn.ReLU(),
            nn.Linear(hidden, hidden),
            nn.LayerNorm(hidden),
            nn.ReLU(),
        )
        self.policy_head = nn.Sequential(
            nn.Linear(hidden, hidden // 2),
            nn.ReLU(),
            nn.Linear(hidden // 2, action_dim),
            nn.Tanh(),  # Bounded continuous actions in [-1, 1]
        )

    def forward(self, observation):
        """
        Maps an observation vector (collected under randomized
        visual/physics params during training) to a continuous
        action vector for robot control.
        """
        features = self.encoder(observation)
        action = self.policy_head(features)
        return action

    def act(self, observation, noise_std=0.1):
        """Select an action with Gaussian exploration noise for training."""
        with torch.no_grad():
            mean_action = self.forward(observation)
            noise = torch.randn_like(mean_action) * noise_std
            # Keep the noisy action inside the same [-1, 1] range as Tanh.
            return torch.clamp(mean_action + noise, -1.0, 1.0)
```

Education and MOOC Contributions. Like his former advisor Andrew Ng, Abbeel has been deeply committed to education. He co-created Berkeley’s highly influential courses on robotics and deep reinforcement learning, and his lectures — many of which are freely available online — have become standard resources for students and practitioners worldwide. His teaching has helped democratize access to cutting-edge robotics knowledge.

Philosophy and Approach

Abbeel’s research philosophy is defined by a relentless focus on real-world impact and a deep respect for the difficulty of physical interaction. Unlike researchers who are content to demonstrate results in simulation or on curated benchmarks, Abbeel has consistently pushed for deployment on actual robotic hardware, accepting the messiness and frustration this entails.

Key Principles

- Reality as the ultimate benchmark. Abbeel has repeatedly emphasized that the true test of a robotics algorithm is whether it works on a physical robot in an unstructured environment. Simulated results, while useful for development, are never sufficient. This philosophy has driven his group to maintain extensive hardware labs and to always validate ideas on real systems.

- Learning over engineering. Rather than hand-designing solutions for each new task, Abbeel advocates for general-purpose learning algorithms that can be applied across domains. This conviction — that learning is more scalable than engineering — runs through all his work, from apprenticeship learning to meta-learning.

- Bridging academia and industry. Abbeel is unusual among top academic researchers in his willingness to engage seriously with industrial applications. Through Covariant, he has confronted the challenges of deploying AI at scale in warehouses and factories, gaining insights that feed back into his academic research.

- Sample efficiency matters. In the real world, you cannot run millions of training episodes the way you can in a video game. Abbeel’s group has consistently prioritized algorithms that learn from limited data — whether through demonstrations, sim-to-real transfer, or meta-learning — because sample efficiency is what separates laboratory curiosities from practical systems.

- Collaboration and mentorship. Abbeel has mentored dozens of PhD students and postdocs who have gone on to leading positions at companies like Google, Meta, OpenAI, and Tesla. He views the training of the next generation of researchers as an integral part of his mission, not a secondary activity.

Legacy and Impact

Pieter Abbeel’s influence on the field of robotics and AI is difficult to overstate. He arrived at a moment when robotics was largely disconnected from the machine learning revolution, and through his research, teaching, and entrepreneurship, he helped forge the connection that now defines the field.

His work on learning from demonstration showed that robots need not be programmed task by task but could instead acquire skills by observing humans, a paradigm shift that has influenced much of modern robotics. His contributions to deep reinforcement learning for continuous control, alongside collaborators such as Sergey Levine and the teams at DeepMind, helped establish the toolkit that today’s robotics engineers use daily. His work on sim-to-real transfer and domain randomization has become foundational methodology across the industry.

The researchers trained in his lab form a network that spans the most important AI organizations in the world. Chelsea Finn, Rocky Duan, John Schulman (co-creator of PPO and co-founder of OpenAI), and many others spent formative years in Abbeel’s group. This intellectual lineage, which connects the theoretical insights of researchers like Jürgen Schmidhuber and the deep learning pioneers to practical robotic systems, represents one of Abbeel’s most lasting contributions.

Through Covariant, Abbeel has also demonstrated that academic robotics research can translate into viable commercial products. The company’s AI-powered robotic picking systems operate in real warehouses, handling thousands of different products with a reliability that would have been unthinkable a decade ago. This successful translation from lab to factory floor validates Abbeel’s core thesis: that general-purpose learning algorithms, not task-specific engineering, are the key to scalable robotic intelligence.

As the field moves toward foundation models for robotics — large-scale models trained on diverse data that can generalize across tasks — Abbeel’s decades of work on learning, transfer, and adaptation position him as one of the most important voices shaping the future of embodied AI.

Key Facts

- Full name: Pieter Abbeel

- Born: 1977, Belgium

- Education: B.S./M.S. in Electrical Engineering from KU Leuven; Ph.D. from Stanford University (advisor: Andrew Ng)

- Primary role: Professor of Electrical Engineering and Computer Sciences at UC Berkeley

- Company founded: Covariant (2017), AI-powered robotics for warehouse automation

- Key research areas: Robot learning from demonstration, inverse reinforcement learning, deep reinforcement learning, sim-to-real transfer, meta-learning

- Notable students: Chelsea Finn, John Schulman, Rocky (Yan) Duan, among many others

- Awards: NIPS Best Paper Award, IEEE Robotics and Automation Society Early Career Award, Sloan Research Fellowship, NSF CAREER Award, TR35 (MIT Technology Review Innovators Under 35)

- Known for: Autonomous helicopter aerobatics, domain randomization, MAML, founding one of the first deep RL robotics companies

Frequently Asked Questions

What is robot learning from demonstration, and why is it important?

Robot learning from demonstration (LfD) is a paradigm in which a robot acquires new skills by observing a human or another agent perform a task. Instead of a programmer writing explicit instructions for every possible situation, the robot watches demonstrations and either directly imitates the observed behavior or, in more sophisticated approaches like inverse reinforcement learning, infers the underlying objective that the demonstrator was trying to achieve. This is important because it dramatically reduces the time and expertise needed to teach robots new tasks. In traditional robotics, deploying a robot for a new task might require weeks of engineering. With LfD, the goal is that a factory worker or surgeon can show the robot what to do instead of programming it. Pieter Abbeel’s work was pivotal in making this approach practical for complex, real-world tasks.
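The first option, direct imitation (often called behavior cloning), can be as simple as supervised regression from states to actions. Below is a minimal sketch. It assumes a one-dimensional state, a one-dimensional action, and a linear policy. The function name and the demonstration data are hypothetical illustrations.

```python
def fit_linear_policy(states, actions):
    """Behavior cloning by least squares for a 1-D state and 1-D action.

    Fits action ~= k * state + b to the demonstrated (state, action) pairs
    and returns the fitted policy as a function.
    """
    n = len(states)
    if n < 2:
        raise ValueError("need at least two demonstrations")
    mean_s = sum(states) / n
    mean_a = sum(actions) / n
    var_s = sum((s - mean_s) ** 2 for s in states)
    if var_s == 0:
        raise ValueError("demonstrated states must vary")
    cov_sa = sum((s - mean_s) * (a - mean_a) for s, a in zip(states, actions))
    k = cov_sa / var_s
    b = mean_a - k * mean_s
    return lambda state: k * state + b
```

For example, `fit_linear_policy([0, 1, 2, 3], [1.0, 0.5, 0.0, -0.5])` recovers the demonstrator’s rule `action = -0.5 * state + 1`. Unlike inverse reinforcement learning, this copies actions without modeling why they were taken, so errors can compound once the robot drifts into states the demonstrator never visited.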

How does sim-to-real transfer work in robotics?

Sim-to-real transfer refers to the practice of training a robot’s control policy in a simulated environment and then deploying that policy on a physical robot. The core challenge is the “reality gap”: simulations are always imperfect approximations of the real world, and policies that work perfectly in simulation often fail on real hardware. Domain randomization, a key contribution of Abbeel and his collaborators, addresses this by training the policy across a wide distribution of simulated environments with randomized parameters such as friction coefficients, object masses, camera angles, lighting conditions, and visual textures. Forcing the policy to succeed across this diverse range of conditions makes it robust enough to handle the specific conditions of the real world, which then look like just one more variation within the training distribution.

What is Covariant, and what does it do?

Covariant is a robotics AI company co-founded by Pieter Abbeel in 2017, originally under the name Embodied Intelligence. The company develops AI-powered robotic systems primarily for warehouse and logistics automation. Its core product enables robotic arms to pick and place a vast variety of objects — different shapes, sizes, materials, and packaging — using deep learning rather than manually programmed routines. This is a significant advance because traditional warehouse robots could only handle a narrow range of pre-specified items. Covariant’s system learns from both simulation and real-world interaction, continuously improving its ability to handle novel objects. The company has deployed its technology with major logistics and retail companies.

How has Pieter Abbeel influenced the broader AI community?

Abbeel’s influence extends well beyond his own published research. As a professor at UC Berkeley, he has trained dozens of PhD students and postdocs who have gone on to leading roles at organizations including OpenAI, Google DeepMind, Meta AI, Tesla, and many startups. His former student John Schulman co-founded OpenAI and developed the PPO algorithm that has become standard in reinforcement learning. Chelsea Finn, another former student, is now a professor at Stanford working on meta-learning and robot learning. Abbeel’s freely available lecture series on deep reinforcement learning is widely used by students worldwide, and his courses at Berkeley have become models for how to teach modern AI. His combination of theoretical depth, practical focus on real hardware, and commitment to open education has made him one of the most influential figures in the field.