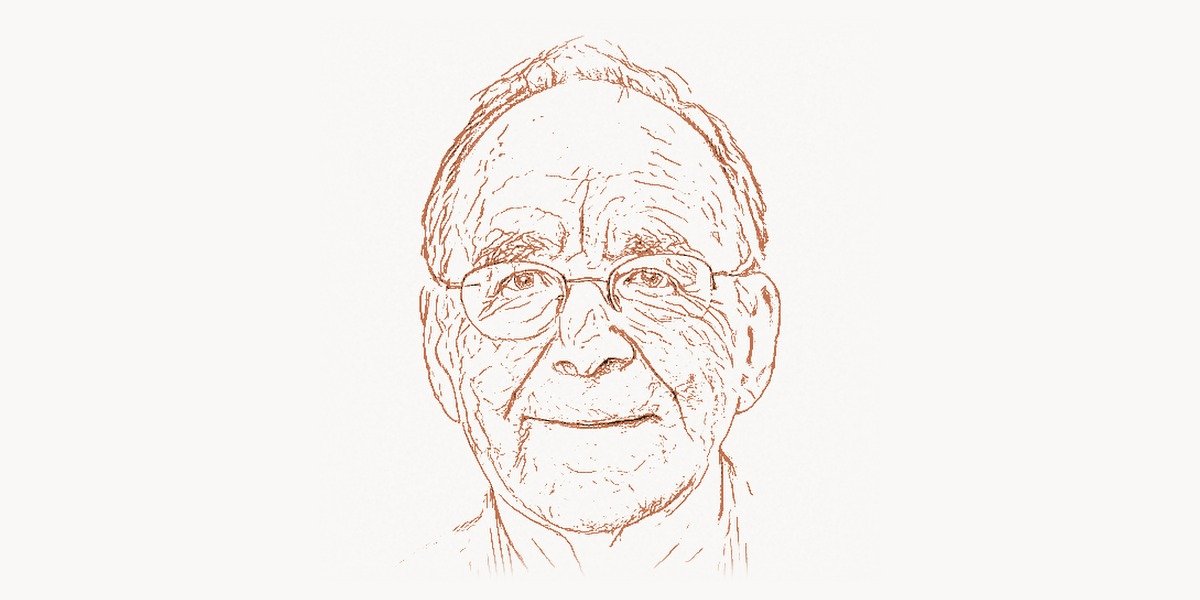

In 1972, a single paper shattered the comfortable assumption that every well-posed problem could be efficiently solved by a computer. Richard Manning Karp, then a professor at the University of California, Berkeley, published “Reducibility Among Combinatorial Problems” — a 40-page manuscript that identified 21 diverse computational problems and proved they were all, in a precise mathematical sense, equally hard. That paper did not merely advance theoretical computer science; it created a new field, gave thousands of researchers a shared language for discussing intractability, and fundamentally altered how we think about the limits of computation. More than five decades later, the question Karp’s work orbits — whether P equals NP — remains the most important unsolved problem in all of computer science.

Early Life and the Road to Computation

Richard Karp was born on January 3, 1935, in Boston, Massachusetts. From childhood, he displayed a remarkable aptitude for mathematics. By the time he entered Harvard University as an undergraduate, his fascination with logic and combinatorial structures was already taking shape. He earned his bachelor’s degree in 1955, then continued at Harvard for his Ph.D., which he completed in 1959 under the supervision of Anthony Oettinger. His doctoral thesis explored the logical structure of automata, firmly situating him at the junction of mathematics and the nascent discipline of computer science.

After completing his doctorate, Karp joined IBM’s Thomas J. Watson Research Center, where he spent over a decade working on problems in combinatorial optimization, network flows, and computational geometry. The IBM years were formative. Karp gained deep exposure to real-world computational challenges — scheduling, routing, resource allocation — that resisted elegant polynomial-time solutions. This practical experience, combined with his theoretical rigor, uniquely positioned him to recognize patterns in computational difficulty that others had overlooked.

The Intellectual Foundation: Cook’s Theorem and the Birth of NP-Completeness

To understand Karp’s contribution, one must first appreciate the work of Alan Turing, who in 1936 formalized what it means for a problem to be “computable” at all. Turing’s framework gave us the theoretical machine that underlies all of modern computing. But computability is only part of the story. A problem might be computable in principle yet require so much time that no computer, however powerful, could solve it before the heat death of the universe.

By the 1960s, researchers had begun to classify problems not just by whether they could be solved, but by how efficiently they could be solved. The class P — problems solvable in polynomial time — emerged as a proxy for “efficiently solvable.” The class NP — problems whose solutions can be verified in polynomial time — captured a broader set of tasks that arise naturally in logistics, scheduling, and design. The central question became: Is every problem whose solution can be quickly checked also a problem that can be quickly solved? In formal terms, does P = NP?

In 1971, Stephen Cook proved a stunning result: the Boolean satisfiability problem (SAT) is NP-complete, meaning that if SAT can be solved in polynomial time, then every problem in NP can be solved in polynomial time. Cook’s theorem was a theoretical masterstroke, but it left a critical gap. SAT is a highly abstract, logic-focused problem. How could one know whether real-world problems — graph coloring, scheduling, bin packing — shared this same hardness?

Karp’s 21 Problems: The Paper That Changed Everything

This is precisely where Richard Karp’s genius became apparent. In his landmark 1972 paper, Karp demonstrated that 21 well-known combinatorial problems could all be reduced to one another in polynomial time. Each was NP-complete. The list was not arbitrary; it was carefully chosen to span a wide range of practical domains. Here are all 21 problems Karp proved NP-complete:

The Complete List of Karp’s 21 NP-Complete Problems

- SATISFIABILITY (SAT) — Determine whether a Boolean formula can be made true

- 0-1 INTEGER PROGRAMMING — Solve a system of linear inequalities with binary variables

- CLIQUE — Find a complete subgraph of size k in a graph

- SET PACKING — Find k mutually disjoint sets from a collection

- VERTEX COVER — Find a minimum set of vertices covering all edges

- SET COVERING — Cover a universe with the fewest subsets

- FEEDBACK NODE SET — Remove the fewest vertices to make a directed graph acyclic

- FEEDBACK ARC SET — Remove the fewest arcs to make a directed graph acyclic

- DIRECTED HAMILTONIAN CIRCUIT — Find a cycle visiting every vertex exactly once in a directed graph

- UNDIRECTED HAMILTONIAN CIRCUIT — Same as above, but for undirected graphs

- SATISFIABILITY (3-SAT) — SAT restricted to clauses with exactly 3 literals

- CHROMATIC NUMBER — Determine the minimum number of colors needed to color a graph

- CLIQUE COVER — Partition a graph’s vertices into the fewest cliques

- EXACT COVER — Find a subcollection of sets that partitions a universe

- HITTING SET — Find a minimum set intersecting every set in a collection

- STEINER TREE — Connect a subset of vertices with a minimum-weight tree

- 3-DIMENSIONAL MATCHING — Find a perfect matching in a 3-partite hypergraph

- KNAPSACK — Maximize value of items fitting within a weight limit

- JOB SEQUENCING — Schedule jobs to meet deadlines and minimize penalties

- PARTITION — Divide a set of integers into two subsets of equal sum

- MAX CUT — Partition a graph’s vertices to maximize the number of crossing edges

The brilliance of the paper was not just in proving these 21 results. It was in demonstrating a methodology — polynomial-time reduction — that any researcher could apply to show that their own favorite hard problem was NP-complete too. Karp created a cascade. Within a few years, hundreds and then thousands of problems had been classified as NP-complete, using chains of reductions that ultimately traced back to Karp’s original 21.

Understanding Polynomial-Time Reductions

The core technique in Karp’s work is the polynomial-time many-one reduction (sometimes called a Karp reduction). The idea is deceptively simple: to show that Problem B is at least as hard as Problem A, you construct a function that transforms any instance of A into an instance of B in polynomial time, such that the answer to A is “yes” if and only if the answer to B is “yes.”

Let us look at a classic example — reducing the Vertex Cover problem to an instance of the Set Cover problem. This demonstrates the kind of structural mapping that Karp pioneered:

def vertex_cover_to_set_cover(graph, k):

"""

Reduce Vertex Cover to Set Cover in polynomial time.

Given a graph G = (V, E) and integer k, construct a Set Cover

instance where:

- Universe U = set of all edges in G

- For each vertex v, create a subset S_v = {edges incident to v}

- Ask: can we cover U with at most k subsets?

A vertex cover of size k exists iff a set cover of size k exists.

"""

# Universe = all edges

universe = set()

for u, v in graph.edges:

universe.add((u, v))

# For each vertex, build the set of its incident edges

subsets = {}

for vertex in graph.vertices:

incident_edges = set()

for u, v in graph.edges:

if u == vertex or v == vertex:

incident_edges.add((u, v))

subsets[vertex] = incident_edges

# The transformation preserves the answer:

# Vertex Cover of size <= k iff Set Cover of size <= k

return SetCoverInstance(

universe=universe,

subsets=list(subsets.values()),

budget=k

)

# This runs in O(|V| * |E|) time — clearly polynomial.

# The correctness proof:

# (=>) If S is a vertex cover, the corresponding sets cover all edges.

# (<=) If C is a set cover, the corresponding vertices touch all edges.This polynomial-time transformation is precisely what makes NP-completeness so powerful: it lets us transfer hardness results from one problem to another. Karp chained such reductions from SAT through all 21 problems, building a web of equivalences.

The Practical Impact: Why NP-Completeness Matters

For practicing software engineers and project managers, NP-completeness is not merely an academic curiosity. It is a daily reality. Whenever a development team encounters an optimization problem that seems to resist efficient solution, understanding NP-completeness tells them whether to keep searching for a fast algorithm or to pivot toward approximation, heuristics, or constraint relaxation. This insight saves enormous amounts of engineering time and resources. As Edsger Dijkstra might have appreciated, knowing a problem is hard is itself a form of progress.

Consider how modern project management tools handle resource scheduling. Assigning developers to tasks with dependencies, deadlines, and skill constraints is a variant of the job sequencing problem — one of Karp's original 21. No project management tool solves this optimally for large instances; instead, they use heuristic methods that deliver good-enough solutions quickly. Karp's framework explains why this is necessary, not a failure of engineering.

The Approximation Revolution

If we cannot solve NP-complete problems exactly in polynomial time (and most experts believe we cannot), the natural question becomes: how close to optimal can we get efficiently? This question launched the field of approximation algorithms, one of the most vibrant areas of modern computer science.

Here is an implementation of a classic 2-approximation algorithm for Vertex Cover — a problem from Karp's list where we can provably guarantee a solution within a factor of 2 of optimal:

def approx_vertex_cover(graph):

"""

2-approximation for Minimum Vertex Cover.

This greedy algorithm guarantees a cover at most

twice the size of the optimal solution.

Strategy: repeatedly pick an uncovered edge,

add BOTH endpoints to the cover, remove all

edges incident to those vertices.

Time complexity: O(|V| + |E|)

"""

cover = set()

remaining_edges = set(graph.edges)

while remaining_edges:

# Pick any uncovered edge (u, v)

u, v = next(iter(remaining_edges))

# Add both endpoints to cover

cover.add(u)

cover.add(v)

# Remove all edges incident to u or v

remaining_edges = {

(a, b) for (a, b) in remaining_edges

if a != u and a != v and b != u and b != v

}

return cover

# Example usage with a small graph:

# Graph: 1--2--3--4--5

# | |

# +---6----+

#

# Optimal vertex cover: {2, 4} (size 2)

# This algorithm might return: {1, 2, 4, 5} (size 4)

# Guarantee: always <= 2 * OPT

# Why this matters in practice:

# - Network monitoring: minimum sensor placement

# - Bioinformatics: minimum gene knockout sets

# - Social networks: minimum influencer selectionThis approximation paradigm, born directly from the realization that exact solutions are likely impossible, now underpins systems from Google's ad placement algorithms to airline crew scheduling and chip design at companies worldwide. Web development agencies confronting complex site architecture optimization problems apply similar heuristic thinking, even if they do not always realize they are grappling with NP-hard problems underneath.

Karp's Broader Contributions to Computer Science

While the 21 NP-complete problems paper is Karp's most famous work, his contributions extend far beyond it. His career spans six decades and touches nearly every corner of theoretical and applied computer science.

Network Flow Algorithms

Before his NP-completeness work, Karp made fundamental contributions to the theory of network flows. Together with Jack Edmonds, he developed the Edmonds-Karp algorithm (1972), which provides a strongly polynomial bound on the maximum flow problem by choosing augmenting paths via breadth-first search. This algorithm, with its O(VE²) time complexity, brought practical efficiency guarantees to a problem central to transportation, telecommunications, and operations research. The work of Donald Knuth on the analysis of algorithms provided a complementary perspective, showing how careful mathematical analysis could illuminate the performance of practical computational methods.

Randomized Algorithms

Karp was also a pioneer in the use of randomness as an algorithmic resource. His work on randomized algorithms, particularly the Rabin-Karp string matching algorithm (developed with Michael O. Rabin in 1987), demonstrated that introducing random choices into an algorithm could yield solutions that were simpler, faster, and more elegant than their deterministic counterparts. The Rabin-Karp algorithm uses hashing to find patterns in strings in expected linear time, and it remains a staple of computer science curricula worldwide.

Probabilistic Analysis of Algorithms

Beyond worst-case analysis, Karp championed the probabilistic study of combinatorial optimization. Rather than asking "how bad can an algorithm perform in the worst case?" he asked "how does it perform on typical inputs?" This perspective, which Claude Shannon would have recognized from his own probabilistic approach to information theory, opened new avenues for understanding why algorithms that are theoretically suboptimal often perform brilliantly in practice.

Awards, Recognition, and the Turing Award

Richard Karp's contributions have been recognized with virtually every major honor in computer science and mathematics. In 1985, he received the ACM Turing Award — often called the "Nobel Prize of Computing" — for his contributions to the theory of algorithms, including his work on NP-completeness and network flow algorithms. The Turing Award, named after the father of theoretical computer science, was a fitting recognition of work that extended Turing's legacy into the realm of computational complexity.

Other accolades include the National Medal of Science (1996), the Harvey Prize from Technion (1998), the Benjamin Franklin Medal (2004), and the Kyoto Prize (2008). He was elected to the National Academy of Sciences, the National Academy of Engineering, and the American Academy of Arts and Sciences. In 2012, he received the IEEE John von Neumann Medal.

Yet Karp's influence extends far beyond prizes. As a professor at Berkeley for over four decades, he has mentored generations of computer scientists who have gone on to shape the field. His students and intellectual descendants populate the faculties of top universities, lead research labs at major tech companies, and continue to push the boundaries of what we understand about computation.

The P vs. NP Problem: Karp's Enduring Legacy

The question that Karp's work made precise — whether P equals NP — is now one of the seven Millennium Prize Problems identified by the Clay Mathematics Institute, carrying a million-dollar bounty. But its significance goes far beyond money. If P were found to equal NP, it would mean that every problem whose solution can be quickly verified can also be quickly solved. Cryptographic systems would collapse, as the hard problems underlying RSA and similar schemes (the kind studied by researchers like Bruce Schneier) would suddenly become tractable. Drug discovery, logistics, artificial intelligence, and countless other fields would be transformed overnight.

Most experts believe P does not equal NP — that there are inherent limits to efficient computation. But no one has been able to prove it. The problem has resisted attack for over half a century, and many of the greatest minds in mathematics and computer science have tried and failed. It stands as a monument to how much we still do not understand about the nature of computation, a humbling reminder even in an age of large language models and quantum processors.

Connections to Modern Computing

Karp's framework reverberates through modern technology in ways both obvious and subtle. Machine learning optimization, where training a neural network involves navigating a vast landscape of possible configurations, frequently encounters NP-hard subproblems. The traveling salesman problem, closely related to the Hamiltonian circuit problems in Karp's list, is the foundational challenge of modern logistics — from Amazon delivery routes to Uber ride matching.

In database theory, the query optimization problem that Edgar Codd would have been intimately familiar with often reduces to NP-hard combinatorial problems. Modern database engines use sophisticated heuristics informed by decades of research that traces back to Karp's classification scheme. Even the constraint satisfaction problems at the heart of Barbara Liskov's work on data abstraction and program correctness connect to the NP-completeness framework.

The Methodology of Reduction: A Lasting Intellectual Tool

Perhaps Karp's most enduring contribution is methodological rather than technical. The idea that you can prove something about one problem by relating it to another — that a chain of polynomial-time reductions can transfer hardness from SAT to graph coloring to scheduling to knapsack — is a way of thinking that has permeated all of computer science. It appears in the study of cryptographic hardness assumptions, in the classification of problems in distributed computing, and in the analysis of online algorithms.

Before Karp, researchers who failed to find efficient algorithms for their favorite problems might have suspected they were not clever enough. After Karp, they could prove their problems were genuinely hard — as hard as thousands of other problems that the entire community had failed to crack. This was intellectually liberating. It redirected effort from futile searches for exact algorithms toward the more productive pursuit of approximation algorithms, parameterized complexity, and average-case analysis.

Personal Philosophy and Teaching Legacy

Those who have studied under Karp describe him as a model of intellectual clarity and generosity. His lectures at Berkeley were legendary for their precision and their ability to make deep ideas feel inevitable. He emphasized the importance of elegance in proofs and the value of choosing the right problem to work on — a lesson that echoes the philosophy of scientists like Allen Newell, who believed that progress comes from sustained focus on the right questions rather than from scattered brilliance.

Karp's approach to research — start with concrete problems, look for patterns, abstract the patterns into general theory — is a model of how mathematical computer science can be done well. It combines the best of applied motivation with the rigor of pure mathematics, a synthesis that remains the gold standard for theoretical work that actually matters.

Frequently Asked Questions

What are Karp's 21 NP-complete problems?

In his 1972 paper "Reducibility Among Combinatorial Problems," Richard Karp identified 21 computational problems — including SAT, Clique, Vertex Cover, Knapsack, Hamiltonian Circuit, Graph Coloring, Set Cover, and others — and proved that all of them are NP-complete. This means that each problem is at least as hard as every other problem in the class NP, and that a polynomial-time solution to any one of them would immediately yield polynomial-time solutions to all problems in NP.

Why is NP-completeness important for software engineers?

NP-completeness provides a rigorous framework for understanding why certain problems resist efficient solution. When a software engineer encounters a problem that is NP-complete, they know that no efficient exact algorithm is likely to exist. This knowledge allows them to make informed decisions about using approximation algorithms, heuristics, or constraint relaxation rather than wasting time searching for an optimal solution. It is one of the most practically useful results in all of theoretical computer science.

What is the difference between P, NP, and NP-complete?

P is the class of problems that can be solved in polynomial time — these are considered "efficiently solvable." NP is the class of problems whose solutions can be verified in polynomial time, even if finding the solution may be much harder. NP-complete problems are the hardest problems in NP: if any single NP-complete problem can be solved in polynomial time, then every problem in NP can be solved in polynomial time. Karp's contribution was showing that many natural, practically important problems are NP-complete.

What is the Rabin-Karp algorithm?

The Rabin-Karp algorithm, developed by Richard Karp and Michael Rabin in 1987, is a string-searching algorithm that uses hashing to find occurrences of a pattern within a text. It computes a rolling hash of the pattern and of successive substrings of the text, allowing it to skip most character-by-character comparisons. It runs in expected O(n + m) time for text of length n and pattern of length m, and is particularly effective for multi-pattern search scenarios, such as plagiarism detection and DNA sequence matching.

Has the P vs. NP problem been solved?

No. As of today, the P vs. NP problem remains unsolved. It is one of the seven Millennium Prize Problems designated by the Clay Mathematics Institute, with a one-million-dollar prize for a correct solution. Most computer scientists and mathematicians believe that P does not equal NP — meaning that there are problems whose solutions can be quickly verified but not quickly found — but no proof or disproof has been achieved despite more than fifty years of intense research effort. It remains the most important open question in computer science.