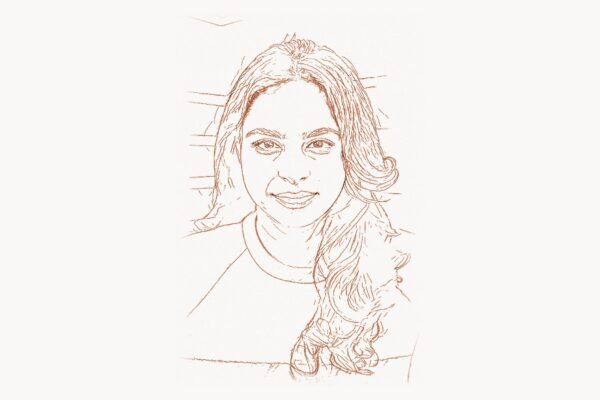

When engineers talk about the systems that made Google possible — not the search algorithm, but the machinery beneath it that stores, processes, and serves data at a scale no one had achieved before — one name appears on virtually every foundational paper: Sanjay Ghemawat. As a Google Senior Fellow and one of the most influential systems engineers alive, Ghemawat co-designed the distributed infrastructure that turned a search startup into the backbone of the modern internet. MapReduce, the Google File System, BigTable, LevelDB, and critical components of TensorFlow — these are not incremental improvements but paradigm shifts that reshaped how humanity handles data. Yet unlike many tech luminaries, Ghemawat has stayed firmly behind the scenes, letting his code and papers speak louder than any keynote ever could.

Early Life and Education

Sanjay Ghemawat was born in 1966 and grew up with an early fascination for mathematics and computing. He pursued his undergraduate studies at Cornell University, where he earned a Bachelor of Science degree. That training, with its emphasis on building systems that work, shaped his practical approach to computer science, an approach he would carry into every project for decades to come.

Ghemawat went on to earn his Ph.D. from the Massachusetts Institute of Technology (MIT), focusing on systems-level computer science. His doctoral work dealt with the kinds of problems that would define his career: how to make software reliable, fast, and capable of handling workloads that would break conventional architectures. During his time at MIT, he absorbed lessons from the institution’s deep tradition in operating systems, compilers, and distributed computing, a field shaped by pioneers like Leslie Lamport, whose theoretical work on distributed systems would later prove essential to everything Ghemawat built.

After completing his doctorate, Ghemawat worked at Digital Equipment Corporation (DEC), where he contributed to systems programming projects. This experience at DEC gave him hands-on knowledge of building production-grade software for real hardware constraints. When Google came calling in 1999, barely a year after its founding, Ghemawat joined and became one of the earliest engineers at a company that would soon need to solve computing problems no one had ever faced before.

The MapReduce Breakthrough

Technical Innovation

By the early 2000s, Google was indexing billions of web pages, and the volume was growing exponentially. Traditional approaches to data processing — single-machine programs, hand-tuned parallel code, proprietary database clusters — could not keep up. Sanjay Ghemawat and Jeff Dean recognized that Google’s engineers were repeatedly solving the same parallelization and fault-tolerance problems from scratch, wasting enormous effort. Their answer was MapReduce, published in 2004.

MapReduce introduced an elegant abstraction: divide computation into two phases. In the Map phase, input data is split across thousands of machines, each applying a user-defined function to produce intermediate key-value pairs. In the Reduce phase, all values sharing the same key are gathered and combined by another user-defined function. The framework handles everything else — partitioning, scheduling, fault tolerance, and data movement across the network.

```python
# Simplified MapReduce word count example

# Map phase: emit (word, 1) for each word in a document
def map_function(document_id, document_text):
    results = []
    for word in document_text.split():
        word = word.lower().strip(".,!?;:")
        results.append((word, 1))
    return results

# Reduce phase: sum all counts for each word
def reduce_function(word, counts):
    return (word, sum(counts))

# The framework handles:
# - Splitting input across 10,000+ machines
# - Scheduling map and reduce tasks
# - Re-executing failed tasks on other machines
# - Sorting and shuffling intermediate data
# - Writing final output to distributed storage
```

The genius of MapReduce was not in the map or reduce operations themselves; functional programmers had used these concepts for decades. The breakthrough was in the engineering: automatic parallelization across thousands of commodity machines, transparent re-execution of failed tasks, locality-aware scheduling that moved computation to where data resided, and a programming model simple enough that any engineer could write a MapReduce job without understanding distributed systems internals.
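To make the division of labor concrete, the following is a minimal single-process sketch of what a framework does around the user's two functions: map every record, group the intermediate pairs by key (the shuffle), then reduce each group. The names `run_local_mapreduce`, `map_words`, and `reduce_counts` are hypothetical and chosen for illustration; nothing here reflects Google's actual implementation, which distributes these same steps across many machines.

```python
from collections import defaultdict


def map_words(document_id, document_text):
    """Map step: emit a (word, 1) pair for every word in one document."""
    pairs = []
    for raw in document_text.split():
        word = raw.lower().strip(".,!?;:")
        if word:  # skip tokens that were pure punctuation
            pairs.append((word, 1))
    return pairs


def reduce_counts(word, counts):
    """Reduce step: combine all counts emitted for one word."""
    return (word, sum(counts))


def run_local_mapreduce(documents, map_fn, reduce_fn):
    """Run map, shuffle, and reduce sequentially in a single process."""
    # Map: apply map_fn to every input record.
    intermediate = []
    for doc_id, text in documents.items():
        intermediate.extend(map_fn(doc_id, text))

    # Shuffle: group intermediate values by key.
    grouped = defaultdict(list)
    for key, value in intermediate:
        grouped[key].append(value)

    # Reduce: one reduce_fn call per key, visiting keys in sorted order.
    return [reduce_fn(key, grouped[key]) for key in sorted(grouped)]


documents = {
    "doc1": "The quick brown fox.",
    "doc2": "The lazy dog; the end!",
}
word_counts = dict(run_local_mapreduce(documents, map_words, reduce_counts))
print(word_counts["the"])  # 3
```

Because the map and reduce functions only see their own inputs, the three loops in the driver could each be spread over many workers without changing the user's code, which is the property the paragraph above describes.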

Why It Mattered

Before MapReduce, large-scale data processing was the domain of specialized teams with deep expertise in parallel computing. After MapReduce, any programmer who could write a map function and a reduce function could process petabytes. Inside Google, usage grew from roughly 29,000 MapReduce jobs in the month of August 2004 to more than two million in September 2007, and Dean and Ghemawat later reported an average of about 100,000 jobs per day.

The 2004 paper describing MapReduce sparked an industry-wide revolution. Doug Cutting and Mike Cafarella built Apache Hadoop as an open-source implementation, creating an entire ecosystem that became the foundation of the big data industry. Companies like Yahoo, Facebook, and countless startups built their data pipelines on Hadoop’s MapReduce. The concept of splitting computation into map and reduce phases became so fundamental that it influenced the design of dozens of subsequent frameworks, from Apache Spark to Google’s own Cloud Dataflow.

MapReduce also changed how engineers think about scale. It proved that you do not need expensive supercomputers to process massive datasets — you need smart software running on cheap, unreliable hardware. This philosophy, pioneered by Ghemawat and Dean, became the defining principle of modern cloud computing and remains at the core of how platforms like Toimi approach scalable web infrastructure for their clients.

Other Major Contributions

MapReduce was just one piece of the infrastructure Ghemawat helped create. His body of work at Google reads like a syllabus for modern distributed systems.

Google File System (GFS): Published in 2003, GFS was the storage layer that made everything else possible. Ghemawat was the lead author on the paper. GFS was designed around a radical assumption: hardware fails constantly, so the file system must treat failure as the norm rather than the exception. It used large chunk sizes (64 MB), replication of each chunk across multiple machines (three copies by default), and a single master server for metadata to create a file system that could store and serve petabytes of data reliably. GFS directly inspired the Hadoop Distributed File System (HDFS), and its assumptions about commodity hardware and routine failure carried over into much of the distributed and cloud storage that followed.
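The chunking arithmetic is simple enough to sketch. The example below assumes the 64 MB chunk size and three-way replication mentioned above; the round-robin `place_replicas` policy and the server names are hypothetical stand-ins, since real placement decisions weigh factors such as disk usage and rack layout.

```python
CHUNK_SIZE = 64 * 1024 * 1024  # 64 MB chunks, as described above
REPLICATION_FACTOR = 3         # three copies of each chunk


def chunk_index(byte_offset):
    """Return which chunk of a file holds the given byte offset."""
    return byte_offset // CHUNK_SIZE


def chunk_count(file_size):
    """Return how many chunks a file of file_size bytes occupies."""
    return (file_size + CHUNK_SIZE - 1) // CHUNK_SIZE  # ceiling division


def place_replicas(index, servers, copies=REPLICATION_FACTOR):
    """Pick distinct servers for one chunk (hypothetical round-robin policy)."""
    if copies > len(servers):
        raise ValueError("not enough servers for the requested replica count")
    start = index % len(servers)
    return [servers[(start + i) % len(servers)] for i in range(copies)]


servers = ["cs0", "cs1", "cs2", "cs3", "cs4"]  # made-up chunkserver names
one_gib = 1024 * 1024 * 1024

print(chunk_count(one_gib))                # 16
print(chunk_index(200 * 1024 * 1024))      # 3
print(place_replicas(3, servers))          # ['cs3', 'cs4', 'cs0']
```

Large chunks keep the metadata small: a 1 GiB file needs only 16 entries, which is part of what makes a single metadata master workable.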

BigTable: Published in 2006, BigTable was a distributed storage system for structured data that scaled to petabytes across thousands of servers. Co-authored by Ghemawat, it provided a sparse, distributed, persistent multi-dimensional sorted map — a data model that sat between traditional relational databases (as pioneered by Edgar F. Codd) and simple key-value stores. BigTable became the storage backend for Google Search, Google Maps, Google Earth, YouTube, and Gmail. Its design influenced Apache HBase, Apache Cassandra, and eventually Google’s own Cloud Bigtable service.
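The phrase "sparse, distributed, persistent multi-dimensional sorted map" is easier to picture with a toy model. The sketch below covers only the sparse, sorted, multi-dimensional part, in memory and on one machine: each value is addressed by row key, column name, and timestamp. The class name `SparseSortedTable` and its methods are hypothetical, and the linear scan is for clarity only; a real system keeps rows sorted on disk.

```python
class SparseSortedTable:
    """Toy model of a map from (row, column, timestamp) to value."""

    def __init__(self):
        # Only cells that were written exist, which is what makes it sparse.
        self._cells = {}  # (row, column) -> {timestamp: value}

    def put(self, row, column, timestamp, value):
        self._cells.setdefault((row, column), {})[timestamp] = value

    def get(self, row, column, timestamp=None):
        """Return the newest value at or before timestamp (newest overall if None)."""
        versions = self._cells.get((row, column))
        if not versions:
            return None
        if timestamp is None:
            return versions[max(versions)]
        eligible = [t for t in versions if t <= timestamp]
        return versions[max(eligible)] if eligible else None

    def scan_rows(self, start_row, end_row):
        """Yield (row, column, latest value) for start_row <= row < end_row, sorted."""
        for row, column in sorted(self._cells):
            if start_row <= row < end_row:
                versions = self._cells[(row, column)]
                yield row, column, versions[max(versions)]


table = SparseSortedTable()
table.put("com.cnn.www", "contents:", 3, "<html>v1")
table.put("com.cnn.www", "contents:", 5, "<html>v2")
table.put("com.cnn.www", "anchor:cnnsi.com", 9, "CNN")
table.put("org.example", "contents:", 4, "<html>ex")

latest = table.get("com.cnn.www", "contents:")
older = table.get("com.cnn.www", "contents:", timestamp=4)
com_rows = list(table.scan_rows("com.", "com/"))
print(latest, older, len(com_rows))
```

Keeping rows in sorted order is what makes range scans cheap: keys that share a prefix, such as the reversed domain names used here, sit next to each other.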

LevelDB: In 2011, Ghemawat and Dean released LevelDB, a fast key-value storage library based on log-structured merge-trees (LSM-trees). Unlike GFS and BigTable, LevelDB was designed for single-machine use and was released as open-source software. Its elegant implementation became the foundation for RocksDB (developed by Facebook), which in turn powers critical systems at companies worldwide. LevelDB demonstrated that Ghemawat’s design sensibility extended beyond distributed systems to crafting clean, efficient single-node data structures.

```cpp
// LevelDB usage example: the simplicity that made it revolutionary
#include "leveldb/db.h"

#include <iostream>
#include <string>

int main() {
  leveldb::DB* db;
  leveldb::Options options;
  options.create_if_missing = true;

  // Open a database (creates it if it doesn't exist)
  leveldb::Status status = leveldb::DB::Open(options, "/tmp/testdb", &db);
  if (!status.ok()) {
    std::cerr << "Unable to open database: " << status.ToString() << std::endl;
    return 1;
  }

  // Write a key-value pair
  status = db->Put(leveldb::WriteOptions(), "pioneer", "Sanjay Ghemawat");

  // Read it back, but only if the write succeeded
  std::string value;
  if (status.ok()) {
    status = db->Get(leveldb::ReadOptions(), "pioneer", &value);
  }
  if (status.ok()) {
    std::cout << "Value: " << value << std::endl;
  } else {
    std::cerr << "Operation failed: " << status.ToString() << std::endl;
  }

  // Clean up
  delete db;
  return status.ok() ? 0 : 1;
}
```

TensorFlow Infrastructure: When Google pivoted heavily toward machine learning in the 2010s, Ghemawat contributed to the systems infrastructure underlying TensorFlow. His expertise in distributed computation was critical for enabling TensorFlow to train models across clusters of GPUs and TPUs, the same kind of large-scale coordination challenge he had been solving for over a decade. The deep learning revolution, championed by researchers like Geoffrey Hinton and enabled by datasets like Fei-Fei Li’s ImageNet, required infrastructure that could handle unprecedented computational demands.

Protocol Buffers and other internal tools: Ghemawat also contributed to numerous internal Google tools and libraries that, while less publicly documented, formed the connective tissue of Google’s infrastructure. His fingerprints are on the culture of building reusable, well-tested systems libraries — a practice that has become standard in modern software engineering and that teams at agencies like Taskee apply when architecting reliable project workflows.

Philosophy and Approach

Sanjay Ghemawat is not a conference keynote speaker or a Twitter personality. He is an engineer’s engineer, known for an approach to systems design that prioritizes clarity, reliability, and simplicity above all. His philosophy can be distilled into several core principles that permeate every system he has built.

Key Principles

- Design for failure, not for perfection. Every system Ghemawat has built assumes that hardware will fail, networks will partition, and disks will corrupt. Rather than trying to prevent failures, his systems detect and recover from them automatically. GFS keeps three replicas of every chunk by default. MapReduce re-executes failed tasks. This philosophy of expecting failure and designing around it has become the first commandment of distributed systems engineering.

- Simplicity is the ultimate sophistication. MapReduce succeeded not because it was the most powerful parallel programming model, but because it was the simplest one that solved 80% of Google’s data processing needs. Ghemawat consistently chose straightforward abstractions over clever ones, making his systems accessible to thousands of engineers rather than a handful of specialists.

- Co-design software and hardware awareness. While Ghemawat writes software, he designs with deep awareness of the hardware it runs on. GFS used large chunk sizes because disk seeks were expensive. MapReduce scheduled computation near data because network bandwidth was scarce. This co-design thinking — understanding the physical machine beneath the abstraction — separates world-class systems engineers from merely good ones.

- Measure everything, assume nothing. Ghemawat’s systems are instrumented extensively. Performance decisions are driven by profiling and measurement rather than intuition. This empirical rigor, reminiscent of Donald Knuth’s famous dictum about premature optimization, ensures that engineering effort targets real bottlenecks rather than imagined ones.

- Build for the next order of magnitude. Google’s systems needed to scale 10x every few years. Ghemawat designed not for today’s load but for the load that would arrive in two or three years. This forward-looking approach prevented the costly rewrites that plague companies that optimize only for the present.

- Pair programming as a superpower. Ghemawat and Jeff Dean are perhaps the most famous programming pair in the history of software engineering. Their decades-long collaboration demonstrates that two exceptional engineers working closely together can produce results that far exceed what either could achieve alone. Their partnership has been described by colleagues as a force multiplier — each bringing complementary strengths to the design and implementation of systems.
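The first principle, recovering from failure instead of preventing it, can be illustrated with a small sketch of task re-execution. Everything here is hypothetical: the worker functions, the `WorkerFailure` exception, and the rotation policy are invented for the example, and a real scheduler would detect failures through timeouts or heartbeats and not through a Python exception.

```python
class WorkerFailure(Exception):
    """Stands in for a crashed or unreachable machine."""


def healthy_worker(payload):
    return sum(payload)


def broken_worker(payload):
    raise WorkerFailure("simulated machine crash")


def run_with_reexecution(tasks, workers, max_attempts=3):
    """Run every task, retrying on the next worker whenever an attempt fails."""
    results = {}
    attempts = {}
    for index, (task_id, payload) in enumerate(tasks.items()):
        for attempt in range(max_attempts):
            worker = workers[(index + attempt) % len(workers)]
            attempts[task_id] = attempt + 1
            try:
                results[task_id] = worker(payload)
                break  # task finished, move on to the next one
            except WorkerFailure:
                continue  # re-execute the same task on a different worker
        else:
            raise RuntimeError(f"task {task_id} failed {max_attempts} times")
    return results, attempts


workers = [broken_worker, healthy_worker, healthy_worker]
tasks = {"t0": [1, 2, 3], "t1": [4, 5], "t2": [6]}
results, attempts = run_with_reexecution(tasks, workers)
print(results)   # {'t0': 6, 't1': 9, 't2': 6}
print(attempts)  # {'t0': 2, 't1': 1, 't2': 1}
```

Blind re-execution is safe here because each task is a deterministic function of its input, so running it twice yields the same answer. The caller never learns that the first attempt at `t0` was lost.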

Legacy and Impact

The systems Sanjay Ghemawat helped create did not just solve Google’s problems — they defined how the entire industry builds large-scale software. The trajectory from GFS to HDFS, from MapReduce to Hadoop and Spark, from BigTable to HBase and Cassandra, represents one of the most complete technology transfers from a single company to the global engineering community in computing history.

Ghemawat was elected to the National Academy of Engineering in 2009, a recognition reserved for engineers who have made outstanding contributions to the field. In 2012, he and Jeff Dean received the ACM-Infosys Foundation Award in the Computing Sciences, since renamed the ACM Prize in Computing and one of the most prestigious recognitions in computer science, for their foundational contributions to large-scale distributed computing systems. These honors acknowledge what practitioners have known for two decades: the infrastructure Ghemawat designed is the invisible foundation upon which the modern internet rests.

His influence extends far beyond the specific systems he built. The design patterns his systems brought into the mainstream (write-ahead logs for durability, master-worker architectures for coordination, tablet-based sharding for horizontal scalability) were not all new, but GFS, MapReduce, and BigTable showed how to combine them at unprecedented scale, and they appear in a great many distributed systems built since 2003. Engineers who have never read a Ghemawat paper nonetheless use his ideas every day, embedded in the frameworks and cloud services they rely upon.
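Tablet-based sharding, one of the patterns named above, comes down to splitting a sorted key space into contiguous ranges and routing each key to the server that owns its range. The sketch below shows that routing step with a binary search; the class `TabletMap`, the split keys, and the server names are hypothetical, and it leaves out everything a real system needs for splitting, merging, and reassigning tablets.

```python
import bisect


class TabletMap:
    """Route row keys to servers using sorted split points.

    With split keys [k0, k1, ...], tablet 0 covers keys below k0,
    tablet 1 covers [k0, k1), and the last tablet covers the rest.
    """

    def __init__(self, split_keys, servers):
        if list(split_keys) != sorted(split_keys):
            raise ValueError("split keys must be sorted")
        if len(servers) != len(split_keys) + 1:
            raise ValueError("need exactly one more server than split keys")
        self.split_keys = list(split_keys)
        self.servers = list(servers)

    def server_for(self, row_key):
        # bisect_right counts how many split keys are <= row_key,
        # which is exactly the index of the tablet that owns the key.
        return self.servers[bisect.bisect_right(self.split_keys, row_key)]


tablets = TabletMap(["g", "n", "t"], ["ts-a", "ts-b", "ts-c", "ts-d"])
for key in ["apple", "g", "monkey", "zebra"]:
    print(key, "->", tablets.server_for(key))
```

Because ranges are contiguous, adding capacity means choosing a new split key and handing one half of a tablet to another server; keys outside that range are unaffected.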

Perhaps most importantly, Ghemawat exemplifies a model of impact that stands in contrast to the startup-founder archetype. He has spent his entire post-academic career at a single company, going deep rather than broad, solving progressively harder problems within the same domain. His career demonstrates that sustained, focused engineering work can change the world as profoundly as any entrepreneurial venture — a perspective increasingly valued as the industry matures and recognizes the importance of deep technical leadership alongside product innovation, much as Larry Page and Sergey Brin recognized when they entrusted their infrastructure to engineers of Ghemawat’s caliber.

Key Facts

- Full Name: Sanjay Ghemawat

- Born: 1966

- Education: B.S. from Cornell University; Ph.D. from the Massachusetts Institute of Technology (MIT)

- Current Role: Google Senior Fellow (the highest individual contributor rank at Google, equivalent to Senior Vice President)

- Joined Google: 1999 (one of the company's earliest engineers)

- Key Systems: Google File System (GFS), MapReduce, BigTable, LevelDB, TensorFlow infrastructure

- Primary Collaborator: Jeff Dean — their partnership spans over 25 years

- Honors: Member of the National Academy of Engineering (2009), ACM-Infosys Foundation Award, now the ACM Prize in Computing (2012, with Jeff Dean), ACM Fellow

- Notable Paper Citations: The GFS, MapReduce, and BigTable papers have collectively been cited over 50,000 times

- Open Source Contribution: LevelDB — the foundation for Facebook’s RocksDB and many other storage engines

- Known For: Quiet, low-profile approach; lets code and papers speak for themselves

Frequently Asked Questions

What is the relationship between Sanjay Ghemawat and Jeff Dean?

Sanjay Ghemawat and Jeff Dean are one of the most celebrated engineering partnerships in the history of computing. They have worked side by side at Google since 1999, co-authoring the landmark papers on MapReduce, BigTable, and other foundational systems. Their collaboration is often cited as the gold standard of pair programming at the highest level. Colleagues have described them as complementary thinkers, with Dean often driving the initial architectural vision and Ghemawat bringing extraordinary depth in implementation, optimization, and ensuring reliability at scale. Together, they received the 2012 ACM-Infosys Foundation Award, now known as the ACM Prize in Computing, for their contributions to large-scale distributed systems.

How did MapReduce change the software industry?

MapReduce fundamentally democratized large-scale data processing. Before its 2004 publication, processing massive datasets required specialized expertise in parallel computing, custom hardware, or expensive proprietary software. MapReduce provided a simple programming model — just write a map function and a reduce function — and the framework handled parallelization, fault tolerance, and data distribution across thousands of machines. The open-source Hadoop implementation brought this capability to every company, launching the entire big data industry. Technologies like Apache Spark, Apache Flink, and modern cloud data services all trace their lineage to the concepts Ghemawat and Dean introduced.

What is LevelDB and why is it significant?

LevelDB is an open-source key-value storage library that Ghemawat and Dean developed at Google and released in 2011. Built on log-structured merge-tree (LSM-tree) principles, it provides extremely fast writes and efficient reads for sorted data. Its significance lies both in its direct use — it is embedded in applications like Google Chrome for IndexedDB storage — and in its influence on subsequent projects. Facebook forked LevelDB to create RocksDB, which has become one of the most widely used embedded storage engines in the world, powering systems at companies including Facebook, LinkedIn, Netflix, and Uber. LevelDB showed that elegant, well-crafted systems software could have an outsized impact through open-source adoption.
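The log-structured merge-tree idea can be shown in a few lines. In the sketch below, writes go to an in-memory table, full tables are flushed as immutable sorted runs, reads check the newest data first, and compaction merges runs. This is a toy under stated assumptions and not LevelDB's implementation: the class `TinyLSM` and its methods are hypothetical, and it omits the write-ahead log, on-disk levels, and deletion markers that a real engine needs.

```python
import bisect


class TinyLSM:
    """Toy LSM-tree: an in-memory table plus immutable sorted runs."""

    def __init__(self, memtable_limit=2):
        self.memtable = {}   # recent writes, unsorted, mutable
        self.runs = []       # flushed runs, newest first, each sorted by key
        self.memtable_limit = memtable_limit

    def put(self, key, value):
        # Writes only touch memory, which is why they are fast.
        self.memtable[key] = value
        if len(self.memtable) >= self.memtable_limit:
            self._flush()

    def _flush(self):
        # Freeze the memtable as a sorted run and start a fresh one.
        self.runs.insert(0, sorted(self.memtable.items()))
        self.memtable = {}

    def get(self, key):
        # Newest data wins: check the memtable, then runs from newest to oldest.
        if key in self.memtable:
            return self.memtable[key]
        for run in self.runs:
            i = bisect.bisect_left(run, (key,))
            if i < len(run) and run[i][0] == key:
                return run[i][1]
        return None

    def compact(self):
        # Merge all runs into one, letting newer values overwrite older ones.
        merged = {}
        for run in reversed(self.runs):  # oldest first
            merged.update(run)
        self.runs = [sorted(merged.items())] if merged else []


db = TinyLSM(memtable_limit=2)
for key, value in [("a", "1"), ("b", "2"), ("a", "3"), ("c", "4"), ("d", "5")]:
    db.put(key, value)

print(db.get("a"), db.get("b"), db.get("d"))  # 3 2 5
runs_before = len(db.runs)
db.compact()
runs_after = len(db.runs)
```

The trade-off is visible even at this size: a write never rewrites existing data, while a read may have to consult several runs until compaction merges them.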

Why is Sanjay Ghemawat less well-known than other tech pioneers despite his enormous impact?

Ghemawat’s relative obscurity outside the engineering community reflects his personal style and the nature of infrastructure work. Unlike founders, CEOs, or AI researchers whose products are consumer-facing, Ghemawat builds the invisible plumbing that makes everything else possible. He does not give frequent public talks, does not maintain a social media presence, and does not seek press coverage. His influence flows through papers, code, and the engineers he has mentored rather than through public visibility. Within the systems engineering community, however, he is considered one of the most impactful computer scientists of his generation — a status reflected by his election to the National Academy of Engineering and the ACM Prize in Computing.