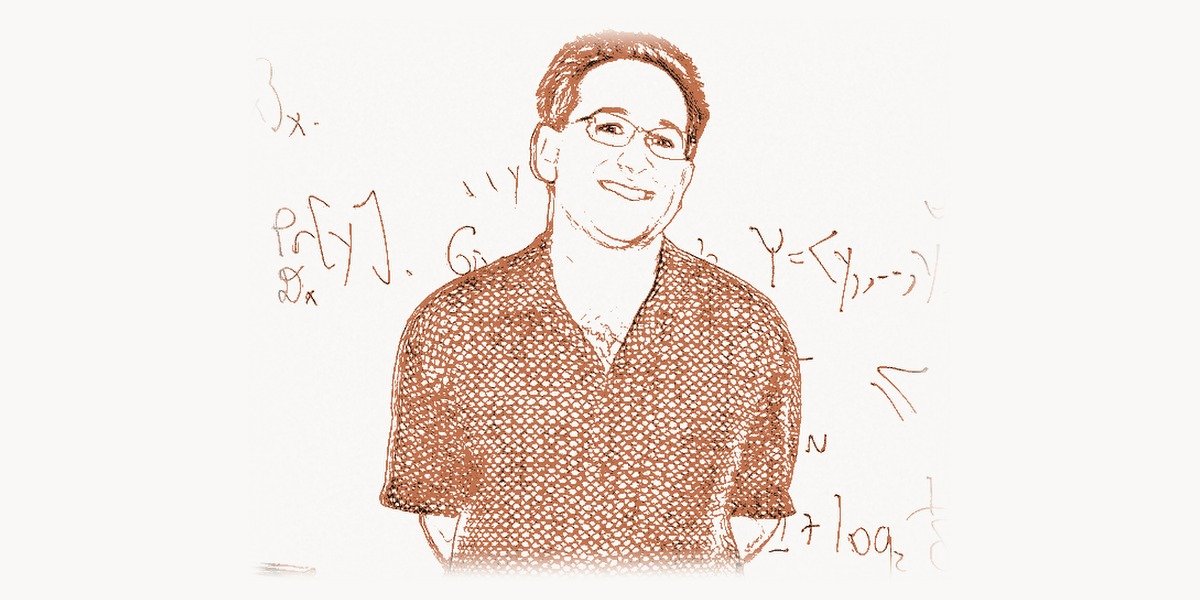

In a field where even the most seasoned physicists often retreat behind equations and the most brilliant computer scientists bury their insights in dense proofs, Scott Aaronson has carved out a singular role: the person who makes quantum computing theory not just accessible, but genuinely exciting. As a professor at the University of Texas at Austin, the author of the celebrated book Quantum Computing Since Democritus, and the creator of the Complexity Zoo — an exhaustive catalog of computational complexity classes — Aaronson has spent over two decades sitting at the exact intersection where theoretical computer science meets quantum mechanics, and somehow making that intersection feel like a place anyone with curiosity can visit.

What sets Aaronson apart is not just technical brilliance — though he has that in abundance — but his relentless commitment to public explanation. Through his blog Shtetl-Optimized, hundreds of public lectures, and a writing style that blends mathematical rigor with humor, he has become the voice of quantum computing skepticism done right: deeply informed, genuinely enthusiastic about the field’s real potential, and completely unwilling to let hype outpace truth. In an era when “quantum” has become a marketing buzzword, Aaronson remains the person most qualified to tell you what quantum computers actually can and cannot do.

From Child Prodigy to Quantum Complexity Pioneer

Scott Joel Aaronson was born on May 21, 1981, in Philadelphia, Pennsylvania. His intellectual gifts were apparent early: he was the kind of child who taught himself programming and devoured advanced mathematics textbooks while his peers were still working through basic algebra. He enrolled at Cornell University at 15, completing his bachelor’s degree in computer science with a focus on theoretical foundations. From Cornell, he moved to the University of California, Berkeley, where he completed his PhD under Umesh Vazirani, one of the founding figures of quantum computation theory.

His doctoral thesis tackled a question that would define his career: what are the fundamental limits of quantum computation? Not the engineering challenges of building a quantum computer — the noisy qubits, the decoherence, the cryogenic cooling — but the deeper, mathematical question of what problems quantum computers can solve efficiently even in principle. This placed him squarely in the tradition of Alan Turing, who asked what could be computed at all, and Stephen Cook, who asked what could be computed efficiently.

After his PhD, Aaronson held a postdoctoral position at the Institute for Advanced Study in Princeton — the same institution where Einstein, Gödel, and John von Neumann had worked. He then joined MIT’s faculty, where he spent over a decade as a professor of electrical engineering and computer science before moving to the University of Texas at Austin in 2016, where he holds the David J. Bruton Jr. Centennial Professorship of Computer Science and directs the Quantum Information Center.

Understanding BQP: What Quantum Computers Can Actually Do

At the heart of Aaronson’s work is the complexity class known as BQP — Bounded-Error Quantum Polynomial Time. If you have heard of P (problems solvable efficiently by classical computers) and NP (problems whose solutions can be verified efficiently), then BQP is their quantum cousin: it is the class of decision problems that a quantum computer can solve efficiently with a bounded probability of error.

The key insight — and the one Aaronson has spent much of his career elucidating — is that BQP is almost certainly not the same as NP. This means quantum computers, even perfect ones, probably cannot solve all NP-complete problems efficiently. They are not magic. They do not try all possible solutions simultaneously, despite what popular science articles often claim. What they do is something subtler and, in Aaronson’s view, more interesting: they exploit quantum interference to amplify the probability of correct answers and suppress incorrect ones, but only for problems with a particular mathematical structure.

The following pseudocode illustrates the conceptual hierarchy of complexity classes that Aaronson has spent his career mapping — showing where BQP sits relative to classical classes:

# Conceptual hierarchy of complexity classes

# As mapped by Aaronson and collaborators

class ComplexityLandscape:

"""

Known and conjectured relationships between major

complexity classes — the 'map' Aaronson navigates.

"""

P = {

"description": "Efficiently solvable by classical computers",

"examples": ["sorting", "shortest path", "linear programming"],

"relationship": "P ⊆ BPP ⊆ BQP ⊆ PSPACE"

}

BPP = {

"description": "Efficiently solvable with randomness (bounded error)",

"examples": ["primality testing (Miller-Rabin)", "polynomial identity"],

"note": "Widely conjectured: BPP = P"

}

BQP = {

"description": "Efficiently solvable by quantum computers (bounded error)",

"examples": ["integer factoring (Shor)", "discrete log", "simulation"],

"key_insight": "BQP ⊄ NP is unknown but suspected for some problems",

"contains": ["P", "BPP"],

"contained_in": ["PSPACE"],

"probably_not_contains": ["NP-complete problems"]

}

NP = {

"description": "Solutions efficiently verifiable by classical computers",

"examples": ["SAT", "traveling salesman", "graph coloring"],

"open_question": "P ≠ NP is the central conjecture"

}

QMA = {

"description": "Quantum analog of NP — quantum Merlin-Arthur",

"examples": ["local Hamiltonian problem (quantum analog of SAT)"],

"relationship": "NP ⊆ QMA ⊆ PSPACE"

}

PSPACE = {

"description": "Solvable with polynomial space, unlimited time",

"examples": ["TQBF", "generalized chess/Go"],

"contains_all_above": True

}

# Aaronson's key contribution: clarifying what quantum

# computers CANNOT do is as important as what they CAN do

#

# Quantum speedup is real but structured:

# - Exponential speedup: factoring, simulation

# - Quadratic speedup: search (Grover's algorithm)

# - No speedup: NP-complete problems (likely)This hierarchy is not just an abstract curiosity. It has direct implications for cryptography, for drug discovery, for materials science, and for our fundamental understanding of the physical universe. Aaronson’s work has been instrumental in placing rigorous boundaries around quantum computational power, ensuring that the field advances on solid theoretical ground rather than wishful thinking.

BosonSampling: A Concrete Path to Quantum Supremacy

Perhaps Aaronson’s most celebrated specific result is the concept of BosonSampling, introduced in a landmark 2011 paper co-authored with his PhD student Alex Arkhipov. The idea was elegantly simple in conception yet profoundly important: instead of trying to build a universal quantum computer capable of running Shor’s algorithm, why not identify a much simpler quantum task that is still classically intractable?

BosonSampling works by sending identical photons (bosons) through a network of beam splitters and phase shifters — a linear optical interferometer — and then measuring where the photons end up. The probability distribution of the output is governed by the permanent of a matrix, a quantity that is known to be #P-hard to compute classically. This means that even approximately sampling from this distribution is believed to be beyond the reach of any classical computer, yet a simple optical setup can do it naturally.

The brilliance of Aaronson and Arkhipov’s argument was not just proposing the experiment but providing a rigorous complexity-theoretic argument for why classical simulation should be hard. They showed that if a classical computer could efficiently sample from the BosonSampling distribution, it would imply a collapse of the polynomial hierarchy — a consequence most complexity theorists consider extremely unlikely. This connected a concrete physical experiment to deep structural questions in computational complexity, following the tradition of thinkers like Avi Wigderson, who spent decades exploring the deep connections between randomness and computation.

Here is a simplified simulation that captures the mathematical essence of BosonSampling — computing the permanent of a matrix, the quantity that makes classical simulation intractable:

import numpy as np

from itertools import permutations

from math import factorial

def matrix_permanent(M):

"""

Compute the permanent of an n×n matrix M.

The permanent is like the determinant, but with all

positive signs — no cancellations. This seemingly

small difference makes it #P-hard to compute exactly

(Valiant, 1979), which is the foundation of the

BosonSampling hardness argument.

permanent(M) = Σ_σ Π_i M[i][σ(i)]

where σ ranges over all permutations of {0,...,n-1}

"""

n = len(M)

total = 0.0

for perm in permutations(range(n)):

product = 1.0

for i in range(n):

product *= M[i][perm[i]]

total += product

return total

def boson_sampling_probability(interferometer, input_modes, output_modes):

"""

Compute the probability of observing bosons in

output_modes given input_modes through a unitary

interferometer matrix.

This is Aaronson & Arkhipov's key insight:

the probability involves the PERMANENT of a submatrix,

not the determinant. Computing these probabilities is

the core of why BosonSampling is classically hard.

P(output | input) = |permanent(U_ST)|² / (m₁!·m₂!·...·n₁!·n₂!·...)

where U_ST is the submatrix of U selected by input/output modes.

"""

n = len(input_modes)

# Extract the relevant submatrix from the interferometer

submatrix = np.array([

[interferometer[out_m][in_m] for in_m in input_modes]

for out_m in output_modes

])

perm = matrix_permanent(submatrix)

probability = abs(perm) ** 2

# Normalize by factoralities of mode occupations

# (simplified for single-photon inputs)

return probability

# Example: 4-mode interferometer with 2 photons

# A random unitary matrix represents the optical network

def random_unitary(n):

"""Generate a random n×n unitary matrix (Haar measure)."""

Z = (np.random.randn(n, n) + 1j * np.random.randn(n, n)) / np.sqrt(2)

Q, R = np.linalg.qr(Z)

D = np.diag(np.diag(R) / np.abs(np.diag(R)))

return Q @ D

np.random.seed(42)

U = random_unitary(4) # 4-mode optical network

# 2 photons enter modes 0 and 1

# Compute probability they exit in modes 2 and 3

input_modes = [0, 1]

output_modes = [2, 3]

prob = boson_sampling_probability(U, input_modes, output_modes)

print(f"Probability of output {output_modes}: {prob:.6f}")

# The key point: for n photons in m modes,

# computing ALL output probabilities requires

# evaluating exponentially many permanents.

# A quantum optical setup samples from this

# distribution naturally — no permanent computation needed.

# This is the quantum advantage.BosonSampling became a major experimental goal in quantum optics. Teams around the world — in China, the United States, and Europe — raced to implement it with increasing numbers of photons. In 2020, a Chinese team led by Jian-Wei Pan demonstrated BosonSampling with 76 photons in their Jiuzhang experiment, claiming quantum computational advantage. Aaronson himself was among those who analyzed and validated these experimental claims, always insisting on the highest standards of evidence.

The Complexity Zoo and Public Communication

One of Aaronson’s most enduring practical contributions is the Complexity Zoo — a comprehensive online catalog of over 500 computational complexity classes and their known relationships. What began as a graduate student project has become an indispensable reference for researchers, essentially serving as the field’s encyclopedia. Each entry describes what the class captures, how it relates to other classes, and what open questions remain.

This effort reflects Aaronson’s deeper commitment to making theoretical computer science legible. His blog, Shtetl-Optimized, launched in 2005, has become one of the most widely read blogs in all of academic computer science. He writes about everything from the latest quantum computing breakthroughs to philosophical questions about consciousness, free will, and the nature of mathematical truth. The blog’s comment sections are legendary for their depth, often featuring substantive exchanges with other leading researchers.

His book Quantum Computing Since Democritus, published in 2013 by Cambridge University Press, is based on a lecture course he taught at the University of Waterloo. It is a remarkable tour through computation, physics, philosophy, and mathematics — starting from ancient Greek atomism and ending with the cutting edge of quantum complexity theory. It has been praised not only for its technical content but for its irreverent, personal style, making it one of the best entry points into the field for advanced undergraduates and curious professionals alike. For those interested in the mathematical foundations that underpin these computational ideas, Alonzo Church’s work on lambda calculus provides essential historical context for how formal computation itself was first defined.

Quantum Supremacy: Defining and Defending the Concept

Aaronson played a crucial role in the discourse around quantum supremacy (later sometimes called “quantum advantage”) — the milestone at which a quantum device performs a computational task that no classical computer can match in any reasonable time. He was instrumental in defining what such a demonstration would need to look like from a complexity-theoretic perspective.

When Google announced its Sycamore quantum supremacy experiment in 2019 — claiming that their 53-qubit processor completed a random circuit sampling task in 200 seconds that would take a classical supercomputer approximately 10,000 years — Aaronson was one of the key outside reviewers. He had been consulted throughout the project and provided theoretical analysis supporting the claim. His involvement lent significant credibility to the announcement, precisely because he was known for his rigor and his willingness to debunk overblown claims.

At the same time, Aaronson has been refreshingly honest about the limitations of these demonstrations. He has repeatedly emphasized that quantum supremacy experiments, while scientifically significant, do not yet produce results that are practically useful. The random circuit sampling performed by Sycamore generates essentially random numbers — impressive as a proof of concept but not yet a practical application. His intellectual honesty on this point has made him a trusted voice even among quantum computing skeptics. This kind of principled rigor in evaluating new computational paradigms echoes the work of Claude Shannon, who established information theory on similarly uncompromising mathematical foundations.

Contributions to Quantum Query Complexity and Oracles

Beyond BosonSampling, Aaronson has made foundational contributions to quantum query complexity — the study of how many times a quantum algorithm must query an oracle (a black-box function) to solve a problem. This area is crucial because many of the separations between classical and quantum computation are best understood in the query model.

One of his most important results in this area, proved with Yaoyun Shi, showed that any total Boolean function that can be computed with Q quantum queries requires at least Q2 classical queries (up to a polynomial). This “quantum-classical query gap” result established that quantum speedups in the query model are always at most polynomial for total functions — a powerful structural constraint.

He has also done groundbreaking work on the collision problem, quantum random walks, the relationship between quantum proofs and classical proofs (studying classes like QMA and QCMA), and the computational complexity of quantum state tomography. His work with Andrew Yao’s communication complexity framework has further illuminated the boundaries of quantum advantage. Each of these contributions deepens our understanding of where quantum mechanics provides genuine computational leverage and where it does not.

Aaronson and Artificial Intelligence: The Consciousness Question

Beyond quantum computing, Aaronson has waded into some of the deepest questions in the philosophy of mind and artificial intelligence. He is one of the most prominent critics of the Penrose-Hameroff “orchestrated objective reduction” theory, which proposes that human consciousness arises from quantum computations in microtubules within neurons. Aaronson has argued, with characteristic precision, that this theory lacks both computational and physical plausibility.

More broadly, Aaronson has engaged thoughtfully with questions about what AI can and cannot achieve. He has written extensively about the relationship between computational complexity and consciousness — whether there are aspects of human thought that are fundamentally beyond what any Turing machine (or quantum Turing machine) can replicate. While he remains agnostic on many of these deep questions, his insistence on framing them in terms of precise computational models has brought much-needed clarity to debates that often devolve into hand-waving.

In recent years, particularly with the rise of large language models, Aaronson took on a consulting role at OpenAI, working on the theoretical foundations of AI safety and alignment. His involvement reflects a growing recognition that the same computational-complexity mindset that illuminates quantum computing can also help us understand the capabilities and limitations of AI systems. Teams working on modern project management for AI development often rely on structured tools to coordinate this kind of multidisciplinary research — platforms like Taskee help bridge the gap between theoretical research planning and practical implementation workflows.

The Quantum Computing Hype Filter

Perhaps Aaronson’s most valuable public service has been his role as what might be called a “quantum hype filter.” As quantum computing has attracted billions of dollars in investment and endless media breathlessness, Aaronson has consistently pushed back against exaggerated claims while remaining genuinely optimistic about the field’s long-term potential.

He has publicly criticized companies that overstate what current quantum hardware can do, media outlets that describe quantum computers as “trying all possibilities simultaneously,” and anyone who claims quantum computers will break all encryption tomorrow. At the same time, he vigorously defends the field against those who claim quantum computing is fundamentally impossible or useless.

This balanced stance — rigorously skeptical yet deeply committed to the field — has earned him enormous respect across computer science and physics. He is frequently called upon to review quantum computing claims, evaluate startup technologies, and advise government agencies on quantum policy. The theoretical work of complexity theorists like Aaronson provides the mathematical rigor that modern tech firms need when evaluating quantum computing investments. For digital agencies navigating the intersection of emerging technology and client delivery, resources like Toimi help teams stay informed about which technological paradigm shifts are real and which are hype.

Teaching and Mentorship

Aaronson’s influence extends well beyond his research papers. He is widely regarded as one of the most gifted lecturers in theoretical computer science. His lecture notes — particularly those from his “Great Ideas in Theoretical Computer Science” and “Quantum Complexity Theory” courses — are freely available online and have been used by students and researchers around the world.

His teaching style mirrors his writing: technically precise but deeply intuitive, full of analogies and thought experiments that make abstract concepts tangible. He has supervised numerous PhD students who have gone on to become leading researchers in their own right, and his approach to mentorship emphasizes both technical depth and the importance of clear communication — a legacy that has shaped the next generation of quantum information scientists, much as Donald Knuth’s legendary courses at Stanford shaped generations of computer scientists before him.

Awards and Recognition

Aaronson’s contributions have earned him numerous accolades. He received the Alan T. Waterman Award from the National Science Foundation in 2012, one of the highest honors for early-career scientists in the United States. He was named an ACM Fellow and a Fellow of the American Association for the Advancement of Science. He has received the Tomassoni-Chisesi Prize in Physics from Sapienza University of Rome, the EATCS-ICALP Award, and the ACM Prize in Computing in 2020 — recognizing his transformative contributions to quantum computing theory.

These awards reflect not just the depth of his research but the breadth of his impact: few living computer scientists have contributed as much to both the technical foundations and public understanding of their field.

Legacy and Continuing Impact

Scott Aaronson’s legacy is still very much in progress. At UT Austin, he continues to push the boundaries of quantum complexity theory, explore the connections between computation and physics, and train the next generation of researchers. His work on BosonSampling has opened an entire experimental subfield. His complexity-theoretic approach to quantum supremacy has given the field a rigorous framework for evaluating progress. And his public communication has set a standard for how scientists can engage with the broader world without sacrificing accuracy.

In a field where the gap between reality and marketing has never been wider, Aaronson serves as an essential bridge between the deeply technical world of quantum theory and the public that funds and is affected by its development. He embodies a vision of the scientist not as an ivory-tower recluse but as a public intellectual — someone who believes that the most profound ideas in science deserve to be explained, debated, and shared. He stands in a long lineage of computer scientists who combined theoretical depth with remarkable exposition, from Edsger Dijkstra, whose eloquent notes on structured programming reshaped how we think about code, to the present day.

Whether quantum computers eventually transform medicine, materials science, cryptography, and artificial intelligence — or whether their impact turns out to be more modest than the hype suggests — Scott Aaronson will have played a central role in ensuring that the field advanced on the basis of truth rather than wishful thinking. And in science, that may be the most important contribution of all.

Frequently Asked Questions

What is Scott Aaronson best known for?

Scott Aaronson is best known for his contributions to quantum computational complexity theory, particularly the concept of BosonSampling (co-developed with Alex Arkhipov), which provided a concrete path toward demonstrating quantum computational advantage. He is also widely known for his book Quantum Computing Since Democritus, his blog Shtetl-Optimized, the Complexity Zoo reference, and his role as a public communicator who explains what quantum computers can and cannot actually do.

What is BosonSampling and why is it important?

BosonSampling is a computational task in which identical photons are sent through a linear optical network and their output distribution is measured. The mathematical structure of this distribution involves computing matrix permanents, which is known to be classically intractable (#P-hard). Aaronson and Arkhipov showed that efficiently simulating BosonSampling classically would collapse the polynomial hierarchy — providing strong evidence that even a simple quantum optical device can outperform any classical computer on this specific task, without needing a full universal quantum computer.

Can quantum computers solve NP-complete problems efficiently?

Almost certainly not, and this is one of Aaronson’s most important messages. While quantum computers offer genuine speedups for certain problems — factoring integers (Shor’s algorithm), searching unsorted databases (Grover’s algorithm with a quadratic speedup), and simulating quantum systems — there is strong evidence that they cannot efficiently solve NP-complete problems like the traveling salesman problem or Boolean satisfiability. The complexity class BQP, which captures quantum polynomial-time computation, is believed to not contain NP-complete problems.

What is the Complexity Zoo?

The Complexity Zoo is an online reference created by Aaronson that catalogs over 500 computational complexity classes and their known relationships. It includes everything from well-known classes like P, NP, and BQP to highly specialized ones studied in specific research contexts. The Zoo has become an indispensable resource for researchers in theoretical computer science, providing a structured overview of the vast landscape of computational complexity.

What role did Aaronson play in the quantum supremacy debate?

Aaronson was deeply involved in defining what a credible quantum supremacy demonstration would require from a theoretical perspective. He served as an outside reviewer and consultant for Google’s Sycamore experiment in 2019, which claimed to achieve quantum supremacy through random circuit sampling. Aaronson provided complexity-theoretic analysis supporting the claim while also honestly noting that the task performed was not yet practically useful — exemplifying his commitment to both validating genuine progress and tempering unwarranted hype.