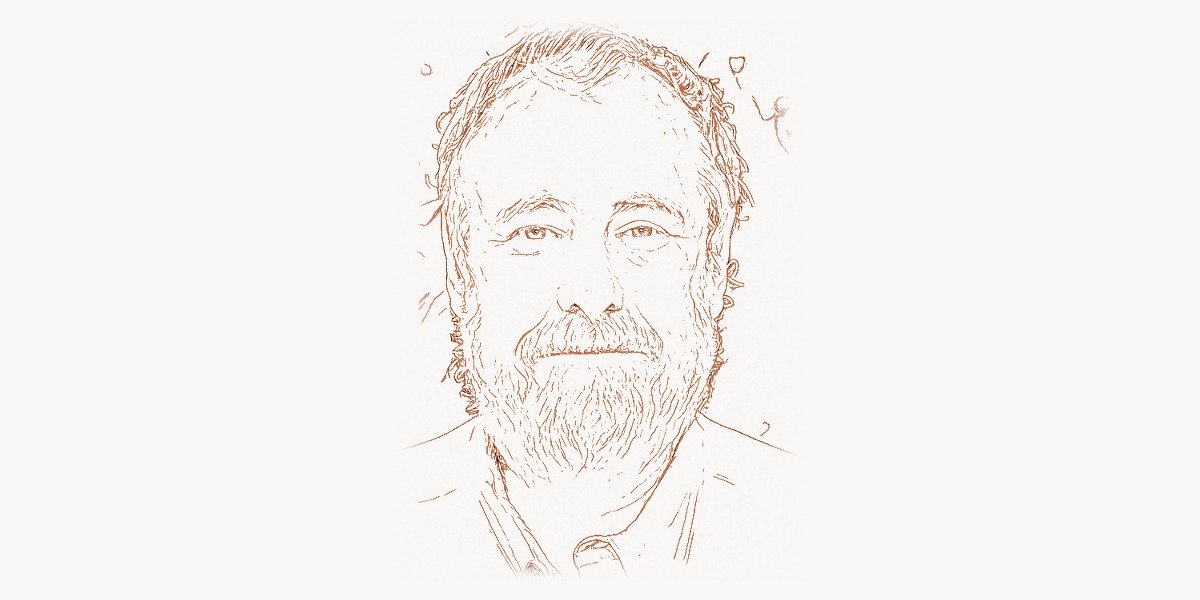

On September 19, 1982, at 11:44 AM, a computer science professor at Carnegie Mellon University posted a message to a university bulletin board containing a three-character sequence that would permanently alter how billions of people communicate. The proposal was simple: use the character sequence :-) to mark jokes and :-( to mark things meant seriously on the CMU online message boards. The professor was Scott Fahlman, and what seemed like a trivial solution to a mundane problem (people misreading the tone of online posts) became one of the most culturally significant innovations in the history of digital communication. But reducing Fahlman to the emoticon inventor does a disservice to a career that spans more than four decades of groundbreaking artificial intelligence research at one of the world’s premier institutions. From his early work on semantic networks and connectionist models to his ongoing development of SCONE, a sophisticated knowledge representation system, Fahlman has spent his career wrestling with some of the hardest problems in AI: not the statistical pattern-matching that drives today’s large language models, but the deep, structural understanding of meaning that machines would need to truly comprehend the world.

Early Life, Education, and the Road to Carnegie Mellon

Scott Elliott Fahlman was born on March 21, 1948, in Medina, Ohio, a small city about thirty miles south of Cleveland. He grew up in a middle-class household during the early years of the computing revolution, when transistors were replacing vacuum tubes and the first programming languages were being created. From an early age, Fahlman demonstrated an aptitude for mathematics and an unusual ability to think about abstract structures — skills that would serve him well in the nascent field of artificial intelligence.

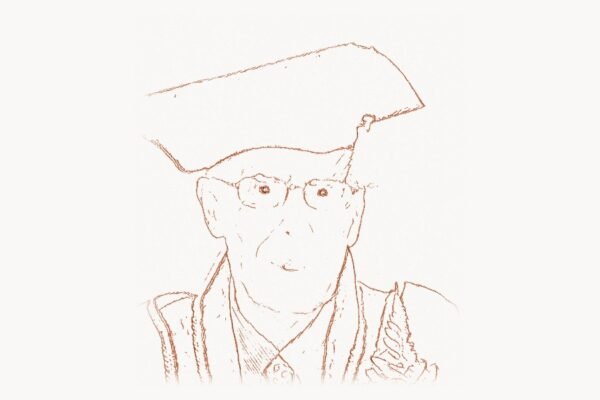

Fahlman earned his bachelor’s degree from the Massachusetts Institute of Technology in 1973, studying electrical engineering and computer science during a period when MIT was becoming the epicenter of AI research. The MIT Artificial Intelligence Laboratory, co-founded by Marvin Minsky and John McCarthy, was producing ideas that would define the field for decades. Working in this environment, Fahlman absorbed the fundamental debates that shaped early AI: symbolic versus subsymbolic approaches, knowledge representation versus learning, the role of logic versus intuition.

After completing his undergraduate work, Fahlman continued at MIT for his graduate studies. He earned his PhD in 1977, writing his dissertation on a system called NETL — a network-based knowledge representation system that used parallel processing to perform inheritance reasoning over semantic networks. This work would prove prophetic: decades before connectionism became the dominant paradigm in AI, Fahlman was already thinking about how networks of simple units could represent and reason about complex knowledge structures.

NETL: A Parallel Semantic Network

Fahlman’s doctoral work on NETL (Network Language) was one of the earliest serious attempts to use massively parallel computation for knowledge representation and reasoning. The core insight was elegant: instead of representing knowledge as logical formulas processed sequentially, NETL stored knowledge as a network of nodes connected by labeled links, with inheritance and reasoning computed by passing markers through the network in parallel.

In a traditional knowledge representation system of the era, answering a question like “Can a canary fly?” required a chain of logical deductions: a canary is a bird, birds can fly, therefore a canary can fly. In NETL, the answer emerged from the network structure itself: a marker placed on the “canary” node propagated through the “is-a” link to “bird,” which had a “can” link to “fly.” On the parallel hardware NETL was designed for, the entire inference took time proportional to the depth of the is-a hierarchy, not the number of facts stored in the network.
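The claim that inference time tracks the depth of the hierarchy, rather than the size of the knowledge base, can be illustrated with a small simulation. This is a minimal sketch under stated assumptions: the `query` function and the dictionary encoding of is-a links and properties are hypothetical, not NETL’s actual design, and each loop iteration merely stands in for one parallel step in which every marked node passes its marker to all of its parents at once.

```python
def query(is_a: dict[str, list[str]], properties: dict[str, set[str]],
          entity: str, prop: str) -> tuple[bool, int]:
    """Return (answer, parallel steps) for 'does entity have prop?'.

    Each loop iteration models one parallel step: every node on the marker
    frontier checks the property and passes its marker to all IS-A parents
    at the same time. This is a sequential simulation of that behaviour.
    """
    marked = {entity}
    frontier = {entity}
    steps = 0
    while frontier:
        # All frontier nodes test for the property "simultaneously"
        if any(prop in properties.get(node, set()) for node in frontier):
            return True, steps
        # One propagation wave up the IS-A links, marking each node once
        frontier = {parent for node in frontier
                    for parent in is_a.get(node, []) if parent not in marked}
        marked |= frontier
        steps += 1
    return False, steps


# A tiny hierarchy padded with 1,000 unrelated facts
is_a = {"canary": ["bird"], "bird": ["animal"]}
properties = {"bird": {"fly"}, "animal": {"breathe"}}
for i in range(1000):
    is_a[f"species{i}"] = ["animal"]
    properties[f"species{i}"] = {f"trait{i}"}

print(query(is_a, properties, "canary", "fly"))      # (True, 1)
print(query(is_a, properties, "canary", "breathe"))  # (True, 2)
```

The step count equals the number of is-a links climbed, and adding the unrelated facts does not change it. On real parallel hardware each wave would cost one time step regardless of how many nodes it touches; in this sequential simulation it costs work proportional to the frontier.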

This approach anticipated many of the ideas that would later drive the connectionist revolution in AI. Fahlman recognized early on that the sequential bottleneck of traditional von Neumann architecture was a fundamental obstacle to building intelligent systems. His vision of massively parallel knowledge processing influenced subsequent work in both the symbolic and connectionist traditions.

```javascript
// Simplified example of a semantic network traversal
// inspired by Fahlman's NETL marker-passing approach.
// NETL was designed for parallel hardware; this sketch simulates
// marker propagation sequentially with a breadth-first search.

class SemanticNode {
  constructor(name) {
    this.name = name;
    this.links = []; // outgoing typed connections: { type, target }
  }

  addLink(type, target) {
    this.links.push({ type, target });
  }
}

// Rebuild the IS-A chain from the query node to `name`
// by following the "marked by" pointers backwards.
function pathTo(markedBy, name) {
  const path = [];
  for (let n = name; n !== null; n = markedBy.get(n)) {
    path.unshift(n);
  }
  return path;
}

class SemanticNetwork {
  constructor() {
    this.nodes = new Map();
  }

  addNode(name) {
    const node = new SemanticNode(name);
    this.nodes.set(name, node);
    return node;
  }

  // Marker-passing inference: propagate a marker up the IS-A
  // hierarchy to check whether an entity inherits a property.
  canInherit(entityName, propertyType, propertyValue) {
    // Each marked node remembers which node passed it the marker
    const markedBy = new Map([[entityName, null]]);
    const queue = [entityName];

    while (queue.length > 0) {
      const current = queue.shift();
      const node = this.nodes.get(current);
      if (!node) continue;

      for (const link of node.links) {
        // Check direct property links
        if (link.type === propertyType && link.target.name === propertyValue) {
          return { result: true, path: pathTo(markedBy, current), source: current };
        }
        // Propagate the marker up the IS-A hierarchy, marking each node once
        if (link.type === 'IS-A' && !markedBy.has(link.target.name)) {
          markedBy.set(link.target.name, current);
          queue.push(link.target.name);
        }
      }
    }
    return { result: false, path: [], source: null };
  }
}

// Build a simple knowledge base
const net = new SemanticNetwork();
const canary = net.addNode('canary');
const bird = net.addNode('bird');
const animal = net.addNode('animal');
const fly = net.addNode('fly');
const breathe = net.addNode('breathe');

canary.addLink('IS-A', bird);
bird.addLink('IS-A', animal);
bird.addLink('CAN', fly);
animal.addLink('CAN', breathe);

// Query: Can a canary fly?
const result = net.canInherit('canary', 'CAN', 'fly');
console.log(result);
// { result: true, path: ['canary', 'bird'], source: 'bird' }

// Query: Can a canary breathe?
const result2 = net.canInherit('canary', 'CAN', 'breathe');
console.log(result2);
// { result: true, path: ['canary', 'bird', 'animal'], source: 'animal' }
```

The NETL system, published as a book by MIT Press in 1979 (NETL: A System for Representing and Using Real-World Knowledge), established Fahlman as a leading figure in knowledge representation research. It also laid the groundwork for his subsequent contributions to connectionist computing and the Boltzmann machine.

Moving to Carnegie Mellon and the Rise of Connectionism

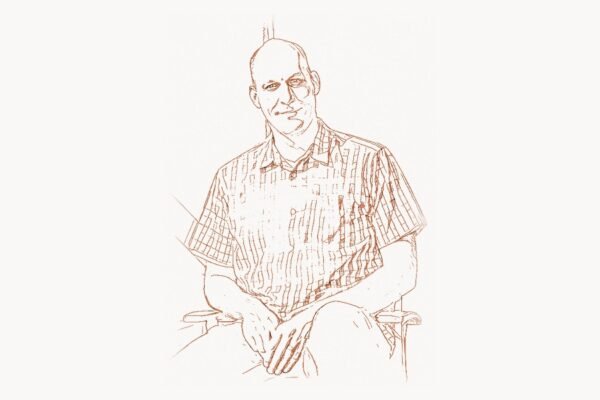

After completing his PhD at MIT, Fahlman joined the faculty of Carnegie Mellon University’s Computer Science Department in 1978. CMU was already a powerhouse in AI research, thanks to the foundational work of Allen Newell and Herbert Simon, who had established the school’s tradition of rigorous computational approaches to intelligence. Fahlman fit naturally into this environment, bringing his expertise in parallel network architectures and knowledge representation.

At CMU, Fahlman continued developing his ideas about parallel computation for AI. At the end of the 1980s, working with Christian Lebiere, he introduced the influential cascade-correlation learning architecture, which addressed some of the limitations of backpropagation in training neural networks. Unlike standard backpropagation, which requires the network architecture to be fixed before training, cascade-correlation adds hidden units one at a time during training, building the network from the bottom up. Each new unit receives input from the network’s inputs and from every previously installed hidden unit, and is trained so that its output correlates as strongly as possible with the network’s residual error; its input weights are then frozen before the next unit is added.
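The step in which a candidate unit is trained to correlate with the residual error can be sketched in plain Python. The score being maximized follows the definition in Fahlman and Lebiere’s paper, S = Σ over outputs o of |Σ over patterns p of (V_p − mean V)(E_p,o − mean E_o)|, where V_p is the candidate’s output and E_p,o the residual error. Everything else is an illustrative assumption: the function names, a single candidate instead of a pool, a tanh activation, and plain gradient ascent in place of the Quickprop updates the original work used.

```python
import math
import random


def unit_outputs(weights, patterns):
    """Output of a tanh candidate unit on every training pattern."""
    return [math.tanh(sum(w * x for w, x in zip(weights, p))) for p in patterns]


def correlation_score(values, errors):
    """S = sum over outputs o of |sum over p of (V_p - mean V)(E_po - mean E_o)|."""
    n = len(values)
    v_mean = sum(values) / n
    score = 0.0
    for o in range(len(errors[0])):
        e_mean = sum(e[o] for e in errors) / n
        score += abs(sum((v - v_mean) * (e[o] - e_mean) for v, e in zip(values, errors)))
    return score


def train_candidate(patterns, errors, epochs=200, lr=0.05, seed=0):
    """Gradient ascent on S for a single candidate unit.

    patterns: inputs seen by the candidate (network inputs, a bias input, and
    outputs of already-installed hidden units); errors: residual error of each
    output unit on each pattern. Returns the weights to freeze on installation.
    """
    rng = random.Random(seed)
    weights = [rng.uniform(-0.5, 0.5) for _ in patterns[0]]
    n_out = len(errors[0])
    e_means = [sum(e[o] for e in errors) / len(errors) for o in range(n_out)]
    for _ in range(epochs):
        values = unit_outputs(weights, patterns)
        v_mean = sum(values) / len(values)
        # sigma_o: sign of the current covariance with each output's error
        signs = []
        for o in range(n_out):
            cov = sum((v - v_mean) * (e[o] - e_means[o]) for v, e in zip(values, errors))
            signs.append(1.0 if cov >= 0 else -1.0)
        grad = [0.0] * len(weights)
        for p, v, e in zip(patterns, values, errors):
            # dS/dnet_p = sum_o sigma_o (E_po - mean E_o) * tanh'(net_p)
            delta = sum(s * (e[o] - e_means[o]) for o, s in enumerate(signs)) * (1 - v * v)
            for i, x in enumerate(p):
                grad[i] += delta * x
        weights = [w + lr * g for w, g in zip(weights, grad)]
    return weights


# Toy residual error that one extra hidden unit could help explain
xs = [i / 5 - 1 for i in range(11)]
patterns = [[x, 1.0] for x in xs]   # one input plus a bias input
errors = [[x] for x in xs]          # residual error of a single output unit
before = correlation_score(unit_outputs(train_candidate(patterns, errors, epochs=0), patterns), errors)
after = correlation_score(unit_outputs(train_candidate(patterns, errors), patterns), errors)
print(f"S before training: {before:.2f}, after: {after:.2f}")
```

In the full algorithm, the winning candidate’s input weights are frozen, its output becomes an extra input to the output layer and to every later candidate, and only the output weights are retrained before the next round.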

This work was part of the broader connectionist movement that was reshaping AI in the 1980s. While researchers like Geoffrey Hinton focused on learning algorithms, Fahlman was particularly interested in the architectural question — how to design network structures that could efficiently represent and reason about complex knowledge. His contributions bridged the gap between the symbolic AI tradition he had been trained in and the emerging neural network paradigm.

The Birth of the Emoticon: September 19, 1982

The story of the emoticon begins with a practical problem that will be familiar to anyone who has been misunderstood in an email or text message. In the early 1980s, the CMU computer science department ran a series of online bulletin boards — precursors to modern forums — where faculty and students discussed everything from technical problems to campus humor. These boards had a persistent problem: without vocal tone, facial expressions, or body language, it was often impossible to tell when someone was joking. Humorous posts were taken seriously, sarcastic comments provoked genuine outrage, and hypothetical scenarios were mistaken for real emergencies.

The immediate catalyst was a discussion about what would happen if a mercury spill occurred in an elevator, a physics thought experiment that some readers took as a genuine safety warning. After various proposals for how to mark jokes (including symbols like # and &), Fahlman offered a simple, elegant solution. His original message, recovered from backup tapes by CMU researcher Mike Jones twenty years later, proposed the sequence :-) as a joke marker, to be read sideways, and added that, given current trends, it would probably be more economical to mark the posts that were not jokes, using :-(.

The proposal was immediately adopted on the CMU bulletin boards and quickly spread to other universities connected through early computer networks. Within months, it had reached ARPANET sites across the country, and within a few years, emoticons had become a universal feature of digital communication. The emoji built into every smartphone, messaging app, and social media platform today carry forward the idea behind those three characters Fahlman typed in 1982. The Unicode Consortium has standardized over 3,600 emoji, a vast expansion of the simple smiley face, but built on the same insight: text-based communication needs visual markers for emotional tone.

```python
# Simple emoticon parser demonstrating the evolution
# from Fahlman's original ASCII sequences to modern emoji
import re
from dataclasses import dataclass


@dataclass
class EmoticonMatch:
    original: str
    meaning: str
    emoji: str
    position: int


# Mapping of classic ASCII emoticons to meanings and emoji
EMOTICON_MAP = {
    # Fahlman's originals (1982)
    ':-)': ('happy / joking', '\U0001f642'),
    ':-(': ('sad / serious', '\U0001f641'),
    # Early derivatives (1980s)
    ';-)': ('winking', '\U0001f609'),
    ':-D': ('laughing', '\U0001f604'),
    ':-P': ('tongue out / teasing', '\U0001f61b'),
    ':-O': ('surprised', '\U0001f62e'),
    ":'-(": ('crying', '\U0001f622'),
    ':-/': ('skeptical', '\U0001f615'),
    ':-|': ('neutral / deadpan', '\U0001f610'),
    '8-)': ('cool / sunglasses', '\U0001f60e'),
    # Nose-free variants (1990s simplification)
    ':)': ('happy / joking', '\U0001f642'),
    ':(': ('sad / serious', '\U0001f641'),
    ';)': ('winking', '\U0001f609'),
    ':D': ('laughing', '\U0001f604'),
    ':P': ('tongue out / teasing', '\U0001f61b'),
}

# One combined regex, built once. Regex alternation takes the first
# alternative that matches, so listing patterns longest-first means the
# longest emoticon starting at any position wins.
EMOTICON_RE = re.compile('|'.join(
    re.escape(p) for p in sorted(EMOTICON_MAP, key=len, reverse=True)
))


def parse_emoticons(text: str) -> list[EmoticonMatch]:
    """
    Scan text for ASCII emoticons using a longest-match-first
    strategy. Returns EmoticonMatch objects in order of position
    in the original text.
    """
    matches = []
    for match in EMOTICON_RE.finditer(text):
        meaning, emoji = EMOTICON_MAP[match.group()]
        matches.append(EmoticonMatch(
            original=match.group(),
            meaning=meaning,
            emoji=emoji,
            position=match.start(),
        ))
    return matches


def convert_to_emoji(text: str) -> str:
    """Replace ASCII emoticons with Unicode emoji in a single pass."""
    # Reusing the same regex keeps this consistent with parse_emoticons
    return EMOTICON_RE.sub(lambda m: EMOTICON_MAP[m.group()][1], text)


# Example usage
message = "Great talk today :-) The demo was surprising :-O"
print(f"Original: {message}")
print(f"Converted: {convert_to_emoji(message)}")

# Parse and inspect matches
for m in parse_emoticons(message):
    print(f"  Found '{m.original}' at pos {m.position} "
          f"-> {m.meaning} {m.emoji}")
```

SCONE: A Knowledge Representation System for Common Sense

While the emoticon brought Fahlman worldwide fame, his most substantial intellectual contribution may be SCONE (the Scone Knowledge-Base System), a project he began in the late 1990s and has continued developing through the present day. SCONE represents Fahlman’s answer to what he considers the central unsolved problem in AI: how to give machines the vast, interconnected web of common-sense knowledge that humans take for granted.

The name SCONE is not an acronym but a reference to the Stone of Scone, the ancient stone upon which Scottish monarchs were crowned — a foundation stone, just as common-sense knowledge is the foundation upon which all higher reasoning rests. The system is built on a rich ontology of types, instances, roles, and relations, organized into multiple overlapping contexts that can represent different perspectives, hypothetical situations, and states of affairs.

What distinguishes SCONE from other knowledge representation systems is its emphasis on efficient, network-based reasoning. Drawing on his early work with NETL, Fahlman designed SCONE to perform inheritance reasoning, type checking, and contextual inference through marker-passing algorithms that exploit the structure of the knowledge network rather than relying on general-purpose logical inference. This makes SCONE fast and scalable for the kinds of reasoning that common-sense AI requires — the quick, intuitive judgments about what things are, how they relate, and what is likely to be true given what is already known.
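The flavor of this kind of network-based reasoning, with defaults that can be overridden by exceptions and facts that hold only inside a particular context, can be sketched in a few lines of Python. Everything here is a hypothetical toy: the `ToyKB` class, its methods, and its “nearest statement wins” rule are illustrative assumptions, not Scone’s actual API or engine, and the search is a sequential breadth-first walk standing in for parallel marker propagation.

```python
from collections import deque


class ToyKB:
    """Hypothetical toy knowledge base; not Scone's real API."""

    def __init__(self):
        self.parents = {}                       # node -> list of IS-A parents
        self.facts = {}                         # (context, node, prop) -> bool
        self.context_parent = {"general": None}

    def add_context(self, name, parent="general"):
        """Create a context that inherits everything stated in its parent."""
        self.context_parent[name] = parent

    def is_a(self, child, parent):
        self.parents.setdefault(child, []).append(parent)

    def state(self, node, prop, value, context="general"):
        self.facts[(context, node, prop)] = value

    def _context_chain(self, context):
        # The query context first, then each enclosing context out to "general"
        while context is not None:
            yield context
            context = self.context_parent[context]

    def ask(self, node, prop, context="general"):
        """Walk up the IS-A hierarchy, nearest node first.

        The first node with any statement about prop decides the answer, so an
        exception on a specific type (penguins cannot fly) overrides the default
        on a general one (birds can fly). At each node, statements in the more
        specific context win. Returns None if nothing is known.
        """
        seen, queue = {node}, deque([node])
        while queue:
            current = queue.popleft()
            for ctx in self._context_chain(context):
                if (ctx, current, prop) in self.facts:
                    return self.facts[(ctx, current, prop)]
            for parent in self.parents.get(current, []):
                if parent not in seen:
                    seen.add(parent)
                    queue.append(parent)
        return None


kb = ToyKB()
kb.is_a("canary", "bird")
kb.is_a("penguin", "bird")
kb.is_a("bird", "animal")
kb.state("animal", "breathes", True)
kb.state("bird", "can fly", True)
kb.state("penguin", "can fly", False)           # exception to the bird default
kb.add_context("cartoon")                       # a hypothetical world
kb.state("penguin", "can fly", True, context="cartoon")

print(kb.ask("canary", "can fly"))              # True
print(kb.ask("penguin", "can fly"))             # False
print(kb.ask("penguin", "can fly", "cartoon"))  # True
print(kb.ask("penguin", "breathes"))            # True
```

With multiple inheritance, two parents at the same distance can disagree; this toy simply takes whichever it reaches first, whereas a real knowledge-base system has to detect and resolve such conflicts.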

Fahlman has argued forcefully that modern deep learning systems, despite their impressive performance on pattern recognition and language generation tasks, fundamentally lack the structured knowledge representation that SCONE provides. A large language model can produce fluent text about canaries and birds, but it does not maintain an explicit, inspectable model of the category hierarchy that connects them. SCONE aims to provide exactly that kind of structured understanding, complementing the statistical pattern-matching of neural networks with rigorous, verifiable reasoning. This vision of hybrid AI systems, combining the strengths of neural and symbolic approaches, is now gaining traction across the field, as researchers recognize the limitations of purely statistical models.

Contributions to Programming Language Design

Fahlman’s influence extends beyond AI into programming language design and implementation. In the early 1980s he was one of the five core designers of Common Lisp, together with Richard Gabriel, David Moon, Guy Steele, and Daniel Weinreb, and he led CMU’s Spice Lisp project, whose implementation evolved into CMU Common Lisp. Common Lisp was the dominant language for AI research throughout the 1980s and 1990s, and Fahlman’s work on its design reflected his deep understanding of what AI researchers needed from a programming language.

Two of the language’s most admired features came from other members of the standardization effort. The Common Lisp Object System (CLOS) drew on Symbolics’ New Flavors and Xerox’s CommonLoops, and in turn influenced object-oriented features in later languages. The condition system, designed principally by Kent Pitman, remains one of the most sophisticated error-handling mechanisms in any programming language, allowing programs to signal conditions, offer restarts, and handle errors at different levels of abstraction without unwinding the call stack.

This programming language work was not separate from Fahlman’s AI research — it was driven by it. Building complex AI systems like NETL and SCONE required programming tools that could handle the intricate data structures, dynamic typing, and interactive development workflows that AI research demands. Fahlman’s contributions to Common Lisp ensured that the language met these needs, and his practical experience building AI systems informed his language design decisions.

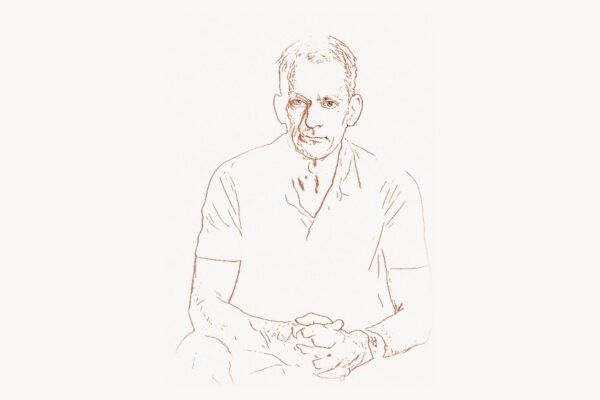

The Boltzmann Machine and Connectionist Computing

In the early 1980s, Fahlman worked with Geoffrey Hinton and Terrence Sejnowski on early research into the Boltzmann machine, one of the earliest stochastic neural network architectures capable of learning internal representations; the three co-authored a 1983 paper comparing massively parallel architectures for AI, including NETL, Thistle, and Boltzmann machines. The Boltzmann machine borrowed principles from statistical mechanics: its units settle into low-energy states through simulated annealing, and it learns a probability distribution over its inputs by adjusting its connection weights. This was groundbreaking work that helped establish the theoretical foundations for deep learning.
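The settling process can be sketched in plain Python: binary units, symmetric weights, an energy function, and Gibbs sampling under a falling temperature (simulated annealing). This is a minimal sketch under stated assumptions: the weights, biases, and temperature schedule are hand-picked illustrative values, and the Boltzmann machine’s learning rule, which adjusts weights by comparing how often pairs of units are on together with the inputs clamped versus running freely, is omitted.

```python
import math
import random


def energy(state, weights, biases):
    """E(s) = -sum_{i<j} w_ij s_i s_j - sum_i b_i s_i for binary units s_i in {0, 1}."""
    n = len(state)
    pairs = sum(weights[i][j] * state[i] * state[j]
                for i in range(n) for j in range(i + 1, n))
    return -pairs - sum(b * s for b, s in zip(biases, state))


def anneal(weights, biases, schedule, sweeps_per_temp=20, seed=0):
    """Gibbs sampling with a decreasing temperature (simulated annealing).

    Unit i turns on with probability 1 / (1 + exp(-gap_i / T)), where
    gap_i = b_i + sum_j w_ij s_j is the energy drop from turning it on.
    """
    rng = random.Random(seed)
    n = len(biases)
    state = [rng.randint(0, 1) for _ in range(n)]
    for temp in schedule:
        for _ in range(sweeps_per_temp):
            for i in range(n):
                gap = biases[i] + sum(weights[i][j] * state[j] for j in range(n) if j != i)
                state[i] = 1 if rng.random() < 1 / (1 + math.exp(-gap / temp)) else 0
    return state


# Units 0 and 1 support each other; unit 2 inhibits both (weights are symmetric)
W = [[0, 2, -2],
     [2, 0, -2],
     [-2, -2, 0]]
b = [0.5, 0.5, 0.5]
schedule = [4.0, 2.0, 1.0, 0.5, 0.25, 0.1]

print(energy([1, 1, 0], W, b))   # -3.0, the lowest energy of the 8 states
print(energy([0, 0, 1], W, b))   # -0.5, a local minimum
final = anneal(W, b, schedule)
print(final, energy(final, W, b))
```

Because the procedure is stochastic, an individual run can occasionally settle in the local minimum at [0, 0, 1]; starting hot and cooling slowly is what makes the global minimum the likely outcome.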

Fahlman’s contribution to this collaboration was particularly important in bridging the gap between symbolic and subsymbolic AI. While Hinton and Sejnowski came from a more purely connectionist perspective, Fahlman brought his deep expertise in knowledge representation and structured reasoning. The result was a richer understanding of how network-based computation could support both the pattern recognition that neural networks excel at and the structured reasoning that symbolic AI provides.

The CMU AI community during this period was remarkably productive, bringing together researchers with diverse perspectives on intelligence. Fahlman’s ability to work across the symbolic-connectionist divide made him a particularly valuable member of this community, and his ideas about how to combine the strengths of both approaches continue to influence the field today.

Legacy and Influence on Modern AI

Fahlman’s career illustrates a pattern that is common among the most influential computer scientists: the ability to see connections between apparently unrelated problems and to work across traditional disciplinary boundaries. His journey from semantic networks to parallel computing to emoticons to knowledge representation reflects a consistent intellectual thread — the conviction that intelligence, whether natural or artificial, depends on rich, structured representations of knowledge, and that the key to building intelligent systems lies in finding efficient computational mechanisms for reasoning over these representations.

In the modern AI landscape, Fahlman’s ideas are more relevant than ever. The limitations of pure deep learning (hallucinations, lack of common sense, inability to explain reasoning) are precisely the problems that Fahlman has been working on for decades. The growing interest in neuro-symbolic AI, which combines neural networks with structured knowledge representations, vindicates his long-standing argument that both approaches are necessary.

At Carnegie Mellon, Fahlman has mentored generations of students who have gone on to leadership positions across industry and academia. His teaching has emphasized the importance of thinking deeply about representation — not just how to learn patterns from data, but how to organize knowledge in ways that support robust, flexible reasoning. This emphasis on representation over raw computation reflects a wisdom about AI that the field is only now beginning to fully appreciate.

The emoticon remains his most widely known contribution, and Fahlman has accepted this legacy with characteristic humor. He has noted in interviews that being known as the emoticon guy is a somewhat unusual distinction for an AI researcher, but he recognizes that the emoticon solved a genuine problem in human communication and that its impact on digital culture has been profound. The three characters he typed in 1982 were a small thing, but they changed how the entire connected world expresses emotion through text — and in doing so, they demonstrated a principle that Fahlman has championed throughout his career: that the most elegant solutions are often the simplest ones.

Frequently Asked Questions

Did Scott Fahlman really invent the emoticon?

Yes, Scott Fahlman proposed the use of :-) and :-( character sequences on the Carnegie Mellon University bulletin board system on September 19, 1982. While various typographic marks and symbols had been used informally before, Fahlman’s proposal was the first documented, widely adopted system for marking emotional tone in digital text communication. The original message was recovered from backup tapes in 2002 by CMU researcher Mike Jones, confirming the date and content of Fahlman’s proposal.

What is the SCONE knowledge base system?

SCONE is a knowledge representation system developed by Fahlman at Carnegie Mellon University, designed to provide machines with structured common-sense knowledge. Unlike statistical AI models, SCONE organizes knowledge into networks of types, instances, roles, and relations, organized across multiple contexts. It uses efficient marker-passing algorithms for inheritance reasoning and contextual inference, building on ideas Fahlman first explored in his NETL dissertation. SCONE aims to complement deep learning systems by providing the structured, verifiable reasoning capabilities they lack.

What is the cascade-correlation learning algorithm?

Cascade-correlation is a neural network learning algorithm developed by Fahlman and Christian Lebiere at CMU in the late 1980s. Unlike standard backpropagation, which requires the network architecture to be predetermined, cascade-correlation dynamically constructs the network during training. It starts with a minimal network and progressively adds hidden units, each trained to correlate maximally with the remaining error. Once a unit is added, its input weights are frozen, and only the output connections are adjusted. On the benchmark problems its authors reported, this approach learned faster than standard backpropagation, and it automatically determines the network size for a given problem.

How did Fahlman contribute to the Lisp programming language?

Fahlman was one of the five core designers of Common Lisp in the early 1980s, and he led CMU’s Spice Lisp project, whose implementation evolved into CMU Common Lisp. The language’s object system, CLOS (Common Lisp Object System), drew on Symbolics’ New Flavors and Xerox’s CommonLoops, and its condition system for error handling, designed principally by Kent Pitman, remains one of the most sophisticated exception-handling mechanisms in any programming language. Fahlman’s contributions were driven by the practical needs of AI research, helping ensure that Common Lisp provided the tools AI researchers needed for complex knowledge representation work.

What is Fahlman’s position on modern deep learning and large language models?

Fahlman has been a thoughtful critic of the view that deep learning alone can achieve general artificial intelligence. He argues that while modern neural networks excel at pattern recognition and statistical language modeling, they fundamentally lack the structured knowledge representations needed for genuine understanding and common-sense reasoning. He advocates for hybrid or neuro-symbolic approaches that combine the learning capabilities of neural networks with the structured reasoning of systems like SCONE. This position, once considered contrarian, is gaining increasing support as researchers confront the limitations of purely statistical models, including hallucinations and the inability to perform reliable logical reasoning.