In 1991, a young German graduate student at the Technical University of Munich made a discovery that most of his professors considered irrelevant. Sepp Hochreiter, working on his diploma thesis under the supervision of Jürgen Schmidhuber, mathematically proved that the standard recurrent neural networks of the era suffered from a fatal flaw: their gradients vanished exponentially as they tried to learn long-range dependencies in sequential data. It was not a minor inconvenience. It was a fundamental barrier that made it practically impossible for these networks to learn patterns that stretched more than about ten timesteps into the past. Six years later, in 1997, Hochreiter and Schmidhuber published their solution, the Long Short-Term Memory network, or LSTM, an architecture that would become one of the most influential inventions in the history of artificial intelligence. Over the following two decades, LSTM networks came to power many of the major advances in sequence modeling: machine translation at Google, voice recognition in Siri and Alexa, handwriting recognition, music generation, drug discovery, and the early language models that preceded today’s transformer-based systems. The story of Sepp Hochreiter is the story of a researcher who identified a problem that everyone else was ignoring, solved it with mathematical precision, and then spent decades watching his solution transform the world.

Early Life and Education

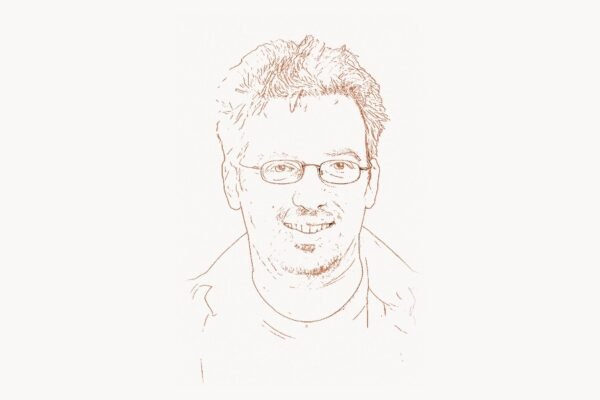

Sepp Hochreiter was born on February 14, 1967, in the municipality of Mühldorf am Inn in Upper Bavaria, Germany. Growing up in a region known more for its baroque churches and Alpine landscapes than for its contributions to computer science, Hochreiter developed an early aptitude for mathematics and the natural sciences. His intellectual curiosity drew him toward the abstract and the formal — the kinds of problems where rigorous thinking could yield definitive answers.

Hochreiter enrolled at the Technical University of Munich (TUM), one of Germany’s most prestigious engineering universities, where he studied computer science. TUM had a strong tradition in both theoretical and applied computing, and it was there that Hochreiter first encountered the emerging field of neural networks. The late 1980s were an interesting time for the discipline: Geoffrey Hinton, David Rumelhart, and Ronald Williams had recently demonstrated the power of backpropagation for training multi-layer networks, and there was renewed optimism that neural approaches could solve problems that had defeated symbolic AI. But there were also deep unresolved questions — questions that the prevailing enthusiasm tended to paper over rather than address.

It was at TUM that Hochreiter came under the supervision of Jürgen Schmidhuber, a young and ambitious researcher who was building a group focused on learning algorithms and neural computation. Schmidhuber encouraged his students to think boldly and to tackle fundamental problems rather than incremental ones. This environment proved ideal for Hochreiter, whose instinct was to dig into the mathematical foundations of neural networks rather than simply to apply existing architectures to new datasets. The result was a diploma thesis — equivalent to a master’s thesis in the Anglo-American system — that would shake the foundations of the field.

For his diploma thesis in 1991, Hochreiter conducted a detailed mathematical analysis of the gradient flow in recurrent neural networks. Recurrent networks, unlike feedforward networks, have connections that loop back on themselves, allowing them to process sequences of inputs and maintain a form of memory. They were considered the natural architecture for tasks involving time series, language, and any other data with temporal structure. But Hochreiter’s analysis revealed a devastating problem.

The LSTM Breakthrough

Technical Innovation

The problem Hochreiter identified in 1991 became known as the vanishing gradient problem. When a recurrent neural network is trained using backpropagation through time (unfolding the network across timesteps and computing gradients of the loss with respect to earlier hidden states), the gradients must pass through a long chain of multiplicative operations: one factor per timestep, each combining the recurrent weight matrix with the derivative of the activation function. If the eigenvalues of the recurrent weight matrix are smaller than one in magnitude (which they typically are), these gradients shrink exponentially with each timestep. After just ten to twenty steps, the gradient signal is effectively zero. The network cannot learn to associate an input at timestep 1 with an output at timestep 50 because the error signal linking the two has been washed away during training.
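The exponential decay described above can be reproduced with a deliberately tiny model. The sketch below is an illustration only, not Hochreiter’s analysis: it assumes a scalar recurrent unit `h_t = tanh(w * h_{t-1} + x)` with a hypothetical weight `w = 0.5`, and tracks the chain-rule product that backpropagation through time would compute.

```python
import math

def gradient_through_time(w, steps, h0=0.5, x=0.0):
    """Return |d h_t / d h_0| for t = 1..steps in the scalar RNN h_t = tanh(w * h_{t-1} + x)."""
    h = h0
    grad = 1.0
    history = []
    for _ in range(steps):
        h = math.tanh(w * h + x)
        # Chain rule: each step contributes a factor w * tanh'(a_t) = w * (1 - h_t^2)
        grad *= w * (1.0 - h * h)
        history.append(abs(grad))
    return history

# w = 0.5 is an assumed value chosen to make the decay easy to see
grads = gradient_through_time(w=0.5, steps=50)
for t in (1, 10, 20, 50):
    print(f"after {t:2d} steps: |dh_t/dh_0| = {grads[t - 1]:.3e}")
```

Every factor is at most `w`, so the product is bounded by `w ** t`. With a recurrent weight below one, the signal reaching fifty steps back is many orders of magnitude smaller than the signal reaching one step back.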

This was not merely a practical inconvenience that could be solved with more computing power or better learning rates. It followed mathematically from the dynamics of gradient-based learning in recurrent architectures. Hochreiter proved it rigorously, and the implication was stark: standard recurrent neural networks, as conceived by Marvin Minsky’s contemporaries and successors, could not in practice learn long-range dependencies by gradient descent. The architecture was broken for the tasks that mattered most.

Having diagnosed the disease, Hochreiter and Schmidhuber set about designing the cure. The result, published in their landmark 1997 paper “Long Short-Term Memory” in the journal Neural Computation, was an entirely new kind of recurrent unit. The LSTM cell introduced a revolutionary concept: the constant error carousel. Instead of allowing the hidden state to be continuously overwritten by new inputs — the source of the vanishing gradient — the LSTM maintained a separate cell state that could carry information across arbitrarily long sequences without degradation. The cell state was a linear, self-connected unit whose gradient, by design, neither vanished nor exploded.
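A minimal sketch of the constant error carousel idea follows. It makes a strong simplifying assumption: the gate activations and the written values are treated as fixed numbers rather than learned functions of the input, so only the cell-state path is modeled. The function names are hypothetical. A finite-difference estimate then shows how much a change in the starting cell state still matters many steps later.

```python
import random

def roll_cell_state(c0, writes, forget_gates):
    """Iterate c_t = f_t * c_{t-1} + write_t, where write_t stands in for i_t * g_t."""
    c = c0
    for f_t, write_t in zip(forget_gates, writes):
        c = f_t * c + write_t
    return c

def sensitivity_to_start(writes, forget_gates, eps=1e-3):
    """Finite-difference estimate of d c_T / d c_0 along the cell-state path."""
    base = roll_cell_state(0.0, writes, forget_gates)
    bumped = roll_cell_state(eps, writes, forget_gates)
    return (bumped - base) / eps

random.seed(0)
steps = 200
writes = [random.uniform(-0.1, 0.1) for _ in range(steps)]

# 1997-style cell: linear self-connection with weight fixed at 1.0
carousel = sensitivity_to_start(writes, [1.0] * steps)
# For contrast: a multiplicative factor held at 0.9 on every step
leaky = sensitivity_to_start(writes, [0.9] * steps)

print(f"self-weight 1.0: d c_T / d c_0 = {carousel:.6f}")
print(f"factor 0.9:      d c_T / d c_0 = {leaky:.3e}")
```

With the self-connection fixed at one, the derivative stays at one regardless of sequence length, which is the property the carousel is named for. Any factor below one brings the exponential decay back.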

To control the flow of information into and out of this cell state, Hochreiter and Schmidhuber introduced a system of gates — multiplicative units that learned when to store information, when to retrieve it, and when to erase it. The original LSTM had an input gate and an output gate. The input gate controlled what new information was written to the cell state. The output gate controlled what information from the cell state was exposed to the rest of the network. A later refinement by Felix Gers, Schmidhuber, and Fred Cummins added the forget gate, which controlled when old information in the cell state should be discarded. The gating mechanism was the key innovation. Each gate was itself a neural network — a sigmoid layer that learned to produce values between 0 and 1, effectively learning when to open and close the flow of information. This made the LSTM a self-regulating memory system.

```python
import torch
import torch.nn as nn


class LSTMCellExplained(nn.Module):
    """
    Demonstrates the internal mechanics of the LSTM cell
    as designed by Hochreiter & Schmidhuber (1997), including
    the forget gate added later by Gers et al.
    The key insight: a cell state with gated access
    that maintains constant error flow across timesteps.
    """

    def __init__(self, input_size, hidden_size):
        super().__init__()
        self.hidden_size = hidden_size
        # Four gate transformations computed in parallel
        # (input, forget, cell candidate, output)
        self.gates = nn.Linear(input_size + hidden_size, 4 * hidden_size)

    def forward(self, x_t, h_prev, c_prev):
        # Concatenate input with previous hidden state
        combined = torch.cat([x_t, h_prev], dim=-1)
        gate_values = self.gates(combined)

        # Split into four separate gate outputs
        i_gate, f_gate, g_candidate, o_gate = gate_values.chunk(4, dim=-1)

        # Input gate: what new information to store
        i_t = torch.sigmoid(i_gate)
        # Forget gate (Gers et al., 2000): what old info to discard
        f_t = torch.sigmoid(f_gate)
        # Candidate cell state: proposed new information
        g_t = torch.tanh(g_candidate)
        # Output gate: what to expose to the network
        o_t = torch.sigmoid(o_gate)

        # CORE MECHANISM, the "constant error carousel":
        # the additive cell state update preserves long-range gradient flow.
        # Information persists unless explicitly gated out.
        c_t = f_t * c_prev + i_t * g_t

        # Hidden state: filtered view of the cell state
        h_t = o_t * torch.tanh(c_t)
        return h_t, c_t


# Example: processing a sequence with LSTM
input_size, hidden_size, seq_len = 10, 64, 100
lstm_cell = LSTMCellExplained(input_size, hidden_size)

# Initialize hidden and cell states
h = torch.zeros(1, hidden_size)
c = torch.zeros(1, hidden_size)

# Process 100 timesteps: a span over which a trained LSTM can learn
# dependencies, unlike a vanilla RNN
sequence = torch.randn(seq_len, 1, input_size)
for t in range(seq_len):
    h, c = lstm_cell(sequence[t], h, c)

print(f"Final hidden state norm: {h.norm().item():.4f}")
print(f"Cell state norm after {seq_len} steps: {c.norm().item():.4f}")
# The cell state is carried forward by an additive update at every step;
# that path is the constant error carousel. The weights here are untrained,
# so the printed norms only show that the state does not collapse.
```

Why It Mattered

The LSTM architecture solved a problem that had been silently crippling an entire class of neural networks. Before LSTM, recurrent networks could handle short sequences — a few words, a handful of timesteps — but they failed catastrophically on the longer sequences that characterize real-world data. Human language has dependencies that stretch across paragraphs. Musical structure spans entire compositions. Financial time series contain patterns that play out over weeks and months. The vanishing gradient problem meant that none of these could be modeled effectively. LSTM changed that in a single stroke.

The impact was not immediate. The 1997 paper was recognized as important within the neural network community, but the broader AI and machine learning world of the late 1990s and early 2000s was dominated by other approaches — support vector machines, random forests, and graphical models. Hardware limitations also constrained the practical application of LSTMs. It was only in the late 2000s and early 2010s, as GPU computing became widely available and large datasets became accessible, that LSTM networks began to demonstrate their full potential.

When they did, the results were dramatic. In 2009, an LSTM network designed by Schmidhuber’s group won the ICDAR connected handwriting recognition competition. In 2013, Alex Graves, who had been Schmidhuber’s doctoral student and later a postdoctoral researcher with Hinton, demonstrated that deep LSTM networks could achieve breakthrough results in speech recognition. Google adopted LSTM for Google Voice search in 2015 and for Google Translate in 2016, and Apple incorporated LSTM into Siri. By 2016, LSTM networks were processing the queries of billions of users daily. Ilya Sutskever, who would co-found OpenAI, used LSTM-based sequence-to-sequence models in his influential work on machine translation at Google, directly building on the foundation that Hochreiter had established.

The LSTM also opened the door to a broader family of gated recurrent architectures. The Gated Recurrent Unit (GRU), introduced by Yoshua Bengio’s group in 2014, simplified the LSTM by merging the forget and input gates into a single update gate and combining the cell state with the hidden state. Attention mechanisms, which allow networks to focus on specific parts of a sequence regardless of distance, built on the same intuition about selective information flow. And the transformer architecture, introduced in the 2017 paper “Attention Is All You Need,” replaced recurrence entirely with self-attention but was motivated by the same problem that LSTM had addressed: how to model long-range dependencies in sequential data. The transformer can be seen as a descendant, if not of LSTM’s architecture, then of the problem it solved and the principle it established: that neural networks need explicit mechanisms for controlling information flow across time.
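To make the GRU simplification concrete, here is a scalar sketch of one GRU step. The weights in `params` are illustrative, untrained values, and the sign convention for the gate (whether `z` or `1 - z` multiplies the old state) differs between papers and libraries, so this is one common form rather than a reference implementation.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def gru_step(x_t, h_prev, p):
    """One step of a scalar GRU. `p` is a dict of illustrative (untrained) weights."""
    z_t = sigmoid(p["w_z"] * x_t + p["u_z"] * h_prev + p["b_z"])  # update gate
    r_t = sigmoid(p["w_r"] * x_t + p["u_r"] * h_prev + p["b_r"])  # reset gate
    # Candidate state; the reset gate decides how much of the past it sees
    h_cand = math.tanh(p["w_h"] * x_t + p["u_h"] * (r_t * h_prev) + p["b_h"])
    # One gate plays both roles: (1 - z_t) keeps old state, z_t writes new content.
    # There is no separate cell state; h_t is the only memory.
    return (1.0 - z_t) * h_prev + z_t * h_cand

params = {"w_z": 0.5, "u_z": 0.5, "b_z": 0.0,
          "w_r": 0.5, "u_r": 0.5, "b_r": 0.0,
          "w_h": 1.0, "u_h": 1.0, "b_h": 0.0}

h = 0.0
for x in [1.0, -0.5, 0.25, 0.0, 0.0]:
    h = gru_step(x, h, params)
    print(f"x = {x:+.2f} -> h = {h:+.4f}")

# With the gate forced shut (large negative bias), the state is carried over unchanged
frozen = dict(params, b_z=-50.0)
print(gru_step(1.0, 0.3, frozen))
```

Compared with the LSTM, keeping and writing are tied together: the more the unit writes, the more it forgets, which removes one gate and one state vector.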

Other Major Contributions

While LSTM remains Hochreiter’s most famous contribution, his broader body of work extends across several important areas of machine learning and computational biology.

The Vanishing Gradient Problem. Hochreiter’s 1991 diploma thesis, in which he formally identified and analyzed the vanishing gradient problem, is itself a landmark contribution independent of LSTM. The problem had been observed empirically by other researchers, but Hochreiter provided the first rigorous mathematical analysis demonstrating that the gradient signal in standard recurrent networks decays exponentially with the number of timesteps. This analysis influenced not only the development of LSTM but also the broader understanding of training dynamics in deep networks. The techniques later developed by other researchers to mitigate gradient issues in very deep feedforward networks — including residual connections (used in ResNets), batch normalization, and careful weight initialization — all address variants of the same fundamental problem that Hochreiter characterized in 1991.

Self-Normalizing Neural Networks (SNNs). In 2017, Hochreiter and his colleagues Günter Klambauer, Thomas Unterthiner, and Andreas Mayr published a paper introducing self-normalizing neural networks. The key idea was to use the Scaled Exponential Linear Unit (SELU) activation function, which has the mathematical property that it automatically normalizes the activations across layers during forward propagation. In a standard deep feedforward network, activations can either explode or shrink as they pass through many layers, requiring techniques like batch normalization to keep them in a reasonable range. SELU-based networks avoid this problem by design: the activation function itself drives the mean and variance of the activations toward fixed points, ensuring stable training without additional normalization layers. This work demonstrated that careful mathematical design of activation functions could eliminate the need for auxiliary normalization techniques, simplifying network architectures and improving training stability.

```python
import numpy as np


def selu(x, alpha=1.6732632423543772, scale=1.0507009873554805):
    """
    Scaled Exponential Linear Unit (SELU), from Klambauer,
    Unterthiner, Mayr & Hochreiter (2017).
    The specific alpha and scale values are not arbitrary:
    they are derived mathematically to ensure that activations
    converge to zero mean and unit variance during forward
    propagation, achieving "self-normalization" without
    batch normalization or layer normalization.
    """
    return scale * np.where(x > 0, x, alpha * (np.exp(x) - 1))


# Demonstrate self-normalization across deep layers
np.random.seed(42)
layer_count = 50
neurons = 256

x = np.random.randn(1000, neurons)  # Standard normal input

for layer in range(layer_count):
    # LeCun normal initialization (required for SELU)
    W = np.random.randn(neurons, neurons) / np.sqrt(neurons)
    x = selu(x @ W)

print(f"After {layer_count} layers:")
print(f" Mean: {x.mean():.4f} (target: ~0)")
print(f" Std: {x.std():.4f} (target: ~1)")
# Activations remain normalized without batch normalization:
# this is the self-normalization property of SELU
```

DeepTox and Computational Biology. Hochreiter applied deep learning methods to toxicology prediction with the DeepTox system, which won the NIH Tox21 Data Challenge in 2014. DeepTox used deep neural networks to predict the toxicity of chemical compounds based on their molecular structure, a task of enormous practical importance for drug development and environmental safety. The system demonstrated that deep learning could outperform traditional cheminformatics methods on large-scale toxicity prediction, and it helped establish deep learning as a serious tool in computational chemistry and pharmacology. This work reflected Hochreiter’s broader interest in applying rigorous machine learning methods to problems in biology and medicine.

Flat Minima and Generalization. Hochreiter’s early work also explored the relationship between the geometry of the loss landscape and the generalization ability of neural networks. His 1997 paper with Schmidhuber, “Flat Minima,” argued that networks whose parameters lie in broad, flat regions of the loss surface generalize better than those in sharp, narrow minima. This insight anticipated by nearly two decades the modern line of research on sharpness-aware minimization (SAM) and the relationship between loss landscape geometry and generalization, a topic that has become central to understanding why deep learning works as well as it does.
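The intuition behind flat minima can be shown with a toy experiment. This is not the formal criterion from the “Flat Minima” paper; it assumes two one-dimensional quadratic losses that share the same minimum but differ in curvature, and measures how much the loss rises when the weight is jittered slightly, as it might be when moving from training data to unseen data.

```python
import random

def loss(w, curvature):
    """1-D quadratic bowl with its minimum at w = 0; `curvature` sets the sharpness."""
    return 0.5 * curvature * w * w

def mean_loss_under_noise(curvature, noise_std, trials=10_000, seed=0):
    """Average loss when the optimal weight is perturbed by Gaussian noise."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        total += loss(rng.gauss(0.0, noise_std), curvature)
    return total / trials

# Curvature values are arbitrary choices for illustration
flat = mean_loss_under_noise(curvature=0.1, noise_std=0.5)
sharp = mean_loss_under_noise(curvature=10.0, noise_std=0.5)

print(f"flat minimum:  mean loss under perturbation = {flat:.4f}")
print(f"sharp minimum: mean loss under perturbation = {sharp:.4f}")
```

Both minima have exactly zero loss at `w = 0`, yet the same perturbation costs far more in the sharp one. That gap is the sense in which a flat minimum is more tolerant of small mismatches.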

Modern Hopfield Networks. In more recent work, Hochreiter has contributed to the revival and modernization of Hopfield networks — associative memory models originally developed by John Hopfield in the 1980s. Hochreiter and collaborators demonstrated that modern Hopfield networks with continuous states and exponential interaction functions have dramatically increased storage capacity compared to classical Hopfield networks, and they showed that the attention mechanism in transformers can be understood as a special case of the modern Hopfield network update rule. This work provides a theoretical bridge between classical associative memory models and the transformer architectures that dominate contemporary AI.
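The update rule behind this result is usually written as `xi_new = X softmax(beta * X^T xi)`, where `X` holds the stored patterns and `xi` is the query state. The sketch below is a pure-Python illustration with three hand-picked patterns and an assumed `beta = 4.0`. It is meant to show the mechanism, not to reproduce the authors’ implementation.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def hopfield_update(patterns, query, beta=4.0):
    """One update xi_new = X softmax(beta * X^T xi); `patterns` is the list of stored vectors."""
    # Similarity of the query to every stored pattern (the X^T xi term)
    scores = [beta * sum(p_i * q_i for p_i, q_i in zip(p, query)) for p in patterns]
    weights = softmax(scores)
    # New state: softmax-weighted average of the stored patterns
    dim = len(query)
    return [sum(w * p[d] for w, p in zip(weights, patterns)) for d in range(dim)]

patterns = [
    [1.0, 1.0, -1.0, -1.0],
    [-1.0, 1.0, 1.0, -1.0],
    [1.0, -1.0, 1.0, 1.0],
]
noisy = [0.8, 0.9, -0.4, -1.0]  # corrupted copy of the first pattern

retrieved = hopfield_update(patterns, noisy)
print([round(v, 3) for v in retrieved])
```

Read with attention in mind, the query plays the role of the query vector, the stored patterns act as both keys and values, and `beta` corresponds to the scaling factor applied before the softmax. A single update pulls the noisy input almost exactly onto the nearest stored pattern.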

Philosophy and Approach

Key Principles

Sepp Hochreiter’s approach to research is distinguished by a commitment to mathematical rigor that is unusual even in a field as technical as machine learning. Where many researchers in AI proceed primarily by experimentation — training models on benchmarks and reporting results — Hochreiter has consistently sought to understand the mathematical foundations of why architectures and algorithms behave as they do. His identification of the vanishing gradient problem was not the result of noticing that recurrent networks sometimes failed; it was the result of a careful mathematical analysis of the eigenvalue structure of recurrent weight matrices and the exponential dynamics of gradient flow. Similarly, the LSTM was not designed by trial and error but by deriving the mathematical conditions under which a recurrent unit could maintain stable gradient flow, and then engineering an architecture that satisfied those conditions.

This mathematical-first philosophy stands in contrast to the increasingly empirical culture of modern AI research, where architectures are often designed by intuition and validated by scaling. Hochreiter has been vocal about the importance of theoretical understanding, arguing that genuine scientific progress requires not just models that work but models whose success is understood. This perspective aligns with the tradition of researchers like Judea Pearl, who has similarly argued that machine learning needs deeper theoretical foundations — particularly around causality — to move beyond pattern matching toward genuine understanding.

Hochreiter joined Johannes Kepler University (JKU) in Linz, Austria, in 2006, where he first headed the Institute of Bioinformatics and, since 2018, has led the Institute for Machine Learning. Under his leadership, JKU has become one of Europe’s leading centers for deep learning research. The institute’s work spans theoretical foundations, novel architectures, and applications in biology and chemistry. Hochreiter has also been involved in the development of the ELLIS (European Laboratory for Learning and Intelligent Systems) network, which aims to establish European AI research as a counterweight to the concentration of AI talent and resources in the United States and China.

Hochreiter’s position on the current state of AI research is nuanced. He recognizes the extraordinary practical achievements of deep learning but is cautious about claims that current systems exhibit genuine intelligence or understanding. He has emphasized that the success of models like GPT and its successors is largely a function of scale (massive datasets and massive compute) and that the field still lacks fundamental understanding of why these models work as well as they do. He has also pointed to the limitations of current architectures, including the quadratic computational cost of transformer self-attention, and has argued for the development of new architectures grounded in stronger theoretical principles.

One of Hochreiter’s most distinctive intellectual contributions is the idea that the relationship between classical associative memory models and modern deep learning is deeper than most researchers realize. His work on modern Hopfield networks suggests that the attention mechanism — the core innovation of the transformer — is not an arbitrary engineering choice but is connected to fundamental principles of associative recall in neural systems. This kind of theoretical unification, connecting seemingly disparate ideas into a coherent framework, is characteristic of Hochreiter’s approach and reflects his belief that the most important advances in AI will come from deeper understanding rather than larger models.

Legacy and Impact

Sepp Hochreiter’s legacy is built on contributions that have shaped the trajectory of artificial intelligence at the most fundamental level. The LSTM architecture, which he co-invented in 1997, was the dominant neural approach to sequence modeling until transformers displaced it after 2017, and it remains widely used today. Many of the neural machine translation systems, speech recognition engines, and text generation models built in the years before 2017 were either directly based on LSTM or on architectures that LSTM made possible. Google, Apple, Amazon, Microsoft, Facebook, and many other major technology companies deployed LSTM-based systems at scale. The economic value created by LSTM applications in translation, voice assistants, search, and recommendation systems is hard to quantify precisely but is very large.

The vanishing gradient analysis that preceded LSTM was equally foundational. By providing a rigorous mathematical explanation for why recurrent networks failed to learn long-range dependencies, Hochreiter gave the field a clear diagnosis of a problem that had been confusing researchers for years. This analysis did not just motivate LSTM; it influenced the design of every subsequent architecture for sequence modeling, including GRUs, attention mechanisms, and transformers. The principle that neural architectures must be explicitly designed to support stable gradient flow — rather than hoping that training algorithms will somehow compensate for architectural flaws — is now a cornerstone of deep learning practice, and it traces directly to Hochreiter’s 1991 thesis.

The broader influence of Hochreiter’s work extends to the current generation of AI researchers and practitioners. Andrej Karpathy’s influential blog post “The Unreasonable Effectiveness of Recurrent Neural Networks,” which introduced a generation of programmers to the power of character-level LSTM language models, demonstrated concepts that Hochreiter had made possible. Yann LeCun’s work on convolutional networks addressed the spatial domain in much the same way that LSTM addressed the temporal domain, and the combination of CNNs and LSTMs powered many of the breakthrough systems of the 2010s, from image captioning to video understanding. Ian Goodfellow’s generative adversarial networks, while architecturally different from LSTMs, benefited from the same GPU computing ecosystem and deep learning toolkit that LSTM helped to establish.

Hochreiter’s influence on the field is recognized through numerous awards and honors. He received the IEEE CIS Neural Networks Pioneer Award in 2021, one of the most prestigious recognitions in computational intelligence. His original LSTM paper is one of the most cited papers in computer science, with tens of thousands of citations. He has been recognized as one of the most influential AI researchers in the world by multiple academic ranking systems.

Perhaps most importantly, Hochreiter’s career demonstrates a model of scientific work that prioritizes depth over breadth, understanding over performance metrics, and mathematical rigor over empirical scaling. In an era when AI research is increasingly dominated by the resources of large corporations and the relentless pursuit of benchmark improvements, Hochreiter’s example serves as a reminder that the most impactful advances often come from individuals who take the time to understand the fundamental problems before attempting to solve them. The LSTM was not the product of a massive research lab or billions of dollars in compute. It was the product of a graduate student who looked carefully at the mathematics, identified a problem that everyone else had overlooked, and designed an elegant solution grounded in first principles.

Key Facts

- Full name: Josef “Sepp” Hochreiter

- Born: February 14, 1967, Mühldorf am Inn, Bavaria, Germany

- Education: Diploma and PhD in Computer Science, Technical University of Munich

- Known for: Co-inventing LSTM (1997), discovering the vanishing gradient problem (1991), self-normalizing neural networks (2017), modern Hopfield networks

- Positions: Professor at Johannes Kepler University Linz, Austria (since 2006); Head of the Institute for Machine Learning (since 2018), previously Head of the Institute of Bioinformatics

- Awards: IEEE CIS Neural Networks Pioneer Award (2021)

- Key collaborator: Jürgen Schmidhuber (co-inventor of LSTM)

- LSTM applications: Google Translate, Siri, Alexa, handwriting recognition, music generation, drug discovery, financial modeling

- Citations: The 1997 LSTM paper has received tens of thousands of citations, making it one of the most cited papers in computer science

- Research interests: Deep learning theory, computational biology, modern Hopfield networks, self-normalizing architectures

Frequently Asked Questions

What is LSTM and why was it so important?

LSTM (Long Short-Term Memory) is a type of recurrent neural network architecture co-invented by Sepp Hochreiter and Jürgen Schmidhuber in 1997. It solved the vanishing gradient problem: the fundamental inability of standard recurrent networks to learn relationships between events separated by many timesteps. LSTM introduced a cell state with learnable gates that control information flow, allowing the network to remember important information for hundreds or thousands of timesteps. This made it possible to build effective neural networks for language translation, speech recognition, time series prediction, and many other tasks involving sequential data. LSTM was the dominant neural architecture for sequence modeling until transformers emerged in 2017, and it remains widely used today.

What is the vanishing gradient problem that Hochreiter discovered?

The vanishing gradient problem occurs when training recurrent neural networks using backpropagation through time. As gradients are propagated backward through many timesteps, they must pass through repeated multiplications by the recurrent weight matrix and the derivative of the activation function. If the largest eigenvalue of this matrix is smaller than one in magnitude, which is typically the case, the gradients shrink exponentially, becoming effectively zero after just 10-20 timesteps. This means the network cannot learn to associate an early input with a later output if they are separated by more than a few steps. Hochreiter formally proved this in his 1991 diploma thesis, providing the theoretical foundation that motivated the development of LSTM and later gated architectures. The same problem also affects very deep feedforward networks, where it is addressed by techniques like residual connections and normalization layers.

How does LSTM relate to modern transformer models?

Transformer models, introduced in 2017, largely replaced LSTM as the dominant architecture for language modeling and sequence processing. However, the relationship between the two is one of inheritance rather than opposition. Transformers were developed to address the same fundamental problem that LSTM solved — modeling long-range dependencies in sequential data — but they used a different mechanism: self-attention instead of recurrent gating. Self-attention allows every position in a sequence to directly attend to every other position, avoiding the sequential processing bottleneck of recurrent networks. Hochreiter’s own recent work on modern Hopfield networks has shown that the transformer’s attention mechanism can be understood as a form of associative memory retrieval, suggesting deep theoretical connections between LSTM’s gating approach and the transformer’s attention approach. Both architectures represent different engineering solutions to the same underlying mathematical challenge that Hochreiter first characterized in 1991.

What is Hochreiter working on now?

As of the mid-2020s, Hochreiter leads the Institute for Machine Learning at Johannes Kepler University in Linz, Austria. His current research focuses on several areas: modern Hopfield networks, which provide a theoretical framework connecting classical associative memory to transformer attention mechanisms; new architectures designed to overcome the quadratic computational cost of transformer self-attention; applications of deep learning to computational biology and drug discovery; and the theoretical foundations of why deep learning generalizes as well as it does. He is also involved in the ELLIS (European Laboratory for Learning and Intelligent Systems) network, working to strengthen European AI research. His work on the xLSTM architecture, published in 2024, represents an effort to revive and modernize the LSTM concept with exponential gating and enhanced memory structures, potentially offering advantages over transformers for certain sequence modeling tasks.