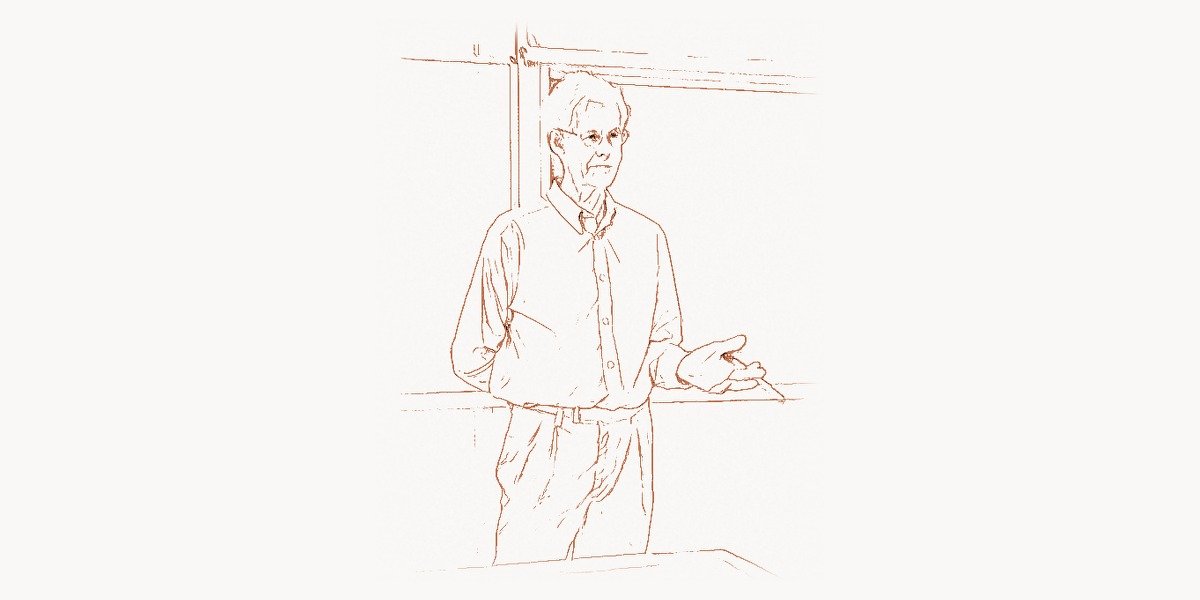

In 1971, at a conference in Shaker Heights, Ohio, a young mathematician from the University of Toronto stood before an audience of logicians and computer scientists and presented a short paper that would become one of the most consequential documents in the history of computing. Stephen Arthur Cook, then just 31 years old, formally proved that the Boolean satisfiability problem (SAT) is what is now called “NP-complete”, a result that crystallized an entire field of study and gave rise to the most famous unsolved question in computer science: does P equal NP? More than five decades later, the Clay Mathematics Institute still offers a million-dollar prize to anyone who can settle the question Cook made precise. His work didn’t just ask a question. It built the language, the framework, and the conceptual architecture that transformed computational complexity from scattered observations into a rigorous scientific discipline.

Early Life and Academic Formation

Stephen Arthur Cook was born on December 14, 1939, in Buffalo, New York. Growing up in a middle-class American household, Cook showed an early aptitude for mathematics and logical thinking. His father was a chemist, and the scientific temperament at home encouraged a rigorous, evidence-based worldview that would serve Cook well in his academic career.

Cook completed his undergraduate studies at the University of Michigan, where he earned a Bachelor of Science degree in 1961. Michigan’s mathematics department was already a powerhouse, and the intellectual environment there gave Cook a strong foundation in both pure mathematics and the emerging discipline of theoretical computer science. He then moved to Harvard University for his doctoral work, at a time when mathematical rigor was increasingly being applied to questions about computing.

At Harvard, Cook completed his Ph.D. in 1966 under the supervision of Hao Wang, a logician who worked on mechanical theorem proving and the foundations of mathematics. Wang’s influence on Cook was profound: the emphasis on formal logic, decidability, and the boundary between what machines can and cannot compute became central themes in Cook’s entire career. Cook’s dissertation, “On the Minimum Computation Time of Functions,” examined the complexity of integer multiplication and the minimum number of steps required for certain computations, foreshadowing his lifelong interest in the inherent difficulty of computational problems.

The Road to NP-Completeness

After Harvard, Cook briefly held a position at the University of California, Berkeley. However, in a decision that would prove fateful for the history of computer science, Berkeley declined to offer Cook tenure — a decision that many have since called one of the most spectacular misjudgments in academic history. Cook moved to the University of Toronto in 1970, where he would remain for the rest of his career and where he would produce his most important work.

The intellectual climate of the late 1960s was ripe for Cook’s breakthrough. Alan Turing had already established the foundations of computability theory in the 1930s, proving that certain problems were fundamentally undecidable. But Turing’s work drew a binary line — problems were either computable or not. What Turing’s framework didn’t address was the vast landscape of problems that were technically solvable but might require impossibly long computation times. If a problem could be solved in principle but required more time than the age of the universe, was it really “solved” in any practical sense?

Researchers like Jack Edmonds and Juris Hartmanis had begun exploring the idea that computational problems could be classified by how much time (or space) they required to solve. The notion of polynomial time, meaning solvability in a number of steps bounded by some polynomial function of the input size, was emerging as the dividing line between “tractable” and “intractable.” But a systematic theory of complexity classes was still missing.
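To make the gap between polynomial and exponential running times concrete, here is a rough back-of-the-envelope sketch. The machine speed (one billion steps per second) and the cubic cost function are assumptions chosen purely for illustration, not figures from the literature.

```python
# Assumed for illustration: a machine doing one billion basic steps per second.
STEPS_PER_SECOND = 10**9
SECONDS_PER_YEAR = 365 * 24 * 3600


def years_needed(steps: int) -> float:
    """Convert a step count into years of running time on the assumed machine."""
    return steps / (STEPS_PER_SECOND * SECONDS_PER_YEAR)


for n in (10, 50, 100):
    poly = n**3   # hypothetical polynomial-time algorithm with cubic cost
    expo = 2**n   # hypothetical exponential-time algorithm
    print(f"n={n:>3}: n^3 takes {years_needed(poly):.2e} years, "
          f"2^n takes {years_needed(expo):.2e} years")
```

Under these assumptions the exponential algorithm at n = 100 needs roughly 4 × 10^13 years, thousands of times the age of the universe, while the cubic one finishes in about a millisecond.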

The Cook-Levin Theorem: A Landmark in Computer Science

In 1971, Cook published his seminal paper “The Complexity of Theorem-Proving Procedures” at the ACM Symposium on Theory of Computing (STOC). In this paper, Cook introduced the concept of NP-completeness and proved that the Boolean satisfiability problem (SAT) was NP-complete. Independently, Leonid Levin in the Soviet Union arrived at essentially the same result, which is why the foundational theorem is often called the Cook-Levin theorem.

To understand why this result was so revolutionary, we need to unpack the complexity classes involved. The class P consists of all decision problems solvable in polynomial time by a deterministic Turing machine. The class NP consists of all decision problems for which a proposed solution can be verified in polynomial time. Every problem in P is automatically in NP (if you can solve it quickly, you can certainly check a solution quickly), but the great question is whether the reverse holds: can every problem whose solution is easily checked also be easily solved?
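The difference between checking and solving can be sketched for SAT itself. In the snippet below, `verify` plays the role of the polynomial-time check that places SAT in NP, while `brute_force_solve` is a naive search that may try all 2^n assignments. Both function names and the integer encoding of literals are assumptions made for this illustration.

```python
from itertools import product


def verify(clauses, assignment):
    """Check a proposed assignment against a CNF formula.

    clauses: list of lists of nonzero ints (negative = negated variable).
    assignment: dict mapping variable number -> bool.
    Runs in time linear in the size of the formula.
    """
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in clauses
    )


def brute_force_solve(clauses):
    """Try every assignment in turn.

    Returns (satisfying assignment or None, number of candidates tried).
    In the worst case this examines all 2^n assignments.
    """
    variables = sorted({abs(lit) for clause in clauses for lit in clause})
    tried = 0
    for values in product([False, True], repeat=len(variables)):
        tried += 1
        candidate = dict(zip(variables, values))
        if verify(clauses, candidate):
            return candidate, tried
    return None, tried


# (x1 OR NOT x2) AND (NOT x1 OR x3) AND (x2 OR x3)
formula = [[1, -2], [-1, 3], [2, 3]]
solution, tried = brute_force_solve(formula)
print(solution, tried)

# An unsatisfiable formula forces the search through every candidate.
print(brute_force_solve([[1], [-1]]))
```

Whether the exhaustive loop in `brute_force_solve` can always be replaced by something polynomial is exactly the P vs NP question.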

Cook proved that SAT has a remarkable property: every problem in NP can be efficiently reduced to SAT. This means that if anyone could find a polynomial-time algorithm for SAT, they would automatically have a polynomial-time algorithm for every problem in NP. In other words, SAT is at least as hard as every problem in NP. Because SAT itself also belongs to NP, it is NP-complete: a problem in NP to which every other problem in NP reduces.

The proof technique Cook used — polynomial-time reducibility — became the standard tool for the entire field. His approach was to show that the computation of any nondeterministic Turing machine could be encoded as a Boolean formula that is satisfiable if and only if the machine accepts its input. This encoding translates the structure of computation itself into the language of propositional logic.

How Cook’s Reduction Works

The essence of Cook’s proof is an elegant encoding. Given any NP problem and a nondeterministic Turing machine that solves it, Cook showed how to construct a Boolean formula that captures the entire computation. The formula uses variables to represent the state of the machine at each time step, the position of the read/write head, and the contents of each tape cell. Clauses in the formula enforce that the computation follows the machine’s transition rules, starts in the correct initial configuration, and ends in an accepting state.
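One small ingredient of such an encoding is the requirement that the machine be in exactly one state at each time step. The sketch below shows how that single requirement can be turned into CNF clauses. The variable numbering in `state_var` is a hypothetical scheme invented for this example, and a full construction would add analogous clauses for the head position, the tape contents, and the transition rules.

```python
from itertools import combinations


def exactly_one(variables):
    """CNF clauses forcing exactly one of the given variables to be true."""
    clauses = [list(variables)]  # at least one is true
    # No two of them are true at the same time.
    clauses += [[-a, -b] for a, b in combinations(variables, 2)]
    return clauses


def state_var(t, q, num_states):
    """Hypothetical numbering: variable for 'machine is in state q at step t'."""
    return t * num_states + q + 1


def one_state_per_step(num_steps, num_states):
    """Clauses saying the machine is in exactly one state at every time step."""
    clauses = []
    for t in range(num_steps):
        step_vars = [state_var(t, q, num_states) for q in range(num_states)]
        clauses += exactly_one(step_vars)
    return clauses


cnf = one_state_per_step(num_steps=2, num_states=3)
print(len(cnf))
for clause in cnf:
    print(clause)
```

The number of clauses grows only polynomially with the number of steps and states, which is what keeps the overall reduction polynomial-time.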

Here is a simplified illustration of how a SAT solver checks satisfiability using the DPLL (Davis-Putnam-Logemann-Loveland) algorithm, one of the foundational algorithms in this space:

    def dpll(clauses, assignment):
        """
        DPLL Algorithm for Boolean Satisfiability (SAT)

        A backtracking search algorithm that determines whether
        a set of clauses (in CNF) is satisfiable.

        Each clause is a set of literals (positive or negative integers).
        Assignment is a dict mapping variable -> True/False.
        """
        # Unit propagation: if a clause has one literal, it must be true
        changed = True
        while changed:
            changed = False
            for clause in clauses:
                unset = [l for l in clause if abs(l) not in assignment]
                false_count = sum(
                    1 for l in clause
                    if abs(l) in assignment
                    and assignment[abs(l)] != (l > 0)
                )
                if false_count == len(clause):
                    return False  # Conflict: clause is unsatisfiable
                if len(unset) == 1 and false_count == len(clause) - 1:
                    # Unit clause: force the remaining literal to be true
                    lit = unset[0]
                    assignment[abs(lit)] = (lit > 0)
                    changed = True

        # Check if all clauses are satisfied
        all_satisfied = True
        for clause in clauses:
            satisfied = any(
                abs(l) in assignment and assignment[abs(l)] == (l > 0)
                for l in clause
            )
            if not satisfied:
                all_satisfied = False
                break
        if all_satisfied:
            return True

        # Choose an unassigned variable (branching heuristic)
        all_vars = {abs(l) for clause in clauses for l in clause}
        unassigned = [v for v in all_vars if v not in assignment]
        if not unassigned:
            return False
        var = unassigned[0]

        # Try assigning True
        assignment[var] = True
        if dpll(clauses, dict(assignment)):
            return True

        # Backtrack and try False
        assignment[var] = False
        if dpll(clauses, dict(assignment)):
            return True

        # Backtrack: remove assignment
        del assignment[var]
        return False


    # Example: (x1 OR NOT x2) AND (NOT x1 OR x3) AND (x2 OR x3)
    # Variables: 1=x1, 2=x2, 3=x3; negative = NOT
    clauses = [{1, -2}, {-1, 3}, {2, 3}]
    result = dpll(clauses, {})
    print(f"Satisfiable: {result}")  # Output: Satisfiable: True

The DPLL algorithm, published in 1962, predates Cook’s theorem but became one of the most important practical tools for attacking SAT instances after Cook showed that SAT was the canonical hard problem. Modern SAT solvers, which can handle instances with millions of variables, are direct descendants of this algorithm, enhanced with techniques like conflict-driven clause learning (CDCL), watched literals, and sophisticated branching heuristics.

The P vs NP Question

The immediate consequence of Cook’s theorem was the formalization of the P vs NP question. If P = NP, then every problem whose solution can be efficiently verified can also be efficiently solved. This would have staggering implications: cryptographic systems could be broken, optimization problems in logistics and scheduling could be solved optimally, and mathematical proofs could be generated automatically. If P ≠ NP (which most computer scientists believe), then there exists a fundamental asymmetry in nature between finding solutions and checking them.

In 2000, the Clay Mathematics Institute named P vs NP as one of its seven Millennium Prize Problems, offering a $1,000,000 reward for a correct solution. It remains unsolved. The problem is not merely an abstract curiosity — it strikes at the heart of what computation can accomplish and what the fundamental limits of algorithmic reasoning are.

Claude Shannon had defined the limits of communication; Turing had defined the limits of computability; Cook defined the limits of efficient computation. Together, these three frameworks form the theoretical bedrock of computer science.

Impact on Cryptography and Security

One of the most immediate practical consequences of NP-completeness theory is its relationship to cryptography. Modern cryptographic systems, including RSA (developed by Ron Rivest, Adi Shamir, and Leonard Adleman), rely on the assumption that certain computational problems are inherently difficult. The security of RSA, for instance, depends on the assumption that factoring large numbers cannot be done in polynomial time.
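The asymmetry behind that assumption can be illustrated with a toy example. Multiplying two primes is a single cheap operation, while recovering them from the product by trial division takes a number of steps that grows exponentially in the number of digits. The primes below are tiny values chosen for illustration, and trial division is far from the best known factoring method, so this is only a sketch of the idea, not a model of real attacks on RSA.

```python
def trial_division(n):
    """Return the smallest prime factor of n > 1 by trial division."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d
        d += 1
    return n  # no divisor found, so n itself is prime


# Toy primes for illustration; real RSA moduli are thousands of bits long.
p, q = 1009, 1013
n = p * q                    # the easy direction: one multiplication
factor = trial_division(n)   # the hard direction: about sqrt(n) trial divisions
print(n, factor, n // factor)
```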

If P = NP, then every NP problem, including the ones that underpin modern encryption, could be solved efficiently. This would render virtually all current cryptographic systems insecure. The entire infrastructure of internet security, digital signatures, and secure communications would collapse. Cook’s formalization of the problem thus has direct implications for cybersecurity, e-commerce, and digital privacy.

Karp’s 21 Problems and the Expansion of NP-Completeness

Shortly after Cook’s breakthrough, Richard Karp published his landmark 1972 paper identifying 21 problems as NP-complete. By showing polynomial-time reductions from SAT to problems in graph theory, number theory, scheduling, and combinatorics, Karp demonstrated that NP-completeness was not an isolated phenomenon but a pervasive feature of computational problems across all of computer science.

Karp’s 21 problems included classics such as Clique, Vertex Cover, Hamiltonian Circuit, Graph Coloring, Knapsack, and Max Cut. The technique Karp used, showing chains of reductions, became the standard method for proving NP-completeness. Here is a simplified example demonstrating a reduction from 3-SAT to the Independent Set problem (a close relative of Clique), illustrating how one NP-complete problem is transformed into another:

    def reduce_3sat_to_independent_set(clauses):
        """
        Polynomial-time reduction from 3-SAT to Independent Set.

        Given a 3-SAT instance (list of clauses, each with 3 literals),
        construct a graph where finding an independent set of size k
        (where k = number of clauses) is equivalent to satisfying
        the 3-SAT formula.

        Returns: (nodes, edges, target_k)
        """
        nodes = []
        edges = []

        # Step 1: Create a triangle (3-clique) for each clause
        # Each node represents a literal in that clause
        for i, clause in enumerate(clauses):
            clause_nodes = []
            for j, literal in enumerate(clause):
                node = (i, j, literal)  # (clause_index, pos, literal)
                clause_nodes.append(node)
                nodes.append(node)

            # Add edges within the same clause (triangle edges)
            # This ensures we pick at most one literal per clause
            for a in range(len(clause_nodes)):
                for b in range(a + 1, len(clause_nodes)):
                    edges.append((clause_nodes[a], clause_nodes[b]))

        # Step 2: Add edges between contradictory literals
        # Connect x_i in one clause to NOT x_i in another clause
        # This prevents selecting contradictory assignments
        for i, node_a in enumerate(nodes):
            for j, node_b in enumerate(nodes):
                if i >= j:
                    continue
                if node_a[0] == node_b[0]:
                    continue  # Same clause: already connected
                # Check if literals are contradictory
                if node_a[2] == -node_b[2]:
                    edges.append((node_a, node_b))

        target_k = len(clauses)  # Need one literal true per clause
        return nodes, edges, target_k


    # Example: (x1 OR x2 OR NOT x3) AND (NOT x1 OR x3 OR x4)
    # Encoding: x1=1, x2=2, x3=3, x4=4 (negative = NOT)
    clauses = [
        [1, 2, -3],  # Clause 1: x1 OR x2 OR NOT x3
        [-1, 3, 4],  # Clause 2: NOT x1 OR x3 OR x4
    ]

    nodes, edges, k = reduce_3sat_to_independent_set(clauses)

    print(f"Nodes ({len(nodes)}): {nodes}")
    print(f"Edges ({len(edges)}):")
    for e in edges:
        print(f"  {e[0]} -- {e[1]}")
    print(f"Target independent set size: {k}")
    print(f"\nA size-{k} independent set exists IFF the 3-SAT formula is satisfiable.")

This reduction captures the essence of NP-completeness proofs: you take a known NP-complete problem and show that any instance of it can be systematically transformed, in polynomial time, into an instance of the target problem, preserving the answer. If you could solve Independent Set in polynomial time, you could solve 3-SAT (and therefore all of NP) in polynomial time.
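Chains of reductions continue in the same way. A standard next link goes from Independent Set to Vertex Cover: a set of vertices is independent exactly when its complement covers every edge. The sketch below checks that equivalence by brute force on a small made-up graph; the function names and the example graph are assumptions for illustration.

```python
from itertools import combinations


def is_independent_set(edges, subset):
    """True if no edge has both endpoints inside subset."""
    return not any(u in subset and v in subset for u, v in edges)


def is_vertex_cover(edges, subset):
    """True if every edge has at least one endpoint inside subset."""
    return all(u in subset or v in subset for u, v in edges)


def reduce_independent_set_to_vertex_cover(nodes, edges, k):
    """Map (G, k) for Independent Set to (G, |V| - k) for Vertex Cover."""
    return nodes, edges, len(nodes) - k


# A small made-up graph: the path a - b - c - d
nodes = ["a", "b", "c", "d"]
edges = [("a", "b"), ("b", "c"), ("c", "d")]

# Check the complement relationship on every subset of vertices.
for size in range(len(nodes) + 1):
    for chosen in combinations(nodes, size):
        subset = set(chosen)
        complement = set(nodes) - subset
        assert is_independent_set(edges, subset) == is_vertex_cover(edges, complement)

_, _, cover_size = reduce_independent_set_to_vertex_cover(nodes, edges, k=2)
print(cover_size)
```

Because the graph is left unchanged and only the target number is adjusted, this reduction clearly runs in polynomial time.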

Proof Complexity and Beyond

While the Cook-Levin theorem remains his most celebrated achievement, Cook’s contributions extend far beyond that single result. He made foundational contributions to proof complexity, a subfield that studies the lengths of proofs in various formal systems. This work is deeply connected to the P vs NP question: showing that every propositional proof system requires superpolynomially long proofs for some tautologies would establish NP ≠ coNP and therefore P ≠ NP, and lower bounds for individual proof systems are steps in that direction.

Cook and his student Robert Reckhow introduced the formal notion of a propositional proof system and showed that a proof system in which every tautology has a short (polynomial-length) proof exists if and only if NP = coNP. This elegant characterization connected proof theory to complexity theory in a way that opened entirely new research directions.
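In one common formulation, the statement can be written as follows. The notation here (the alphabet, the set TAUT of tautologies, and the polynomial p) is chosen for this sketch and is not quoted from the original paper.

```latex
A propositional proof system is a polynomial-time computable, surjective function
\[
  f : \Sigma^{*} \to \mathrm{TAUT},
\]
where $f(\pi) = \tau$ means that the string $\pi$ is a proof of the tautology $\tau$.
The system is \emph{polynomially bounded} if there is a polynomial $p$ such that
\[
  \forall \tau \in \mathrm{TAUT} \;\; \exists \pi \in \Sigma^{*} :
  \quad f(\pi) = \tau \ \text{ and } \ |\pi| \le p(|\tau|).
\]
Theorem (Cook and Reckhow): a polynomially bounded propositional proof system
exists if and only if $\mathrm{NP} = \mathrm{coNP}$.
```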

Cook also made important contributions to the study of parallel computation and the complexity class NC (Nick’s Class), which captures problems solvable in polylogarithmic time with a polynomial number of processors. His work helped establish the theoretical foundations for understanding when problems can be efficiently parallelized — a question that has become increasingly important in the era of multi-core processors and distributed computing.
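Summing a list of numbers is a simple example of a problem that parallelizes well: with enough processors, disjoint pairs can be added simultaneously, so n values need only about log2(n) rounds. The sketch below simulates those rounds sequentially; it is an illustration of the counting argument under that assumption, not actual parallel code.

```python
def parallel_sum_rounds(values):
    """Simulate a tree-style parallel sum; returns (total, number of rounds).

    Each round models one parallel step in which disjoint pairs are
    added at the same time, so n values need about log2(n) rounds.
    """
    level = list(values)
    rounds = 0
    while len(level) > 1:
        # The additions below are independent of one another, so a
        # parallel machine could perform them all in a single step.
        paired = [level[i] + level[i + 1] for i in range(0, len(level) - 1, 2)]
        if len(level) % 2 == 1:
            paired.append(level[-1])  # odd element carries over unchanged
        level = paired
        rounds += 1
    return (level[0] if level else 0), rounds


total, rounds = parallel_sum_rounds(range(1, 17))  # 16 values
print(total, rounds)
```

Sixteen values are combined in four rounds, compared with fifteen sequential additions.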

The Turing Award and Recognition

In 1982, Stephen Cook received the ACM Turing Award — often called the Nobel Prize of computing — “for his advancement of our understanding of the complexity of computation in a significant and profound way.” He joined the ranks of other theoretical giants like Edsger Dijkstra and Donald Knuth, who received the award for their contributions to the mathematical foundations of computer science.

Beyond the Turing Award, Cook has received numerous honors including the CRM-Fields-PIMS Prize (1999), fellowship in the Royal Society of London and the Royal Society of Canada, and membership in the United States National Academy of Sciences. He was appointed as a University Professor at the University of Toronto — the highest academic rank at that institution — in recognition of his extraordinary impact on the field.

Cook’s Influence on Modern Computer Science

The framework Cook established has influenced virtually every area of computer science. In algorithm design, when researchers encounter a new optimization problem, one of the first questions they ask is whether the problem is NP-complete. If it is, they know that (assuming P ≠ NP) finding an exact polynomial-time algorithm is hopeless, and they turn instead to approximation algorithms, heuristics, or special-case solutions.
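As an example of the approximation route, here is a minimal sketch of a classic heuristic for Vertex Cover: repeatedly take an uncovered edge and add both of its endpoints. The resulting cover is never more than twice the size of an optimal one. The function name and the example graph are assumptions made for this illustration.

```python
def approx_vertex_cover(edges):
    """Greedy matching-based heuristic for Vertex Cover.

    Scans the edges once; whenever an edge is still uncovered, both of
    its endpoints join the cover. The chosen edges share no endpoints,
    and any cover must contain at least one endpoint of each of them,
    so the result is at most twice the optimal size.
    """
    cover = set()
    for u, v in edges:
        if u not in cover and v not in cover:
            cover.update((u, v))
    return cover


# Made-up graph: vertex 1 joined to 2, 3 and 4, plus the edge 4 - 5.
edges = [(1, 2), (1, 3), (1, 4), (4, 5)]
cover = approx_vertex_cover(edges)
print(sorted(cover))
```

Here the heuristic returns four vertices while the optimal cover {1, 4} has two, which matches the factor-of-two guarantee.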

In artificial intelligence, many core problems (planning, scheduling, constraint satisfaction, logical inference) are NP-complete or harder. Understanding this has shaped the development of AI systems, pushing researchers toward techniques like local search, simulated annealing, genetic algorithms, and neural network approximations rather than exact methods. Yoshua Bengio’s work on deep learning, for instance, can be viewed partly as finding practical ways to navigate the landscape of computationally hard optimization problems.

In database theory, query optimization is intimately connected to complexity theory. The problem of finding the optimal execution plan for a SQL query is NP-hard in general, which is why database systems like those developed by Michael Stonebraker use sophisticated heuristics rather than exact optimization.

In software engineering and project management, scheduling and resource allocation problems (assigning developers to tasks, planning sprint cycles, optimizing build pipelines) are often NP-hard. Modern project management platforms use approximation algorithms and heuristic approaches to solve these problems at scale.

The Philosophy of Computational Difficulty

Cook’s work raised deep philosophical questions that continue to resonate. The P vs NP question is not just about algorithms — it is about the nature of creativity and verification. If P = NP, then any problem where you can recognize a correct answer can also be efficiently solved. This would mean that the ability to appreciate a beautiful proof, a great symphony, or an elegant piece of code implies the ability to create one. Most people’s intuition rebels against this — surely creation is harder than recognition? — and this intuition is one reason most experts believe P ≠ NP.

Cook himself has been careful about making predictions. While he has expressed the belief that P ≠ NP, he has also emphasized the enormous difficulty of proving it. In his view, resolving the question may require entirely new mathematical techniques that have not yet been invented. The difficulty of the P vs NP problem may itself be evidence of the gap between what we can state and what we can prove — a meta-level reflection of the very asymmetry the problem describes.

Teaching and Mentorship

Throughout his career at the University of Toronto, Cook has been a dedicated teacher and mentor. He has supervised numerous Ph.D. students who have gone on to make significant contributions to complexity theory and theoretical computer science. His graduate courses on computational complexity are legendary in the field, known for their rigor and depth.

Cook’s pedagogical approach reflects his research philosophy: start with clean, precise definitions, build up the theory systematically, and always keep the big questions in view. Many of his former students recall that Cook had a gift for taking immensely complicated ideas and presenting them with clarity and elegance, making the deep structure of computational complexity visible even to newcomers.

Legacy and Continuing Relevance

More than half a century after Cook’s landmark paper, the framework he established remains the organizing principle of theoretical computer science. The P vs NP question is not just alive — it is more relevant than ever. As society becomes increasingly dependent on computation, the question of what computers can and cannot do efficiently takes on ever greater urgency.

Quantum computing presents both a challenge and an extension to Cook’s framework. While quantum computers can solve certain problems (like factoring, via Shor’s algorithm) faster than any known classical algorithm, most complexity theorists believe that quantum computers cannot solve NP-complete problems in polynomial time. If this is correct, then Cook’s framework survives even the quantum revolution, and NP-completeness remains a fundamental barrier.

In machine learning, the training of deep neural networks involves solving non-convex optimization problems that are NP-hard in the worst case. The remarkable practical success of gradient descent on these problems remains theoretically mysterious, and understanding it is one of the great open questions at the intersection of complexity theory and learning theory.

Stephen Cook transformed computer science by asking the right question at the right time, with the right tools. His work gave the field a common language for discussing computational difficulty, a precise framework for classifying problems, and a central open question that continues to drive research. The P vs NP problem stands as a monument not just to Cook’s genius but to the power of asking questions that illuminate the deepest structures of computation itself.

Frequently Asked Questions

What exactly is the P vs NP problem?

The P vs NP problem asks whether every computational problem whose solution can be quickly verified (in polynomial time) can also be quickly solved (in polynomial time). P is the class of problems solvable in polynomial time, while NP is the class of problems whose solutions are verifiable in polynomial time. If P = NP, verification and solution are equally easy; if P ≠ NP, some problems are fundamentally harder to solve than to check. Cook’s 1971 theorem showed that the Boolean satisfiability problem (SAT) is among the hardest problems in NP, meaning that solving SAT efficiently would solve every NP problem efficiently.

Why is the Cook-Levin theorem so important?

The Cook-Levin theorem is important because it established that SAT is NP-complete — the first problem proven to have this property. This means every problem in NP can be efficiently reduced to SAT. Before Cook’s proof, researchers had no systematic way to demonstrate that a problem was “as hard as the hardest problems in NP.” Cook’s theorem provided both the concept and the technique (polynomial-time reduction) that became the standard tool for classifying problem difficulty. It essentially created the field of NP-completeness theory and provided the theoretical basis for thousands of subsequent results.

What are the practical consequences of NP-completeness?

When a problem is proven to be NP-complete, it tells algorithm designers that (assuming P ≠ NP) there is no efficient exact algorithm for all instances of that problem. This has enormous practical consequences: instead of searching for perfect algorithms, engineers develop approximation algorithms that find near-optimal solutions, heuristic methods that work well in practice despite lacking theoretical guarantees, or algorithms that exploit special structure in real-world instances. Modern SAT solvers, for example, can handle enormous instances despite the theoretical worst-case hardness, because real-world SAT instances have exploitable structure.

How did Stephen Cook’s work influence cryptography?

Cook’s formalization of computational hardness provided the theoretical foundation for modern cryptography. Cryptographic systems rely on the assumption that certain problems (like factoring large numbers or computing discrete logarithms) are computationally intractable. If P = NP, these problems would all have efficient solutions, and modern encryption would be broken. Cook’s framework gave cryptographers a precise language for discussing the security assumptions underlying their systems and connected the security of practical systems to fundamental questions in complexity theory.

Has anyone made progress toward solving P vs NP?

Despite more than fifty years of effort by the world’s best mathematicians and computer scientists, the P vs NP problem remains unsolved. Significant progress has been made in understanding what proof techniques cannot resolve the question — results like the Baker-Gill-Solovay theorem (1975) showed that relativization techniques are insufficient, and the Razborov-Rudich “natural proofs” barrier (1997) showed that another broad class of techniques faces fundamental obstacles. These barrier results suggest that resolving P vs NP will require genuinely new mathematical ideas. Cook himself has contributed to this understanding through his work on proof complexity, which explores the lengths of proofs in various formal systems and connects directly to the P vs NP question.