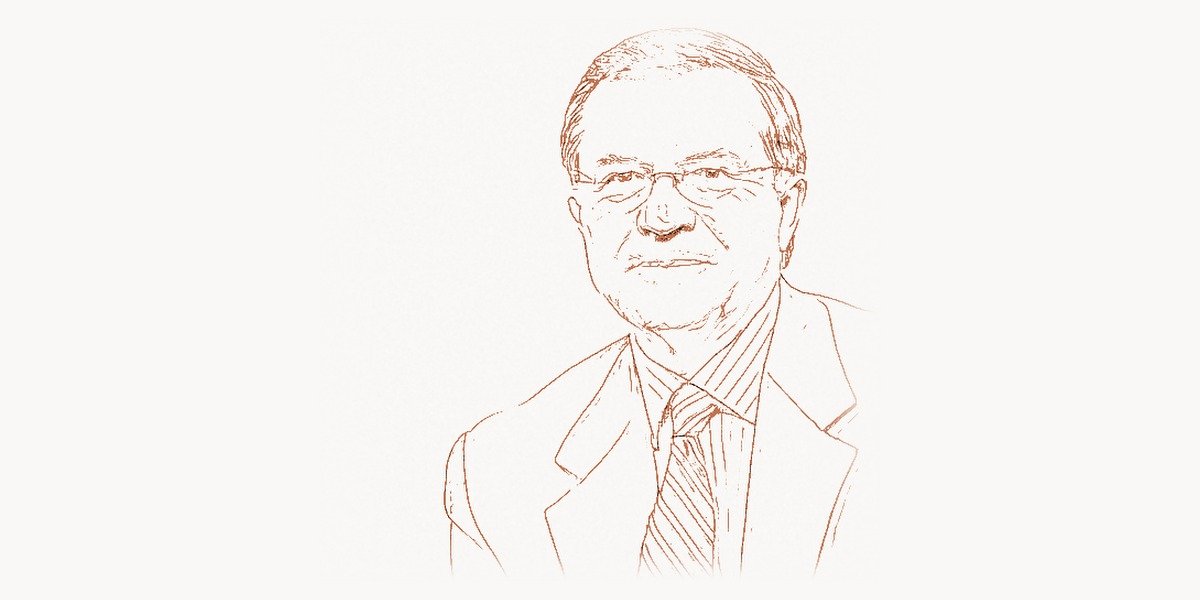

Before the deep learning revolution captured headlines, before GPUs ran neural networks at scale, a biophysicist at the Salk Institute was quietly building the mathematical bridge between how the brain computes and how machines might learn. Terry Sejnowski did not just theorize about neural networks — he co-invented one of the foundational architectures, the Boltzmann machine, that would shape the trajectory of artificial intelligence for decades. His career sits at the remarkable intersection of neuroscience, physics, and computer science, where understanding the brain and building intelligent systems become inseparable pursuits.

Early Life and Education

Terrence Joseph Sejnowski was born on August 13, 1947, in Cleveland, Ohio. Growing up in an era when computers filled entire rooms and the human brain remained a nearly total mystery, Sejnowski developed a fascination with both physical systems and the mind that would define his life’s work. He showed exceptional aptitude in mathematics and science early on, driven by an insatiable curiosity about how things work at their most fundamental level.

Sejnowski pursued his undergraduate studies at Case Western Reserve University, where he earned a bachelor’s degree in physics in 1968. The choice of physics was deliberate — it gave him the rigorous mathematical toolkit that would later prove indispensable for modeling neural systems. His studies in statistical mechanics and thermodynamics would echo throughout his later contributions to machine learning, particularly in the energy-based models that became his signature achievement.

He then moved to Princeton University for graduate work, earning his Ph.D. in physics in 1978. At Princeton, Sejnowski was immersed in a rich intellectual environment where the boundaries between disciplines were more permeable than at most institutions. It was during this period that he began seriously considering how the principles of physics — equilibrium, energy minimization, stochastic processes — could illuminate the operations of biological neural circuits. His doctoral work laid the groundwork for a new kind of thinking about computation: one rooted not in von Neumann architectures, but in the distributed, noisy, massively parallel processing of the brain.

Career and the Boltzmann Machine

After completing his doctorate, Sejnowski joined the faculty at Johns Hopkins University, where he quickly became a central figure in the emerging field of computational neuroscience. It was during the early 1980s that his most famous collaboration began — with Geoffrey Hinton, then a young researcher working on neural network models at Carnegie Mellon University. Together, they would create something that fundamentally altered the landscape of artificial intelligence.

Technical Innovation

The Boltzmann machine, introduced by Sejnowski and Hinton in 1985, was a type of stochastic recurrent neural network that drew its theoretical foundations from statistical mechanics. The core insight was profound: by treating a neural network as a physical system with an energy function, you could use the mathematics of thermodynamic equilibrium — specifically the Boltzmann distribution — to train networks to learn complex probability distributions over their inputs.

The architecture consisted of visible units (which interfaced with data) and hidden units (which captured latent structure). Units switched on and off stochastically, and simulated annealing, which gradually lowers a computational “temperature”, let the network settle into states that minimized a global energy function. Learning then adjusted the weights using statistics collected once the network had settled. This was fundamentally different from the perceptron-based approaches that had dominated earlier neural network research.
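The annealing idea can be sketched on a tiny, fully connected network of binary units. This is a minimal illustration rather than the procedure from the original work: the function names, the geometric cooling schedule, and the toy weights are all assumptions chosen to keep the example small.

```python
import numpy as np


def energy(state, weights, bias):
    """Energy of a binary state under symmetric weights with a zero diagonal."""
    return -0.5 * state @ weights @ state - bias @ state


def anneal(weights, bias, t_start=10.0, t_end=0.1, n_sweeps=200, seed=0):
    """Update units stochastically while the temperature falls (illustrative schedule)."""
    rng = np.random.default_rng(seed)
    n = len(bias)
    state = rng.integers(0, 2, size=n).astype(float)
    for temp in np.geomspace(t_start, t_end, n_sweeps):
        for i in rng.permutation(n):
            # Energy gap between unit i being off and being on
            gap = weights[i] @ state + bias[i]
            p_on = 1.0 / (1.0 + np.exp(-gap / temp))
            state[i] = float(rng.random() < p_on)
    return state


# Toy network (assumed values): units 0 and 1 support each other, both inhibit unit 2
weights = np.array([[0.0, 2.0, -2.0],
                    [2.0, 0.0, -2.0],
                    [-2.0, -2.0, 0.0]])
bias = np.array([0.5, 0.5, -0.5])

final_state = anneal(weights, bias)
print(final_state, energy(final_state, weights, bias))
```

At high temperature the units flip almost at random, which lets the network escape poor configurations. As the temperature drops, each unit increasingly follows the sign of its energy gap, so the state settles into a low-energy configuration.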

The key mathematical insight can be illustrated through the energy function at the heart of the Boltzmann machine:

```python
import numpy as np


def boltzmann_energy(visible, hidden, weights, visible_bias, hidden_bias):
    """
    Compute the energy of a Boltzmann machine configuration.

    Only visible-hidden interactions are included, which is the
    restricted (bipartite) form of the model:

    E(v, h) = -sum(a_i * v_i) - sum(b_j * h_j) - sum(v_i * w_ij * h_j)

    Lower energy states are more probable under the Boltzmann distribution:

    P(v, h) = exp(-E(v, h)) / Z

    where Z is the partition function (normalization constant).
    """
    # Bias terms for visible and hidden units
    visible_term = np.dot(visible_bias, visible)
    hidden_term = np.dot(hidden_bias, hidden)

    # Interaction term between visible and hidden units;
    # weights has shape (n_visible, n_hidden)
    interaction = np.dot(visible, np.dot(weights, hidden))

    # Energy is negative sum of all terms
    energy = -(visible_term + hidden_term + interaction)
    return energy


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def sample_hidden(visible, weights, hidden_bias):
    """
    Sample hidden unit activations given visible units.

    P(h_j = 1 | v) = sigmoid(b_j + sum(v_i * w_ij))
    """
    activation = hidden_bias + np.dot(visible, weights)
    probabilities = sigmoid(activation)
    return (np.random.random(len(hidden_bias)) < probabilities).astype(float)
```

What made the Boltzmann machine theoretically elegant was its use of a learning rule derived from information theory. The weight update rule aimed to maximize the likelihood of the training data by adjusting weights to make the network's internal model match the statistical structure of the observed data. The learning process effectively measured the difference between correlations when the network was driven by data ("clamped" phase) and when it was running freely ("free" phase).
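The two-phase rule follows from differentiating the log-likelihood. The derivation below is a sketch for the visible-hidden energy $E(v,h) = -\sum_i a_i v_i - \sum_j b_j h_j - \sum_{i,j} v_i w_{ij} h_j$; the learning rate $\eta$ is an assumed symbol, and the general machine has the same form with any pair of connected units.

```latex
\begin{aligned}
\log P(v) &= \log \sum_{h} e^{-E(v,h)} - \log Z,
  \qquad Z = \sum_{v',h} e^{-E(v',h)} \\[4pt]
\frac{\partial \log P(v)}{\partial w_{ij}}
  &= \sum_{h} P(h \mid v)\, v_i h_j \;-\; \sum_{v',h} P(v',h)\, v'_i h_j \\[4pt]
\Delta w_{ij} &= \eta \left( \langle v_i h_j \rangle_{\text{data}}
  - \langle v_i h_j \rangle_{\text{model}} \right)
\end{aligned}
```

The first expectation is taken with the visible units clamped to training data, and the second with the network running freely. Each weight therefore changes only according to the activity of the two units it connects.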

However, exact inference in full Boltzmann machines was computationally intractable. This challenge led directly to the development of Restricted Boltzmann Machines (RBMs), where connections between units in the same layer were eliminated. This restriction made efficient learning possible through a technique called contrastive divergence, which Hinton introduced in the early 2000s. RBMs became the building blocks for Deep Belief Networks, one of the key architectures that launched the deep learning renaissance of the 2000s.
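One way to see the intractability is to compute the partition function Z by brute force. The sketch below enumerates every binary configuration of a very small restricted model. The function names and the network size are assumptions for illustration; the point is that the number of terms doubles with every added unit.

```python
import itertools

import numpy as np


def rbm_energy(v, h, weights, visible_bias, hidden_bias):
    """Energy of one joint configuration of a restricted Boltzmann machine."""
    return -(visible_bias @ v + hidden_bias @ h + v @ weights @ h)


def partition_function(weights, visible_bias, hidden_bias):
    """Sum exp(-E) over all binary states; cost grows as 2**(n_visible + n_hidden)."""
    n_visible, n_hidden = weights.shape
    total = 0.0
    n_terms = 0
    for v in itertools.product([0.0, 1.0], repeat=n_visible):
        for h in itertools.product([0.0, 1.0], repeat=n_hidden):
            total += np.exp(-rbm_energy(np.array(v), np.array(h),
                                        weights, visible_bias, hidden_bias))
            n_terms += 1
    return total, n_terms


rng = np.random.default_rng(0)
weights = rng.normal(scale=0.1, size=(4, 3))  # 4 visible, 3 hidden units (toy size)
z, n_terms = partition_function(weights, np.zeros(4), np.zeros(3))
print(f"Z = {z:.3f} from {n_terms} configurations")
```

Seven units already require 128 terms, and a few hundred units would require more terms than could ever be enumerated. Sampling-based approximations such as contrastive divergence avoid computing Z at all.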

Why It Mattered

The Boltzmann machine was significant for several reasons that extended far beyond its immediate practical applications. First, it established a rigorous mathematical connection between statistical physics and machine learning, demonstrating that the tools physicists used to study thermal equilibrium could be directly applied to the problem of learning from data. This interdisciplinary bridge opened floodgates of research.

Second, the Boltzmann machine introduced the concept of hidden units that could learn internal representations — features of the data that were not explicitly specified by the programmer. This idea of representation learning, where a network discovers useful abstractions on its own, became one of the central pillars of modern deep learning. Every autoencoder, every generative adversarial network, every transformer model that learns embeddings owes a conceptual debt to this insight.

Third, the probabilistic framework of the Boltzmann machine showed that neural networks could be generative models: they could not just classify data but actually learn the underlying distribution and generate new samples from it. This generative approach anticipated by decades the current explosion of generative AI.

Other Major Contributions

While the Boltzmann machine remains Sejnowski's most cited contribution, his impact stretches across multiple domains. In 1987, he and Charles Rosenberg created NETtalk, a neural network that learned to pronounce written English text. NETtalk was a landmark demonstration that connectionist systems could perform a practical task — converting text to phonemes — that had previously required hand-crafted linguistic rules. The system learned by exposure to examples, much as a child learns to read, and its success was a powerful argument for the learning-based approach to AI at a time when symbolic methods dominated.
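To make the text-to-phoneme setup concrete, the sketch below shows one plausible way to turn text into network inputs. NETtalk is usually described as reading a seven-letter window and predicting the phoneme for the centre letter. The symbol set, padding character, and function name here are simplified assumptions, not the original encoding.

```python
import numpy as np

ALPHABET = "abcdefghijklmnopqrstuvwxyz _"  # simplified symbol set (assumption)
CHAR_INDEX = {ch: i for i, ch in enumerate(ALPHABET)}


def encode_windows(text, window=7):
    """One-hot encode a sliding window of characters centred on each letter."""
    half = window // 2
    padded = "_" * half + text.lower() + "_" * half
    rows = []
    for centre in range(half, half + len(text)):
        chunk = padded[centre - half:centre + half + 1]
        onehot = np.zeros((window, len(ALPHABET)))
        for pos, ch in enumerate(chunk):
            # Unknown characters fall back to the padding symbol
            onehot[pos, CHAR_INDEX.get(ch, CHAR_INDEX["_"])] = 1.0
        rows.append(onehot.ravel())
    return np.array(rows)


inputs = encode_windows("a cat")
print(inputs.shape)  # one row per character, window * len(ALPHABET) columns
```

Each row would be paired with the phoneme of its centre letter as the training target. The surrounding letters give the network the context it needs to learn that the same letter can sound different in different words.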

Sejnowski has been a towering figure in establishing computational neuroscience as a rigorous discipline. In 1989, he co-founded the journal Neural Computation with Tomaso Poggio, which became the premier venue for work at the intersection of neuroscience, machine learning, and theoretical computing. The journal helped legitimize a field that many traditional neuroscientists and computer scientists viewed with skepticism.

His textbook The Computational Brain, co-authored with Patricia Churchland in 1992, became a foundational work that laid out how computational methods could illuminate brain function. The book argued persuasively that understanding the brain requires models at multiple levels — from individual neurons to circuits to systems — and that these models must be grounded in both biology and mathematics. This multi-level approach influenced an entire generation of researchers, including those who followed in the footsteps of Marvin Minsky at the intersection of cognitive science and artificial intelligence.

At the Salk Institute for Biological Studies, where Sejnowski has been a professor since 1988, he leads the Computational Neurobiology Laboratory. His group has produced influential work on a wide range of topics: the biophysics of synaptic transmission, the information-processing capabilities of dendrites, the neural mechanisms of sleep and learning, independent component analysis (ICA) for signal processing, and reinforcement learning in biological systems.

His work on ICA deserves particular mention. The algorithm he helped develop for separating mixed signals into independent components found applications far beyond neuroscience, in signal processing, medical imaging, and data analysis. This kind of cross-pollination between neuroscience-inspired methods and practical engineering tools exemplifies Sejnowski's approach to research.
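Signal separation of this kind can be sketched in a few lines. The example below uses an infomax-style update in its natural-gradient form with a logistic nonlinearity, which suits heavy-tailed sources. It is a simplified illustration and not necessarily the algorithm as published: the mixing matrix, learning rate, and iteration count are all assumed values.

```python
import numpy as np


def whiten(x):
    """Centre the signals and decorrelate them to unit variance."""
    x = x - x.mean(axis=1, keepdims=True)
    eigvals, eigvecs = np.linalg.eigh(np.cov(x))
    return (eigvecs / np.sqrt(eigvals)).T @ x


def infomax_ica(x, n_iter=2000, lr=0.05):
    """Infomax-style ICA with a natural-gradient update (super-Gaussian sources)."""
    z = whiten(x)
    n, t = z.shape
    w = np.eye(n)
    for _ in range(n_iter):
        u = w @ z
        # For the logistic nonlinearity, 1 - 2*sigmoid(u) equals -tanh(u / 2)
        grad = np.eye(n) - np.tanh(u / 2.0) @ u.T / t
        w += lr * grad @ w
    return w @ z


rng = np.random.default_rng(0)
sources = rng.laplace(size=(2, 5000))         # two independent heavy-tailed signals
mixing = np.array([[1.0, 0.6], [0.4, 1.0]])   # hypothetical mixing matrix
recovered = infomax_ica(mixing @ sources)

# Each recovered row should match one source, up to sign, order, and scale
corr = np.abs(np.corrcoef(recovered, sources)[:2, 2:])
print(np.round(corr, 2))
```

The algorithm never sees the mixing matrix or the original sources. It relies only on the assumption that the sources are statistically independent and non-Gaussian.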

Sejnowski has also contributed significantly to understanding the role of sleep in memory consolidation. His research showed that during sleep, the brain replays patterns of neural activity experienced during waking hours, effectively rehearsing and strengthening important memories. This "replay" mechanism bears a striking resemblance to experience replay in deep reinforcement learning — a technique used in systems like DeepMind's game-playing agents, developed under Demis Hassabis.
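For comparison, experience replay in reinforcement learning usually amounts to a simple data structure. The sketch below is a generic replay buffer, not the implementation from any particular system. The class name, the transition fields, and the capacity are assumptions for illustration.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of past transitions, sampled at random for training."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)  # oldest entries drop off first
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state):
        self.buffer.append((state, action, reward, next_state))

    def sample(self, batch_size):
        """Draw a random batch, breaking the temporal order of experience."""
        size = min(batch_size, len(self.buffer))
        return self.rng.sample(list(self.buffer), size)


buffer = ReplayBuffer(capacity=3)
for step in range(5):
    buffer.add(state=step, action=0, reward=1.0, next_state=step + 1)

batch = buffer.sample(batch_size=2)
print(len(buffer.buffer), batch)
```

Sampling old experiences out of order lets a learner revisit them many times without the correlations present in the original sequence. That loosely parallels the interleaved reactivation of waking activity described above.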

Philosophy and Approach

Sejnowski's career has been guided by a distinctive philosophy that sets him apart from both pure neuroscientists and pure computer scientists. He believes that the deepest insights come from the uncomfortable space between disciplines, where established methods fail and new frameworks must be invented. His approach combines theoretical rigor with biological grounding, always testing computational theories against what is actually known about the brain.

In his view, the brain is not a digital computer. It is a massively parallel, energy-efficient, adaptive system that has been shaped by hundreds of millions of years of evolution. Understanding it requires tools from physics, mathematics, and biology working in concert. This perspective has become increasingly mainstream as neuroscience embraces computational methods, but when Sejnowski began advocating for it in the 1980s, it was genuinely radical.

Key Principles

- Biology informs computation, and computation illuminates biology. Sejnowski has consistently argued that the flow of ideas between neuroscience and AI should be bidirectional. Neural networks inspired by the brain lead to better AI systems, and AI models help scientists understand brain function.

- Learning is the fundamental operation. Rather than hand-coding rules or designing fixed architectures, the focus should be on creating systems that learn from data and experience — just as biological brains do from birth onward.

- Energy and equilibrium are computational principles. Drawing from his physics training, Sejnowski sees computation in terms of energy landscapes, attractors, and optimization — a framework that has proven remarkably productive.

- Multiple levels of analysis are essential. Following David Marr's framework, Sejnowski insists on understanding neural computation at the computational level (what problem is being solved), the algorithmic level (what process solves it), and the implementation level (how the hardware executes it).

- Interdisciplinary collaboration is not optional. The most important problems cannot be solved within a single discipline. Sejnowski has collaborated with physicists, biologists, mathematicians, psychologists, and engineers throughout his career.

- Patience with foundational work pays off. The Boltzmann machine was considered impractical for years before its ideas fueled the deep learning revolution. Sejnowski's career demonstrates the value of pursuing theoretically sound ideas even when immediate applications are not obvious.

The connection between biological learning and machine learning can be illustrated by examining how Hebbian learning — the principle that "neurons that fire together wire together" — maps to modern gradient-based optimization:

```python
import numpy as np


class HebbianVsGradient:
    """
    Comparison of biological Hebbian learning with
    gradient-based learning in artificial neural networks.

    Hebbian:  delta_w = eta * pre * post
    Gradient: delta_w = -eta * dL/dw (via backpropagation)

    The Boltzmann machine learning rule bridges these:

    delta_w = eta * (<v_i h_j>_data - <v_i h_j>_model)

    This is both Hebbian (correlation-based) and gradient-based
    (it follows the gradient of the log-likelihood).
    """

    def __init__(self, n_input, n_output, learning_rate=0.01):
        self.weights = np.random.randn(n_input, n_output) * 0.1
        self.lr = learning_rate

    def hebbian_update(self, pre_activity, post_activity):
        """
        Pure Hebbian: strengthen connections between
        co-active neurons (biological principle).
        """
        delta_w = self.lr * np.outer(pre_activity, post_activity)
        self.weights += delta_w
        return delta_w

    def boltzmann_update(self, data_correlations, model_correlations):
        """
        Boltzmann learning rule: difference between
        data-driven and model-driven correlations.

        This elegantly unifies Hebbian learning with
        maximum likelihood estimation.
        """
        delta_w = self.lr * (data_correlations - model_correlations)
        self.weights += delta_w
        return delta_w

    def contrastive_divergence(self, visible_data, k=1):
        """
        Practical approximation (CD-k) that made
        Restricted Boltzmann Machines trainable.

        Uses k steps of Gibbs sampling instead of
        running to equilibrium. Bias terms are omitted
        for brevity; visible_data is a single 1-D vector.
        """
        # Positive phase: driven by data
        pos_hidden_prob = self._sigmoid(visible_data @ self.weights)
        pos_correlations = np.outer(visible_data, pos_hidden_prob)

        # Negative phase: k steps of reconstruction
        v = visible_data.copy()
        for _ in range(k):
            h = (np.random.random(pos_hidden_prob.shape) <
                 self._sigmoid(v @ self.weights)).astype(float)
            v = self._sigmoid(h @ self.weights.T)

        neg_hidden_prob = self._sigmoid(v @ self.weights)
        neg_correlations = np.outer(v, neg_hidden_prob)

        delta_w = self.lr * (pos_correlations - neg_correlations)
        self.weights += delta_w
        return delta_w

    @staticmethod
    def _sigmoid(x):
        # Clip to avoid overflow in exp for large-magnitude inputs
        return 1.0 / (1.0 + np.exp(-np.clip(x, -500, 500)))
```

Legacy and Impact

Terry Sejnowski's influence on both artificial intelligence and neuroscience is difficult to overstate. He is one of only ten living scientists to have been elected to all three National Academies — the National Academy of Sciences, the National Academy of Medicine, and the National Academy of Engineering. This triple distinction reflects the genuinely cross-cutting nature of his work.

He served as president of the Neural Information Processing Systems (NeurIPS) Foundation, the organization behind the world's most influential machine learning conference. Under his leadership and guidance, NeurIPS grew from a relatively small meeting into a massive gathering of thousands of researchers — a transformation that mirrors the growth of the field he helped create.

The intellectual lineage flowing from Sejnowski's work is remarkable. The Boltzmann machine and its descendants — RBMs, Deep Belief Networks, and deep generative models — form one of the main tributaries of the deep learning river. His collaboration with Hinton helped establish the theoretical foundations that later researchers like Yann LeCun and Yoshua Bengio would build upon to create the modern deep learning ecosystem.

In neuroscience, Sejnowski helped transform the discipline from a primarily observational and experimental science into one where computational models play a central explanatory role. The idea that you haven't truly understood a brain circuit until you can build a working computational model of it — an idea Sejnowski championed for decades — is now standard practice in systems neuroscience.

His work on the relationship between sleep and learning has influenced both neuroscience and AI. The discovery that the brain "replays" experiences during sleep to consolidate memories has direct parallels with experience replay buffers in reinforcement learning. This bidirectional flow of ideas — from brain to algorithm and back — is exactly the virtuous cycle Sejnowski has advocated throughout his career.

Sejnowski's contributions to understanding probabilistic reasoning in the brain also connect to the work of Judea Pearl, whose Bayesian networks provided another mathematical framework for reasoning under uncertainty. Together, these approaches helped establish that the brain performs something like probabilistic inference — a view now supported by a large body of experimental evidence.

As artificial intelligence continues its rapid advance, Sejnowski remains a vital voice arguing that neuroscience still has much to teach the AI community. While modern large language models and diffusion models have departed significantly from biological neural networks, Sejnowski maintains that the brain's energy efficiency, ability to learn from few examples, and robustness to noise represent capabilities that current AI systems have not yet achieved. The next breakthroughs, he suggests, may come from a renewed engagement with how the brain actually solves the problem of intelligence — a perspective shared by researchers like Andrew Ng and Fei-Fei Li in their emphasis on learning and perception.

Key Facts

- Full name: Terrence Joseph Sejnowski

- Born: August 13, 1947, Cleveland, Ohio, USA

- Education: B.S. in Physics, Case Western Reserve University (1968); Ph.D. in Physics, Princeton University (1978)

- Key role: Francis Crick Professor at the Salk Institute for Biological Studies; Professor at UC San Diego

- Known for: Co-invention of the Boltzmann machine (with Geoffrey Hinton), NETtalk, independent component analysis, computational neuroscience

- Honors: Member of all three U.S. National Academies (Sciences, Engineering, Medicine); Fellow of the IEEE, AAAI, and APS

- Key publications: The Computational Brain (1992, with Patricia Churchland), The Deep Learning Revolution (2018)

- Co-founded: Neural Computation journal (1989, with Tomaso Poggio)

- NeurIPS Foundation: President, instrumental in growing the conference into the world's largest ML gathering

- Students and collaborators: Trained dozens of leading computational neuroscientists and AI researchers

FAQ

What is a Boltzmann machine and why is it important?

A Boltzmann machine is a type of neural network invented by Terry Sejnowski and Geoffrey Hinton in 1985. It uses principles from statistical physics — specifically the Boltzmann distribution — to learn probability distributions over data. The network consists of visible and hidden units connected by weighted links, and it learns by adjusting these weights so that the network's energy-based model matches the statistical patterns in the training data. The Boltzmann machine is important because it introduced the concept of hidden representations, established a rigorous mathematical connection between physics and learning, and laid the groundwork for deep generative models including Restricted Boltzmann Machines and Deep Belief Networks that helped launch the modern deep learning era.

How did Terry Sejnowski contribute to computational neuroscience?

Sejnowski is widely regarded as one of the founders of computational neuroscience as a distinct discipline. He co-authored The Computational Brain with Patricia Churchland, which provided a systematic framework for using computational models to understand brain function at multiple levels. He co-founded the journal Neural Computation, which became the field's leading publication. Through his Computational Neurobiology Laboratory at the Salk Institute, he produced influential research on synaptic transmission, dendritic processing, sleep and memory consolidation, and independent component analysis. His insistence that neuroscience needs quantitative, model-based approaches has shaped how an entire generation of scientists studies the brain.

What was NETtalk and why did it matter?

NETtalk was a neural network created by Sejnowski and Charles Rosenberg in 1987 that learned to convert written English text into speech sounds (phonemes). Unlike previous text-to-speech systems that relied on hand-coded linguistic rules, NETtalk learned the mapping from letters to sounds by being trained on examples. The system demonstrated that connectionist networks could acquire practical skills through exposure to data — much as a child learns to read. NETtalk was a landmark result that helped validate the neural network approach during a period when many AI researchers favored symbolic methods, and it foreshadowed the data-driven approach that now dominates modern AI.

What is the relationship between Sejnowski's work and modern deep learning?

Modern deep learning owes a significant conceptual debt to Sejnowski's work. The Boltzmann machine introduced the idea that neural networks could learn internal representations (features) through hidden units — a core principle of deep learning. The Restricted Boltzmann Machine (a simplified version of Sejnowski and Hinton's original) became a key building block for Deep Belief Networks, which were among the first successful deep architectures in the mid-2000s. The energy-based modeling framework that Sejnowski helped establish continues to influence modern generative models. Furthermore, his career-long emphasis on learning from data rather than hand-engineering solutions anticipated the dominant paradigm of contemporary AI research, where scale and data drive performance.