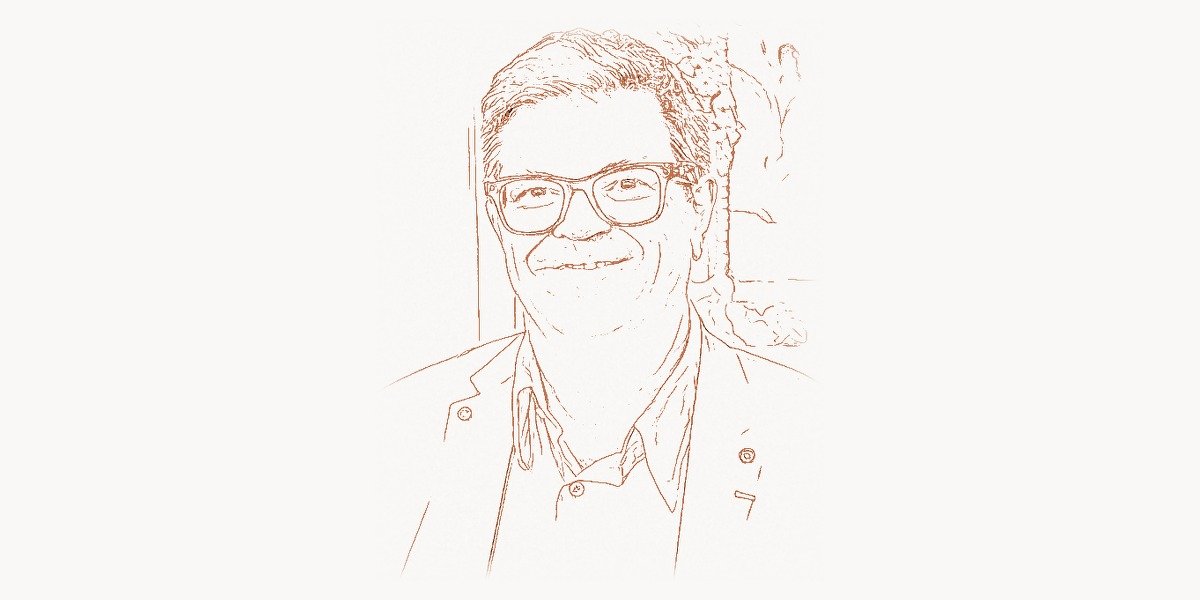

In 1989, a young French researcher at AT&T Bell Labs published a paper that would reshape the field of artificial intelligence. Yann LeCun, working with a small team, demonstrated that a neural network architecture called a convolutional neural network could learn to recognize handwritten digits directly from raw pixel data, without any hand-crafted feature engineering. The system, called LeNet, processed images through layers of learnable filters that detected edges, then shapes, then entire characters, mimicking the hierarchical processing of the mammalian visual cortex. It worked so well that the United States Postal Service adopted it to read ZIP codes on envelopes. By the late 1990s, LeCun’s system was reading between 10 and 20 percent of all checks deposited in the US banking system. This was not a laboratory curiosity; it was a production machine learning system processing billions of dollars in transactions. But the road from that 1989 paper to the deep learning revolution of the 2010s was anything but straightforward. For over a decade, LeCun and his small community of neural network believers faced skepticism, funding cuts, and academic marginalization. The story of Yann LeCun is the story of a scientist who was right too early and who persisted long enough to be vindicated on a global scale. His convolutional neural networks are now a backbone of computer vision, medical imaging, autonomous driving, and many other fields of modern AI. In 2018, LeCun received the Turing Award, computing’s highest honor, alongside Geoffrey Hinton and Yoshua Bengio, the three researchers most responsible for the deep learning revolution.

Early Life and Education

Yann LeCun was born on July 8, 1960, in Soisy-sous-Montmorency, a commune in the northern suburbs of Paris, France. His family name was originally Le Cun (two words), a Breton name from the Brittany region of western France. He later merged it into “LeCun” after moving to the United States because American systems frequently dropped the space, causing confusion in databases and publications. The name has no capitalization rules in Breton — he chose the camelCase rendering himself, a fitting convention for someone who would spend his career at the intersection of mathematics and computation.

LeCun showed an early aptitude for engineering and mathematics. He was fascinated by machines and how things worked. As a teenager, he became interested in artificial intelligence after reading a popular science article about the perceptron — the simple neural network model proposed by Frank Rosenblatt in 1957. The idea that a machine could learn from examples, rather than being explicitly programmed, captivated him.

He studied electrical engineering at ESIEE Paris (École Supérieure d’Ingénieurs en Électrotechnique et Électronique), graduating in 1983. He then pursued a PhD in computer science at Université Pierre et Marie Curie (now part of Sorbonne Université) in Paris. His doctoral research, completed in 1987, focused on learning algorithms for neural networks. This was a critical period: the backpropagation algorithm, the method for training multi-layer neural networks by propagating error gradients backward through the network, had been popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams. LeCun independently developed a similar learning procedure and immediately saw its potential for pattern recognition tasks.

During his PhD, LeCun was influenced by the work of several key figures. His doctoral advisor was Maurice Milgram, and he collaborated with Françoise Fogelman-Soulié. He was also deeply influenced by the neurophysiology experiments of David Hubel and Torsten Wiesel, who had won the Nobel Prize in 1981 for discovering that neurons in the visual cortex respond to specific features (edges, orientations, motion) in a hierarchical fashion. This biological insight would become the architectural foundation of convolutional neural networks.

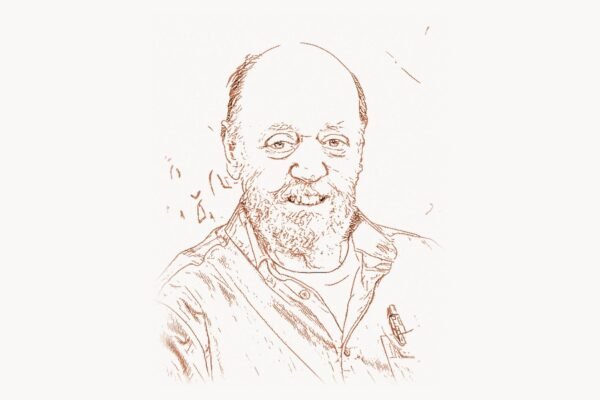

After completing his PhD, LeCun spent a year as a postdoctoral researcher at the University of Toronto, working in Geoffrey Hinton’s lab. This experience was formative. Hinton was one of the few researchers in the world who believed deeply in neural networks at a time when the approach was widely considered a dead end. The intellectual environment in Hinton’s lab gave LeCun the confidence to pursue his vision of building learning machines that could process visual data.

The CNN Breakthrough

Technical Innovation

In 1988, LeCun joined AT&T Bell Labs in Holmdel, New Jersey, where he was given the freedom and computational resources to develop his ideas. The result was LeNet — specifically LeNet-5, the most well-known version — a convolutional neural network architecture that became the template for virtually all subsequent work in deep learning for computer vision.

The core innovation of a CNN is the convolutional layer. Instead of connecting every input pixel to every neuron (as in a fully connected network), a convolutional layer slides a small learnable filter, typically 3×3 or 5×5 pixels, across the entire input image. This filter detects a specific local pattern (an edge, a corner, a texture) wherever it appears in the image. The key insight is weight sharing. The same filter is applied at every spatial position, which dramatically reduces the number of parameters and makes the layer translation-equivariant: shift the input and the feature map shifts with it. Pooling in later layers turns this into approximate translation invariance. An edge detector that works in the top-left corner also works in the bottom-right.
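Both effects of weight sharing can be checked numerically. The following is a minimal NumPy sketch, not code from LeNet itself: the helper name `conv2d_valid`, the 32×32 input, the 5×5 filter, and the shift amounts are illustrative assumptions. It compares the parameter count of one shared filter with a dense layer producing the same 28×28 output, then shows that moving a pattern in the input moves its response in the feature map by the same amount.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Parameter count: one shared 5x5 filter versus a dense layer with the same output size.
conv_params = 5 * 5 + 1                           # 25 shared weights + 1 bias
dense_params = (32 * 32) * (28 * 28) + 28 * 28    # every pixel wired to every output unit
print(conv_params, dense_params)                  # 26 803600

# Translation: the same pattern, placed at two different positions.
rng = np.random.default_rng(0)
kernel = rng.standard_normal((5, 5))
image = np.zeros((32, 32))
image[4:12, 4:12] = rng.standard_normal((8, 8))          # pattern near the top-left
shifted = np.roll(image, shift=(10, 7), axis=(0, 1))     # moved 10 rows down, 7 columns right

fmap = conv2d_valid(image, kernel)
fmap_shifted = conv2d_valid(shifted, kernel)

# The response moves with the pattern: same values, offset by (10, 7).
responses_match = np.allclose(fmap_shifted[10:, 7:], fmap[:18, :21])
print(responses_match)
```

The filter never has to relearn the pattern at the new location, which is why a convolutional layer needs so few weights compared with a dense one.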

LeCun stacked multiple convolutional layers, interspersed with pooling layers (which reduce spatial resolution, making the representation progressively more compact and abstract), followed by fully connected layers for the final classification. The result was a hierarchy of feature detectors: the first layer learned to detect edges and simple textures; the second layer combined these into corners, junctions, and small shapes; the third layer assembled these into parts of characters; and the final layers recognized complete digits. This hierarchical feature extraction — from simple to complex, from local to global — mirrors the architecture of the biological visual cortex that Hubel and Wiesel described.
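The pooling step, and the way spatial size shrinks through the stack, can be sketched in a few lines of NumPy. This is an illustration rather than LeCun's code: the helper name `average_pool_2x2` is made up here, and the layer sizes (32×32 input, 5×5 filters, 2×2 pooling, 6 and 16 feature maps, 120/84/10 units) are the commonly cited LeNet-5 figures. The parameter count also assumes that every second-layer filter sees all six input maps, whereas the 1998 paper used a sparser connection table and trainable pooling coefficients, so the total is approximate.

```python
import numpy as np

def average_pool_2x2(feature_map):
    """Average each non-overlapping 2x2 block, halving height and width."""
    h, w = feature_map.shape
    blocks = feature_map[:h - h % 2, :w - w % 2].reshape(h // 2, 2, w // 2, 2)
    return blocks.mean(axis=(1, 3))

pooled = average_pool_2x2(np.arange(16, dtype=float).reshape(4, 4))
print(pooled)  # [[ 2.5  4.5] [10.5 12.5]]

# Trace the spatial size through CONV(5) -> POOL(2) -> CONV(5) -> POOL(2).
size = 32
trace = [size]
for kind, k in [("conv", 5), ("pool", 2), ("conv", 5), ("pool", 2)]:
    size = size - k + 1 if kind == "conv" else size // k
    trace.append(size)
print(trace)  # [32, 28, 14, 10, 5]

# Weights + biases per layer, assuming full connectivity between feature maps.
params = {
    "conv1": 6 * (1 * 5 * 5 + 1),
    "conv2": 16 * (6 * 5 * 5 + 1),
    "fc1": 120 * (16 * 5 * 5 + 1),
    "fc2": 84 * (120 + 1),
    "output": 10 * (84 + 1),
}
total_params = sum(params.values())
print(total_params)  # 61706
```

Most of the weights sit in the first fully connected layer. The two convolutional layers together account for under 3,000 parameters, which is the practical payoff of weight sharing.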

```python
# Core structure of LeNet-5, LeCun's 1998 architecture.
# This is the network that read millions of checks and ZIP codes.
# Architecture: INPUT -> CONV -> POOL -> CONV -> POOL -> FC -> FC -> OUTPUT

import numpy as np


class ConvolutionalLayer:
    """
    The key innovation: learnable filters that slide across the image.
    Weight sharing means the same filter detects the same feature
    regardless of its position in the image.
    """

    def __init__(self, num_filters, filter_size, input_channels):
        # Each filter is a small matrix of learnable weights.
        # LeNet-5 used 5x5 filters: small windows that scan the image.
        self.filters = np.random.randn(
            num_filters, input_channels, filter_size, filter_size
        ) * 0.01
        self.biases = np.zeros(num_filters)
        self.filter_size = filter_size

    def forward(self, input_image):
        """
        Slide each filter across the input image.
        At each position, compute the dot product between the filter
        and the local image patch. This produces a 'feature map':
        a spatial map of where the learned pattern occurs.
        """
        batch, channels, height, width = input_image.shape
        out_h = height - self.filter_size + 1
        out_w = width - self.filter_size + 1
        output = np.zeros((batch, len(self.filters), out_h, out_w))

        for f_idx, filt in enumerate(self.filters):
            for i in range(out_h):
                for j in range(out_w):
                    # Extract the local patch
                    patch = input_image[:, :,
                                        i:i + self.filter_size,
                                        j:j + self.filter_size]
                    # Dot product: does this patch match the filter?
                    output[:, f_idx, i, j] = (
                        np.sum(patch * filt, axis=(1, 2, 3))
                        + self.biases[f_idx]
                    )
        return output


# LeNet-5 architecture (1998):
# Layer 1: 6 filters of 5x5 on 32x32 grayscale input -> 6@28x28
# Pool 1:  2x2 average pooling -> 6@14x14
# Layer 2: 16 filters of 5x5 -> 16@10x10
# Pool 2:  2x2 average pooling -> 16@5x5
# FC 1:    400 -> 120 neurons
# FC 2:    120 -> 84 neurons
# Output:  84 -> 10 classes (digits 0-9)
#
# Total parameters: ~60,000
# Compare to modern CNNs: ResNet-50 has ~25 million parameters
# But the PRINCIPLES are identical
```

The training process used backpropagation, the same algorithm that LeCun had studied during his PhD. The network was shown tens of thousands of labeled handwritten digits. For each digit, it computed a prediction, measured the error, and propagated the error gradient backward through all the layers, adjusting every filter weight to reduce the error. After thousands of iterations, the filters self-organized into meaningful feature detectors without any human specification of what features to look for. This was the fundamental shift: instead of a human engineer designing a feature extraction pipeline (as was standard in computer vision at the time), the features were learned automatically from data.
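The idea that filters emerge from error gradients rather than from a designer can be shown on the smallest possible case. The sketch below is a deliberately reduced stand-in for backpropagation, assuming a single convolutional layer, a mean squared error loss, and a made-up task: recover a Sobel-style edge filter purely from input/output examples. The function names, learning rate, and step count are illustrative choices, not values from LeCun's work.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Valid cross-correlation, computed as a weighted sum of shifted image slices."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for a in range(kh):
        for b in range(kw):
            out += kernel[a, b] * image[a:a + out_h, b:b + out_w]
    return out

def kernel_gradient(image, kernel, target):
    """Gradient of the mean squared error with respect to each kernel weight."""
    error = conv2d_valid(image, kernel) - target
    grad = np.zeros_like(kernel)
    for a in range(kernel.shape[0]):
        for b in range(kernel.shape[1]):
            patch = image[a:a + error.shape[0], b:b + error.shape[1]]
            grad[a, b] = 2.0 * np.mean(error * patch)
    return grad

rng = np.random.default_rng(0)
image = rng.standard_normal((12, 12))

# The "teacher" filter the learner never sees directly, only its outputs.
true_kernel = np.array([[1.0, 0.0, -1.0],
                        [2.0, 0.0, -2.0],
                        [1.0, 0.0, -1.0]])
target = conv2d_valid(image, true_kernel)

kernel = np.zeros((3, 3))                      # start with no knowledge of the feature
initial_loss = np.mean((conv2d_valid(image, kernel) - target) ** 2)
for step in range(1000):
    kernel -= 0.05 * kernel_gradient(image, kernel, target)   # gradient descent step
final_loss = np.mean((conv2d_valid(image, kernel) - target) ** 2)

print(np.round(kernel, 3))
```

In a full network the same gradient is passed backward through every layer by the chain rule, so each filter is adjusted according to its contribution to the final classification error.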

Why It Mattered

LeNet’s practical impact was immediate and measurable. AT&T deployed the system for check reading and ZIP code recognition. It processed real-world data at scale — noisy, messy handwriting from millions of different people — and it worked. This was a stark contrast to the academic pattern recognition systems of the era, which often achieved impressive results on clean laboratory datasets but failed in production.

But the deeper significance was architectural. LeCun demonstrated a general-purpose approach to visual recognition: take raw pixel data as input, apply learnable hierarchical feature extraction, and train the entire system end-to-end with gradient descent. This approach, which seems obvious in retrospect, was revolutionary in the late 1980s and 1990s. The dominant paradigm in computer vision, then and well into the 2000s, was the hand-engineered feature pipeline: Gabor filters and, later, SIFT descriptors and histograms of oriented gradients, carefully designed by domain experts. LeCun’s system replaced all of that with a single trainable architecture.

The approach would not achieve its full potential until the 2010s, when three things converged: massively larger datasets (ImageNet, with millions of labeled images), massively more powerful hardware (GPUs, originally designed for video games, repurposed for matrix multiplication), and deeper architectures (AlexNet in 2012, which was essentially a scaled-up LeNet). But the architectural principles (convolution, pooling, hierarchical feature learning, end-to-end training) were all established by LeCun in the late 1980s and formalized in his landmark 1998 paper with Léon Bottou, Yoshua Bengio, and Patrick Haffner.

Other Major Contributions

While CNNs are LeCun’s most famous contribution, his impact on artificial intelligence extends far beyond a single architecture.

The MNIST Dataset. In 1998, LeCun and his collaborators created the MNIST dataset, a collection of 70,000 handwritten digit images (60,000 training, 10,000 test) that became the most widely used benchmark in machine learning. For nearly two decades, MNIST was the standard test for new classification algorithms, and a large share of the classification papers published between 1998 and 2015 reported MNIST results. The dataset was instrumental in making machine learning research reproducible and comparable. It was also small enough to be practical on the limited hardware of the era, which contributed to its ubiquity. Today, MNIST is considered too simple for serious research (modern architectures achieve over 99.8% accuracy, which leaves fewer than 20 errors on the 10,000 test images), but its role in democratizing machine learning research was enormous. If you have ever written a tutorial on neural networks in Python or tested a new framework, chances are you used MNIST.
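Part of MNIST's practicality is how simple its files are to read. The sketch below assumes the IDX layout used by the original MNIST distribution files: a big-endian header with magic number 2051 for images and 2049 for labels, followed by raw unsigned bytes, with 28×28 pixel images. The function names are hypothetical, and the example parses synthetic bytes built in memory rather than reading any real file.

```python
import struct
import numpy as np

def parse_idx_images(raw: bytes) -> np.ndarray:
    """Parse an IDX image buffer into an array of shape (count, rows, cols)."""
    magic, count, rows, cols = struct.unpack(">IIII", raw[:16])
    if magic != 2051:
        raise ValueError(f"unexpected magic number for images: {magic}")
    pixels = np.frombuffer(raw, dtype=np.uint8, offset=16)
    return pixels.reshape(count, rows, cols)

def parse_idx_labels(raw: bytes) -> np.ndarray:
    """Parse an IDX label buffer into a 1-D array of digit labels."""
    magic, count = struct.unpack(">II", raw[:8])
    if magic != 2049:
        raise ValueError(f"unexpected magic number for labels: {magic}")
    return np.frombuffer(raw, dtype=np.uint8, offset=8)[:count]

# Synthetic stand-in for a file: two blank 28x28 images with labels 7 and 3.
fake_images = struct.pack(">IIII", 2051, 2, 28, 28) + bytes(2 * 28 * 28)
fake_labels = struct.pack(">II", 2049, 2) + bytes([7, 3])

images = parse_idx_images(fake_images)
labels = parse_idx_labels(fake_labels)
print(images.shape, labels.tolist())  # (2, 28, 28) [7, 3]
```

With a real download, the same functions would be applied to the decompressed file contents. Any path or file name used for that is up to the reader and is not specified here.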

Energy-Based Models. LeCun has been a longstanding advocate for energy-based models (EBMs) — a generalization of probabilistic models where the learning system assigns a scalar energy to each configuration of variables. Low energy corresponds to likely (or desirable) configurations; high energy corresponds to unlikely ones. This framework unifies many different learning approaches: supervised classification, generative modeling, structured prediction, and more. LeCun has argued that EBMs provide a more natural and flexible framework for learning than traditional probabilistic models, particularly for complex structured outputs where computing exact probabilities is intractable.
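The energy-based view can be made concrete with a toy classifier. In this minimal sketch the energy function is an assumption chosen for simplicity (squared distance between an input and a per-class prototype) and is not a model from LeCun's papers. Inference picks the label with the lowest energy, and, only if probabilities are wanted, a Gibbs distribution converts energies into them.

```python
import numpy as np

def energy(x, label, prototypes):
    """Scalar energy of the pair (x, label): low when x is close to that class prototype."""
    return float(np.sum((x - prototypes[label]) ** 2))

def predict(x, prototypes):
    """Inference in an energy-based model: choose the lowest-energy label."""
    energies = np.array([energy(x, y, prototypes) for y in range(len(prototypes))])
    return int(np.argmin(energies)), energies

def gibbs_probabilities(energies, temperature=1.0):
    """Optional step: turn energies into probabilities, p(y) proportional to exp(-E / T)."""
    logits = -energies / temperature
    logits = logits - logits.max()          # subtract the max for numerical stability
    weights = np.exp(logits)
    return weights / weights.sum()

prototypes = np.array([[0.0, 0.0], [3.0, 3.0], [0.0, 4.0]])   # one hypothetical prototype per class
x = np.array([2.5, 3.5])

label, energies = predict(x, prototypes)
probabilities = gibbs_probabilities(energies)
print(label, energies.tolist())  # 1 [18.5, 0.5, 6.5]
```

Prediction only needs the ranking of energies. The normalization in `gibbs_probabilities` is exactly the step that becomes intractable when the output space is large and structured, which is the motivation for working with energies directly.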

Self-Supervised Learning. In recent years, LeCun has become the most prominent advocate for self-supervised learning — a training paradigm where systems learn representations from unlabeled data by predicting parts of the input from other parts. Instead of requiring millions of human-labeled examples (supervised learning), a self-supervised system might learn to predict the missing part of an image, the next word in a sentence, or the future state of a video sequence. LeCun has argued that self-supervised learning is the key to achieving human-level intelligence, because humans and animals learn primarily from observation — not from labeled examples. A child does not need 10,000 labeled images of cats to learn what a cat looks like; they learn from unstructured visual experience. LeCun’s Joint Embedding Predictive Architecture (JEPA) is his proposed framework for building AI systems that learn through prediction in a latent representation space, rather than generating predictions in pixel space.
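The phrase "predicting parts of the input from other parts" describes how training pairs are manufactured from unlabeled data. Here is a minimal sketch of one such pretext task, assuming a single square block is hidden from a 28×28 image. The function name `make_masked_pair` and the block size are illustrative choices, and practical systems use more elaborate masking schemes.

```python
import numpy as np

def make_masked_pair(image, rng, block=8):
    """Hide one square block of the image and return (context, target, mask).

    context: the image with the hidden block zeroed out (what the model sees)
    target:  the pixels of the hidden block (what the model must predict)
    mask:    boolean array marking the hidden region
    """
    h, w = image.shape
    top = int(rng.integers(0, h - block + 1))
    left = int(rng.integers(0, w - block + 1))
    mask = np.zeros((h, w), dtype=bool)
    mask[top:top + block, left:left + block] = True
    context = np.where(mask, 0.0, image)
    target = image[mask].reshape(block, block)
    return context, target, mask

rng = np.random.default_rng(0)
image = rng.random((28, 28))            # stands in for any unlabeled image
context, target, mask = make_masked_pair(image, rng)

# No human label was needed: the supervision signal is the image itself.
print(context.shape, target.shape, int(mask.sum()))  # (28, 28) (8, 8) 64
```

A pixel-space method would train a model to reconstruct `target` directly, while a JEPA-style method would instead encode both views and predict the target's embedding.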

```python
# Self-supervised learning: the paradigm LeCun champions.
# Instead of requiring labeled data, the model learns by prediction.
# This example illustrates the core idea behind JEPA.

import torch
import torch.nn as nn


class SimpleJEPABlock(nn.Module):
    """
    Simplified Joint Embedding Predictive Architecture (JEPA).

    LeCun's key insight: predict in REPRESENTATION space,
    not in pixel space. This avoids the model wasting capacity
    on predicting irrelevant details (exact pixel values).

    Two encoders produce embeddings of different views.
    A predictor learns to map one embedding to the other.
    """

    def __init__(self, embed_dim=256, pred_dim=128):
        super().__init__()

        # Context encoder: processes the visible part of the input
        self.context_encoder = nn.Sequential(
            nn.Linear(784, 512),
            nn.LayerNorm(512),
            nn.GELU(),
            nn.Linear(512, embed_dim),
        )

        # Target encoder: processes the masked/target part.
        # Updated via exponential moving average (no gradients).
        self.target_encoder = nn.Sequential(
            nn.Linear(784, 512),
            nn.LayerNorm(512),
            nn.GELU(),
            nn.Linear(512, embed_dim),
        )
        # Start as a copy of the context encoder and exclude it from gradient updates
        self.target_encoder.load_state_dict(self.context_encoder.state_dict())
        for param in self.target_encoder.parameters():
            param.requires_grad_(False)

        # Predictor: maps context embedding -> target embedding.
        # This is where the "world model" learning happens.
        self.predictor = nn.Sequential(
            nn.Linear(embed_dim, pred_dim),
            nn.GELU(),
            nn.Linear(pred_dim, embed_dim),
        )

    def forward(self, context_input, target_input):
        # Encode the context view and predict the target's representation
        context_emb = self.context_encoder(context_input)
        pred_emb = self.predictor(context_emb)

        # Target encoder is NOT trained by gradient descent.
        # Instead, its weights are a moving average of context_encoder.
        with torch.no_grad():
            target_emb = self.target_encoder(target_input)

        # Loss: how well can we predict the target representation
        # from the context representation?
        # No pixel reconstruction, only abstract representation matching.
        return nn.functional.mse_loss(pred_emb, target_emb)

    @torch.no_grad()
    def update_target_encoder(self, momentum=0.99):
        # Call after each optimizer step. The momentum value is illustrative.
        for target_param, context_param in zip(
            self.target_encoder.parameters(), self.context_encoder.parameters()
        ):
            target_param.mul_(momentum).add_(context_param, alpha=1.0 - momentum)


# Why this matters:
# - No labeled data needed (self-supervised)
# - Learns abstract representations, not pixel details
# - The predictor acts as a primitive "world model"
# - LeCun argues this is closer to how animals learn:
#   predicting what will happen next in abstract terms,
#   not reconstructing every sensory detail
```

DjVu Image Compression. In the late 1990s, LeCun co-developed the DjVu image compression format at AT&T Labs with Léon Bottou and Patrick Haffner. It was designed specifically for scanned documents. DjVu produced files roughly 5 to 10 times smaller than JPEG for scanned color pages while maintaining readability. The technology was used by the Internet Archive and other digital library projects to compress millions of scanned books and documents. While less well-known than his AI work, DjVu demonstrated LeCun’s ability to build practical systems that operated at scale.

Contributions to Open Source and Research Infrastructure. LeCun has been a consistent advocate for open research and open-source tools. His early neural network library, Lush (a Lisp dialect for numerical computing), was one of the first open-source deep learning frameworks. At Meta (formerly Facebook), his research lab FAIR (Facebook AI Research) has released numerous open-source models and tools, including PyTorch — now the dominant framework for deep learning research. The decision to make PyTorch open source, which LeCun supported and championed, fundamentally shaped the deep learning ecosystem. Researchers who build AI systems today, whether using modern code editors or cloud-based notebooks, almost certainly use PyTorch or frameworks influenced by it.

Philosophy and Approach

Key Principles

LeCun’s intellectual philosophy is characterized by several distinctive positions that set him apart from many of his contemporaries in AI research.

Learning over engineering. Throughout his career, LeCun has argued that systems should learn representations from data rather than having representations designed by human engineers. This principle, obvious today, was deeply controversial in the 1990s and 2000s when hand-crafted features dominated computer vision, speech recognition, and natural language processing. LeCun’s insistence that end-to-end learning would eventually surpass hand-engineered pipelines was vindicated dramatically in the 2010s.

Skepticism of large language models as a path to AGI. Despite working at Meta, one of the companies most heavily invested in large language models, LeCun has been publicly skeptical that autoregressive language models (the architecture behind OpenAI’s GPT and similar systems) can lead to human-level intelligence. He argues that these models are fundamentally limited because they are trained only on text, which represents a tiny fraction of human experience, and because they generate outputs token by token without any internal planning or world model. He has compared current LLMs to a “very smart parrot” and has argued that true intelligence requires grounded understanding of the physical world, which text alone cannot provide.

World models and planning. LeCun’s vision for the future of AI centers on systems that build internal models of the world and use those models to plan actions. He envisions architectures that, like humans, can simulate the consequences of actions before taking them — a capacity he calls “mental simulation.” This is fundamentally different from the current dominant paradigm of scaling up pattern matching on ever-larger datasets. LeCun’s proposed architecture, which he calls the World Model or the JEPA framework, would combine perception, memory, and planning in a single integrated system.
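The loop of "simulate the consequences, then act" can be illustrated with a toy planner. Everything in this sketch is a simplifying assumption made for illustration: the world is a position on a number line, the world model is a hand-written function rather than a learned one, and planning is an exhaustive search over short action sequences. It is not LeCun's proposed architecture, only the control pattern that such an architecture would support.

```python
from itertools import product

ACTIONS = (-1, 0, 1)   # step left, stay, step right

def world_model(state, action):
    """Hypothetical dynamics model: predicts the next state for a given action."""
    return state + action

def plan(state, goal, horizon=3):
    """Imagine every action sequence of length `horizon` and return the cheapest one.

    The cost is the summed distance to the goal along the imagined trajectory,
    so sequences that reach the goal sooner are preferred.
    """
    best_cost, best_sequence = None, None
    for sequence in product(ACTIONS, repeat=horizon):
        simulated, cost = state, 0
        for action in sequence:
            simulated = world_model(simulated, action)   # mental simulation, no real action taken
            cost += abs(goal - simulated)
        if best_cost is None or cost < best_cost:
            best_cost, best_sequence = cost, sequence
    return best_sequence

state, goal = 0, 5
trajectory = [state]
for _ in range(10):                       # bounded loop: replan after every real step
    if state == goal:
        break
    first_action = plan(state, goal)[0]   # execute only the first planned action
    state = world_model(state, first_action)
    trajectory.append(state)

print(trajectory)  # [0, 1, 2, 3, 4, 5]
```

The planner never tries an action in the real world before evaluating it in imagination. In LeCun's proposal the hand-written `world_model` would be replaced by a learned predictor operating on abstract representations.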

Open science and public engagement. LeCun is one of the most publicly engaged AI researchers in the world. He is highly active on social media, where he regularly debates AI policy, corrects misconceptions about AI capabilities, and argues against what he considers AI doomerism — the view that advanced AI poses an existential risk to humanity. He has publicly disagreed with prominent AI safety researchers, including Geoffrey Hinton (his Turing Award co-laureate), arguing that the risks of AI are manageable and that the benefits far outweigh the dangers. This willingness to engage in public debate, often contentiously, reflects a deep conviction that AI development should be open, transparent, and not controlled by a small number of companies or governments.

Persistence through AI winters. Perhaps LeCun’s most important quality is sheer persistence. During the late 1990s and 2000s, a period sometimes called the “neural network winter,” funding for neural network research dried up, major conferences rejected neural network papers, and the dominant paradigms in machine learning were support vector machines and graphical models. LeCun, along with Hinton and Bengio, continued working on neural networks when it was professionally disadvantageous to do so. They organized workshops, published in niche venues, and kept the research community alive. When deep learning exploded in 2012, the foundational work was already done, because a handful of stubborn researchers had refused to abandon it.

Legacy and Impact

Yann LeCun’s impact on technology and science is difficult to overstate. Convolutional neural networks — the architecture he pioneered — are the foundation of modern computer vision. Every time your phone unlocks via face recognition, every time a self-driving car identifies a pedestrian, every time a medical imaging system detects a tumor, the underlying technology traces directly back to LeCun’s work at Bell Labs in the late 1980s.

The numbers are staggering. As of the mid-2020s, CNN-based systems process billions of images per day across social media platforms, search engines, security systems, medical imaging pipelines, and industrial quality control. The ImageNet competition — which AlexNet (a CNN) won decisively in 2012, triggering the deep learning revolution — was fundamentally a vindication of the approach LeCun had been advocating for over two decades. AlexNet’s creator, Alex Krizhevsky, was a student of Geoffrey Hinton, but the architecture was a direct descendant of LeNet.

At Meta, which LeCun joined in 2013 as the founding director of FAIR and where he later became Chief AI Scientist, he built FAIR into one of the most productive and influential AI research labs in the world. FAIR’s contributions include PyTorch (the dominant deep learning framework), advances in self-supervised learning for vision and language, open-source large language models (the LLaMA family), and fundamental research in robotics, computer vision, and natural language processing. LeCun’s insistence on open research (publishing papers, releasing code, sharing models) has shaped the culture of the field and has been instrumental in the rapid progress of AI research.

The 2018 Turing Award, shared with Geoffrey Hinton and Yoshua Bengio, recognized the trio’s collective contributions to deep learning. But each brought a different emphasis: Hinton contributed the Boltzmann machine, backpropagation refinements, and dropout; Bengio contributed sequence modeling, attention mechanisms, and generative adversarial network theory; LeCun contributed convolutional networks, practical deployment, and a relentless focus on learning representations from data. Together, they created the theoretical and practical foundations for the AI revolution that has transformed technology, industry, and society in the 2020s.

LeCun’s influence extends beyond his own research. As a professor at New York University (where he has taught since 2003), he has trained a generation of AI researchers. His students and postdocs have gone on to lead AI teams at major technology companies and research institutions around the world. His freely available deep learning course at NYU has been studied by thousands of students and practitioners. His vision of AI — grounded in learning, world models, and open science — continues to shape the direction of the field, even as debates rage about the best path to more capable AI systems.

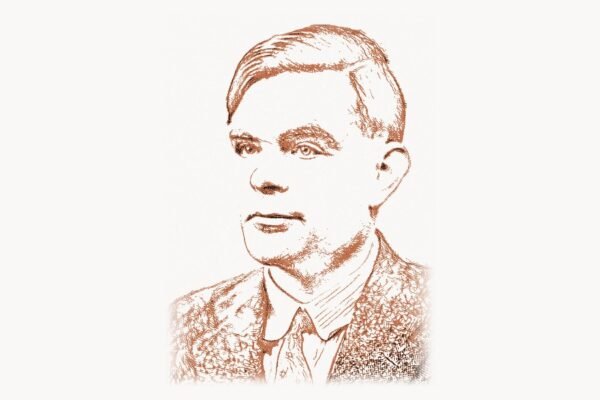

The conceptual lineage from Alan Turing’s theoretical foundations of computation, through John McCarthy’s formalization of AI as a field, to LeCun’s practical demonstration that machines can learn to see — this is one of the great intellectual arcs of the twentieth and twenty-first centuries. LeCun’s contribution was to show that the brain’s approach to visual processing — hierarchical, local, learned — could be implemented in silicon and trained with data. That insight, pursued with extraordinary persistence through decades of skepticism, changed the world.

Key Facts

- Full name: Yann André LeCun (born Le Cun)

- Born: July 8, 1960, Soisy-sous-Montmorency, France

- Education: ESIEE Paris (Diplôme d’Ingénieur, 1983); PhD in Computer Science, Université Pierre et Marie Curie, Paris (1987)

- Key positions: AT&T Bell Labs (1988-1996), AT&T Labs-Research (1996-2003), New York University Professor (2003-present), Facebook/Meta (from 2013, first as founding director of FAIR and later as Chief AI Scientist)

- Major invention: Convolutional Neural Networks (LeNet, 1989-1998)

- Turing Award: 2018, shared with Geoffrey Hinton and Yoshua Bengio, for conceptual and engineering breakthroughs in deep learning

- Other honors: IEEE Neural Network Pioneer Award (2014), AAAI Fellow, IEEE Fellow, ACM Fellow, member of the US National Academy of Engineering, member of the French Académie des Sciences

- Key datasets: MNIST (co-creator, 1998) — the most widely used machine learning benchmark for two decades

- Research focus (current): Self-supervised learning, Joint Embedding Predictive Architecture (JEPA), energy-based models, world models for autonomous AI

- Open source contributions: Lush (early neural network library), champion of PyTorch, advocate for open AI research at Meta/FAIR

FAQ

What is a convolutional neural network and why was LeCun’s version revolutionary?

A convolutional neural network (CNN) is a type of deep learning architecture designed specifically for processing grid-structured data like images. The key innovation is the convolutional layer, which uses small learnable filters that slide across the input image to detect local patterns — edges, textures, shapes — regardless of where they appear. LeCun’s version was revolutionary because it was the first to demonstrate that these filters could be learned automatically from data using backpropagation, eliminating the need for hand-crafted feature engineering. His LeNet architecture established the template — convolutional layers, pooling layers, fully connected layers, trained end-to-end — that all modern CNNs still follow. Before LeCun, computer vision relied on features designed by human experts; after LeCun, the features were learned by the network itself.

Why did LeCun receive the Turing Award and what does it mean for AI research?

LeCun received the 2018 ACM A.M. Turing Award (often called the Nobel Prize of computing) together with Geoffrey Hinton and Yoshua Bengio for their collective work on deep learning. The award recognized that these three researchers, often called the “Godfathers of Deep Learning,” laid the conceptual and engineering foundations for the deep learning revolution that has transformed AI since 2012. LeCun’s specific contribution was the convolutional neural network and his decades of work on learning representations from data. The award validated neural network research after decades of skepticism from the mainstream AI and machine learning communities, and it signaled that deep learning was not a passing trend but a fundamental shift in how intelligent systems are built.

How does LeCun’s vision for AI differ from the large language model approach?

LeCun has been publicly skeptical that scaling up autoregressive large language models (like GPT) will lead to human-level AI. He argues that LLMs are limited because they learn only from text, which represents a fraction of human knowledge and experience. Humans learn primarily through sensory observation of the physical world (vision, touch, spatial reasoning), not through language. LeCun advocates for AI systems that build internal world models, mental simulations of how the world works, and use those models to plan and reason. His proposed Joint Embedding Predictive Architecture (JEPA) learns by predicting abstract representations rather than generating raw pixels or tokens. This approach, he argues, is closer to how biological intelligence works and is more likely to produce systems that truly understand the world rather than merely generating plausible text. This debate, world models versus language models, is one of the central intellectual conflicts in AI research today.